The first Gemma model launched early last year and has since grown into a thriving Gemmaverse with over 160 million total downloads. This ecosystem includes a family of more than a dozen models specialized for everything from safety to medical applications and, most impressively, countless innovations from the community. From innovators like Roboflow building enterprise computer vision to the Institute of Science Tokyo creating a high-performance Japanese Gemma variant, your work has shown us the way forward.

Building on this incredible momentum, we’re excited to announce the full release of Gemma 3n. Last month’s preview offered a glimpse, but today we’re unlocking the full power of this mobile-first architecture. Gemma 3n is designed for the developer community that helped shape Gemma. It is supported by your favorite tools, including Hugging Face Transformers, llama.cpp, Google AI Edge, Ollama, and MLX, so it is easy to fine-tune and deploy for your specific on-device applications. This post is a deep dive for developers: we explore some of the innovations behind Gemma 3n, share new benchmark results, and show you how to start building today.

What’s new in Gemma 3n?

Gemma 3n represents a major advance in on-device AI, bringing powerful multimodal capabilities to edge devices with performance previously seen only in last year’s cloud-based frontier models.

Achieving this leap in on-device performance required a fundamental rethinking of the model. The foundation is Gemma 3n’s unique mobile-first architecture, and it all starts with MatFormer.

MatFormer: One model, many sizes

At the core of Gemma 3n is the MatFormer (🪆Matryoshka Transformer) architecture, a new nested transformer built for elastic inference. Think of it like a Matryoshka doll: a larger model contains smaller, fully functional versions of itself. This approach extends the concept of Matryoshka Representation Learning from embeddings alone to all transformer components.

As shown in the figure above, during MatFormer training of the 4B effective parameter (E4B) model, a 2B effective parameter (E2B) sub-model is simultaneously optimized inside it. This gives developers two powerful capabilities and use cases today:

1: Pre-extracted models: You can directly download and use either the main E4B model for the highest capability, or the standalone E2B sub-model that has already been extracted, which offers up to 2x faster inference.

2: Custom sizes with Mix-n-Match: For finer control tailored to your specific hardware constraints, you can create a spectrum of custom-sized models between E2B and E4B using a method called Mix-n-Match. This technique lets you precisely slice the parameters of the E4B model, primarily by adjusting the feed-forward network hidden dimension per layer (from 8192 to 16384) and selectively skipping some layers. We are also releasing MatFormer Lab, a tool that shows how to retrieve these optimal models, which were identified by evaluating different settings on benchmarks such as MMLU.
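To make the Mix-n-Match idea concrete, here is a minimal sketch in plain Python. It is not the MatFormer Lab code: the ReLU feed-forward layer, the tiny dimensions, and the helper names (`make_ffn`, `slice_ffn`, `ffn_forward`, `mix_n_match`) are all assumptions made for illustration. It only shows the nesting property, where a smaller sub-layer is the leading slice of a larger layer’s weights, together with optional layer skipping.

```python
import random


def make_ffn(d_model, d_hidden, seed):
    """Build a toy feed-forward layer as two weight matrices (lists of rows)."""
    rng = random.Random(seed)
    w_in = [[rng.uniform(-1, 1) for _ in range(d_model)] for _ in range(d_hidden)]
    w_out = [[rng.uniform(-1, 1) for _ in range(d_hidden)] for _ in range(d_model)]
    return w_in, w_out


def slice_ffn(ffn, keep):
    """Keep only the first `keep` hidden units: the nested sub-layer."""
    w_in, w_out = ffn
    return w_in[:keep], [row[:keep] for row in w_out]


def ffn_forward(ffn, x):
    """Apply the toy layer: linear, ReLU, linear."""
    w_in, w_out = ffn
    hidden = [max(0.0, sum(w * v for w, v in zip(row, x))) for row in w_in]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w_out]


def mix_n_match(layers, hidden_sizes, skip=()):
    """Derive a sub-model: one hidden size per layer, some layers dropped."""
    return [
        slice_ffn(ffn, hidden_sizes[i])
        for i, ffn in enumerate(layers)
        if i not in skip
    ]


# Toy stand-in for the full model: 4 layers, hidden size 16 (think 16384).
full = [make_ffn(d_model=4, d_hidden=16, seed=i) for i in range(4)]

# Hypothetical configuration: per-layer hidden sizes between 8 and 16
# (think 8192 to 16384), with layer 2 skipped.
custom = mix_n_match(full, hidden_sizes=[8, 12, 16, 8], skip={2})

hidden_units = [len(w_in) for w_in, _ in custom]
print(hidden_units)  # hidden units kept in each remaining layer
```

Because every sub-layer is a slice of the same trained weights, no extra training is needed to produce the intermediate sizes in this toy setup; which configurations are actually good is what benchmark evaluation has to decide.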

MMLU scores for the pre-trained Gemma 3n checkpoints at different model sizes (using Mix-n-Match)

Looking ahead, the MatFormer architecture also opens the door to elastic execution. Although it is not part of the implementations released today, this capability would allow a single deployed E4B model to switch dynamically between E4B and E2B inference paths on the fly, optimizing performance and memory usage in real time based on the current task and device load.

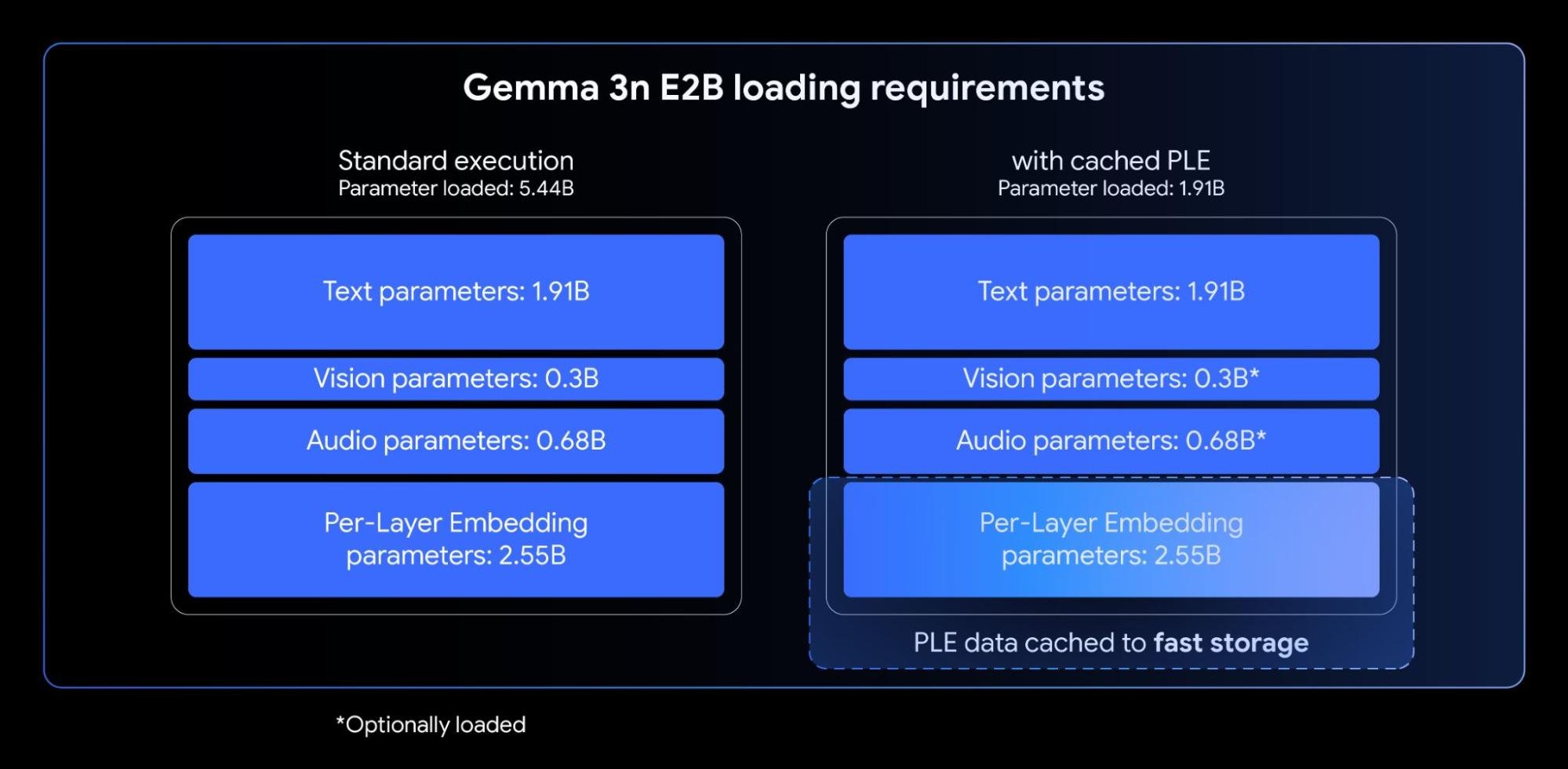

Per-Layer Embeddings (PLE): Improving memory efficiency

Gemma 3n models incorporate Per-Layer Embeddings (PLE). This innovation is tailored for on-device deployment: it dramatically improves model quality without increasing the high-speed memory footprint required on the device accelerator (GPU/TPU).

The Gemma 3n E2B and E4B models have total parameter counts of 5B and 8B, respectively, but with PLE a large share of these parameters (the embeddings associated with each layer) can be loaded and computed efficiently on the CPU. This means that only the core transformer weights (about 2B for E2B and 4B for E4B) need to sit in the typically more constrained accelerator memory (VRAM).

With Per-Layer Embeddings, you can use Gemma 3n E2B while loading only ~2B parameters into the accelerator.
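As a rough back-of-the-envelope illustration of that split, the sketch below converts the parameter counts quoted above into weight memory. Several things here are assumptions, not published figures: 16-bit weights (2 bytes per parameter), treating everything outside the core transformer as living off the accelerator, and ignoring activations and the KV cache. The function name `memory_split_gb` is hypothetical.

```python
# Approximate parameter counts as quoted in the text.
MODELS = {
    "E2B": {"total": 5e9, "core": 2e9},
    "E4B": {"total": 8e9, "core": 4e9},
}


def memory_split_gb(name, bytes_per_param=2):
    """Return (accelerator_gb, off_accelerator_gb) for weights only.

    bytes_per_param=2 assumes 16-bit weights. Counting every non-core
    parameter as off-accelerator is a simplification for illustration.
    """
    counts = MODELS[name]
    core = counts["core"]
    off_accelerator = counts["total"] - core
    return core * bytes_per_param / 1e9, off_accelerator * bytes_per_param / 1e9


for name in MODELS:
    accel_gb, other_gb = memory_split_gb(name)
    print(f"{name}: ~{accel_gb:.0f} GB on accelerator, ~{other_gb:.0f} GB elsewhere")
```

Lower-precision weights would shrink both numbers proportionally; pass a different `bytes_per_param` to explore that.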

KV cache sharing: Faster long-context processing

Processing long inputs, such as sequences derived from audio or video streams, is essential to many advanced on-device multimodal applications. Gemma 3n introduces KV cache sharing, a feature designed to significantly reduce time to first token for streaming response applications.

KV cache sharing optimizes how the model handles the initial input processing stage (often called the “prefill” phase). The keys and values of the middle layers, from both local and global attention, are shared directly with all the top layers, delivering a notable 2x improvement in prefill performance compared to Gemma 3 4B. This means the model can ingest and understand long prompt sequences much faster than before.
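The sharing pattern can be sketched as a lookup plan. This is a toy model, not Gemma 3n’s implementation: the layer layout, the `first_shared` cut-off, and the function name `kv_source_plan` are hypothetical. Each layer below the cut-off computes its own keys and values; each layer at or above it reuses the cache of the last lower layer with the same attention type (local or global).

```python
def kv_source_plan(layer_types, first_shared):
    """Map each layer index to the layer whose K/V cache it reads.

    layer_types: list of "local" or "global", one entry per layer.
    first_shared: index of the first layer that reuses a lower layer's cache.
    """
    last_own = {}  # attention type -> most recent layer that computed its own K/V
    plan = []
    for idx, kind in enumerate(layer_types):
        if idx < first_shared:
            last_own[kind] = idx
            plan.append(idx)  # computes and stores its own keys and values
        else:
            if kind not in last_own:
                raise ValueError(f"no lower {kind} layer to share from")
            plan.append(last_own[kind])  # reuses an existing cache
    return plan


# Hypothetical 6-layer stack with a repeating local/local/global pattern.
layer_types = ["local", "local", "global", "local", "local", "global"]
plan = kv_source_plan(layer_types, first_shared=3)
own_projections = sum(1 for idx, src in enumerate(plan) if idx == src)

print(plan)             # [0, 1, 2, 1, 1, 2]
print(own_projections)  # 3
```

In this toy stack only half of the layers compute key and value projections during prefill, which is the kind of saving the shared cache is meant to provide; the actual speedup depends on the real architecture.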

Audio understanding: Introducing speech-to-text and translation

Gemma 3n uses an audio encoder based on the Universal Speech Model (USM). The encoder generates one token for every 160 milliseconds of audio (about 6 tokens per second), and these tokens are integrated as input to the language model to provide a fine-grained representation of the sound context.

This integrated audio capability unlocks key features for on-device development, including:

- Automatic Speech Recognition (ASR): Enable high-quality speech-to-text transcription directly on the device.

- Automatic Speech Translation (AST): Translate spoken language into text in another language.

We’ve seen particularly strong AST results for translation between English and Spanish, French, Italian, and Portuguese, offering great potential for developers targeting applications in these languages. For tasks such as speech translation, chain-of-thought prompting can significantly improve results. For example:

<bos><start_of_turn>user

Transcribe the following speech segment in Spanish, then translate it into English:

<start_of_audio><end_of_turn>

<start_of_turn>model
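If you assemble this prompt by hand, a small helper keeps the instruction and the control tokens together. The sketch below is only an illustration: the function name `build_speech_translation_prompt` is hypothetical, and the single-newline layout between turns is an assumption. In practice, a framework’s chat template typically inserts these control tokens for you.

```python
def build_speech_translation_prompt(source_language, target_language):
    """Build a transcribe-then-translate prompt using the tokens shown above.

    The exact whitespace between the control tokens is an assumption.
    """
    instruction = (
        f"Transcribe the following speech segment in {source_language}, "
        f"then translate it into {target_language}:"
    )
    return (
        "<bos><start_of_turn>user\n"
        f"{instruction}\n"
        "<start_of_audio><end_of_turn>\n"
        "<start_of_turn>model\n"
    )


prompt = build_speech_translation_prompt("Spanish", "English")
print(prompt)
```

Asking for the transcription first gives the model an intermediate step to condition on before it produces the translation, which is the chain-of-thought element of the prompt.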

At launch, the Gemma 3n encoder is implemented to process audio clips of up to 30 seconds. However, this is not a fundamental limitation. The underlying audio encoder is a streaming encoder that can process audio of arbitrary length given additional long-form audio training. Follow-up implementations will enable low-latency, long-running streaming applications.
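Combining the 160 ms token rate with the 30-second clip limit gives a quick estimate of how many audio tokens a clip adds to the prompt. This is a sketch under stated assumptions: rounding a partial final frame up to a whole token is a guess, and the function name `audio_token_count` is hypothetical.

```python
import math

MS_PER_AUDIO_TOKEN = 160   # from the text: one token per 160 ms of audio
MAX_CLIP_SECONDS = 30      # launch-time clip limit from the text


def audio_token_count(duration_seconds):
    """Estimate audio tokens for a clip; rounding up is an assumption."""
    if duration_seconds < 0:
        raise ValueError("duration must be non-negative")
    if duration_seconds > MAX_CLIP_SECONDS:
        raise ValueError("clip exceeds the 30-second launch limit")
    return math.ceil(duration_seconds * 1000 / MS_PER_AUDIO_TOKEN)


tokens_per_second = 1000 / MS_PER_AUDIO_TOKEN
print(tokens_per_second)       # 6.25
print(audio_token_count(30))   # 188
```

So a maximum-length clip costs on the order of 190 tokens of context under these assumptions, which is small next to typical text prompts.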

MobileNet-V5: A new state-of-the-art vision encoder

Alongside its integrated audio capabilities, Gemma 3n features a new, highly efficient vision encoder, MobileNet-V5-300M, which delivers state-of-the-art performance for multimodal tasks on edge devices.

MobileNet-V5 is designed for flexibility and power even on constrained hardware, giving developers the following capabilities:

- Multiple input resolutions: Native support for 256×256, 512×512, and 768×768 pixel resolutions lets you balance performance and detail for your specific application.

- Broad visual understanding: Co-trained on extensive multimodal datasets, it excels at a wide range of image and video understanding tasks.

- High throughput: Processes up to 60 frames per second on a Google Pixel, enabling real-time on-device video analysis and interactive experiences.
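One way to reason about the resolution trade-off is to compare pixel counts and the per-frame time budget at the quoted frame rate. The sketch below is illustrative only: using pixel count as a proxy for compute cost is an assumption, and the helper names `relative_pixel_cost` and `pick_resolution` are hypothetical.

```python
SUPPORTED_RESOLUTIONS = (256, 512, 768)  # square input sizes from the text
TARGET_FPS = 60                          # upper bound quoted for a Google Pixel


def relative_pixel_cost(side):
    """Pixel count relative to the smallest supported resolution."""
    return (side * side) / (SUPPORTED_RESOLUTIONS[0] ** 2)


def pick_resolution(max_relative_cost):
    """Pick the largest supported resolution within a relative cost budget."""
    fitting = [s for s in SUPPORTED_RESOLUTIONS if relative_pixel_cost(s) <= max_relative_cost]
    if not fitting:
        raise ValueError("no supported resolution fits the budget")
    return max(fitting)


frame_budget_ms = 1000 / TARGET_FPS  # time available per frame at 60 fps

for side in SUPPORTED_RESOLUTIONS:
    print(f"{side}x{side}: {relative_pixel_cost(side):.0f}x the pixels of 256x256")
print(f"per-frame budget at {TARGET_FPS} fps: {frame_budget_ms:.2f} ms")
print(pick_resolution(5))  # 512
```

Moving from 256×256 to 768×768 means nine times as many pixels per frame, so the higher resolutions are best reserved for tasks that need fine detail.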

This level of performance is achieved through several architectural innovations, including:

- An advanced foundation of MobileNet-V4 blocks, including Universal Inverted Bottlenecks and Mobile MQA.

- A significantly scaled-up architecture, featuring a hybrid, deep pyramid model that is 10x larger than the largest MobileNet-V4 variant.

- A new Multi-Scale Fusion VLM adapter that enhances token quality to improve accuracy and efficiency.

Benefiting from a new architectural design and advanced distillation techniques, MobileNet-V5-300M substantially outperforms the baseline SoViT in Gemma 3 (trained with SigLip, no distillation). On a Google Pixel Edge TPU, it achieves a 13x speedup with quantization (6.5x without), requires 46% fewer parameters, and uses 4x less memory, while significantly improving accuracy on vision-language tasks.

We look forward to sharing more details about the work behind this model. Stay tuned for the upcoming MobileNet-V5 technical report, which will detail the model architecture, data scaling strategies, and advanced distillation techniques.

Making Gemma 3n accessible from day one was a top priority. We’ve partnered with many great open source developers and organizations, including AMD, Axolotl, Docker, Hugging Face, llama.cpp, LMStudio, MLX, NVIDIA, Ollama, RedHat, SGLang, Unsloth, and vLLM.

But this ecosystem is only the beginning. The true power of this technology lies in what you build with it. That is why we are launching the Gemma 3n Impact Challenge. Your mission: use Gemma 3n’s unique on-device, offline, and multimodal capabilities to build a product for a better world. With $150,000 in prizes, we’re looking for compelling video stories and “wow” factor demos that show real-world impact. Join the challenge and help build a better future.

Get started with Gemma 3n today

Ready to explore the possibilities of Gemma 3n today? Here’s how:

- Experiment directly: Use Google AI Studio to try Gemma 3n in just a few clicks. Gemma models can also be deployed directly to Cloud Run from AI Studio.

- Learn and integrate: Dive into our comprehensive documentation to quickly integrate Gemma into your projects, or get started with our inference and fine-tuning guides.