In 2024, the Ministry of Financial system, Commerce and Trade (METI) launched the Generative AI Accelerator Challenge (GENIAC)—a Japanese nationwide program to spice up generative AI by offering corporations with funding, mentorship, and large compute sources for basis mannequin (FM) growth. AWS was chosen because the cloud supplier for GENIAC’s second cycle (cycle 2). It supplied infrastructure and technical steering for 12 collaborating organizations. On paper, the problem appeared simple: give every group entry to a whole lot of GPUs/Trainium chips and let innovation ensue. In follow, profitable FM coaching required excess of uncooked {hardware}.

AWS found that allocating over 1,000 accelerators was merely the start line—the true problem lay in architecting a dependable system and overcoming distributed coaching obstacles. Throughout GENIAC cycle 2, 12 clients efficiently deployed 127 Amazon EC2 P5 situations (NVIDIA H100 TensorCore GPU servers) and 24 Amazon EC2 Trn1 situations (AWS Trainium1 servers) in a single day. Over the next 6 months, a number of large-scale fashions had been skilled, together with notable tasks like Stockmark-2-100B-Instruct-beta, Llama 3.1 Shisa V2 405B, and Llama-3.1-Future-Code-Ja-8B, and others.

This put up shares the important thing insights from this engagement and beneficial classes for enterprises or nationwide initiatives aiming to construct FMs at scale.

Cross-functional engagement groups

A vital early lesson from technical engagement for the GENIAC was that working a multi-organization, national-scale machine studying (ML) initiative requires coordinated assist throughout numerous inner groups. AWS established a digital group that introduced collectively account groups, specialist Options Architects, and repair groups. The GENIAC engagement mannequin thrives on shut collaboration between clients and a multi-layered AWS group construction, as illustrated within the following determine.

Prospects (Cx) usually encompass enterprise and technical leads, together with ML and platform engineers, and are accountable for executing coaching workloads. AWS account groups (Options Architects and Account Managers) handle the connection, preserve documentation, and preserve communication flows with clients and inner specialists. The World Broad Specialist Group (WWSO) Frameworks group makes a speciality of large-scale ML workloads, with a deal with core HPC and container companies reminiscent of AWS ParallelCluster, Amazon Elastic Kubernetes Service (Amazon EKS), and Amazon SageMaker HyperPod. The WWSO Frameworks group is accountable for establishing this engagement construction and supervising technical engagements on this program. They lead the engagement in partnership with different stakeholders and function an escalation level for different stakeholders. They work straight with the service groups—Amazon Elastic Compute Cloud (Amazon EC2), Amazon Easy Storage Service (Amazon S3), Amazon FSx, and SageMaker HyperPod—to assist navigate engagements, escalations (enterprise and technical), and ensure the engagement framework is in working order. They supply steering on coaching and inference to clients and educate different groups on the know-how. The WWSO Frameworks group labored carefully with Lead Options Architects (Lead SAs), a job particularly designated to assist GENIAC engagements. These Lead SAs function a cornerstone of this engagement. They’re an extension of the Frameworks specialist group and work straight with clients and the account groups. They interface with clients and interact their Framework specialist counterparts when clarification or additional experience is required for in-depth technical discussions or troubleshooting. With this layered construction, AWS can scale technical steering successfully throughout complicated FM coaching workloads.

One other vital success issue for GENIAC was establishing sturdy communication channels between clients and AWS members. The inspiration of our communication technique was a devoted inner Slack channel for GENIAC program coordination, connecting AWS account groups with lead SAs. This channel enabled real-time troubleshooting, information sharing, and speedy escalation of buyer points to the suitable technical specialists and repair group members. Complementing this was an exterior Slack channel that bridged AWS groups with clients, making a collaborative atmosphere the place individuals may ask questions, share insights, and obtain instant assist. This direct line of communication considerably lowered decision occasions and fostered a group of follow amongst individuals.

AWS maintained complete workload monitoring paperwork, which clarifies every buyer’s coaching implementation particulars (mannequin structure, distributed coaching frameworks, and associated software program parts) alongside infrastructure specs (occasion sorts and portions, cluster configurations for AWS ParallelCluster or SageMaker HyperPod deployments, and storage options together with Amazon FSx for Lustre and Amazon S3). This monitoring system additionally maintained a chronological historical past of buyer interactions and assist circumstances. As well as, the engagement group held weekly assessment conferences to trace excellent buyer inquiries and technical points. This common cadence made it doable for group members to share classes realized and apply them to their very own buyer engagements, fostering steady enchancment and information switch throughout this system.

With a structured method to communication and documentation, we may determine widespread challenges, reminiscent of misconfigured NCCL library impacting multi-node performance, share options throughout groups, and repeatedly refine our engagement mannequin. The detailed monitoring system supplied beneficial insights for future GENIAC cycles, serving to us anticipate buyer wants and proactively handle potential bottlenecks within the FM growth course of.

Reference architectures

One other early takeaway was the significance of stable reference architectures. Relatively than let every group configure their very own cluster from scratch, AWS created pre-validated templates and automation for 2 most important approaches: AWS ParallelCluster (for a user-managed HPC cluster) and SageMaker HyperPod (for a managed, resilient cluster service). These reference architectures coated the total stack—from compute, community, and storage to container environments and monitoring—and had been delivered as a GitHub repository so groups may deploy them with minimal friction.

AWS ParallelCluster proved invaluable as an open supply cluster administration device for multi-node GPU coaching. AWS ParallelCluster automates the setup of a Slurm-based HPC cluster on AWS. AWS ParallelCluster simplifies cluster provisioning primarily based on the open supply Slurm scheduler, utilizing a easy YAML config to face up the atmosphere. For the GEINIAC program, AWS additionally provided SageMaker HyperPod as an alternative choice for some groups. SageMaker HyperPod is a managed service that provisions GPU and Trainium clusters for large-scale ML. HyperPod integrates with orchestrators like Slurm or Kubernetes (Amazon EKS) for scheduling, offering further managed performance round cluster resiliency. By together with reference architectures for each AWS ParallelCluster and SageMaker HyperPod, the GENIAC program gave individuals flexibility—some opted for the fine-grained management of managing their very own HPC cluster, whereas others most popular the comfort and resilience of a managed SageMaker HyperPod cluster.

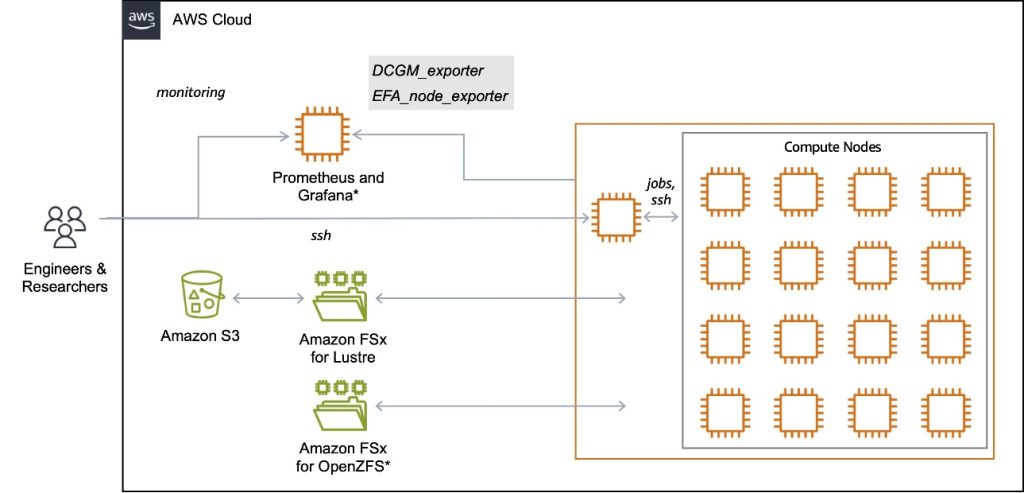

The reference structure (proven within the following diagram) seamlessly combines compute, networking, storage, and monitoring into an built-in system particularly designed for large-scale FM coaching.

The bottom infrastructure stack is offered as an AWS CloudFormation template that provisions the whole infrastructure stack with minimal effort. This template routinely configures a devoted digital non-public cloud (VPC) with optimized networking settings and implements a high-performance FSx for Lustre file system for coaching information (complemented by optionally available Amazon FSx for OpenZFS assist for shared residence directories). The structure is accomplished with an S3 bucket that gives sturdy, long-term storage for datasets and mannequin checkpoints, sustaining information availability effectively past particular person coaching cycles. This reference structure employs a hierarchical storage method that balances efficiency and cost-effectiveness. It makes use of Amazon S3 for sturdy, long-term storage of coaching information and checkpoints, and hyperlinks this bucket to the Lustre file system by an information repository affiliation (DRA). The DRA permits computerized and clear information switch between Amazon S3 and FSx for Lustre, permitting high-performance entry with out guide copying. You should utilize the next CloudFormation template to create the S3 bucket used on this structure.

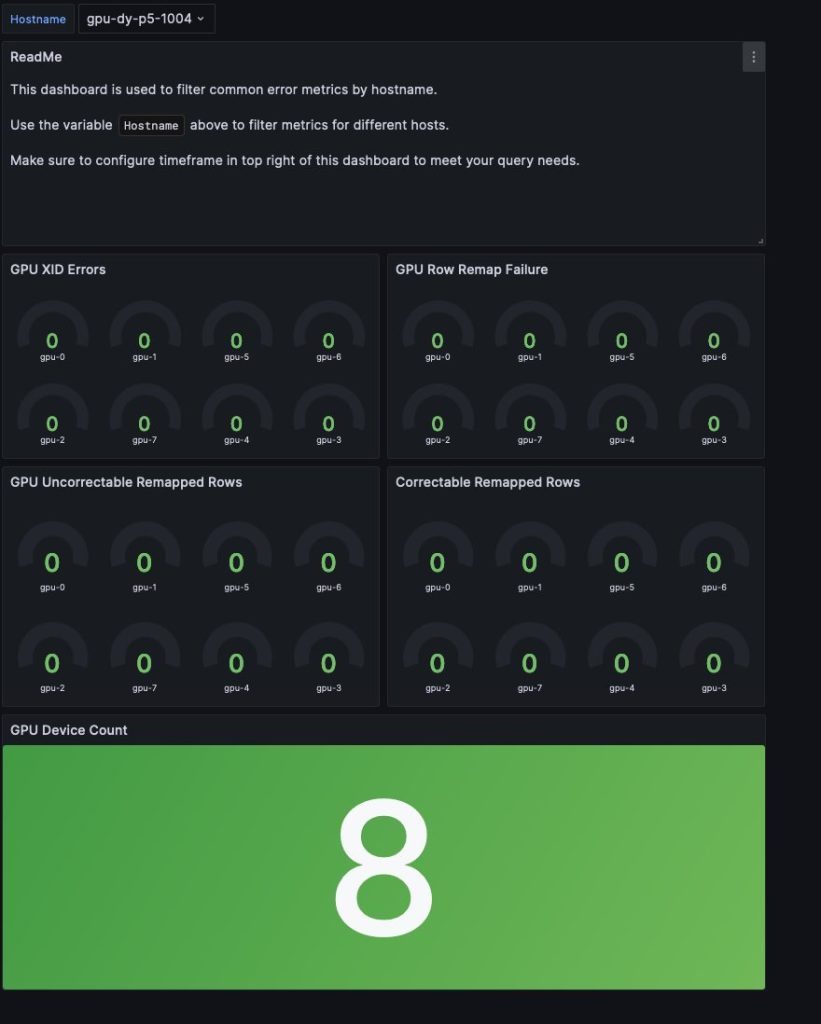

The optionally available monitoring infrastructure combines Amazon Managed Service for Prometheus and Amazon Managed Grafana (or self-managed Grafana service running on Amazon EC2) to supply complete observability. It built-in DCGM Exporter for GPU metrics and EFA Exporter for community metrics, enabling real-time monitoring of system well being and efficiency. This setup permits for steady monitoring of GPU well being, community efficiency, and coaching progress, with automated alerting for anomalies by Grafana Dashboards. For instance, the GPU Health Dashboard (see the next screenshot) gives metrics of widespread GPU errors, together with Uncorrectable Remapped Rows, Correctable Remapped Rows, XID Error Codes, Row Remap Failure, Thermal Violations, and Lacking GPUs (from Nvidia-SMI), serving to customers determine {hardware} failures as rapidly as doable.

Reproducible deployment guides and structured enablement periods

Even the perfect reference architectures are solely helpful if groups know how one can use them. A vital ingredient of GENIAC’s success was reproducible deployment guides and structured enablement by workshops.On October 3, 2024, AWS Japan and the WWSO Frameworks group carried out a mass enablement session for GENIAC Cycle 2 individuals, inviting Frameworks group members from the USA to share finest practices for FM coaching on AWS.

The enablement session welcomed over 80 individuals and supplied a complete mixture of lectures, hands-on labs, and group discussions—incomes a CSAT rating of 4.75, reflecting its robust impression and relevance to attendees. The lecture periods coated infrastructure fundamentals, exploring orchestration choices reminiscent of AWS ParallelCluster, Amazon EKS, and SageMaker HyperPod, together with the software program parts vital to construct and practice large-scale FMs utilizing AWS. The periods highlighted sensible challenges in FM growth—together with large compute necessities, scalable networking, and high-throughput storage—and mapped them to applicable AWS companies and finest practices. (For extra info, see the slide deck from the lecture session.) One other session centered on finest practices, the place attendees realized to arrange efficiency dashboards with Prometheus and Grafana, monitor EFA site visitors, and troubleshoot GPU failures utilizing NVIDIA’s DCGM toolkit and customized Grafana dashboards primarily based on the Frameworks group’s expertise managing a cluster with 2,000 P5 situations.

Moreover, the WWSO group ready workshops for each AWS ParallelCluster (Machine Learning on AWS ParallelCluster) and SageMaker HyperPod (Amazon SageMaker HyperPod Workshop), offering detailed deployment guides for the aforementioned reference structure. Utilizing these supplies, individuals carried out hands-on workout routines deploying their coaching clusters utilizing Slurm with file techniques together with FSx for Lustre and FSx for OpenZFS, working multi-node PyTorch distributed coaching. One other section of the workshop centered on observability and efficiency tuning, instructing individuals how one can monitor useful resource utilization, community throughput (EFA site visitors), and system well being. By the top of those enablement periods, clients and supporting AWS engineers had established a shared baseline of data and a toolkit of finest practices. Utilizing the belongings and information gained through the workshops, clients participated in onboarding periods—structured, hands-on conferences with their Lead SAs. These periods differed from the sooner workshops by specializing in customer-specific cluster deployments tailor-made to every group’s distinctive use case. Throughout every session, Lead SAs labored straight with groups to deploy coaching environments, validate setup utilizing NCCL checks, and resolve technical points in actual time.

Buyer suggestions

“To basically remedy information entry challenges, we considerably improved processing accuracy and cost-efficiency by making use of two-stage reasoning and autonomous studying with SLM and LLM for normal gadgets, and visible studying with VLM utilizing 100,000 artificial information samples for detailed gadgets. We additionally utilized Amazon EC2 P5 situations to reinforce analysis and growth effectivity. These formidable initiatives had been made doable because of the assist of many individuals, together with AWS. We’re deeply grateful for his or her in depth assist.”

– Takuma Inoue, Govt Officer, CTO at AI Inside

“Future selected AWS to develop large-scale language fashions specialised for Japanese and software program growth at GENIAC. When coaching large-scale fashions utilizing a number of nodes, Future had considerations about atmosphere settings reminiscent of inter-node communication, however AWS had a variety of instruments, reminiscent of AWS ParallelCluster, and we obtained robust assist from AWS Options Architects, which enabled us to begin large-scale coaching rapidly.”

– Makoto Morishita, Chief Analysis Engineer at Future

Outcomes and searching forward

GENIAC has demonstrated that coaching FMs at scale is basically an organizational problem, not merely a {hardware} one. By way of structured assist, reproducible templates, and a cross-functional engagement group (WWSO Frameworks Staff, Lead SAs, and Account Groups), even small groups can efficiently execute large workloads within the cloud. Because of this construction, 12 clients launched over 127 P5 situations and 24 Trn1 situations throughout a number of AWS Areas, together with Asia Pacific (Tokyo), in a single day. A number of massive language fashions (LLMs) and customized fashions had been skilled efficiently, together with a 32B multimodal mannequin on Trainium and a 405B tourism-focused multilingual mannequin.The technical engagement framework established by GENIAC Cycle 2 has supplied essential insights into large-scale FM growth. Constructing on this expertise, AWS is advancing enhancements throughout a number of dimensions: engagement fashions, technical belongings, and implementation steering. We’re strengthening cross-functional collaboration and systematizing information sharing to ascertain a extra environment friendly assist construction. Reference architectures and automatic coaching templates proceed to be enhanced, and sensible technical workshops and finest practices are being codified primarily based on classes realized.AWS has already begun preparations for the subsequent cycle of GENIAC. As a part of the onboarding course of, AWS hosted a complete technical occasion in Tokyo on April 3, 2025, to equip FM builders with hands-on expertise and architectural steering. The occasion, attended by over 50 individuals, showcased the dedication AWS has to supporting scalable, resilient generative AI infrastructure.

The occasion highlighted the technical engagement mannequin of AWS for GENIAC, alongside different assist mechanisms, together with the LLM Improvement Assist Program and Generative AI Accelerator. The day featured an intensive workshop on SageMaker HyperPod and Slurm, the place individuals gained hands-on expertise with multi-node GPU clusters, distributed PyTorch coaching, and observability instruments. Classes coated important matters, together with containerized ML, distributed coaching methods, and AWS purpose-built silicon options. Classmethod Inc. shared sensible SageMaker HyperPod insights, and AWS engineers demonstrated architectural patterns for large-scale GPU workloads. The occasion showcased AWS’s end-to-end generative AI assist panorama, from infrastructure to deployment instruments, setting the stage for GENIAC Cycle 3. As AWS continues to develop its assist for FM growth, the success of GENIAC serves as a blueprint for enabling organizations to construct and scale their AI capabilities successfully.

By way of these initiatives, AWS will proceed to supply sturdy technical assist, facilitating the graceful execution of large-scale FM coaching. We stay dedicated to contributing to the development of generative AI growth everywhere in the world by our technical experience.

This put up was contributed by AWS GENIAC Cycle 2 core members Masato Kobayashi, Kenta Ichiyanagi, and Satoshi Shirasawa, Accelerated Computing Specialist Mai Kiuchi, in addition to Lead SAs Daisuke Miyamoto, Yoshitaka Haribara, Kei Sasaki, Soh Ohara, and Hiroshi Tokoyo with Govt Sponsorship from Toshi Yasuda. Hiroshi Hata and Tatsuya Urabe additionally supplied assist as core member and Lead SA throughout their time at AWS.

The authors lengthen their gratitude to WWSO Frameworks members Maxime Hugues, Matthew Nightingale, Aman Shanbhag, Alex Iankoulski, Anoop Saha, Yashesh Shroff, Natarajan Chennimalai Kumar, Shubha Kumbadakone, and Sundar Ranganathan for his or her technical contributions. Pierre-Yves Aquilanti supplied in-depth assist throughout his time at AWS.

Concerning the authors

Keita Watanabe is a Senior Specialist Options Architect on the AWS WWSO Frameworks group. His background is in machine studying analysis and growth. Previous to becoming a member of AWS, Keita labored within the ecommerce trade as a analysis scientist creating picture retrieval techniques for product search. He leads GENIAC technical engagements.

Keita Watanabe is a Senior Specialist Options Architect on the AWS WWSO Frameworks group. His background is in machine studying analysis and growth. Previous to becoming a member of AWS, Keita labored within the ecommerce trade as a analysis scientist creating picture retrieval techniques for product search. He leads GENIAC technical engagements.

Masaru Isaka is a Principal Enterprise Improvement on the AWS WWSO Frameworks group, specializing in machine studying and generative AI options. Having engaged with GENIAC since its inception, he leads go-to-market methods for AWS’s generative AI choices.

Masaru Isaka is a Principal Enterprise Improvement on the AWS WWSO Frameworks group, specializing in machine studying and generative AI options. Having engaged with GENIAC since its inception, he leads go-to-market methods for AWS’s generative AI choices.