Managing ModelOps workflows can be complex and time-consuming. If you have struggled with setting up project templates for your data science team, you know that the previous approach using AWS Service Catalog required configuring portfolios and products and managing complex permissions, which added significant administrative overhead before your team could start building machine learning (ML) pipelines.

Amazon SageMaker AI Projects now offers an easier path: Amazon S3-based templates. With this new capability, you can store AWS CloudFormation templates directly in Amazon Simple Storage Service (Amazon S3) and manage their entire lifecycle using familiar S3 features such as versioning, lifecycle policies, and S3 Cross-Region Replication. This means you can provide your data science team with secure, version-controlled, automated project templates with significantly less overhead.

This post explores how you can use Amazon S3-based templates to simplify ModelOps workflows, walks through the key benefits compared to the Service Catalog approach, and demonstrates how to create a custom ModelOps solution that integrates with GitHub and GitHub Actions, giving your team one-click provisioning of a fully functional ML environment.

What is Amazon SageMaker AI Projects?

Teams can use Amazon SageMaker AI Projects to create, share, and manage fully configured ModelOps projects. Within this structured environment, you can organize code, data, and experiments, which facilitates collaboration and reproducibility.

Each project can include continuous integration and delivery (CI/CD) pipelines, model registries, deployment configurations, and other ModelOps components, all managed within SageMaker AI. Reusable templates help standardize ModelOps practices by encoding best practices for data processing, model development, training, deployment, and monitoring. The following are popular use cases you can orchestrate using SageMaker AI Projects:

- Automate ML workflows: Set up CI/CD workflows that automatically build, test, and deploy ML models.

- Enforce governance and compliance: Help your projects follow organizational standards for security, networking, and resource tagging. Consistent tagging practices facilitate accurate cost allocation across teams and projects while streamlining security audits.

- Accelerate time-to-value: Provide pre-configured environments so data scientists focus on ML problems, not infrastructure.

- Improve collaboration: Establish consistent project structures for easier code sharing and reuse.

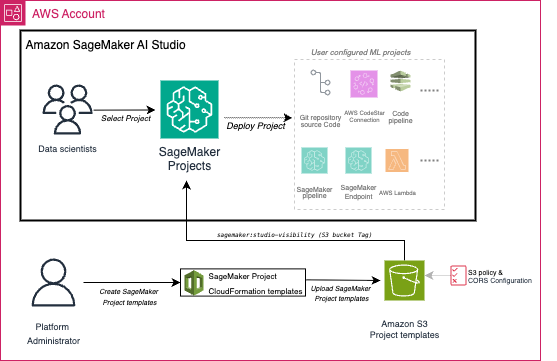

The following diagram shows how SageMaker AI Projects offers separate workflows for administrators and for ML engineers and data scientists. Administrators create and manage the ML use-case templates, and ML engineers and data scientists consume the approved templates in a self-service fashion.

What's new: Amazon SageMaker AI S3-based project templates

The latest update to SageMaker AI Projects introduces the ability for administrators to store and manage ML project templates directly in Amazon S3. S3-based templates are a simpler and more flexible alternative to the previously required Service Catalog. With this enhancement, AWS CloudFormation templates can be versioned, secured, and efficiently shared across teams using the rich access controls, lifecycle management, and replication features provided by S3. Data science teams can now launch new ModelOps projects from these S3-backed templates directly within Amazon SageMaker Studio. This helps organizations maintain consistency and compliance with their internal standards at scale.

When you store templates in Amazon S3, they become available in all AWS Regions where SageMaker AI Projects is supported. To share templates across AWS accounts, you can use S3 bucket policies and cross-account access controls. Turning on versioning in S3 provides a complete history of template changes, which facilitates audits and rollbacks and supplies an immutable record of how project templates evolve over time.

If your teams currently use Service Catalog-based templates, the S3-based approach provides a straightforward migration path. When migrating from Service Catalog to S3, the primary considerations are provisioning new SageMaker roles to replace Service Catalog-specific roles, updating template references accordingly, uploading templates to S3 with proper tagging, and configuring domain-level tags to point to the template bucket location. For organizations using centralized template repositories, cross-account S3 bucket policies must be established to enable template discovery from consumer accounts, with each consumer account's SageMaker domain tagged to reference the central bucket. S3-based and Service Catalog templates are displayed in separate tabs within the SageMaker AI Projects creation interface, so organizations can introduce S3 templates gradually without disrupting existing workflows during the migration.
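As a rough illustration of the cross-account sharing and versioning described above, the following Python sketch builds a read-only bucket policy for a central template bucket and then turns on versioning. The function names, the choice of S3 actions, and the use of account root principals are assumptions made for this example, not settings prescribed by SageMaker AI Projects; `s3_client` is expected to be something like `boto3.client("s3")`.

```python
import json


def build_template_bucket_policy(bucket, prefix, consumer_account_ids):
    """Return a bucket policy granting consumer accounts read-only template access.

    The action list is an assumption for illustration; check the official
    setup instructions for the permissions your environment actually needs.
    """
    principals = [f"arn:aws:iam::{acct}:root" for acct in consumer_account_ids]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowConsumerAccountsToListTemplates",
                "Effect": "Allow",
                "Principal": {"AWS": principals},
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}*"]}},
            },
            {
                "Sid": "AllowConsumerAccountsToReadTemplates",
                "Effect": "Allow",
                "Principal": {"AWS": principals},
                "Action": ["s3:GetObject", "s3:GetObjectTagging"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            },
        ],
    }


def apply_policy_and_versioning(s3_client, bucket, policy):
    """Attach the policy and enable versioning on the template bucket."""
    s3_client.put_bucket_policy(Bucket=bucket, Policy=json.dumps(policy))
    s3_client.put_bucket_versioning(
        Bucket=bucket, VersioningConfiguration={"Status": "Enabled"}
    )
```

Keeping the policy builder separate from the API calls makes it possible to review or unit test the generated document before anything is applied to a bucket.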

S3-based ModelOps projects support custom CloudFormation templates that you create for your organization's ML use case. AWS-provided templates (such as the built-in ModelOps project templates) continue to be available exclusively through Service Catalog. Your custom templates must be valid CloudFormation files in YAML format. To start using S3-based templates with SageMaker AI Projects, your SageMaker domain (the collaborative workspace for your ML teams) must include the tag sagemaker:projectS3TemplatesLocation with the value s3://<bucket-name>/<prefix>/. Each template file uploaded to S3 must be tagged with sagemaker:studio-visibility=true to appear in the SageMaker AI Studio Projects console. You also need to grant read access to SageMaker execution roles in the S3 bucket policy and enable a CORS configuration on the S3 bucket so that SageMaker AI Projects can access the S3 templates.
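The tagging and CORS requirements above could be scripted along the following lines. This is a minimal sketch, not the official setup procedure: the helper names are hypothetical, the CORS rule (methods, headers, and the origins you pass in) is an assumption to adapt to your Studio setup, and the two client arguments are expected to be `boto3.client("s3")` and `boto3.client("sagemaker")`. Only the two tag keys and the `s3://<bucket-name>/<prefix>/` value format come from the requirements described in this post.

```python
DOMAIN_TAG_KEY = "sagemaker:projectS3TemplatesLocation"
VISIBILITY_TAG = {"Key": "sagemaker:studio-visibility", "Value": "true"}


def domain_location_tag(bucket, prefix):
    """Build the domain tag that points SageMaker AI Projects at the templates."""
    return {"Key": DOMAIN_TAG_KEY, "Value": f"s3://{bucket}/{prefix.strip('/')}/"}


def cors_configuration(allowed_origins):
    """Build a read-only CORS configuration (rule contents are an assumption)."""
    return {
        "CORSRules": [
            {
                "AllowedMethods": ["GET", "HEAD"],
                "AllowedOrigins": list(allowed_origins),
                "AllowedHeaders": ["*"],
            }
        ]
    }


def publish_template(s3_client, sagemaker_client, bucket, prefix, file_name,
                     body, domain_arn, allowed_origins):
    """Upload one template, tag it as visible, set CORS, and tag the domain."""
    key = f"{prefix.strip('/')}/{file_name}"
    s3_client.put_object(Bucket=bucket, Key=key, Body=body)
    s3_client.put_object_tagging(
        Bucket=bucket, Key=key, Tagging={"TagSet": [VISIBILITY_TAG]}
    )
    s3_client.put_bucket_cors(
        Bucket=bucket, CORSConfiguration=cors_configuration(allowed_origins)
    )
    sagemaker_client.add_tags(
        ResourceArn=domain_arn, Tags=[domain_location_tag(bucket, prefix)]
    )
    return key
```

The CORS and domain tag calls only need to run once per bucket and domain, so in practice you might split them from the per-template upload.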

The following diagram illustrates how S3-based templates integrate with SageMaker AI Projects to enable scalable ModelOps workflows. The setup operates in two separate workflows: a one-time configuration by administrators and project launch by ML engineers and data scientists. When ML engineers or data scientists launch a new ModelOps project in SageMaker AI, SageMaker AI launches an AWS CloudFormation stack to provision the resources defined in the template. After the process is complete, you can access all specified resources and the configured CI/CD pipelines in your project.

You can manage the lifecycle of launched projects through the SageMaker Studio console, where users can navigate to S3 Templates, select a project, and use the Actions dropdown menu to update or delete projects. Project updates can modify existing template parameters or the template URL itself, triggering CloudFormation stack updates that are validated before execution. Project deletion removes all associated CloudFormation resources and configurations. These lifecycle operations can also be performed programmatically using the SageMaker APIs.
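For the programmatic route, the sketch below assembles request payloads for the SageMaker `CreateProject` and `UpdateProject` operations. The helper names are hypothetical, and the request field names (`TemplateProviders`, `TemplateProvidersToUpdate`, `CfnTemplateProvider`, and their members) reflect one reading of the API reference; treat them as assumptions and confirm them against the current SageMaker API documentation before use.

```python
def cfn_parameters(params):
    """Convert a plain dict into the Key/Value list form used for parameters."""
    return [{"Key": k, "Value": str(v)} for k, v in sorted(params.items())]


def create_project_request(project_name, template_name, template_url,
                           launch_role_arn, params):
    """Build keyword arguments for a create_project call (shape is an assumption)."""
    return {
        "ProjectName": project_name,
        "TemplateProviders": [
            {
                "CfnTemplateProvider": {
                    "TemplateName": template_name,
                    "TemplateURL": template_url,
                    "RoleARN": launch_role_arn,
                    "Parameters": cfn_parameters(params),
                }
            }
        ],
    }


def update_project_request(project_name, template_name, template_url, params):
    """Build keyword arguments for an update_project call (shape is an assumption)."""
    return {
        "ProjectName": project_name,
        "TemplateProvidersToUpdate": [
            {
                "CfnTemplateProvider": {
                    "TemplateName": template_name,
                    "TemplateURL": template_url,
                    "Parameters": cfn_parameters(params),
                }
            }
        ],
    }
```

With a client such as `boto3.client("sagemaker")`, the payloads would be passed as `client.create_project(**request)` or `client.update_project(**request)`, and a project would be removed with `client.delete_project(ProjectName=project_name)`.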

To demonstrate the power of S3-based templates, let's look at a real-world scenario where an admin team needs to provide data scientists with a standardized ModelOps workflow that integrates with their existing GitHub repositories.

Use case: GitHub-integrated MLOps template for enterprise teams

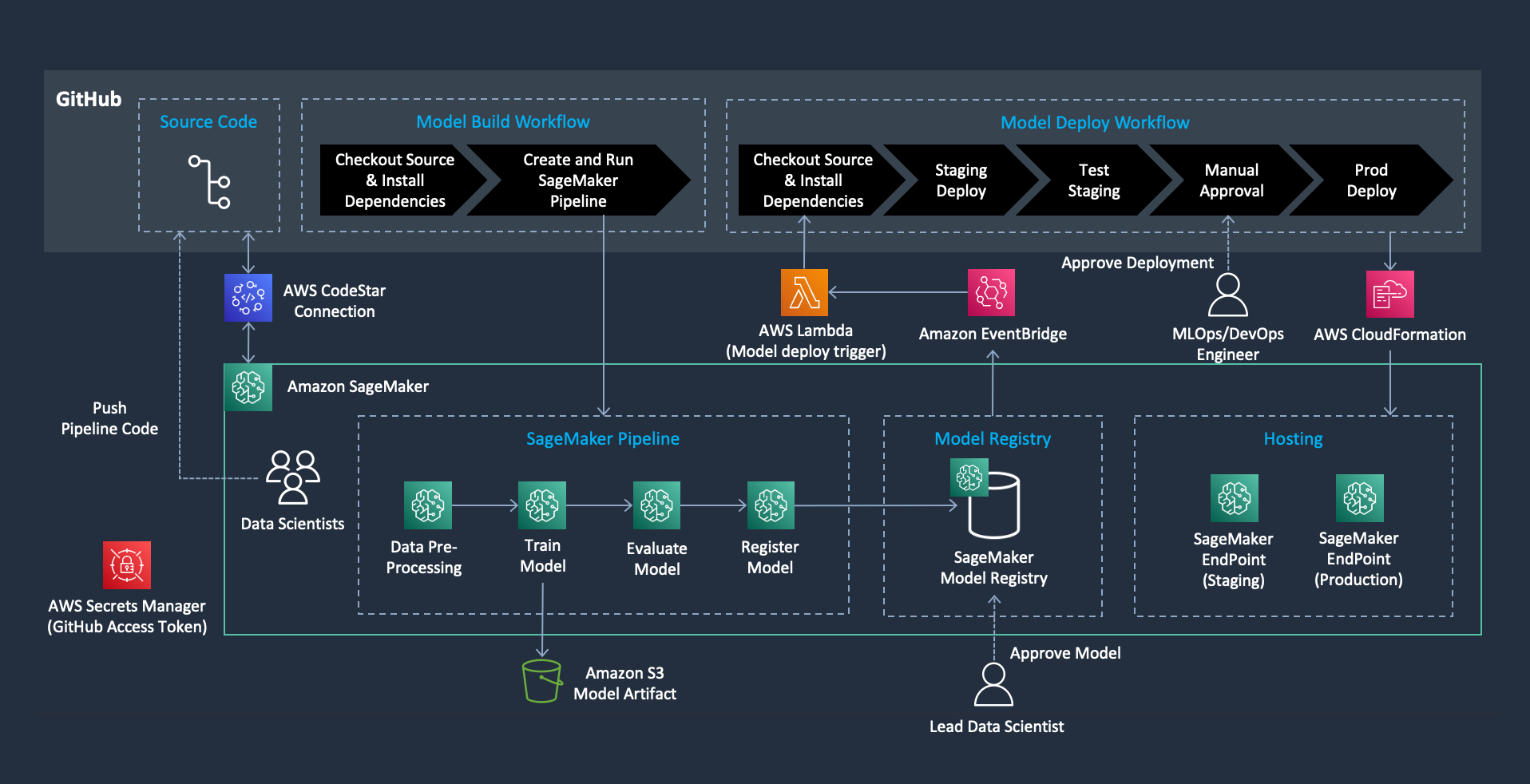

Many organizations use GitHub as their primary source control system and want to use GitHub Actions for CI/CD while using SageMaker for ML workloads. However, setting up this integration requires configuring multiple AWS services, establishing secure connections, and implementing proper approval workflows, a complex task that can be time-consuming if done manually. Our S3-based template solves this challenge by provisioning a complete ModelOps pipeline that includes CI/CD orchestration, SageMaker Pipelines components, and event-driven automation. The following diagram illustrates the end-to-end workflow provisioned by this ModelOps template.

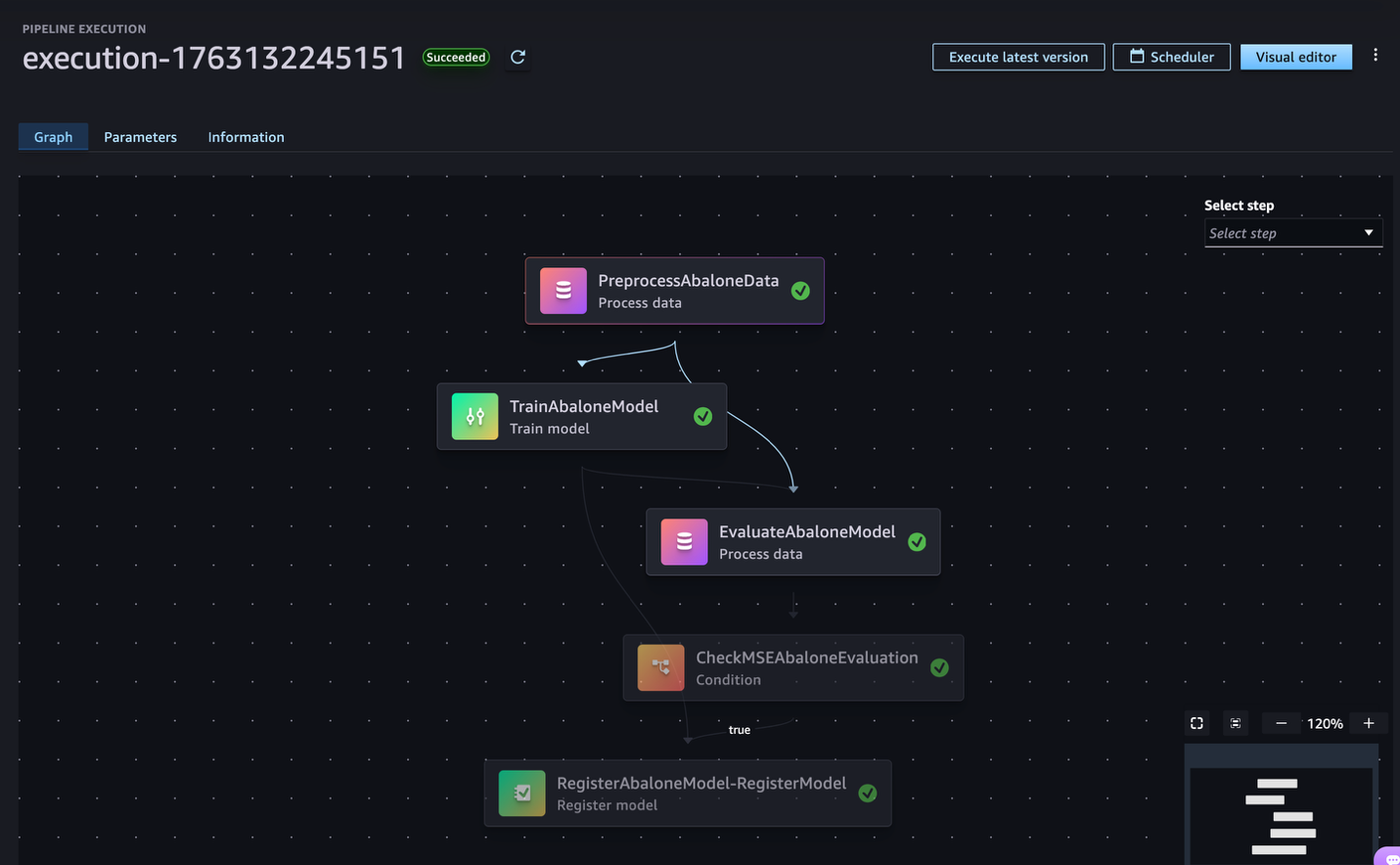

This sample ModelOps project with S3-based templates enables fully automated and governed ModelOps workflows. Each ModelOps project includes a GitHub repository pre-configured with Actions workflows and secure AWS CodeConnections for seamless integration. Upon code commits, a SageMaker pipeline is triggered to orchestrate a standardized process involving data preprocessing, model training, evaluation, and registration. For deployment, the system supports automated staging on model approval, with robust validation checks, a manual approval gate for promoting models to production, and a secure, event-driven architecture using AWS Lambda and Amazon EventBridge. Throughout the workflow, governance is supported by SageMaker Model Registry for tracking model versions and lineage, well-defined approval steps, secure credential management using AWS Secrets Manager, and consistent tagging and naming standards for all resources.
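To make the event-driven deployment step more concrete, here is a small sketch of the kind of Amazon EventBridge event pattern that can react to a model approval in SageMaker Model Registry. It is an illustration under assumptions, not the pattern used by the sample template: the function names are hypothetical, and the local `matches` helper only handles exact-value matching so that the pattern can be checked without calling AWS.

```python
def model_approval_event_pattern(model_package_group_name):
    """Event pattern for approved model packages in one model package group."""
    return {
        "source": ["aws.sagemaker"],
        "detail-type": ["SageMaker Model Package State Change"],
        "detail": {
            "ModelPackageGroupName": [model_package_group_name],
            "ModelApprovalStatus": ["Approved"],
        },
    }


def matches(pattern, event):
    """Check an event against a pattern, supporting exact-value lists only."""
    for key, expected in pattern.items():
        actual = event.get(key)
        if isinstance(expected, dict):
            # Nested pattern: the event must have a nested object that matches.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True
```

A rule with such a pattern would typically target a Lambda function that starts the staging deployment, which keeps the deployment workflow decoupled from the training pipeline.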

When data scientists select this template from SageMaker Studio, they provision a fully functional ModelOps environment through a streamlined process. They push their ML code to GitHub using the built-in Git functionality within the Studio integrated development environment (IDE), and the pipeline automatically handles model training, evaluation, and progressive deployment through staging to production, all while maintaining enterprise security and compliance requirements. The complete setup instructions, along with the code for this ModelOps template, are available in our GitHub repository.

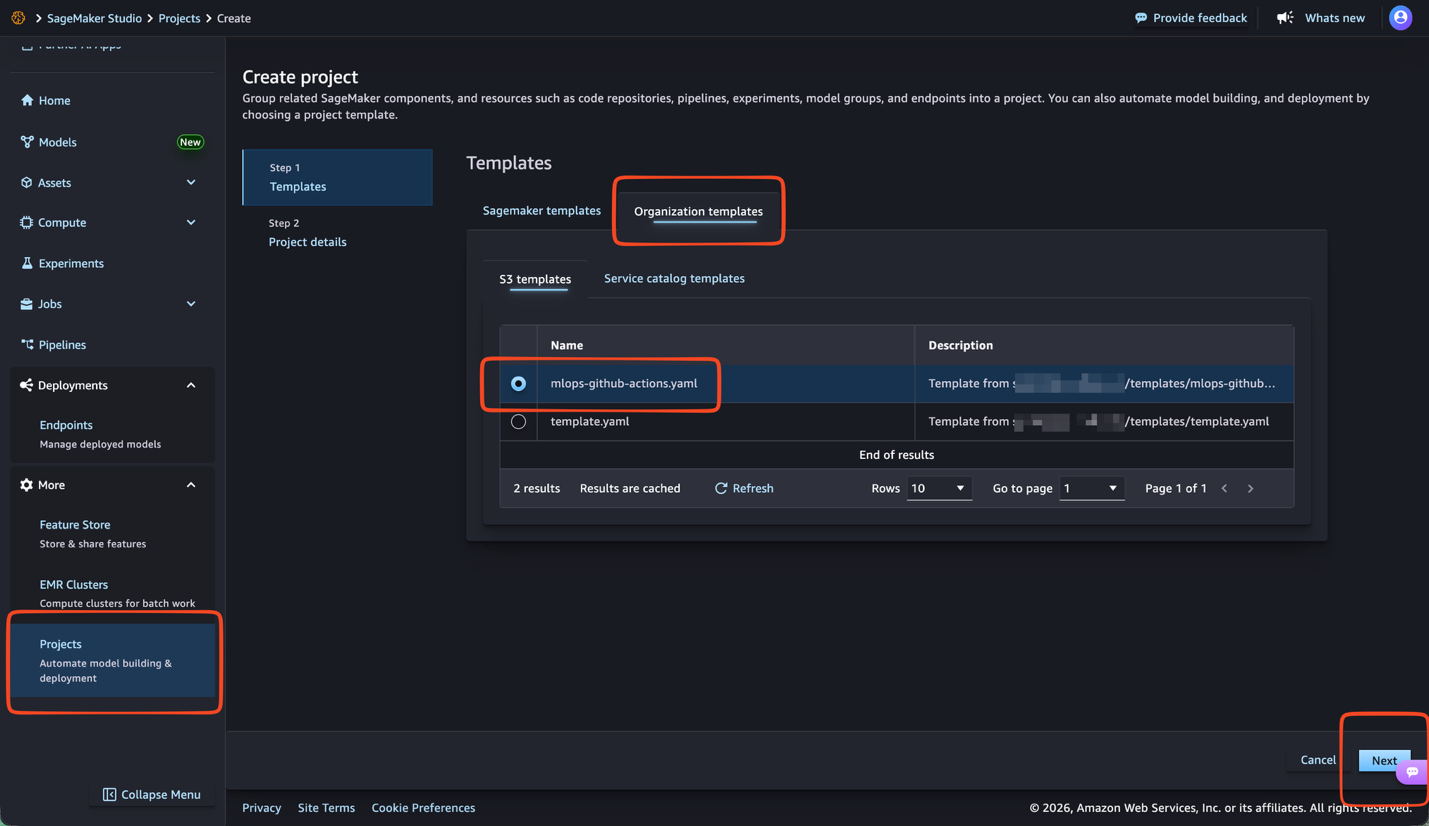

After you follow the instructions in the repository, you can find the mlops-github-actions template in the SageMaker AI Projects section of the SageMaker AI Studio console by choosing Projects from the navigation pane, selecting the Organization templates tab, and choosing Next, as shown in the following image.

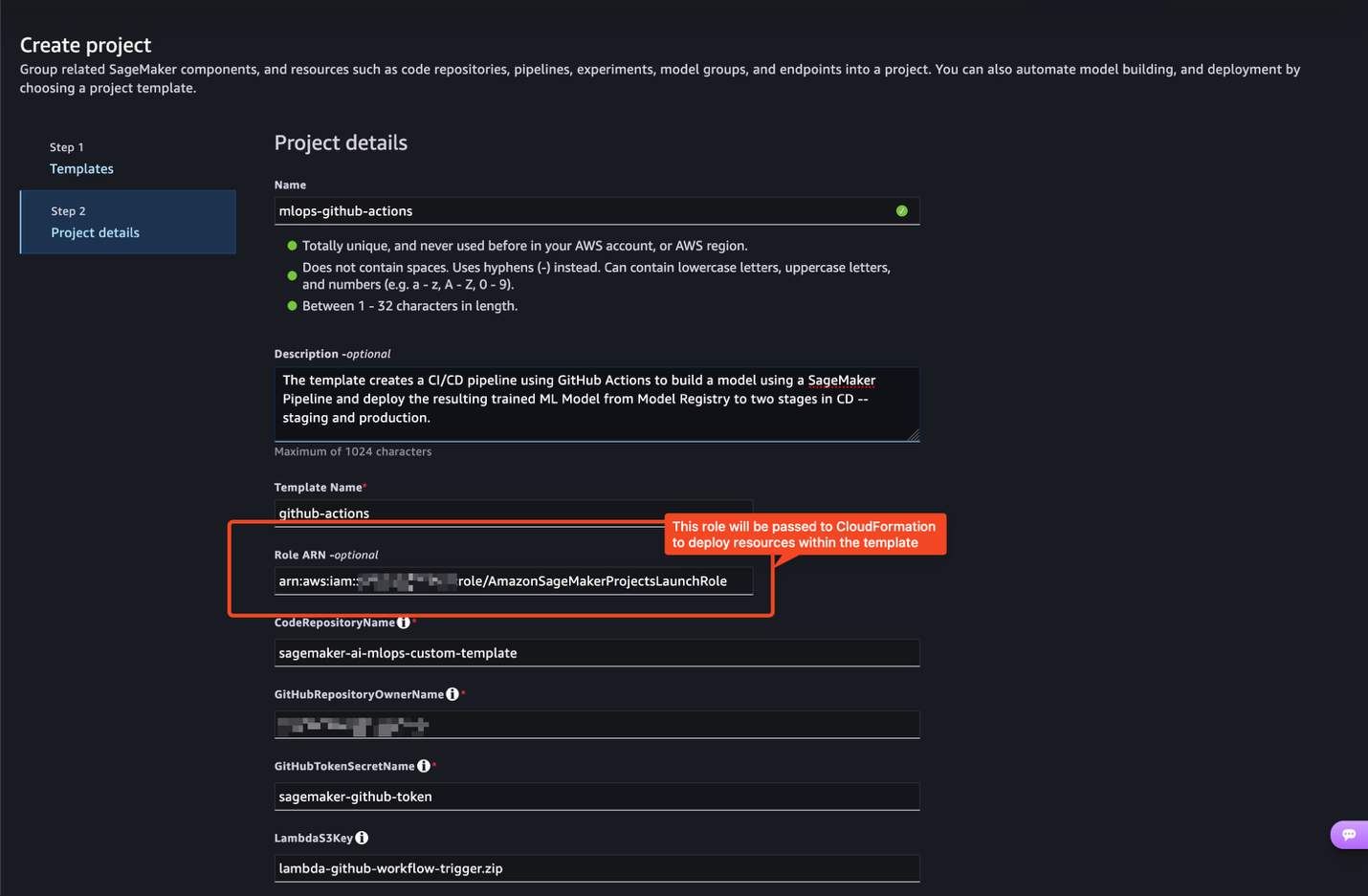

To launch the ModelOps project, you must enter project-specific details, including the Role ARN field. This field should contain the AmazonSageMakerProjectsLaunchRole ARN created during setup, as shown in the following image.

As a security best practice, use the AmazonSageMakerProjectsLaunchRole Amazon Resource Name (ARN), not your SageMaker execution role.

The AmazonSageMakerProjectsLaunchRole is a provisioning role that acts as an intermediary during ModelOps project creation. This role contains all the permissions needed to create your project's infrastructure, including AWS Identity and Access Management (IAM) roles, S3 buckets, AWS CodePipeline, and other AWS resources. By using this dedicated launch role, ML engineers and data scientists can create ModelOps projects without requiring broader permissions in their own accounts. Their personal SageMaker execution role remains limited in scope; they only need permission to assume the launch role itself.

This separation of duties is essential for maintaining security. Without launch roles, every ML practitioner would need extensive IAM permissions to create code pipelines, AWS CodeBuild projects, S3 buckets, and other AWS resources directly. With launch roles, they only need permission to assume a pre-configured role that handles the provisioning on their behalf, keeping their personal permissions minimal and secure.
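Because it is easy to paste an execution role ARN into the Role ARN field by mistake, a team might add a small pre-flight check to its own tooling. The following sketch is a hypothetical helper, not part of SageMaker: it parses an IAM role ARN and confirms that the role name is AmazonSageMakerProjectsLaunchRole. The regular expression covers the common ARN shape only.

```python
import re

LAUNCH_ROLE_NAME = "AmazonSageMakerProjectsLaunchRole"

# arn:<partition>:iam::<12-digit account id>:role/<optional path/><role name>
_ROLE_ARN = re.compile(r"^arn:(aws[a-zA-Z-]*):iam::(\d{12}):role/(.+)$")


def is_launch_role_arn(role_arn):
    """Return True only if the ARN names the dedicated projects launch role."""
    match = _ROLE_ARN.match(role_arn)
    if not match:
        return False
    # Drop any IAM path prefix such as "service-role/" before comparing names.
    role_name = match.group(3).rsplit("/", 1)[-1]
    return role_name == LAUNCH_ROLE_NAME
```

For example, `is_launch_role_arn("arn:aws:iam::111122223333:role/AmazonSageMakerProjectsLaunchRole")` evaluates to `True`, while an execution role ARN or a malformed string evaluates to `False`.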

Enter your desired project configuration details and choose Next. The template then creates two automated ModelOps workflows, one for model building and one for model deployment, that work together to provide CI/CD for your ML models. The complete ModelOps example can be found in the mlops-github-actions repository.

Clean up

After deployment, you will incur costs for the deployed resources. If you don't intend to continue using the setup, delete the ModelOps project resources to avoid unnecessary charges.

To delete the project, open SageMaker Studio, choose More in the navigation pane, and select Projects. Choose the project you want to delete, choose the vertical ellipsis above the upper-right corner of the projects list, and choose Delete. Review the information in the Delete project dialog box and select Yes, delete the project to confirm. After deletion, verify that your project no longer appears in the projects list.

Deleting a project removes and deprovisions the SageMaker AI Project. In addition, you need to manually delete the following components if they are no longer needed: Git repositories, pipelines, model groups, and endpoints.
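The manual cleanup of SageMaker resources can also be scripted. The sketch below is one possible approach, with hypothetical helper names: it builds an ordered list of SageMaker delete operations and runs them against a client such as `boto3.client("sagemaker")`. It defaults to a dry run so that you can review the plan first, and it does not cover Git repositories, which live outside SageMaker.

```python
def build_cleanup_plan(endpoint_names, pipeline_names, model_package_group_names):
    """Return (operation, kwargs) pairs for leftover SageMaker resources."""
    plan = []
    for name in endpoint_names:
        plan.append(("delete_endpoint", {"EndpointName": name}))
    for name in pipeline_names:
        plan.append(("delete_pipeline", {"PipelineName": name}))
    for name in model_package_group_names:
        plan.append(
            ("delete_model_package_group", {"ModelPackageGroupName": name})
        )
    return plan


def run_cleanup(sagemaker_client, plan, dry_run=True):
    """Execute the plan in order; with dry_run=True nothing is deleted."""
    processed = []
    for operation, kwargs in plan:
        if not dry_run:
            getattr(sagemaker_client, operation)(**kwargs)
        processed.append(operation)
    return processed
```

Endpoints come first in the plan because they are the resources that keep accruing charges while they are running.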

Conclusion

Amazon S3-based template provisioning for Amazon SageMaker AI Projects transforms how organizations standardize ML operations. As demonstrated in this post, a single AWS CloudFormation template can provision a complete CI/CD workflow integrating your Git repository (GitHub, Bitbucket, or GitLab), SageMaker Pipelines, and SageMaker Model Registry, providing data science teams with automated workflows while maintaining enterprise governance and security controls. For more information about SageMaker AI Projects and S3-based templates, see ModelOps Automation With SageMaker Projects.

By using S3-based templates in SageMaker AI Projects, administrators can define and govern the ML infrastructure, while ML engineers and data scientists gain access to pre-configured ML environments through self-service provisioning. Explore the GitHub samples repository for popular ModelOps templates and get started today by following the provided instructions. You can also create custom templates tailored to your organization's specific requirements, security policies, and preferred ML frameworks.

About the authors

Christian Kamwangala is an AI/ML and Generative AI Specialist Solutions Architect at AWS, based in Paris, France. He partners with enterprise customers to architect, optimize, and deploy production-grade AI solutions using the comprehensive AWS machine learning stack. Christian specializes in inference optimization techniques that balance performance, cost, and latency requirements for large-scale deployments. In his spare time, Christian enjoys exploring nature and spending time with family and friends.

Sandeep Raveesh is a Generative AI Specialist Solutions Architect at AWS. He works with customers through their AIOps journey across model training, generative AI applications like agents, and scaling generative AI use cases. He also focuses on go-to-market strategies, helping AWS build and align products to solve industry challenges in the generative AI space. You can connect with Sandeep on LinkedIn to learn about generative AI solutions.

Paolo Di Francesco is a Senior Solutions Architect at Amazon Web Services (AWS). He holds a PhD in Telecommunications Engineering and has experience in software engineering. He is passionate about machine learning and currently focuses on using his experience to help customers reach their goals on AWS, in discussions around MLOps. Outside of work, he enjoys playing soccer and reading.
