Serving LLMs in production has become a systems problem rather than a `generate()` loop. For real-world workloads, the choice of inference stack drives tokens per second, tail latency, and ultimately cost per million tokens on a given GPU fleet.

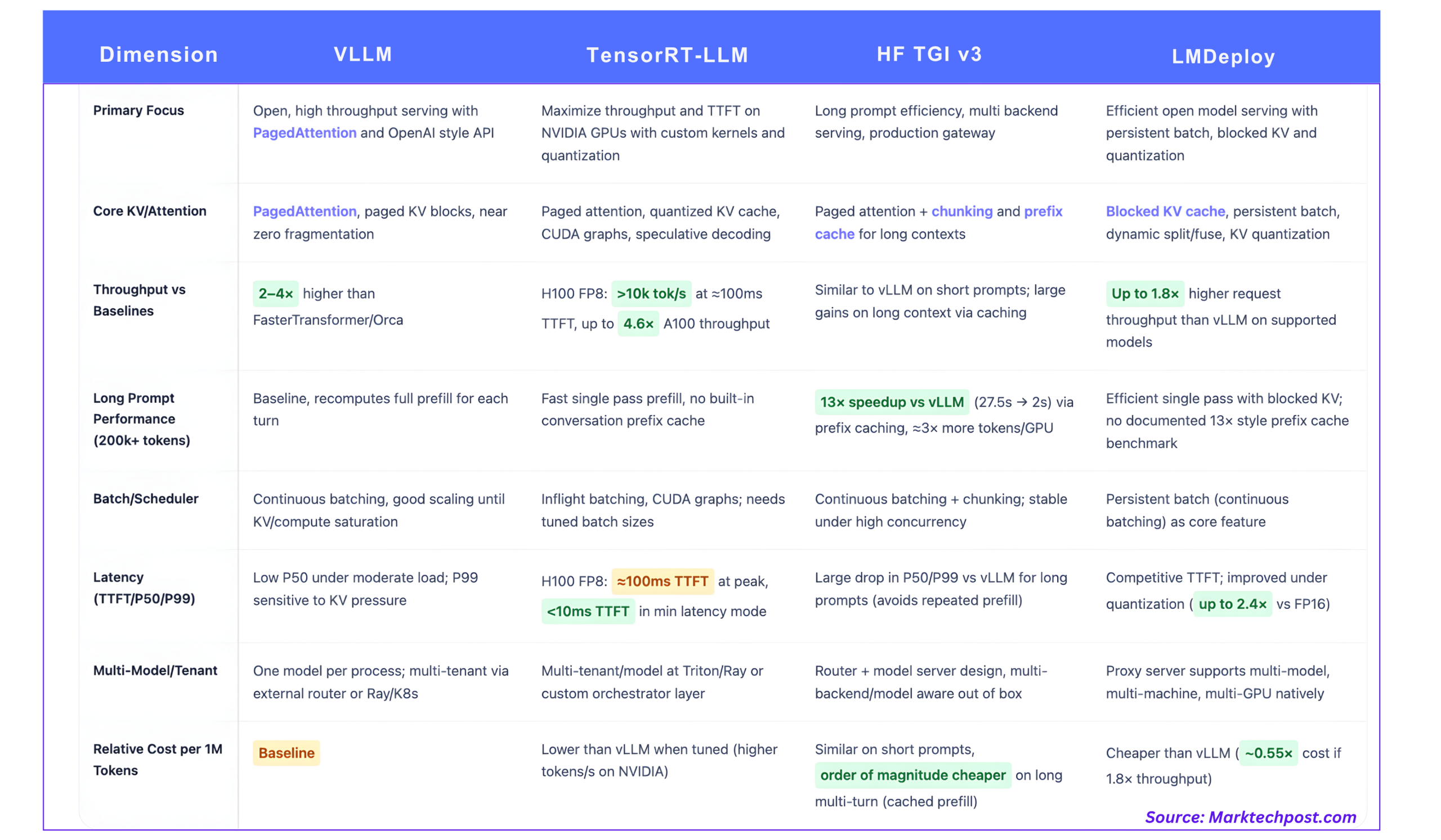

This comparison focuses on four widely used stacks:

- vLLM

- NVIDIA TensorRT-LLM

- Hugging Face Text Generation Inference (TGI v3)

- LMDeploy

1. vLLM, PagedAttention as the open baseline

Core idea

vLLM is built around PagedAttention, an attention implementation that treats the KV cache like paged virtual memory rather than a single contiguous buffer per sequence.

Instead of allocating one large KV region per request, vLLM:

- Splits the KV cache into fixed-size blocks

- Maintains a block table that maps logical tokens to physical blocks

- Shares blocks between sequences with overlapping prefixes

This reduces external fragmentation and lets the scheduler pack more concurrent sequences into the same VRAM.
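The block-table idea can be illustrated with a toy allocator. This is a minimal sketch and not vLLM's implementation: the class name `BlockAllocator`, the block size of 16 tokens, and the choice to key shared blocks by their full token prefix are all assumptions made for illustration.

```python
BLOCK_SIZE = 16  # tokens per KV block (illustrative value, not a vLLM default)


class BlockAllocator:
    """Toy allocator: maps each sequence to physical block ids and shares full prefix blocks."""

    def __init__(self, num_blocks):
        self.num_blocks = num_blocks
        self.free = list(range(num_blocks))  # ids of unused physical blocks
        self.refcount = {}                   # physical block id -> number of sequences using it
        self.prefix_index = {}               # token prefix (tuple) -> physical block id

    def allocate(self, tokens):
        """Return the block table (list of physical block ids) for one sequence."""
        table = []
        for start in range(0, len(tokens), BLOCK_SIZE):
            chunk = tokens[start:start + BLOCK_SIZE]
            key = tuple(tokens[:start + len(chunk)])  # the whole prefix up to this block
            is_full = len(chunk) == BLOCK_SIZE
            if is_full and key in self.prefix_index:
                block = self.prefix_index[key]        # reuse a block another sequence filled
            else:
                block = self.free.pop()               # IndexError here means the cache is full
                if is_full:
                    self.prefix_index[key] = block
            self.refcount[block] = self.refcount.get(block, 0) + 1
            table.append(block)
        return table

    def used_blocks(self):
        return self.num_blocks - len(self.free)


alloc = BlockAllocator(num_blocks=64)
system_prompt = list(range(32))  # two full blocks shared by both requests
table_a = alloc.allocate(system_prompt + [100, 101, 102])
table_b = alloc.allocate(system_prompt + [200, 201])
print(table_a, table_b, alloc.used_blocks())
```

Both sequences point at the same two physical blocks for the shared prompt and only differ in their last, partially filled block, so four blocks are used instead of six. Keying by the full prefix tuple is quadratic in sequence length; a production system would use something cheaper, such as chained block hashes.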

Throughput and latency

vLLM improves throughput by 2–4× at similar latency compared with systems such as FasterTransformer and Orca, with larger gains for longer sequences.

Key properties for operators:

- Continuous batching (also called in-flight batching) merges incoming requests into existing GPU batches instead of waiting for a fixed batch window.

- For typical chat workloads, throughput scales almost linearly with concurrency until KV memory or compute saturates.

- P50 latency stays low at moderate concurrency, but P99 latency can degrade as queues grow or KV memory runs out, especially for prefill-heavy queries.
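To make the difference between a fixed batch window and continuous batching concrete, here is a small simulation. It is a sketch under simplifying assumptions: every decode step costs the same, prefill is ignored, and the function names `static_batching` and `continuous_batching` are invented for this example.

```python
from collections import deque


def static_batching(requests, max_batch):
    """requests: list of (arrival_step, output_tokens). A batch runs until its longest member ends."""
    pending = deque(sorted(requests))
    step = 0
    while pending:
        step = max(step, pending[0][0])  # wait until the next request has arrived
        batch = []
        while pending and pending[0][0] <= step and len(batch) < max_batch:
            batch.append(pending.popleft()[1])
        step += max(batch)               # short sequences idle until the longest one finishes
    return step


def continuous_batching(requests, max_batch):
    """Same input, but finished sequences free their slot after every decode step."""
    pending = deque(sorted(requests))
    running = []                         # remaining output tokens per active sequence
    step = 0
    while pending or running:
        # Admit arrived requests into free slots before each decode step.
        while pending and pending[0][0] <= step and len(running) < max_batch:
            running.append(pending.popleft()[1])
        # One decode step: every running sequence emits one token; finished ones leave.
        running = [r - 1 for r in running if r - 1 > 0]
        step += 1
    return step


workload = [(0, 8), (0, 2), (1, 2), (2, 2)]  # (arrival step, tokens to generate)
print(static_batching(workload, max_batch=2))      # 10
print(continuous_batching(workload, max_batch=2))  # 8
```

In this toy workload the short requests slip into slots freed while the long request is still decoding, so all work finishes in 8 steps instead of 10. Real schedulers also have to account for prefill cost and KV memory, which this sketch leaves out.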

vLLM exposes an OpenAI-compatible HTTP API and integrates well with Ray Serve and other orchestrators, which is why it is widely used as an open baseline.

KV and multitenancy

- PagedAttention gives near-zero KV waste and flexible prefix sharing within and across requests.

- Each vLLM process serves one model. Multi-tenant and multi-model setups are usually built with an external router or API gateway that fans out to multiple vLLM instances.

2. TensorRT-LLM, hardware maximum on NVIDIA GPUs

Core idea

TensorRT-LLM is an inference library optimized for NVIDIA GPUs. It provides custom attention kernels, in-flight batching, a paged KV cache, quantization down to FP4 and INT4, and speculative decoding.

It is tightly coupled to NVIDIA hardware, including the FP8 tensor cores on Hopper and Blackwell.

Measured performance

NVIDIA's published H100 and A100 results are the most concrete public reference.

- On H100 with FP8, TensorRT-LLM reaches more than 10,000 output tokens/s at peak throughput with 64 concurrent requests, at roughly 100 ms time to first token (TTFT).

- H100 with FP8 achieves up to 4.6× higher maximum throughput and 4.4× faster first-token latency than A100 on the same models.

For latency-sensitive modes:

- TensorRT-LLM on H100 can drive TTFT below 10 ms in batch-1 configurations, at the cost of lower overall throughput.

These numbers are specific to the model and input/output shape, but they give a realistic sense of scale.

Prefill and decode

TensorRT-LLM optimizes both phases.

- Prefill benefits from high-throughput FP8 attention kernels and tensor parallelism.

- Decode benefits from CUDA graphs, speculative decoding, quantized weights and KV, and kernel fusion.

The result is very high tokens per second across a wide range of input and output lengths, especially when the engine is tuned for that model and batch profile.

KV and multitenancy

TensorRT-LLM gives:

- Paged KV cache with a configurable layout

- Support for long sequences, KV reuse, and offloading

- In-flight batching and priority-aware scheduling primitives

NVIDIA pairs this with Ray-based or Triton-based orchestration patterns for multi-tenant clusters. Multi-model support is handled at the orchestrator level, not inside a single TensorRT-LLM engine instance.

3. Hugging Face TGI v3, long-prompt specialist and multi-backend gateway

Core idea

Text Generation Inference (TGI) is a Rust- and Python-based serving stack that provides:

- HTTP and gRPC APIs

- Continuous batching scheduler

- Observability and autoscaling hooks

- Pluggable backends, including vLLM-style engines, TensorRT-LLM, and other runtimes

Version 3 focuses on long-prompt processing through chunking and prefix caching.

Long-prompt benchmark vs. vLLM

The TGI v3 documentation gives a clear benchmark:

- For long prompts of more than 200,000 tokens, a conversation reply that takes 27.5 s in vLLM can be served in about 2 s in TGI v3.

- This is reported as a 13× speedup on that workload.

- TGI v3 can process about 3× more tokens in the same GPU memory by reducing its memory footprint and exploiting chunking and caching.

The mechanism is as follows.

- TGI keeps the original conversation context in a prefix cache, so subsequent turns only pay for the incremental tokens.

- Cache lookup overhead is on the order of microseconds, which is negligible compared with prefill compute.

This is a targeted optimization for workloads where prompts are very long and reused across turns, such as RAG pipelines and analytic summarization.
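The effect of a prefix cache on prefill work can be sketched in a few lines. This is an illustration only and does not reflect TGI's internal data structures: the `PrefixCache` class, the block size of 16, and the use of chained SHA-256 hashes per block are assumptions chosen to keep the example short.

```python
import hashlib

BLOCK = 16  # tokens per cached block (illustrative value)


def block_hashes(tokens):
    """Chained hashes over full blocks: each hash identifies the whole prefix up to that block."""
    hashes = []
    h = hashlib.sha256()
    full_len = len(tokens) - len(tokens) % BLOCK
    for i in range(0, full_len, BLOCK):
        h.update(repr(tokens[i:i + BLOCK]).encode())
        hashes.append(h.hexdigest())
    return hashes


class PrefixCache:
    """Toy cache: remembers which prefix blocks already have KV state."""

    def __init__(self):
        self._seen = set()

    def prefill_cost(self, tokens):
        """Return how many tokens still need prefill, then record this sequence's blocks."""
        hashes = block_hashes(tokens)
        hit_blocks = 0
        for bh in hashes:
            if bh not in self._seen:
                break
            hit_blocks += 1
        self._seen.update(hashes)
        return len(tokens) - hit_blocks * BLOCK


cache = PrefixCache()
context = list(range(1024))                        # long shared document context
turn_1 = context + list(range(5000, 5008))         # context plus an 8-token question
turn_2 = turn_1 + list(range(6000, 6008))          # follow-up appends 8 more tokens
print(cache.prefill_cost(turn_1))  # 1032: nothing cached yet
print(cache.prefill_cost(turn_2))  # 16: only the tokens after the last cached full block
```

The second turn pays for 16 tokens instead of 1,040 because the lookup is a handful of set membership checks, which matches the intuition that lookup cost is tiny next to prefill compute.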

Architecture and latency behavior

Key components:

- Chunking: very long prompts are split into manageable segments for KV and scheduling.

- Prefix caching: a data structure that shares long contexts across turns.

- Continuous batching: incoming requests join batches of already running sequences.

- PagedAttention and fused kernels inside the GPU backends.
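Chunking can be pictured as spending a fixed per-step token budget, part on sequences that are already decoding and the rest on a slice of a long prompt. The sketch below assumes that model; `chunk_prompt`, `plan_prefill_steps`, and the budget numbers are hypothetical and are not taken from TGI.

```python
def chunk_prompt(token_ids, chunk_size):
    """Yield consecutive slices of at most chunk_size tokens."""
    for start in range(0, len(token_ids), chunk_size):
        yield token_ids[start:start + chunk_size]


def plan_prefill_steps(prompt_tokens, token_budget, running_decodes):
    """Return the prefill chunk size used in each step.

    Each step reserves one token per running decode sequence and spends the
    remainder of the budget on the long prompt.
    """
    prefill_per_step = token_budget - running_decodes
    if prefill_per_step <= 0:
        raise ValueError("token budget is fully consumed by decode sequences")
    return [len(chunk) for chunk in chunk_prompt(prompt_tokens, prefill_per_step)]


long_prompt = list(range(10_000))
print(plan_prefill_steps(long_prompt, token_budget=2048, running_decodes=48))
# [2000, 2000, 2000, 2000, 2000]
```

Splitting the prompt this way keeps the 48 running sequences producing tokens during every step, instead of stalling them behind a single 10,000-token prefill.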

For short chat-style workloads, throughput and latency are in the same range as vLLM. For long, cacheable contexts, both P50 and P99 latency improve substantially because the engine avoids repeating the prefill.

Multi-backend and multi-model

TGI is designed as a router plus model server architecture. It can:

- Route requests across many models and replicas

- Target different backends, for example TensorRT-LLM on H100 alongside CPUs or smaller GPUs for low-priority traffic

This makes it suitable as a central serving tier in multi-tenant environments.

4. LMDeploy, TurboMind with blocked KV and aggressive quantization

Core idea

LMDeploy is a toolkit for compressing and serving LLMs from the InternLM ecosystem, built around the TurboMind engine. It focuses on:

- High-throughput request serving

- Blocked KV cache

- Persistent batching (continuous batching)

- Quantization of weights and KV cache

Relative throughput vs. vLLM

The project states:

- “LMDeploy delivers up to 1.8x higher request throughput than vLLM”, with support from persistent batching, blocked KV cache, dynamic split and fuse, tensor parallelism, and optimized CUDA kernels.

KV, quantization, latency

LMDeploy includes:

- Blocked KV cache, similar to paged KV, which helps pack many sequences into VRAM

- Support for KV cache quantization, typically int8 or int4, to cut KV memory and bandwidth

- Weight-only quantization paths such as 4-bit AWQ

- A benchmarking harness that reports token throughput, request throughput, and first-token latency

This makes LMDeploy attractive when you want to run larger open models such as InternLM or Qwen on mid-range GPUs with aggressive compression while still maintaining good tokens/s.
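A quick back-of-the-envelope calculation shows why KV quantization matters for capacity. The model shape below (32 layers, 8 KV heads, head dimension 128) and the 8 GiB KV budget are assumed values for illustration, and the estimate ignores the small extra storage that quantization scales and zero points need.

```python
def kv_bytes_per_token(layers, kv_heads, head_dim, bits):
    """Bytes of KV cache per token: keys and values for every layer and KV head."""
    return 2 * layers * kv_heads * head_dim * bits // 8


def tokens_that_fit(budget_bytes, layers, kv_heads, head_dim, bits):
    """How many tokens of KV cache fit into a given memory budget."""
    return budget_bytes // kv_bytes_per_token(layers, kv_heads, head_dim, bits)


# Hypothetical model shape and KV memory budget, chosen only for illustration.
shape = dict(layers=32, kv_heads=8, head_dim=128)
budget = 8 * 1024**3  # 8 GiB reserved for the KV cache

for name, bits in [("fp16", 16), ("int8", 8), ("int4", 4)]:
    per_token = kv_bytes_per_token(bits=bits, **shape)
    capacity = tokens_that_fit(budget, bits=bits, **shape)
    print(f"{name}: {per_token // 1024} KiB/token, {capacity:,} tokens")
```

Under these assumptions, moving from fp16 to int8 doubles the number of cached tokens (65,536 to 131,072) and int4 doubles it again, which translates directly into more concurrent sequences or longer contexts on the same GPU.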

Multi-model deployment

LMDeploy provides a proxy server that can handle:

- Multi-model deployments

- Multi-machine, multi-GPU setups

- Routing logic that selects a model based on request metadata

Architecturally, this puts it closer to TGI than to a single engine.
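Routing on request metadata can be as simple as picking a replica pool by model name. The following is a generic sketch and not LMDeploy's or TGI's proxy code: the `Router` and `Request` classes, the round-robin policy, and the replica URLs are all hypothetical.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Request:
    model: str              # model name taken from the request metadata
    prompt: str


class Router:
    """Toy router: round-robin over the replicas that serve the requested model."""

    def __init__(self, backends):
        # backends maps a model name to a list of replica URLs (hypothetical addresses).
        self._replicas = {model: cycle(urls) for model, urls in backends.items()}

    def route(self, request):
        if request.model not in self._replicas:
            raise KeyError(f"no backend serves model {request.model!r}")
        return next(self._replicas[request.model])


router = Router({
    "internlm": ["http://gpu-a:8000", "http://gpu-b:8000"],
    "qwen": ["http://gpu-c:8000"],
})
print(router.route(Request(model="internlm", prompt="hi")))  # http://gpu-a:8000
print(router.route(Request(model="internlm", prompt="hi")))  # http://gpu-b:8000
print(router.route(Request(model="qwen", prompt="hi")))      # http://gpu-c:8000
```

A real proxy would also track replica health and load, but the core decision of mapping request metadata to a pool of engine instances has this shape.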

When to use what?

- If you want maximum throughput and very low TTFT on NVIDIA GPUs

  - TensorRT-LLM is the primary choice.

  - It uses FP8 and lower precision, custom kernels, and speculative decoding to push tokens per second and keep TTFT below 100 ms at high concurrency and below 10 ms at low concurrency.

- If your traffic is dominated by long prompts with reuse, such as RAG over large contexts

  - TGI v3 is a strong default.

  - Its prefix cache and chunking give up to 3× token capacity and 13× lower latency than vLLM in published long-prompt benchmarks, without extra configuration.

- If you want an open, simple engine with strong baseline performance and an OpenAI-style API

  - vLLM remains the standard baseline.

  - PagedAttention and continuous batching make it 2–4× faster than older stacks at similar latency, and it integrates cleanly with Ray and K8s.

- If you target open models such as InternLM or Qwen and value aggressive quantization with multi-model serving

  - LMDeploy is a good fit.

  - Blocked KV cache, persistent batching, and int8 or int4 KV quantization give up to 1.8× higher request throughput than vLLM on supported models, with a router layer included.

In practice, many teams mix these systems: for example, TensorRT-LLM for high-volume proprietary chat, TGI v3 for long-context analytics, and vLLM or LMDeploy for experimental and open-model workloads. The key is to match throughput, latency tails, and KV behavior to the actual token distribution in your traffic, and to compute cost per million tokens from tokens/s measured on your own hardware.
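The cost calculation itself is simple arithmetic once you have a measured throughput. The sketch below assumes a hypothetical GPU price of $4.00 per hour; the function name, the `utilization` parameter, and the throughput values are placeholders to replace with your own measurements.

```python
def cost_per_million_tokens(tokens_per_second, gpu_hourly_usd, num_gpus=1, utilization=1.0):
    """USD per one million generated tokens for a deployment.

    utilization is the fraction of each hour the GPUs spend serving at the
    measured throughput (1.0 means fully busy).
    """
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return gpu_hourly_usd * num_gpus / tokens_per_hour * 1_000_000


# Hypothetical price and throughput numbers, for illustration only.
print(round(cost_per_million_tokens(10_000, gpu_hourly_usd=4.0), 3))                   # 0.111
print(round(cost_per_million_tokens(2_500, gpu_hourly_usd=4.0), 3))                    # 0.444
print(round(cost_per_million_tokens(10_000, gpu_hourly_usd=4.0, utilization=0.5), 3))  # 0.222
```

Because cost scales inversely with sustained tokens/s, a 4× throughput difference between stacks on the same hardware is a 4× difference in cost per token, and low utilization erodes that advantage just as quickly.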


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.