Meta has released MobileLLM-R1, a family of lightweight edge reasoning models now available on Hugging Face. The release includes models ranging from 140M to 950M parameters, focused on efficient mathematical, coding, and scientific reasoning at sub-billion scale.

Unlike general-purpose chat models, MobileLLM-R1 is designed for edge deployment and aims to deliver state-of-the-art reasoning accuracy while remaining computationally efficient.

What architecture powers MobileLLM-R1?

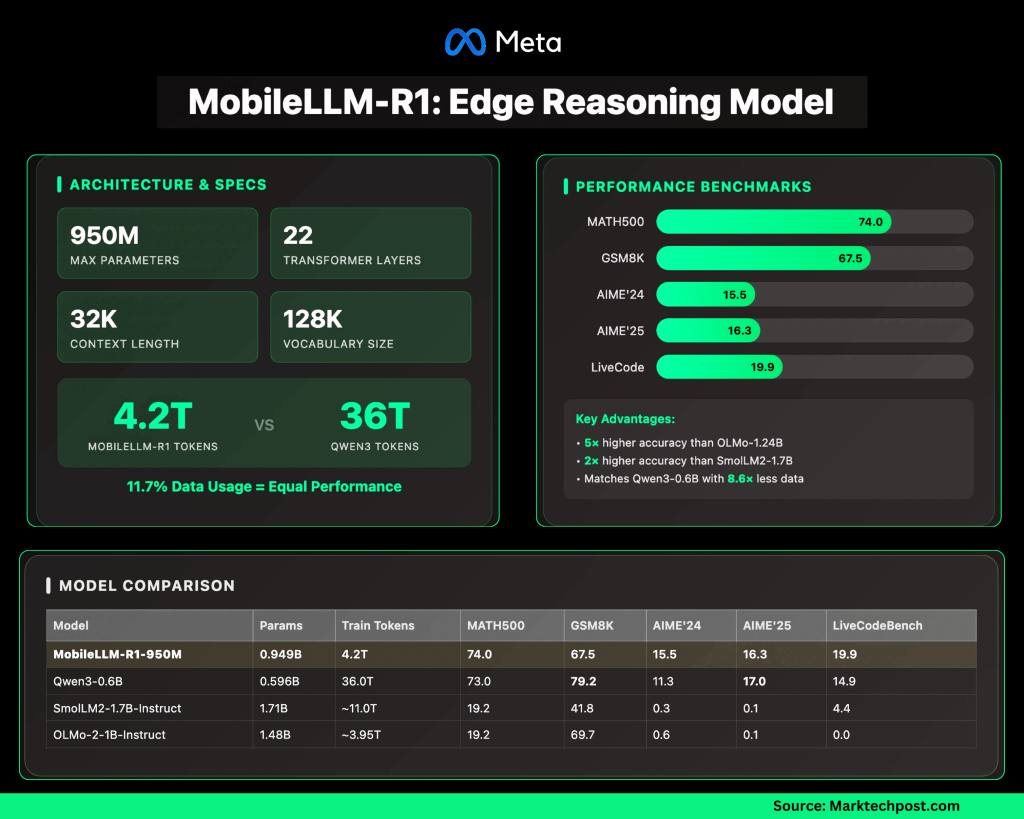

The largest model, MobileLLM-R1-950M, integrates several architectural optimizations:

- 22 Transformer layers with 24 attention heads and 6 grouped KV heads.

- Embedding dimension: 1536; hidden dimension: 6144.

- Grouped-Query Attention (GQA) reduces compute and memory.

- Block-wise weight sharing reduces the parameter count without heavy latency penalties.

- SwiGLU activations improve small-model representations.

- Context length: 4K for the base models, 32K for the post-trained models.

- 128K vocabulary with shared input/output embeddings.

The emphasis is on reducing compute and memory requirements, making the model suitable for deployment on constrained devices.
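To make these savings concrete, the figures listed above can be turned into rough parameter arithmetic. The following is a minimal sketch, not Meta's implementation. It assumes a per-head dimension of 1536 / 24 = 64, bias-free projections, and reads "128K" as 128,000 vocabulary entries (the exact tokenizer size may differ). The function name `attention_params` is illustrative.

```python
# Rough parameter arithmetic for one attention layer and the embedding table,
# using the figures quoted in the article. Assumptions (not from the source):
# head_dim = d_model / n_heads, no bias terms, and "128K" read as 128,000.

D_MODEL = 1536
N_HEADS = 24
N_KV_HEADS = 6
VOCAB = 128_000


def attention_params(d_model: int, n_heads: int, n_kv_heads: int) -> int:
    """Count weights in the Q, K, V, and output projections of one layer."""
    head_dim = d_model // n_heads
    q_proj = d_model * n_heads * head_dim
    kv_proj = 2 * d_model * n_kv_heads * head_dim  # K and V share the same shape
    o_proj = n_heads * head_dim * d_model
    return q_proj + kv_proj + o_proj


gqa = attention_params(D_MODEL, N_HEADS, N_KV_HEADS)  # 6 KV heads (grouped)
mha = attention_params(D_MODEL, N_HEADS, N_HEADS)     # one KV head per query head
embedding = VOCAB * D_MODEL                           # stored once when tied

print(f"attention weights per layer, GQA: {gqa:,}")
print(f"attention weights per layer, MHA: {mha:,}")
print(f"GQA / MHA ratio: {gqa / mha:.3f}")
print(f"embedding table: {embedding:,} weights")
```

Under these assumptions, grouping the KV heads cuts per-layer attention weights to 62.5% of the full multi-head figure, and tying the input and output embeddings avoids storing a second table of roughly 197M weights.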

How efficient is the training?

MobileLLM-R1 is notable for its data efficiency:

- It was trained on ~4.2T tokens in total.

- By comparison, Qwen3's 0.6B model was trained on 36T tokens.

- This means MobileLLM-R1 uses only ≈11.7% of the data to match or surpass Qwen3's accuracy.

- Post-training applies supervised fine-tuning on math, coding, and reasoning datasets.

This efficiency translates directly into lower training costs and resource demands.
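The ≈11.7% figure follows directly from the two token counts quoted above. A quick check, with illustrative constant names:

```python
# Token budgets as quoted in the article (approximate figures).
MOBILELLM_R1_TOKENS = 4.2e12  # ~4.2T
QWEN3_0_6B_TOKENS = 36e12     # 36T

fraction = MOBILELLM_R1_TOKENS / QWEN3_0_6B_TOKENS
reduction = QWEN3_0_6B_TOKENS / MOBILELLM_R1_TOKENS

print(f"share of Qwen3-0.6B's training tokens: {fraction:.1%}")
print(f"token reduction factor: {reduction:.1f}x")
```

Put the other way round, the comparison model saw roughly 8.6 times as many training tokens.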

How does it perform against other open models?

On benchmarks, MobileLLM-R1-950M shows significant gains:

- Math (MATH500 dataset): ~5x higher accuracy than OLMo-1.24B and ~2x higher accuracy than SmolLM2-1.7B.

- Reasoning and coding (GSM8K, AIME, LiveCodeBench): matches or surpasses Qwen3-0.6B, despite using far fewer training tokens.

The model delivers results typically associated with larger architectures while maintaining a smaller footprint.

Where does MobileLLM-R1 fall short?

The model's focus creates limitations:

- Strong in mathematics, code, and structured reasoning.

- Weaker in general conversation, commonsense, and creative tasks compared to larger LLMs.

- Distributed under the FAIR NC (non-commercial) license, which restricts use in production settings.

- Longer contexts (32K) raise KV-cache and memory demands at inference time.
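The memory cost of the longer context can be estimated from the architecture figures given earlier. This is a back-of-the-envelope sketch rather than a measurement. It assumes each of the 22 layers keeps its own key and value cache, a head dimension of 64 (1536 / 24), 16-bit cache values, a batch size of one, and "4K" and "32K" read as 4,096 and 32,768 tokens. The helper `kv_cache_bytes` is hypothetical.

```python
def kv_cache_bytes(
    seq_len: int,
    n_layers: int = 22,       # from the article
    n_kv_heads: int = 6,      # from the article (GQA)
    head_dim: int = 64,       # assumption: 1536 / 24
    bytes_per_value: int = 2, # assumption: fp16/bf16 cache
) -> int:
    """Estimate KV-cache size for one sequence: a K and a V tensor per layer."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


for context in (4096, 32768):
    mib = kv_cache_bytes(context) / 2**20
    print(f"{context:>6} tokens -> {mib:,.0f} MiB")
```

Under these assumptions the cache grows linearly with context, from about 132 MiB at 4K to about 1,056 MiB at 32K per sequence, which is significant on memory-constrained edge devices.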

How does MobileLLM-R1 compare to Qwen3, SmolLM2, and OLMo?

Performance snapshot (post-trained models):

| Model | Parameters | Training tokens

Key observations:

Summary

Meta's MobileLLM-R1 highlights the trend toward smaller, domain-optimized models that deliver competitive reasoning without large training budgets. By achieving roughly 2x to 5x the accuracy of larger open models on math benchmarks while training on a fraction of the data, it suggests that efficiency, not just scale, will define the next phase of LLM deployment, especially for math, coding, and scientific use cases on edge devices.

Asif Razzaq is the CEO of Marktechpost Media Inc. As an entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform known for in-depth coverage of machine learning and deep learning news that is both technically sound and accessible to a wide audience. The platform has over 2 million monthly views.