Introduction

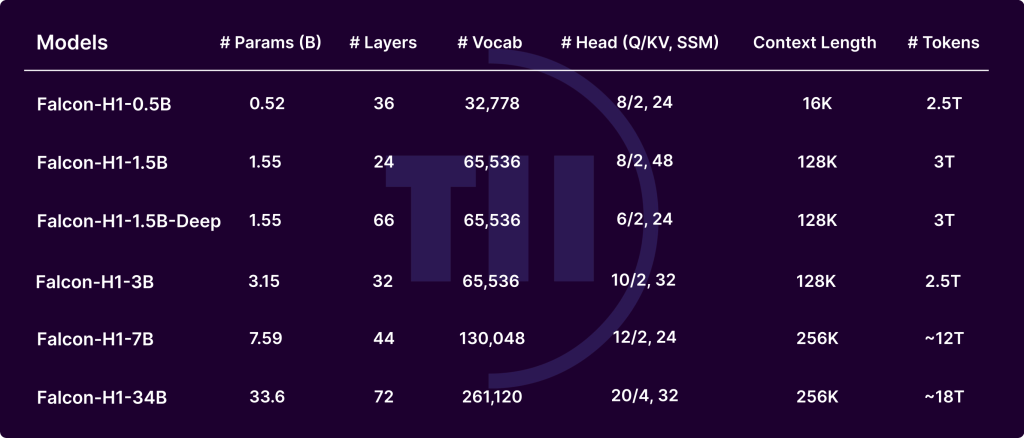

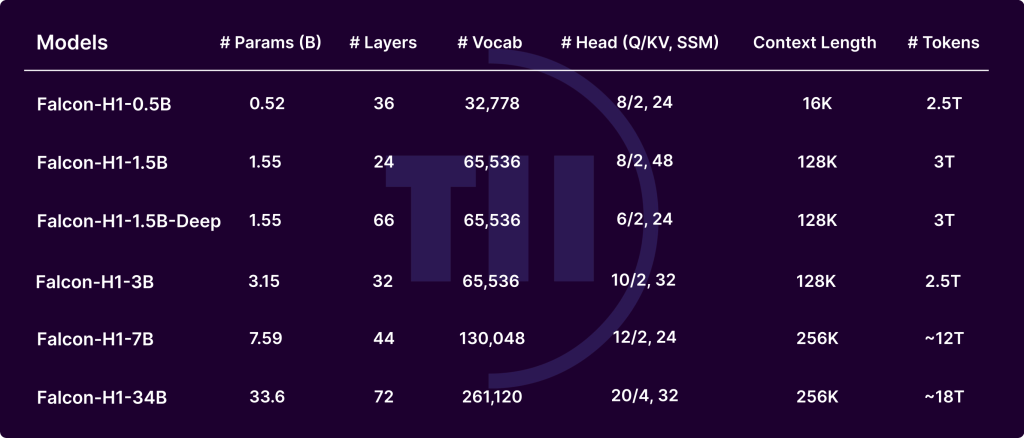

The Falcon-H1 series, developed by the Technology Innovation Institute (TII), marks a major advance in the evolution of large language models (LLMs). By integrating Transformer-based attention with Mamba-based state-space models (SSMs) in a hybrid parallel configuration, Falcon-H1 delivers strong performance, memory efficiency, and scalability. Released in multiple sizes (0.5B to 34B parameters) and versions (base, instruction-tuned, and quantized), the Falcon-H1 models redefine the trade-off between compute budget and output quality, offering better parameter efficiency than many contemporary models such as Qwen2.5-72B and LLaMA3.3-70B.

Key Architectural Innovations

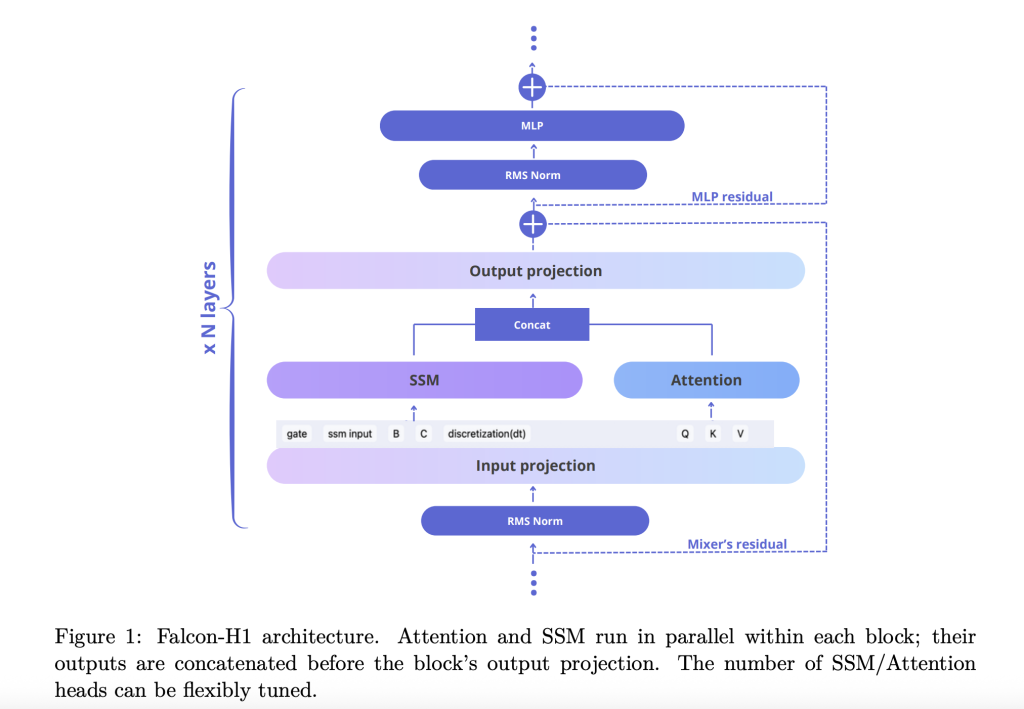

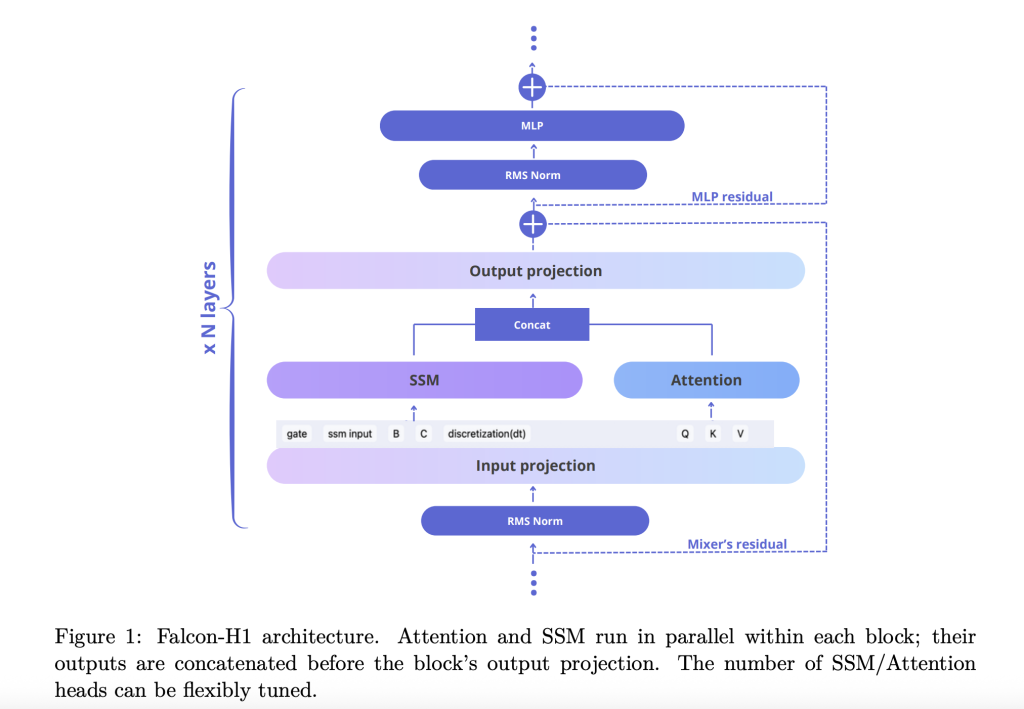

The Falcon-H1 technical report explains how the models adopt a novel parallel hybrid architecture in which the attention and SSM modules operate concurrently and their outputs are concatenated before projection. This design departs from traditional sequential integration and makes it possible to tune the number of attention and SSM channels independently. The default configuration uses a 2:1:5 ratio for SSM, attention, and MLP channels respectively, to optimize both efficiency and learning dynamics.
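
The parallel layout can be illustrated with a small, dependency-free sketch. It is not the Falcon-H1 implementation: `toy_attention` and `toy_ssm` are hypothetical stand-ins (a causal average and a simple linear recurrence), and `split_channels`, `parallel_mixer`, and the projection matrix are names assumed here for illustration. The only points it tries to show are that both branches read the same input, that their outputs are concatenated before a single projection, and how a 2:1:5 ratio divides a channel budget.

```python
from typing import Callable, List, Tuple

Vector = List[float]
Sequence = List[Vector]


def split_channels(total: int, ratio: Tuple[int, int, int] = (2, 1, 5)) -> Tuple[int, ...]:
    """Split a channel budget across (SSM, attention, MLP) by the given ratio."""
    unit = total // sum(ratio)
    return tuple(unit * r for r in ratio)


def toy_attention(x: Sequence) -> Sequence:
    """Stand-in for attention: causal uniform average over positions 0..t."""
    out = []
    for t in range(len(x)):
        window = x[: t + 1]
        out.append([sum(col) / len(window) for col in zip(*window)])
    return out


def toy_ssm(x: Sequence, decay: float = 0.5) -> Sequence:
    """Stand-in for an SSM: linear recurrence h_t = decay * h_{t-1} + x_t."""
    state = [0.0] * len(x[0])
    out = []
    for step in x:
        state = [decay * h + v for h, v in zip(state, step)]
        out.append(list(state))
    return out


def parallel_mixer(x: Sequence,
                   attn: Callable[[Sequence], Sequence],
                   ssm: Callable[[Sequence], Sequence],
                   proj: List[Vector]) -> Sequence:
    """Run both branches on the same input, concatenate, then project."""
    a, s = attn(x), ssm(x)
    mixed = [ai + si for ai, si in zip(a, s)]  # per-position concatenation
    return [[sum(w * v for w, v in zip(row, m)) for row in proj] for m in mixed]


x = [[1.0, 0.0], [0.0, 1.0]]
proj = [[1, 0, 1, 0], [0, 1, 0, 1]]  # hypothetical projection: adds the two branches
print(split_channels(1024))          # (256, 128, 640)
print(parallel_mixer(x, toy_attention, toy_ssm, proj))  # [[2.0, 0.0], [1.0, 1.5]]
```

Because the branches are independent until the concatenation, the width of each branch can be changed without touching the other, which is the flexibility the parallel design is credited with above.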

To further refine the models, Falcon-H1 explores:

- Channel allocation: Ablations show that increasing the number of attention channels worsens performance, whereas balancing SSM and MLP channels yields robust gains.

- Block configuration: The SA_M configuration (semi-parallel, with attention and SSM run together, followed by the MLP) performs best in terms of training loss and computational efficiency.

- RoPE base frequency: An unusually high base frequency for rotary positional embeddings (RoPE) proved optimal, improving generalization during long-context training.

- Depth-width trade-off: Experiments show that deeper models outperform wider ones under a fixed parameter budget. Falcon-H1-1.5B-Deep (66 layers) outperforms many 3B and 7B models.
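
To make the RoPE point concrete, the sketch below uses the standard RoPE frequency schedule, theta_i = base^(-2i/d), and reports the wavelength of the slowest-rotating dimension pair for a few base values. The head dimension of 128 and the specific bases are assumptions chosen for illustration; they are not taken from the text above.

```python
import math
from typing import List


def rope_inverse_frequencies(head_dim: int, base: float) -> List[float]:
    """Standard RoPE schedule: theta_i = base ** (-2i / head_dim)."""
    return [base ** (-2.0 * i / head_dim) for i in range(head_dim // 2)]


def longest_wavelength(head_dim: int, base: float) -> float:
    """Wavelength, in tokens, of the slowest-rotating dimension pair."""
    slowest = rope_inverse_frequencies(head_dim, base)[-1]
    return 2.0 * math.pi / slowest


# Illustrative bases only; a larger base stretches the slow dimensions.
for base in (1e4, 1e6, 1e11):
    wavelength = longest_wavelength(128, base)
    print(f"base={base:.0e}  longest wavelength ~ {wavelength:.3e} tokens")
```

A larger base lengthens the slowest wavelengths, so positions far apart still receive distinct rotations, which is one common intuition for why a high base helps at long context lengths.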

Tokenizer Strategy

Falcon-H1 uses a customized byte-pair encoding (BPE) tokenizer suite with vocabulary sizes ranging from 32K to 261K. Key design choices include:

- Digit and punctuation splitting: Empirically improves performance in code and multilingual settings.

- LaTeX token injection: Improves model accuracy on math benchmarks.

- Multilingual support: Covers 18 languages and scales to over 100, using optimized fertility and bytes-per-token metrics.
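
The splitting rule and the two metrics named above can be sketched as follows. This is an assumed pre-tokenization step, not the Falcon-H1 tokenizer: the regular expression, `pre_tokenize`, `fertility`, and `bytes_per_token` are hypothetical, and the metrics are computed on pre-token pieces rather than on real BPE tokens.

```python
import re
from typing import List

# One digit at a time, runs of letters, or one punctuation mark at a time.
_PIECE = re.compile(r"\d|[^\W\d_]+|[^\w\s]|_")


def pre_tokenize(text: str) -> List[str]:
    """Split text so that digits and punctuation never merge with neighbours."""
    return _PIECE.findall(text)


def fertility(pieces: List[str], text: str) -> float:
    """Average number of pieces per whitespace-separated word (lower is better)."""
    return len(pieces) / len(text.split())


def bytes_per_token(pieces: List[str], text: str) -> float:
    """Average UTF-8 bytes covered by each piece (higher is better)."""
    return len(text.encode("utf-8")) / len(pieces)


text = "x = 1234;"
pieces = pre_tokenize(text)
print(pieces)  # ['x', '=', '1', '2', '3', '4', ';']
print(fertility(pieces, text), bytes_per_token(pieces, text))
```

Splitting every digit gives numbers a uniform representation at the cost of more pieces per word, which is the kind of trade-off that fertility and bytes-per-token measurements make visible.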

Pretraining Corpus and Data Strategy

Falcon-H1 models are trained on up to 18T tokens from a carefully curated 20T-token corpus, comprising:

- High-quality web data (filtered FineWeb)

- Multilingual datasets: Common Crawl, Wikipedia, arXiv, OpenSubtitles, and curated resources for 17 languages

- Code corpus: 67 languages, processed via MinHash deduplication, CodeBERT quality filters, and PII scrubbing

- Math datasets: MATH, GSM8K, and in-house LaTeX-enhanced crawls

- Synthetic data: Rewritten from raw corpora using a variety of LLMs, plus textbook-style QA from 30K Wikipedia-based topics

- Long-context sequences: Enhanced via fill-in-the-middle, reordering, and synthetic reasoning tasks up to 256K tokens
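
MinHash deduplication, mentioned for the code corpus, can be sketched with the standard library alone. The shingle size, the number of hash functions, and all function names below are assumptions for illustration; the actual pipeline's settings are not given in the text.

```python
import hashlib
from typing import List, Set


def shingles(text: str, k: int = 3) -> Set[str]:
    """Set of overlapping k-word shingles from lowercased text."""
    words = text.lower().split()
    return {" ".join(words[i:i + k]) for i in range(max(1, len(words) - k + 1))}


def _hash(seed: int, item: str) -> int:
    """Seeded 64-bit hash; each seed acts as a separate hash function."""
    digest = hashlib.blake2b(item.encode("utf-8"), digest_size=8,
                             salt=seed.to_bytes(8, "little")).digest()
    return int.from_bytes(digest, "little")


def minhash_signature(items: Set[str], num_hashes: int = 64) -> List[int]:
    """Keep the minimum hash value under each of num_hashes hash functions."""
    return [min(_hash(seed, item) for item in items) for seed in range(num_hashes)]


def estimated_jaccard(sig_a: List[int], sig_b: List[int]) -> float:
    """Fraction of matching signature slots approximates Jaccard similarity."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


doc = "the quick brown fox jumps over the lazy dog near the river bank"
near_dup = "the quick brown fox jumps over the lazy dog near the river"
other = "completely unrelated sentence about training hybrid language models efficiently"

sig = minhash_signature(shingles(doc))
print(estimated_jaccard(sig, minhash_signature(shingles(near_dup))))
print(estimated_jaccard(sig, minhash_signature(shingles(other))))
```

In a deduplication pass, documents whose estimated similarity exceeds a chosen threshold would be treated as near-duplicates and all but one dropped; the threshold is a tuning choice not specified here.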

Training Infrastructure and Methodology

Training used a customized maximal update parametrization (µP) to support smooth scaling across model sizes. The models employ advanced parallelism strategies:

- Mixer Parallelism (MP) and Context Parallelism (CP): Improve throughput for long-context processing

- Quantization: Released in bfloat16 and 4-bit variants to facilitate edge deployment
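
A rough back-of-the-envelope calculation shows why 4-bit variants matter for edge deployment. The sketch treats the nominal sizes (0.5B and 34B) as exact parameter counts, which is an assumption, and it counts weight storage only: quantization scales, activations, and attention or SSM state are ignored.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts, assumed exact for illustration.
for name, params in (("0.5B", 0.5e9), ("34B", 34e9)):
    bf16 = weight_memory_gb(params, 16)
    int4 = weight_memory_gb(params, 4)
    print(f"{name}: bfloat16 ~ {bf16:.2f} GB, 4-bit ~ {int4:.2f} GB")
```

Under these assumptions the 4-bit weights take a quarter of the bfloat16 footprint, for example about 17 GB instead of 68 GB at the 34B scale.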

Evaluation and Performance

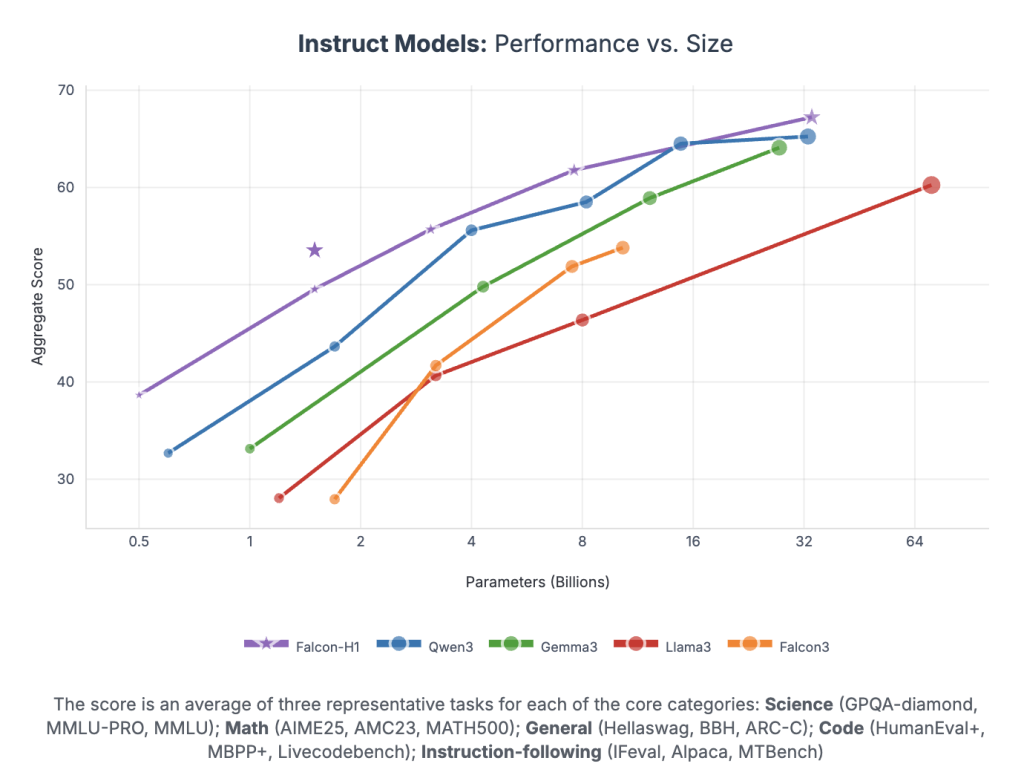

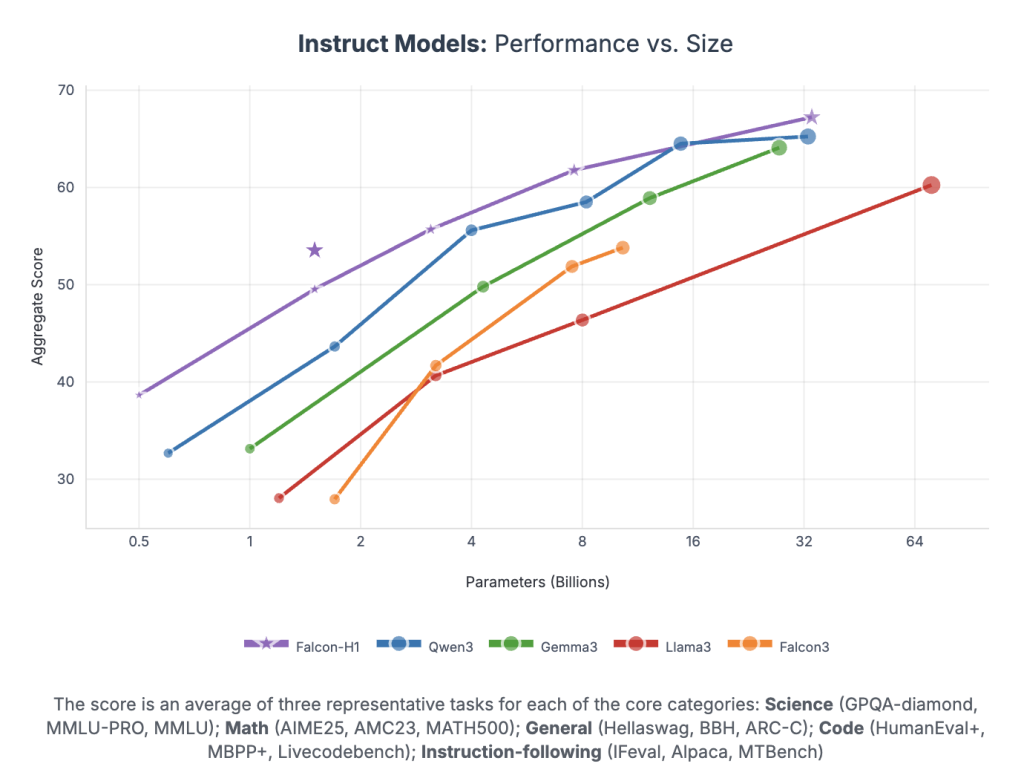

Falcon-H1 achieves strong performance per parameter:

- Falcon-H1-34B-Instruct surpasses 70B-scale models such as Qwen2.5-72B and LLaMA3.3-70B across reasoning, math, instruction-following, and multilingual tasks

- Falcon-H1-1.5B-Deep rivals 7B to 10B models

- Falcon-H1-0.5B delivers performance comparable to 2024-era models

The benchmarks span MMLU, GSM8K, HumanEval, and long-context tasks. The models show strong alignment via supervised fine-tuning (SFT) and Direct Preference Optimization (DPO).

Conclusion

Falcon-H1 sets a new standard for open-weight LLMs by integrating a parallel hybrid architecture, flexible tokenization, efficient training dynamics, and robust multilingual capabilities. Its strategic combination of SSM and attention delivers strong performance within practical compute and memory budgets, making it well suited to both research and deployment across diverse environments.


Michal Sutter is a data science professional with a Master's degree in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.