The multimodal large-scale language mannequin (MLLM) panorama has moved from experimental “wrappers” wherein separate imaginative and prescient or audio encoders are sewn onto a text-based spine to native end-to-end “omnimodal” architectures. Alibaba Qwen staff’s newest launch, Qwen3.5-Omnirepresents an necessary milestone on this evolution. Designed as a direct competitor to flagship fashions reminiscent of Gemini 3.1 Professional, the Qwen3.5-Omni sequence introduces an built-in framework that may course of textual content, photos, audio, and video concurrently inside a single computational pipeline.

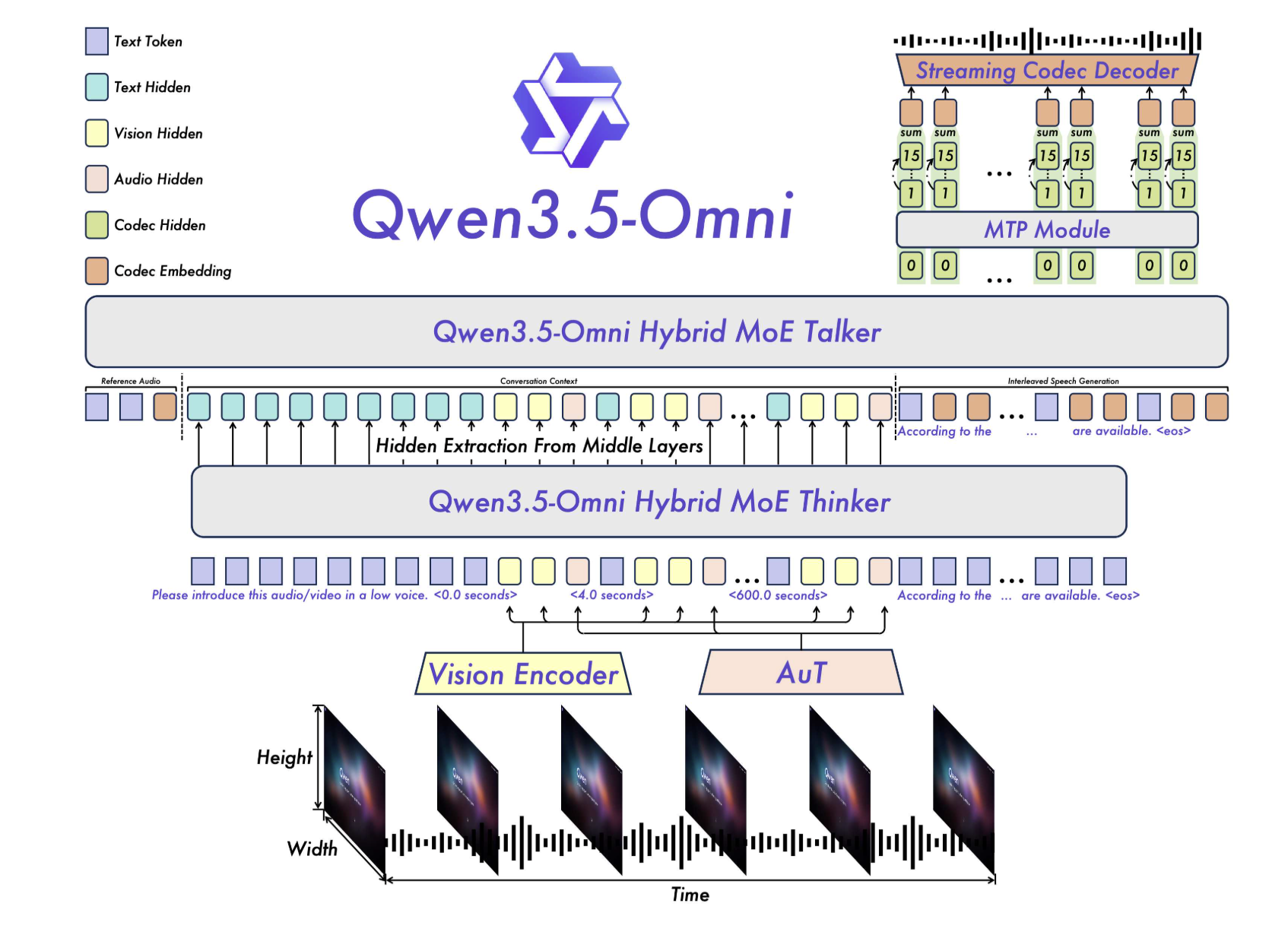

The technical significance of Qwen3.5-Omni is thinker, talker Structure and its use Hybrid Attentional Combination by Specialists (MoE) throughout all modalities. This strategy permits the mannequin to deal with giant context home windows and real-time interactions with out incurring the standard delay penalties related to cascading techniques.

mannequin hierarchy

The sequence is obtainable in three sizes to stability efficiency and price.

- plus: Extremely complicated reasoning and highest accuracy.

- flash: Optimized for high-throughput, low-latency interactions.

- Mild: A smaller model for efficiency-oriented duties.

Thinker and Talker Structure: A Unified MoE Framework

On the core of Qwen3.5-Omni is a branched but tightly built-in structure consisting of two main elements: thinker and talker.

In earlier iterations, multimodal fashions typically relied on pre-trained exterior encoders (reminiscent of Whisper for audio). Qwen3.5-Omni goes past this by leveraging native options. Audio transformer (AuT) encoder. This encoder was pre-trained with: 100 million hours This gives the mannequin with a grounded understanding of temporal and acoustic nuances that conventional text-first fashions lack.

Hybrid Attentional Combination by Specialists (MoE)

Those that suppose and people who converse, Hybrid Consideration MoE. In a normal MoE setup, solely a subset of parameters (the “skilled”) is activated for a given token, permitting the entire variety of parameters to be elevated whereas conserving the energetic computational price low. Making use of this to a hybrid consideration mechanism, Qwen3.5-Omni can successfully weigh the significance of various modalities (e.g., specializing in visible tokens throughout video evaluation duties) whereas sustaining the throughput required for streaming providers.

This structure helps 256k lengthy context inputs, permitting the mannequin to ingest and infer:

- That is all 10 hours of steady audio.

- That is all 400 seconds of 720p audiovisual content material (sampled at 1 FPS).

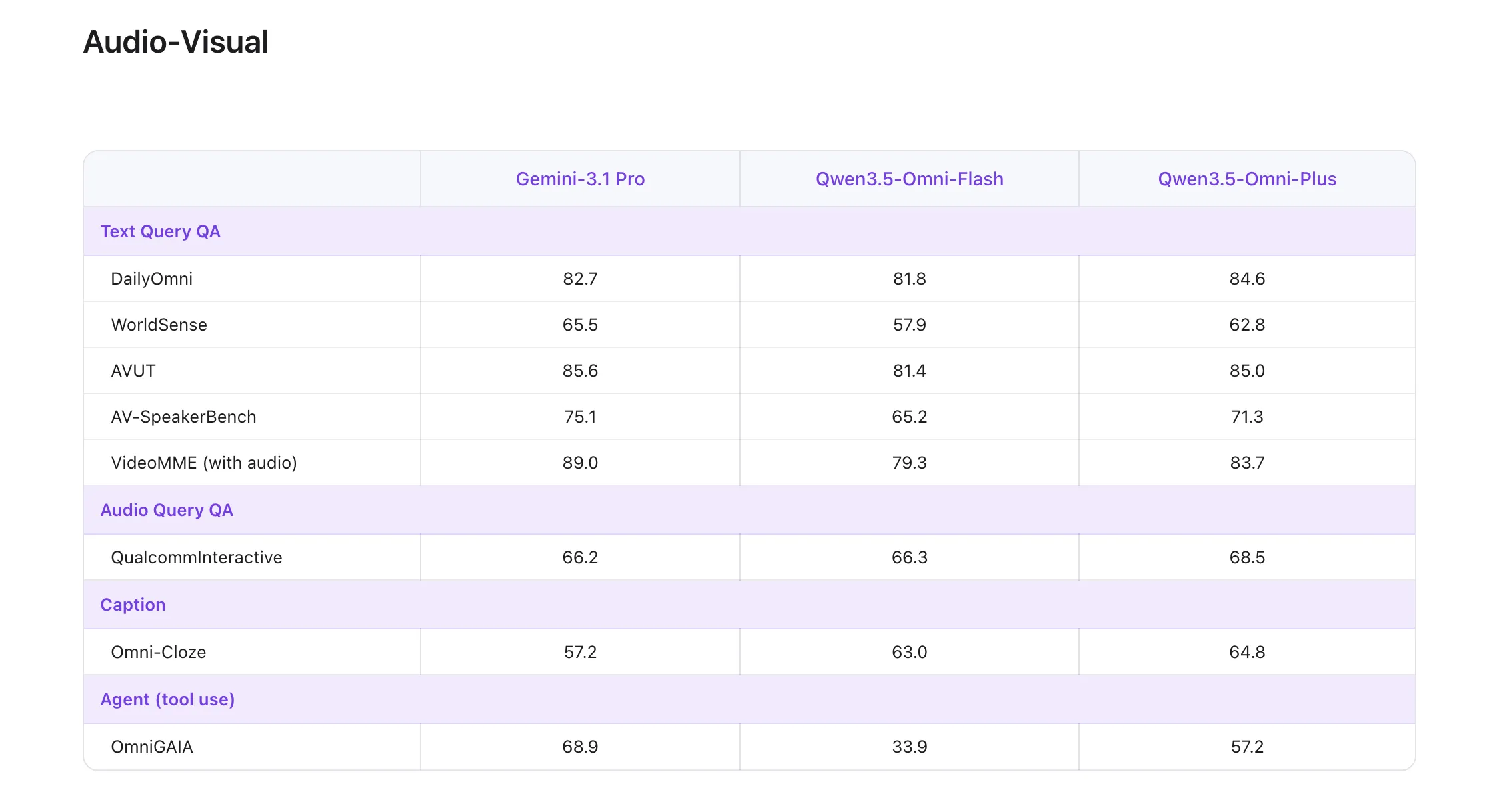

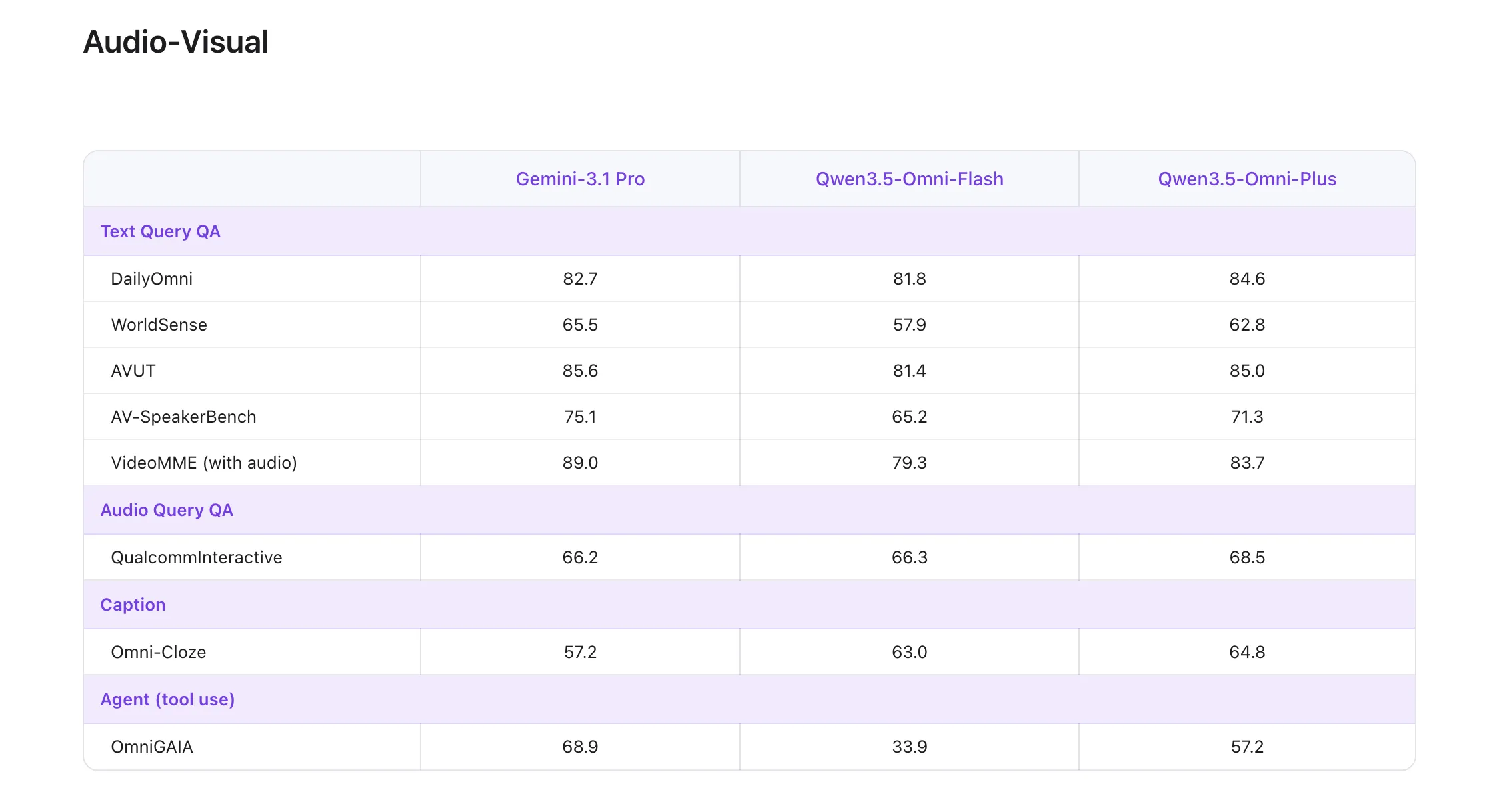

Efficiency Benchmark: “215 SOTA” Milestone

One of many hottest technological claims concerning the flagship Qwen3.5-Omni-Plus The mannequin is efficiency on international leaderboards. achieved mannequin State-of-the-art (SOTA) outcomes on 215 audio and audiovisual understanding, reasoning, and interplay subtasks..

These 215 SOTA wins span particular technical benchmarks, not simply broad metrics, reminiscent of:

- Three audiovisual benchmarks and 5 frequent audio benchmarks.

- 8 ASR (Computerized Speech Recognition) Benchmark.

- 156 language-specific Speech-to-Textual content Translation (S2TT) duties.

- 43 language-specific ASR duties.

In accordance with their officers, technical reporthigher than Qwen3.5-Omni-Plus gemini 3.1 professional Usually, speech understanding, reasoning, recognition, and translation. It maintains the core textual content and visible efficiency of the usual Qwen3.5 sequence, whereas delivering efficiency on par with Google’s flagships in audiovisual understanding.

Technical options for real-time interplay

Constructing a mannequin that may “converse” and “hear” in actual time requires fixing particular engineering challenges associated to streaming stability and dialog circulation.

ARIA: Adaptive Fee Interleaved Alignment

A standard failure mode in streaming voice interactions is “audio instability.” As a result of textual content and audio tokens have totally different encoding efficiencies, the mannequin might misinterpret or minimize off numbers when attempting to synchronize textual content inference with audio output.

To handle this, Alibaba Qwen staff has developed. ARIA (Adaptive Fee Interleave Alignment). This know-how dynamically adjusts textual content and audio models throughout era. ARIA improves the naturalness and robustness of speech synthesis with out growing latency by adjusting the interleaving charge based mostly on the density of data being processed.

Semantic breaks and turn-taking

For AI builders constructing voice assistants, coping with interruptions is notoriously tough. Qwen3.5-Omni introduces native Recognizing the intention of flip taking. This permits the mannequin to tell apart between “backchanneling” (meaningless background noise or listener suggestions reminiscent of “uh uh”) and precise semantic interruptions when the person is about to say one thing. This performance is constructed straight into the mannequin’s API and allows extra human-like full-duplex conversations.

Emergency perform: audio visible vibe coding

Maybe essentially the most distinctive options recognized throughout Qwen3.5-Omni’s native multimodal scaling are: audio visible vibe coding. In contrast to conventional code era that depends on textual content prompts, Qwen3.5-Omni can carry out coding duties based mostly straight on audiovisual directions.

For instance, if a developer information a video of a software program UI and verbally describes a bug whereas pointing to a selected component, the mannequin can straight generate a repair. This emergence means that the mannequin has developed a cross-modal mapping between visible UI hierarchy, linguistic intent, and symbolic code logic.

Vital factors

- Qwen3.5-Omni makes use of native thinker, talker A multimodal structure for built-in textual content, audio, and video processing.

- mannequin helps 256k context, 10+ hours of audioand 400+ seconds of 720p video At 1FPS.

- alibaba report Speech recognition for 113 languages/dialects and Speech era in 36 languages/dialects.

- Key system options embody: Semantic interruption, Recognizing the intention of flip taking, TMRoPEand Aria For real-time interplay.

Please verify technical details, Quen Chat, Online demo on HF and Offline demo on HF. Additionally, be at liberty to comply with us Twitter Do not forget to hitch us 120,000+ ML subreddits and subscribe our newsletter. cling on! Are you on telegram? You can now also participate by telegram.