Deploying machine learning (ML) models into production is often a complex and resource-intensive task, especially for customers without deep ML or DevOps expertise. Amazon SageMaker Canvas simplifies model building by providing a no-code interface, so you can use your existing data sources to create highly accurate ML models without writing a single line of code. But building the model is only half the battle. Efficient and cost-effective deployment is equally important. Amazon SageMaker Serverless Inference is designed for workloads with variable traffic patterns and idle periods. It automatically provisions and scales infrastructure based on demand, reducing the need to manage servers and preconfigure capacity.

This post shows you how to take an ML model built in SageMaker Canvas and deploy it using SageMaker Serverless Inference. This solution helps you go from model creation to production-ready predictions quickly and efficiently without managing infrastructure.

Solution overview

Let’s look at an example workflow that demonstrates creating a serverless endpoint for a model trained in SageMaker Canvas.

- Add the trained model to the Amazon SageMaker Model Registry.

- Create a new SageMaker model with the correct configuration.

- Create a serverless endpoint configuration.

- Deploy a serverless endpoint using the model and endpoint configuration you created.

You can also automate the process, as shown in the following diagram.

This example deploys a pre-trained regression model to a serverless SageMaker endpoint. This way, the model can be used for a variety of workloads that don’t require real-time inference.

Prerequisites

As a prerequisite, you must have access to Amazon Simple Storage Service (Amazon S3) and Amazon SageMaker AI. If your account doesn’t already have a SageMaker AI domain configured, you also need permission to create a SageMaker AI domain.

You also need a trained regression or classification model. You can train your SageMaker Canvas model as usual. This includes creating an Amazon SageMaker Data Wrangler flow, performing any necessary data transformations, and choosing model training settings. If you don’t have a trained model yet, you can follow one of the labs in the Amazon SageMaker Canvas Immersion Day to create one before continuing. This example uses a classification model trained on the canvas-sample-shipping-logs.csv sample dataset.

Save the model to the SageMaker Model Registry

To save your model to the SageMaker Model Registry, follow these steps:

- In the SageMaker AI console, choose Studio to launch Amazon SageMaker Studio.

- In the SageMaker Studio interface, launch SageMaker Canvas, which opens in a new tab.

- Find the model and model version you want to deploy to your serverless endpoint.

- In the options menu (three vertical dots), choose Add to Model Registry.

You can now log out of and exit SageMaker Canvas. To manage costs and prevent additional workspace charges, you can also configure SageMaker Canvas to automatically shut down when idle.

Approve the model for deployment

After adding the model to the Model Registry, complete the following steps:

- In the SageMaker Studio UI, choose Models in the navigation pane.

Models exported from SageMaker Canvas should be added with the deployment status Pending manual approval.

- Select the version of the model you want to deploy and update its status to Approved by choosing the deployment status.

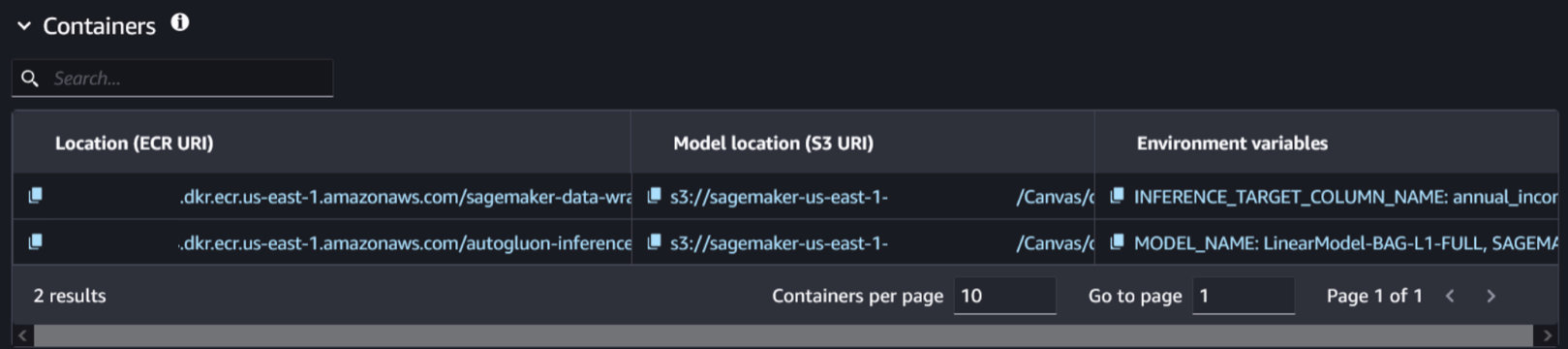

- Select your model version and navigate to the Deploy tab. This displays information related to the model and its associated containers.

- Select the container and model location associated with the trained model. You can identify it by checking for the presence of the environment variable SAGEMAKER_DEFAULT_INVOCATIONS_ACCEPT.
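The approval and container lookup above can also be done programmatically. The following is a minimal sketch, not part of the original walkthrough. It assumes you pass in a boto3 SageMaker client and that the `describe_model_package` response lists containers under `InferenceSpecification.Containers`. The function names are hypothetical helpers introduced for illustration.

```python
from typing import Any, Dict, Optional

# Environment variable that marks the inference container of the trained model.
ACCEPT_ENV_VAR = "SAGEMAKER_DEFAULT_INVOCATIONS_ACCEPT"


def approve_model_version(sm_client: Any, model_package_arn: str) -> None:
    """Set the approval status of a registered model version to Approved."""
    sm_client.update_model_package(
        ModelPackageArn=model_package_arn,
        ModelApprovalStatus="Approved",
    )


def find_inference_container(description: Dict[str, Any]) -> Optional[Dict[str, Any]]:
    """Return the first container whose environment defines ACCEPT_ENV_VAR.

    `description` is assumed to have the shape of a describe_model_package
    response; None is returned when no container matches.
    """
    containers = description.get("InferenceSpecification", {}).get("Containers", [])
    for container in containers:
        if ACCEPT_ENV_VAR in (container.get("Environment") or {}):
            return container
    return None


# Usage sketch (requires AWS credentials; the ARN is a placeholder):
# import boto3
# sm = boto3.client("sagemaker")
# arn = "<your-model-package-arn>"
# approve_model_version(sm, arn)
# container = find_inference_container(sm.describe_model_package(ModelPackageName=arn))
# print(container["Image"], container["ModelDataUrl"], container["Environment"])
```

The matching container carries the image URI, model artifact location, and environment variables needed when creating the new model.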

Create a new model

To create a new model, follow these steps:

- Without closing the SageMaker Studio tab, open a new browser tab and open the SageMaker AI console.

- In the Inference section, choose Models, and then choose Create model.

- Name your model.

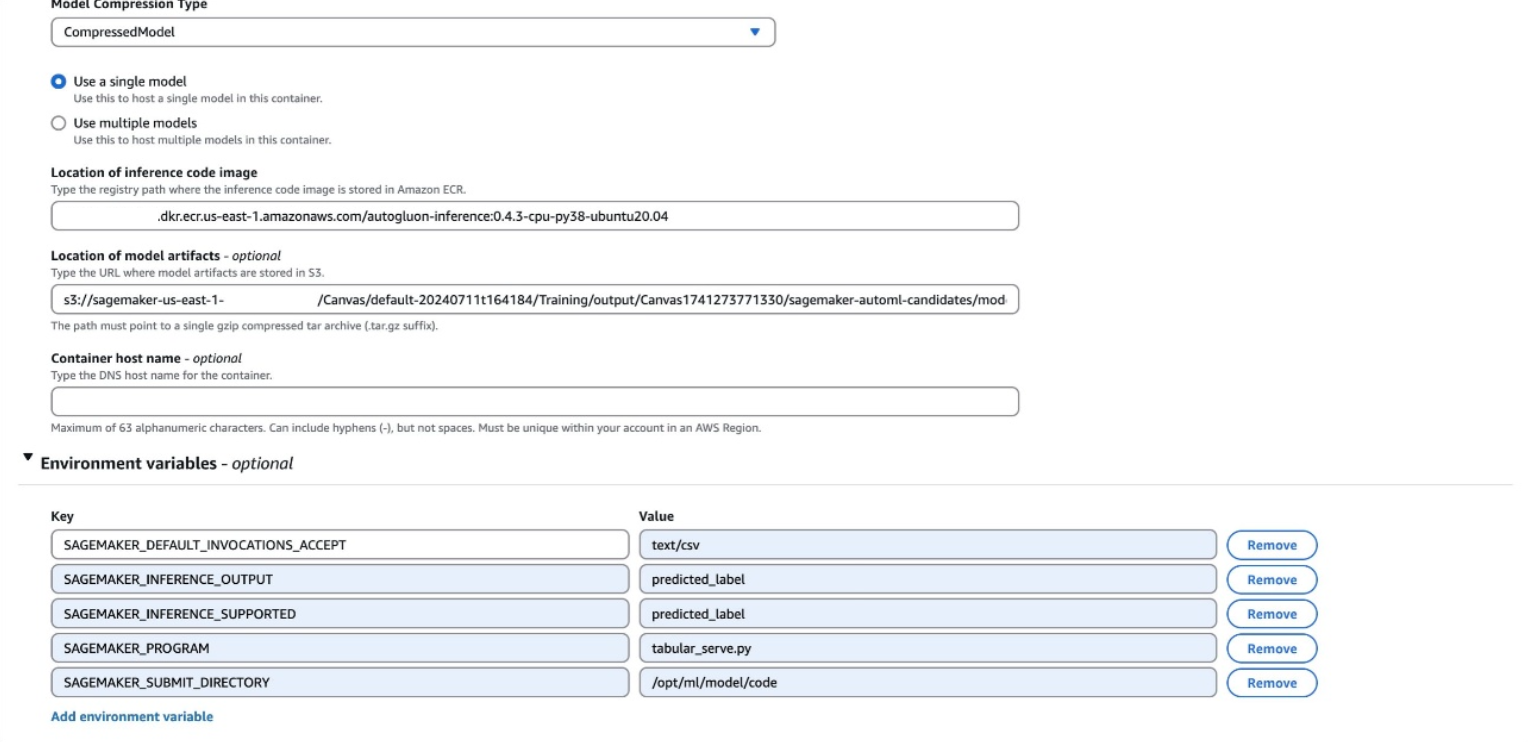

- Leave the container input option as Provide model artifacts and inference image location, and use the CompressedModel type.

- Enter the Amazon Elastic Container Registry (Amazon ECR) URI, Amazon S3 URI, and environment variables that you found in the previous step.

Environment variables are displayed as a single line in SageMaker Studio in the following format:

You might have different variables than in the previous example. All of the environment variables must be added to the model. When creating the new model, make sure that each environment variable is on a separate line.

- Choose Create model.
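The same model can be defined through the API instead of the console. The sketch below is illustrative only. It assumes the single-line environment string uses comma-separated `KEY: value` pairs (an assumption, since the exact format is not reproduced here) and that values contain no commas. The helper names and the example variable `EXAMPLE_VAR` are hypothetical. The request shape follows the boto3 `create_model` call with a `PrimaryContainer`.

```python
from typing import Any, Dict


def parse_env_line(line: str) -> Dict[str, str]:
    """Split 'KEY: value, KEY2: value2' into one entry per variable.

    Assumed input format; values that themselves contain commas are not handled.
    """
    env: Dict[str, str] = {}
    for pair in line.split(","):
        if not pair.strip():
            continue
        # Split on the first colon only, so values may contain colons.
        key, _, value = pair.partition(":")
        env[key.strip()] = value.strip()
    return env


def build_create_model_request(
    model_name: str,
    role_arn: str,
    image_uri: str,
    model_data_url: str,
    env: Dict[str, str],
) -> Dict[str, Any]:
    """Build keyword arguments for the SageMaker create_model API call."""
    return {
        "ModelName": model_name,
        "ExecutionRoleArn": role_arn,
        "PrimaryContainer": {
            "Image": image_uri,               # Amazon ECR URI from the Deploy tab
            "ModelDataUrl": model_data_url,   # Amazon S3 URI of the compressed artifact
            "Environment": env,               # every variable copied from the registry
        },
    }


# Usage sketch (requires AWS credentials; all values are placeholders):
# import boto3
# env = parse_env_line("<single-line environment string from SageMaker Studio>")
# request = build_create_model_request("<model-name>", "<role-arn>", "<ecr-uri>", "<s3-uri>", env)
# boto3.client("sagemaker").create_model(**request)
```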

Create an endpoint configuration

To create an endpoint configuration, follow these steps:

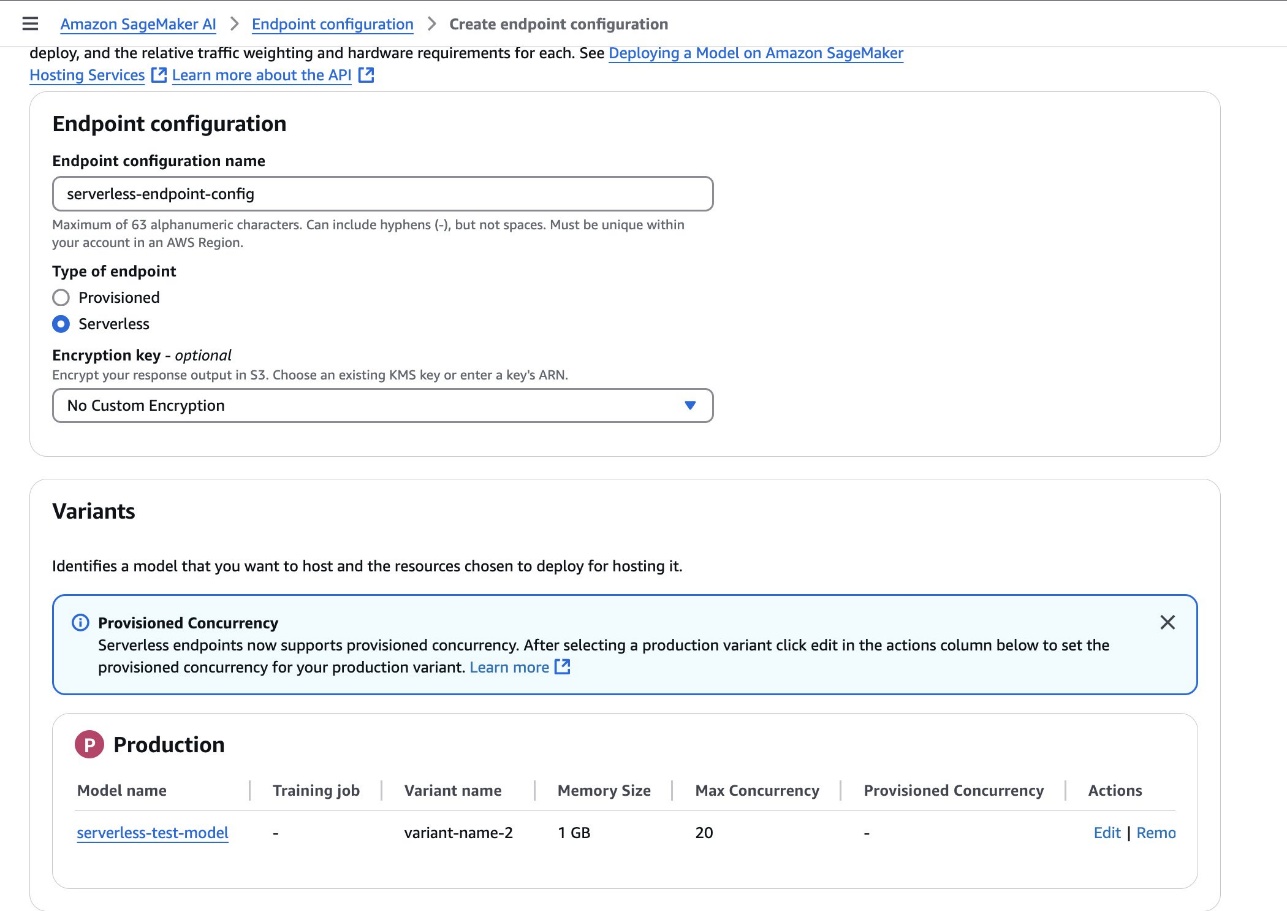

- In the SageMaker AI console, choose Endpoint configurations and create a new endpoint configuration.

- Set the endpoint type to Serverless and set the model variant to the model created in the previous step.

- Choose Create endpoint configuration.
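For reference, a serverless endpoint configuration can also be expressed as a `create_endpoint_config` request. This is a minimal sketch under stated assumptions: the memory size and concurrency defaults are illustrative choices, not values from this post, and the validation ranges reflect the documented Serverless Inference limits as understood at the time of writing, so check the current documentation before relying on them. The function name and the variant name `AllTraffic` are hypothetical.

```python
from typing import Any, Dict

# Memory sizes believed to be accepted by Serverless Inference (1 GB steps).
ALLOWED_MEMORY_MB = (1024, 2048, 3072, 4096, 5120, 6144)


def build_serverless_endpoint_config(
    config_name: str,
    model_name: str,
    memory_mb: int = 2048,       # illustrative default, tune for your model
    max_concurrency: int = 5,    # illustrative default
) -> Dict[str, Any]:
    """Build keyword arguments for create_endpoint_config with a serverless variant."""
    if memory_mb not in ALLOWED_MEMORY_MB:
        raise ValueError(f"memory_mb must be one of {ALLOWED_MEMORY_MB}")
    if not 1 <= max_concurrency <= 200:
        raise ValueError("max_concurrency must be between 1 and 200")
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,
                # The presence of ServerlessConfig is what makes the variant
                # serverless; no instance type or instance count is given.
                "ServerlessConfig": {
                    "MemorySizeInMB": memory_mb,
                    "MaxConcurrency": max_concurrency,
                },
            }
        ],
    }


# Usage sketch (requires AWS credentials; names are placeholders):
# import boto3
# request = build_serverless_endpoint_config("<config-name>", "<model-name>")
# boto3.client("sagemaker").create_endpoint_config(**request)
```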

Create an endpoint

To create an endpoint, follow these steps:

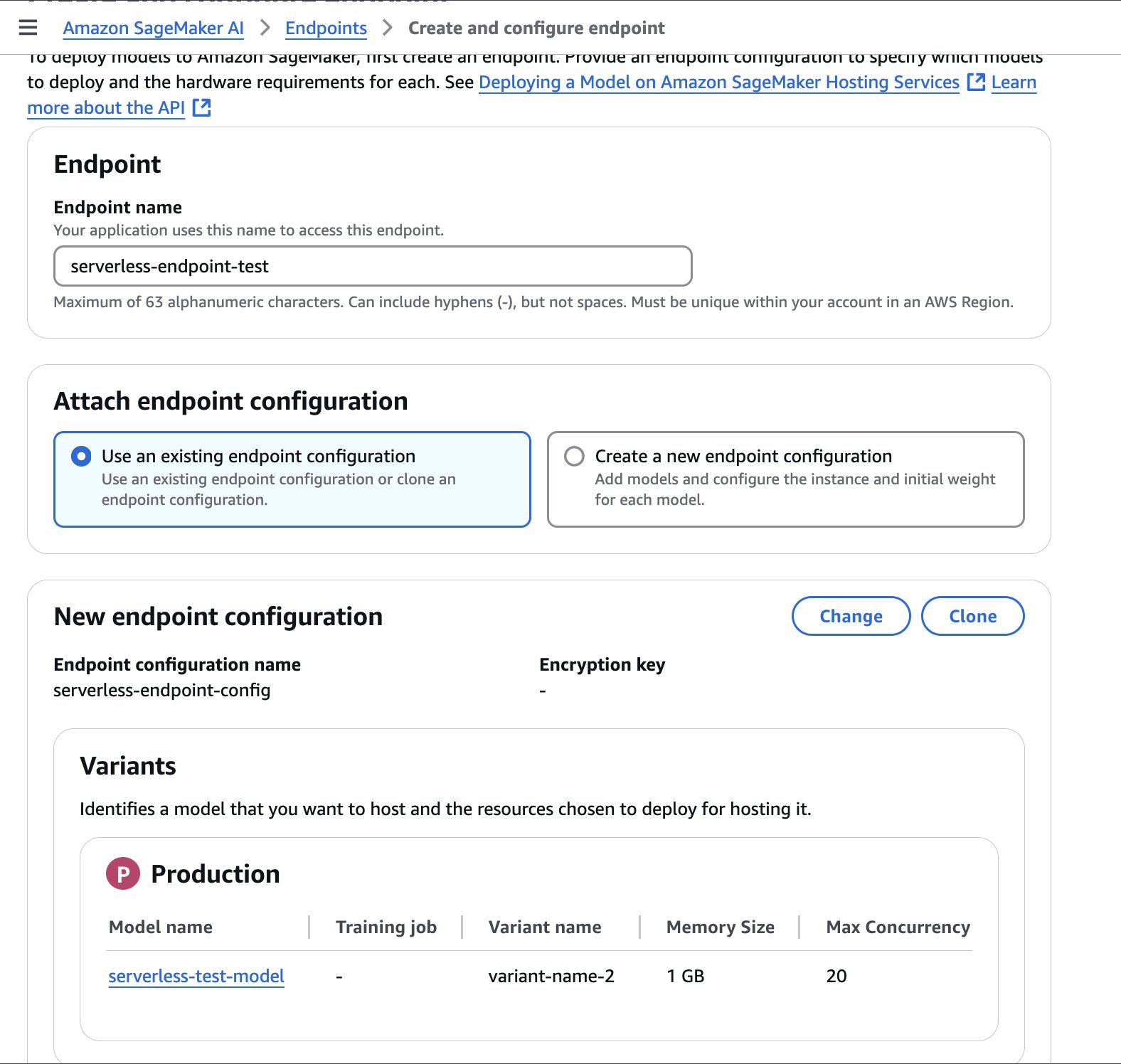

- In the SageMaker AI console, choose Endpoints in the navigation pane and create a new endpoint.

- Name your endpoint.

- Select the endpoint configuration created in the previous step and choose Select endpoint configuration.

- Choose Create endpoint.

Creating the endpoint might take a few minutes. When the status changes to InService, you can begin calling the endpoint.
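If you create the endpoint through the API rather than the console, the wait for InService can be scripted. The following sketch assumes a boto3 SageMaker client is passed in. The helper names, polling interval, and timeout are hypothetical choices for illustration. boto3 also offers a built-in `endpoint_in_service` waiter that could be used instead.

```python
import time
from typing import Any, Callable


def create_endpoint(sm_client: Any, endpoint_name: str, config_name: str) -> None:
    """Start creating an endpoint from an existing endpoint configuration."""
    sm_client.create_endpoint(
        EndpointName=endpoint_name,
        EndpointConfigName=config_name,
    )


def wait_until_in_service(
    sm_client: Any,
    endpoint_name: str,
    poll_seconds: float = 15.0,
    timeout_seconds: float = 900.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll describe_endpoint until the status is InService.

    Raises RuntimeError if creation fails and TimeoutError if the endpoint
    is not ready within timeout_seconds.
    """
    waited = 0.0
    while True:
        status = sm_client.describe_endpoint(EndpointName=endpoint_name)["EndpointStatus"]
        if status == "InService":
            return status
        if status == "Failed":
            raise RuntimeError(f"Endpoint {endpoint_name} failed to deploy")
        if waited >= timeout_seconds:
            raise TimeoutError(f"Endpoint {endpoint_name} still {status} after {waited:.0f}s")
        sleep(poll_seconds)
        waited += poll_seconds


# Usage sketch (requires AWS credentials; names are placeholders):
# import boto3
# sm = boto3.client("sagemaker")
# create_endpoint(sm, "<endpoint-name>", "<config-name>")
# wait_until_in_service(sm, "<endpoint-name>")
```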

The following sample code shows how to call an endpoint from a Jupyter notebook in a SageMaker Studio environment.
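As a minimal sketch of such a call, the snippet below sends CSV rows to the endpoint through the `sagemaker-runtime` client. It assumes the model accepts and returns `text/csv`, which depends on the environment variables of your model. The helper names and the placeholder endpoint name are hypothetical, and the feature values you send must match the columns your model was trained on.

```python
import csv
import io
from typing import Any, Iterable, Sequence


def rows_to_csv(rows: Iterable[Sequence[Any]]) -> str:
    """Serialize feature rows to CSV text without a header line."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


def invoke_csv(runtime_client: Any, endpoint_name: str, rows: Iterable[Sequence[Any]]) -> str:
    """Send CSV rows to a SageMaker endpoint and return the raw response text."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",   # assumed input format
        Accept="text/csv",        # assumed output format
        Body=rows_to_csv(rows),
    )
    # The response body is a stream; read it fully and decode it.
    return response["Body"].read().decode("utf-8")


# Usage sketch (requires AWS credentials; values are placeholders):
# import boto3
# runtime = boto3.client("sagemaker-runtime")
# print(invoke_csv(runtime, "<endpoint-name>", [["<feature-1>", "<feature-2>"]]))
```

Because the endpoint is serverless, the first request after an idle period may take longer while capacity is provisioned.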

Automate the process

To automatically create a serverless endpoint every time a new model is approved, you can use the following YAML file with AWS CloudFormation. This file automates the creation of a SageMaker endpoint with the required configuration.

This sample CloudFormation template is provided for inspiration only and isn’t intended for direct use in a production environment. Developers should thoroughly test this template according to their organization’s security guidelines before deployment.
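To illustrate what "every time a new model is approved" could look like as a trigger, here is a hedged sketch of an Amazon EventBridge event pattern and a matching check. It is an assumption that the automation reacts to the "SageMaker Model Package State Change" event; the template itself is not reproduced here, so treat the pattern and the helper name `is_approval_event` as illustrative rather than as the template's actual contents.

```python
from typing import Any, Dict

# Event pattern (assumed) that matches model versions moving to Approved.
APPROVAL_EVENT_PATTERN: Dict[str, Any] = {
    "source": ["aws.sagemaker"],
    "detail-type": ["SageMaker Model Package State Change"],
    "detail": {"ModelApprovalStatus": ["Approved"]},
}


def is_approval_event(event: Dict[str, Any]) -> bool:
    """Return True when an event describes a model version being approved.

    Mirrors APPROVAL_EVENT_PATTERN so a handler can double-check the event
    before creating any resources.
    """
    return (
        event.get("source") == "aws.sagemaker"
        and event.get("detail-type") == "SageMaker Model Package State Change"
        and event.get("detail", {}).get("ModelApprovalStatus") == "Approved"
    )
```

A handler guarded by this check could then run the create model, create endpoint configuration, and create endpoint steps described earlier.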

This stack limits automated creation of serverless endpoints to a specific AWS Region and domain. You can find your domain ID when accessing SageMaker Studio from the SageMaker AI console or by running the following command: aws sagemaker list-domains --region [your-region]
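The same lookup can be done from Python. This minimal sketch assumes the boto3 `list_domains` response contains a `Domains` list whose entries have `DomainName` and `DomainId` keys. The helper name is hypothetical.

```python
from typing import Any, Dict


def domain_ids_by_name(list_domains_response: Dict[str, Any]) -> Dict[str, str]:
    """Map each SageMaker AI domain name to its domain ID."""
    return {
        domain["DomainName"]: domain["DomainId"]
        for domain in list_domains_response.get("Domains", [])
    }


# Usage sketch (requires AWS credentials; the Region is a placeholder):
# import boto3
# sm = boto3.client("sagemaker", region_name="<your-region>")
# print(domain_ids_by_name(sm.list_domains()))
```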

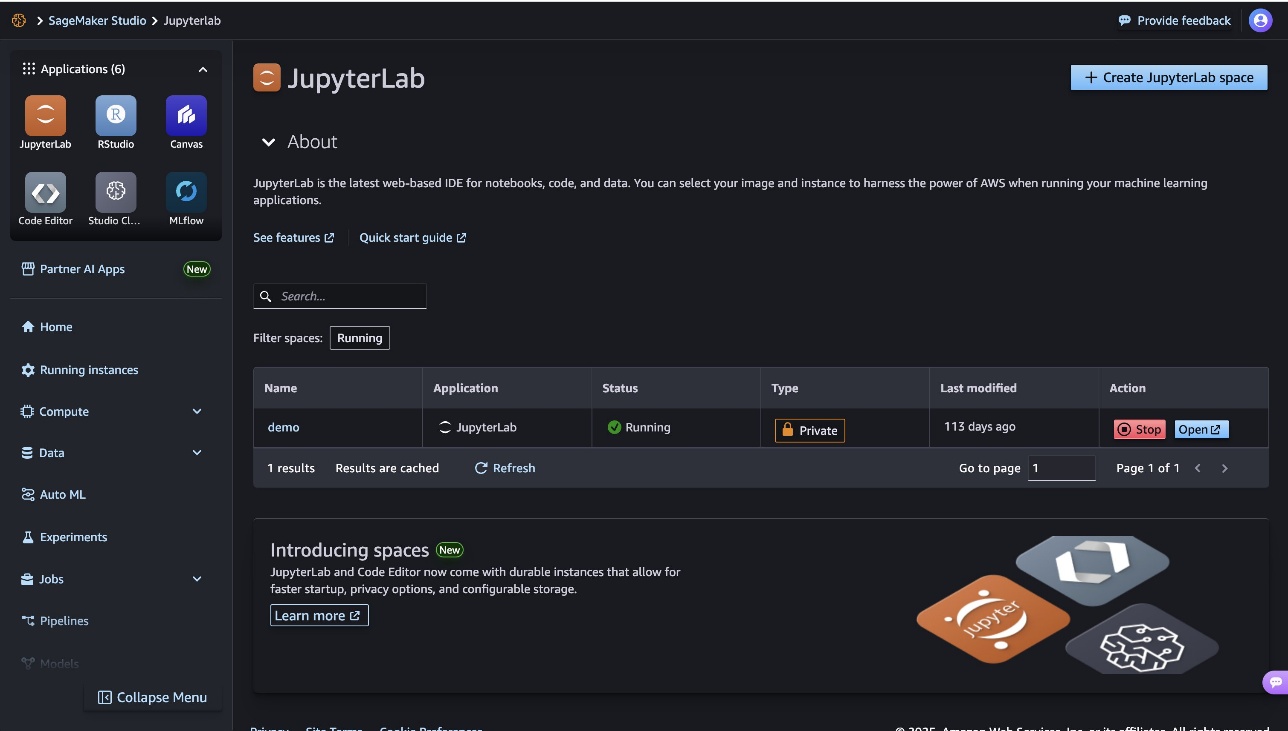

Clean up

To manage your costs and avoid incurring additional workspace charges, make sure you log out of SageMaker Canvas. If you used a Jupyter notebook to test your endpoints, you can shut down your JupyterLab instance by choosing Stop. Alternatively, configure automated shutdown for JupyterLab.

This post showed how to use SageMaker Serverless Inference to deploy SageMaker Canvas models to serverless endpoints. This serverless approach lets you quickly and efficiently serve predictions from SageMaker Canvas models without managing the underlying infrastructure.

This seamless deployment experience is just one example of how AWS services like SageMaker Canvas and SageMaker Serverless Inference simplify your ML journey and help businesses of various sizes and levels of technical sophistication unlock the value of AI and ML. As you continue exploring the SageMaker ecosystem, be sure to learn how to unlock data governance for no-code ML using Amazon DataZone and seamlessly transition between no-code and code-first model development using SageMaker Canvas and SageMaker Studio.

About the authors

Nadiya Polanco is an AWS Solutions Architect based in Brussels, Belgium. In this role, she helps organizations looking to incorporate AI and machine learning into their workloads. In her free time, Nadiya enjoys indulging her passion for coffee and travel.

Brajendra Singh is a Principal Solutions Architect at Amazon Web Services, where he partners with enterprise customers to design and implement innovative solutions. With a strong background in software development, he brings deep expertise in data analytics, machine learning, and generative AI.
