Video semantic search is unlocking new worth throughout industries. The demand for video-first experiences is reshaping how organizations ship content material, and clients count on quick, correct entry to particular moments inside video. For instance, sports activities broadcasters have to floor the precise second a participant scored to ship spotlight clips to followers immediately. Studios want to search out each scene that includes a selected actor throughout hundreds of hours of archived content material to create customized trailers and promotional content material. Information organizations have to retrieve footage by temper, location, or occasion to publish breaking tales quicker than rivals. The purpose is identical: ship video content material to finish customers shortly, seize the second, and monetize the expertise.

Video is of course extra advanced than different modalities like textual content or picture as a result of it amalgamates a number of unstructured indicators: the visible scene unfolding on display screen, the ambient audio and sound results, the spoken dialogue, the temporal info, and the structured metadata describing the asset. A person looking for “a tense automotive chase with sirens” is asking a few visible occasion and an audio occasion on the similar time. A person looking for a selected athlete by identify could also be on the lookout for somebody who seems prominently on display screen however isn’t spoken aloud.

The dominant method immediately grounds all video indicators into textual content, whether or not by transcription, handbook tagging, or automated captioning, after which applies textual content embeddings for search. Whereas this works for dialogue-heavy content material, changing video to textual content inevitably loses vital info. Temporal understanding disappears, and transcription errors emerge from visible and audio high quality points. What should you had a mannequin that would course of all modalities and immediately map them right into a single searchable illustration with out shedding element? Amazon Nova Multimodal Embeddings is a unified embedding mannequin that natively processes textual content, paperwork, photographs, video, and audio right into a shared semantic vector house. It delivers main retrieval accuracy and price effectivity.

On this publish, we present you how you can construct a video semantic search resolution on Amazon Bedrock utilizing Nova Multimodal Embeddings that intelligently understands person intent and retrieves correct video outcomes throughout all sign sorts concurrently. We additionally share a reference implementation you’ll be able to deploy and discover with your personal content material.

Determine 1: Instance screenshot from remaining search resolution

Answer overview

We constructed our resolution on Nova Multimodal Embeddings mixed with an clever hybrid search structure that fuses semantic and lexical indicators throughout all video modalities. Lexical search matches actual key phrases and phrases, whereas semantic search understands which means and context. We are going to clarify our alternative of this hybrid method and its efficiency advantages in later sections.

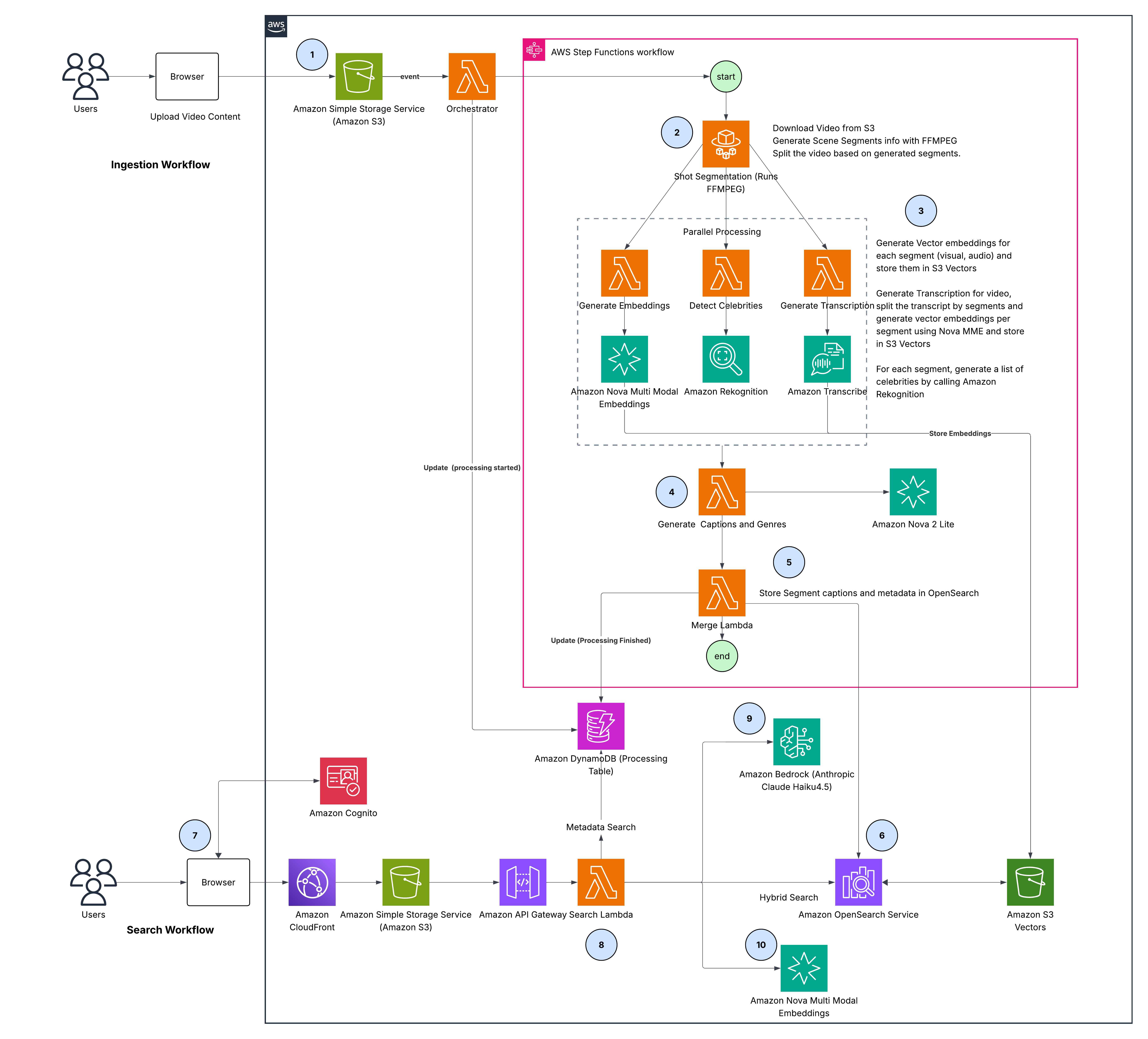

Determine 2: Finish-to-end resolution structure

The structure consists of two phases: an ingestion pipeline (steps 1-6) that processes video into searchable embeddings, and a search pipeline (steps 7-10) that routes person queries intelligently throughout these representations and merges outcomes right into a ranked listing. Listed here are particulars for every of the steps:

- Add – Movies uploaded by way of browser are saved in Amazon Easy Storage Service (Amazon S3), triggering the Orchestrator AWS Lambda to replace Amazon DynamoDB standing and begin the AWS Step Capabilities pipeline

- Shot segmentation – AWS Fargate makes use of FFmpeg scene detection to separate video into semantically coherent segments

- Parallel processing – Three concurrent branches course of every section:

- Embeddings: Nova Multimodal Embeddings generates 1024-dimensional vectors for visible and audio, saved in Amazon S3 Vectors

- Transcription: Amazon Transcribe converts speech to textual content, aligned to segments. Amazon Nova Multimodal Embeddings generates textual content embeddings saved in Amazon S3 Vectors

- Superstar detection: Amazon Rekognition identifies identified people, mapped to segments by timestamp

- Caption & style technology – Amazon Nova 2 Lite synthesizes segment-level captions and style labels from visible content material and transcripts

- Merge – AWS Lambda assembles all metadata (captions, transcripts, celebrities, style) and retrieves embeddings from Amazon S3 Vectors

- Index – Full section paperwork with metadata and vectors which might be bulk-indexed into Amazon OpenSearch Service

- Authentication – Customers authenticate by way of Amazon Cognito and entry the entrance finish by Amazon CloudFront

- Question processing – Amazon API Gateway routes requests to Search Lambda, which executes two parallel operations: intent evaluation and question embedding

- Intent evaluation – Amazon Bedrock (utilizing Anthropic Claude Haiku) assigns relevance weights (0.0-1.0) throughout visible, audio, transcription, and metadata modalities

- Question embedding – Nova Multimodal Embeddings embeds the question 3 times for visible, audio, and transcription similarity search

This versatile structure addresses 4 key design choices that the majority video search programs overlook: sustaining temporal context, dealing with multimodal queries, scaling throughout large content material libraries, and optimizing retrieval accuracy. An entire reference implementation is offered on GitHub, and we encourage you to observe together with the next walkthrough to see how every choice contributes to correct, scalable search throughout all sign sorts.

Segmentation for context continuity

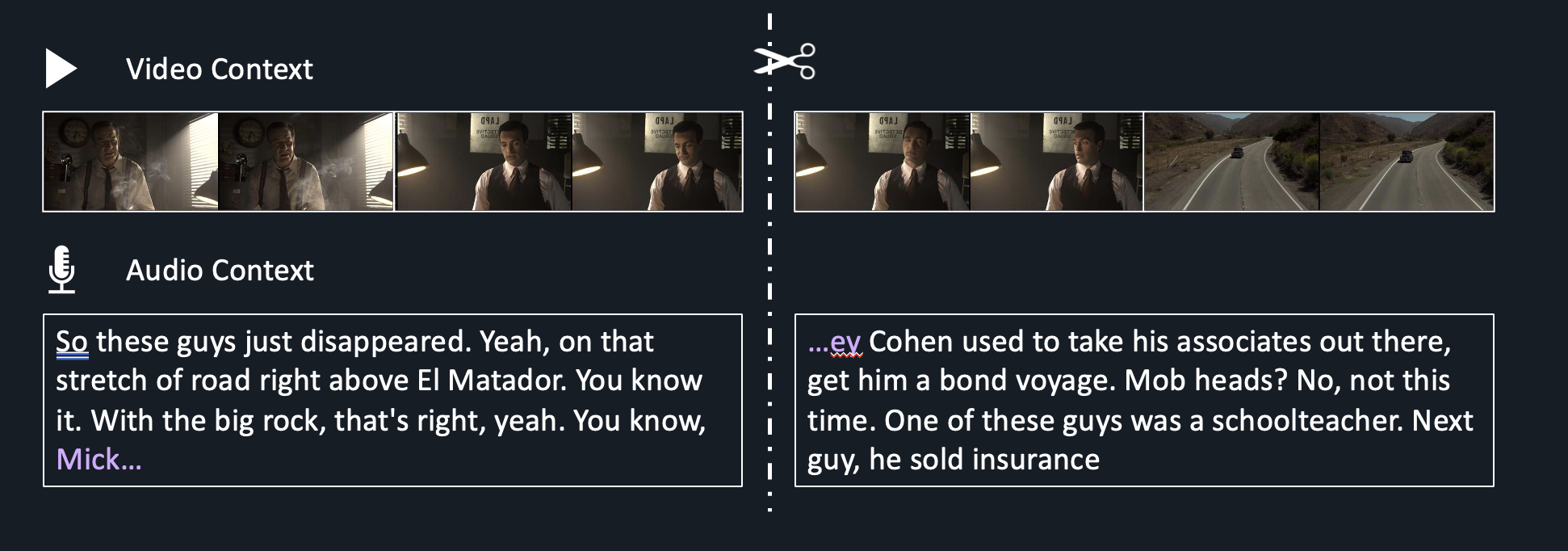

Earlier than producing any embeddings, it’s essential divide your video into searchable items, and the boundaries you draw have a direct affect on search accuracy. Every section turns into the atomic unit of retrieval. If a section is simply too quick, it loses the encompassing context that offers a second its which means. Whether it is too lengthy, it fuses a number of matters or scenes collectively, diluting relevance and making it more durable for the search system to floor the proper second. For simplicity, you can begin with fixed-length chunks. Nova Multimodal Embeddings helps as much as 30 seconds per embedding, supplying you with flexibility to seize full scenes. Nonetheless, remember that fastened boundaries could arbitrarily truncate a scene mid-action or break up a sentence mid-thought, disrupting the semantic which means that makes a second retrievable, as proven within the following determine.

Determine 3: Video segmentation methods

The purpose is semantic continuity: every section ought to signify a coherent unit of which means moderately than an arbitrary slice of time. Mounted 10-second blocks are easy to provide, however they ignore the pure construction of the content material. A scene change mid-segment splits a visible concept throughout two chunks, degrading each retrieval precision and embedding high quality.

To unravel this, we use FFmpeg‘s scene detection to establish the place the visible content material really adjustments. FFmpeg is an open supply multimedia framework extensively used for video processing, format conversion, and evaluation. The _detect_scenes perform that follows runs ffprobe (FFmpeg’s related instrument for media inspection) in opposition to the video and returns an inventory of timestamps, every marking a scene boundary:

The output is an easy listing of timestamps like 12.345, 28.901, 45.678, every marking a pure boundary the place the scene shifts.

With these boundaries in hand, the segmentation algorithm snaps every minimize to the closest scene change inside an appropriate window, focusing on round 10 seconds with a minimal of 5 seconds and a most of 15 seconds from the present begin. If no scene adjustments fall in that vary, it falls again to a tough minimize on the goal period. The result’s a set of segments that really feel pure: 8.3s, 11.1s, 9.8s, 12.4s, 7.6s, every aligned to an actual scene boundary moderately than a set ticker.

This easy shot-based segmentation makes certain section boundaries align with pure visible transitions moderately than reducing arbitrarily. The goal section period ought to be calibrated primarily based in your content material kind and use case: action-heavy content material with frequent cuts could profit from visible segmentation like this, whereas documentary or interview content material with longer takes may fit higher with longer, topic-based segmentation. For extra superior segmentation methods, together with audio-based matter segmentation and mixed visible and audio approaches, we advocate studying Media2Cloud on AWS Steerage: Scene and Ad-Break Detection and Contextual Understanding for Promoting Utilizing Generative AI.

Generate separate embeddings for visible, audio, and transcript indicators

With segments outlined, the selection of embedding mannequin is the place the most important high quality hole opens between approaches. The dominant method immediately grounds all video indicators into textual content earlier than producing embeddings, however as we established earlier, video carries way more which means than any transcript or caption can specific. Visible motion, ambient sound, on-screen textual content, and entity context are both misplaced completely or approximated by imprecise descriptions.

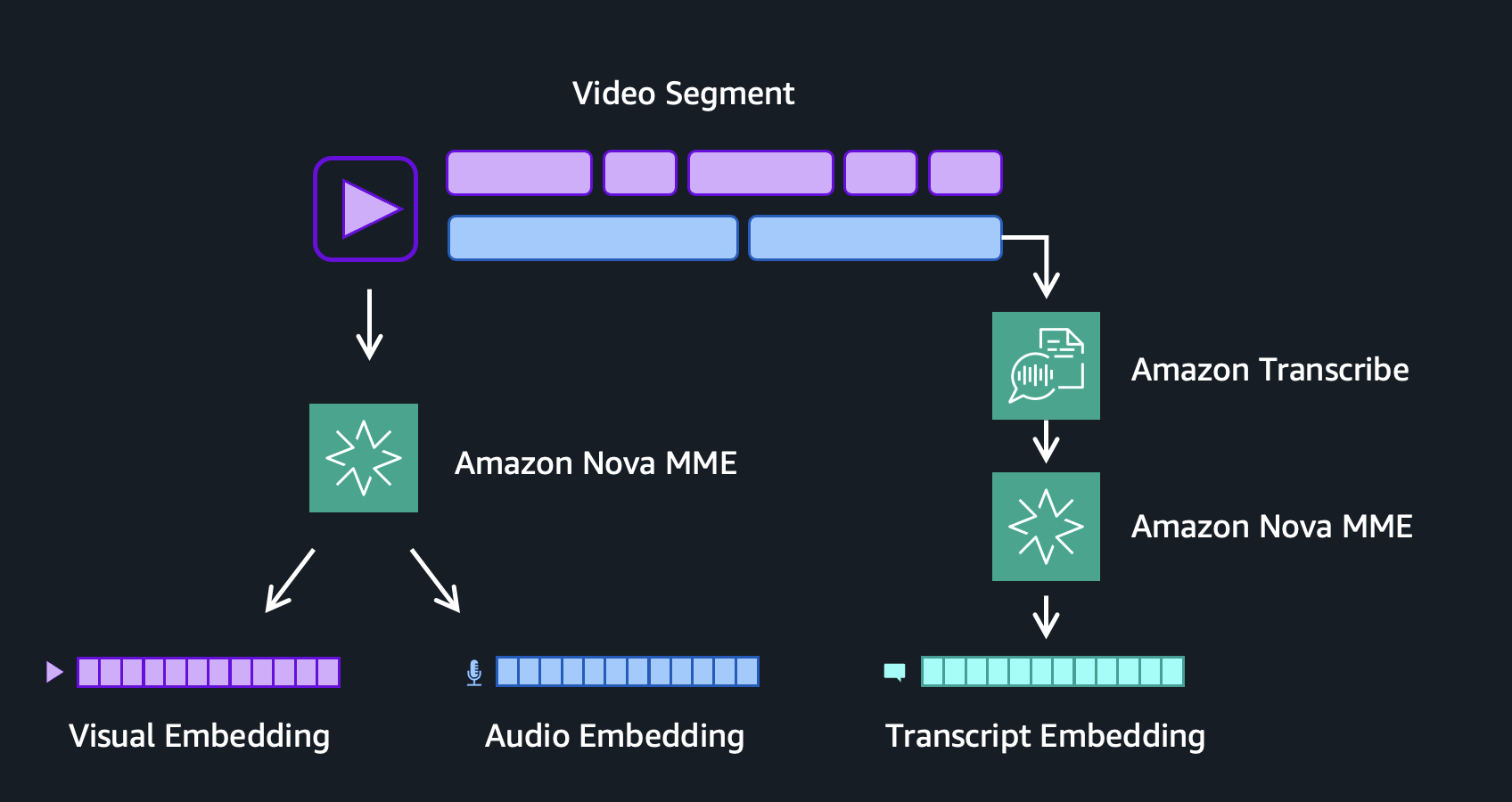

Nova Multimodal Embeddings adjustments this basically as a result of it’s a video-native mannequin that may generate embeddings in two modes. The mixed mode fuses visible and audio indicators right into a unified illustration, capturing an important indicators collectively. This method advantages storage value and retrieval latency by requiring solely a single embedding per section. Alternatively, the AUDIO_VIDEO_SEPARATE mode generates distinct visible and audio embeddings. This method offers most illustration in modality-specific embeddings and provides you higher management over when to go looking visible content material versus audio content material.

In our implementation, we even added a 3rd speech embedding derived from Amazon Transcribe. This embedding is created from aligning full sentence transcripts to the embedding section timestamps, earlier than and after, preserving the semantic integrity of spoken language and guaranteeing {that a} full thought isn’t break up throughout two embeddings.

Determine 4: Visible, audio, and speech embedding technology per video section

Collectively, these three embeddings cowl the complete sign house of a video section. The visible embedding captures what the digicam sees: objects, scenes, actions, colours, and spatial composition. The audio embedding captures what the microphone hears: music, sound results, ambient noise, and the acoustic texture of a scene. The transcript embedding captures what individuals say, representing the semantic which means of spoken dialogue and narration. Collapsing all three indicators right into a single mixed embedding compresses distinct modalities into one vector. This blurs the boundaries between what’s seen, heard, and spoken, and loses the fine-grained element that makes every sign helpful by itself. Holding them separate provides you exact management to dial every modality up or down primarily based on question intent, permitting the search pipeline to match in opposition to the modality probably to comprise the reply.

Mix metadata and embeddings for hybrid search

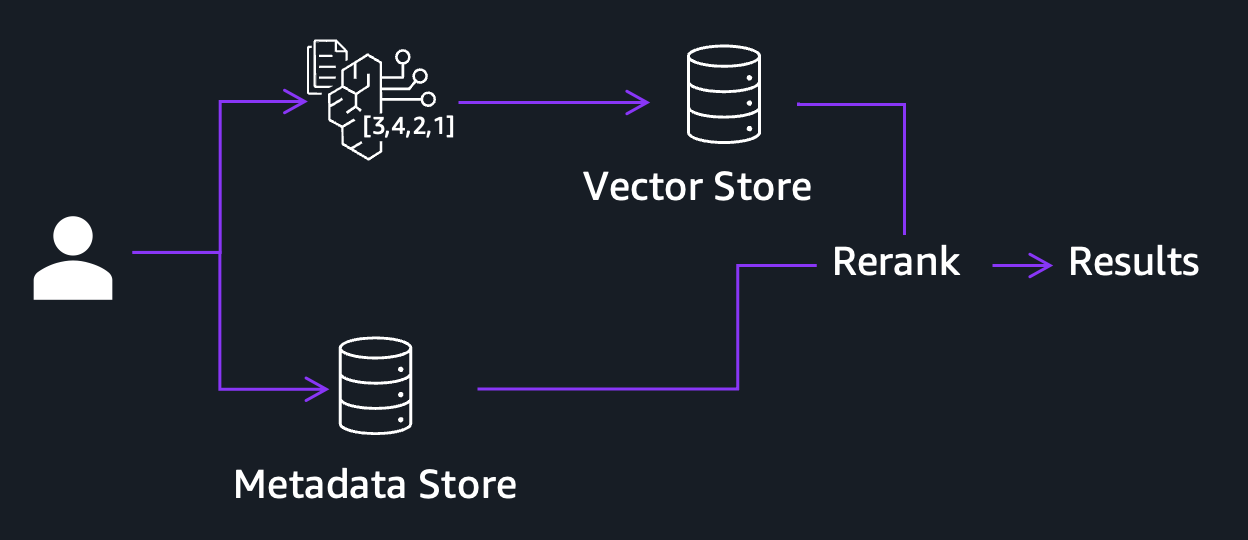

Even with three impartial embeddings protecting visible, audio, and spoken content material, there may be nonetheless a category of queries the system can not reply effectively. Embeddings are designed to seize semantic similarity. They excel at discovering a “tense crowd second” or a “solar setting over water” as a result of these are ideas with wealthy visible and audio which means. However when a person searches for a selected identify, product mannequin quantity, geolocation, or a specific date, embeddings will possible fail. These are discrete entities with little semantic indicators on their very own. That is the place hybrid search is available in. Slightly than counting on embeddings alone, the system runs two parallel retrieval paths as proven within the following determine: a semantic path that matches in opposition to your visible, audio, and transcript embeddings to seize conceptual similarity, and a lexical path that performs actual key phrase and entity matching in opposition to structured metadata.

Determine 5: Hybrid search pipeline combining semantic and lexical retrieval

How a lot metadata do you want? The reply depends upon your content material kind, group, and use case, and capturing all the things upfront is impractical. For illustration functions, we chosen a number of classes of metadata to signify widespread kinds of metadata in media and leisure content material.

First, we chosen video title and datetime to signify technical metadata extracted immediately from the content material catalog or file metadata. Then we added section captions, style, and celeb recognition to signify contextual metadata, generated utilizing Amazon Nova 2 Lite and Amazon Rekognition. Captions are generated from the video and transcript of every section, giving the mannequin each visible and spoken context. Style is predicted from the complete video transcript throughout all segments, which is cheaper and extra dependable than re-sending all video clips. Superstar identification is dealt with by Amazon Rekognition, which acknowledges identified public figures showing on display screen with out requiring customized coaching.

Instance prompts used for caption technology and style classification are proven within the following examples:

The idea extends naturally to different metadata sorts. Technical metadata could embrace decision or file dimension, whereas contextual metadata may embrace location, temper, or model. The suitable stability depends upon your search use case. Moreover, overlaying metadata filters throughout retrieval can additional improve search scalability and accuracy by narrowing the search house earlier than semantic matching.

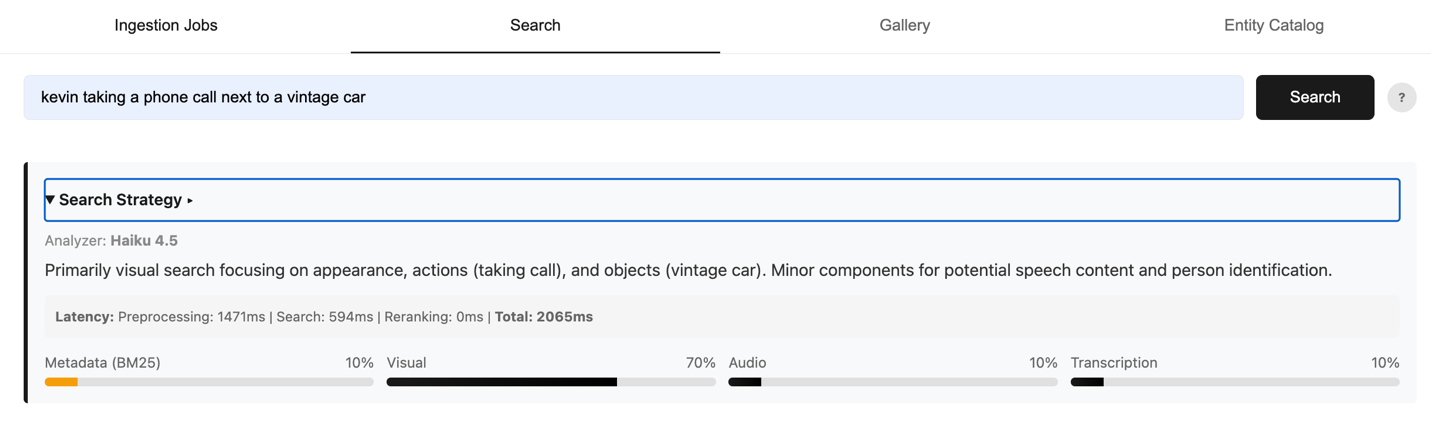

Optimize search relevance with intent-aware question routing

Now you have got three embeddings and metadata, 4 searchable dimensions. However how have you learnt when to make use of which for a given question? Intent is all the things. To unravel this, we constructed an clever intent evaluation router that makes use of the Haiku mannequin to research every incoming question and assign weight to every modality channel: visible, audio, transcript, and metadata. See the instance search question within the following determine.

“Kevin taking a cellphone name subsequent to a classic automotive”

Determine 6: Instance question with intelligently weights assigned primarily based on search intent

The Haiku mannequin is prompted to return a JSON object with weights that sum to 1.0, together with a short reasoning hint explaining the project. See the next immediate:

The weights immediately management which sub-queries execute. Any modality beneath a 5% weight threshold is skipped completely, eliminating pointless embedding API calls and decreasing search latency with out sacrificing accuracy. The remaining channels execute in parallel, every looking its personal index independently. Outcomes from all lively channels are then scored utilizing a weighted arithmetic imply. BM25 scores (a lexical relevance measure primarily based on time period frequency and doc size) and cosine similarity scores (a geometrical measure of how intently two embedding vectors level in the identical path) dwell on very completely different scales. To deal with this, every sub-query’s scores are first normalized to a 0-1 vary, then mixed utilizing the router’s intent weights:

We selected the weighted arithmetic imply as our reranking method as a result of it immediately incorporates question intent by the router’s weights. Not like Reciprocal Rank Fusion (RRF), which treats all lively channels equally no matter intent, the weighted imply amplifies channels the router deems most related for a given question. From our testing, this produced extra correct outcomes for our search duties.

Select the proper storage technique for vectors and metadata

The ultimate design choice is the place and how you can retailer all of it. Every video section produces as much as three embeddings and a set of metadata fields, and the way you retailer them determines each your search efficiency and your value at scale. We break up this throughout two providers with complementary roles: Amazon S3 Vectors for vector storage, and Amazon OpenSearch Service for hybrid search.

S3 Vectors shops three vector indices per undertaking, one for every embedding kind:

nova-visual-{project_id}# visible embeddingsnova-audio-{project_id}# audio embeddingsnova-transcription-{project_id}# transcript embeddings

OpenSearch holds one index per undertaking, the place every doc represents a single video section containing each textual content fields for BM25 search and vector fields for k-nearest neighbors (kNN) search:

We selected S3 Vectors for its cost-to-performance advantages. Amazon S3 Vectors reduces the fee for storing and querying vectors by as much as 90% in comparison with various specialised options. If search latency just isn’t vital in your use case, S3 Vectors is a powerful default alternative. For those who want the bottom potential latency, we advocate utilizing vectors in reminiscence with the OpenSearch Hierarchical Navigable Small World (HNSW) engine.

Lastly, it’s value calling out that some use circumstances require looking inside longer, semantically dense video segments corresponding to a full interview, a multi-minute documentary scene, or an prolonged product demonstration. Most multimodal embedding fashions, together with Nova Multimodal Embeddings, have a most enter period of 30 seconds, which suggests a 3-minute clip can’t be embedded as a single unit. Trying to take action would both fail or pressure chunking that loses the broader context.

The nested vector assist in OpenSearch addresses this by permitting a single doc to comprise a number of sub-segment embeddings:

At question time, OpenSearch scores the doc primarily based on the best-matching sub-segment moderately than a single averaged illustration, so an extended scene can match a selected visible second inside it whereas nonetheless being returned as one coherent consequence.

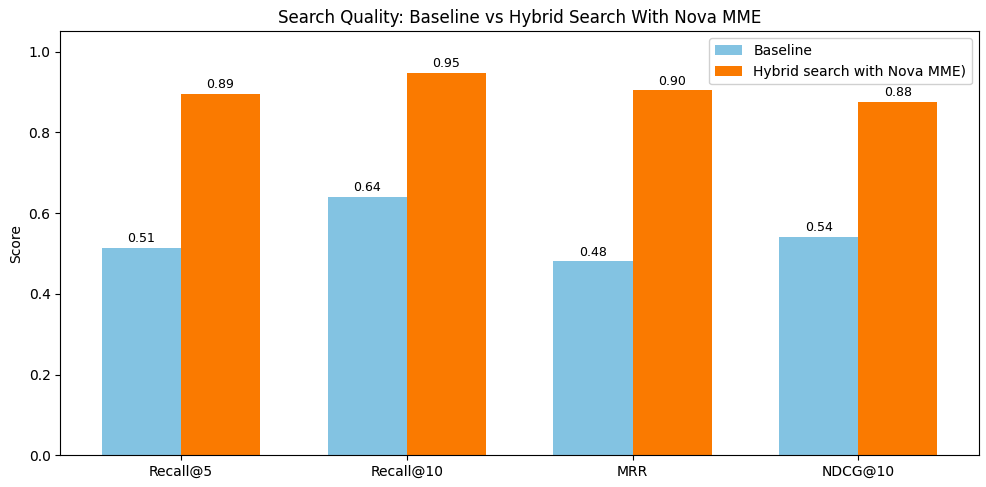

Efficiency outcomes: How the optimized method outperforms the baseline

To validate our design choices, we benchmarked the optimized hybrid search in opposition to Nova Multimodal Embeddings baseline AUDIO_VIDEO_COMBINED mode utilizing 10 inside long-form movies (5-20 minutes) evaluated throughout 20 queries spanning visible, audio, transcript, and metadata-focused searches. The baseline makes use of a single unified vector per 10-second section with one index and one kNN question. Our optimized method generates separate visible, audio, and transcript embeddings, enriches segments with structured metadata, and applies intent-aware routing that dynamically weights modality channels. The next determine exhibits outcomes throughout 4 commonplace retrieval metrics:

Determine 7: Efficiency Comparability Throughout Retrieval Metrics for Hybrid Search with Nova MME vs. Baseline

The next desk captures key metrics:

| Recall@5 | Recall@10 | MRR | NDCG@10 | |

| Hybrid search W/ Nova Multimodal Embeddings | 90% | 95% | 90% | 88% |

| Baseline | 51% | 64% | 48% | 54% |

Key metrics defined:

- Recall@5: Of all related segments, what fraction seems within the prime 5 outcomes? This implies the protection of the search outcomes.

- Recall@10: Of all related segments, what fraction seems within the prime 10 outcomes? This implies the protection of the search outcomes.

- MRR (Imply Reciprocal Rank): 1/rank of the primary related consequence, averaged throughout queries. This measures how shortly you discover one thing related.

- NDCG@10: Normalized Discounted Cumulative Achieve rewards related outcomes ranked increased and penalizes these ranked decrease. It is a commonplace rating high quality metric.

The outcomes present substantial enhancements throughout all metrics. The optimized hybrid search achieved 90+% Recall@5 and Recall@10 versus 51% and 64% for the baseline (~40% elevate on protection accuracy). MRR jumped from 48% to 90%, and NDCG@10 rose from 54% to 88%. These 30-40 proportion level beneficial properties validate our core architectural choices: semantic segmentation preserves content material continuity, separate embeddings present exact search management, metadata enrichment captures factual entities, and intent-aware routing makes certain the proper indicators drive every question. By treating every modality independently whereas intelligently combining them primarily based on question intent, the system adapts to various search patterns and delivers persistently related outcomes as your video archive scales.

Clear up

To keep away from incurring future prices, delete the sources used on this resolution by eradicating the AWS CloudFormation stack. For detailed instructions, discuss with the GitHub repository.

Conclusion

On this publish, we confirmed how you can construct a video semantic search resolution on AWS utilizing Nova Multimodal Embeddings, protecting 4 key design choices: segmentation for semantic continuity, multimodal embeddings that seize visible, audio, and speech indicators independently, metadata that fills the precision hole for entity-specific queries, and an information construction that organizes all the things for environment friendly retrieval at scale. Along with an clever intent evaluation router and weighted reranking, these choices rework a fragmented set of indicators right into a unified, correct search expertise that understands video. Extra optimizations may be achieved to additional tune search accuracy, together with mannequin customization for the intent routing layer. Learn Half 2 to go deeper on these methods. For a production-ready implementation of this video search and metadata administration method at scale, see the Steerage for a Media Lake on AWS.

In regards to the authors