In the current computer vision landscape, the standard operating procedure is a modular "Lego brick" approach: a pre-trained vision encoder for feature extraction combined with a separate decoder for task prediction. Although effective, this architectural separation complicates scaling and creates a bottleneck for the interaction between language and vision.

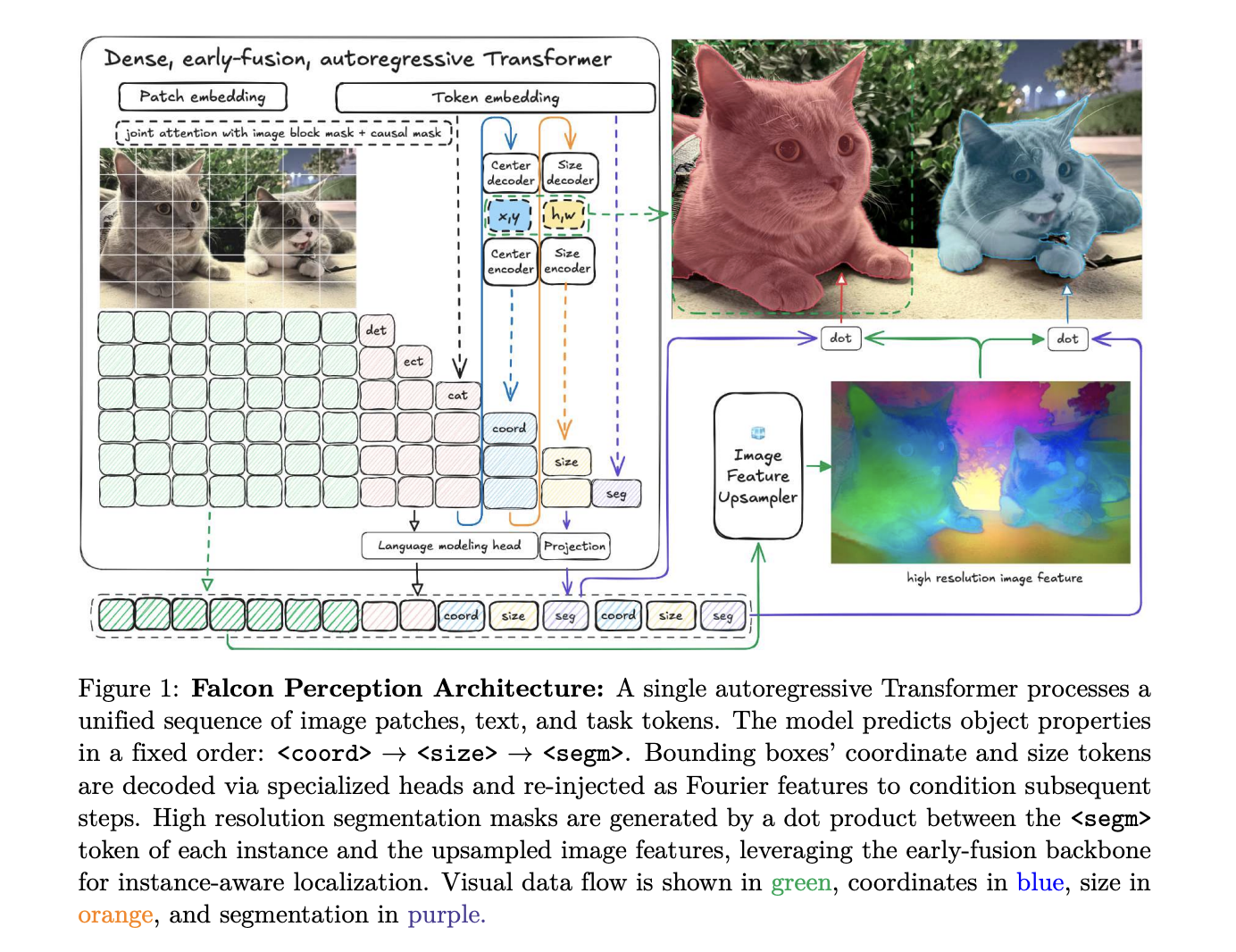

The research team at the Technology Innovation Institute (TII) is challenging this paradigm with Falcon Perception, a unified dense Transformer with 600M parameters. By processing image patches and text tokens in a shared parameter space from the first layer, the TII research team has built an early-fusion stack that handles perception and task modeling efficiently.

Architecture: A single stack for all modalities

Falcon Perception's core design is built on the hypothesis that a single Transformer can simultaneously learn visual representations and perform task-specific generation.

Hybrid attention and GGRoPE

Unlike standard language models that use strict causal masking, Falcon Perception uses a hybrid attention strategy. Image tokens attend to each other bidirectionally to build a global visual context, while text and task tokens attend to all preceding tokens (causal masking) to enable autoregressive prediction.
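The masking rule described above can be sketched in a few lines of plain Python. This is an illustration only, assuming a single image whose patches come before the text and task tokens; the function name `hybrid_attention_mask` and the boolean-list input are hypothetical and not taken from the Falcon Perception code.

```python
def hybrid_attention_mask(is_image):
    """Return mask[q][k] == True when query token q may attend to key token k.

    is_image: list of bools, one per token in the flattened sequence
    (assumption: image patches first, then text and task tokens).
    """
    n = len(is_image)
    mask = [[False] * n for _ in range(n)]
    for q in range(n):
        for k in range(n):
            causal = k <= q                           # text/task rule: only the past
            both_image = is_image[q] and is_image[k]  # image rule: full visibility
            mask[q][k] = causal or both_image
    return mask


# Toy sequence: 3 image patches followed by 2 text/task tokens.
tokens = [True, True, True, False, False]
mask = hybrid_attention_mask(tokens)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# xxx..
# xxx..
# xxx..
# xxxx.
# xxxxx
```

The top-left 3x3 block is fully filled (bidirectional image attention), while the last two rows are lower-triangular (causal text and task attention).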

To maintain 2D spatial relationships in the flattened sequence, the research team uses 3D Rotary Positional Embeddings. This decomposes the head dimension into a sequential component and a spatial component, the latter using Golden Gate RoPE (GGRoPE). GGRoPE allows attention heads to attend to relative positions along arbitrary angles, making the model robust to rotation and aspect-ratio variations.
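To make the "relative position along any angle" idea concrete, here is a minimal sketch of a directional 2D rotary embedding. It is not the paper's formulation: the golden-ratio angle spacing, the frequency schedule, the `base` value, and all function names are assumptions chosen for illustration, and the sequential component of the 3D scheme is left out. The sketch only demonstrates the property that matters, namely that the attention score depends on the offset between two patches rather than on their absolute positions.

```python
import math

GOLDEN_RATIO = (1 + math.sqrt(5)) / 2


def directional_phases(x, y, num_pairs, base=100.0):
    """One rotation phase per channel pair for a patch at (x, y).

    Assumed schedule: pair i projects the position onto its own direction,
    with directions spread over [0, pi) by a golden-ratio sequence.
    """
    phases = []
    for i in range(num_pairs):
        angle = math.pi * ((i / GOLDEN_RATIO) % 1.0)
        freq = base ** (-i / num_pairs)
        phases.append(freq * (x * math.cos(angle) + y * math.sin(angle)))
    return phases


def rotate(vec, phases):
    """Rotate each consecutive channel pair of vec by its phase."""
    out = []
    for i, phase in enumerate(phases):
        a, b = vec[2 * i], vec[2 * i + 1]
        c, s = math.cos(phase), math.sin(phase)
        out += [a * c - b * s, a * s + b * c]
    return out


def score(q, q_pos, k, k_pos):
    """Dot product of position-rotated query and key vectors."""
    num_pairs = len(q) // 2
    rq = rotate(q, directional_phases(q_pos[0], q_pos[1], num_pairs))
    rk = rotate(k, directional_phases(k_pos[0], k_pos[1], num_pairs))
    return sum(a * b for a, b in zip(rq, rk))


q = [0.3, -1.2, 0.7, 0.5, -0.4, 0.9, 1.1, -0.6]
k = [1.0, 0.2, -0.8, 0.4, 0.6, -0.3, 0.5, 0.7]
s1 = score(q, (2, 5), k, (4, 1))
s2 = score(q, (12, 25), k, (14, 21))  # both positions shifted by (10, 20)
print(abs(s1 - s2) < 1e-9)
```

Because each phase is linear in the patch position, shifting both patches by the same offset leaves the score unchanged, and because every channel pair looks along a different direction, no single axis is privileged.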

Minimalist sequence logic

The basic architectural sequence follows a Chain-of-Perception format:

[Image] [Text] <coord> <size> <seg> ... <eos>.

This ensures that the model resolves spatial ambiguities (position and size) as conditioning signals before generating the final segmentation mask.

Engineering for scale: Muon, FlexAttention, and raster ordering

The TII research team introduced several optimizations to stabilize training and maximize GPU utilization for these heterogeneous sequences.

- Muon optimization: The research team reports that using the Muon optimizer for the specialized heads (coordinates, size, segmentation) lowered training loss compared with standard AdamW and improved benchmark performance.

- FlexAttention and sequence packing: To process images at their native resolution without wasting compute on padding, the model uses a scatter-and-pack strategy. Valid patches are packed into fixed-length blocks, and FlexAttention is used to restrict self-attention to the boundaries of each image sample.

- Raster ordering: When multiple objects are present, Falcon Perception predicts them in raster order (top to bottom, left to right). This was found to converge faster and yield lower coordinate loss than random or size-based ordering.
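Two of the ideas in this list are easy to illustrate without any deep-learning library. The sketch below packs samples into one fixed-length block, defines the "same sample only" attention rule, and sorts object centres in raster order. All names (`pack_into_block`, `may_attend`, `raster_order`) are hypothetical, the packing is a simple end-to-end layout rather than the team's actual strategy, and the exact tie-breaking used for raster ordering is an assumption. In PyTorch's FlexAttention, a rule like `may_attend` would typically be expressed as a mask function over query and key indices.

```python
def pack_into_block(sample_lengths, block_size):
    """Lay samples end to end in one fixed-length block.

    Returns one sample id per slot; -1 marks unused padding slots.
    """
    ids = []
    for sample_id, length in enumerate(sample_lengths):
        ids.extend([sample_id] * length)
    if len(ids) > block_size:
        raise ValueError("samples do not fit in one block")
    return ids + [-1] * (block_size - len(ids))


def may_attend(sample_ids, q_idx, kv_idx):
    """Allow attention only between real tokens of the same sample."""
    same_sample = sample_ids[q_idx] == sample_ids[kv_idx]
    return same_sample and sample_ids[q_idx] != -1


def raster_order(centres):
    """Sort (x, y) object centres top to bottom, then left to right."""
    return sorted(centres, key=lambda c: (c[1], c[0]))


sample_ids = pack_into_block([3, 2], block_size=6)
print(sample_ids)                     # [0, 0, 0, 1, 1, -1]
print(may_attend(sample_ids, 0, 2))   # True: both tokens belong to sample 0
print(may_attend(sample_ids, 2, 3))   # False: crosses a sample boundary
print(raster_order([(50, 80), (10, 20), (70, 20)]))
# [(10, 20), (70, 20), (50, 80)]
```

Packing keeps the block full of useful tokens, and the mask keeps the packed samples from leaking information into one another.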

Training recipe: From distillation to 685 GT

The model is initialized with multi-teacher distillation, distilling knowledge from DINOv3 (ViT-H) for local features and SigLIP2 (So400m) for language-aligned features. Following initialization, the model goes through a three-stage perception training pipeline totaling roughly 685 Gigatokens (GT):

- In-context listing (450 GT): Learning to "list" the scene inventory to build global context.

- Task alignment (225 GT): Transitioning to independent-query tasks, using query masking to ensure the model grounds each query solely on the image.

- Long-context fine-tuning (10 GT): A short adaptation for extreme density, raising the mask limit to 600 per expression.

These stages use task-specific serialization:

<image>expr1<present><coord><size><seg> <eoq>expr2<absent> <eoq> <eos>.

The <present> and <absent> tokens force the model to commit to a binary decision about an object's existence before localization.
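As a rough illustration of this serialization, the sketch below builds the target string for a list of queries, writing the special tokens as `<image>`, `<present>`, `<absent>`, `<coord>`, `<size>`, `<seg>`, `<eoq>` and `<eos>`. The helper name `serialize_queries` is hypothetical, whitespace between tokens is omitted, and emitting one coordinate/size/mask triplet per instance after a single `<present>` token is an assumption about how multiple instances are laid out.

```python
def serialize_queries(queries):
    """Build a training target string.

    queries: list of (expression, num_instances) pairs; num_instances == 0
    means the expression does not match anything in the image.
    """
    parts = ["<image>"]
    for expression, num_instances in queries:
        parts.append(expression)
        if num_instances == 0:
            # Existence decision comes first: nothing to localize.
            parts.append("<absent>")
        else:
            parts.append("<present>")
            # Position, then size, then mask for each instance (assumed layout).
            parts.extend(["<coord><size><seg>"] * num_instances)
        parts.append("<eoq>")
    parts.append("<eos>")
    return "".join(parts)


target = serialize_queries([("red mug", 1), ("laptop", 0)])
print(target)
# <image>red mug<present><coord><size><seg><eoq>laptop<absent><eoq><eos>
```

The ordering is the point: the existence token precedes any localization tokens, and position and size precede the mask, so each later prediction is conditioned on the earlier, coarser decision.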

PBench: Profiling capabilities beyond saturated baselines

To measure progress, the TII research team introduced PBench, a benchmark that disentangles model failure modes by organizing samples into five levels of semantic complexity.

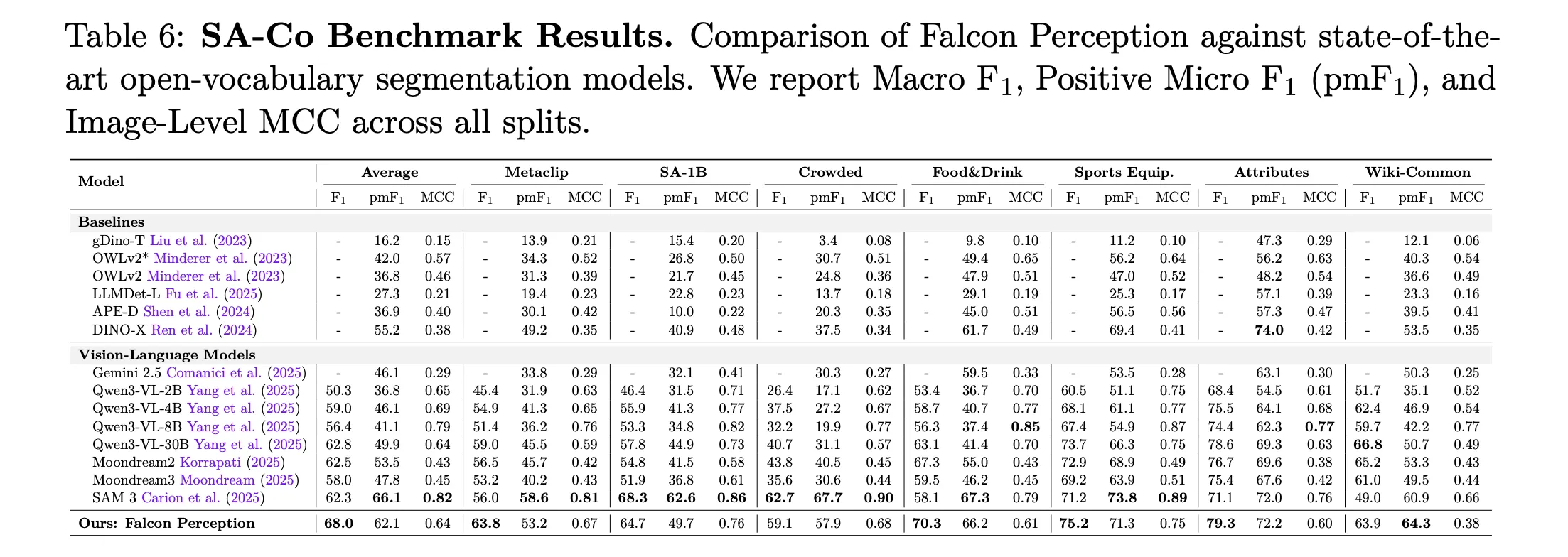

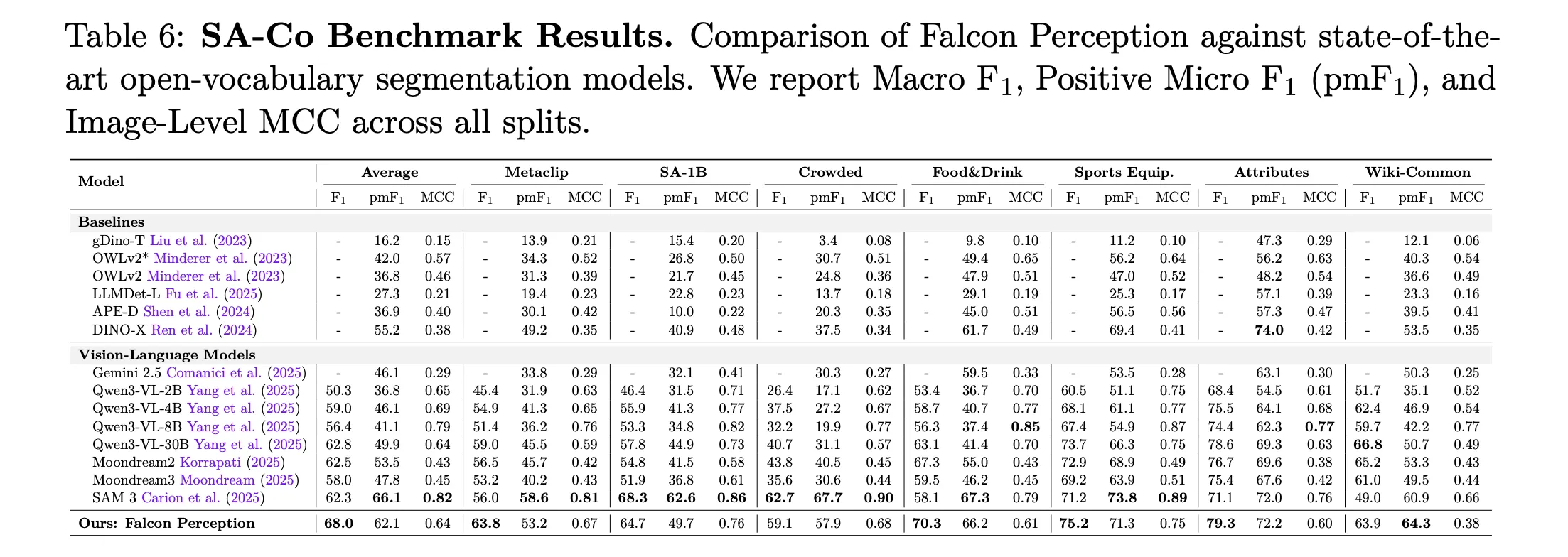

Key results: Falcon Perception vs. SAM 3 (Macro-F1)

| Benchmark split | SAM 3 | Falcon Perception (600M) |
| --- | --- | --- |
| L0: Simple objects | 64.3 | 65.1 |
| L1: Attributes | 54.4 | 63.6 |
| L2: OCR-guided | 24.6 | 38.0 |
| L3: Spatial understanding | 31.6 | 53.5 |
| L4: Relations | 33.3 | 49.1 |
| Dense split | 58.4 | 72.6 |

Falcon Perception significantly outperforms SAM 3 on complex semantic tasks, most notably with a +21.9-point gain on spatial understanding (Level 3).
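The per-split gaps can be recomputed directly from the table. The short script below does only that arithmetic; the scores are copied from the table and the dictionary keys are shorthand labels.

```python
# Macro-F1 scores copied from the table: (SAM 3, Falcon Perception 600M).
scores = {
    "L0": (64.3, 65.1),
    "L1": (54.4, 63.6),
    "L2": (24.6, 38.0),
    "L3": (31.6, 53.5),
    "L4": (33.3, 49.1),
    "Dense": (58.4, 72.6),
}

# Gain of Falcon Perception over SAM 3, rounded to one decimal place.
gains = {split: round(falcon - sam3, 1) for split, (sam3, falcon) in scores.items()}

for split, gain in gains.items():
    print(f"{split}: {gain:+.1f}")
# L0: +0.8
# L1: +9.2
# L2: +13.4
# L3: +21.9
# L4: +15.8
# Dense: +14.2
```

The gap is under one point on simple objects and grows to double digits once OCR, spatial reasoning, or dense scenes are involved.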

Falcon OCR: A 300M-parameter document specialist

The TII team extended this early-fusion recipe to Falcon OCR, a compact 300M-parameter model initialized from scratch to prioritize fine-grained glyph recognition. Falcon OCR is competitive with several larger proprietary and modular OCR systems.

- olmOCR: Achieves 80.3% accuracy, matching or exceeding Gemini 3 Pro (80.2%) and GPT 5.2 (69.8%).

- OmniDocBench: Reaches an overall score of 88.64, ahead of GPT 5.2 (86.56) and Mistral OCR 3 (85.20) but behind the top modular pipeline, PaddleOCR VL 1.5 (94.37).

Key takeaways

- Unified early-fusion architecture: Falcon Perception replaces modular encoder/decoder pipelines with a single dense Transformer that processes image patches and text tokens in a shared parameter space from the first layer. It uses a hybrid attention mask (bidirectional for visual tokens and causal for task tokens) to act simultaneously as a vision encoder and an autoregressive decoder.

- Chain-of-Perception sequence: The model serializes instance segmentation into a structured sequence. This forces spatial position and size to be resolved as conditioning signals before the pixel-level mask is generated.

- Specialized heads and GGRoPE: To handle dense spatial data, the model uses Fourier feature encoders for high-dimensional coordinate mapping and Golden Gate RoPE (GGRoPE) to enable isotropic 2D spatial attention. The Muon optimizer is applied to these specialized heads to balance their learning rates against the pre-trained backbone.

- Improved semantic performance: On the new PBench benchmark, which disentangles semantic capabilities (Levels 0-4), the 600M model shows significant gains over SAM 3 in complex categories, including a +13.4-point lead on OCR-guided queries and a +21.9-point lead on spatial understanding.

- Highly efficient OCR extension: The architecture scales down to Falcon OCR, a 300M-parameter model that achieves 80.3% on olmOCR and 88.64 on OmniDocBench. It matches or exceeds the accuracy of much larger systems such as Gemini 3 Pro and GPT 5.2 while maintaining high throughput for large-scale document processing.
