This post was co-authored with Abdullahi Olaoye, Curtis Lockhart, and Nirmal Kumar Juluru from NVIDIA.

We’re happy to announce that NVIDIA Nemotron 3 Nano is now available as a fully managed, serverless model on Amazon Bedrock. This follows our earlier announcement at AWS re:Invent of support for the NVIDIA Nemotron 2 Nano 9B and NVIDIA Nemotron 2 Nano VL 12B models.

With NVIDIA Nemotron open models on Amazon Bedrock, you can accelerate innovation and deliver tangible business value without managing infrastructure complexity. You can power your generative AI applications with Nemotron’s capabilities through Amazon Bedrock inference and take advantage of its extensive features and tooling.

In this post, we explore the technical characteristics of the NVIDIA Nemotron 3 Nano model and discuss potential application use cases. We also provide technical guidance to help you get started using this model for your generative AI applications within your Amazon Bedrock environment.

About Nemotron 3 Nano

NVIDIA Nemotron 3 Nano is a small language model (SLM) with a hybrid Mixture-of-Experts (MoE) architecture. It delivers high compute efficiency and accuracy that developers can use to build specialized agentic AI systems. The model is fully open, with open weights, datasets, and recipes, which promotes transparency and trust for developers and enterprises. Compared to other similarly sized models, Nemotron 3 Nano excels at coding and reasoning tasks, leading in benchmarks such as SWE Bench Verified, AIME 2025, Arena Hard v2, and IFBench.

Model overview:

- Architecture:

  - Mixture-of-Experts (MoE) with a hybrid Transformer-Mamba architecture

  - Supports a token budget to provide accuracy while avoiding overthinking

- Accuracy:

  - Excellent accuracy in coding, scientific reasoning, math, tool calling, instruction following, and chat

  - Nemotron 3 Nano leads on benchmarks such as SWE Bench, AIME 2025, Humanity’s Last Exam, IFBench, RULER, and Arena Hard (compared to other open MoE language models of 30 billion parameters or fewer)

- Model size: 30B (with 3B active parameters)

- Context length: 256K

- Model input: text

- Model output: text

Nemotron 3 Nano combines Mamba, Transformer, and Mixture-of-Experts layers into a single backbone to help balance efficiency, reasoning accuracy, and scale. Mamba enables long-range sequence modeling with low memory overhead, while the Transformer layers help provide precise attention for structured reasoning tasks such as code, math, and planning. MoE routing further improves scalability by activating only a subset of experts per token, which helps improve latency and throughput. This makes Nemotron 3 Nano particularly suitable for agent clusters that run many lightweight workflows concurrently.
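To make the idea of activating only a subset of experts per token concrete, here is a toy top-k routing sketch in plain Python. It is an illustration of the general MoE routing technique, not Nemotron 3 Nano’s actual router: the function names, the four stand-in experts, and the gate scores are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of gate scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so that the selected experts sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, gate_scores, k=2):
    """Run only the selected experts and combine their outputs by weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))


# Hypothetical experts: simple scalar functions standing in for expert networks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
# Hypothetical gate scores for one token; a real router computes these from the token.
gate_scores = [0.1, 2.0, -1.0, 2.0]

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)
```

Only two of the four experts run for this token, which is the source of the latency and throughput benefit described above: compute scales with the number of active experts, not the total.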

For more information on the Nemotron 3 Nano architecture and how it was trained, see Inside NVIDIA Nemotron 3: Techniques, Tools, and Data That Make It Efficient and Accurate.

Model benchmarks

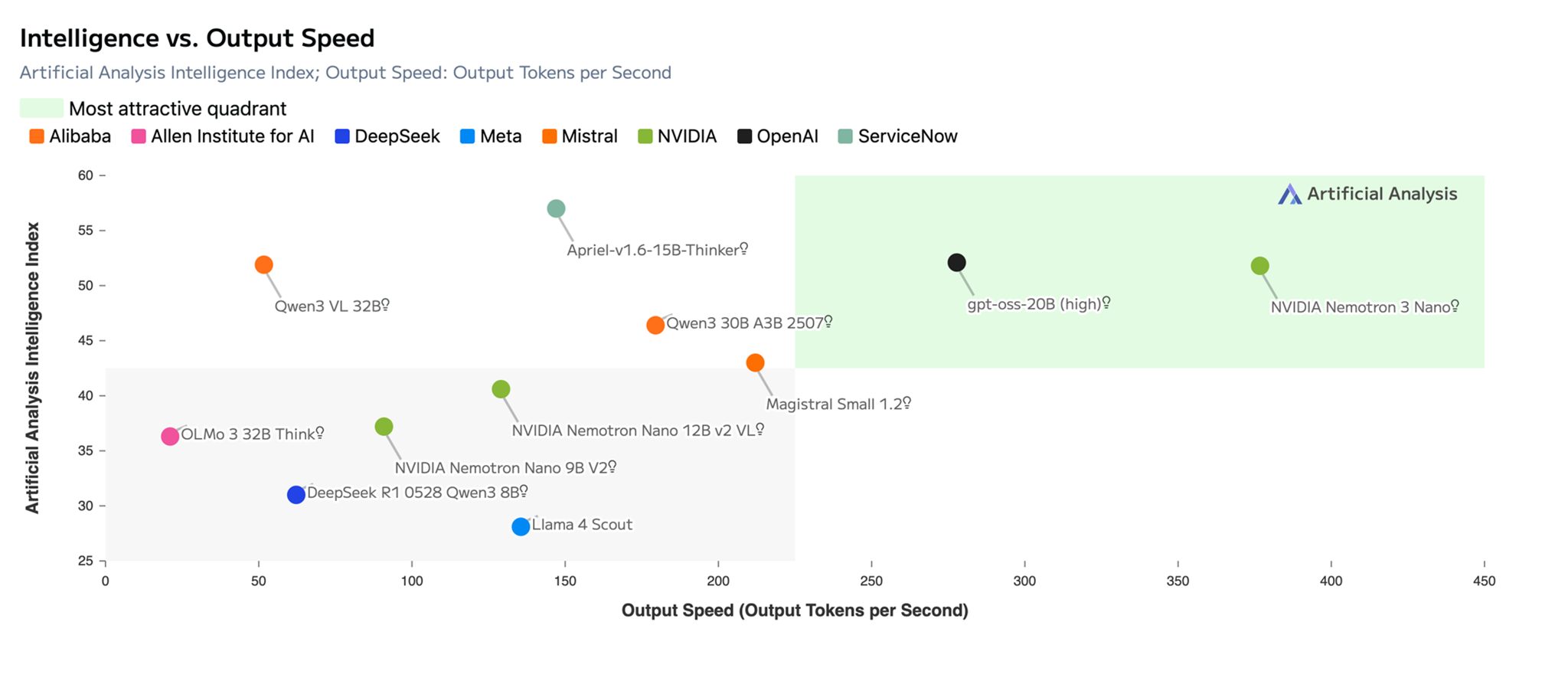

Nemotron 3 Nano leads in the most attractive quadrant of the Artificial Analysis Openness Index vs. Intelligence Index chart. Why openness matters: Openness builds trust through transparency. Developers and enterprises can build with confidence on Nemotron, which provides clear visibility into models, data pipelines, and data characteristics for straightforward auditing and governance.

Title: Chart showing that Nemotron 3 Nano is in the most attractive quadrant of the Artificial Analysis Openness Index vs. Intelligence Index (source: Artificial Analysis)

Nemotron 3 Nano provides leading accuracy with the highest efficiency among open models, scoring 52 points on the Artificial Analysis Intelligence vs. Output Speed Index, significantly higher than the previous Nemotron 2 Nano model. As agentic AI increases the demand for tokens, the ability to “think fast” (arriving at the right answer quickly while using fewer tokens) is important. Nemotron 3 Nano delivers high throughput with its efficient hybrid Transformer-Mamba and MoE architecture.

Title: NVIDIA Nemotron 3 Nano provides the highest efficiency with leading accuracy among open models, with a score of 52 points on the Artificial Analysis Intelligence vs. Output Speed Index (source: Artificial Analysis)

NVIDIA Nemotron 3 Nano use cases

Nemotron 3 Nano helps power a variety of use cases across industries. Examples include:

- Finance – Accelerate loan processing by extracting data, analyzing income patterns, and detecting fraud, which reduces cycle times and risk.

- Cybersecurity – Automatically triage vulnerabilities, perform in-depth malware analysis, and proactively hunt for security threats.

- Software development – Assist with tasks such as code summarization.

- Retail – Optimize inventory management and enhance in-store service with real-time, personalized product recommendations and support.

Try NVIDIA Nemotron 3 Nano on Amazon Bedrock

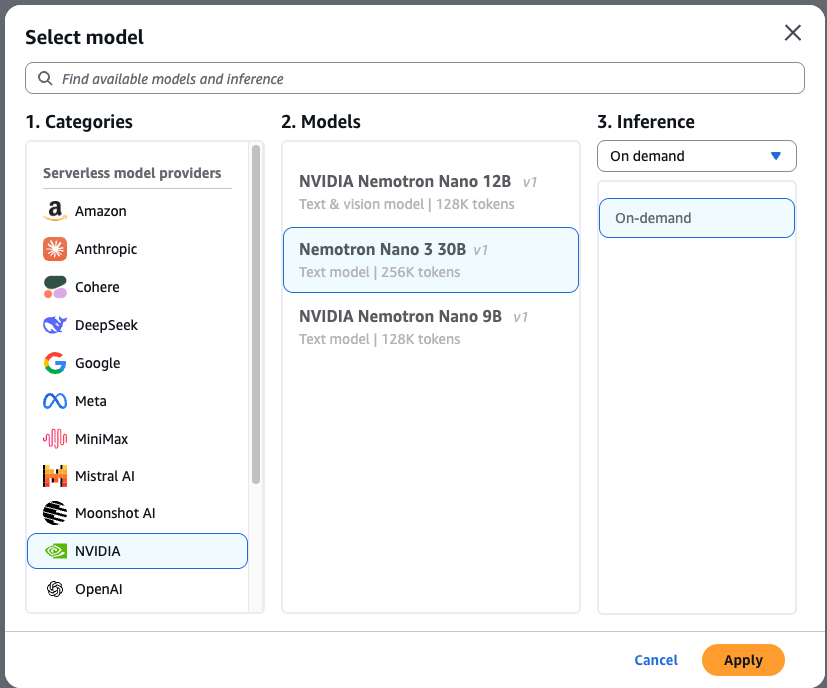

To test NVIDIA Nemotron 3 Nano on Amazon Bedrock, follow these steps:

- Navigate to the Amazon Bedrock console and choose Chat/Text playground from the menu on the left (under the Test section).

- Choose Select model in the upper-left corner of the playground.

- Choose NVIDIA from the category list, then select NVIDIA Nemotron 3 Nano.

- Choose Apply to load the model.

After making your selection, you can test the model right away. Let’s use the following prompt to generate a unit test in Python using the pytest framework:

Write a pytest unit test suite for a Python function called calculate_mortgage(principal, rate, years). Include test cases for: 1) A standard 30-year fixed mortgage 2) An edge case with 0% interest 3) Error handling for negative input values.

Complex tasks like this prompt can benefit from a chain-of-thought approach, which draws on the reasoning capabilities built natively into the model to produce accurate results.

Using the AWS CLI and SDKs

You can access the model programmatically using the model ID nvidia.nemotron-nano-3-30b. The model supports both the InvokeModel and Converse APIs through the AWS Command Line Interface (AWS CLI) and AWS SDKs. It also supports the Amazon Bedrock OpenAI SDK-compatible APIs.

You can invoke the model directly from your terminal using the AWS Command Line Interface (AWS CLI) and the InvokeModel API.
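As a minimal sketch of an InvokeModel call, the following uses the AWS SDK for Python (Boto3) rather than the AWS CLI, so that all examples in this post share one language. The model ID comes from this post. The request body schema (OpenAI-style `messages`, `max_tokens`, `temperature`) and the helper names are assumptions; confirm the exact body format in the model’s documentation before relying on it.

```python
import json

MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post


def build_invoke_request(prompt, max_tokens=512, temperature=0.2):
    """Build keyword arguments for bedrock-runtime invoke_model.

    The body layout is an assumed chat-style schema, not a confirmed one.
    """
    body = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
    return {
        "modelId": MODEL_ID,
        "contentType": "application/json",
        "accept": "application/json",
        "body": json.dumps(body),
    }


def invoke(prompt, region="us-east-1"):
    """Send the prompt with InvokeModel and return the parsed JSON response."""
    import boto3  # imported lazily so the request builder works without the SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(**build_invoke_request(prompt))
    # The response body is a stream; read it fully before parsing.
    return json.loads(response["body"].read())
```

Calling `invoke("Summarize what a fixed-rate mortgage is.")` requires AWS credentials and model access in the chosen Region. The shape of the returned JSON depends on the model, so inspect it before extracting fields.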

To invoke the model through the AWS SDK for Python (Boto3), you can prompt it with a script that uses the Converse API.
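A minimal Converse API sketch with Boto3 might look like the following. The model ID is the one given in this post; the helper names, default Region, and inference settings are illustrative assumptions. Because a reasoning model can return non-text content blocks alongside the answer, the sketch keeps only the blocks that carry a `text` field.

```python
MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post


def build_converse_request(prompt, max_tokens=1024, temperature=0.2):
    """Build keyword arguments for bedrock-runtime converse."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def extract_text(response):
    """Join the text blocks of a Converse response, skipping other block types."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def converse(prompt, region="us-east-1"):
    """Send one user turn through the Converse API and return the answer text."""
    import boto3  # imported lazily so the helpers above work without the SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    return extract_text(client.converse(**build_converse_request(prompt)))
```

The Converse API uses the same message structure across models, so a script like this usually needs only a different `modelId` to target another model.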

To invoke the model through the Amazon Bedrock OpenAI-compatible ChatCompletions endpoint, you can use the OpenAI SDK.
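The following sketch shows one way to call the model with the OpenAI Python SDK. Several details are assumptions to verify against the Amazon Bedrock documentation: the endpoint URL pattern, the use of an Amazon Bedrock API key read from the `AWS_BEARER_TOKEN_BEDROCK` environment variable, and the default Region. The helper names are hypothetical.

```python
import os

MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post


def bedrock_openai_base_url(region):
    """Assumed URL pattern for the Bedrock OpenAI-compatible endpoint."""
    return f"https://bedrock-runtime.{region}.amazonaws.com/openai/v1"


def build_chat_request(prompt):
    """Build keyword arguments for chat.completions.create."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }


def chat(prompt, region="us-west-2"):
    """Send one user message and return the assistant's reply text."""
    from openai import OpenAI  # imported lazily; requires the openai package

    client = OpenAI(
        base_url=bedrock_openai_base_url(region),
        # Assumption: an Amazon Bedrock API key is stored in this variable.
        api_key=os.environ["AWS_BEARER_TOKEN_BEDROCK"],
    )
    completion = client.chat.completions.create(**build_chat_request(prompt))
    return completion.choices[0].message.content
```

Keeping the base URL in one function makes it straightforward to correct if your account uses a different endpoint.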

Using NVIDIA Nemotron 3 Nano with Amazon Bedrock features

You can enhance your generative AI applications by combining Nemotron 3 Nano with Amazon Bedrock managed tools. Use Amazon Bedrock Guardrails to implement safeguards and Amazon Bedrock Knowledge Bases to create robust Retrieval Augmented Generation (RAG) workflows.

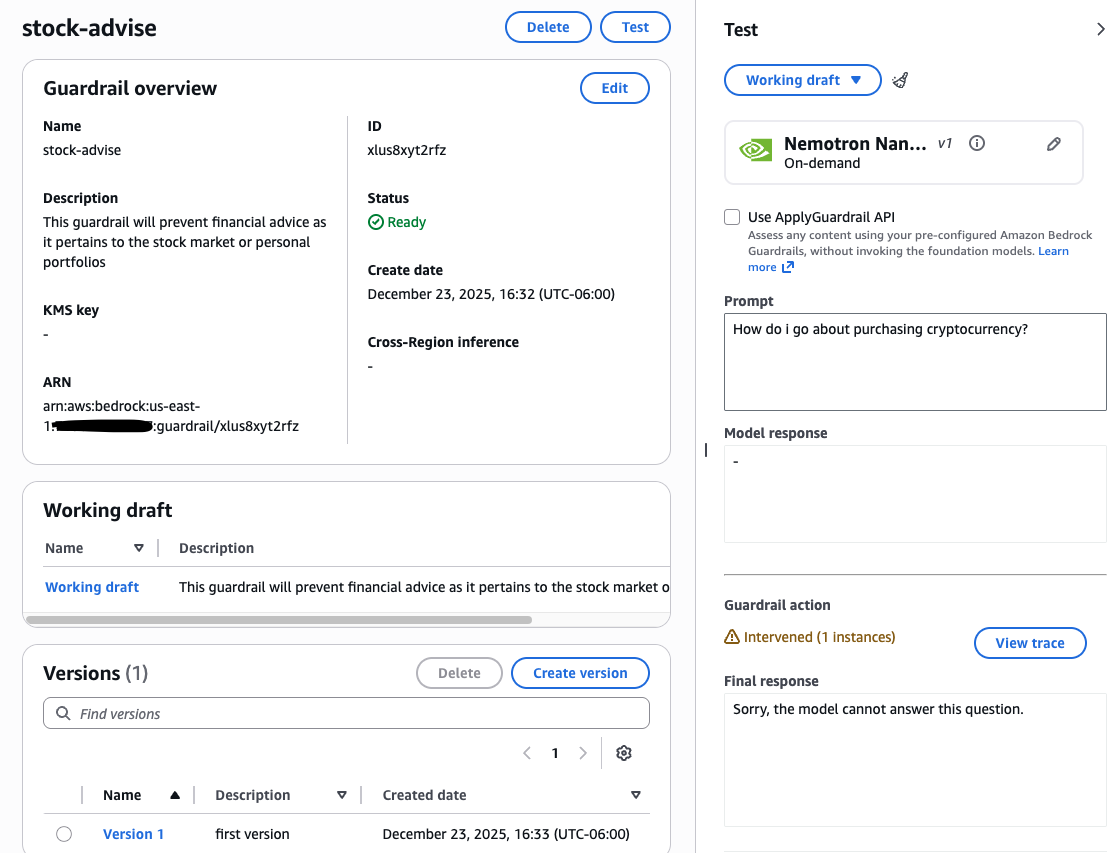

Amazon Bedrock Guardrails

Guardrails is a managed safety layer that helps enforce responsible AI by filtering harmful content, redacting sensitive information (PII), and blocking specific topics across prompts and responses. It works across multiple models to help detect prompt injection attacks and hallucinations.

Example use case: If you’re building a mortgage assistant, you might not want it to offer general investment advice. By configuring a filter for the word “stocks”, user prompts containing that term are blocked immediately and the user receives a custom message.

To set up a guardrail, follow these steps:

- In the Amazon Bedrock console, navigate to the Build section on the left and choose Guardrails.

- Create a new guardrail and configure the filters needed for your use case.

After configuration, test your guardrail with a variety of prompts to verify its performance. You can then fine-tune settings such as denied topics, word filters, and PII redaction to fit your specific safety requirements. For more information, see Create your guardrail.
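The same guardrail can also be created programmatically. The following is a minimal Boto3 sketch of the “stocks” word filter from the example use case. The guardrail name, description, blocked messages, and helper names are hypothetical placeholders.

```python
def build_guardrail_request(name="mortgage-assistant-guardrail"):
    """Build keyword arguments for the bedrock create_guardrail call."""
    blocked_message = "Sorry, I can only help with mortgage questions."
    return {
        "name": name,  # hypothetical guardrail name
        "description": "Blocks general investment topics for a mortgage assistant.",
        # Word filter: block prompts and responses that contain this term.
        "wordPolicyConfig": {"wordsConfig": [{"text": "stocks"}]},
        "blockedInputMessaging": blocked_message,
        "blockedOutputsMessaging": blocked_message,
    }


def create_guardrail(region="us-east-1"):
    """Create the guardrail and return its ID and version."""
    import boto3  # imported lazily so the builders work without the SDK

    bedrock = boto3.client("bedrock", region_name=region)
    response = bedrock.create_guardrail(**build_guardrail_request())
    return response["guardrailId"], response["version"]


def guardrail_config(guardrail_id, version="DRAFT"):
    """Build the guardrailConfig argument accepted by the Converse API."""
    return {"guardrailIdentifier": guardrail_id, "guardrailVersion": version}
```

Passing `guardrailConfig=guardrail_config(guardrail_id)` to a Converse call applies the guardrail to both the prompt and the model response.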

Amazon Bedrock Knowledge Bases

Amazon Bedrock Knowledge Bases automates the complete RAG workflow. It handles ingesting content from your data sources, chunking it into searchable segments, converting the segments into vector embeddings, and storing them in a vector database. Then, when a user submits a query, the system matches the input against the stored vectors to find semantically similar content. This content is used to augment the prompt sent to the foundation model.
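The chunk, embed, match, and augment steps can be sketched in a few lines of plain Python. This is a toy illustration of the workflow, not how Knowledge Bases is implemented: word counts stand in for a real embedding model, an in-memory list stands in for the vector database, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text, max_words=12):
    """Split text into segments of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy embedding: lowercase word counts (a stand-in for an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, top_k=1):
    """Return the top_k chunks most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)
    return ranked[:top_k]


def augment(query, passages):
    """Build a prompt that places the retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Use only the context below to answer.\n\nContext:\n{context}\n\nQuestion: {query}"
```

In the managed service, each of these steps is handled for you; the sketch only shows where retrieval fits between the user’s query and the prompt the model finally sees.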

In this example, we uploaded PDFs (for example, Buy a New House, Mortgage Toolkit, and Buy a Mortgage) to Amazon Simple Storage Service (Amazon S3) and selected Amazon OpenSearch Serverless as the vector store. You can use the RetrieveAndGenerate API to query this knowledge base while automatically enforcing safety compliance through a specific guardrail ID.
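A minimal Boto3 sketch of such a RetrieveAndGenerate request follows. The knowledge base ID and guardrail ID are values you supply; the prompt template wording, the foundation-model ARN format built from the model ID, and the helper names are assumptions for illustration.

```python
MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post

# Hypothetical custom prompt template; $search_results$ is replaced with the
# retrieved passages at request time.
PROMPT_TEMPLATE = (
    "You are a mortgage assistant. Answer using only the search results below. "
    "If they do not contain the answer, say so.\n\n$search_results$"
)


def build_rag_request(query, kb_id, guardrail_id, guardrail_version="DRAFT",
                      region="us-east-1"):
    """Build keyword arguments for bedrock-agent-runtime retrieve_and_generate."""
    # Assumption: the model is addressed by a foundation-model ARN in this Region.
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{MODEL_ID}"
    return {
        "input": {"text": query},
        "retrieveAndGenerateConfiguration": {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,
                "modelArn": model_arn,
                "generationConfiguration": {
                    "promptTemplate": {"textPromptTemplate": PROMPT_TEMPLATE},
                    "guardrailConfiguration": {
                        "guardrailId": guardrail_id,
                        "guardrailVersion": guardrail_version,
                    },
                },
            },
        },
    }


def ask(query, kb_id, guardrail_id, region="us-east-1"):
    """Query the knowledge base and return the generated answer text."""
    import boto3  # imported lazily so the request builder works without the SDK

    client = boto3.client("bedrock-agent-runtime", region_name=region)
    request = build_rag_request(query, kb_id, guardrail_id, region=region)
    return client.retrieve_and_generate(**request)["output"]["text"]
```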

The request directs the NVIDIA Nemotron 3 Nano model to use a custom prompt template to synthesize the retrieved documents into clear, grounded answers. To set up your own pipeline, review the full walkthrough in the Amazon Bedrock User Guide.

Conclusion

In this post, we showed you how to get started with NVIDIA Nemotron 3 Nano on Amazon Bedrock for fully managed serverless inference. We also showed you how to use the model with Amazon Bedrock Knowledge Bases and Amazon Bedrock Guardrails. The model is currently available in the following AWS Regions: US East (N. Virginia), US East (Ohio), US West (Oregon), Asia Pacific (Tokyo), Asia Pacific (Mumbai), South America (São Paulo), Europe (London), and Europe (Milan). Check the full Region list for future updates. To learn more, see NVIDIA Nemotron, and try NVIDIA Nemotron 3 Nano in the Amazon Bedrock console today.

About the authors