Liquid AI launched LFM2-2.6B-Exp, an experimental checkpoint for the LFM2-2.6B language mannequin skilled with pure reinforcement studying on the present LFM2 stack. The objective is easy: to enhance information duties, and arithmetic, guided by a small 3B class mannequin that continues to focus on machine and edge deployments.

The place LFM2-2.6B-Exp suits into the LFM2 household?

LFM2 is the second era of liquid basis fashions. Designed for environment friendly deployment on telephones, laptops, and different edge gadgets. Liquid AI describes LFM2 as a hybrid mannequin that mixes short-range LIV convolution blocks and grouped question consideration blocks, managed by multiplication gates.

This household contains 4 high-density sizes: LFM2-350M, LFM2-700M, LFM2-1.2B, and LFM2-2.6B. All share a context size of 32,768 tokens, a vocabulary measurement of 65,536, and bfloat16 precision. The two.6B mannequin makes use of 30 layers, together with 22 convolutional layers and eight consideration layers. Every measurement is skilled with a token price range of 10 trillion.

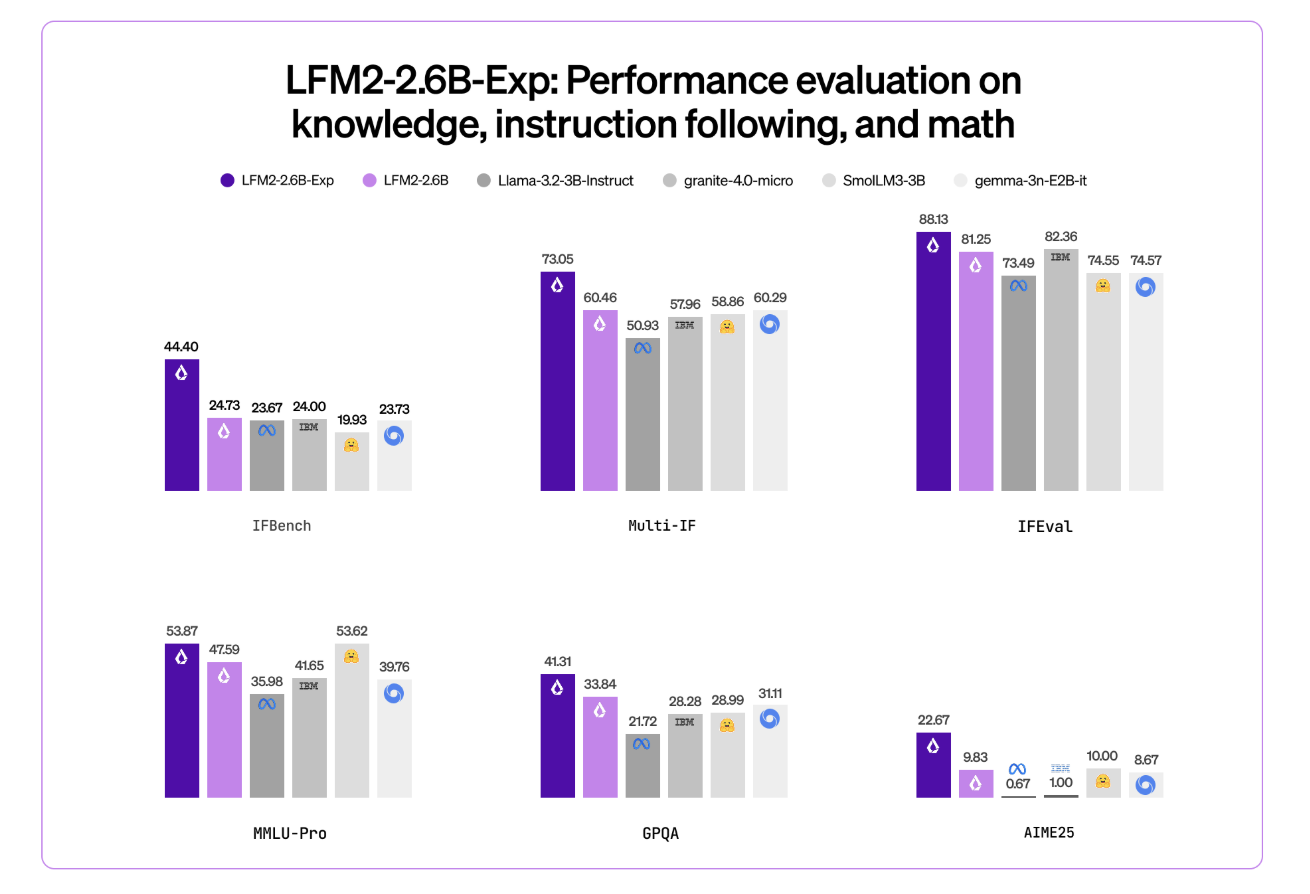

LFM2-2.6B is already positioned as a excessive effectivity mannequin. It reaches 82.41 p.c for GSM8K and 79.56 p.c for IFEval. This places it forward of a number of 3B class fashions similar to Llama 3.2 3B Instruct, Gemma 3 4B it, and SmolLM3 3B on these benchmarks.

LFM2-2.6B-Exp maintains this structure. Reuse the identical tokenization, context home windows, and {hardware} profiles. Checkpoints are solely centered on altering habits via a reinforcement studying part.

Pure RL on prime of a pre-trained and tuned base

This checkpoint is constructed on prime of LFM2-2.6B utilizing pure reinforcement studying. They’re particularly skilled in following directions, information, and arithmetic.

The underlying LFM2 coaching stack combines a number of phases. This contains very large-scale supervised fine-tuning combining downstream duties and customary domains, customized direct-first optimization with size normalization, iterative mannequin merging, and reinforcement studying with verifiable rewards.

However does it precisely imply “pure reinforcement studying”? LFM2-2.6B-Exp begins from the present LFM2-2.6B checkpoint and goes via a steady RL coaching schedule. It begins with following directions and extends RL coaching to knowledge-oriented prompts, arithmetic, and the usage of a small quantity of instruments with none extra SFT warm-up or distillation steps on the finish.

Importantly, LFM2-2.6B-Exp doesn’t change the essential structure or pre-training. Primarily based on a mannequin that has already been monitored and preferences adjusted, we modify the coverage via an RL stage utilizing verifiable rewards on a set of domains of curiosity.

Benchmark indicators, particularly IFBench

The Liquid AI crew highlights IFBench as a key metric. IFBench is an instruction-following benchmark that checks how reliably a mannequin follows complicated and constrained directions. On this benchmark, LFM2-2.6B-Exp outperforms DeepSeek R1-0528, which is reported to be 263 instances bigger in variety of parameters.

LFM2 fashions present sturdy efficiency throughout normal benchmark units similar to MMLU, GPQA, IFEval, GSM8K, and associated suites. The two.6B base mannequin is already fairly aggressive within the 3B section. RL checkpoints then push instruction following and computation additional whereas staying throughout the identical 3B parameter price range.

Key structure and options

This structure makes use of 10 double gate brief vary LIV convolution blocks and 6 grouped question consideration blocks organized in a hybrid stack. This design reduces the price of KV caching and retains inference quick on client GPUs and NPUs.

The pre-training combination makes use of roughly 75% English, 20% multilingual information, and 5% code. Supported languages embrace English, Arabic, Chinese language, French, German, Japanese, Korean, and Spanish.

The LFM2 mannequin exposes utilization tokens for templates and native instruments like ChatML. Instruments are written as JSON between devoted device record markers. The mannequin then points Python-like calls between device name markers and reads device responses between device response markers. This construction makes this mannequin appropriate as an agent core for instruments that decision the stack with out customized immediate engineering.

LFM2-2.6B, and by extension LFM2-2.6B-Exp, can also be the one mannequin within the household that permits dynamic hybrid inference via particular thought tokens on complicated or multilingual inputs. RL checkpoints don’t change tokenization or structure, so the performance remains to be accessible.

Vital factors

- LFM2-2.6B-Exp is an experimental checkpoint for LFM2-2.6B that provides a pure reinforcement studying stage on prime of a pre-trained, supervised, most popular base, focusing on instruction following, information duties, and arithmetic.

- The LFM2-2.6B spine makes use of a hybrid structure that mixes double-gated short-range LIV convolution blocks and grouped question consideration blocks, with 30 layers, 22 convolution layers and eight consideration layers, 32,768 token context size, and a ten trillion token coaching price range with 2.6B parameters.

- The LFM2-2.6B is already within the 3B class, reaching sturdy benchmark scores of round 82.41 p.c in GSM8K and 79.56 p.c in IFEval. The LFM2-2.6B-Exp RL checkpoint additionally additional improves instruction monitoring and computational efficiency with out altering the structure or reminiscence profile.

- Liquid AI experiences that LFM2-2.6B-Exp outperforms DeepSeek R1-0528 in IFBench, an instruction that follows the benchmark. Though DeepSeek R1-0528 has extra parameters within the latter, it exhibits higher efficiency for every parameter within the constrained deployment settings.

- LFM2-2.6B-Exp is launched with Hugging Face with open weights below the LFM Open License v1.0 and is supported via Transformers, vLLM, llama.cpp GGUF quantization, and ONNXRuntime, making it appropriate for agent techniques, structured information extraction, search extension era, and machine assistants that require compact 3B fashions.

Please test Click here for the model. Please be happy to comply with us too Twitter Remember to hitch us 100,000+ ML subreddits and subscribe our newsletter. grasp on! Are you on telegram? You can now also participate by telegram.

Max is an AI analyst at MarkTechPost primarily based in Silicon Valley, the place he actively shapes the way forward for know-how. We train robotics at Brainvyne, struggle spam at ComplyEmail, and use AI daily to translate complicated technological advances into clear, easy-to-understand insights.