Why do current audio AI models often perform worse when they generate longer reasoning instead of grounding their decisions in the actual sound? The StepFun research team has released Step-Audio-R1, a new audio LLM designed for test-time compute scaling. It addresses this failure mode by showing that the accuracy drop caused by chain of thought is not a limitation of audio itself. Instead, it is a problem of training and modality grounding.

The core problem, audio models reason over text surrogates

Most current audio models inherit their reasoning behavior from text training. They learn to reason as if they were reading a transcript, not as if they were listening. The StepFun team calls this textual surrogate reasoning. The model relies on imagined words and descriptions instead of acoustic cues such as pitch contours, rhythm, timbre, and background noise patterns.

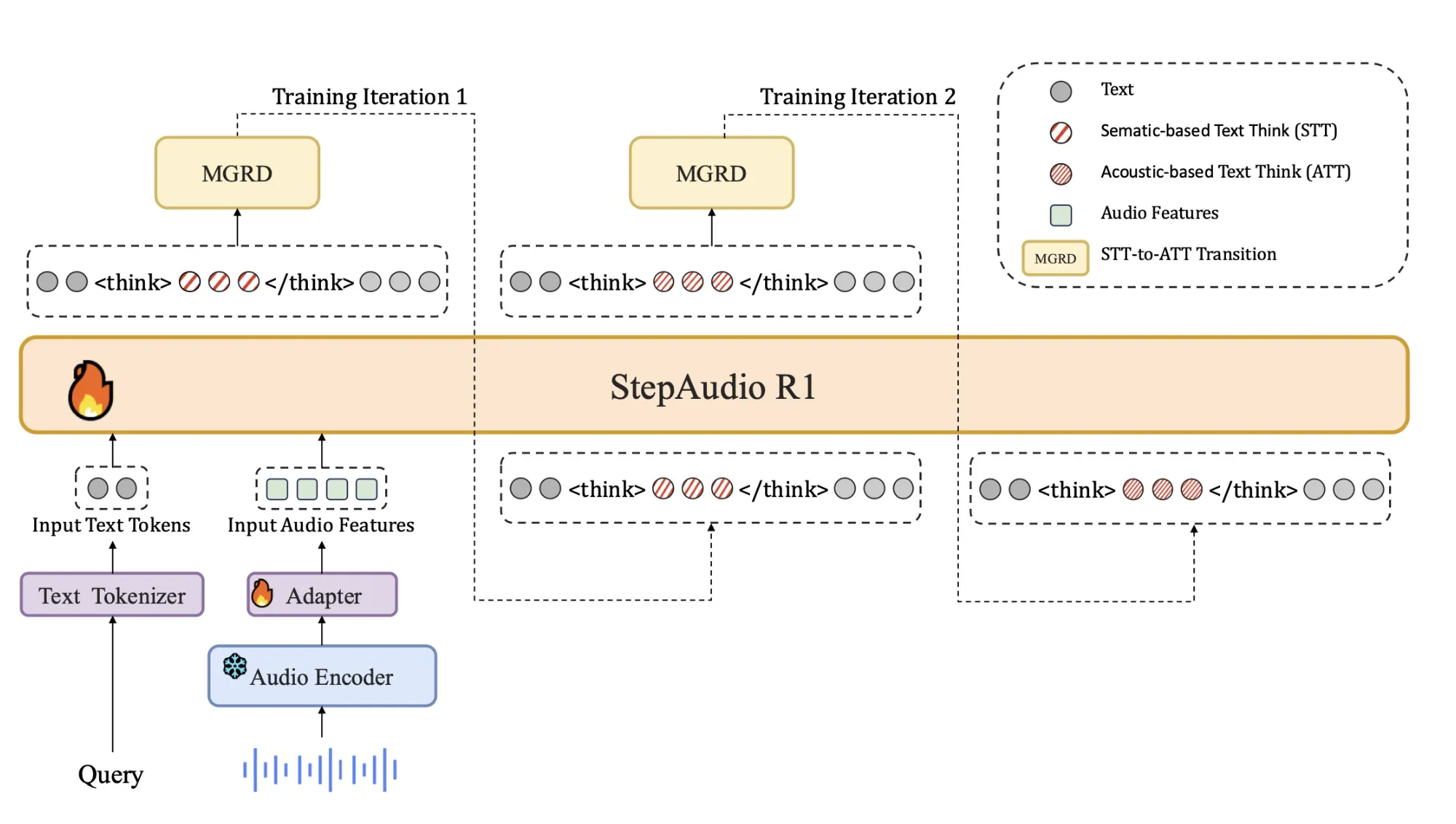

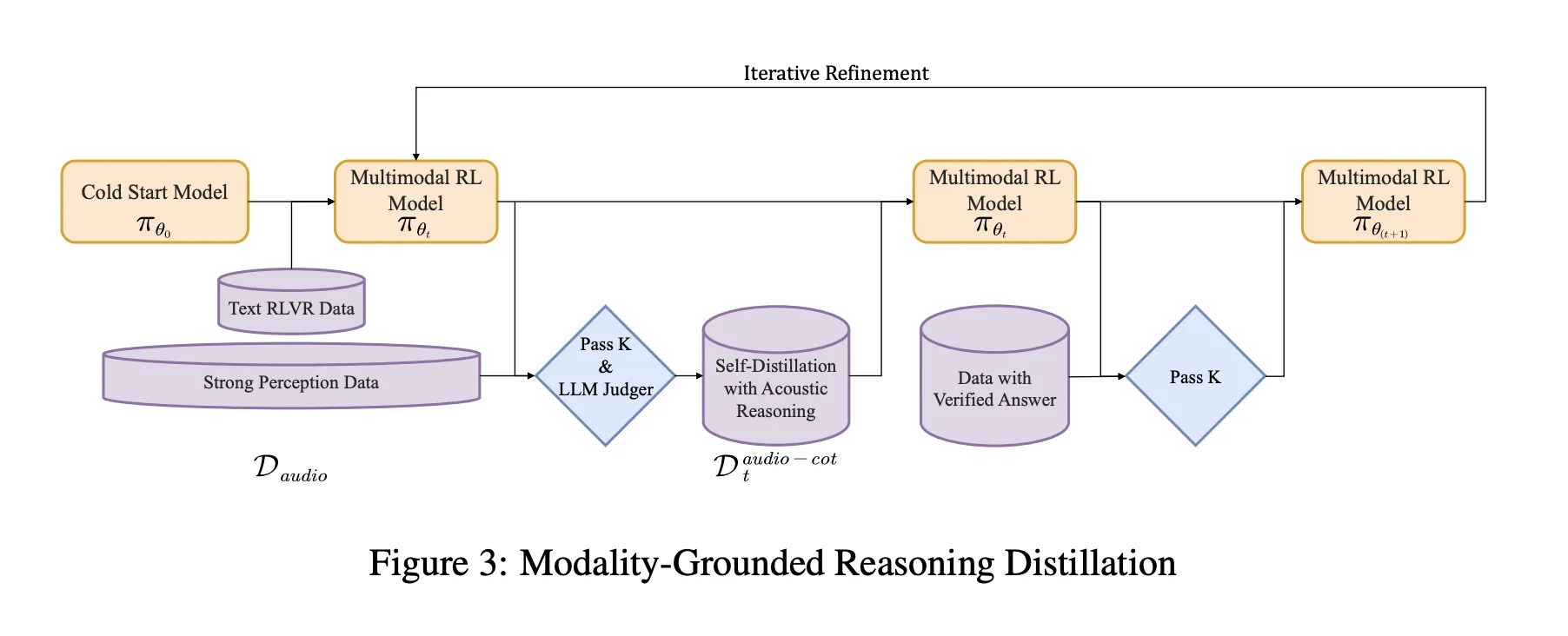

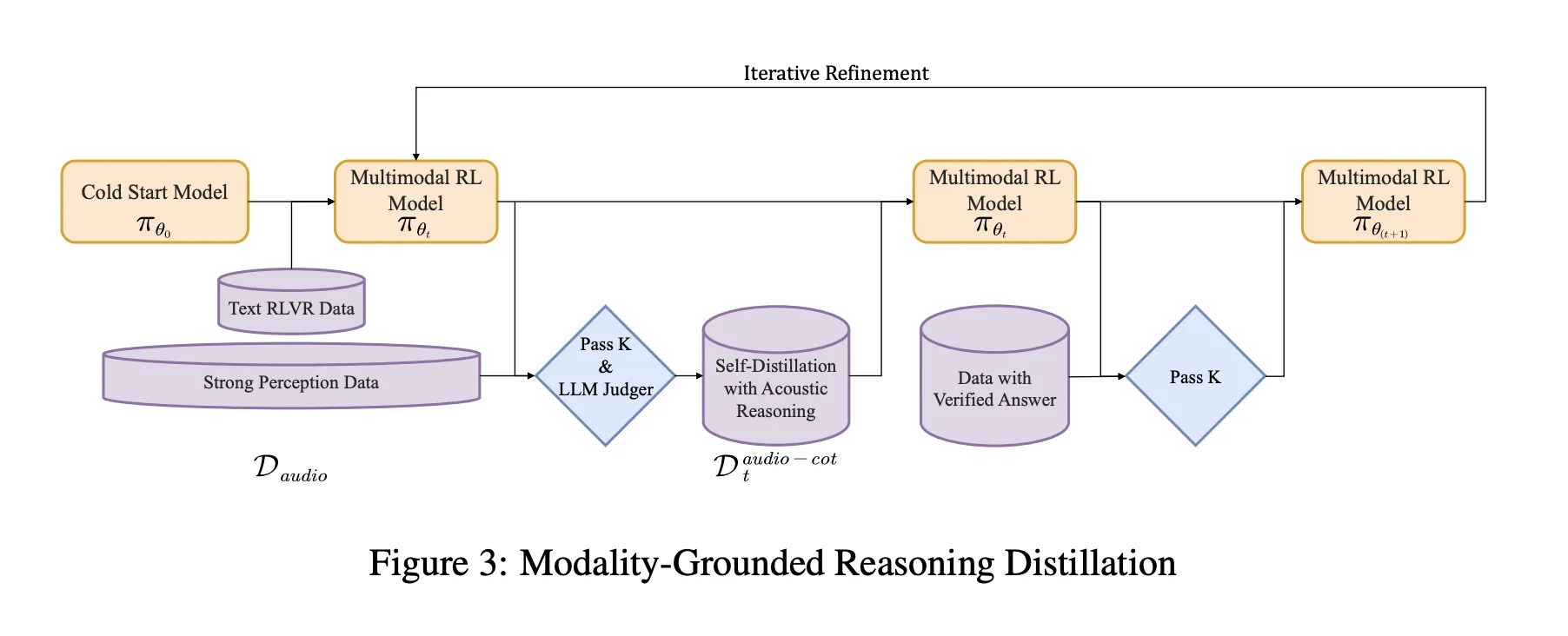

This mismatch explains why audio performance often degrades with longer chains of thought. The model spends more tokens elaborating on wrong or modality-irrelevant assumptions. Step-Audio-R1 attacks the problem by forcing the model to justify its answers with acoustic evidence. The training pipeline is organized around Modality-Grounded Reasoning Distillation (MGRD), which selects and distills reasoning traces that explicitly reference audio features.

Architecture

The architecture stays close to earlier Step-Audio systems:

- A Qwen2-based audio encoder processes raw waveforms at 25 Hz.

- An audio adaptor downsamples the encoder output by a factor of 2, to 12.5 Hz, and aligns the frames with the language token stream.

- A Qwen2.5 32B decoder consumes the audio features and generates text.
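These frame rates imply a simple token budget. Each second of audio becomes 25 encoder frames, and the adaptor then reduces that to 12.5 frames. The sketch below assumes the adaptor halves the rate by concatenating each pair of adjacent frames. The article only gives the factor of 2, so the stacking method and the helper names (`frames_for`, `stack_pairs`) are hypothetical.

```python
ENCODER_HZ = 25.0  # encoder frame rate from the article
DOWNSAMPLE = 2     # adaptor downsampling factor from the article

def frames_for(duration_s: float, rate_hz: float) -> int:
    """Whole frames produced for a clip of duration_s seconds at rate_hz."""
    return int(duration_s * rate_hz)

def stack_pairs(frames: list[list[float]], factor: int = DOWNSAMPLE) -> list[list[float]]:
    """Hypothetical adaptor step: concatenate each run of `factor` consecutive
    frames into one wider frame, dropping an incomplete tail group."""
    usable = len(frames) - len(frames) % factor
    return [sum(frames[i:i + factor], []) for i in range(0, usable, factor)]

# A 30-second clip with toy 1-dimensional features per encoder frame.
encoder_frames = [[float(t)] for t in range(frames_for(30, ENCODER_HZ))]
adaptor_frames = stack_pairs(encoder_frames)
print(len(encoder_frames), len(adaptor_frames))  # 750 375
print(adaptor_frames[0])                          # [0.0, 1.0]
```

Frame stacking is only one way to downsample. Strided convolution or pooling would produce the same 12.5 Hz output rate while mixing neighboring frames differently.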

The decoder always generates an explicit reasoning block inside `<think>` and `</think>` tags, followed by the final answer. This separation lets training objectives shape the structure and content of the reasoning without losing focus on task accuracy. The model is released on Hugging Face under Apache 2.0 as a 33B-parameter audio-text-to-text model.
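Because tags separate the reasoning from the answer, downstream code can split them with a small parser. This is a minimal sketch. It assumes the raw decoder output contains at most one `<think>...</think>` block followed by the answer text. `split_reasoning` is a hypothetical helper, not part of any released Step-Audio API.

```python
import re

# Captures the reasoning inside <think>...</think> and everything after it as the answer.
# re.DOTALL lets the reasoning span multiple lines.
THINK_RE = re.compile(r"<think>(.*?)</think>(.*)", re.DOTALL)

def split_reasoning(output: str) -> tuple[str, str]:
    """Return (reasoning, answer); reasoning is empty if no block is found."""
    match = THINK_RE.search(output)
    if match is None:
        return "", output.strip()
    return match.group(1).strip(), match.group(2).strip()

reasoning, answer = split_reasoning(
    "<think>\nPitch rises at the end; tempo is fast.\n</think>\nThe speaker sounds excited."
)
print(reasoning)  # Pitch rises at the end; tempo is fast.
print(answer)     # The speaker sounds excited.
```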

Training pipeline, from cold start to audio-grounded RL

The pipeline has a supervised cold-start stage and a reinforcement learning stage. Both stages mix text and audio tasks.

The cold start uses about 5 million samples, covering 1 billion tokens of text-only data and 4 billion tokens of audio-paired data. Audio tasks include automatic speech recognition, paralinguistic understanding, and dialogs with speech questions and text answers. Part of the audio data consists of audio chain-of-thought traces generated by earlier models. Text data covers multi-turn dialogue, knowledge question answering, and math and code reasoning. All samples share one format in which the reasoning is wrapped in `<think>` tags, even when the reasoning block is initially empty.
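Here is a minimal sketch of that shared format. It assumes a simple prompt/target record, and the newline placement was chosen for illustration. The field names and `format_cold_start_sample` are hypothetical. What the sketch shows is that the reasoning block is always emitted, and is simply left empty when a sample has no reasoning.

```python
from typing import Optional

def format_cold_start_sample(question: str, answer: str,
                             reasoning: Optional[str] = None) -> dict:
    """Hypothetical SFT record: the <think> block is always present,
    and is left empty when the sample carries no reasoning."""
    body = reasoning.strip() if reasoning else ""
    return {"prompt": question, "target": f"<think>\n{body}\n</think>\n{answer}"}

asr = format_cold_start_sample("Transcribe the clip.", "hello world")
math_qa = format_cold_start_sample("What is 7 * 6?", "42", reasoning="7 * 6 = 42.")
print(repr(asr["target"]))      # '<think>\n\n</think>\nhello world'
print(repr(math_qa["target"]))  # '<think>\n7 * 6 = 42.\n</think>\n42'
```

By the token counts above, text-only data makes up 1 / (1 + 4) = 20% of the roughly 5 billion cold-start tokens.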

Supervised fine-tuning trains Step-Audio-R1 to follow this format and to produce useful reasoning for both audio and text. This gives the model basic chain-of-thought behavior, but its reasoning is still biased toward text.

Modality-Grounded Reasoning Distillation (MGRD)

MGRD runs over several iterations. In each round, the researchers sample audio questions whose labels depend on real acoustic properties, such as the speaker's emotion, background events in a sound scene, or musical structure. The current model generates several reasoning traces and candidate answers for each question. A filter keeps only the chains that satisfy three constraints:

- They reference acoustic cues, not just textual descriptions or imagined transcripts.

- They are logically coherent as short step-by-step explanations.

- Their final answer is correct according to the label or a programmatic check.
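The three criteria can be sketched as a predicate over sampled candidates. Note the assumptions here. The article does not say how MGRD checks acoustic grounding or coherence. The keyword list, the step-count bound, and the exact-match correctness test below are therefore hypothetical stand-ins that only illustrate the shape of the filter.

```python
from dataclasses import dataclass

# Hypothetical cue vocabulary: a crude proxy for "references acoustic evidence".
ACOUSTIC_CUES = ("pitch", "timbre", "rhythm", "tempo", "loudness", "intonation", "background noise")

@dataclass
class Candidate:
    reasoning: str  # text inside the reasoning block
    answer: str     # final answer after the block

def cites_acoustics(reasoning: str) -> bool:
    """Criterion 1 (stand-in): mentions at least one acoustic cue."""
    text = reasoning.lower()
    return any(cue in text for cue in ACOUSTIC_CUES)

def is_short_stepwise(reasoning: str, max_steps: int = 8) -> bool:
    """Criterion 2 (stand-in): between 2 and max_steps non-empty lines."""
    steps = [line for line in reasoning.splitlines() if line.strip()]
    return 2 <= len(steps) <= max_steps

def is_correct(answer: str, label: str) -> bool:
    """Criterion 3 (stand-in): case-insensitive exact match with the label."""
    return answer.strip().lower() == label.strip().lower()

def mgrd_filter(candidates: list[Candidate], label: str) -> list[Candidate]:
    """Keep only candidates that pass all three checks."""
    return [
        c for c in candidates
        if cites_acoustics(c.reasoning)
        and is_short_stepwise(c.reasoning)
        and is_correct(c.answer, label)
    ]

grounded = Candidate("Pitch rises sharply at the end.\nTempo is fast and loudness is high.", "excited")
surrogate = Candidate("The transcript says 'great news'.\nSo the speaker must be happy.", "excited")
wrong = Candidate("Pitch is low.\nRhythm is slow.", "sad")
print(mgrd_filter([grounded, surrogate, wrong], label="excited") == [grounded])  # True
```

The transcript-based trace is rejected even though its answer is correct. That is the behavior MGRD targets: right answers reached through textual surrogate reasoning do not enter the distilled dataset.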

The accepted traces form a distilled audio chain-of-thought dataset. The model is fine-tuned on this dataset together with the original text reasoning data. Reinforcement Learning with Verified Rewards (RLVR) follows. For text questions, the reward is based on answer correctness. For audio questions, the reward mixes answer correctness and reasoning format, with typical weights of 0.8 for accuracy and 0.2 for reasoning. Training uses PPO with about 16 sampled responses per prompt and supports sequences of up to about 10,240 tokens to allow long deliberation.
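The reward split can be written as a small function. This is a minimal sketch with two assumptions: the reasoning-format term is a binary check for a non-empty `<think>` block, and correctness arrives as a boolean from a verifier. The 0.8/0.2 weights come from the article. `rlvr_reward` and `has_reasoning` are hypothetical names.

```python
import re

THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)

def has_reasoning(output: str) -> bool:
    """Hypothetical format check: a non-empty <think>...</think> block exists."""
    match = THINK_BLOCK.search(output)
    return match is not None and match.group(1).strip() != ""

def rlvr_reward(output: str, is_correct: bool, is_audio: bool,
                w_acc: float = 0.8, w_fmt: float = 0.2) -> float:
    """Text prompts: correctness only. Audio prompts: weighted correctness + format."""
    accuracy = 1.0 if is_correct else 0.0
    if not is_audio:
        return accuracy
    fmt = 1.0 if has_reasoning(output) else 0.0
    return w_acc * accuracy + w_fmt * fmt

print(rlvr_reward("<think>Pitch rises.</think>excited", is_correct=True, is_audio=True))  # 1.0
print(rlvr_reward("<think></think>excited", is_correct=True, is_audio=True))              # 0.8
```

Under this scheme, a correct audio answer with an empty reasoning block scores 0.8 rather than 1.0. The policy therefore has a direct incentive not to collapse its chain of thought.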

Benchmarks, narrowing the gap with Gemini 3 Pro

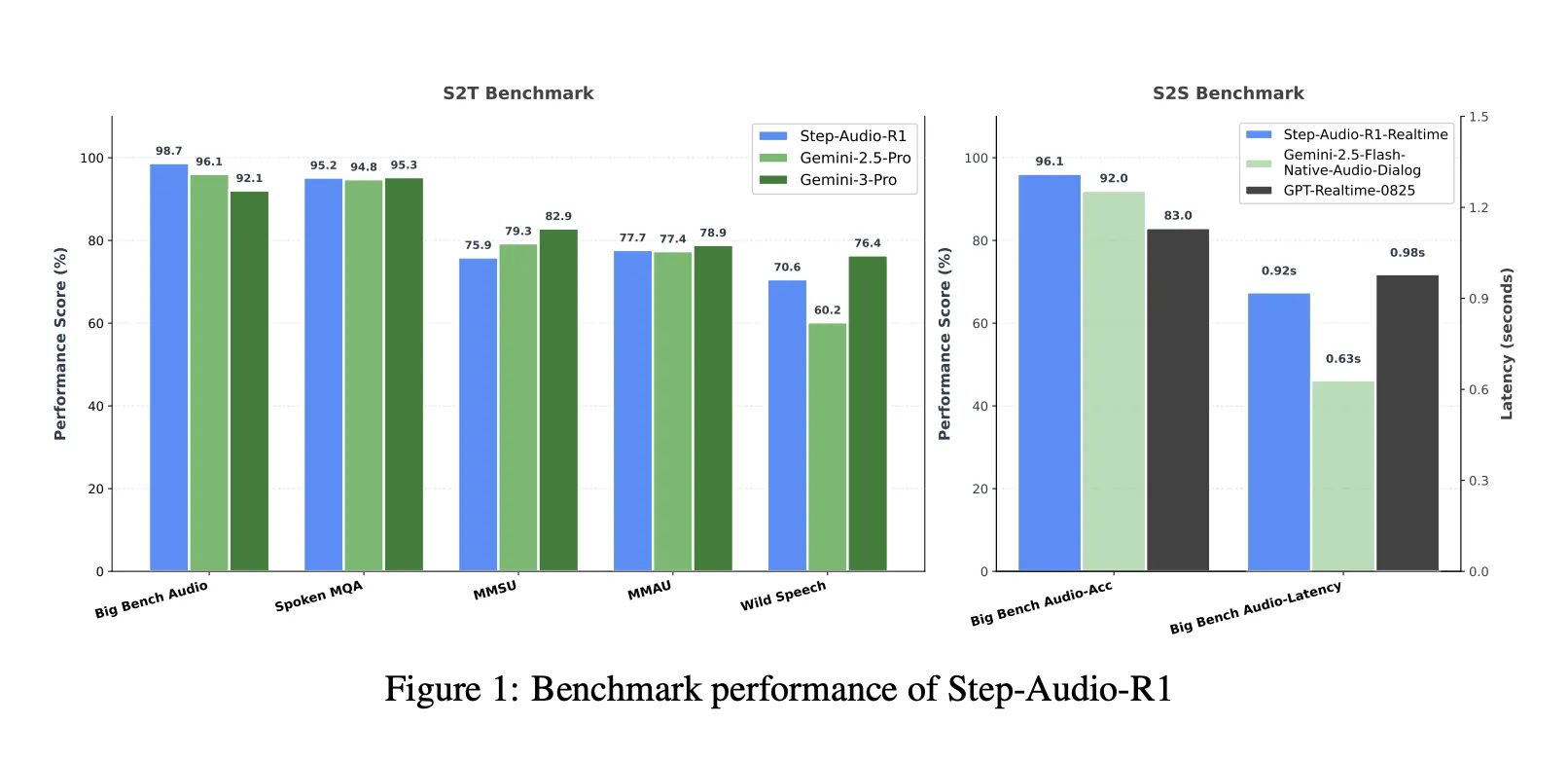

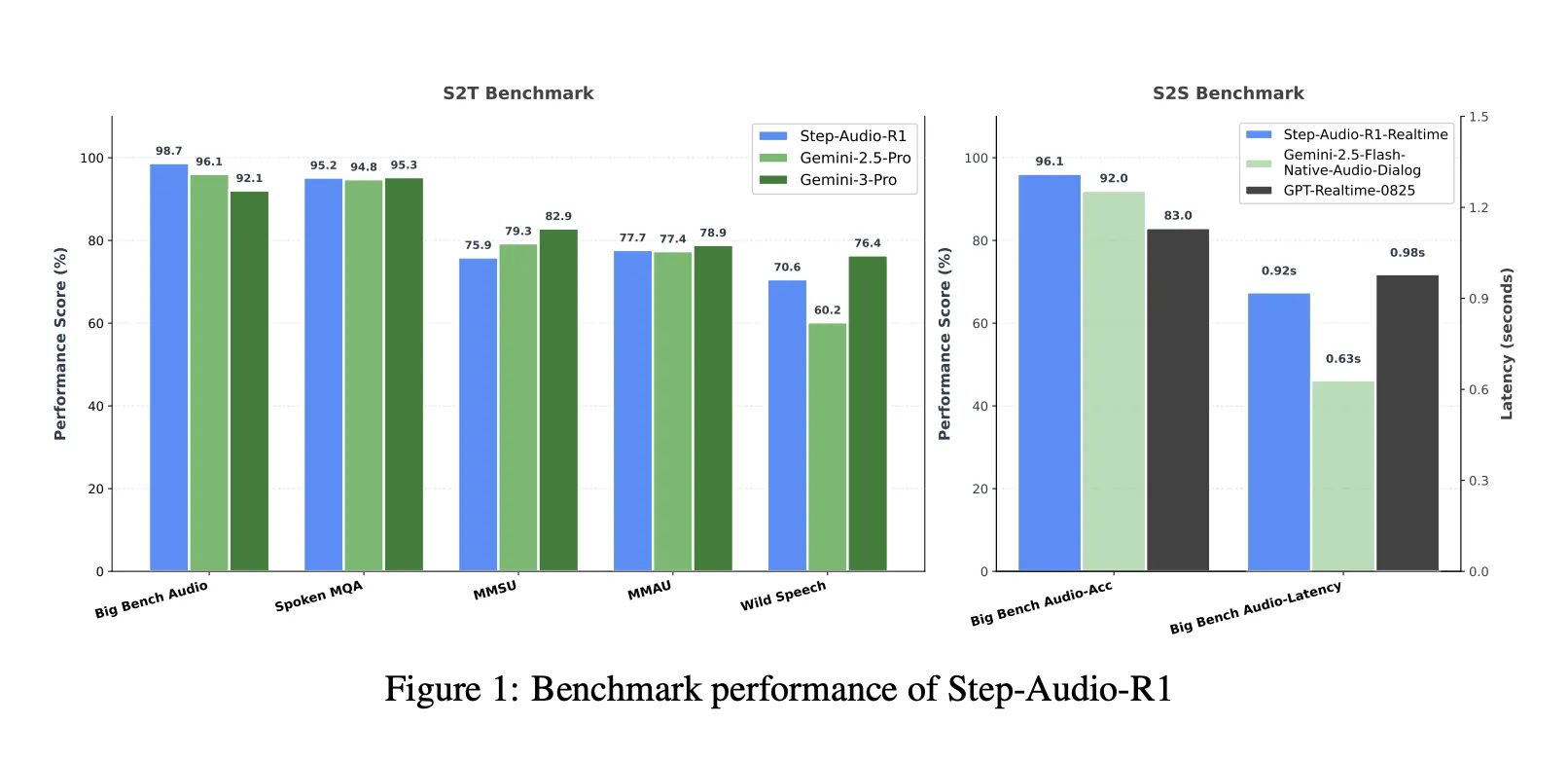

Across a combined speech-to-text benchmark suite that includes Big Bench Audio, Spoken MQA, MMSU, MMAU, and Wild Speech, Step-Audio-R1 reaches an average score of about 83.6%. Gemini 2.5 Pro reaches about 81.5% and Gemini 3 Pro about 85.1%. On Big Bench Audio alone, Step-Audio-R1 reaches about 98.7%, higher than both Gemini versions.
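Working through the reported suite averages gives the size of the gap in percentage points:

$$
\begin{aligned}
83.6 - 81.5 &= 2.1 \text{ points ahead of Gemini 2.5 Pro} \\
85.1 - 83.6 &= 1.5 \text{ points behind Gemini 3 Pro}
\end{aligned}
$$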

For speech-to-speech reasoning, the Step-Audio-R1 Realtime variant uses two streaming modes, listen-while-thinking and think-while-speaking. On the speech-to-speech version of Big Bench Audio, it reaches about 96.1% reasoning accuracy with a first-packet latency of about 0.92 seconds. This result surpasses GPT-based realtime baselines and Gemini 2.5 Flash-style native audio dialogs while keeping interactions under one second.
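First-packet latency is the time from sending a request until the first chunk of output audio arrives. The sketch below shows one way to measure it against any streaming generator. `fake_speech_stream` is a hypothetical stand-in that sleeps to simulate the model listening and thinking. The article does not describe StepFun's own measurement setup.

```python
import time
from typing import Iterator, Tuple

def first_packet_latency(stream: Iterator[bytes]) -> Tuple[float, bytes]:
    """Seconds from starting to consume a stream until its first chunk arrives."""
    start = time.perf_counter()
    first_chunk = next(stream)  # blocks until the first packet is produced
    return time.perf_counter() - start, first_chunk

def fake_speech_stream(delay_s: float, chunks: int = 3) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming speech-to-speech endpoint."""
    time.sleep(delay_s)  # simulated listening/thinking before the first packet
    for i in range(chunks):
        yield f"audio-chunk-{i}".encode()

latency, chunk = first_packet_latency(fake_speech_stream(0.05))
print(f"first packet after {latency:.3f}s: {chunk!r}")
```

A generator body does not run until `next()` is called, so the simulated delay falls inside the timed window. That matches a client that starts its clock when it starts waiting for audio.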

Ablations, what matters for audio reasoning

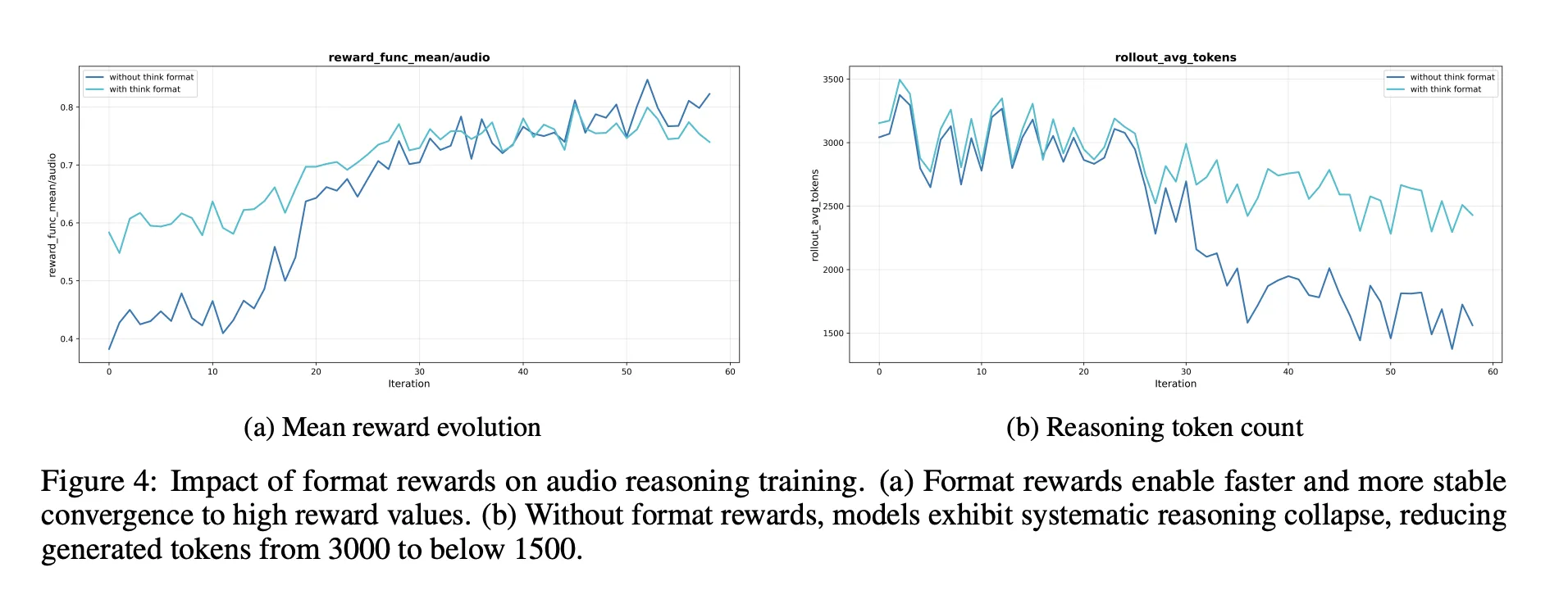

The ablation section offers engineers several design signals:

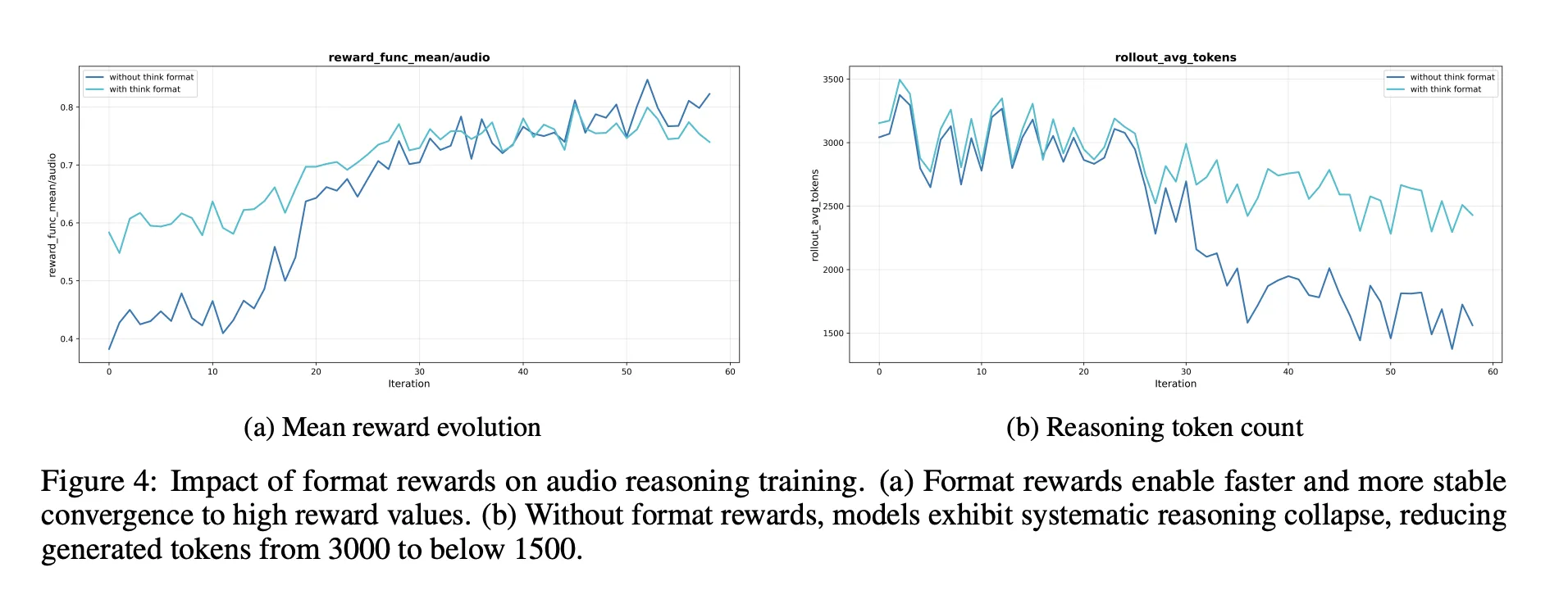

- A reasoning-format reward is essential. Without it, reinforcement learning tends to shorten or remove chains of thought, which lowers audio benchmark scores.

- RL data should target medium-difficulty problems. Selecting questions whose pass@8 falls in a middle band gives more stable rewards and sustains longer reasoning.

- Scaling RL audio data without this kind of selection does not help. The quality of prompts and labels matters more than raw size.
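The difficulty filter can be sketched as follows. The sketch reads pass@8 as the fraction of 8 sampled answers that pass verification, which is an assumption. The band limits of 0.25 and 0.75 are also hypothetical; the article only says the band is in the middle.

```python
def pass_rate(outcomes: list[bool]) -> float:
    """Fraction of sampled answers (e.g. 8 per prompt) that passed verification."""
    return sum(outcomes) / len(outcomes)

def select_medium_difficulty(results: dict[str, list[bool]],
                             low: float = 0.25, high: float = 0.75) -> list[str]:
    """Keep prompt IDs whose pass rate lies in [low, high]."""
    return [pid for pid, outs in results.items() if low <= pass_rate(outs) <= high]

results = {
    "always_solved": [True] * 8,
    "never_solved": [False] * 8,
    "medium": [True] * 3 + [False] * 5,
}
print(select_medium_difficulty(results))  # ['medium']
```

Prompts the model always or never solves give nearly uniform rewards across samples, so they say little about which responses are better. The middle band keeps prompts where sampled responses disagree.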

The researchers also describe a self-cognition correction pipeline. It reduces how often models trained to process audio still answer with statements like "I can only read text and cannot hear audio." The pipeline applies Direct Preference Optimization (DPO) to carefully curated preference pairs in which the correct behavior is to acknowledge and use the audio input.
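DPO trains directly on preference pairs. It compares how much more the policy favors the chosen response over the rejected one than a frozen reference model does. Below is a minimal per-pair version of the standard DPO loss. The log-probabilities and `beta = 0.1` are illustrative assumptions, not values from the article. In this setting, the chosen response would use the audio (for example, by describing its pitch), and the rejected one would claim it cannot hear audio.

```python
import math

def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """Per-pair DPO loss: -log(sigmoid(beta * margin)).
    All inputs are sequence log-probabilities under the policy or reference model."""
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    z = beta * margin
    # -log(sigmoid(z)) equals softplus(-z); split on sign for numerical stability.
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))

# Illustrative log-probs for chosen "The clip has a rising pitch..." vs
# rejected "I can only read text and cannot hear audio."
unchanged = dpo_loss(-10.0, -12.0, -10.0, -12.0)  # policy equals reference
improved = dpo_loss(-9.0, -14.0, -10.0, -12.0)    # policy shifted toward chosen
print(unchanged, improved)
```

When the policy matches the reference, the loss is log 2. It falls as the policy shifts probability toward the audio-aware answer and away from the refusal.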

Key takeaways

- Step-Audio-R1 is one of the first audio language models to turn longer chains of thought into consistent accuracy gains on audio tasks. This resolves the inverse scaling failure seen in earlier audio LLMs.

- The model explicitly targets textual surrogate reasoning with Modality-Grounded Reasoning Distillation. This step filters and distills only reasoning traces that rely on acoustic cues such as pitch, timbre, and rhythm, not imagined transcripts.

- Architecturally, Step-Audio-R1 combines a Qwen2-based audio encoder, an adaptor, and a Qwen2.5 32B decoder that always generates a `<think>` reasoning segment before the answer. It is released as a 33B audio-text-to-text model under Apache 2.0.

- On comprehensive audio understanding and reasoning benchmarks covering speech, environmental sounds, and music, Step-Audio-R1 surpasses Gemini 2.5 Pro and performs comparably to Gemini 3 Pro. It also offers a realtime variant for low-latency speech-to-speech interaction.

- The training recipe combines large-scale supervised chain of thought, modality-grounded distillation, and reinforcement learning with verified rewards. Together they form a concrete, reproducible blueprint for future audio reasoning models that actually benefit from test-time compute scaling.

Editorial notes

Step-Audio-R1 is an important release because it turns chain of thought from a liability into a useful tool for audio reasoning. It does this by directly addressing textual surrogate reasoning with Modality-Grounded Reasoning Distillation and Reinforcement Learning with Verified Rewards. The work shows that test-time compute scaling benefits audio models when reasoning is anchored in acoustic features. The resulting benchmark scores are comparable to Gemini 3 Pro, and the model remains open and practical for engineers to use. Overall, this research turns extended deliberation in audio LLMs from a consistent failure mode into a controllable, reproducible design pattern.

Check out the paper, repo, project page, and model weights.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform. It stands out for in-depth coverage of machine learning and deep learning news that is technically sound and easy for a wide audience to understand. The platform has over 2 million monthly views, which shows its popularity with readers.