Organizations often face challenges when implementing one-shot fine-tuning approaches for their generative AI models. One-shot fine-tuning involves selecting training data, configuring hyperparameters, and hoping the results meet expectations, with no opportunity to make incremental adjustments. It frequently produces suboptimal results, and when improvements are needed, the entire process must start again from scratch.

Amazon Bedrock now supports iterative fine-tuning, enabling systematic model improvement through controlled, incremental training rounds. This feature lets you build on previously customized models, whether they were created through fine-tuning or distillation, providing a foundation for continuous improvement without the risks associated with full retraining.

In this post, we explore how to use Amazon Bedrock's iterative fine-tuning capabilities to systematically improve your AI models. We explain the key benefits compared to one-shot approaches, walk through practical implementations using both the console and the SDK, discuss deployment options, and share best practices for getting the most out of iterative fine-tuning.

When to use iterative fine-tuning

Iterative fine-tuning has several advantages over one-shot approaches that make it valuable for production environments. You can reduce risk through incremental improvements, testing and validating each change before committing to larger modifications. This approach lets you make data-driven optimizations based on real-world performance feedback rather than theoretical assumptions about what might work. It also lets developers apply different training techniques in sequence to refine model behavior. Most importantly, iterative fine-tuning accommodates business requirements that evolve with continuous live data traffic. As user patterns change over time and new use cases emerge that were not present during initial training, you can use this new data to improve model performance without starting from scratch.

How to implement iterative fine-tuning on Amazon Bedrock

Setting up iterative fine-tuning involves preparing your environment and creating a training job that builds on an existing custom model, either through the console interface or programmatically with the SDK.

Prerequisites

Before you begin iterative fine-tuning, you need a previously customized model as your starting point. This base model can come from either a fine-tuning or a distillation process, and the feature supports the customizable models and variants available on Amazon Bedrock. You will also need:

- Standard IAM permissions for Amazon Bedrock model customization

- Incremental training data focused on addressing specific performance gaps

- An S3 bucket for training data and job output

Your incremental training data should target the specific areas where the current model needs improvement, rather than attempting to retrain for every possible scenario.
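
As a rough illustration of targeting gaps, the sketch below keeps only the evaluated examples that the current model scored poorly on and serializes them as JSON Lines. The `score` field, the `min_score` threshold, and the `prompt`/`completion` record layout are assumptions made for this example; the record schema that a training job accepts depends on the base model, so check the Amazon Bedrock documentation for your model before uploading anything to S3.

```python
import json


def build_incremental_records(eval_results, min_score=0.5):
    """Keep only examples the current model handled poorly.

    eval_results: list of dicts with hypothetical keys
    'prompt', 'reference', and 'score' (higher is better).
    """
    records = []
    for item in eval_results:
        if item["score"] < min_score:
            # Assumed prompt/completion layout; adapt to your model's schema.
            records.append(
                {"prompt": item["prompt"], "completion": item["reference"]}
            )
    return records


def to_jsonl(records):
    """Serialize records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Hypothetical evaluation results from the previous iteration.
eval_results = [
    {"prompt": "Summarize ticket A", "reference": "Short summary A", "score": 0.9},
    {"prompt": "Summarize ticket B", "reference": "Short summary B", "score": 0.3},
    {"prompt": "Summarize ticket C", "reference": "Short summary C", "score": 0.1},
]

records = build_incremental_records(eval_results)
jsonl_text = to_jsonl(records)
```

The resulting text can be written to a file and uploaded to the S3 bucket listed in the prerequisites.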

Using the AWS Management Console

The Amazon Bedrock console provides a straightforward interface for creating iterative fine-tuning jobs.

Navigate to the Custom models section and select Create fine-tuning job. The key difference in iterative fine-tuning is the base model selection, where you choose your previously customized model instead of a foundation model.

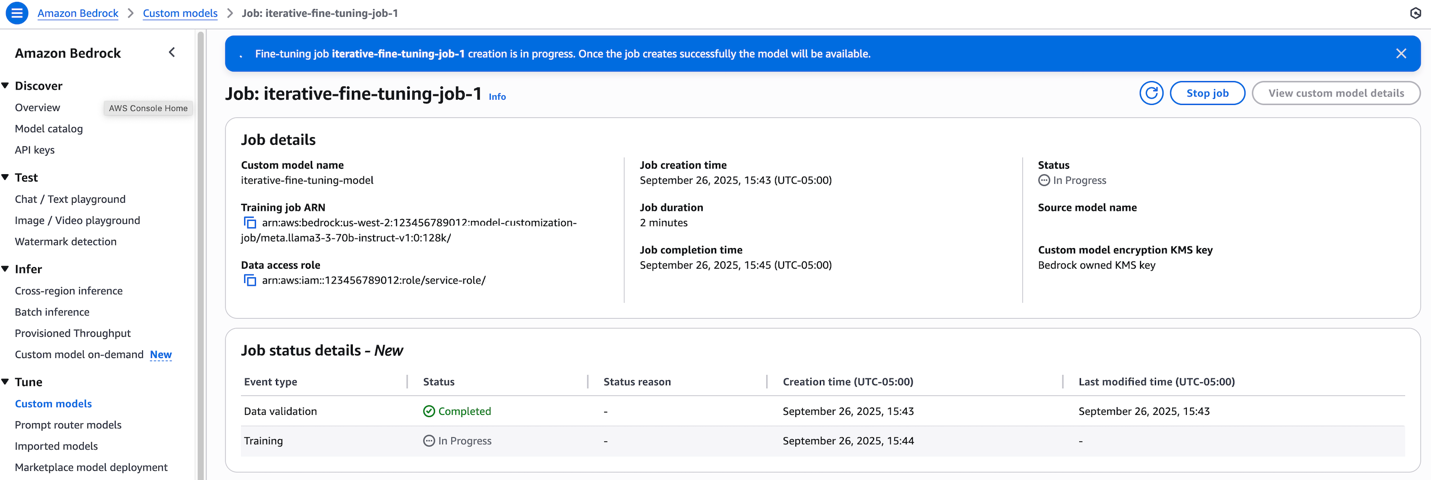

During training, you can visit the Custom models page in the Amazon Bedrock console to track the status of your job.

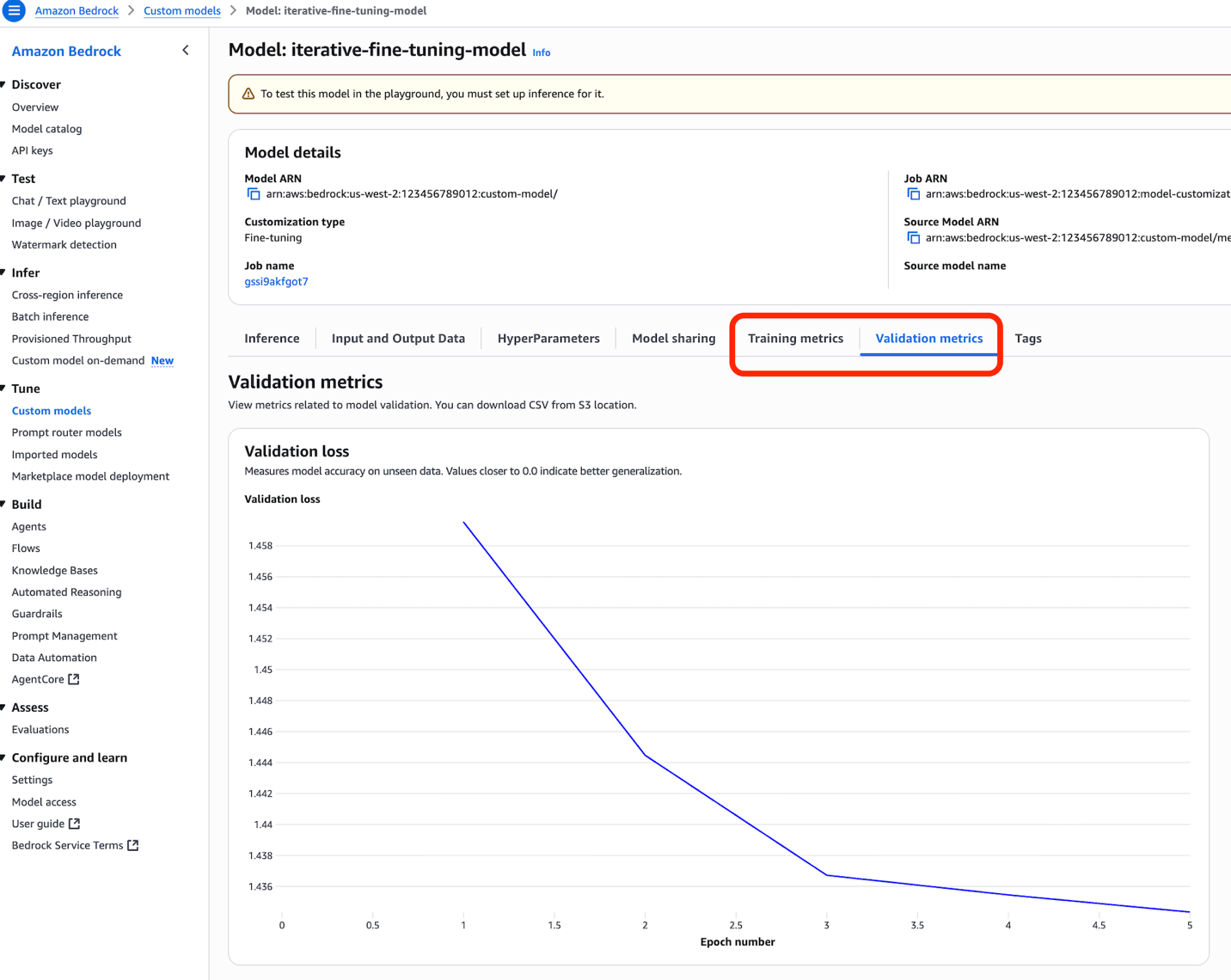

Once the job is complete, you can review its performance metrics in the console through the metric charts on the Training metrics and Validation metrics tabs.

Using the SDK

The programmatic implementation of iterative fine-tuning follows a similar pattern to standard fine-tuning, with one critical difference: you specify your previously customized model as the base model identifier. An example implementation is shown below.
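
A minimal sketch with boto3, assuming the previously customized model is referenced by its ARN in `baseModelIdentifier`. The job name, model name, role ARN, account ID, S3 URIs, and the `epochCount` hyperparameter are placeholders chosen for illustration; valid hyperparameter names depend on the model family. The request is built as a plain dictionary so it can be inspected before anything is submitted.

```python
import time

TERMINAL_STATUSES = {"Completed", "Failed", "Stopped"}


def build_iterative_job_request(job_name, new_model_name, role_arn,
                                previous_custom_model_arn,
                                training_s3_uri, output_s3_uri,
                                hyperparameters=None):
    """Build keyword arguments for create_model_customization_job."""
    request = {
        "jobName": job_name,
        "customModelName": new_model_name,
        "roleArn": role_arn,
        # The one difference from standard fine-tuning: the base model
        # is a previously customized model, not a foundation model.
        "baseModelIdentifier": previous_custom_model_arn,
        "customizationType": "FINE_TUNING",
        "trainingDataConfig": {"s3Uri": training_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
    }
    if hyperparameters:
        # The API expects hyperparameter values as strings.
        request["hyperParameters"] = {k: str(v) for k, v in hyperparameters.items()}
    return request


def submit_job(request, region_name="us-east-1"):
    """Submit the job; needs boto3 and AWS credentials at call time."""
    import boto3
    bedrock = boto3.client("bedrock", region_name=region_name)
    return bedrock.create_model_customization_job(**request)["jobArn"]


def wait_for_job(bedrock_client, job_arn, poll_seconds=60, max_polls=1000):
    """Poll the job until it reaches a terminal status."""
    for _ in range(max_polls):
        job = bedrock_client.get_model_customization_job(jobIdentifier=job_arn)
        if job["status"] in TERMINAL_STATUSES:
            return job["status"]
        time.sleep(poll_seconds)
    raise TimeoutError("job did not reach a terminal status in time")


# All identifiers below are placeholders, not real resources.
request = build_iterative_job_request(
    job_name="iterative-ft-round-2",
    new_model_name="support-model-v2",
    role_arn="arn:aws:iam::111122223333:role/ExampleBedrockCustomizationRole",
    previous_custom_model_arn=(
        "arn:aws:bedrock:us-east-1:111122223333:custom-model/EXAMPLE"
    ),
    training_s3_uri="s3://example-bucket/round-2/train.jsonl",
    output_s3_uri="s3://example-bucket/round-2/output/",
    hyperparameters={"epochCount": 2},
)
```

Calling `submit_job(request)` would start the job, and `wait_for_job` can then be given a `boto3.client("bedrock")` instance together with the returned job ARN.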

Set up inference for your iteratively fine-tuned model

Once your iterative fine-tuning job is complete, you have two main options for deploying the model for inference: Provisioned Throughput and on-demand inference. Each suits different usage patterns and requirements.

Provisioned Throughput

Provisioned Throughput offers stable performance for predictable workloads with consistent throughput requirements. This option provides dedicated capacity so that your iteratively fine-tuned model maintains its performance standards during periods of peak usage. Setup involves purchasing model units based on your expected traffic patterns and performance requirements.
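
The sketch below shows one way such a purchase request might be assembled for the boto3 `create_provisioned_model_throughput` call. The provisioned model name, the ARN, and the choice of one model unit are placeholders for illustration; sizing should come from your own traffic estimates.

```python
def build_provisioned_throughput_request(name, custom_model_arn,
                                         model_units, commitment=None):
    """Build keyword arguments for create_provisioned_model_throughput.

    commitment: None for no commitment, or a term such as "OneMonth"
    or "SixMonths".
    """
    if model_units < 1:
        raise ValueError("model_units must be at least 1")
    request = {
        "provisionedModelName": name,
        "modelId": custom_model_arn,
        "modelUnits": model_units,
    }
    if commitment is not None:
        request["commitmentDuration"] = commitment
    return request


def purchase(request, region_name="us-east-1"):
    """Submit the purchase; needs boto3 and AWS credentials at call time."""
    import boto3
    bedrock = boto3.client("bedrock", region_name=region_name)
    response = bedrock.create_provisioned_model_throughput(**request)
    return response["provisionedModelArn"]


# Placeholder identifiers, not real resources.
pt_request = build_provisioned_throughput_request(
    name="support-model-v2-pt",
    custom_model_arn="arn:aws:bedrock:us-east-1:111122223333:custom-model/EXAMPLE",
    model_units=1,
)
```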

On-demand inference

On-demand inference offers flexibility for variable workloads and experimentation. In addition to the Amazon Nova Micro, Lite, and Pro models, Amazon Bedrock now also supports Llama 3.3 models for on-demand inference with pay-per-token pricing. This option removes the need for capacity planning, so you can test your iteratively fine-tuned model without an upfront commitment. Because cost scales automatically with usage, it is cost-effective for applications with unpredictable or low-volume inference patterns.
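
As a rough sketch, an on-demand test call could go through the Bedrock Runtime Converse API. The `modelId` value here is a placeholder, and the assumption that you pass the ARN of an on-demand deployment of your custom model should be checked against the Amazon Bedrock documentation. The prompt and inference settings are illustrative only.

```python
def build_converse_request(model_id, user_text, max_tokens=256, temperature=0.2):
    """Build keyword arguments for the bedrock-runtime converse call."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": user_text}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def ask(request, region_name="us-east-1"):
    """Send the request; needs boto3 and AWS credentials at call time."""
    import boto3
    runtime = boto3.client("bedrock-runtime", region_name=region_name)
    response = runtime.converse(**request)
    return response["output"]["message"]["content"][0]["text"]


# Placeholder identifier (assumed to be an on-demand deployment ARN).
converse_request = build_converse_request(
    model_id="arn:aws:bedrock:us-east-1:111122223333:custom-model-deployment/EXAMPLE",
    user_text="Summarize ticket B in one sentence.",
)
```

Running the same small set of prompts through `ask` after each round gives a quick sanity check before a fuller evaluation.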

Best practices

Successful iterative fine-tuning requires attention to several key areas. Most importantly, your data strategy should emphasize quality over quantity in incremental datasets. Rather than adding large volumes of new training examples, focus on high-quality data that addresses the specific performance gaps identified in previous iterations.

To track progress effectively, evaluate consistently across iterations so that improvements can be compared meaningfully. Establish baseline metrics during your first iteration and keep the same evaluation framework throughout the process. Amazon Bedrock Evaluations can help you systematically identify where gaps remain in model performance after each customization run. This consistency helps you understand whether your changes are producing meaningful improvements.
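
One simple way to keep comparisons consistent is to require that every iteration reports exactly the same metrics as the baseline. The sketch below is an illustration only; the metric names and values are made up for the example.

```python
def compare_to_baseline(baseline, candidate):
    """Return per-metric deltas (candidate minus baseline).

    Both dicts must contain the same metric names so that every
    iteration is judged by the same evaluation framework.
    """
    if baseline.keys() != candidate.keys():
        raise ValueError("iterations must report the same metrics as the baseline")
    return {name: round(candidate[name] - baseline[name], 4) for name in baseline}


# Hypothetical scores from the same evaluation set (higher is better).
baseline = {"accuracy": 0.80, "format_compliance": 0.90}
iteration_2 = {"accuracy": 0.86, "format_compliance": 0.88}

deltas = compare_to_baseline(baseline, iteration_2)
regressions = [name for name, delta in deltas.items() if delta < 0]
```

Here `regressions` flags metrics that got worse, which are natural candidates for the next round of incremental data.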

Finally, knowing when to stop the iterative process helps you avoid diminishing returns on your investment. Monitor the performance improvement between iterations and consider ending the process when the gains become marginal relative to the effort required.
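
A stopping rule of this kind can be as simple as checking whether the most recent gains fall below a threshold. This is a sketch under assumed values: the `min_gain` threshold, the `patience` setting, and the scores are hypothetical and should reflect the cost of a training round in your own setting.

```python
def should_stop(scores, min_gain=0.01, patience=1):
    """Decide whether further iterations are likely worth the effort.

    scores: evaluation score after each iteration, higher is better.
    Returns True when each of the last `patience` gains is below min_gain.
    """
    if len(scores) < patience + 1:
        return False  # not enough history to judge
    recent_gains = [
        scores[i] - scores[i - 1]
        for i in range(len(scores) - patience, len(scores))
    ]
    return all(gain < min_gain for gain in recent_gains)


# Hypothetical scores on the same evaluation set after each round.
history = [0.70, 0.78, 0.82]
keep_going = not should_stop(history)             # last gain is about 0.04
stop_after_next = should_stop(history + [0.823])  # last gain is about 0.003
```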

Conclusion

Iterative fine-tuning on Amazon Bedrock offers a systematic approach to model improvement that reduces risk while enabling continuous refinement. With this approach, organizations can build on their existing investments in custom models rather than starting from scratch when adjustments are needed.

To get started with iterative fine-tuning, go to the Amazon Bedrock console and open the Custom models section. For detailed implementation guidance, refer to the Amazon Bedrock documentation.

About the authors

Yanyan Zhang is a Senior Generative AI Data Scientist at Amazon Web Services, where she works on cutting-edge AI/ML technologies as a Generative AI Specialist, helping customers use generative AI to achieve their desired outcomes. Yanyan graduated from Texas A&M University with a PhD in Electrical Engineering. Outside of work, she loves traveling, working out, and exploring new things.

Gautam Kumar is an Engineering Manager at AWS AI Bedrock, where he leads model customization initiatives across large-scale foundation models. He specializes in distributed training and fine-tuning. Outside of work, he enjoys reading and traveling.

Jesse Manders is a Senior Product Manager on Amazon Bedrock, the AWS generative AI developer service. He works at the intersection of AI and human interaction with the goal of creating and improving generative AI products and services to meet our needs. Previously, Jesse held engineering team leadership roles at Apple and Lumileds, and was a senior scientist at a Silicon Valley startup. He holds a master's degree and a Ph.D. from the University of Florida and an MBA from the Haas School of Business at the University of California, Berkeley.
