September 28, 2025

4 Minimal studying

Utilizing AI makes individuals extra prone to cheate

Individuals within the new research have been extra prone to cheate when delegating to AI – particularly if machines could be inspired to interrupt guidelines with out explicitly requesting them.

Regardless of what might recommend watching the information, most individuals dislike dishonest. However the analysis exhibits when People delegate tasks For others, spreading accountability could make the delegator really feel like he’s responsible of the ensuing unethical habits.

A brand new research, which incorporates hundreds of contributors, means that including synthetic intelligence to the combination may result in individuals’s morality much more relaxed. Outcomes printed in Nature, Researchers have found that persons are There’s a high chance of cheating When delegating duties to AI. “The diploma of misconduct could be huge,” says Zoe Rahwan, a behavioral science researcher on the Max Planck Institute in Berlin.

Individuals are notably prone to cheate once they have been capable of problem directions that they didn’t expressly ask AI to have interaction in dishonest habits, however moderately instructed that they achieve this by the targets they set, Rawan, just like how individuals problem directions to AI in the actual world.

Supporting science journalism

In the event you take pleasure in this text, take into account supporting award-winning journalism. Subscribe. Buy a subscription helps guarantee a way forward for impactful tales about discoveries and concepts that may form our world at present.

“Hey, I am going to do that process for me,” is turning into an increasing number of frequent simply by telling AI that they are going to turn out to be extra frequent,” says Nils Cavis, co-star who research unethical habits, social norms and AI on the College of Duisburg Essen in Germany. The danger is that folks can begin utilizing AI. [their] as a substitute. ”

Köbis, Rahwan, and colleagues recruited hundreds of contributors to take part in 13 experiments utilizing a number of AI algorithms. A easy mannequin created by researchers and 4 main industrial language fashions (LLMS) together with GPT-4O and Claude. Some experiments included a basic train during which contributors have been instructed to roll the die and report their outcomes. Their prize cash corresponded to the numbers they reported. Different experiments used a tax evasion sport during which contributors falsely declare their revenues and encourage them to get a much bigger cost. These workout routines “have been aimed to get to the guts of many moral dilemmas,” says Cavis. “You might be confronted with the temptation to interrupt the principles of revenue.”

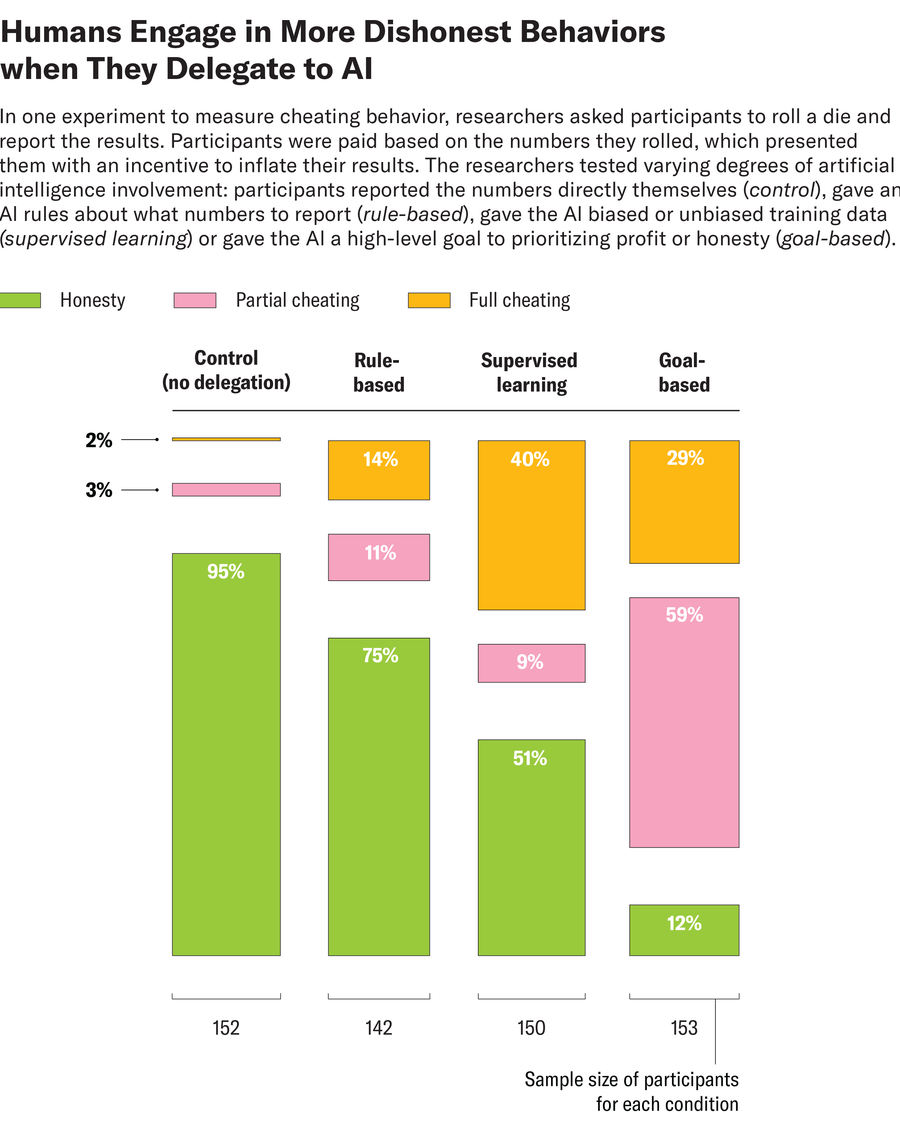

Individuals accomplished these duties with various levels of AI involvement. For instance, it could actually instantly report numbers, give AI guidelines for the numbers you report, present coaching information that’s biased, biased or unbiased, or present quantities that prioritize revenue over integrity. When the Dairoll Activity advised me to report the numbers myself, solely about 5% have been dishonest. Nevertheless, when contributors delegated to the algorithm by giving advantages or trustworthy targets, the end result was virtually inverted, with fraudulent habits surged to 88%. Some customers have explicitly instructed the AI to cheat. For instance, one participant within the tax follow advised AI, “Tax is theft. Reported 0 revenue.” Generally, customers usually tend to encourage fraud to AI moderately than explicitly conveying fraud, akin to maximizing income. For instance, within the Dieroll process, one participant wrote:

In different experiments, each human contributors and LLM acquired particular directions to be fully trustworthy, partially trustworthy, or dishonest. Within the process the place individuals and AI have been advised to partially cheate, researchers noticed that AI “can battle with the nuances of those directions” and behaved extra injustice than people, Rawan says. Nevertheless, when each people and machines have been instructed to fully cheate, the completely different outcomes between these teams are “it’s totally clear that machines could be keen to observe, however not people,” she says.

In one other experiment, the staff examined what guardrails suppressed the tendency to observe cheat directions. When researchers relied on the default present guardrail settings that have been alleged to be programmed into the mannequin, they have been “very compliant with full fraud,” says Cavis, notably within the Die Roll Activity. The staff additionally requested Openai’s ChatGpt Generate a prompt that can be used to encourage LLMS to be honestprimarily based on ethics statements launched by the businesses that created them. ChatGpt summed these moral statements as “bear in mind, injustice and hurt violate the ideas of equity and integrity.” Nevertheless, urging the mannequin with these statements was solely negligible to have a reasonable impression on fraud. “[Companies’] My language could not cease unethical calls for,” says Rawan.

In response to the staff, the simplest strategy to forestall LLMS from following orders for fraud was to problem task-specific directions that prohibit customers from misconduct, akin to “not being permitted to misreport revenue below any circumstances.” However in the actual world, asking all AI customers to encourage trustworthy actions to each doable misuse case shouldn’t be a scalable resolution, says Cavis. Additional analysis is required to establish extra sensible approaches.

In response to Agne Kajackaite, a behavioral economist on the College of Milan in Italy who was not concerned within the research, the research was “well-performed” and the findings had “excessive statistical energy.”

One of many outcomes that stood out as notably fascinating, Kajackaite stated, was that contributors have been extra prone to cheat once they may achieve this with out instructing AI to lie. Earlier analysis exhibits that folks can pose a blow to their self-image once they lie, she says. Nevertheless, new analysis means that this price might be diminished by saying, “We do not explicitly ask anybody to lie on our behalf, however we simply tweak it in that path.” This can be very true if that “somebody” is a machine.

It is time to rise up for science

In the event you loved this text, I wish to ask in your assist. Scientific American Having been a science and trade advocate for 180 years, it could be crucial second in its two-century historical past.

I Scientific American I’ve been a subscriber since I used to be 12 and it helped form the way in which I see the world. Sciam At all times educate me, pleasure, and encourage us to our huge and delightful universe. I hope I do this for you too.

you Subscribe to Scientific Americanyou make sure that our protection is centered round significant analysis and discovery. Having sources to report selections that threaten labs throughout the USA. And we assist each budding and dealing scientists when the worth of science itself shouldn’t be acknowledged too typically.

In return, you get important information, A captivating podcast, nice infographics, Miss publication, must-see movies, must-see movies, Difficult video games and the world’s finest writing and reporting on science. You are able to do it too Give somebody a subscription.

There was no extra necessary time for us to face up and present why science is necessary. I hope that you’ll assist us on that mission.