This post is co-written with Samit Verma, Eusha Rizvi, Manmeet Singh, Troy Smith, and Corey Finley from Verisk.

Verisk Rating Insights, a feature of ISO Electronic Rating Content (ERC), is a tool designed to provide summaries of ISO Rating changes between two releases. Traditionally, extracting specific filing information or identifying differences across multiple releases required manual downloads of full packages, which was time-consuming and inefficient. This challenge, coupled with the need for accurate and timely customer support, prompted Verisk to explore innovative ways to enhance user accessibility and automate repetitive processes. Using generative AI and Amazon Web Services (AWS) services, Verisk has made significant strides in creating a conversational user interface that lets users easily retrieve specific information, identify content differences, and improve overall operational efficiency.

In this post, we dive into how Verisk Rating Insights, powered by Amazon Bedrock, large language models (LLMs), and Retrieval Augmented Generation (RAG), is transforming the way customers interact with and access ISO ERC changes.

The challenge

Rating Insights provides valuable content, but there were significant challenges with user accessibility and the time it took to extract actionable insights:

- Manual downloading – Customers had to download entire packages to get even a small piece of relevant information. This was inefficient, especially when only a part of the filing needed to be reviewed.

- Inefficient data retrieval – Users couldn’t quickly identify the differences between two content packages without downloading and manually comparing them, which could take hours and sometimes days of analysis.

- Time-consuming customer support – Verisk’s ERC Customer Support team spent 15% of their time each week addressing queries from customers who were impacted by these inefficiencies. Additionally, onboarding new customers required half a day of repetitive training to make sure they understood how to access and interpret the data.

- Manual analysis time – Customers often spent 3–4 hours per test case analyzing the differences between filings. With multiple test cases to handle, this led to significant delays in critical decision-making.

Solution overview

To solve these challenges, Verisk embarked on a journey to enhance Rating Insights with generative AI technologies. By integrating Anthropic’s Claude, available in Amazon Bedrock, and Amazon OpenSearch Service, Verisk created a sophisticated conversational platform where users can effortlessly access and analyze rating content changes.

The following diagram illustrates the high-level architecture of the solution, with distinct sections showing the data ingestion process and the inference loop. The architecture uses multiple AWS services to add generative AI capabilities to the Rating Insights system. The system’s components work together, coordinating multiple LLM calls to generate user responses.

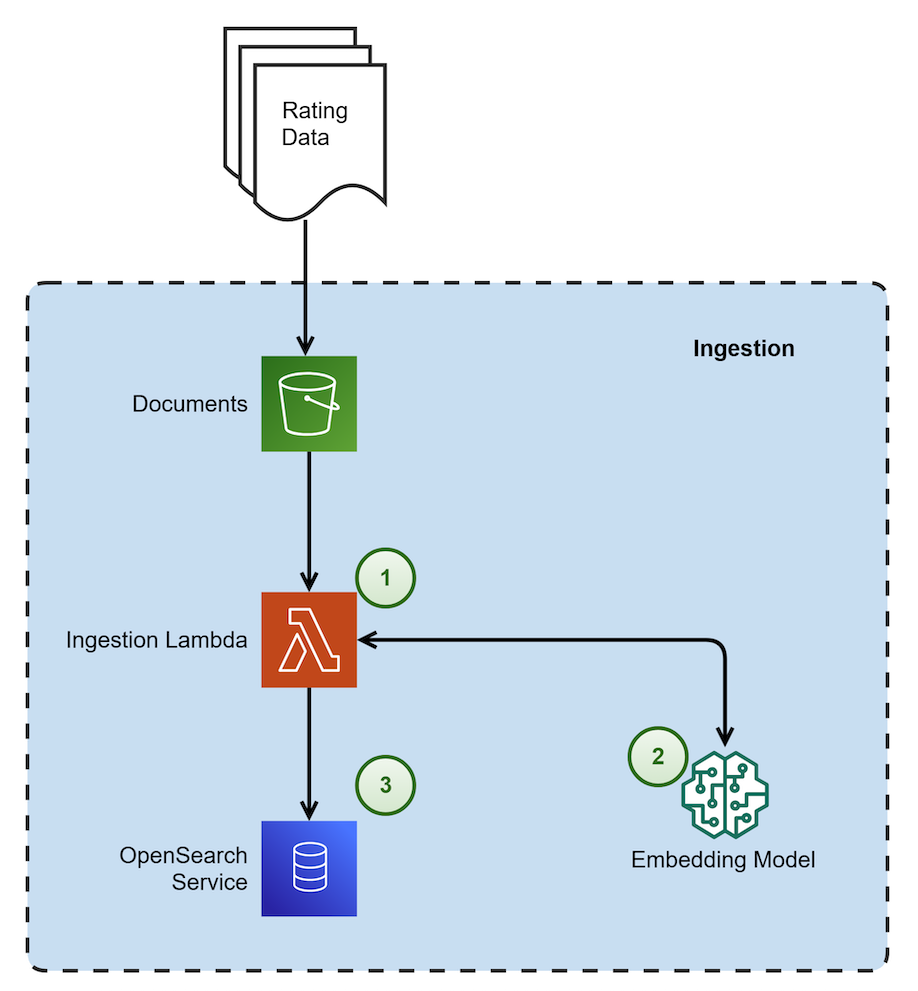

The following diagram shows the architectural components and the high-level steps involved in the data ingestion process.


The steps in the data ingestion process proceed as follows:

- This process is triggered when a new file is dropped. It’s responsible for chunking the document using a custom chunking strategy. This strategy recursively checks each section and keeps sections intact without overlap. The process then embeds the chunks and stores them in OpenSearch Service as vector embeddings.

- The embedding model used in Amazon Bedrock is amazon.titan-embed-g1-text-02.

- Amazon OpenSearch Serverless is used as a vector embedding store with metadata filtering capability.
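The post doesn’t publish the custom chunking strategy, so the following is only a minimal sketch of one way a recursive, section-preserving, non-overlapping chunker could look. The `Section` outline structure, the `max_chars` budget, and all function names are assumptions made for illustration, not Verisk’s implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    """A node in a document outline (hypothetical structure for illustration)."""
    title: str
    text: str = ""
    children: list["Section"] = field(default_factory=list)


def section_size(section: Section) -> int:
    """Characters of body text in a section, including nested subsections."""
    return len(section.text) + sum(section_size(c) for c in section.children)


def flatten(section: Section) -> str:
    """Render a section and all of its subsections as one block of text."""
    parts = [section.title, section.text] + [flatten(c) for c in section.children]
    return "\n".join(p for p in parts if p)


def chunk_sections(section: Section, max_chars: int = 1000) -> list[str]:
    """Split only on section boundaries, so no text appears in two chunks."""
    # Keep the section whole when it fits, or when it has no subsections to split on.
    if section_size(section) <= max_chars or not section.children:
        return [flatten(section)]
    chunks = []
    # The section's own intro text becomes one chunk; each child is handled recursively.
    if section.text:
        chunks.append(f"{section.title}\n{section.text}")
    for child in section.children:
        chunks.extend(chunk_sections(child, max_chars))
    return chunks
```

In this sketch, a leaf section that exceeds the budget is still emitted whole, which matches the stated goal of keeping sections intact. Each resulting chunk would then be embedded and indexed along with its metadata.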

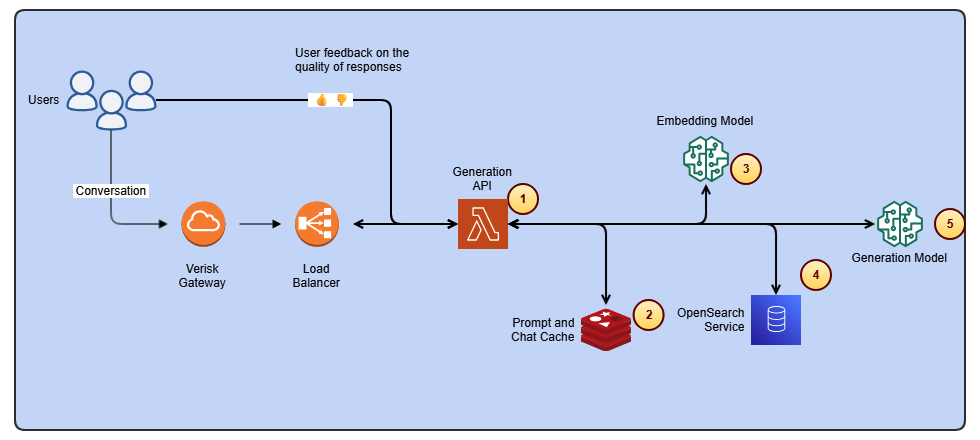

The following diagram shows the architectural components and the high-level steps involved in the inference loop to generate user responses.

The steps in the inference loop proceed as follows:

- This component is responsible for multiple tasks: it supplements user questions with recent chat history, embeds the questions, retrieves relevant chunks from the vector database, and finally calls the generation model to synthesize a response.

- Amazon ElastiCache is used for storing recent chat history.

- The embedding model used in Amazon Bedrock is amazon.titan-embed-g1-text-02.

- OpenSearch Serverless is used for RAG.

- For generating responses to user queries, the system uses Anthropic’s Claude Sonnet 3.5 (model ID: anthropic.claude-3-5-sonnet-20240620-v1:0), which is available through Amazon Bedrock.
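The inference steps above can be sketched roughly as follows. This is an illustrative outline under stated assumptions, not the production code: the embedding, retrieval, and generation calls are passed in as plain functions so the flow can be read (and exercised) without AWS access, and the prompt wording, the `turns` window, and all function names are hypothetical. The request body follows the Anthropic Messages format that Amazon Bedrock accepts for Claude models.

```python
import json
from typing import Callable

MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"  # model ID given in the post


def condense_question(question: str, history: list[tuple[str, str]], turns: int = 3) -> str:
    """Supplement the question with the most recent (user, assistant) turns."""
    lines = [f"User: {u}\nAssistant: {a}" for u, a in history[-turns:]]
    lines.append(f"User: {question}")
    return "\n".join(lines)


def build_claude_body(conversation: str, chunks: list[str], max_tokens: int = 1024) -> str:
    """Build a JSON request body in the Anthropic Messages format."""
    context = "\n\n".join(f"[{i + 1}] {c}" for i, c in enumerate(chunks))
    # Hypothetical prompt wording; the real prompts are not described in the post.
    prompt = (
        "Answer using only the context below. If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nConversation:\n{conversation}"
    )
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    })


def answer(
    question: str,
    history: list[tuple[str, str]],
    embed: Callable[[str], list[float]],
    retrieve: Callable[[list[float]], list[str]],
    generate: Callable[[str], str],
) -> str:
    """Run one pass of the loop: condense, embed, retrieve, generate."""
    conversation = condense_question(question, history)
    vector = embed(conversation)
    chunks = retrieve(vector)
    return generate(build_claude_body(conversation, chunks))
```

In a deployment, `generate` could wrap the `invoke_model` call of the boto3 `bedrock-runtime` client with `modelId=MODEL_ID`, `embed` could call the embedding model the same way, and `history` would be read from the chat history store.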

Key technologies and frameworks used

We used Anthropic’s Claude Sonnet 3.5 (model ID: anthropic.claude-3-5-sonnet-20240620-v1:0) to understand user input and provide detailed, contextually relevant responses. Anthropic’s Claude Sonnet 3.5 enhances the platform’s ability to interpret user queries and deliver accurate insights from complex content changes. LlamaIndex, an open source framework, served as the chain framework for efficiently connecting and managing different data sources to enable dynamic retrieval of content and insights.

We implemented RAG, which allows the model to pull specific, relevant data from the OpenSearch Serverless vector database. This means the system generates precise, up-to-date responses based on a user’s query without needing to sift through massive content downloads. The vector database enables intelligent search and retrieval, organizing content changes in a way that makes them quickly and easily accessible. This eliminates the need for manual searching or downloading of entire content packages. Verisk applied Amazon Bedrock Guardrails along with custom guardrails around the generative model so the output adheres to specific compliance and quality standards, safeguarding the integrity of responses.

Verisk’s generative AI solution is built on Amazon Bedrock, a comprehensive, secure, and flexible service for building generative AI applications and agents. Amazon Bedrock connects you to leading foundation models (FMs), services to deploy and operate agents, and tools for fine-tuning, safeguarding, and optimizing models, along with knowledge bases to connect applications to your latest data, so that you have everything you need to quickly move from experimentation to real-world deployment.

Given the novelty of generative AI, Verisk has established a governance council to oversee its solutions, making sure they meet security, compliance, and data usage standards. Verisk implemented strict controls within the RAG pipeline so that data is only accessible to authorized users. This helps maintain the integrity and privacy of sensitive information. Legal reviews verify IP protection and contract compliance.

How it works

The integration of these advanced technologies enables a seamless, user-friendly experience. Here’s how Verisk Rating Insights now works for customers:

- Conversational user interface – Users can interact with the platform through a conversational interface. Instead of manually reviewing content packages, users enter a natural language query (for example, “What are the changes in coverage scope between the two recent filings?”). The system uses Anthropic’s Claude Sonnet 3.5 to understand the intent and provides an instant summary of the relevant changes.

- Dynamic content retrieval – Thanks to RAG and OpenSearch Service, the platform doesn’t require downloading entire files. Instead, it dynamically retrieves and presents the specific changes a user is looking for, enabling quicker analysis and decision-making.

- Automated difference analysis – The system can automatically compare two content packages, highlighting the differences without requiring manual intervention. Users can query for precise comparisons (for example, “Show me the differences in rating criteria between Release 1 and Release 2”).

- Customized insights – The guardrails in place help keep responses accurate, compliant, and actionable. Additionally, if needed, the system can help users understand the impact of changes and assist them in navigating the complexities of filings, providing clear, concise insights.
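One plausible way to support a release-to-release comparison is to run the same vector search once per release, using metadata filtering to restrict each search to a single release, and then pass both result sets to the model. The sketch below builds such queries in the OpenSearch k-NN query format with a filter clause. The field names `embedding` and `release` are hypothetical, and the post doesn’t state how the comparison is actually implemented.

```python
def knn_query(vector: list[float], release: str, k: int = 5) -> dict:
    """Build a filtered k-NN query body restricted to one release.

    `embedding` and `release` are assumed index field names.
    """
    return {
        "size": k,
        "query": {
            "knn": {
                "embedding": {
                    "vector": vector,
                    "k": k,
                    # Metadata filter: only consider chunks from this release.
                    "filter": {"term": {"release": release}},
                }
            }
        },
    }


def comparison_queries(
    vector: list[float], release_a: str, release_b: str, k: int = 5
) -> dict[str, dict]:
    """Return one filtered query per release for the same question vector."""
    return {r: knn_query(vector, r, k) for r in (release_a, release_b)}
```

Each query body could be sent to the vector index with a search client, and the two sets of retrieved chunks placed side by side in the prompt so the model can describe what differs.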

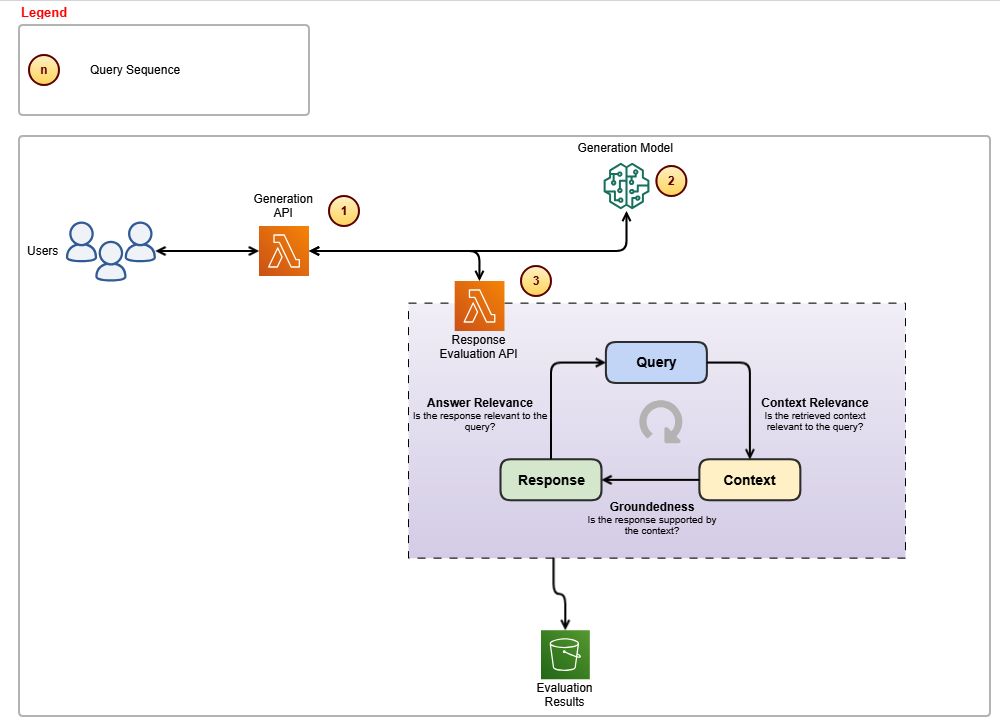

The following diagram shows the architectural components and the high-level steps involved in the evaluation loop to generate relevant and grounded responses.

The steps in the evaluation loop proceed as follows:

- This component is responsible for calling Anthropic’s Claude Sonnet 3.5 model and subsequently invoking the custom-built evaluation APIs to check response accuracy.

- The generation model is Anthropic’s Claude Sonnet 3.5, which handles the creation of responses.

- The Evaluation API checks that responses remain relevant to user queries and stay grounded within the provided context.
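The post doesn’t describe how the evaluation APIs score a response, so the following sketch only illustrates the shape of such a gate: compute a relevance score against the question and a groundedness score against the retrieved context, and pass the response only if both clear a threshold. Token overlap is used here purely as a crude stand-in for whatever scoring the real APIs perform, and the thresholds and function names are assumptions.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens of a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(a: str, b: str) -> float:
    """Fraction of the tokens in `a` that also appear in `b` (0.0 if `a` is empty)."""
    ta = tokens(a)
    return len(ta & tokens(b)) / len(ta) if ta else 0.0


def evaluate(
    question: str,
    answer: str,
    context: str,
    min_relevance: float = 0.3,
    min_grounding: float = 0.6,
) -> dict:
    """Score a response and decide whether it may be delivered."""
    relevance = overlap(question, answer)   # does the answer address the question?
    grounding = overlap(answer, context)    # is the answer supported by the context?
    return {
        "relevance": relevance,
        "grounding": grounding,
        "passed": relevance >= min_relevance and grounding >= min_grounding,
    }
```

A response that fails the gate could be regenerated or replaced with a fallback message, depending on the product’s requirements.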

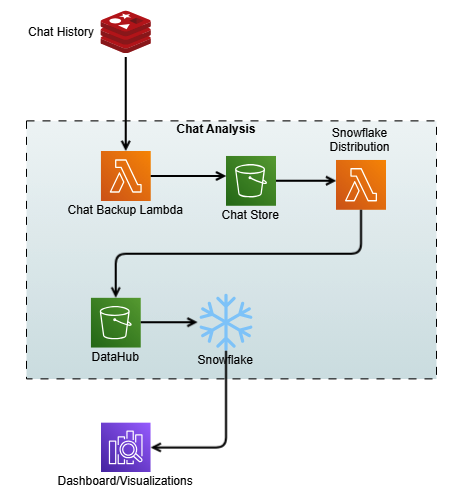

The following diagram shows the process of capturing the chat history as contextual memory and storing it for analysis.

Quality benchmarks

The Verisk Rating Insights team has implemented a comprehensive evaluation framework and a feedback loop mechanism, shown in the preceding figures, to support continuous improvement and address issues that might arise.

High accuracy and consistency in responses are essential for Verisk’s generative AI solutions. However, LLMs can sometimes produce hallucinations or provide irrelevant details, affecting reliability. To address this, Verisk implemented:

- Evaluation framework – Integrated into the query pipeline, it validates responses for precision and relevance before delivery.

- Extensive testing – Product subject matter experts (SMEs) and quality experts rigorously tested the solution for accuracy and reliability. Verisk collaborated with in-house insurance domain experts to develop SME evaluation metrics for accuracy and consistency. Multiple rounds of SME evaluations were conducted, in which experts graded these metrics on a 1–10 scale. Latency was also tracked to assess speed. Feedback from each round was incorporated into subsequent tests to drive improvements.

- Continual model improvement – Customer feedback is a crucial component in driving the continuous evolution and refinement of the generative models, improving both accuracy and relevance. By integrating user interactions and feedback with chat history, a robust data pipeline is created that streams the user interactions to an Amazon Simple Storage Service (Amazon S3) bucket, which acts as a data hub. The interactions then go into Snowflake, a cloud-based data platform and data warehouse as a service that offers capabilities such as data warehousing, data lakes, data sharing, and data exchange. Through this integration, we built comprehensive analytics dashboards that provide valuable insights into user experience patterns and pain points.

Although the initial results were promising, they didn’t meet the desired accuracy and consistency levels. The development process involved multiple iterative improvements, such as redesigning the system and making multiple calls to the LLM. The primary metric for success was a manual grading system in which business experts compared the results and provided continuous feedback to improve overall benchmarks.

Business impact and opportunity

By integrating generative AI into Verisk Rating Insights, the business has seen a remarkable transformation. Customers enjoyed significant time savings. By eliminating the need to download entire packages and manually search for differences, the time spent on analysis has been drastically reduced. Customers no longer spend 3–4 hours per test case. What once took days now takes minutes.

These time savings brought increased productivity. With an automated solution that instantly provides relevant insights, customers can focus more on decision-making rather than spending time on manual data retrieval. And by automating difference analysis and providing a centralized, easy-to-use platform, customers can be more confident in the accuracy of their results and avoid missing critical changes.

For Verisk, the benefit was a reduced customer support burden because the ERC customer support team now spends less time addressing queries. With the AI-powered conversational interface, users can self-serve and get answers in real time, freeing up support resources for more complex inquiries.

The automation of repetitive training tasks meant quicker and more efficient customer onboarding. This reduces the need for lengthy training sessions, and new customers become proficient faster. The integration of generative AI has reduced redundant workflows and the need for manual intervention. This streamlines operations across multiple departments, leading to a more agile and responsive business.

Conclusion

Looking ahead, Verisk plans to continue enhancing the Rating Insights platform in two ways. First, we’ll expand the scope of queries, enabling more sophisticated queries related to different filing types and more nuanced coverage areas. Second, we’ll scale the platform. With Amazon Bedrock providing the infrastructure, Verisk aims to scale this solution further to support more users and additional content sets across various product lines.

Verisk Rating Insights, now powered by generative AI and AWS technologies, has transformed the way customers interact with and access rating content changes. Through a conversational user interface, RAG, and vector databases, Verisk intends to eliminate inefficiencies and save customers valuable time and resources while enhancing overall accessibility. For Verisk, this solution has improved operational efficiency and provided a strong foundation for continued innovation.

With Amazon Bedrock and a focus on automation, Verisk is driving the future of intelligent customer support and content management, empowering both its customers and its internal teams to make smarter, faster decisions.


About the authors

Samit Verma serves as the Director of Software Engineering at Verisk, overseeing the Rating and Coverage development teams. In this role, he plays a key part in architectural design and provides strategic direction to multiple development teams, enhancing efficiency and ensuring long-term solution maintainability. He holds a master’s degree in information technology.

Eusha Rizvi serves as a Software Development Manager at Verisk, leading multiple technology teams within the Ratings Products division. Possessing strong expertise in system design, architecture, and engineering, Eusha offers essential guidance that advances the development of innovative solutions. He holds a bachelor’s degree in information systems from Stony Brook University.

Manmeet Singh is a Software Engineering Lead at Verisk and an AWS Certified Generative AI Specialist. He leads the development of an agentic RAG-based generative AI system on Amazon Bedrock, with expertise in LLM orchestration, prompt engineering, vector databases, microservices, and high-availability architecture. Manmeet is passionate about applying advanced AI and cloud technologies to deliver resilient, scalable, and business-critical systems.

Troy Smith is a Vice President of Rating Solutions at Verisk. Troy is a seasoned insurance technology leader with more than 25 years of experience in rating, pricing, and product strategy. At Verisk, he leads the team behind ISO Electronic Rating Content, a widely used resource across the insurance industry. Troy has held leadership roles at Earnix and Capgemini and was the cofounder and original creator of the Oracle Insbridge Rating Engine.

Corey Finley is a Product Manager at Verisk. Corey has over 22 years of experience across personal and commercial lines of insurance. He has worked in both implementation and product support roles and has led efforts for major carriers including Allianz, CNA, Citizens, and others. At Verisk, he serves as Product Manager for VRI, RaaS, and ERC.

Arun Pradeep Selvaraj is a Senior Solutions Architect at Amazon Web Services (AWS). Arun is passionate about working with his customers and stakeholders on digital transformations and innovation in the cloud while continuing to learn, build, and reinvent. He’s creative, energetic, deeply customer-obsessed, and uses the working backward process to build modern architectures that help customers solve their unique challenges. Connect with him on LinkedIn.

Ryan Doty is a Solutions Architect Manager at Amazon Web Services (AWS), based out of New York. He helps financial services customers accelerate their adoption of the AWS Cloud by providing architectural guidelines to design innovative and scalable solutions. Coming from a software development and sales engineering background, he is excited by the possibilities that the cloud can bring to the world.