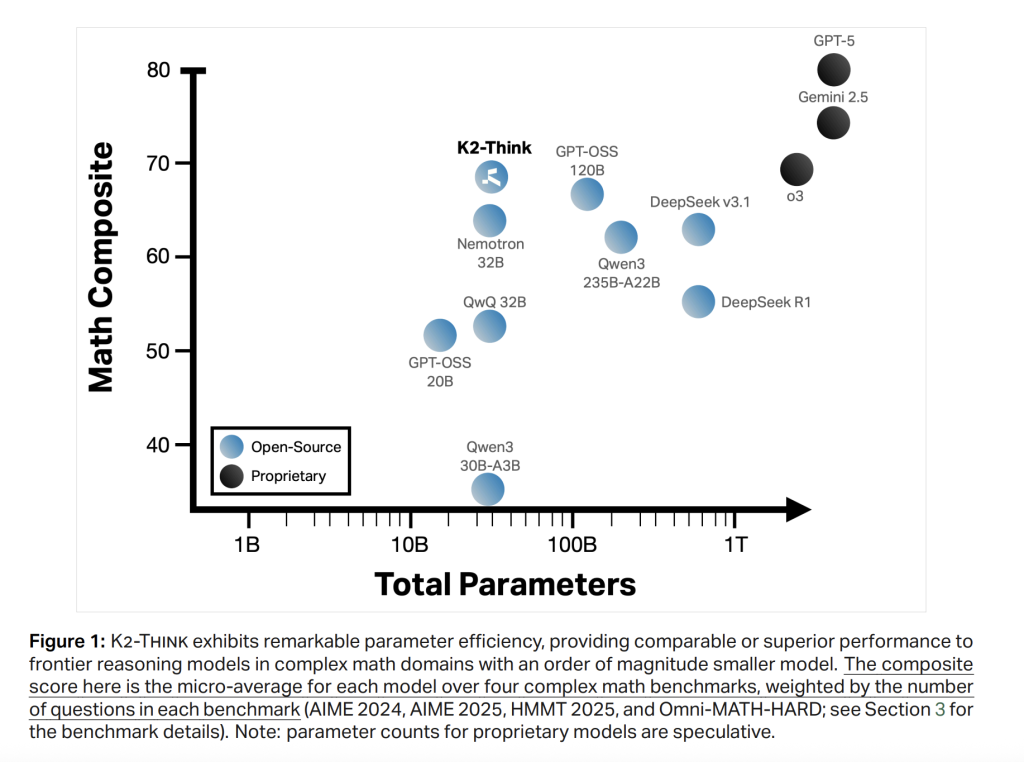

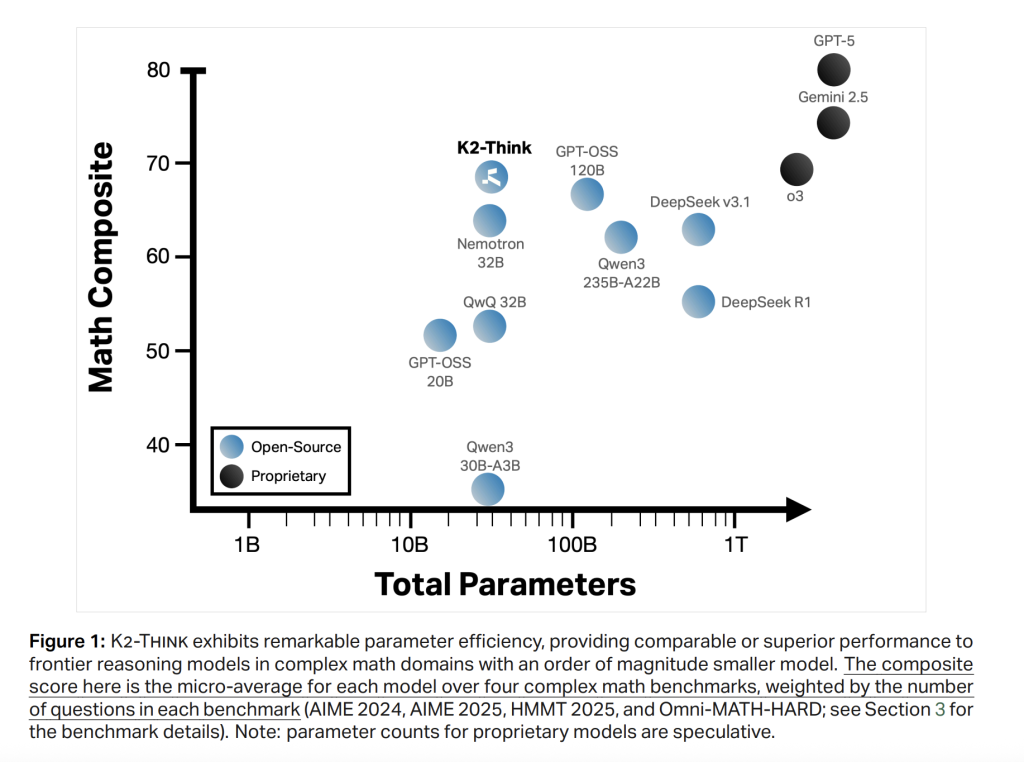

A team of researchers from MBZUAI’s Institute of Foundation Models and G42 has released K2 Think, a 32B-parameter open reasoning system for advanced AI reasoning. It pairs long chain-of-thought supervised fine-tuning with reinforcement learning from verifiable rewards, agentic planning, test-time scaling, and inference optimizations (speculative decoding plus wafer-scale hardware). The result is frontier-level math performance at a markedly lower parameter count, with competitive results on code and science.

System Overview

K2 Think is built by post-training an open-weight Qwen2.5-32B base model and adding a lightweight test-time compute scaffold. The design emphasizes parameter efficiency: the 32B backbone is deliberately chosen to allow fast iteration and deployment while leaving headroom for post-training gains. The core recipe combines six “pillars”: (1) long chain-of-thought (CoT) supervised fine-tuning; (2) reinforcement learning with verifiable rewards (RLVR); (3) agentic planning before solving; (4) test-time scaling via best-of-N selection with verifiers; (5) speculative decoding; and (6) inference on a wafer-scale engine.

The goals are straightforward: raise pass@1 on competition-grade math benchmarks, maintain strong code and science performance, and keep response length and wall-clock latency under control through plan-before-you-think prompting and hardware-aware inference.

Pillar 1: Long CoT SFT

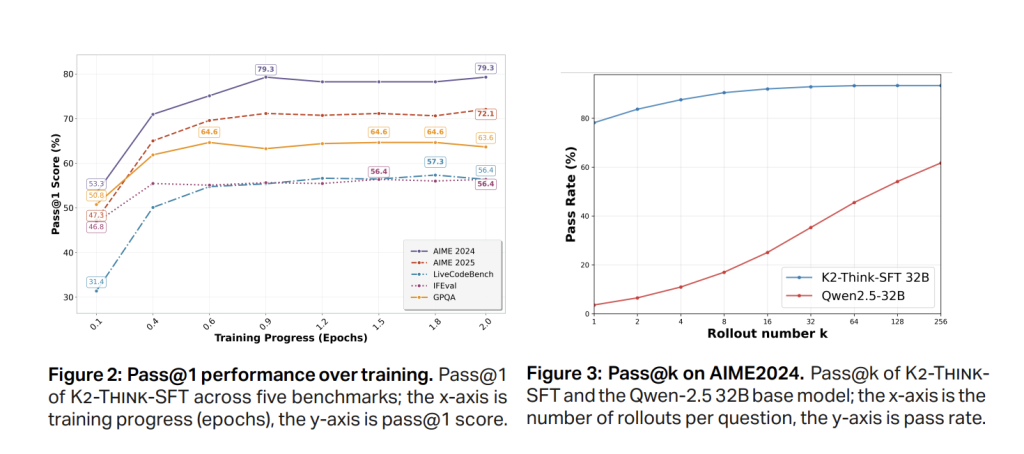

Phase 1 SFT uses curated long chain-of-thought traces and instruction/response pairs that span math, code, science, instruction following, and general chat (AM-Thinking-v1-Distilled). The effect is to teach the base model to externalize intermediate reasoning and adopt a structured output format. Rapid pass@1 gains occur early (≈0.5 epoch), with AIME’24 stabilizing at about 79% and AIME’25 at about 72% on the SFT checkpoint before RL, indicating convergence.

Pillar 2: RL with verifiable rewards

K2 Think then trains with RLVR on Guru, a ~92k-prompt, six-domain dataset (math, code, science, logic, simulation, tabular) designed for verifiable end-to-end correctness. The implementation uses the verl library with a GRPO-style policy-gradient algorithm. A notable observation: starting RL from a strong SFT checkpoint yields only modest absolute gains and can plateau or degenerate, whereas applying the same RL recipe directly to the base model yields large relative improvements (e.g., about 40% on AIME’24 over the course of training). This supports a trade-off between SFT strength and RL headroom.
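The idea behind a GRPO-style update with a verifiable reward can be sketched in a few lines. This is a minimal illustration, not the K2 Think training code and not the verl API: the exact-match verifier, the function names, and the toy samples are all assumptions. It shows the group-relative step commonly associated with GRPO, where each sampled response is scored against the other responses drawn for the same prompt.

```python
from statistics import mean, pstdev


def verifiable_reward(response: str, reference: str) -> float:
    """Hypothetical rule-based verifier: 1.0 on an exact answer match, else 0.0."""
    return 1.0 if response.strip() == reference.strip() else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Normalize each reward by the mean and std of its own sampling group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one prompt whose checkable answer is "42" (toy data).
samples = ["42", "41", "42", "7"]
rewards = [verifiable_reward(s, "42") for s in samples]
advantages = group_relative_advantages(rewards)
```

Correct samples receive a positive advantage and incorrect ones a negative advantage. If every sample in a group gets the same reward, all advantages are zero and that prompt contributes no learning signal, which is one intuition for why RL gains can flatten once a checkpoint already solves most prompts.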

A second ablation shows that multi-stage RL with a reduced initial context window (e.g., 16k → 32k) underperforms and fails to recover the SFT baseline, suggesting that cutting the maximum sequence length below the SFT regime can disrupt learned reasoning patterns.

Pillars 3-4: Agentic “Plan-Before-You-Think” and test-time scaling

At inference, the system first elicits a compact plan before generating the full solution, then performs best-of-N (e.g., N=3) sampling with verifiers to select the answer most likely to be correct. Two effects are reported: (i) consistent quality gains from the combined scaffold; and (ii) shorter final responses despite the added plan. Average token counts fall across benchmarks, with reductions of up to 11.7% (e.g., Omni-HARD), and overall lengths are comparable to those of much larger open models. This matters for both latency and cost.
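The plan-then-sample flow described above can be sketched as follows. This is a simplified illustration under stated assumptions: `generate` and `verify` are hypothetical callables standing in for the model and the verifier, the prompt wording is invented, and the verifier is reduced to a numeric score (the article does not describe how the verifiers compare candidates).

```python
from typing import Callable


def plan_then_best_of_n(
    question: str,
    generate: Callable[[str], str],   # hypothetical: prompt -> model text
    verify: Callable[[str], float],   # hypothetical: answer -> score, higher is better
    n: int = 3,
) -> str:
    """Elicit a short plan, sample n solutions conditioned on it, keep the best."""
    plan = generate(f"Outline a short plan for solving:\n{question}")
    prompt = f"{question}\n\nPlan:\n{plan}\n\nSolve step by step."
    candidates = [generate(prompt) for _ in range(n)]
    return max(candidates, key=verify)


def demo() -> str:
    # Stub model: returns a canned plan, then three canned answers.
    outputs = iter(["1. isolate x", "x = 3", "x = 4", "x = 5"])
    generate = lambda prompt: next(outputs)
    verify = lambda answer: 1.0 if answer == "x = 4" else 0.0
    return plan_then_best_of_n("Solve 2x = 8", generate, verify, n=3)
```

With N=3 the scaffold costs one plan call plus three solution calls per question, so its practicality depends on fast per-request generation.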

Table-level analysis shows that K2 Think’s response lengths are shorter than those of Qwen3-235B-A22B and in the same range as GPT-OSS-120B on math. After adding plan-before-you-think and verifiers, K2 Think’s average token count falls relative to its own post-training checkpoint (e.g., AIME’24 -6.7%, AIME’25 -3.9%, HMMT25 -7.2%, Omni-HARD -11.7%, LCBv5 -10.5%, with a reduction also reported for GPQA-D).

Pillars 5-6: Speculative decoding and wafer scale inference

K2 Think targets inference on the Cerebras Wafer-Scale Engine with speculative decoding, advertising per-request throughput of around 2,000 tokens/sec, which makes the test-time scaffold practical for production and research loops. The hardware-aware inference path is central to the release and is in keeping with the system’s “small-but-fast” philosophy.
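For readers unfamiliar with speculative decoding, the following toy sketch shows the greedy variant: a cheap draft model proposes a few tokens, and the target model keeps the longest prefix it agrees with. It is not the Cerebras implementation. The `NextToken` type, the toy models, and the choice of `k` are assumptions for illustration, and production systems typically verify all proposed positions in one batched pass and may use probabilistic acceptance instead of exact matching.

```python
from typing import Callable, List

# Assumed interface: maps a token prefix to the model's greedy next token.
NextToken = Callable[[List[int]], int]


def speculative_decode(target: NextToken, draft: NextToken,
                       prompt: List[int], max_new: int, k: int = 4) -> List[int]:
    out = list(prompt)
    limit = len(prompt) + max_new
    while len(out) < limit:
        # The draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # The target checks each proposed position in order.
        accepted: List[int] = []
        for tok in proposal:
            expected = target(out + accepted)
            if expected != tok:
                accepted.append(expected)  # first mismatch: take the target's token
                break
            accepted.append(tok)
        else:
            accepted.append(target(out + accepted))  # all k accepted: one bonus token
        out.extend(accepted)
    return out[:limit]


def greedy_decode(target: NextToken, prompt: List[int], max_new: int) -> List[int]:
    out = list(prompt)
    for _ in range(max_new):
        out.append(target(out))
    return out


# Toy models: the target counts modulo 10; the draft is wrong after token 4.
toy_target: NextToken = lambda p: (p[-1] + 1) % 10
toy_draft: NextToken = lambda p: 0 if p[-1] == 4 else (p[-1] + 1) % 10

fast = speculative_decode(toy_target, toy_draft, [0], max_new=12, k=3)
slow = greedy_decode(toy_target, [0], max_new=12)
```

In this greedy form the output is identical to plain greedy decoding with the target model. The speedup comes from accepting several tokens per target verification step whenever the draft agrees.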

Evaluation protocol

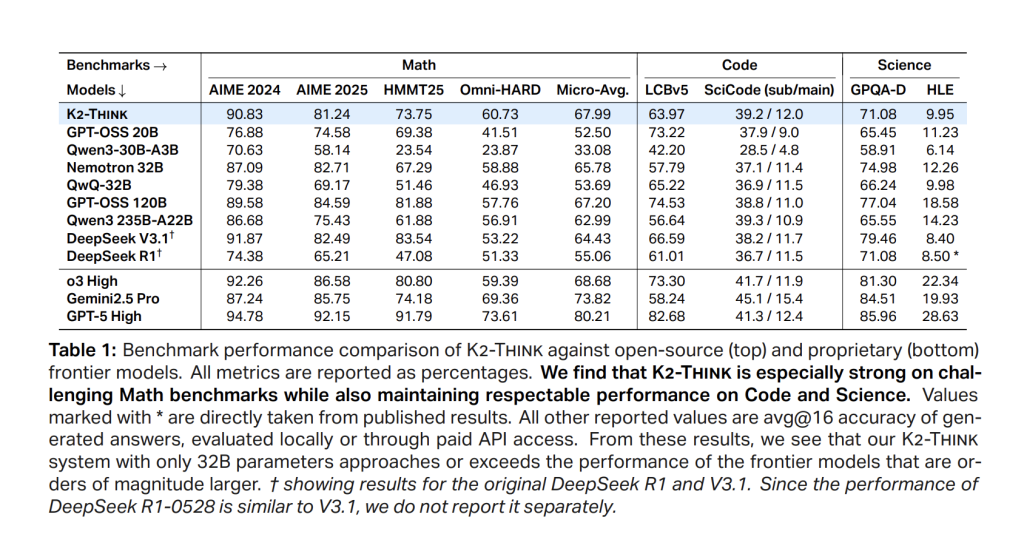

Benchmarking covers competition-level math (AIME’24, AIME’25, HMMT’25, Omni-MATH-HARD), code (LiveCodeBench v5; SciCode sub/main), and science knowledge and reasoning (GPQA-Diamond; HLE). The research team reports a standardized setup: maximum generation length of 64k tokens, temperature 1.0, top-p 0.95, stop marker `</answer>`, and each score reported as the average of 16 independent pass@1 evaluations to reduce run-to-run variance.
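The reporting convention above can be expressed as a short sketch. The dictionary keys and function names are hypothetical, and reading "64k" as 64,000 tokens is an assumption; only the numeric settings come from the article.

```python
from typing import List

# Decoding settings as reported; key names are illustrative, not a real API.
EVAL_CONFIG = {
    "max_new_tokens": 64_000,  # ASSUMPTION: "64k" read as 64,000
    "temperature": 1.0,
    "top_p": 0.95,
    "stop": "</answer>",
    "num_runs": 16,
}


def pass_at_1(correct: List[bool]) -> float:
    """Percentage of questions answered correctly in a single run."""
    return 100.0 * sum(correct) / len(correct)


def averaged_pass_at_1(runs: List[List[bool]]) -> float:
    """Mean pass@1 over independent runs (16 in the reported setup)."""
    return sum(pass_at_1(run) for run in runs) / len(runs)


# Toy example with two runs over two questions.
example = averaged_pass_at_1([[True, False], [True, True]])
```

Because sampling at temperature 1.0 is stochastic, a single run on a 30-question benchmark can move by several points, which is why averaging over independent runs gives a steadier number.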

Results

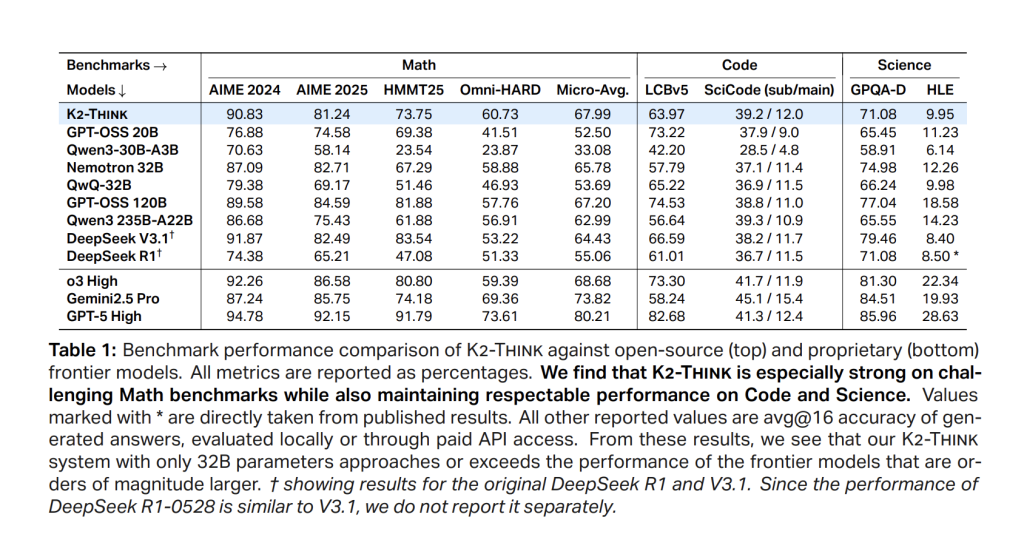

Math (micro-average across AIME’24/’25, HMMT25, Omni-HARD). K2 Think reaches 67.99, leading the open-weight cohort and comparing favorably even with much larger systems. It posts 90.83 (AIME’24), 81.24 (AIME’25), 73.75 (HMMT25), and 60.73 on Omni-HARD, the most difficult split. This positioning is consistent with strong parameter efficiency relative to DeepSeek V3.1 (671B) and GPT-OSS-120B (120B).
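A micro-average weights each benchmark by its number of problems, so the largest set dominates. The sketch below illustrates the arithmetic. The per-benchmark scores are the reported ones, but the problem counts are assumptions that the article does not state (30 each for AIME’24, AIME’25, and HMMT25, and 173 for Omni-HARD).

```python
# Reported pass@1 scores from the article.
scores = {"AIME'24": 90.83, "AIME'25": 81.24, "HMMT25": 73.75, "Omni-HARD": 60.73}

# ASSUMPTION: problem counts per benchmark; not given in the article.
counts = {"AIME'24": 30, "AIME'25": 30, "HMMT25": 30, "Omni-HARD": 173}


def micro_average(scores: dict, counts: dict) -> float:
    """Average weighted by the number of problems in each benchmark."""
    total = sum(counts.values())
    return sum(scores[name] * counts[name] for name in scores) / total


def macro_average(scores: dict) -> float:
    """Unweighted mean of the per-benchmark scores."""
    return sum(scores.values()) / len(scores)


micro = micro_average(scores, counts)
macro = macro_average(scores)
```

Under these assumed counts the micro-average comes out at about 67.99, in line with the reported figure, whereas the unweighted mean of the four scores would be about 76.6. The gap shows how heavily the headline number leans on the hardest and largest split.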

Code. The LiveCodeBench v5 score is 63.97, surpassing similarly sized peers and even larger open models (e.g., Qwen3-235B-A22B at 56.64). On SciCode, K2 Think scores 39.2/12.0 (sub/main), closely tracking the best open systems on sub-problem accuracy.

Science. GPQA-Diamond reaches 71.08; HLE is 9.95. The model is not just a math specialist: it stays competitive across knowledge-heavy tasks.

Key numbers at a glance

- Backbone: Qwen2.5-32B (open weight), post-trained with long CoT SFT + RLVR (GRPO via verl).

- RL data: Guru (~92k prompts) across math/code/science/logic/simulation/tabular.

- Inference scaffold: Plan-Before-You-Think + BoN with verifiers; shorter outputs (e.g., -11.7% tokens on Omni-HARD).

- Throughput target: ~2,000 tok/s on Cerebras WSE with speculative decoding.

- Math micro-avg: 67.99 (AIME’24 90.83, AIME’25 81.24, HMMT’25 73.75, Omni-HARD 60.73).

- Code/Science: LCBv5 63.97; SciCode 39.2/12.0; GPQA-D 71.08; HLE 9.95.

- Safety-4 macro: 0.75 (Refusal 0.83, Conv. Robustness 0.89, Cybersecurity 0.56, Jailbreak 0.72).
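As a quick consistency check on the last item, the Safety-4 macro score can be reproduced from its four components. Reading "macro" as the unweighted mean of the four sub-scores is an assumption, and the dictionary keys are illustrative labels.

```python
# Reported Safety-4 sub-scores from the list above.
safety_scores = {
    "refusal": 0.83,
    "conversational_robustness": 0.89,
    "cybersecurity": 0.56,
    "jailbreak": 0.72,
}

# ASSUMPTION: "macro" means the unweighted mean of the four sub-scores.
safety_macro = sum(safety_scores.values()) / len(safety_scores)
weakest = min(safety_scores, key=safety_scores.get)
```

Under that reading the mean rounds to the reported 0.75, and the weakest component is cybersecurity at 0.56.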

Summary

K2 Think demonstrates that integrative post-training, test-time compute, and hardware-aware inference can close much of the gap to larger, proprietary reasoning systems. At 32B, it is tractable to fine-tune and serve. With plan-before-you-think and BoN-with-verifiers, it keeps token budgets under control. With speculative decoding on wafer-scale hardware, it reaches ~2k tok/s per request. K2 Think is presented as a fully open system: weights, training data, deployment code, and test-time optimization code.

Check out the paper, the model on Hugging Face, the GitHub repository, and direct access.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform that stands out for in-depth coverage of machine learning and deep learning news that is both technically sound and easy for a wide audience to understand. The platform has over 2 million monthly views, reflecting its popularity among readers.