We’re excited to announce the general availability of fine-grained compute and memory quota allocation with HyperPod task governance. With this capability, customers can optimize Amazon SageMaker HyperPod cluster utilization on Amazon Elastic Kubernetes Service (Amazon EKS), distribute usage fairly, and support efficient resource allocation across different teams or projects. For more information, see HyperPod task governance best practices for maximizing the value of SageMaker HyperPod task governance.

Compute quota management is an administrative mechanism that sets and controls compute resource limits across users, teams, and projects. It enforces fair resource distribution and prevents a single entity from monopolizing cluster resources, which improves overall computational efficiency.

Because of budget constraints, customers might want to allocate compute resources fairly across multiple teams. For example, a data scientist might need some GPUs (for example, 4 H100 GPUs) for model development, but not the entire instance’s compute capacity. In other cases, customers have limited compute resources but many teams, and they want to share those resources fairly across teams so that no idle capacity is left unused.

With HyperPod task governance, administrators can now allocate granular GPU, vCPU, and vCPU memory to teams and projects, in addition to entire instance resources, based on their preferred strategy. Key capabilities include GPU-level quota allocation by instance type and family, or by hardware type (supporting both Trainium and NVIDIA GPUs), and optional CPU and memory allocation for fine-tuned resource control. Administrators can also define the weight (or priority level) a team is given for fair-share idle compute allocation.

“With a wide variety of frontier AI data experiments and production pipelines, being able to maximize SageMaker HyperPod cluster utilization is extremely high impact. This requires fair and controlled access to shared resources like state-of-the-art GPUs, granular hardware allocation, and more. That is exactly what HyperPod task governance is built for, and we’re excited to see AWS pushing efficient cluster utilization for a variety of AI use cases.”

– Daniel Xu, Director of Product at Snorkel AI, whose AI data technology platform empowers enterprises to build specialized AI applications by using their organizational expertise at scale.

In this post, we dive deep into how to define quotas for teams or projects based on granular or instance-level allocation. We discuss different methods to define such policies, and how data scientists can schedule their tasks seamlessly with this new capability.

Solution overview

Prerequisites

To follow the examples in this blog post, you need to meet the following prerequisites:

To schedule and run the example tasks in the Submitting tasks section, you will also need:

- A local environment (either your local machine or a cloud-based compute environment) from which to run the HyperPod CLI and kubectl commands, configured as follows:

- The HyperPod Training Operator installed in the cluster

Allocating granular compute and memory quota using the AWS console

Administrators are the primary persona interacting with SageMaker HyperPod task governance and are responsible for managing cluster compute allocation in alignment with the organization’s strategic priorities and goals.

Implementing this feature follows the familiar compute allocation creation workflow of HyperPod task governance. To get started, sign in to the AWS Management Console and navigate to Cluster Management under HyperPod Clusters in the Amazon SageMaker AI console. After selecting your HyperPod cluster, choose the Policies tab on the cluster detail page. Navigate to Compute allocations and choose Create.

As with existing functionality, you can enable task prioritization and fair-share resource allocation through cluster policies that prioritize critical workloads and distribute idle compute across teams. By using HyperPod task governance, you can define queue admission policies (first-come-first-serve, the default, or task ranking) and idle compute allocation methods (first-come-first-serve or fair-share, the default). In the Compute allocation section, you can create and edit allocations to distribute resources among teams, enable lending and borrowing of idle compute, configure preemption of low-priority tasks, and assign fair-share weights.

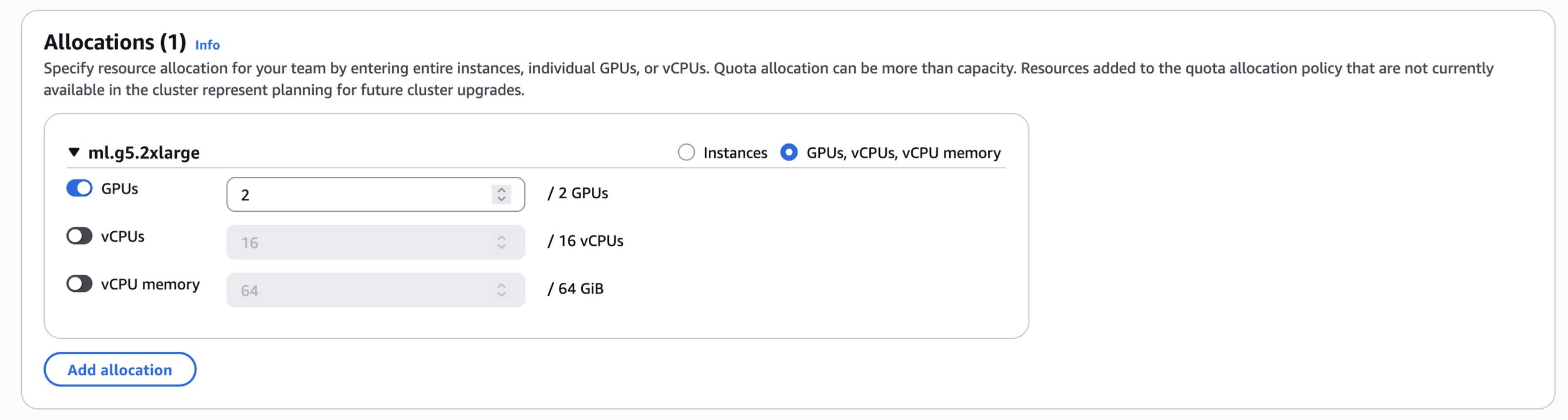

The key innovation is in the Allocations section shown in the following figure, where you’ll now find fine-grained options for resource allocation. In addition to the existing instance-level quotas, you can now directly specify GPU quotas by instance type and family or by hardware type. When you define GPU allocations, HyperPod task governance calculates appropriate default values for vCPUs and memory, set proportionally to the GPUs requested.

For example, when you allocate 2 GPUs from a single p5.48xlarge instance (which has 8 GPUs, 192 vCPUs, and 2 TiB of memory) in your HyperPod cluster, HyperPod task governance assigns 48 vCPUs and 512 GiB of memory as default values, which is equivalent to one quarter of the instance’s total resources. Similarly, if your HyperPod cluster contains 2 ml.g5.2xlarge instances (each with 1 GPU, 8 vCPUs, and 32 GiB of memory), allocating 2 GPUs automatically assigns 16 vCPUs and 64 GiB of memory from both instances, as shown in the following image.
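The two worked examples reduce to a simple ratio: the requested share of an instance type's GPUs is applied to its vCPUs and memory. The following Python sketch reproduces both results. It assumes the console uses a plain GPU-fraction ratio (the post states the results, not the formula), and `InstanceShape` and `proportional_defaults` are hypothetical names used only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceShape:
    """Hardware figures for a single instance of one type."""
    gpus: int
    vcpus: int
    memory_gib: int


def proportional_defaults(shape: InstanceShape, requested_gpus: int) -> tuple[float, float]:
    """Return (vCPUs, memory in GiB) proportional to the requested GPU share.

    Assumption: the default is the plain ratio requested_gpus / shape.gpus
    applied to one instance's vCPUs and memory. Because every instance of a
    type has the same ratio, this also covers requests spanning several
    instances of that type.
    """
    if requested_gpus <= 0:
        raise ValueError("requested_gpus must be positive")
    share = requested_gpus / shape.gpus
    return shape.vcpus * share, shape.memory_gib * share


# Figures quoted in the text above.
P5_48XLARGE = InstanceShape(gpus=8, vcpus=192, memory_gib=2048)  # 2 TiB of memory
G5_2XLARGE = InstanceShape(gpus=1, vcpus=8, memory_gib=32)

print(proportional_defaults(P5_48XLARGE, 2))  # (48.0, 512.0): one quarter of the instance
print(proportional_defaults(G5_2XLARGE, 2))   # (16.0, 64.0): two full instances
```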

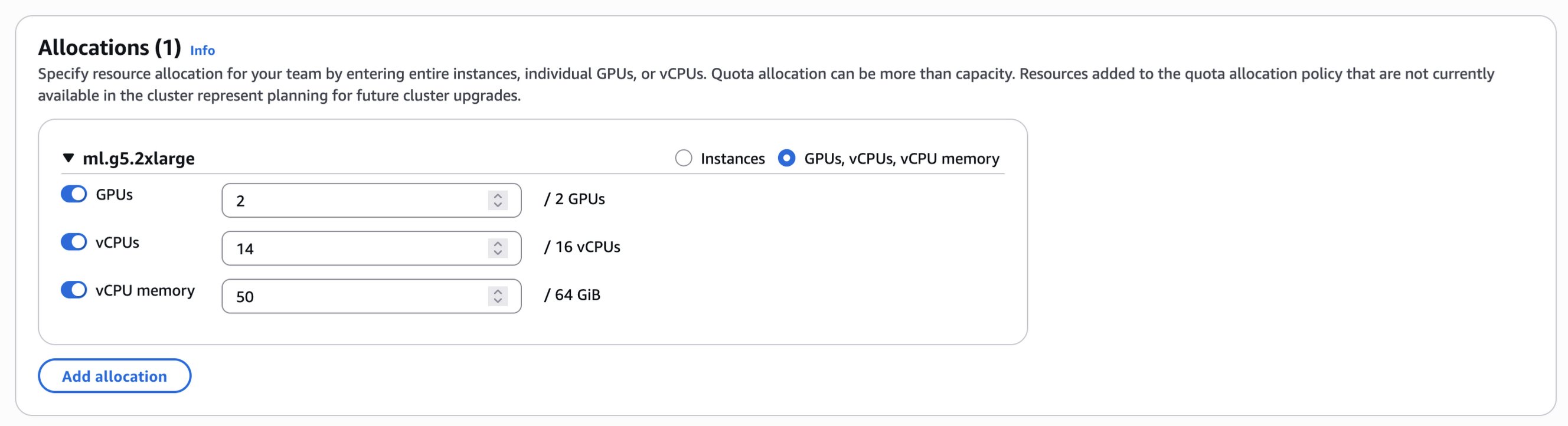

You can either proceed with these automatically calculated default values or customize the allocation by manually adjusting the vCPUs and vCPU memory fields, as seen in the following image.

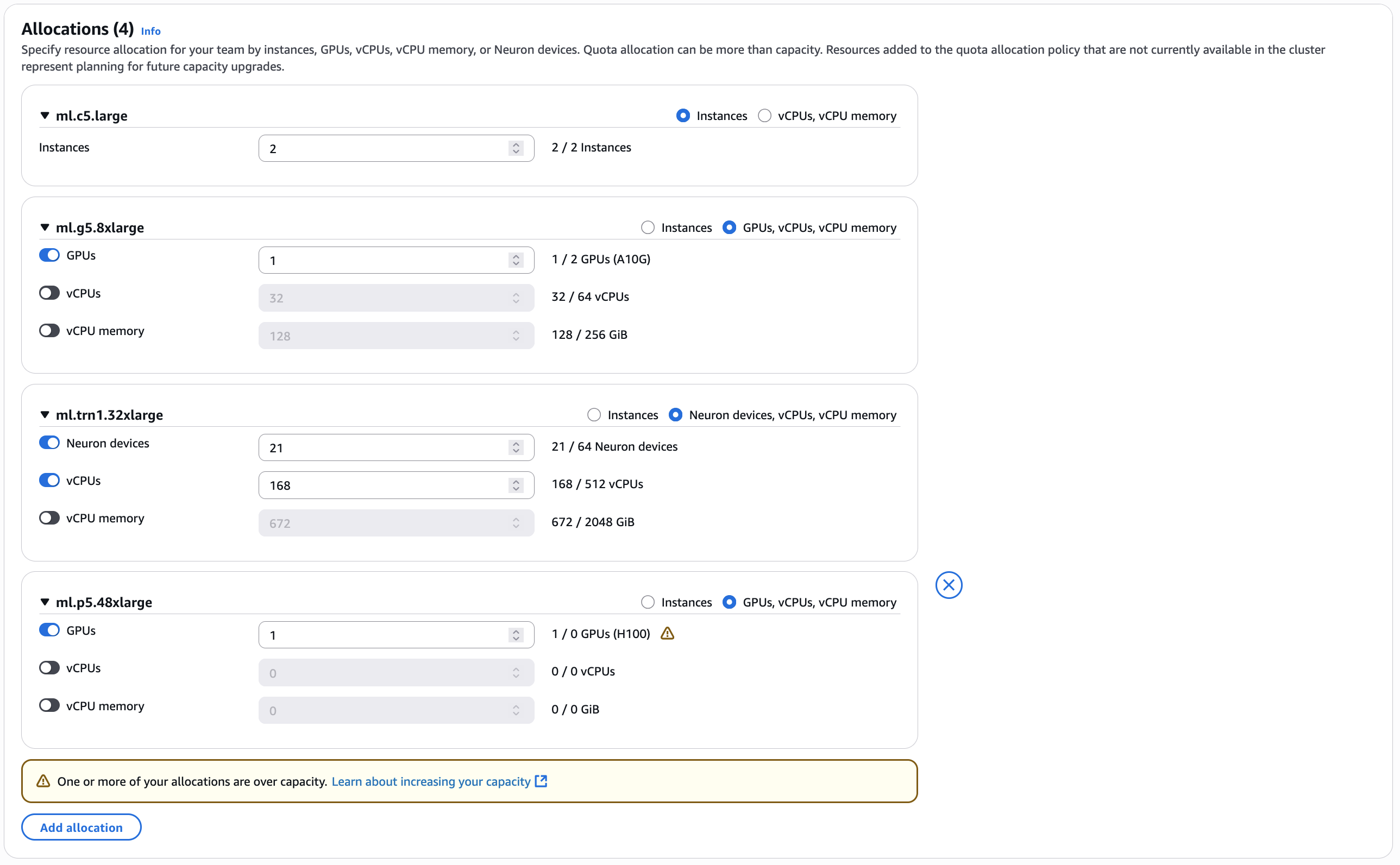

Amazon SageMaker HyperPod supports clusters that include CPU-based instances, GPU-based instances, and AWS Neuron-based hardware (AWS Inferentia and AWS Trainium chips). You can specify resource allocation for your team by instances, GPUs, vCPUs, vCPU memory, or Neuron devices, as shown in the following image.

Quota allocation can exceed capacity. Resources added to the compute allocation policy that aren’t currently available in the cluster represent planning for future capacity upgrades. Jobs that require these unprovisioned resources are automatically queued and remain in a pending state until the necessary resources become available. It’s important to understand that in SageMaker HyperPod, compute allocations function as quotas: they are checked during workload scheduling to decide whether a workload should be admitted, regardless of actual capacity availability. When resource requests fall within these allocation limits and current utilization, the Kubernetes scheduler (kube-scheduler) handles the actual distribution and placement of pods across the HyperPod cluster nodes.
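To make the distinction between quota and capacity concrete, here is a deliberately simplified Python model of quota-only admission. It is not Kueue's algorithm: borrowing, priorities, and preemption are ignored, and `TeamQuota` and `admit` are hypothetical names. The point it illustrates is that admission compares the request with the remaining quota only, so a workload can be admitted while its pods still wait for physical capacity.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TeamQuota:
    """A team's quota and current usage, keyed by Kubernetes resource name."""
    nominal: dict[str, float]
    used: dict[str, float] = field(default_factory=dict)


def admit(quota: TeamQuota, request: dict[str, float]) -> bool:
    """Quota-only admission check (simplified; ignores borrowing and priority).

    Physical capacity is deliberately not consulted: a request that fits the
    remaining quota is admitted even if no node can host it yet. Its pods then
    stay Pending until kube-scheduler finds a node with room.
    """
    for resource, amount in request.items():
        remaining = quota.nominal.get(resource, 0.0) - quota.used.get(resource, 0.0)
        if amount > remaining:
            return False
    return True


# A 16-GPU quota on a cluster that (hypothetically) has only 8 GPUs provisioned.
team = TeamQuota(nominal={"nvidia.com/gpu": 16, "cpu": 384}, used={"nvidia.com/gpu": 4})
print(admit(team, {"nvidia.com/gpu": 8, "cpu": 96}))  # True: 8 <= 16 - 4, capacity not checked
print(admit(team, {"nvidia.com/gpu": 16}))            # False: exceeds the remaining 12 GPUs
```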

Allocating granular compute and memory quota using the AWS CLI

You can also create or update compute quotas using the AWS CLI. The following is an example of creating a compute quota that specifies only a GPU count using the AWS CLI:
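The same GPU-only request can be expressed programmatically. The Python sketch below builds the request parameters as a dictionary. The field names (`ComputeQuotaConfig`, `ComputeQuotaResources`, `Accelerators`, `ComputeQuotaTarget`, and so on) reflect our understanding of the SageMaker CreateComputeQuota API and should be checked against the current API reference. The ARN is a placeholder, and the `<team>-gpu-quota` naming convention is hypothetical.

```python
import json


def gpu_only_quota_request(cluster_arn: str, team: str, instance_type: str, gpus: int) -> dict:
    """Build CreateComputeQuota request parameters that set only a GPU count.

    Field names follow our reading of the SageMaker CreateComputeQuota API;
    check them against the current API reference before relying on them.
    """
    if gpus <= 0:
        raise ValueError("gpus must be positive")
    return {
        "Name": f"{team}-gpu-quota",  # hypothetical naming convention
        "ClusterArn": cluster_arn,
        "ComputeQuotaConfig": {
            "ComputeQuotaResources": [
                # Only the accelerator count is given: no Count, VCpu or MemoryInGiB.
                {"InstanceType": instance_type, "Accelerators": gpus},
            ],
        },
        "ComputeQuotaTarget": {"TeamName": team},
        "ActivationState": "Enabled",
    }


request = gpu_only_quota_request(
    cluster_arn="arn:aws:sagemaker:<region>:<account-id>:cluster/<cluster-id>",  # placeholder
    team="onlygputeam",
    instance_type="ml.g6.12xlarge",
    gpus=2,
)
print(json.dumps(request, indent=2))
```

After you replace the placeholder ARN, a boto3 release that includes the CreateComputeQuota operation should accept this dictionary as `boto3.client("sagemaker").create_compute_quota(**request)`. Depending on the API version, additional fields such as `ResourceSharingConfig` or `FairShareWeight` might also be required.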

Compute quotas can also be created with mixed quota types, combining a number of whole instances with granular compute resources, as shown in the following example:
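As a sketch of what a mixed quota might contain, the following Python snippet combines one whole-instance entry with one fractional entry and prints the JSON that could be supplied as the `--compute-quota-config` argument of `aws sagemaker create-compute-quota`. The field names follow our reading of the CreateComputeQuota API. The specific instance types and amounts are illustrative choices, not values from this post, and the helper functions are hypothetical.

```python
import json


def instance_entry(instance_type: str, count: int) -> dict:
    """Whole-instance quota: reserve `count` full instances of a type."""
    return {"InstanceType": instance_type, "Count": count}


def granular_entry(instance_type: str, gpus: int, vcpus: float, memory_gib: float) -> dict:
    """Fractional quota: a slice of an instance type's GPUs, vCPUs and memory."""
    return {
        "InstanceType": instance_type,
        "Accelerators": gpus,
        "VCpu": vcpus,
        "MemoryInGiB": memory_gib,
    }


# Illustrative mix: one full ml.p5.48xlarge plus half of an ml.g6.12xlarge.
compute_quota_config = {
    "ComputeQuotaResources": [
        instance_entry("ml.p5.48xlarge", 1),
        granular_entry("ml.g6.12xlarge", gpus=2, vcpus=24, memory_gib=96),
    ],
}

print(json.dumps(compute_quota_config, indent=2))
```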

HyperPod task governance deep dive

SageMaker HyperPod task governance enables allocation of GPU, CPU, and memory resources by integrating with Kueue, a Kubernetes-native system for job queueing.

Kueue doesn’t replace existing Kubernetes scheduling components. Instead, it works alongside the kube-scheduler: Kueue decides whether a workload should be admitted based on resource quotas and current utilization, and the kube-scheduler then takes care of placing pods on nodes.

When a workload requests specific resources, Kueue selects an appropriate resource flavor based on availability, node affinity, and task priority. Kueue then injects the corresponding node labels and tolerations into the PodSpec, allowing Kubernetes to place the pod on nodes with the requested hardware configuration. This supports precise resource governance and efficient allocation in multi-tenant clusters.
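The flavor-then-inject flow can be sketched in a few lines of Python. This is a toy model, not Kueue's implementation: it does first-fit over flavors in order and ignores node affinity and priority. `Flavor`, `pick_flavor`, and `inject` are hypothetical names, and the `node.kubernetes.io/instance-type` label and GPU toleration are assumed examples of what a flavor might carry.

```python
from __future__ import annotations

import copy
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Flavor:
    """Simplified stand-in for a Kueue ResourceFlavor plus its remaining quota."""
    name: str
    node_labels: dict[str, str]
    free: dict[str, float]
    tolerations: list[dict] = field(default_factory=list)


def pick_flavor(flavors: list[Flavor], request: dict[str, float]) -> Optional[Flavor]:
    """First-fit over flavors in order; ignores node affinity and priority."""
    for flavor in flavors:
        if all(flavor.free.get(res, 0.0) >= amount for res, amount in request.items()):
            return flavor
    return None


def inject(pod_spec: dict, flavor: Flavor) -> dict:
    """Return a copy of pod_spec with the flavor's node labels and tolerations added."""
    spec = copy.deepcopy(pod_spec)
    spec.setdefault("nodeSelector", {}).update(flavor.node_labels)
    spec.setdefault("tolerations", []).extend(copy.deepcopy(flavor.tolerations))
    return spec


g6 = Flavor(
    name="ml.g6.12xlarge",
    node_labels={"node.kubernetes.io/instance-type": "ml.g6.12xlarge"},  # assumed node label
    free={"nvidia.com/gpu": 2, "cpu": 48},
    tolerations=[{"key": "nvidia.com/gpu", "operator": "Exists", "effect": "NoSchedule"}],
)
pod = {"containers": [{"name": "train", "image": "example"}]}
chosen = pick_flavor([g6], {"nvidia.com/gpu": 1, "cpu": 8})
print(inject(pod, chosen)["nodeSelector"])
```

The original PodSpec is copied rather than mutated, which mirrors the idea that the injected constraints belong to the admitted workload, not to the user's template.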

When a SageMaker HyperPod task governance compute allocation is created, Kueue creates ClusterQueues that define resource quotas and scheduling policies, along with ResourceFlavors for the selected instance types that capture their specific resource characteristics.

For example, the following compute allocation policy allocates ml.g6.12xlarge instances with 2 GPUs and 48 vCPUs to the onlygputeam team, implementing a LendAndBorrow strategy with a borrowing limit of up to 50%. This configuration enables flexible resource sharing while maintaining priority through a fair-share weight of 10 and the ability to preempt lower-priority tasks from other teams.
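The policy described above can be written out as a request dictionary. The following Python sketch maps each setting in the text to a field. The field names and enum values (`LendAndBorrow`, `LowerPriority`) reflect our understanding of the SageMaker CreateComputeQuota API and should be verified before use. The quota name and ARN are placeholders.

```python
import json

# Hypothetical request mirroring the onlygputeam policy described above.
# Field names follow our reading of the SageMaker CreateComputeQuota API;
# the ARN is a placeholder.
onlygputeam_quota = {
    "Name": "only-gpu-quota",
    "ClusterArn": "arn:aws:sagemaker:<region>:<account-id>:cluster/<cluster-id>",
    "ComputeQuotaConfig": {
        "ComputeQuotaResources": [
            # 2 GPUs and 48 vCPUs of ml.g6.12xlarge, not whole instances.
            {"InstanceType": "ml.g6.12xlarge", "Accelerators": 2, "VCpu": 48},
        ],
        # Lend idle quota and borrow idle compute, with a 50 percent borrow limit.
        "ResourceSharingConfig": {"Strategy": "LendAndBorrow", "BorrowLimit": 50},
        # Allow preemption of lower-priority tasks.
        "PreemptTeamTasks": "LowerPriority",
    },
    # Fair-share weight used when idle compute is distributed.
    "ComputeQuotaTarget": {"TeamName": "onlygputeam", "FairShareWeight": 10},
    "ActivationState": "Enabled",
}

print(json.dumps(onlygputeam_quota["ComputeQuotaConfig"], indent=2))
```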

The corresponding Kueue ClusterQueue is configured with the ml.g6.12xlarge flavor, providing quotas for 2 NVIDIA GPUs, 48 CPU cores, and 192 Gi of memory.
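To see how those numbers land in Kueue's data model, the following Python sketch builds a `kueue.x-k8s.io/v1beta1` ClusterQueue with one flavor and nominal quotas for GPU, CPU, and memory. It is meant for reading alongside `kubectl get clusterqueue -o yaml`, not for applying, because HyperPod creates and manages these objects itself. The object name, the namespace selector, and the `hyperpod-ns-<team>` naming pattern are assumptions for illustration.

```python
import json


def cluster_queue(team: str, flavor: str, gpus: int, cpus: int, memory: str) -> dict:
    """Kueue ClusterQueue with one flavor and nominal quotas for GPU, CPU and memory.

    Object names are hypothetical; HyperPod generates its own.
    """
    namespace = f"hyperpod-ns-{team}"
    covered = ["nvidia.com/gpu", "cpu", "memory"]
    quotas = {"nvidia.com/gpu": str(gpus), "cpu": str(cpus), "memory": memory}
    return {
        "apiVersion": "kueue.x-k8s.io/v1beta1",
        "kind": "ClusterQueue",
        "metadata": {"name": f"{namespace}-clusterqueue"},
        "spec": {
            # Only workloads from the team's namespace may use this queue.
            "namespaceSelector": {
                "matchLabels": {"kubernetes.io/metadata.name": namespace}
            },
            "resourceGroups": [{
                "coveredResources": covered,
                # Resources inside a flavor are listed in coveredResources order.
                "flavors": [{
                    "name": flavor,
                    "resources": [{"name": r, "nominalQuota": quotas[r]} for r in covered],
                }],
            }],
        },
    }


cq = cluster_queue("onlygputeam", "ml.g6.12xlarge", gpus=2, cpus=48, memory="192Gi")
print(json.dumps(cq, indent=2))
```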

A Kueue LocalQueue is also created and references the corresponding ClusterQueue. The LocalQueue is the namespace-scoped resource through which users submit workloads; these workloads are then admitted and scheduled according to the quotas and policies defined in the ClusterQueue.
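The LocalQueue itself is small. The Python sketch below shows its shape and the `kueue.x-k8s.io/queue-name` label a workload carries to target it. The `-localqueue` and `-clusterqueue` name suffixes are assumptions for illustration; check the actual names in your cluster with `kubectl get localqueue -n hyperpod-ns-<team-name>`.

```python
import json


def local_queue(team: str) -> dict:
    """Namespace-scoped Kueue LocalQueue pointing at the team's ClusterQueue.

    The naming pattern is an assumption for illustration.
    """
    namespace = f"hyperpod-ns-{team}"
    return {
        "apiVersion": "kueue.x-k8s.io/v1beta1",
        "kind": "LocalQueue",
        "metadata": {"name": f"{namespace}-localqueue", "namespace": namespace},
        "spec": {"clusterQueue": f"{namespace}-clusterqueue"},
    }


def queue_label(lq: dict) -> dict:
    """Label a workload carries so Kueue routes it to this LocalQueue."""
    return {"kueue.x-k8s.io/queue-name": lq["metadata"]["name"]}


lq = local_queue("onlygputeam")
print(json.dumps(lq, indent=2))
print(queue_label(lq))
```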

Submitting tasks

There are two ways to submit tasks on Amazon EKS orchestrated SageMaker HyperPod clusters: the SageMaker HyperPod CLI and the Kubernetes command-line tool, kubectl. With both options, data scientists need to reference their team’s namespace and task priority class, in addition to the requested GPU and vCPU compute and memory resources, to use their granular allocated quota with appropriate prioritization. If the user doesn’t specify a priority class, SageMaker HyperPod task governance automatically assumes the lowest priority. The specific GPU type comes from the instance type selection, because data scientists want to use GPUs with certain capabilities (for example, H100 instead of H200) to perform their tasks efficiently.

HyperPod CLI

The HyperPod CLI was created to abstract the complexities of working with kubectl so that developers using SageMaker HyperPod can iterate faster with custom commands. The following is an example of a task submission with the HyperPod CLI requesting both compute and memory resources:

The highlighted parameters enable requesting granular compute and memory resources. The HyperPod CLI requires installing the HyperPod Training Operator in the cluster and then building a container image that includes the HyperPod Elastic Agent. For further instructions on how to build such a container image, refer to the HyperPod Training Operator documentation.

For more information on the supported HyperPod CLI arguments and their descriptions, see the SageMaker HyperPod CLI reference documentation.

Kubectl

The following is an example of a kubectl command that submits a task to the HyperPod cluster using the specified queue. It is a simple PyTorch job that checks for GPU availability and then sleeps for 5 minutes. Compute and memory resources are requested using the standard Kubernetes resource management constructs.
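As a sketch of such a task, the following Python function generates a `batch/v1` Job manifest as JSON, which `kubectl apply -f -` accepts on standard input. It uses the standard Kueue labels `kueue.x-k8s.io/queue-name` and `kueue.x-k8s.io/priority-class`, plus ordinary Kubernetes `requests` and `limits`. The LocalQueue name suffix, the priority class name, the job name, and the `pytorch/pytorch:latest` image are assumptions for illustration; use the queue and priority classes your administrator configured.

```python
import json


def pytorch_gpu_check_job(team: str, priority_class: str, gpus: int, vcpus: int,
                          memory: str, image: str = "pytorch/pytorch:latest") -> dict:
    """Job that prints GPU availability, then sleeps 300 s, under a team's quota."""
    namespace = f"hyperpod-ns-{team}"
    script = (
        "import time, torch; "
        "print('CUDA available:', torch.cuda.is_available(), "
        "'devices:', torch.cuda.device_count()); "
        "time.sleep(300)"
    )
    # GPU requests must equal limits in Kubernetes; CPU and memory reuse the same values.
    resources = {"nvidia.com/gpu": str(gpus), "cpu": str(vcpus), "memory": memory}
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {
            "name": "gpu-check",  # hypothetical job name
            "namespace": namespace,
            "labels": {
                "kueue.x-k8s.io/queue-name": f"{namespace}-localqueue",  # assumed name
                "kueue.x-k8s.io/priority-class": priority_class,
            },
        },
        "spec": {
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [{
                        "name": "pytorch",
                        "image": image,
                        "command": ["python", "-c", script],
                        "resources": {"requests": dict(resources), "limits": dict(resources)},
                    }],
                }
            }
        },
    }


job = pytorch_gpu_check_job("onlygputeam", "training-priority", gpus=1, vcpus=8, memory="32Gi")
print(json.dumps(job, indent=2))
```

Saved as a script, its output could be piped straight to the cluster with `python make_job.py | kubectl apply -f -`, where `make_job.py` is a hypothetical file name.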

The following is a short reference guide to useful commands when interacting with SageMaker HyperPod task governance:

- Describing cluster policy with the AWS CLI – This AWS CLI command is useful for viewing the cluster policy settings for your cluster.

- List compute quota allocations with the AWS CLI – Use this AWS CLI command to view the different teams set up in task governance and their respective quota allocation settings.

- HyperPod CLI – The HyperPod CLI abstracts common kubectl commands used to interact with SageMaker HyperPod clusters, such as submitting, listing, and cancelling tasks. See the SageMaker HyperPod CLI reference documentation for a full list of commands.

- kubectl – You can also use kubectl to interact with task governance; some useful commands are:

  - `kubectl get workloads -n hyperpod-ns-<team-name>`
  - `kubectl describe workload <workload-name> -n hyperpod-ns-<team-name>`

  These commands show the workloads running in your cluster per namespace and provide detailed reasoning on Kueue admission. You can use them to answer questions such as “Why was my task preempted?” or “Why was my task admitted?”
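For a quick overview across many workloads, the JSON form of the first command (`kubectl get workloads -n hyperpod-ns-<team-name> -o json`) can be summarized with a short script. The following Python sketch reads the standard Kueue Workload status conditions (`Admitted`, `Evicted`, `QuotaReserved`) and reports the recorded reason and message. The sample input is hand-written to exercise the function and is not captured Kueue output.

```python
import json


def _is_true(conditions: dict, kind: str) -> bool:
    """True if the named condition exists and has status "True"."""
    return conditions.get(kind, {}).get("status") == "True"


def explain_workloads(workload_list_json: str) -> list[str]:
    """Summarize admission state from `kubectl get workloads -o json` output."""
    summaries = []
    for wl in json.loads(workload_list_json).get("items", []):
        name = wl["metadata"]["name"]
        conditions = {c["type"]: c for c in wl.get("status", {}).get("conditions", [])}
        if _is_true(conditions, "Evicted"):
            c = conditions["Evicted"]
            summaries.append(f"{name}: evicted ({c.get('reason')}): {c.get('message', '')}")
        elif _is_true(conditions, "Admitted"):
            summaries.append(f"{name}: admitted")
        else:
            c = conditions.get("QuotaReserved", {})
            summaries.append(f"{name}: pending: {c.get('message', 'no quota reserved yet')}")
    return summaries


# Hand-written sample data, not real Kueue output.
sample = json.dumps({"items": [
    {"metadata": {"name": "job-a"},
     "status": {"conditions": [{"type": "Admitted", "status": "True"}]}},
    {"metadata": {"name": "job-b"},
     "status": {"conditions": [{"type": "Evicted", "status": "True",
                                "reason": "Preempted", "message": "sample eviction message"}]}},
    {"metadata": {"name": "job-c"},
     "status": {"conditions": [{"type": "QuotaReserved", "status": "False",
                                "message": "sample pending message"}]}},
]})
for line in explain_workloads(sample):
    print(line)
```

In practice you would pass the captured output of the kubectl command, for example by reading it from standard input, instead of the `sample` string.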

Common scenarios

A common use case for more granular allocation of GPU compute is fine-tuning small and medium-sized large language models (LLMs). A single H100 or H200 GPU might be sufficient for such a use case (depending also on the chosen batch size and other factors), and machine learning (ML) platform administrators can choose to allocate a single GPU to each data scientist or ML researcher to optimize the utilization of an instance like ml.p5.48xlarge, which comes with 8 H100 GPUs onboard.

Small language models (SLMs) have emerged as a significant advancement in generative AI, offering lower latency, reduced deployment costs, and enhanced privacy while maintaining strong performance on targeted tasks, which makes them increasingly important for agentic workflows and edge computing scenarios. SageMaker HyperPod task governance with fine-grained GPU, CPU, and memory allocation enhances SLM development by matching resources precisely to model requirements, so teams can efficiently run multiple experiments with different architectures concurrently. This resource optimization is particularly valuable as organizations develop specialized SLMs for domain-specific applications, with priority-based scheduling making sure that critical model training jobs receive resources first while overall cluster utilization stays high. By providing the right resources at the right time, HyperPod accelerates the development of specialized, domain-specific SLMs that can be deployed as efficient agents in complex workflows, enabling more responsive and cost-effective AI solutions across industries.

With the growing popularity of SLMs, organizations can use granular quota allocation to create targeted quota policies that prioritize GPU resources, addressing the budget-sensitive nature of ML infrastructure, where GPUs represent the most significant cost and performance factor. Organizations can now selectively apply CPU and memory limits where needed, creating a granular resource management approach that efficiently supports diverse ML workloads regardless of model size.

Similarly, for inference workloads, multiple teams might not need an entire instance to deploy their models. Granular allocation avoids assigning a whole multi-GPU instance to each team and leaving GPU compute sitting idle.

Finally, during experimentation and algorithm development, data scientists and ML researchers can choose to deploy a container hosting their preferred IDE on HyperPod, such as JupyterLab or Code-OSS (Visual Studio Code open source). In this scenario, they often experiment with smaller batch sizes before scaling to multi-GPU configurations, so they don’t need entire multi-GPU instances allocated. Similar considerations apply to CPU instances. For example, an ML platform administrator might decide to use CPU instances for IDE deployment because data scientists prefer to scale their training or fine-tuning with jobs rather than experimenting with the local IDE compute. In such cases, depending on the instances chosen, partitioning CPU cores across the team might be beneficial.

Conclusion

The introduction of fine-grained compute quota allocation in SageMaker HyperPod represents a significant advancement in ML infrastructure management. By enabling GPU-level resource allocation alongside instance-level controls, organizations can now precisely tailor their compute resources to their specific workloads and team structures.

This granular approach to resource governance addresses critical challenges that ML teams face today: balancing budget constraints, maximizing utilization of expensive GPUs, and ensuring fair access across data science teams of all sizes. Whether fine-tuning SLMs that require single GPUs, running inference workloads with varied resource needs, or supporting development environments that don’t require a full instance, this flexible capability helps make sure that compute resources don’t sit idle unnecessarily.

ML workloads continue to diversify in their resource requirements, and SageMaker HyperPod task governance now provides the adaptability organizations need to optimize their GPU capacity investments. To learn more, visit the SageMaker HyperPod product page and the HyperPod task governance documentation.

Give this a try in the Amazon SageMaker AI console and leave your comments here.

About the authors

Siamak Nariman is a Senior Product Manager at AWS. He is focused on AI/ML technology, ML model management, and ML governance to improve overall organizational efficiency and productivity. He has extensive experience automating processes and deploying various technologies.

Zhenshan Jin is a Senior Software Engineer at Amazon Web Services (AWS), where he leads software development for task governance on SageMaker HyperPod. In his role, he focuses on empowering customers with advanced AI capabilities while fostering an environment that maximizes engineering team efficiency and productivity.

Giuseppe Angelo Porcelli is a Principal Machine Learning Specialist Solutions Architect for Amazon Web Services. With several years of software engineering and an ML background, he works with customers of any size to understand their business and technical needs and design AI and ML solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. He has worked on projects in different domains, including MLOps, computer vision, and NLP, involving a broad set of AWS services. In his free time, Giuseppe enjoys playing football.

Sindhura Palakodety is a Solutions Architect at AWS. She is passionate about helping customers build enterprise-scale Well-Architected solutions on the AWS platform and specializes in the data analytics domain.