Organizations are increasingly excited about the potential of AI agents, but many find themselves stuck in what we call “proof of concept purgatory,” where promising agent prototypes struggle to make the leap to production deployment. In our conversations with customers, we’ve heard consistent challenges that block the path from experimentation to enterprise-grade deployment:

“Our developers want to use different frameworks and models for different use cases; forcing standardization slows innovation.”

“The stochastic nature of agents makes security more complex than traditional applications; we need stronger isolation between user sessions.”

“We struggle with identity and access control for agents that need to act on behalf of users or access sensitive systems.”

“Our agents need to handle various input types (text, images, documents), often with large payloads that exceed typical serverless compute limits.”

“We can’t predict the compute resources each agent will need, and costs can spiral when overprovisioning for peak demand.”

“Managing infrastructure for agents that may be a mix of short and long-running requires specialized expertise that diverts our focus from building actual agent functionality.”

Amazon Bedrock AgentCore Runtime addresses these challenges with a secure, serverless hosting environment specifically designed for AI agents and tools. Whereas traditional application hosting systems weren’t built for the unique characteristics of agent workloads (variable execution times, stateful interactions, and complex security requirements), AgentCore Runtime was purpose-built for these needs.

The service alleviates the infrastructure complexity that has kept promising agent prototypes from reaching production. It handles the undifferentiated heavy lifting of container orchestration, session management, scalability, and security isolation, helping developers focus on creating intelligent experiences rather than managing infrastructure. In this post, we discuss how to accomplish the following:

- Use different agent frameworks and different models

- Deploy, scale, and stream agent responses in four lines of code

- Secure agent execution with session isolation and embedded identity

- Use state persistence for stateful agents along with Amazon Bedrock AgentCore Memory

- Process different modalities with large payloads

- Operate asynchronous multi-hour agents

- Pay only for used resources

Use different agent frameworks and models

One advantage of AgentCore Runtime is its framework-agnostic and model-agnostic approach to agent deployment. Whether your team has invested in LangGraph for complex reasoning workflows, adopted CrewAI for multi-agent collaboration, or built custom agents using Strands, AgentCore Runtime can use your existing code base without requiring architectural changes or framework migrations. Refer to these samples on GitHub for examples.

With AgentCore Runtime, you can integrate different large language models (LLMs) from your preferred provider, such as Amazon Bedrock managed models, Anthropic’s Claude, OpenAI’s API, or Google’s Gemini. This keeps your agent implementations portable and adaptable as the LLM landscape continues to evolve, while helping you pick the right model for your use case to optimize for performance, cost, or other business requirements. You and your team get the flexibility to choose the framework or model that suits you best, using a unified deployment pattern.

Let’s examine how AgentCore Runtime supports two different frameworks and model providers:

| LangGraph agent using Anthropic’s Claude Sonnet on Amazon Bedrock | Strands agent using GPT 4o mini through the OpenAI API |

For the full code examples, refer to langgraph_agent_web_search.py and strands_openai_identity.py on GitHub.

Both examples above show how you can use the AgentCore SDK, regardless of the underlying framework or model choice. After you have modified your code as shown in these examples, you can deploy your agent with or without the AgentCore Runtime starter toolkit, discussed in the next section.

Note that there are minimal additions, specific to the AgentCore SDK, to the example code above. Let us dive deeper into this in the next section.

Deploy, scale, and stream agent responses with four lines of code

Let’s examine the two examples above. In both examples, we only add four new lines of code:

- Import: from bedrock_agentcore.runtime import BedrockAgentCoreApp

- Initialize: app = BedrockAgentCoreApp()

- Decorate: @app.entrypoint

- Run: app.run()

After you have made these changes, the most straightforward way to get started with AgentCore is to use the AgentCore starter toolkit. We suggest using uv to create and manage local development environments and package requirements in Python. To get started, install the starter toolkit as follows:

Run the appropriate commands to configure, launch, and invoke to deploy and use your agent. The following video provides a quick walkthrough.

For your chat-style applications, AgentCore Runtime supports streaming out of the box. For example, in Strands, locate the following synchronous code:

Change the preceding code to the following and deploy:
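As a hedged illustration of this change, the sketch below contrasts a synchronous handler that returns one complete result with an asynchronous generator that yields chunks as they arrive. StandInAgent and its stream_async method are hypothetical stand-ins defined here so the example runs without any SDK installed; in a real deployment the handler would be registered with @app.entrypoint and would call your framework’s own streaming method.

```python
import asyncio


class StandInAgent:
    """Hypothetical stand-in for a framework agent (not a real SDK class)."""

    def __call__(self, prompt: str) -> str:
        # Synchronous path: the caller waits for the whole answer.
        return f"echo: {prompt}"

    async def stream_async(self, prompt: str):
        # Streaming path: emit the answer one word at a time.
        for word in f"echo: {prompt}".split():
            await asyncio.sleep(0)  # yield control, as real I/O would
            yield word


agent = StandInAgent()


def invoke_sync(payload: dict) -> dict:
    """Before: block until the full response is ready, then return it."""
    return {"result": agent(payload["prompt"])}


async def invoke_streaming(payload: dict):
    """After: an async generator that forwards each chunk as it arrives."""
    async for chunk in agent.stream_async(payload["prompt"]):
        yield chunk


async def collect(payload: dict) -> list:
    # Helper for local testing: gather the streamed chunks into a list.
    return [chunk async for chunk in invoke_streaming(payload)]


if __name__ == "__main__":
    print(invoke_sync({"prompt": "hello world"}))
    print(asyncio.run(collect({"prompt": "hello world"})))
```

The only structural difference is that the handler becomes an async generator and yields instead of returning, which is what lets the caller start rendering output before the agent has finished.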

For more examples on streaming agents, refer to the following GitHub repo. The following is an example Streamlit application streaming back responses from an AgentCore Runtime agent.

Secure agent execution with session isolation and embedded identity

AgentCore Runtime fundamentally changes how we think about serverless compute for agentic applications by introducing persistent execution environments that can maintain an agent’s state across multiple invocations. Rather than the typical serverless model where functions spin up, execute, and immediately terminate, AgentCore Runtime provisions dedicated microVMs that can persist for up to 8 hours. This enables sophisticated multi-step agentic workflows where each subsequent call builds upon the accumulated context and state from previous interactions within the same session. The practical implication is that you can now implement complex, stateful logic patterns that would previously require external state management solutions or cumbersome workarounds to maintain context between function executions. This doesn’t obviate the need for external state management (see the following section on using AgentCore Runtime with AgentCore Memory), but it does meet a common need: maintaining local state and files temporarily, within a session context.

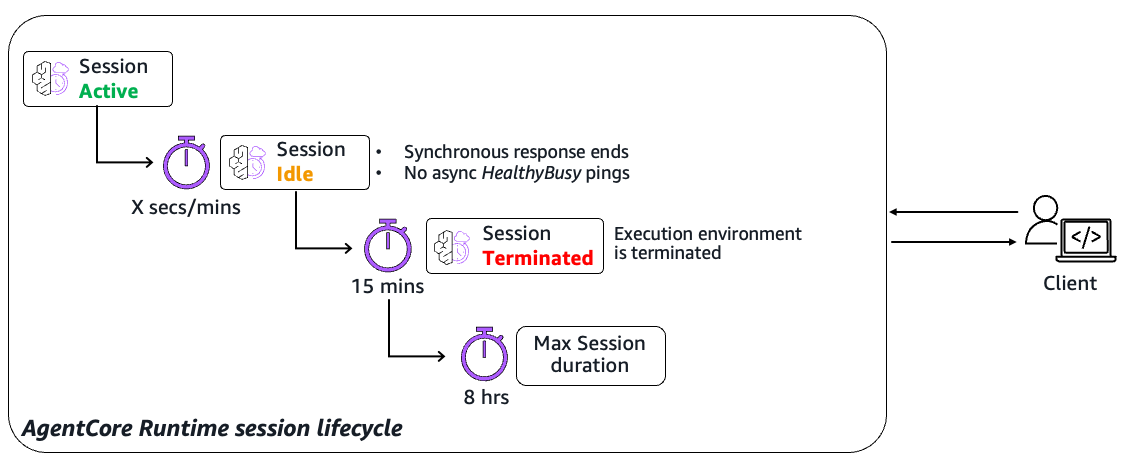

Understanding the session lifecycle

The session lifecycle operates through three distinct states that govern resource allocation and availability (see the diagram for a high-level view of this session lifecycle). When you first invoke a runtime with a unique session identifier, AgentCore provisions a dedicated execution environment and transitions it to an Active state during request processing or when background tasks are running.

The system automatically tracks synchronous invocation activity, while background processes can signal their status through HealthyBusy responses to health check pings from the service (see the later section on asynchronous workloads). Sessions transition to Idle when not processing requests but remain provisioned and ready for immediate use, reducing cold start penalties for subsequent invocations.

Finally, sessions reach a Terminated state when they exceed the inactivity threshold (currently 15 minutes), hit the 8-hour maximum duration limit, or fail health checks. Understanding these state transitions is crucial for designing resilient workflows that gracefully handle session boundaries and resource cleanup. For more details on session lifecycle-related quotas, refer to AgentCore Runtime Service Quotas.
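To make the three states concrete, here is a simplified model of the transitions described above. It is a reasoning aid only, not the service’s implementation: the function name, its parameters, and the order in which conditions are checked are our assumptions, while the 15-minute and 8-hour values come from the text.

```python
from enum import Enum

IDLE_TIMEOUT_S = 15 * 60       # inactivity threshold described in the text
MAX_LIFETIME_S = 8 * 60 * 60   # maximum session duration described in the text


class SessionState(Enum):
    ACTIVE = "Active"
    IDLE = "Idle"
    TERMINATED = "Terminated"


def session_state(age_s: float, idle_s: float, busy: bool,
                  healthy: bool = True) -> SessionState:
    """Classify a session from its age, time since last activity, and health.

    busy is True while a request is being processed or a background task
    reports HealthyBusy. The check order is an assumption of this sketch.
    """
    if not healthy or age_s >= MAX_LIFETIME_S:
        return SessionState.TERMINATED
    if busy:
        return SessionState.ACTIVE
    if idle_s > IDLE_TIMEOUT_S:
        return SessionState.TERMINATED
    return SessionState.IDLE
```

A workflow that must survive session boundaries can use the same thresholds to decide when to checkpoint state externally, before the session is likely to be reclaimed.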

The ephemeral nature of AgentCore sessions means that runtime state exists only within the boundaries of the active session lifecycle. The data your agent accumulates during execution (such as conversation context, user preference mappings, intermediate computational results, or transient workflow state) remains accessible only while the session persists and is completely purged when the session terminates.

For persistent data requirements that extend beyond individual session boundaries, AgentCore Memory provides the architectural solution for durable state management. This purpose-built service is specifically engineered for agent workloads and offers both short-term and long-term memory abstractions that can maintain user conversation histories, learned behavioral patterns, and critical insights across session boundaries. See the documentation for more information on getting started with AgentCore Memory.

True session isolation

Session isolation in AI agent workloads addresses fundamental security and operational challenges that don’t exist in traditional application architectures. Unlike stateless functions that process individual requests independently, AI agents maintain complex contextual state throughout extended reasoning processes, handle privileged operations with sensitive credentials and files, and exhibit non-deterministic behavior patterns. This creates unique risks where one user’s agent could potentially access another’s data: session-specific information could be used across multiple sessions, credentials could leak between sessions, or unpredictable agent behavior could compromise system boundaries. Traditional containerization or process isolation isn’t sufficient because agents need persistent state management while maintaining absolute separation between users.

Let’s explore a case study: In May 2025, Asana deployed a new MCP server to power agentic AI features (integrations with ChatGPT, Anthropic’s Claude, Microsoft Copilot) across its enterprise software as a service (SaaS) offering. Because of a logic flaw in the MCP server’s tenant isolation, which relied solely on user identity and not agent identity, requests from one organization’s user could inadvertently retrieve cached results containing another organization’s data. This cross-tenant contamination wasn’t triggered by a targeted exploit but was an intrinsic security fault in handling context and cache separation across agentic AI-driven sessions.

The exposure silently persisted for 34 days, impacting roughly 1,000 organizations, including major enterprises. After it was discovered, Asana halted the service, remediated the bug, notified affected customers, and released a fix.

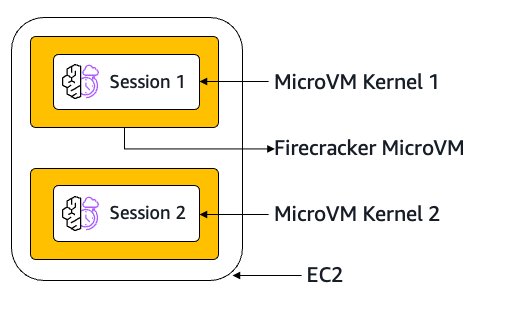

AgentCore Runtime solves these challenges through complete microVM isolation that goes beyond simple resource separation. Each session receives its own dedicated virtual machine with isolated compute, memory, and file system resources, making sure agent state, tool operations, and credential access remain completely compartmentalized. When a session ends, the entire microVM is terminated and memory sanitized, minimizing the risk of data persistence or cross-contamination. This architecture provides the deterministic security boundaries that enterprise deployments require, even when dealing with the inherently probabilistic and non-deterministic nature of AI agents, while still enabling the stateful, personalized experiences that make agents valuable. Although other offerings might provide sandboxed kernels, with the ability to manage your own session state, persistence, and isolation, this shouldn’t be treated as a strict security boundary. AgentCore Runtime provides consistent, deterministic isolation boundaries regardless of agent execution patterns, delivering the predictable security properties required for enterprise deployments. The following diagram shows how two separate sessions run in isolated microVM kernels.

AgentCore Runtime embedded identity

Traditional agent deployments often struggle with identity and access management, particularly when agents need to act on behalf of users or access external services securely. The challenge becomes even more complex in multi-tenant environments where, for example, you need to make sure Agent A accessing Google Drive on behalf of User 1 can never accidentally retrieve data belonging to User 2.

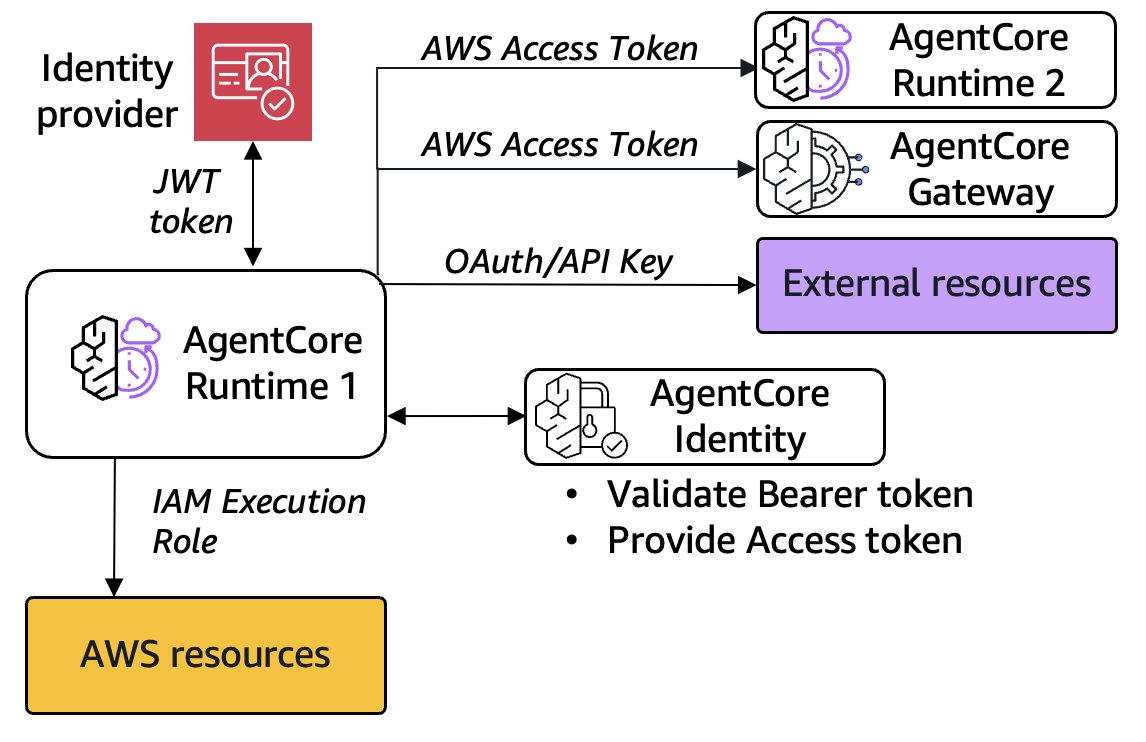

AgentCore Runtime addresses these challenges through its embedded identity system that seamlessly integrates authentication and authorization into the agent execution environment. First, each runtime is associated with a unique workload identity (you can treat this as a unique agent identity). The service supports two primary authentication mechanisms for agents using this unique agent identity: IAM SigV4 authentication for agents operating within AWS security boundaries, and OAuth-based (JWT bearer token authentication) integration with existing enterprise identity providers like Amazon Cognito, Okta, or Microsoft Entra ID.

When deploying an agent with AWS Identity and Access Management (IAM) authentication, users don’t have to incorporate other Amazon Bedrock AgentCore Identity specific settings or setup; simply configure with IAM authorization, launch, and invoke with the right user credentials.

When using JWT authentication, you configure the authorizer during the CreateAgentRuntime operation, specifying your identity provider (IdP)-specific discovery URL and allowed clients. Your existing agent code requires no modification; you simply add the authorizer configuration to your runtime deployment. When a calling entity or user invokes your agent, they pass their IdP-specific access token as a bearer token in the Authorization header. AgentCore Runtime uses AgentCore Identity to automatically validate this token against your configured authorizer and rejects unauthorized requests. The following diagram shows the flow of information between AgentCore Runtime, your IdP, AgentCore Identity, other AgentCore services, other AWS services (in orange), and other external APIs or resources (in purple).

Behind the scenes, AgentCore Runtime automatically exchanges validated user tokens for workload access tokens (through the bedrock-agentcore:GetWorkloadAccessTokenForJWT API). This provides secure outbound access to external services through the AgentCore credential provider system, where tokens are cached using the combination of agent workload identity and user ID as the binding key. This cryptographic binding makes sure, for example, User 1’s Google token can never be accessed when processing requests for User 2, regardless of application logic errors. Note that in the preceding diagram, connecting to AWS resources can be achieved simply by editing the AgentCore Runtime execution role, but connections to Amazon Bedrock AgentCore Gateway or to another runtime will require reauthorization with a new access token.
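The effect of that binding key can be illustrated with a toy cache. This is a minimal sketch, assuming a plain in-memory dictionary; TokenVault and its methods are hypothetical names, and the sketch does not model the cryptographic aspects of the real credential provider. It shows only why a lookup for a different user or a different agent cannot return someone else’s token.

```python
from typing import Dict, Optional, Tuple


class TokenVault:
    """Toy credential cache keyed by (workload identity, user ID)."""

    def __init__(self) -> None:
        # Each (workload_id, user_id) pair owns its own provider -> token map.
        self._tokens: Dict[Tuple[str, str], Dict[str, str]] = {}

    def put(self, workload_id: str, user_id: str, provider: str, token: str) -> None:
        self._tokens.setdefault((workload_id, user_id), {})[provider] = token

    def get(self, workload_id: str, user_id: str, provider: str) -> Optional[str]:
        # A lookup succeeds only when both parts of the binding key match.
        return self._tokens.get((workload_id, user_id), {}).get(provider)


if __name__ == "__main__":
    vault = TokenVault()
    vault.put("agent-a", "user-1", "google", "token-for-user-1")
    print(vault.get("agent-a", "user-1", "google"))  # the stored token
    print(vault.get("agent-a", "user-2", "google"))  # None: different user
```

Because the user ID is part of the key rather than a filter applied afterward, an application bug that requests the wrong provider or forgets a permission check still cannot cross from one user’s entry to another’s.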

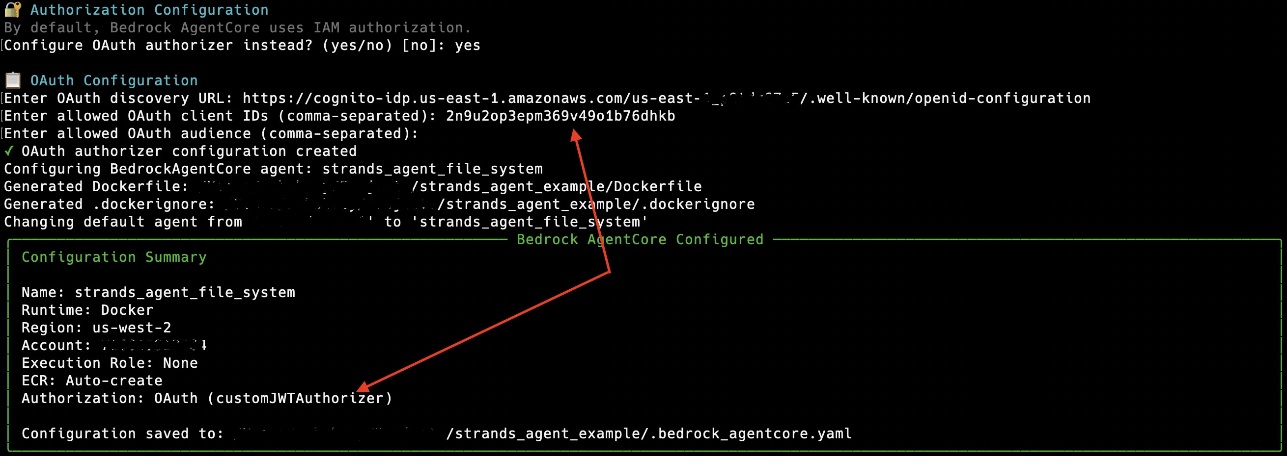

The most straightforward way to configure your agent with OAuth-based inbound access is to use the AgentCore starter toolkit:

- With the AWS Command Line Interface (AWS CLI), follow the prompts to interactively enter your OAuth discovery URL and allowed client IDs (comma-separated).

- With Python, use the following code:

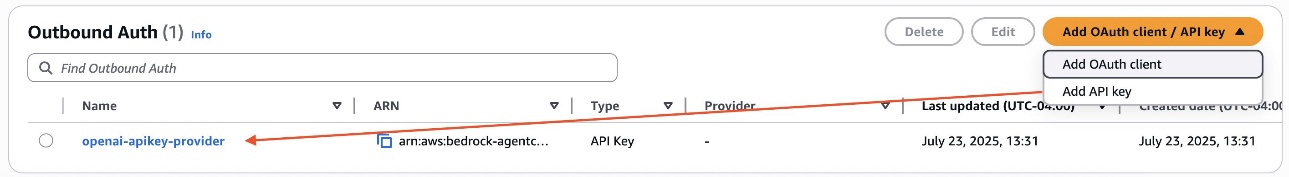

- For outbound access (for example, if your agent uses OpenAI APIs), first set up your keys using the API or the Amazon Bedrock console, as shown in the following screenshot.

- Then access your keys from within your AgentCore Runtime agent code:

For extra info on AgentCore Id, check with Authenticate and authorize with Inbound Auth and Outbound Auth and Hosting AI Agents on AgentCore Runtime.
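Returning to the inbound JWT configuration described earlier, the following sketch shows the general shape of an authorizer configuration and of the header a caller sends. The field names customJWTAuthorizer, discoveryUrl, and allowedClients reflect our understanding of the CreateAgentRuntime request and should be treated as assumptions to verify against the current API reference. The URL, client ID, and helper function names are placeholders invented for this example.

```python
# Placeholder values: replace with your IdP's discovery URL and client ID.
DISCOVERY_URL = "https://idp.example.com/.well-known/openid-configuration"
ALLOWED_CLIENTS = ["example-client-id"]


def build_authorizer_config(discovery_url: str, allowed_clients: list) -> dict:
    """Return an inbound JWT authorizer configuration (field names assumed)."""
    if not discovery_url.startswith("https://"):
        raise ValueError("discovery URL should be served over HTTPS")
    if not allowed_clients:
        raise ValueError("at least one allowed client ID is required")
    return {
        "customJWTAuthorizer": {
            "discoveryUrl": discovery_url,
            "allowedClients": list(allowed_clients),
        }
    }


def bearer_headers(access_token: str) -> dict:
    """Headers a caller sends: the IdP access token as a bearer token."""
    return {
        "Authorization": f"Bearer {access_token}",
        "Content-Type": "application/json",
    }


if __name__ == "__main__":
    print(build_authorizer_config(DISCOVERY_URL, ALLOWED_CLIENTS))
    print(bearer_headers("example-access-token"))
```

Note that nothing in the agent code changes: the configuration is attached to the runtime deployment, and the token travels in the request header.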

Use AgentCore Runtime state persistence with AgentCore Memory

AgentCore Runtime provides ephemeral, session-specific state management that maintains context during active conversations but doesn’t persist beyond the session lifecycle. Each user session preserves conversational state, objects in memory, and local temporary files within isolated execution environments. For short-lived agents, you can use the state persistence provided by AgentCore Runtime without needing to save this information externally. However, at the end of the session lifecycle, the ephemeral state is permanently destroyed, making this approach suitable only for interactions that don’t require knowledge retention across separate conversations.

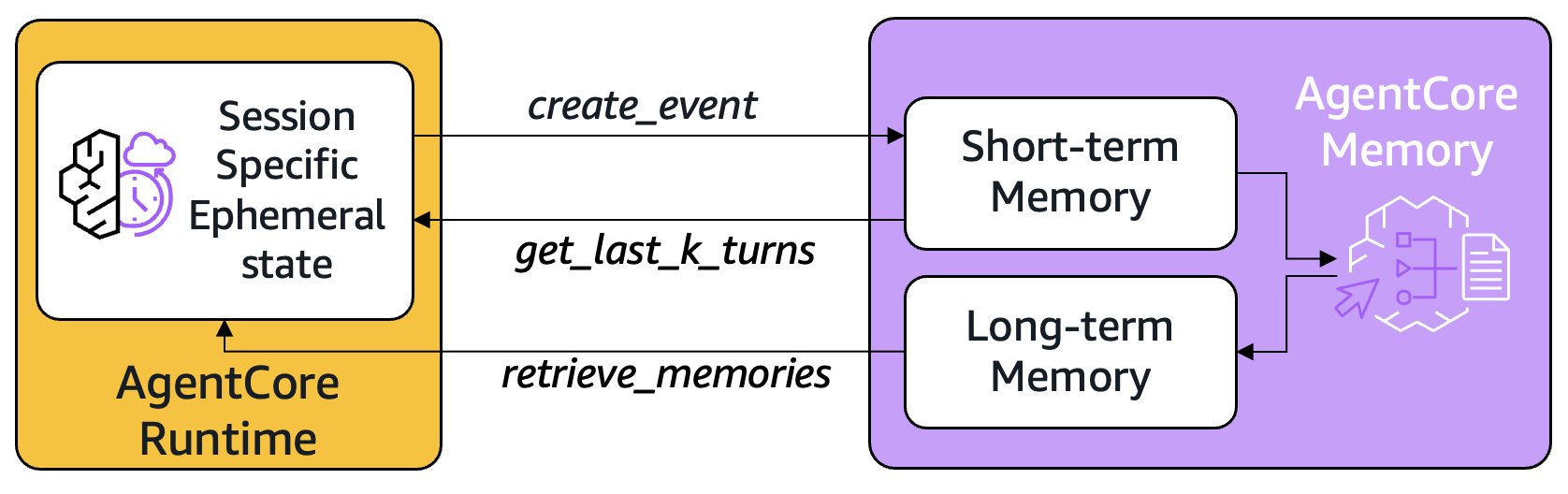

AgentCore Memory addresses this challenge by providing persistent storage that survives beyond individual sessions. Short-term memory captures raw interactions as events using create_event, storing the complete conversation history that can be retrieved with get_last_k_turns even if the runtime session restarts. Long-term memory uses configurable strategies to extract and consolidate key insights from these raw interactions, such as user preferences, important facts, or conversation summaries. Through retrieve_memories, agents can access this persistent knowledge across completely different sessions, enabling personalized experiences. The following diagram shows how AgentCore Runtime can use specific APIs to interact with short-term and long-term memory in AgentCore Memory.
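The division of labor between the two memory types can be sketched with an in-process stand-in. StandInMemory below is hypothetical: its method names mirror the ones mentioned above, but the parameters, the keyword-based extraction rule, and the substring search are simplifications we made up for illustration. The real service also extracts long-term memories asynchronously, whereas this toy does it inline.

```python
from collections import defaultdict


class StandInMemory:
    """In-process stand-in for a memory service (not the AgentCore client)."""

    def __init__(self) -> None:
        self._events = defaultdict(list)     # (actor_id, session_id) -> turns
        self._long_term = defaultdict(list)  # actor_id -> extracted insights

    def create_event(self, actor_id: str, session_id: str, role: str, text: str) -> None:
        # Short-term memory: append the raw turn to this session's history.
        self._events[(actor_id, session_id)].append((role, text))
        # Toy long-term strategy: keep user statements that mention a preference.
        if role == "user" and "prefer" in text.lower():
            self._long_term[actor_id].append(text)

    def get_last_k_turns(self, actor_id: str, session_id: str, k: int) -> list:
        if k <= 0:
            return []
        return self._events[(actor_id, session_id)][-k:]

    def retrieve_memories(self, actor_id: str, query: str) -> list:
        # Long-term memory is keyed by actor, so it spans sessions.
        needle = query.lower()
        return [m for m in self._long_term[actor_id] if needle in m.lower()]


if __name__ == "__main__":
    memory = StandInMemory()
    memory.create_event("user-1", "session-1", "user", "I prefer aisle seats")
    memory.create_event("user-1", "session-1", "assistant", "Noted.")
    # A later session has no short-term history but still sees the preference.
    print(memory.get_last_k_turns("user-1", "session-2", 5))
    print(memory.retrieve_memories("user-1", "aisle"))
```

Short-term history is scoped to a session, while extracted insights are scoped to the user, which is what allows a brand-new session to start with an empty transcript yet still personalize its answers.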

This basic architecture, of using a runtime to host your agents and a combination of short- and long-term memory, has become commonplace in most agentic AI applications today. Invocations to AgentCore Runtime with the same session ID let you access the agent state (for example, in a conversational flow) as if it were running locally, without the overhead of external storage operations, and AgentCore Memory selectively captures and structures the valuable information worth preserving beyond the session lifecycle. This hybrid approach means agents can maintain fast, contextual responses during active sessions while building cumulative intelligence over time. The automatic asynchronous processing of long-term memories according to each strategy in AgentCore Memory makes sure insights are extracted and consolidated without impacting real-time performance, creating a seamless experience where agents become progressively more helpful while maintaining responsive interactions. This architecture avoids the traditional trade-off between conversation speed and long-term learning, enabling agents that are both immediately useful and continuously improving.

Process different modalities with large payloads

Most AI agent systems struggle with large file processing due to strict payload size limits, typically capping requests at just a few megabytes. This forces developers to implement complex file chunking, multiple API calls, or external storage solutions that add latency and complexity. AgentCore Runtime removes these constraints by supporting payloads up to 100 MB in size, enabling agents to process substantial datasets, high-resolution images, audio, and comprehensive document collections in a single invocation.

Consider a financial audit scenario where you need to verify quarterly sales performance by comparing detailed transaction data against a dashboard screenshot from your analytics system. Traditional approaches would require using external storage such as Amazon Simple Storage Service (Amazon S3) or Google Drive to download the Excel file and image into the container running the agent logic. With AgentCore Runtime, you can send both the comprehensive sales data and the dashboard image in a single payload from the client:

The agent’s entrypoint function can be modified to process both data sources simultaneously, enabling this cross-validation analysis:
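As a rough sketch of both halves of this exchange, the code below packs a prompt and two binary files into one JSON body on the client side and unpacks them on the agent side. The key names (prompt, excel_data, image_data), the function names, and the interpretation of 100 MB as 100 × 1024 × 1024 bytes are assumptions made for this example. In a deployed agent, handle_payload would be the body of the function decorated with @app.entrypoint.

```python
import base64
import json

MAX_PAYLOAD_BYTES = 100 * 1024 * 1024  # 100 MB limit described in the text


def build_payload(prompt: str, excel_bytes: bytes, image_bytes: bytes) -> bytes:
    """Client side: pack a prompt and two binary files into one JSON body."""
    body = json.dumps({
        "prompt": prompt,
        "excel_data": base64.b64encode(excel_bytes).decode("ascii"),
        "image_data": base64.b64encode(image_bytes).decode("ascii"),
    }).encode("utf-8")
    if len(body) > MAX_PAYLOAD_BYTES:
        raise ValueError(f"payload is {len(body)} bytes, over the 100 MB limit")
    return body


def handle_payload(body: bytes) -> dict:
    """Agent side: decode both attachments so they can be analyzed together."""
    payload = json.loads(body)
    excel_bytes = base64.b64decode(payload["excel_data"])
    image_bytes = base64.b64decode(payload["image_data"])
    # A real agent would now parse the spreadsheet and inspect the image.
    return {
        "prompt": payload["prompt"],
        "excel_size": len(excel_bytes),
        "image_size": len(image_bytes),
    }


if __name__ == "__main__":
    body = build_payload("Verify Q3 sales", b"\x00" * 1000, b"\x01" * 500)
    print(handle_payload(body))
```

One practical detail: Base64 encoding inflates binary data by about a third, so the raw files need to total roughly 75 MB or less to fit within a 100 MB JSON body.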

To try out an example of using large payloads, refer to the following GitHub repo.

Operate asynchronous multi-hour agents

As AI agents evolve to tackle increasingly complex tasks, from processing large datasets to generating comprehensive reports, they often require multi-step processing that can take significant time to complete. However, most agent implementations are synchronous (with response streaming) and block until completion. Although synchronous, streaming agents are a common way to expose agentic chat applications to users, users can’t interact with the agent while a task or tool is still running, view the status of or cancel background operations, or start more concurrent tasks while others are still in progress.

Building asynchronous agents forces developers to implement complex distributed task management systems with state persistence, job queues, worker coordination, failure recovery, and cross-invocation state management, while also navigating serverless system limitations like execution timeouts (tens of minutes), payload size restrictions, and cold start penalties for long-running compute operations. This is significant heavy lifting that diverts focus from core functionality.

AgentCore Runtime alleviates this complexity through stateful execution sessions that maintain context across invocations, so developers can build upon previous work incrementally without implementing complex task management logic. The AgentCore SDK provides ready-to-use constructs for tracking asynchronous tasks and seamlessly managing compute lifecycles, and AgentCore Runtime supports execution times up to 8 hours and request/response payload sizes of 100 MB, making it suitable for most asynchronous agent tasks.

Getting started with asynchronous agents

You can get started with just a couple of code changes:

To build interactive agents that perform asynchronous tasks, simply call add_async_task when starting a task and complete_async_task when finished. The SDK automatically handles task tracking and manages compute lifecycle for you.

These two method calls transform your synchronous agent into a fully asynchronous, interactive system. Refer to this sample for more details.
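The pattern behind those two calls can be sketched with a small stand-in. StandInApp below is hypothetical and only mimics the two method names mentioned above; its signatures, the ping_status helper, and the "Healthy" status string are assumptions for illustration (the text itself names only HealthyBusy). The point is the shape of the handler: register the task, start the work in the background, return immediately, and always mark the task complete.

```python
import threading
import time


class StandInApp:
    """Hypothetical stand-in that mimics the two SDK calls named above."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._next_id = 0
        self._active = {}

    def add_async_task(self, name: str) -> int:
        with self._lock:
            self._next_id += 1
            self._active[self._next_id] = name
            return self._next_id

    def complete_async_task(self, task_id: int) -> bool:
        with self._lock:
            return self._active.pop(task_id, None) is not None

    def ping_status(self) -> str:
        # Report busy while any background task is still being tracked.
        with self._lock:
            return "HealthyBusy" if self._active else "Healthy"


app = StandInApp()


def start_report(payload: dict) -> dict:
    """Handler: kick off background work and return immediately."""
    task_id = app.add_async_task("generate_report")

    def work() -> None:
        try:
            time.sleep(payload.get("seconds", 0.1))  # placeholder for real work
        finally:
            app.complete_async_task(task_id)  # release the task even on error

    threading.Thread(target=work, daemon=True).start()
    return {"status": "started", "task_id": task_id}


if __name__ == "__main__":
    print(start_report({"seconds": 0.2}))
    print(app.ping_status())   # busy while the background thread runs
    time.sleep(0.5)
    print(app.ping_status())   # no tracked tasks remain
```

Completing the task in a finally block matters: a task that is never marked complete would keep the session reporting busy, and therefore provisioned, until it reaches the maximum lifetime.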

The following example shows the difference between a synchronous agent that streams back responses to the user immediately vs. a more complex multi-agent scenario where longer-running, asynchronous background shopping agents use Amazon Bedrock AgentCore Browser to automate a shopping experience on amazon.com on behalf of the user.

Pay only for used resources

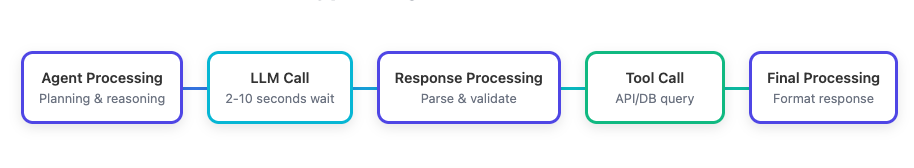

Amazon Bedrock AgentCore Runtime introduces a consumption-based pricing model that fundamentally changes how you pay for AI agent infrastructure. Unlike traditional compute models that charge for allocated resources regardless of utilization, AgentCore Runtime bills you only for what you actually use, for however long you use it. Said differently, you don’t have to pre-allocate resources like CPU or GB of memory, and you don’t pay for CPU resources during I/O wait periods. This distinction is particularly valuable for AI agents, which typically spend significant time waiting for LLM responses or external API calls to complete. Here is a typical agent event loop, where we only expect the purple boxes to be processed within Runtime:

The LLM call (light blue) and tool call (green) boxes take time, but are run outside the context of AgentCore Runtime; users only pay for processing that happens in Runtime itself (purple boxes). Let’s look at some real-world examples to understand the impact:

Customer support agent example

Consider a customer support agent that handles 10,000 user inquiries per day. Each interaction involves initial query processing, knowledge retrieval from Retrieval Augmented Generation (RAG) systems, LLM reasoning for response formulation, API calls to order systems, and final response generation. In a typical session lasting 60 seconds, the agent might actively use CPU for only 18 seconds (30%) while spending the remaining 42 seconds (70%) waiting for LLM responses or API calls to complete. Memory usage can fluctuate between 1.5 GB and 2.5 GB depending on the complexity of the customer query and the amount of context needed. With traditional compute models, you would pay for the full 60 seconds of CPU time and peak memory allocation. With AgentCore Runtime, you only pay for the 18 seconds of active CPU processing and the actual memory consumed moment-by-moment:

For 10,000 daily sessions, this represents a 70% reduction in CPU costs compared to traditional models that would charge for the full 60 seconds.
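The arithmetic behind that figure can be checked in a few lines. The sketch below uses only the numbers given in this example and compares billed CPU-seconds rather than dollar amounts, because no per-second rates are stated here. The function and variable names are ours.

```python
SESSION_SECONDS = 60        # wall-clock length of a typical session
ACTIVE_CPU_SECONDS = 18     # time the agent actually spends on CPU
SESSIONS_PER_DAY = 10_000


def daily_cpu_seconds(seconds_per_session: int, sessions: int) -> int:
    """Total billed CPU-seconds per day for a given per-session figure."""
    return seconds_per_session * sessions


# Allocation-based billing charges for the full session duration.
allocated = daily_cpu_seconds(SESSION_SECONDS, SESSIONS_PER_DAY)
# Consumption-based billing charges only for active CPU time.
consumed = daily_cpu_seconds(ACTIVE_CPU_SECONDS, SESSIONS_PER_DAY)
reduction_pct = round(100 * (allocated - consumed) / allocated)

if __name__ == "__main__":
    print(f"allocated: {allocated:,} CPU-seconds/day")
    print(f"consumed:  {consumed:,} CPU-seconds/day")
    print(f"reduction: {reduction_pct}%")
```

Because the reduction is a ratio, it holds at any session volume and any CPU rate. Memory is billed separately on actual consumption and is not included in this figure.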

Data analysis agent example

The savings become even more dramatic for data processing agents that handle complex workflows. A financial analysis agent processing quarterly reports might run for 3 hours but have highly variable resource needs. During data loading and initial parsing, it might use minimal resources (0.5 vCPU, 2 GB memory). When performing complex calculations or running statistical models, it might spike to 2 vCPU and 8 GB memory for just 15 minutes of the total runtime, while spending the remaining time waiting for batch operations or model inferences at much lower resource utilization. By charging only for actual resource consumption while maintaining your session state during I/O waits, AgentCore Runtime aligns costs directly with value creation, making sophisticated agent deployments economically viable at scale.
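A back-of-the-envelope version of this scenario follows. It rests on one simplifying assumption that the text does not state: outside the 15-minute peak, the agent stays at the 0.5 vCPU and 2 GB baseline for the remaining 2 hours 45 minutes. The comparison point is a traditional deployment provisioned at peak (2 vCPU, 8 GB) for the full 3 hours. Names are ours, and no prices are applied.

```python
# Each phase: (duration in hours, vCPU in use, memory in GB).
# The baseline phase is an assumption; the text only says usage is "much lower".
PHASES = [
    (2.75, 0.5, 2.0),   # loading, parsing, waiting on batch jobs or inference
    (0.25, 2.0, 8.0),   # peak: calculations and statistical models
]
TOTAL_HOURS = 3.0
PEAK_VCPU = 2.0
PEAK_GB = 8.0


def consumed(phases):
    """Sum vCPU-hours and GB-hours actually used across all phases."""
    vcpu_hours = sum(hours * vcpu for hours, vcpu, _ in phases)
    gb_hours = sum(hours * gb for hours, _, gb in phases)
    return vcpu_hours, gb_hours


used_vcpu_hours, used_gb_hours = consumed(PHASES)
peak_vcpu_hours = TOTAL_HOURS * PEAK_VCPU
peak_gb_hours = TOTAL_HOURS * PEAK_GB
vcpu_saving = 1 - used_vcpu_hours / peak_vcpu_hours
gb_saving = 1 - used_gb_hours / peak_gb_hours

if __name__ == "__main__":
    print(f"vCPU-hours: {used_vcpu_hours} used vs {peak_vcpu_hours} provisioned")
    print(f"GB-hours:   {used_gb_hours} used vs {peak_gb_hours} provisioned")
    print(f"savings:    {vcpu_saving:.2%} vCPU, {gb_saving:.2%} memory")
```

Under these assumptions, usage comes to 1.875 vCPU-hours and 7.5 GB-hours against 6 and 24 provisioned at peak. Actual CPU charges could be lower still, because time spent in I/O wait is not billed as CPU.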

Conclusion

In this post, we explored how AgentCore Runtime simplifies the deployment and management of AI agents. The service addresses critical challenges that have traditionally blocked agent adoption at scale, offering framework-agnostic deployment, true session isolation, embedded identity management, and support for large payloads and long-running, asynchronous agents, all with a consumption-based model where you pay only for the resources you use.

With just four lines of code, developers can securely launch and scale their agents while using AgentCore Memory for persistent state management across sessions. For hands-on examples on AgentCore Runtime covering simple tutorials to complex use cases, and demonstrating integrations with various frameworks such as LangGraph, Strands, CrewAI, MCP, ADK, Autogen, LlamaIndex, and OpenAI Agents, refer to the following examples on GitHub:

About the authors

Shreyas Subramanian is a Principal Data Scientist and helps customers by using generative AI and deep learning to solve their business challenges using AWS services like Amazon Bedrock and AgentCore. Dr. Subramanian contributes to cutting-edge research in deep learning, agentic AI, foundation models, and optimization techniques, with several books, papers, and patents to his name. In his current role at Amazon, Dr. Subramanian works with various science leaders and research teams within and outside Amazon, helping to guide customers to best leverage state-of-the-art algorithms and techniques to solve business-critical problems. Outside AWS, Dr. Subramanian is an expert reviewer for AI papers and funding through organizations like NeurIPS, ICML, ICLR, NASA, and NSF.

Kosti Vasilakakis is a Principal PM at AWS on the Agentic AI team, where he has led the design and development of several Bedrock AgentCore services from the ground up, including Runtime. He previously worked on Amazon SageMaker since its early days, launching AI/ML capabilities now used by thousands of companies worldwide. Earlier in his career, Kosti was a data scientist. Outside of work, he builds personal productivity automations, plays tennis, and explores the wilderness with his family.

Vivek Bhadauria is a Principal Engineer at Amazon Bedrock with almost a decade of experience in building AI/ML services. He now focuses on building generative AI services such as Amazon Bedrock Agents and Amazon Bedrock Guardrails. In his free time, he enjoys biking and hiking.
