Imagine harnessing the power of 72 state-of-the-art NVIDIA Blackwell GPUs in a single system for the next wave of AI innovation, unlocking 360 petaflops of dense 8-bit floating point (FP8) compute and 1.4 exaflops of sparse 4-bit floating point (FP4) compute. Today, that is exactly what Amazon SageMaker HyperPod delivers with the launch of support for P6e-GB200 UltraServers. Accelerated by NVIDIA GB200 NVL72, P6e-GB200 UltraServers offer industry-leading GPU performance, network throughput, and memory for developing and deploying trillion-parameter AI models at scale. By integrating these UltraServers with the SageMaker HyperPod distributed training environment, organizations can rapidly scale model development, reduce downtime, and simplify the transition from training to large-scale deployment. SageMaker HyperPod provides automated, resilient, and highly scalable machine learning infrastructure, so organizations can distribute massive AI workloads across thousands of accelerators and manage end-to-end model development efficiently. Using SageMaker HyperPod with P6e-GB200 UltraServers marks a pivotal shift toward faster, more resilient, and more cost-effective training and deployment of state-of-the-art generative AI models.

In this post, we review the technical specifications of P6e-GB200 UltraServers, discuss their performance benefits, and highlight key use cases. We then walk through how to purchase UltraServer capacity through flexible training plans and how to get started with UltraServers using SageMaker HyperPod.

Inside the UltraServer

P6e-GB200 UltraServers are accelerated by NVIDIA GB200 NVL72 and connect 36 NVIDIA Grace™ CPUs and 72 Blackwell GPUs in the same NVIDIA NVLink™ domain. Each ml.p6e-gb200.36xlarge compute node within an UltraServer includes two NVIDIA GB200 Grace Blackwell Superchips, each connecting two high-performance NVIDIA Blackwell GPUs and one NVIDIA Grace CPU through the NVIDIA NVLink chip-to-chip (C2C) interconnect. SageMaker HyperPod is launching P6e-GB200 UltraServers in two sizes. The ml.u-p6e-gb200x36 UltraServer includes a rack of 9 compute nodes fully connected with NVSwitch (NVS), providing a total of 36 Blackwell GPUs in the same NVLink domain. The ml.u-p6e-gb200x72 UltraServer includes a rack pair of 18 compute nodes, providing a total of 72 Blackwell GPUs in the same NVLink domain. The following diagram illustrates this configuration.

UltraServer performance benefits

This section discusses some of the performance benefits of UltraServers.

GPU and compute power

P6e-GB200 UltraServers give you access to up to 72 NVIDIA Blackwell GPUs within a single NVLink domain, with a total of 360 petaflops of FP8 compute (without sparsity), 1.4 exaflops of FP4 compute (with sparsity), and 13.4 TB of high-bandwidth memory (HBM3e). Each Grace Blackwell Superchip pairs two Blackwell GPUs with one Grace CPU through the NVLink-C2C interconnect, providing 10 petaflops of dense FP8 compute, 40 petaflops of sparse FP4 compute, up to 372 GB of HBM3e, and 850 GB of cache-coherent fast memory per module. This co-location increases the bandwidth between GPU and CPU by an order of magnitude compared to previous-generation instances. Each NVIDIA Blackwell GPU features a second-generation Transformer Engine and supports the latest AI precision microscaling (MX) data formats, such as MXFP6 and MXFP4, as well as NVIDIA NVFP4. When combined with frameworks such as NVIDIA Dynamo, NVIDIA TensorRT-LLM, and NVIDIA NeMo, these Transformer Engines significantly accelerate inference and training for large language models (LLMs) and Mixture-of-Experts (MoE) models, supporting the efficiency and performance that modern AI workloads require.
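The per-Superchip and per-UltraServer figures above are consistent with each other, which is easy to check with a little arithmetic. The following Python sketch multiplies the per-Superchip numbers quoted in this post by the number of Superchips in an 18-node UltraServer. The constant and function names are illustrative, and the results are rounded marketing figures, not measurements.

```python
# Figures quoted in this post; nothing here is measured.
SUPERCHIPS_PER_NODE = 2              # per ml.p6e-gb200.36xlarge compute node
GPUS_PER_SUPERCHIP = 2
NODES_PER_ULTRASERVER = 18           # ml.u-p6e-gb200x72

DENSE_FP8_PFLOPS_PER_SUPERCHIP = 10
SPARSE_FP4_PFLOPS_PER_SUPERCHIP = 40
HBM3E_GB_PER_SUPERCHIP = 372


def ultraserver_totals(nodes: int = NODES_PER_ULTRASERVER) -> dict:
    """Scale the per-Superchip figures up to a whole UltraServer."""
    superchips = nodes * SUPERCHIPS_PER_NODE
    return {
        "gpus": superchips * GPUS_PER_SUPERCHIP,
        "grace_cpus": superchips,  # one Grace CPU per Superchip
        "dense_fp8_pflops": superchips * DENSE_FP8_PFLOPS_PER_SUPERCHIP,
        "sparse_fp4_eflops": superchips * SPARSE_FP4_PFLOPS_PER_SUPERCHIP / 1000,
        "hbm3e_tb": superchips * HBM3E_GB_PER_SUPERCHIP / 1000,
    }


if __name__ == "__main__":
    for name, value in ultraserver_totals().items():
        print(f"{name}: {value}")
```

Calling `ultraserver_totals(9)` gives the corresponding totals for the smaller ml.u-p6e-gb200x36 UltraServer.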

High-performance networking

P6e-GB200 UltraServers offer up to 130 TBps of low-latency NVLink bandwidth between GPUs for efficient large-scale AI workload communication. At twice the bandwidth of its predecessor, fifth-generation NVIDIA NVLink provides up to 1.8 TBps of bidirectional, direct GPU-to-GPU interconnect, significantly improving intra-server communication. Each compute node within an UltraServer can be configured with up to 17 physical network interface cards (NICs), each supporting up to 400 Gbps of bandwidth. P6e-GB200 UltraServers offer up to 28.8 Tbps of total Elastic Fabric Adapter (EFA) v4 networking, which uses the Scalable Reliable Datagram (SRD) protocol to intelligently route traffic across multiple paths and keep operation smooth even during congestion or hardware failures. For more information, see EFA configuration for a P6e-GB200 instance.
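As a rough sanity check on these numbers, the sketch below relates the per-GPU NVLink figure to the aggregate one and divides the total EFA bandwidth across the 18 compute nodes of the larger UltraServer. Note the units: the NVLink figures are taken as terabytes per second, while the EFA and NIC figures are in bits per second. The per-node EFA value is derived here by simple division and is an assumption, not a number stated in this post, and the post does not say how the 17 NICs are split between EFA and other traffic.

```python
# Figures quoted in this post; variable names are illustrative.
NVLINK_TBYTES_PER_SEC_PER_GPU = 1.8   # bidirectional, per GPU
GPUS_PER_ULTRASERVER = 72

EFA_TOTAL_GBITS_PER_SEC = 28_800      # 28.8 Tbps across the UltraServer
NODES_PER_ULTRASERVER = 18            # ml.u-p6e-gb200x72
NIC_GBITS_PER_SEC = 400

# Aggregate NVLink bandwidth if every GPU drives its full 1.8 TBps.
aggregate_nvlink = NVLINK_TBYTES_PER_SEC_PER_GPU * GPUS_PER_ULTRASERVER

# Derived, not quoted: an even split of EFA bandwidth across compute nodes.
efa_gbits_per_node = EFA_TOTAL_GBITS_PER_SEC / NODES_PER_ULTRASERVER
nics_worth_of_efa_per_node = efa_gbits_per_node / NIC_GBITS_PER_SEC

if __name__ == "__main__":
    print(f"Aggregate NVLink: ~{aggregate_nvlink:.1f} TBps")
    print(f"EFA per node (even split): {efa_gbits_per_node:.0f} Gbps")
    print(f"Equivalent 400 Gbps NICs per node: {nics_worth_of_efa_per_node:.0f}")
```

The aggregate NVLink result lands just under the 130 TBps headline figure, which suggests that figure is the per-GPU bandwidth multiplied by 72 and rounded.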

Storage and data throughput

P6e-GB200 UltraServers support up to 405 TB of local NVMe SSD storage, which is ideal for large datasets and fast checkpointing during AI model training. For high-performance shared storage, Amazon FSx for Lustre file systems can be accessed over EFA with GPUDirect Storage (GDS), providing direct data transfer between the file system and GPU memory with TBps of throughput and millions of input/output operations per second (IOPS) for demanding AI training and inference.

Topology-aware scheduling

Amazon Elastic Compute Cloud (Amazon EC2) provides topology information that describes the physical and network relationships between instances in a cluster. For UltraServer compute nodes, Amazon EC2 exposes which instances belong to the same UltraServer, so training and inference algorithms can understand NVLink connectivity patterns. This topology information helps optimize distributed training by allowing frameworks such as the NVIDIA Collective Communications Library (NCCL) to make intelligent decisions about communication patterns and data placement. For more information, see How Amazon EC2 instance topology works.
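To make the idea concrete, here is a minimal sketch of how topology records might be grouped so that closely connected instances end up together. It works on plain dictionaries shaped like the `Instances` entries returned by the EC2 DescribeInstanceTopology API (in boto3, `ec2.describe_instance_topology()`), where `NetworkNodes` lists network nodes from the top layer down to the one closest to the instance. This post does not name the field that identifies UltraServer membership, so the sketch groups by the lowest network node as a stand-in. The sample IDs are made up.

```python
from collections import defaultdict

# Made-up records shaped like DescribeInstanceTopology "Instances" entries.
# Instance IDs and network node IDs are placeholders, not real resources.
SAMPLE_INSTANCES = [
    {"InstanceId": "i-aaa", "NetworkNodes": ["nn-top", "nn-mid", "nn-bottom-a"]},
    {"InstanceId": "i-bbb", "NetworkNodes": ["nn-top", "nn-mid", "nn-bottom-a"]},
    {"InstanceId": "i-ccc", "NetworkNodes": ["nn-top", "nn-mid", "nn-bottom-b"]},
]


def group_by_network_node(instances, layer=-1):
    """Group instance IDs by the network node at the given layer.

    layer=-1 selects the last entry, the node closest to the instance.
    """
    groups = defaultdict(list)
    for instance in instances:
        nodes = instance.get("NetworkNodes") or []
        if not nodes:
            continue  # no topology reported for this instance
        groups[nodes[layer]].append(instance["InstanceId"])
    return dict(groups)


if __name__ == "__main__":
    for node, members in group_by_network_node(SAMPLE_INSTANCES).items():
        print(node, members)
```

A launcher could use groups like these to order ranks so that neighboring ranks share the closest network node, leaving the finer-grained decisions to NCCL.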

With Amazon Elastic Kubernetes Service (Amazon EKS) orchestration, SageMaker HyperPod automatically labels UltraServer compute nodes with their respective AWS Region, Availability Zone, network node layers (1-4), and UltraServer ID. These topology labels can be used with node affinities and pod topology spread constraints to assign pods to cluster nodes for optimal performance.
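The following sketch shows what a node affinity and a topology spread constraint keyed on such a label could look like, built as plain Python dictionaries that mirror the Kubernetes pod spec fields. The label key `example.com/ultraserver-id` and the UltraServer ID value are hypothetical placeholders, because this post does not list the exact label names. Running `kubectl get nodes --show-labels` on the cluster shows the real ones.

```python
import json

# Hypothetical label key; replace with the label HyperPod applies to your nodes.
ULTRASERVER_LABEL = "example.com/ultraserver-id"


def node_affinity_for(label_key, label_values):
    """Require that a pod lands on nodes whose label matches one of the values."""
    return {
        "nodeAffinity": {
            "requiredDuringSchedulingIgnoredDuringExecution": {
                "nodeSelectorTerms": [
                    {
                        "matchExpressions": [
                            {
                                "key": label_key,
                                "operator": "In",
                                "values": list(label_values),
                            }
                        ]
                    }
                ]
            }
        }
    }


def spread_constraint(label_key, app_label, max_skew=1):
    """Spread pods carrying app=<app_label> evenly across values of label_key."""
    return {
        "maxSkew": max_skew,
        "topologyKey": label_key,
        "whenUnsatisfiable": "DoNotSchedule",
        "labelSelector": {"matchLabels": {"app": app_label}},
    }


if __name__ == "__main__":
    # "ultraserver-a" is a made-up ID used only for illustration.
    pod_spec_fragment = {
        "affinity": node_affinity_for(ULTRASERVER_LABEL, ["ultraserver-a"]),
        "topologySpreadConstraints": [spread_constraint(ULTRASERVER_LABEL, "trainer")],
    }
    print(json.dumps(pod_spec_fragment, indent=2))
```

The two mechanisms pull in opposite directions, so in practice a workload would typically use one or the other: node affinity to pin a tightly coupled job to one UltraServer, or a spread constraint to balance independent replicas across several.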

With Slurm orchestration, SageMaker HyperPod automatically enables the topology plugin and creates a topology.conf file with the respective BlockName, Nodes, and BlockSizes to match your UltraServer capacity. This lets you group and segment your compute nodes to optimize job performance.
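HyperPod generates this file for you, so the sketch below is only meant to illustrate the shape of a block-topology topology.conf using the three keys named above. It renders one BlockName line per UltraServer followed by a BlockSizes line. The block names and node names are hypothetical, and the actual file that HyperPod writes may differ in naming and layout.

```python
def render_block_topology(blocks, block_size):
    """Render a Slurm block-topology config from {block_name: [node names]}."""
    lines = [
        f"BlockName={name} Nodes={','.join(nodes)}"
        for name, nodes in blocks.items()
    ]
    lines.append(f"BlockSizes={block_size}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical names: two 18-node UltraServers, one block each.
    example_blocks = {
        "ultraserver-a": [f"node-a{i}" for i in range(1, 19)],
        "ultraserver-b": [f"node-b{i}" for i in range(1, 19)],
    }
    print(render_block_topology(example_blocks, block_size=18), end="")
```

With one block per UltraServer, Slurm can keep a job's nodes inside a single NVLink domain when it fits, instead of scattering them across racks.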

UltraServer use cases

P6e-GB200 UltraServers can efficiently train models with more than a trillion parameters thanks to their unified NVLink domain, ultra-fast memory, and high cross-node bandwidth, making them ideal for state-of-the-art AI development. The substantial interconnect bandwidth means that even very large models can be partitioned and trained in a highly parallel and efficient manner, without the performance penalties seen in disjointed multi-node systems. This results in faster iteration cycles and higher-quality AI models, helping organizations push the boundaries of AI research and innovation.

For real-time inference on trillion-parameter models, P6e-GB200 UltraServers enable up to 30 times faster inference on frontier trillion-parameter LLMs compared to previous platforms, achieving real-time performance for the complex models used in generative AI, natural language understanding, and conversational agents. When paired with NVIDIA Dynamo, P6e-GB200 UltraServers deliver significant performance gains, especially at long context lengths. NVIDIA Dynamo disaggregates the compute-heavy prefill phase and the memory-heavy decode phase onto different GPUs, supporting independent optimization and resource allocation within the large 72-GPU NVLink domain. This allows for more efficient management of large context windows and high-concurrency applications.

P6e-GB200 UltraServers offer substantial benefits for startup, research, and enterprise customers with multiple teams that need to run diverse distributed training and inference workloads on shared infrastructure. When used in conjunction with SageMaker HyperPod task governance, UltraServers provide exceptional scalability and resource pooling, so different teams can launch jobs concurrently without bottlenecks. Enterprises can maximize infrastructure utilization, reduce overall costs, and accelerate project timelines, all while supporting the complex needs of teams that develop and serve advanced AI models, including massive LLMs for high-concurrency real-time inference, on a single resilient platform.

Flexible training plans for UltraServer capacity

SageMaker AI currently offers P6e-GB200 UltraServer capacity through flexible training plans in the Dallas AWS Local Zone (us-east-1-dfw-2a). UltraServers can be used for both SageMaker HyperPod and SageMaker training jobs.

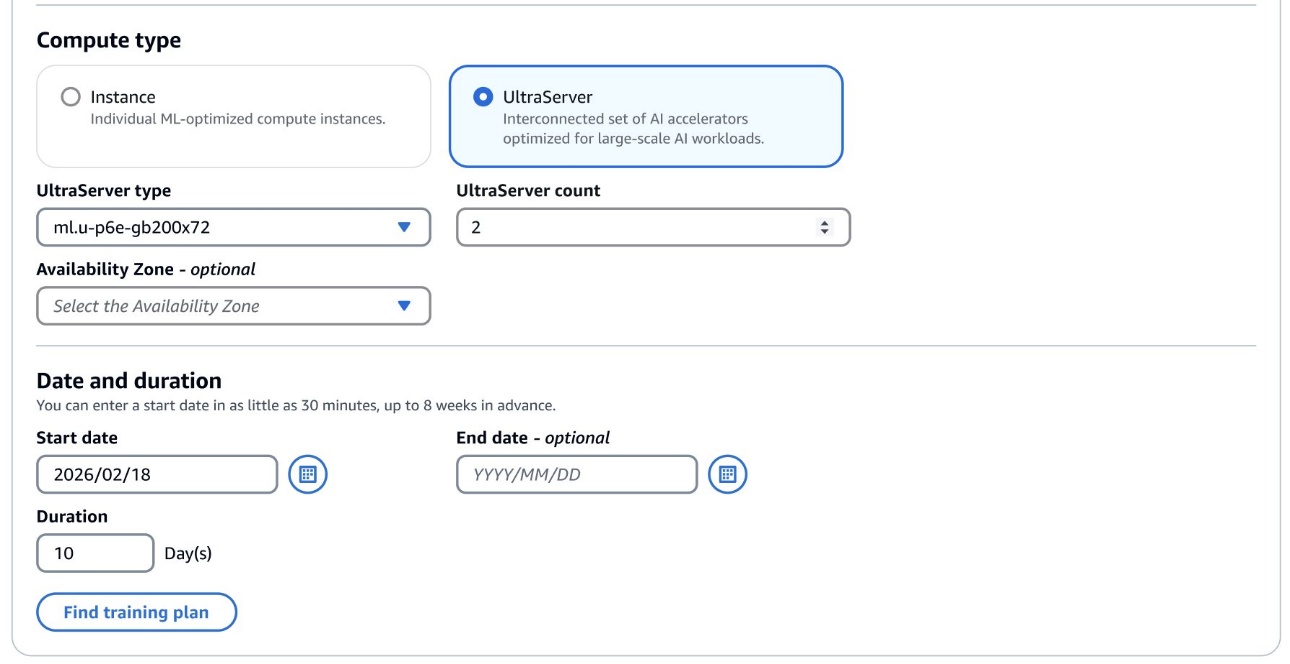

To get started, go to the SageMaker AI training plans console, which includes a new UltraServer compute type. From there, you can select your UltraServer type: ml.u-p6e-gb200x36 (containing 9 ml.p6e-gb200.36xlarge compute nodes) or ml.u-p6e-gb200x72 (containing 18 ml.p6e-gb200.36xlarge compute nodes).

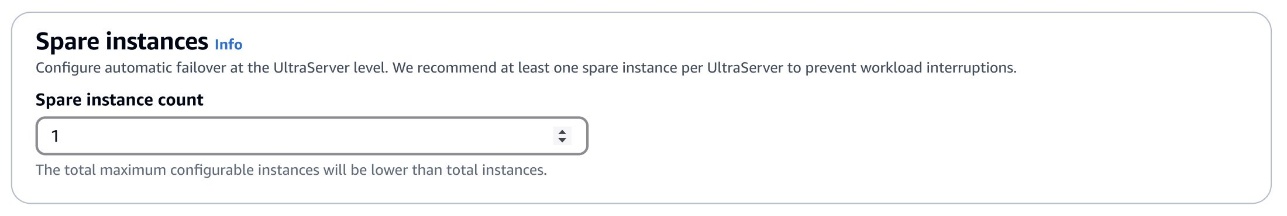

After you find a training plan that fits your needs, we recommend configuring at least one spare ml.p6e-gb200.36xlarge compute node.

Create an UltraServer cluster with SageMaker HyperPod

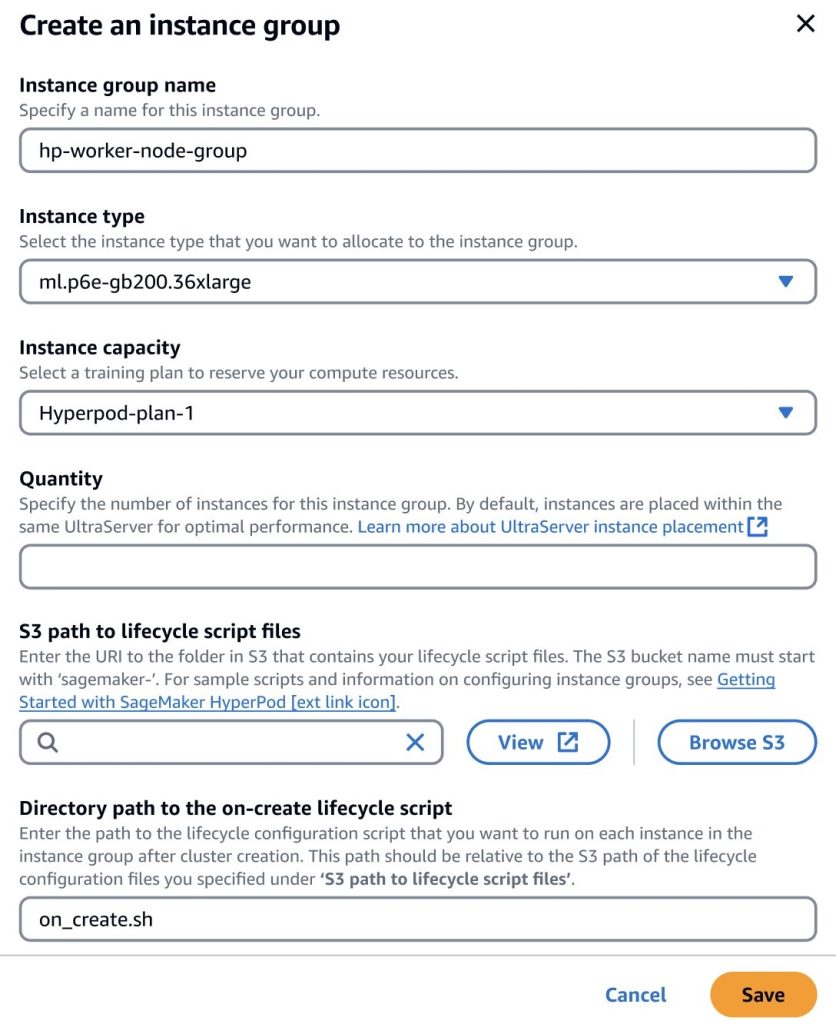

After purchasing an UltraServer training plan, you can add the capacity to an instance group of type ml.p6e-gb200.36xlarge within your SageMaker HyperPod cluster and specify the number of instances you want to provision, up to the amount available in your training plan. For example, if you purchased a training plan for one ml.u-p6e-gb200x36 UltraServer, you can provision up to 9 compute nodes, whereas if you purchased a training plan for one ml.u-p6e-gb200x72 UltraServer, you can provision up to 18 compute nodes.

By default, SageMaker optimizes the placement of instance group nodes within the same UltraServer, so that GPUs across nodes are interconnected within the same NVLink domain and your jobs get the best data transfer performance. For example, if you purchase two ml.u-p6e-gb200x72 UltraServers with 17 compute nodes available on each (assuming you configured two spares) and then create an instance group with 24 nodes, the first 17 compute nodes are placed on UltraServer A and the other 7 compute nodes are placed on UltraServer B.
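The example above can be reproduced with a simple fill-first model. The sketch below is an illustration of that arithmetic only: it assumes one spare per UltraServer and a greedy strategy that fills one UltraServer before moving to the next. The function names are hypothetical, and SageMaker's actual placement logic is not described in this post.

```python
# Compute nodes per UltraServer type, as stated in this post.
NODES_PER_ULTRASERVER = {
    "ml.u-p6e-gb200x36": 9,
    "ml.u-p6e-gb200x72": 18,
}


def available_nodes(ultraserver_type, spares=1):
    """Nodes left for an instance group after reserving spares (assumed per UltraServer)."""
    return NODES_PER_ULTRASERVER[ultraserver_type] - spares


def place_nodes(requested, capacities):
    """Greedy fill-first placement: fill each UltraServer before using the next.

    capacities maps an UltraServer name to its available node count.
    This is an assumed strategy, used only to illustrate the example.
    """
    if requested > sum(capacities.values()):
        raise ValueError("requested more nodes than the training plan provides")
    placement = {}
    remaining = requested
    for name, capacity in capacities.items():
        if remaining == 0:
            break
        take = min(capacity, remaining)
        placement[name] = take
        remaining -= take
    return placement


if __name__ == "__main__":
    capacities = {
        "UltraServer A": available_nodes("ml.u-p6e-gb200x72"),
        "UltraServer B": available_nodes("ml.u-p6e-gb200x72"),
    }
    print(place_nodes(24, capacities))
```

Filling one UltraServer first keeps as many nodes as possible inside a single NVLink domain, which is the behavior the example describes.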

Conclusion

P6e-GB200 UltraServers help organizations train, fine-tune, and serve the world's most ambitious AI models at scale. By combining exceptional GPU resources, ultra-fast networking, and industry-leading memory with the automation and scalability of SageMaker HyperPod, enterprises can accelerate the different phases of the AI lifecycle, from experimentation and distributed training through to seamless inference and deployment. This solution breaks new ground in performance and flexibility while reducing operational complexity and cost, so that innovators can unlock new possibilities and lead the next era of AI advancement.

About the author

Nathan Arnold is a Senior AI/ML Specialist Solutions Architect at AWS based in Austin, Texas. He helps AWS customers, from small startups to large enterprises, train and deploy foundation models efficiently on AWS. When he isn't working with customers, he enjoys hiking, trail running, and playing with his dog.
