Not long ago, I was preparing to send an important bottom-of-funnel (BOFU) email to our audience. I had two subject lines and couldn’t decide which one would perform better.

Naturally, I thought, “Let’s A/B test them!” However, our email marketer quickly pointed out a limitation I hadn’t considered: our list of 5,000 subscribers might be too small for a reliable test.

At first, this seemed counterintuitive. Surely 5,000 subscribers was enough to run a simple test between two subject lines?

This conversation led me down a fascinating rabbit hole into the world of statistical significance and why it matters so much in marketing decisions.

While tools like HubSpot’s free statistical significance calculator can make the math easier, understanding what they calculate and how it affects your strategy is invaluable.

Below, I’ll break down statistical significance with a real-world example, giving you the tools to make smarter, data-driven decisions in your marketing campaigns.


What’s statistical significance?

In marketing, statistical significance means the results of your research show that the relationship between the variables you’re testing (like conversion rate and landing page type) is unlikely to be random; one variable likely influences the other.

Why is statistical significance important?

Statistical significance is like a truth detector for your data. It helps you determine whether the difference between any two options, like your subject lines, is likely real or just random chance.

Think of it like flipping a coin. If you flip it 5 times and get heads 4 times, does that mean your coin is biased? Probably not.

But if you flip it 1,000 times and get heads 800 times, now you might be onto something.
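To put numbers on the coin example, here is a minimal Python sketch (standard library only) that computes an exact two-sided binomial p-value for a fair coin: the chance of a result at least this lopsided if the coin is actually fair. The function name `two_sided_binomial_p` is my own, not from any tool mentioned in this post.

```python
from math import comb


def two_sided_binomial_p(heads: int, flips: int) -> float:
    """Exact two-sided p-value for `heads` out of `flips` on a fair coin.

    Doubling one tail is valid here because a fair coin's binomial
    distribution is symmetric around flips / 2.
    """
    k = max(heads, flips - heads)  # mirror so we always sum the upper tail
    tail = sum(comb(flips, i) for i in range(k, flips + 1))
    return min(1.0, 2 * tail / 2**flips)


for heads, flips in [(4, 5), (800, 1000)]:
    p = two_sided_binomial_p(heads, flips)
    print(f"{heads}/{flips} heads: p = {p:.3g}")
```

Four heads in five flips gives p = 0.375, so a fair coin does that more than a third of the time. The p-value for 800 heads in 1,000 flips is so small it is effectively zero.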

That’s the role statistical significance plays: it separates coincidence from meaningful patterns. This was exactly what our email expert was trying to explain when I suggested we A/B test our subject lines.

Just like in the coin flip example, she pointed out that what looks like a meaningful difference, such as a 2% gap in open rates, might not tell the whole story.

We needed to understand statistical significance before making decisions that could affect our entire email strategy.

She then walked me through her testing process:

- Group A would receive Subject Line A, and Group B would get Subject Line B.

- She’d track open rates for both groups, compare the results, and declare a winner.

“Seems straightforward, right?” she asked. Then she revealed where it gets tricky.

She showed me a scenario: Imagine Group A had an open rate of 25% and Group B had an open rate of 27%. At first glance, it looks like Subject Line B performed better. But can we trust this result?

What if the difference was just due to random chance and not because Subject Line B was actually better?

This question led me down a fascinating path to understand why statistical significance matters so much in marketing decisions. Here’s what I discovered:

Here’s Why Statistical Significance Matters

- Sample size influences reliability: My initial assumption that our 5,000 subscribers would be enough was wrong. When split evenly between the two groups, each subject line would only be tested on 2,500 people. With an average open rate of 20%, we’d only see around 500 opens per group. I learned that’s not a huge number when trying to detect small differences like a 2% gap. The smaller the sample, the higher the chance that random variability skews your results.

- The difference might not be real: This was eye-opening for me. Even if Subject Line B had 10 more opens than Subject Line A, that doesn’t mean it’s definitively better. A statistical significance test would help determine whether this difference is meaningful or could have happened by chance.

- Making the wrong decision is costly: This really hit home. If we falsely concluded that Subject Line B was better and used it in future campaigns, we might miss opportunities to engage our audience more effectively. Worse, we could waste time and resources scaling a strategy that doesn’t actually work.
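To see why 2,500 recipients per group can fall short, here is a rough sample-size sketch using the standard normal-approximation formula for comparing two proportions. It assumes a 20% baseline open rate, a 2-percentage-point lift to 22%, a 95% confidence level, and 80% power (the chance of detecting the lift if it is real). The 80% power figure and the function name `sample_size_per_group` are my assumptions, not part of our actual test.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_group(p1: float, p2: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate recipients needed per group to detect p1 vs. p2 with a
    two-sided two-proportion z-test (normal approximation)."""
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = z.inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


# A 20% baseline open rate vs. a 2-point lift to 22%
print(sample_size_per_group(0.20, 0.22))
```

Under these assumptions the result comes out to roughly 6,500 recipients per subject line, well above the 2,500 our list allowed.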

Through my research, I discovered that statistical significance helps you avoid acting on what could be a coincidence. It asks a critical question: ‘If there were no real difference between the two subject lines, how likely is it that we’d see a gap this large just by chance?’

If the answer is ‘very unlikely,’ you can be reasonably confident the result is real. If not, it’s time to rethink your approach.
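One way to make that question concrete is a quick simulation: give both subject lines the same true 20% open rate, send each to 2,500 people many times over, and count how often a gap of 2 percentage points or more shows up anyway. This is a minimal sketch using only Python’s `random` module; the function name, trial count, and seed are arbitrary choices of mine.

```python
import random


def chance_of_gap(n_per_group: int, open_rate: float, gap: float,
                  trials: int = 1000, seed: int = 42) -> float:
    """Share of simulated A/B tests whose open rates differ by at least `gap`,
    when BOTH subject lines have the same true open rate (no real difference)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Each recipient opens independently with probability `open_rate`.
        opens_a = sum(rng.random() < open_rate for _ in range(n_per_group))
        opens_b = sum(rng.random() < open_rate for _ in range(n_per_group))
        if abs(opens_a - opens_b) / n_per_group >= gap:
            hits += 1
    return hits / trials


# 2,500 recipients per subject line, 20% open rate for both, 2-point gap
share = chance_of_gap(2500, 0.20, 0.02)
print(f"{share:.1%} of no-difference tests showed a gap of 2 points or more")
```

The normal approximation puts this chance at around 8%, so the simulated share should land in that neighborhood. That is higher than the 5% cutoff behind a 95% confidence level, which is why a 2-point gap on this list size isn’t convincing on its own.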

Though I was eager to learn the statistical calculations, I first needed to answer a more fundamental question: when should we even run these tests?

How to Test for Statistical Significance: My Quick Decision Framework

When deciding whether to run a test, use this decision framework to assess whether it’s worth the time and effort. Here’s how I break it down.

Run tests when:

- You have a sufficient sample size. The test can reach statistical significance given the number of users or recipients.

- The change could impact business metrics. For example, testing a new call-to-action could directly improve conversions.

- You can wait for the full test duration. Impatience can lead to inconclusive results. I always make sure the test has enough time to run its course.

- The difference would justify the implementation cost. If the results could lead to meaningful ROI or reduced resource costs, it’s worth testing.

Don’t run the test when:

- The sample size is too small. Without enough data, the results won’t be reliable or actionable.

- You need immediate results. If a decision is urgent, testing may not be the best approach.

- The change is minimal. Testing small tweaks, like moving a button a few pixels, often requires enormous sample sizes to show meaningful results.

- Implementation cost exceeds potential benefit. If the resources needed to implement the winning version outweigh the expected gains, testing isn’t worth it.

Test Prioritization Matrix

When you’re juggling multiple test ideas, I recommend using a prioritization matrix to focus on high-impact opportunities.

High-priority tests:

- High-traffic pages. These pages offer the largest sample sizes and the fastest path to significance.

- Major conversion points. Test areas like sign-up forms or checkout processes that directly affect revenue.

- Revenue-generating elements. Headlines, CTAs, or offers that drive purchases or subscriptions.

- Customer acquisition touchpoints. Email subject lines, ads, or landing pages that influence lead generation.

Low-priority tests:

- Low-traffic pages. These pages take much longer to produce actionable results.

- Minor design elements. Small stylistic changes often don’t move the needle enough to justify testing.

- Non-revenue pages. About pages or blogs without direct links to conversions may not warrant extensive testing.

- Secondary metrics. Testing for vanity metrics like time on page may not align with business goals.

This framework helps you focus your efforts where they matter most.

But this led to my next big question: once you’ve decided to run a test, how do you actually determine statistical significance?

Luckily, while the math might sound intimidating, there are simple tools and methods for getting accurate answers. Let’s break it down step by step.

How to Calculate and Determine Statistical Significance

- Decide what you want to test.

- Determine your hypothesis.

- Start collecting your data.

- Calculate Chi-Squared results.

- Calculate your expected values.

- See how your results differ from what you expected.

- Find your sum.

- Interpret your results.

- Determine statistical significance.

- Report on statistical significance to your team.

1. Decide what you want to test.

The first step is to decide what you’d like to test. This could be:

- Comparing conversion rates on two landing pages with different images.

- Testing click-through rates on emails with different subject lines.

- Comparing conversion rates on different call-to-action buttons at the end of a blog post.

The possibilities are endless, but simplicity is key. Start with a specific piece of content you want to improve, and set a clear goal, such as boosting conversion rates or increasing views.

While you can explore more complex approaches, like testing multiple variations (multivariate tests), I recommend starting with a straightforward A/B test. For this example, I’ll compare two email subject lines with the goal of increasing open rates.

Pro tip: If you’re curious about the difference between A/B and multivariate tests, check out this guide on A/B vs. Multivariate Testing.

2. Determine your hypothesis.

When it comes to A/B testing, our resident email expert always emphasizes starting with a clear hypothesis. She explained that having a hypothesis helps focus the test and ensures meaningful results.

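In this case, since we’re testing two email subject lines, the hypothesis might look like this: the choice of subject line affects the open rate. The test itself checks the opposite, or null, hypothesis: that there is no relationship between subject line and open rate.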

Another key step is deciding on a confidence level before the test begins. A 95% confidence level is standard in most tests: it means you accept no more than a 5% chance of declaring a winner when the difference is really just random chance.

This structured approach makes it easier to interpret your results and take meaningful action.

3. Start collecting your data.

Once you’ve determined what you’d like to test, it’s time to start collecting your data. Since the goal of this test is to figure out which subject line performs better for future campaigns, you’ll need to select an appropriate sample size.

For emails, this might mean splitting your list into random sample groups and sending each group a different subject line variation.

For instance, if you’re testing two subject lines, divide your list evenly and randomly to ensure both groups are comparable.
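Here is a minimal Python sketch of an even, random split, assuming your list is simply a Python list of email addresses (the addresses below are placeholders, and `split_list` is a hypothetical helper, not an email-platform API). Shuffling with a seeded `random.Random` makes the split reproducible if you need to audit it later.

```python
from __future__ import annotations

import random


def split_list(subscribers: list[str], seed: int | None = None) -> tuple[list[str], list[str]]:
    """Shuffle a copy of the list and cut it in half for an A/B test."""
    shuffled = subscribers[:]  # copy so the caller's list isn't reordered
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


emails = [f"user{i}@example.com" for i in range(5000)]  # placeholder addresses
group_a, group_b = split_list(emails, seed=7)
print(len(group_a), len(group_b))  # 2500 2500
```

Group A would then receive Subject Line A and Group B Subject Line B. With an odd-sized list, the second group gets the extra recipient.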

Determining the right sample size can be tricky, as it varies with each test. As a bare minimum, aim for an expected value of at least 5 in every cell of your results table (opens and non-opens for each variation).

This keeps the Chi-Squared test valid, although detecting small differences usually takes a much larger sample. (I’ll cover how to calculate expected values further down.)

4. Calculate Chi-Squared results.

In researching how to analyze our email testing results, I discovered that while there are several statistical tests available, the Chi-Squared test is particularly well-suited for A/B testing scenarios like ours.

This made perfect sense for our email testing scenario. A Chi-Squared test is used for discrete data, which simply means the results fall into distinct categories.

In our case, an email recipient will either open the email or not open it — there’s no middle ground.

One key concept I needed to understand was the confidence level, which is tied to the test’s alpha (alpha = 1 − confidence level). A 95% confidence level is standard and corresponds to alpha = 0.05: if there were truly no relationship, there would be only a 5% chance of seeing a result this extreme and wrongly calling it significant.

For example: “The results are statistically significant with 95% confidence” indicates that the alpha was 0.05, meaning there’s a 1 in 20 chance of a false positive when no real difference exists.

My research showed that organizing the data into a simple chart for clarity is the best way to start.

Since I’m testing two variations (Subject Line A and Subject Line B) and two outcomes (opened, did not open), I can use a 2×2 chart:

| Outcome | Subject Line A | Subject Line B | Total |
|---|---|---|---|
| Opened | X (e.g., 125) | Y (e.g., 135) | X + Y |
| Did Not Open | Z (e.g., 375) | W (e.g., 365) | Z + W |
| Total | X + Z | Y + W | N |

This makes it easy to visualize the data and calculate your Chi-Squared results. Totals for each column and row provide a clear overview of the outcomes in aggregate, setting you up for the next step: running the actual test.
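If your raw numbers are opens and sends per subject line, a small helper can build this 2×2 table and its totals. This is a sketch; the function name `contingency_table` and its argument order are my own choices. It uses the example counts from the chart above: 125 and 135 opens out of 500 sends each (X + Z and Y + W).

```python
def contingency_table(opens_a: int, sent_a: int, opens_b: int, sent_b: int):
    """Build the 2x2 observed table plus row, column, and grand totals.

    Rows are (Opened, Did Not Open); columns are (Subject Line A, Subject Line B).
    """
    table = [
        [opens_a, opens_b],                    # Opened
        [sent_a - opens_a, sent_b - opens_b],  # Did Not Open
    ]
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    return table, row_totals, col_totals, grand_total


table, row_totals, col_totals, grand_total = contingency_table(125, 500, 135, 500)
for label, row, total in zip(["Opened", "Did Not Open"], table, row_totals):
    print(f"{label:<13}{row[0]:>6}{row[1]:>6}{total:>7}")
print(f"{'Total':<13}{col_totals[0]:>6}{col_totals[1]:>6}{grand_total:>7}")
```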

While tools like HubSpot’s A/B Testing Kit can calculate statistical significance automatically, understanding the underlying process helps you make better testing decisions. Let’s look at how these calculations actually work:

Running the Chi-Squared test

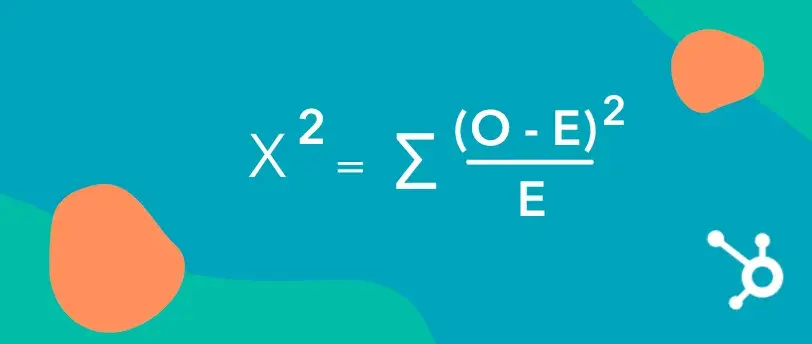

Once I’ve organized my data into a chart, the next step is to calculate statistical significance using the Chi-Squared formula.

Here’s what the formula looks like:

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

In this formula:

- Σ means to sum (add up) all calculated values.

- O represents the observed (actual) values from your test.

- E represents the expected values, which you calculate based on the totals in your chart.

To use the formula:

- Subtract the expected value (E) from the observed value (O) for each cell in the chart.

- Square the result.

- Divide the squared difference by the expected value (E).

- Repeat these steps for all cells, then add up all the results (that’s the Σ) to get your Chi-Squared value.

This calculation tells you whether the differences between your groups are statistically significant or likely due to chance.
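The four steps above translate directly into code. This minimal Python sketch (the function name `chi_square` is my own) takes observed and expected counts as flat lists, one entry per cell in the same order, and returns the Chi-Squared statistic. The example uses the observed (550, 450, 1,950, 2,050) and expected (500, 500, 2,000, 2,000) counts from the worked example in steps 5 to 7.

```python
from __future__ import annotations


def chi_square(observed: list[float], expected: list[float]) -> float:
    """Sum of (O - E)^2 / E over matching cells."""
    if len(observed) != len(expected):
        raise ValueError("observed and expected need the same number of cells")
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


# Cell order: A opened, B opened, A did not open, B did not open
observed = [550, 450, 1950, 2050]
expected = [500, 500, 2000, 2000]
print(chi_square(observed, expected))  # 12.5
```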

5. Calculate your expected values.

Now it’s time to calculate the expected values (E) for each outcome in your test. If there’s no relationship between the subject line and whether an email is opened, we’d expect the open rate to be the same for both variations (A and B).

Let’s assume:

- Total emails sent = 5,000

- Total opens = 1,000 (20% open rate)

- Subject Line A was sent to 2,500 recipients.

- Subject Line B was also sent to 2,500 recipients.

Here’s how you organize the observed data in a table:

| Outcome | Subject Line A | Subject Line B | Total |
|---|---|---|---|
| Opened | 550 (O) | 450 (O) | 1,000 |
| Did Not Open | 1,950 (O) | 2,050 (O) | 4,000 |
| Total | 2,500 | 2,500 | 5,000 |

Expected Values (E):

To calculate the expected value for each cell, use this formula:

$$E = \frac{\text{Row Total} \times \text{Column Total}}{\text{Grand Total}}$$

For example, to calculate the expected number of opens for Subject Line A:

$$E = \frac{1{,}000 \times 2{,}500}{5{,}000} = 500$$

Repeat this calculation for each cell:

| Outcome | Subject Line A (E) | Subject Line B (E) | Total |
|---|---|---|---|
| Opened | 500 | 500 | 1,000 |
| Did Not Open | 2,000 | 2,000 | 4,000 |
| Total | 2,500 | 2,500 | 5,000 |

These expected values are the baseline you’ll compare against the observed values in the Chi-Squared formula.
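The row-total-times-column-total rule applies to any table, so it is easy to automate. This Python sketch (the function name `expected_values` is mine) assumes the observed counts are stored as a list of rows (opened, did not open) with one column per subject line, here the observed counts used in step 6. It also checks the common rule of thumb that every expected count should be at least 5.

```python
from __future__ import annotations


def expected_values(table: list[list[int]]) -> list[list[float]]:
    """Expected count for each cell: (row total x column total) / grand total."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    return [[r * c / grand_total for c in col_totals] for r in row_totals]


observed = [
    [550, 450],    # Opened: Subject Line A, Subject Line B
    [1950, 2050],  # Did Not Open: Subject Line A, Subject Line B
]
expected = expected_values(observed)
print(expected)  # [[500.0, 500.0], [2000.0, 2000.0]]

# Common rule of thumb: every expected count should be at least 5
print(all(e >= 5 for row in expected for e in row))  # True
```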

6. See how your results differ from what you expected.

To calculate the Chi-Square value, compare the observed frequencies (O) to the expected frequencies (E) in each cell of your table. Each cell contributes:

$$\frac{(O - E)^2}{E}$$

Steps:

- Subtract the expected value from the observed value.

- Square the result, which makes every difference positive and amplifies larger gaps.

- Divide this squared difference by the expected value.

- Add up the results for every cell to get your total Chi-Square value.

Let’s work through the data from the earlier example:

| Outcome | Subject Line A (O) | Subject Line B (O) | Subject Line A (E) | Subject Line B (E) | (O − E)²/E for Subject Line A |
|---|---|---|---|---|---|
| Opened | 550 | 450 | 500 | 500 | (550 − 500)² / 500 = 5 |
| Did Not Open | 1,950 | 2,050 | 2,000 | 2,000 | (1,950 − 2,000)² / 2,000 = 1.25 |

Adding up the two (O − E)²/E values for Subject Line A gives:

$$5 + 1.25 = 6.25$$

That’s only Subject Line A’s share of the Chi-Square value. Subject Line B’s cells (450 and 2,050) sit just as far from their expected values, so they contribute another 5 and 1.25; the next step adds everything together.

What does this value mean?

You’ll compare the total Chi-Square value to a critical value from a Chi-Square distribution table, based on your degrees of freedom, (number of rows − 1) × (number of columns − 1), and your confidence level. If your value exceeds the critical value, the difference is statistically significant.

7. Find your sum.

Finally, I add up the results from all cells in the table to get my Chi-Square value. This value represents the total difference between the observed and expected results.

Using the earlier example:

| Outcome | (O − E)²/E for Subject Line A | (O − E)²/E for Subject Line B |
|---|---|---|
| Opened | 5 | 5 |
| Did Not Open | 1.25 | 1.25 |

$$\chi^2 = 5 + 5 + 1.25 + 1.25 = 12.5$$

Compare your Chi-Square value to the distribution table.

To determine whether the results are statistically significant, I compare the Chi-Square value (12.5) to a critical value from a Chi-Square distribution table, based on:

- Degrees of freedom (df): This is determined by (number of rows − 1) × (number of columns − 1). For a 2×2 table, df = 1.

- Alpha (α): The significance threshold, equal to 1 minus the confidence level. With an alpha of 0.05 (95% confidence), the critical value for df = 1 is 3.84.

In this case:

- Chi-Square Value = 12.5

- Critical Value = 3.84

Since 12.5 > 3.84, the results are statistically significant. This suggests there’s a relationship between the subject line and the open rate.
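If you don’t have a distribution table handy, you can compute the same numbers. With one degree of freedom, a Chi-Square statistic is the square of a standard normal variable, so Python’s standard library is enough: the critical value is the squared two-sided z cutoff, and the p-value is `erfc(sqrt(chi2 / 2))`. This sketch only covers df = 1 (a 2×2 table); larger tables need a general Chi-Square distribution function, such as the one in SciPy.

```python
from math import erfc, sqrt
from statistics import NormalDist

ALPHA = 0.05  # 95% confidence level

# With df = 1, a Chi-Square statistic is a squared standard normal,
# so the critical value is the two-sided z cutoff squared.
critical = NormalDist().inv_cdf(1 - ALPHA / 2) ** 2


def p_value_df1(chi2: float) -> float:
    """Probability of a Chi-Square (df = 1) value at least this large by chance."""
    return erfc(sqrt(chi2 / 2))


print(f"critical value: {critical:.2f}")  # critical value: 3.84
for chi2 in (12.5, 0.95):
    verdict = "significant" if chi2 >= critical else "not significant"
    print(f"chi2 = {chi2}: p = {p_value_df1(chi2):.4f} ({verdict})")
```

The 12.5 from our example comes out significant with a p-value well under 0.001, while a value of 0.95 does not clear the 3.84 threshold.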

If the Chi-Square value had been lower…

For example, if the Chi-Square value were 0.95, it would be less than 3.84, meaning the results would not be statistically significant. You wouldn’t have enough evidence to conclude there’s a relationship between the subject line and the open rate.

8. Interpret your results.

As I dug deeper into statistical testing, I learned that interpreting results correctly is just as important as running the tests themselves. Through my research, I discovered a systematic approach to evaluating test outcomes.

Strong Results (act immediately)

Results are considered strong and actionable when they meet these key criteria:

- 95%+ confidence level. The results are statistically significant, with a low risk of being due to chance.

- Consistent results across segments. Performance holds steady across different user groups or demographics.

- A clear winner emerges. One version consistently outperforms the other.

- Matches business logic. The results align with expectations or reasonable business assumptions.

When results meet these criteria, the best practice is to act quickly: implement the winning variation, document what worked, and plan follow-up tests for further optimization.

Weak Results (need more data)

On the flip side, results are typically considered weak or inconclusive when they show these characteristics:

- Below 95% confidence level. The results don’t meet the threshold for statistical significance.

- Inconsistent across segments. One version performs well with certain groups but poorly with others.

- No clear winner. Both variations show similar performance without a significant difference.

- Contradicts previous tests. Results differ from past experiments without a clear explanation.

In these cases, the recommended approach is to gather more data by retesting with a larger sample size or extending the test duration.

Next Steps Decision Tree

My research revealed a practical decision framework for determining next steps after interpreting results.

If the results are significant:

- Implement the winning version. Roll out the better-performing variation.

- Document learnings. Record what worked and why for future reference.

- Plan follow-up tests. Build on the success by testing related elements (e.g., testing headlines if subject lines performed well).

- Scale to similar areas. Apply insights to other campaigns or channels.

If the results aren’t significant:

- Continue with the current version. Stick with the existing design or content.

- Plan a larger sample test. Revisit the test with a larger audience to get a more conclusive answer.

- Test bigger changes. Experiment with more dramatic variations to increase the likelihood of a measurable impact.

- Focus on other opportunities. Redirect resources to higher-priority tests or initiatives.

This systematic approach ensures that every test, whether significant or not, contributes valuable insights to the optimization process.

9. Determine statistical significance.

Through my research, I discovered that determining statistical significance comes down to understanding how to interpret the Chi-Square value. Here’s what I learned.

Two key factors determine statistical significance:

- Degrees of freedom (df). This is calculated from the number of rows and columns in your table: (rows − 1) × (columns − 1). For a 2×2 table, df = 1.

- Critical value. This is determined by the degrees of freedom and the confidence level (e.g., 95% confidence corresponds to an alpha of 0.05).

Comparing values:

The process turned out to be quite simple: you compare your calculated Chi-Square value to the critical value from a Chi-Square distribution table. For example, with df = 1 and a 95% confidence level, the critical value is 3.84.

What the numbers tell you:

- If your Chi-Square value is greater than or equal to the critical value, your results are statistically significant. This suggests the observed differences are unlikely to be due to random chance alone.

- If your Chi-Square value is less than the critical value, your results aren’t statistically significant, indicating the observed differences could be due to random chance.

What happens if the results aren’t significant? Through my investigation, I learned that non-significant results aren’t necessarily failures; they’re common and provide valuable insights. Here’s what I discovered about handling such situations.

Review the test setup:

- Was the sample size adequate?

- Were the variations distinct enough?

- Did the test run long enough?

Making decisions with non-significant results:

When results aren’t significant, there are several productive paths forward.

- Run another test with a larger sample size.

- Test more dramatic variations that might show clearer differences.

- Use the data as a baseline for future experiments.

10. Report on statistical significance to your team.

After running your experiment, it’s essential to communicate the results to your team so everyone understands the findings and agrees on the next steps.

Using the email subject line example, here’s how I’d approach reporting.

- If results aren’t significant: I’d tell my team that the test results indicate no statistically significant difference between the two subject lines. In other words, we don’t have evidence that the choice of subject line affects open rates. We could either retest with a larger sample size or move forward with either subject line.

- If the results are significant: I’d explain that Subject Line A performed significantly better than Subject Line B at the 95% confidence level. Based on this result, we should use Subject Line A for our upcoming campaign to maximize open rates.

When you’re reporting your findings, here are some best practices.

- Use clear visuals: Include a summary table or chart that compares observed and expected values alongside the calculated Chi-Square value.

- Explain the implications: Go beyond the numbers to clarify how the results will inform future decisions.

- Propose next steps: Whether you’re implementing the winning variation or planning follow-up tests, make sure your team knows what to do.
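To make this report repeatable, you could script it. The following is a hypothetical helper (the name `ab_report` and its wording are mine, not a HubSpot feature). It takes opens and sends for each subject line, runs the 2×2 Chi-Square test against the 3.84 critical value, and returns a plain-language summary your team can read.

```python
from math import erfc, sqrt

CRITICAL_95_DF1 = 3.84  # Chi-Square critical value for df = 1, alpha = 0.05


def ab_report(opens_a: int, sent_a: int, opens_b: int, sent_b: int) -> str:
    """Summarize a two-subject-line test with a 2x2 Chi-Square test."""
    observed = [[opens_a, opens_b], [sent_a - opens_a, sent_b - opens_b]]
    rows = [sum(r) for r in observed]
    cols = [sum(c) for c in zip(*observed)]
    n = sum(rows)
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            e = rows[i] * cols[j] / n  # expected count for this cell
            chi2 += (observed[i][j] - e) ** 2 / e
    p = erfc(sqrt(chi2 / 2))  # upper-tail probability, df = 1
    rate_a, rate_b = opens_a / sent_a, opens_b / sent_b
    lines = [
        f"Subject Line A open rate: {rate_a:.1%} ({opens_a}/{sent_a})",
        f"Subject Line B open rate: {rate_b:.1%} ({opens_b}/{sent_b})",
        f"Chi-Square = {chi2:.2f} (critical value {CRITICAL_95_DF1}), p = {p:.4f}",
    ]
    if chi2 >= CRITICAL_95_DF1:
        winner = "A" if rate_a > rate_b else "B"
        lines.append(f"Significant at 95% confidence: recommend Subject Line {winner}.")
    else:
        lines.append("Not significant at 95% confidence: retest with a larger "
                     "sample or move forward with either subject line.")
    return "\n".join(lines)


print(ab_report(550, 2500, 450, 2500))
```

For the worked example (550 of 2,500 vs. 450 of 2,500), the summary reports a Chi-Square of 12.50 and recommends Subject Line A. For the smaller 125 vs. 135 example from the earlier chart, it reports no significant difference.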

By presenting results in a clear and actionable way, you help your team make data-driven decisions with confidence.

From Simple Test to Statistical Journey: What I Learned About Data-Driven Marketing

What started as a simple desire to test two email subject lines led me down a fascinating path into the world of statistical significance.

While my initial instinct was to just split our audience and compare results, I discovered that making truly data-driven decisions requires a more nuanced approach.

Three key insights transformed how I think about A/B testing:

First, sample size matters more than I initially thought. What seems like a large enough audience (even 5,000 subscribers!) might not actually give you reliable results, especially when you’re looking for small but meaningful differences in performance.

Second, statistical significance isn’t just a mathematical hurdle; it’s a practical tool that helps prevent costly mistakes. Without it, we risk scaling strategies based on coincidence rather than genuine improvement.

Finally, I learned that “failed” tests aren’t really failures at all. Even when results aren’t statistically significant, they provide valuable insights that help shape future experiments and keep us from wasting resources on minimal changes that won’t move the needle.

This journey has given me a new appreciation for the role of statistical rigor in marketing decisions.

While the math might seem intimidating at first, understanding these concepts makes the difference between guessing and knowing, between hoping our marketing works and being confident it does.

Editor’s note: This post was originally published in April 2013 and has been updated for comprehensiveness.
