You might have heard of website crawling before, and you may even have a vague idea of what it’s about, but do you know why it’s important, or what differentiates it from web crawling? (Yes, there is a difference!)

Search engines are increasingly ruthless when it comes to the quality of the sites they allow into the search results.

If you don’t grasp the basics of optimizing for web crawlers (and eventual users), your organic traffic may well pay the price.

A good website crawler can show you how to protect and even enhance your site’s visibility.

Here’s what you need to know about both web crawlers and site crawlers.

A web crawler is a software program or script that automatically scours the internet, analyzing and indexing web pages.

Also known as web spiders or spiderbots, web crawlers assess a page’s content to decide how to prioritize it in their indexes.

Googlebot, Google’s web crawler, meticulously browses the web, following links from page to page, gathering data, and processing content for inclusion in Google’s search engine.

How do web crawlers impact SEO?

Web crawlers analyze your page and decide how indexable or rankable it is, which ultimately determines your ability to drive organic traffic.

If you want to be discovered in search results, then it’s important you ready your content for crawling and indexing.

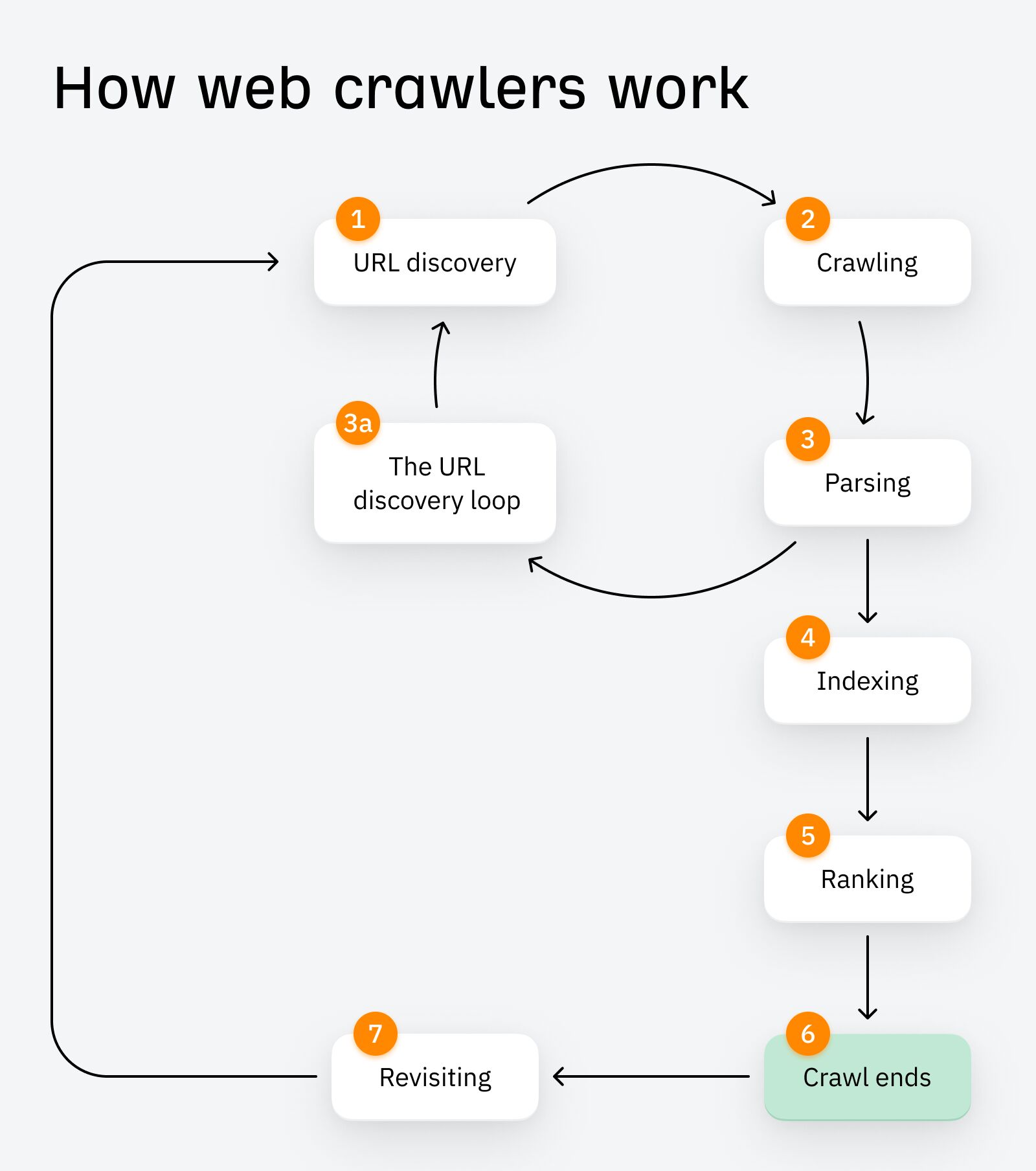

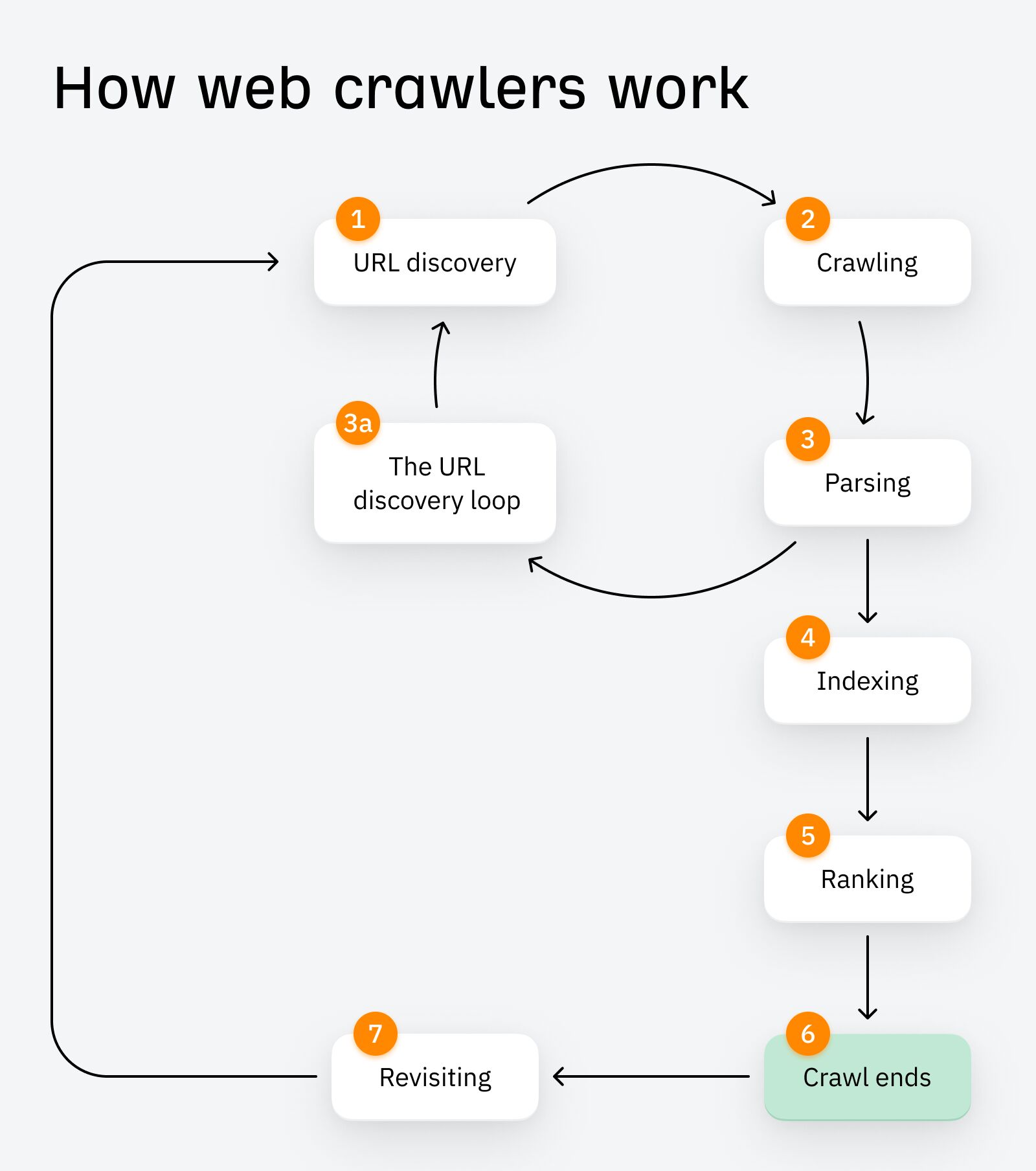

There are roughly seven stages to web crawling:

1. URL Discovery

When you publish your page (e.g. to your sitemap), the web crawler discovers it and uses it as a ‘seed’ URL. Just like seeds in the cycle of germination, these starter URLs allow the crawl and subsequent crawling loops to begin.

2. Crawling

After URL discovery, your page is scheduled and then crawled. Content like meta tags, images, links, and structured data is downloaded to the search engine’s servers, where it awaits parsing and indexing.

3. Parsing

Parsing essentially means analysis. The crawler bot extracts the data it has just crawled to determine how to index and rank the page.

3a. The URL Discovery Loop

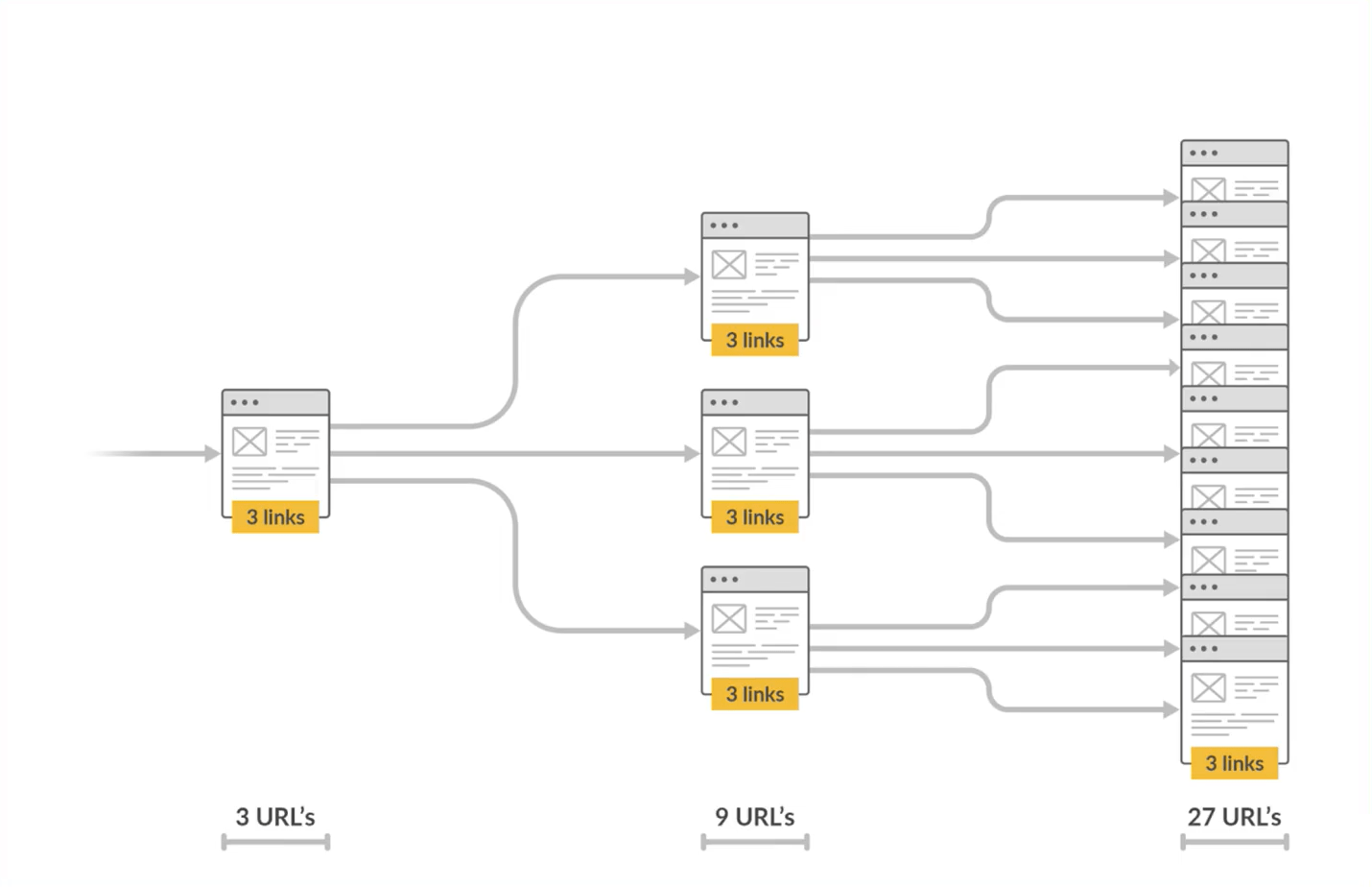

Also during the parsing phase, but worthy of its own subsection, is the URL discovery loop. This is when newly discovered links (including links discovered via redirects) are added to a queue of URLs for the crawler to visit. These are effectively new ‘seed’ URLs, and steps 1–3 get repeated as part of the ‘URL discovery loop’.

4. Indexing

While new URLs are being discovered, the original URL gets indexed. Indexing is when search engines store the data collected from web pages. It enables them to quickly retrieve relevant results for user queries.

5. Ranking

Indexed pages get ranked in search engines based on quality, relevance to search queries, and ability to meet certain other ranking factors. These pages are then served to users when they perform a search.

6. Crawl ends

Eventually the entire crawl (including the URL discovery loop) ends based on factors like time allotted, number of pages crawled, depth of links followed, etc.
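To make the discovery, crawling, parsing, and stopping steps concrete, here is a minimal sketch of a breadth-first crawl loop. It is an illustration under stated assumptions, not how any search engine actually works: `FAKE_WEB` is a hypothetical in-memory stand-in for real HTTP fetching, and the `max_pages` and `max_depth` limits are made-up values.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "web" (URL -> HTML). A real crawler would fetch over HTTP.
FAKE_WEB = {
    "https://example.com/": '<a href="/blog">Blog</a> <a href="/about">About</a>',
    "https://example.com/blog": '<a href="/blog/post-1">Post</a> <a href="/">Home</a>',
    "https://example.com/about": "<p>No links here.</p>",
    "https://example.com/blog/post-1": '<a href="/blog">Back</a>',
}


class LinkParser(HTMLParser):
    """Collects href values from <a> tags (the parsing step)."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed_urls, fetch, max_pages=100, max_depth=3):
    """Breadth-first crawl; returns URLs in the order they were crawled."""
    seeds = list(dict.fromkeys(seed_urls))      # de-duplicate, keep order
    queue = deque((url, 0) for url in seeds)    # URL discovery: seed URLs
    seen = set(seeds)
    crawled = []
    while queue and len(crawled) < max_pages:   # crawl ends: page limit
        url, depth = queue.popleft()
        html = fetch(url)                       # crawling: download the page
        if html is None:
            continue
        crawled.append(url)
        if depth >= max_depth:                  # crawl ends: depth limit
            continue
        parser = LinkParser()
        parser.feed(html)                       # parsing: extract links
        for href in parser.links:
            link = urljoin(url, href)
            if link not in seen:                # URL discovery loop: queue new URLs
                seen.add(link)
                queue.append((link, depth + 1))
    return crawled


order = crawl(["https://example.com/"], FAKE_WEB.get)
print(order)
```

The `seen` set is what keeps the loop from revisiting the same URL forever when pages link back to each other.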

7. Revisiting

Crawlers periodically revisit the page to check for updates, new content, or changes in structure.

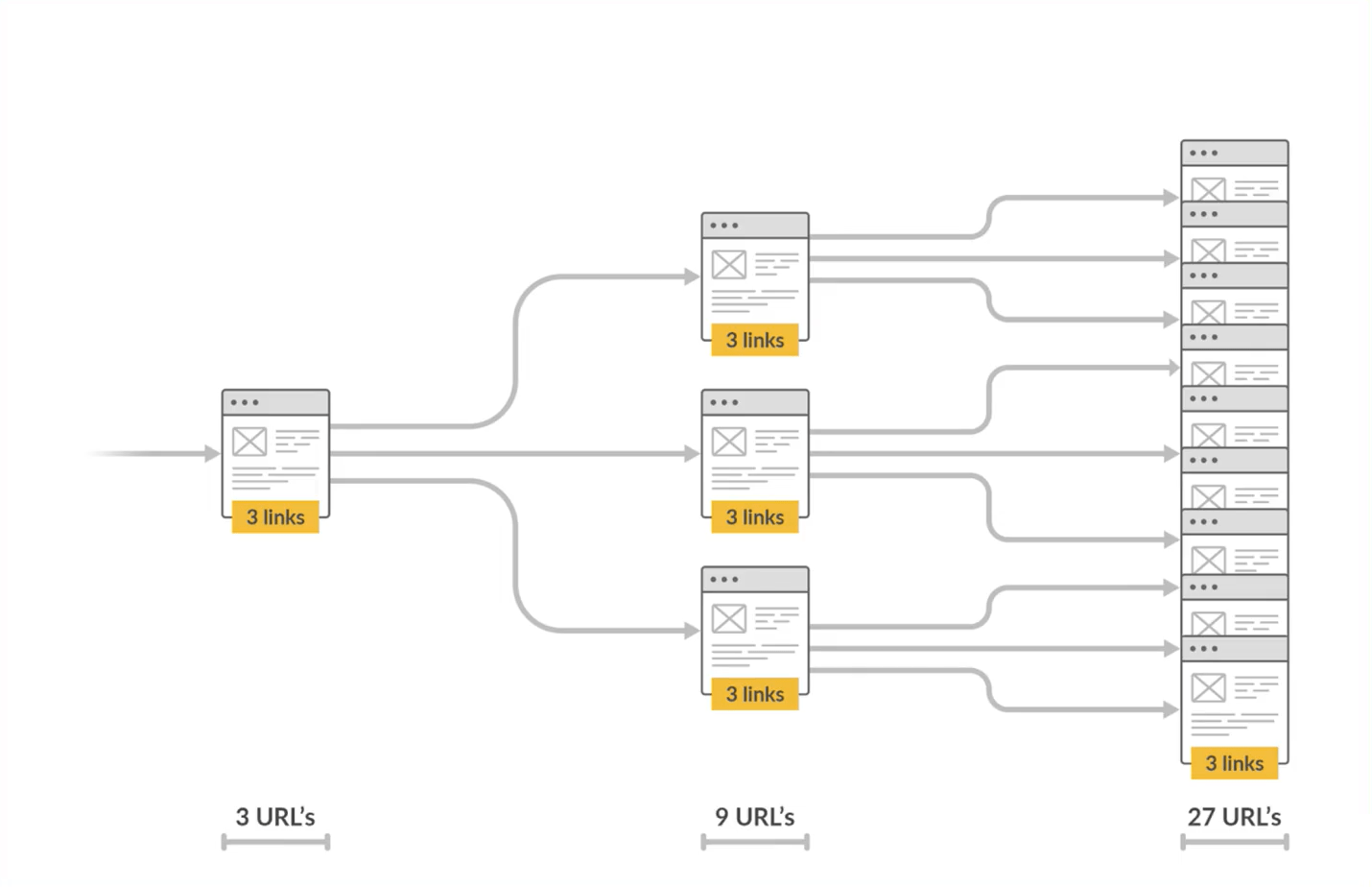

As you can probably guess, the number of URLs discovered and crawled in this process grows exponentially in just a few hops.
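A quick back-of-the-envelope calculation shows how fast this adds up. The sketch below assumes, purely for illustration, that every page links to the same number of new, unique pages (10 here, a made-up figure); real sites overlap heavily, so treat the result as an upper bound.

```python
def urls_discovered(links_per_page, hops):
    """Upper bound on URLs found within `hops` hops of one seed URL,
    assuming each page links to `links_per_page` new, unique pages."""
    return sum(links_per_page ** hop for hop in range(hops + 1))


for hops in range(5):
    print(hops, urls_discovered(10, hops))
```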

Search engine web crawlers are autonomous, meaning you can’t trigger them to crawl or switch them on/off at will.

You can, however, help crawlers out with:

XML sitemaps

An XML sitemap is a file that lists all the important pages on your website to help search engines accurately discover and index your content.
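As a rough illustration of what such a file contains, the sketch below builds a small sitemap with Python’s standard library. The URLs and dates are hypothetical, and the helper name `build_sitemap` is an assumption made for this example; most CMSs and SEO plugins generate the file for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) tuples; last_modified may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    # xml_declaration requires Python 3.8+.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True).decode("utf-8")


sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-15"),   # hypothetical pages
    ("https://example.com/blog", None),
])
print(sitemap_xml)
```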

Google’s URL Inspection tool

You can ask Google to consider recrawling your site content via its URL Inspection tool in Google Search Console. You may get a message in GSC if Google knows about your URL but hasn’t yet crawled or indexed it. If so, find out how to fix “Discovered - currently not indexed”.

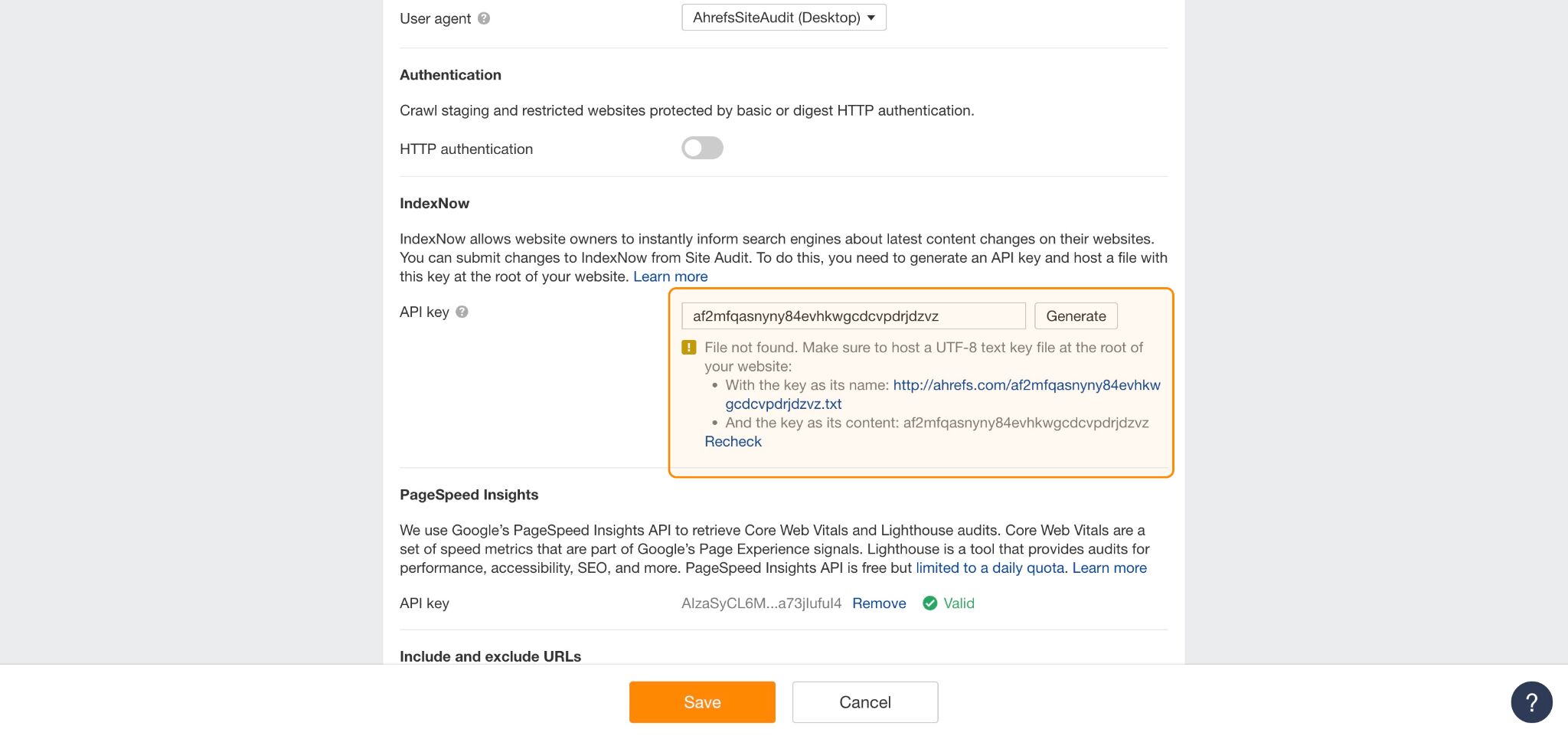

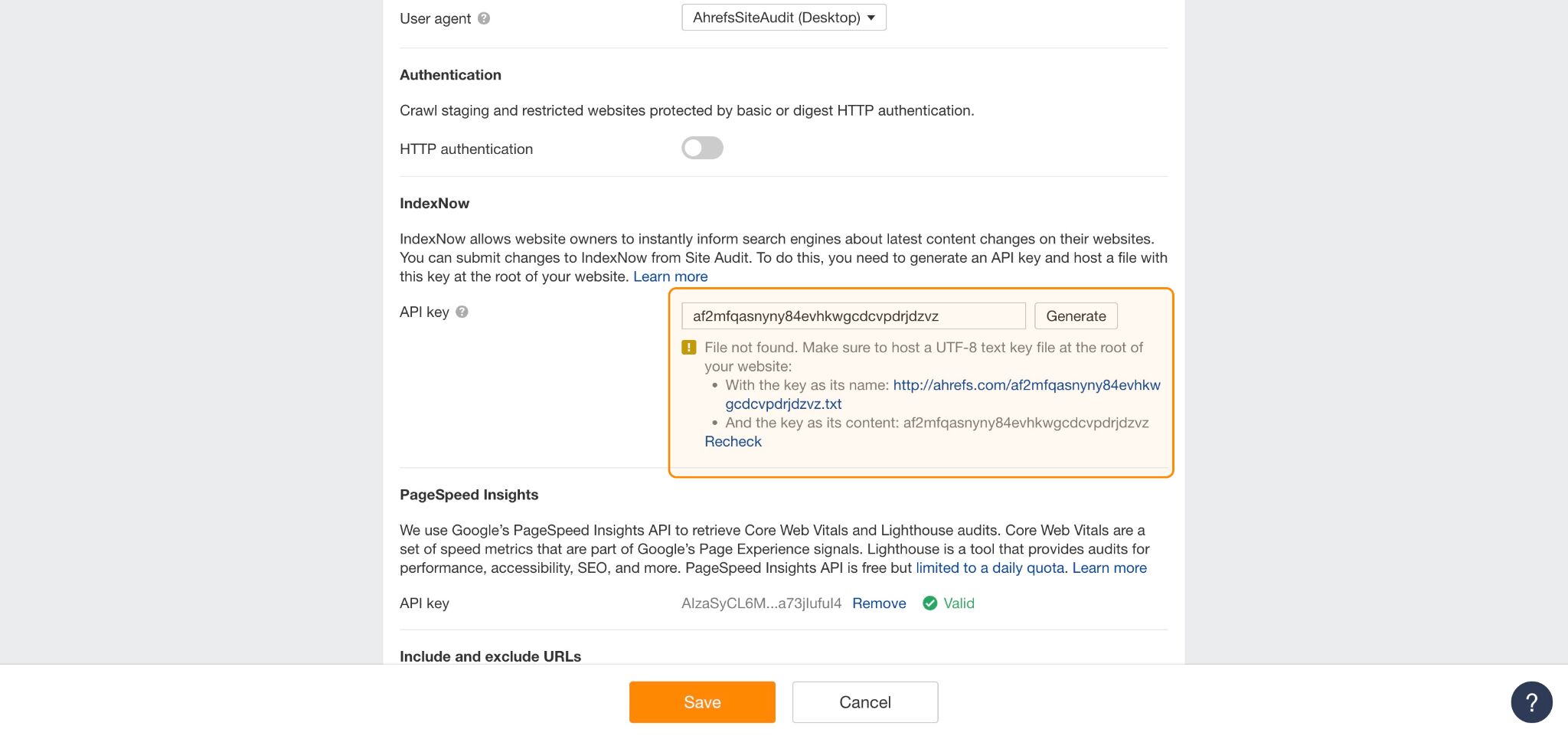

IndexNow

Instead of waiting for bots to re-crawl and index your content, you can use IndexNow to automatically ping search engines like Bing, Yandex, Naver, Seznam.cz, and Yep, whenever you:

- Add new pages

- Update existing content

- Remove outdated pages

- Implement redirects

You can set up automatic IndexNow submissions via Ahrefs Site Audit.
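If you are curious what a submission looks like under the hood, here is a minimal sketch that builds (but does not send) an IndexNow request. The endpoint and the JSON field names reflect the IndexNow protocol as I understand it, so check them against the official IndexNow documentation before relying on this; the host, key, and URLs are hypothetical placeholders.

```python
import json
import urllib.request

# Assumed shared endpoint; participating search engines also expose their own.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls, key_location=None):
    """Build a POST request announcing changed URLs (nothing is sent here)."""
    payload = {"host": host, "key": key, "urlList": list(urls)}
    if key_location:
        payload["keyLocation"] = key_location
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    host="example.com",                      # hypothetical site
    key="0123456789abcdef",                  # hypothetical key you host on your site
    urls=["https://example.com/new-page", "https://example.com/updated-page"],
    key_location="https://example.com/0123456789abcdef.txt",
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# urllib.request.urlopen(request)  # uncomment to actually submit
```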

Search engine crawling decisions are dynamic and a little obscure.

Although we don’t know the definitive criteria Google uses to determine when or how often to crawl content, we’ve deduced three of the most important areas.

This is based on breadcrumbs dropped by Google in support documentation and rep interviews.

1. Prioritize quality

Pages earning quality links are deemed more important and are ranked higher in search results.

PageRank is a foundational part of Google’s algorithm. It makes sense then that the quality of your links and content plays a big part in how your site is crawled and indexed.

To judge your site’s quality, Google looks at factors such as:

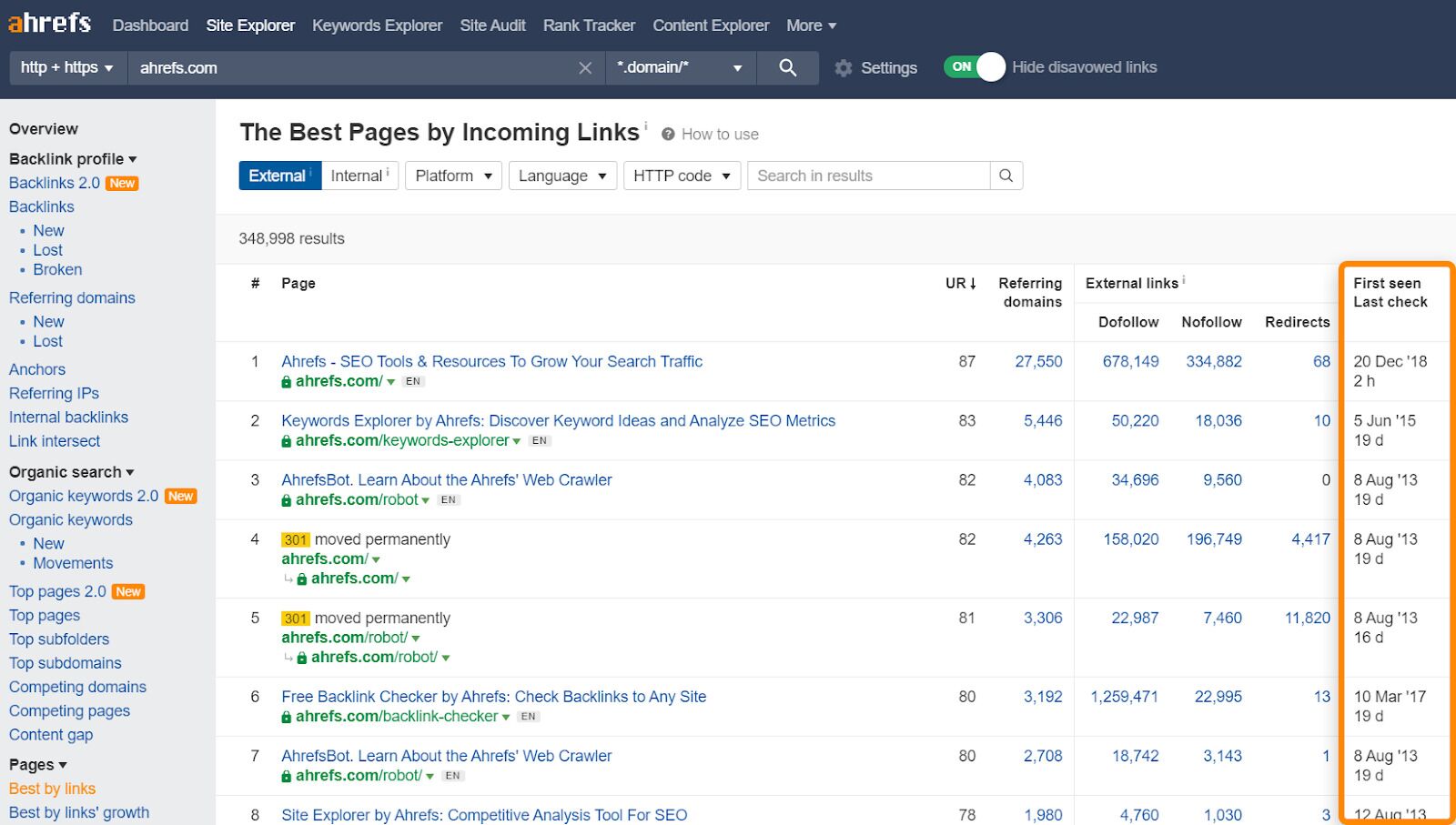

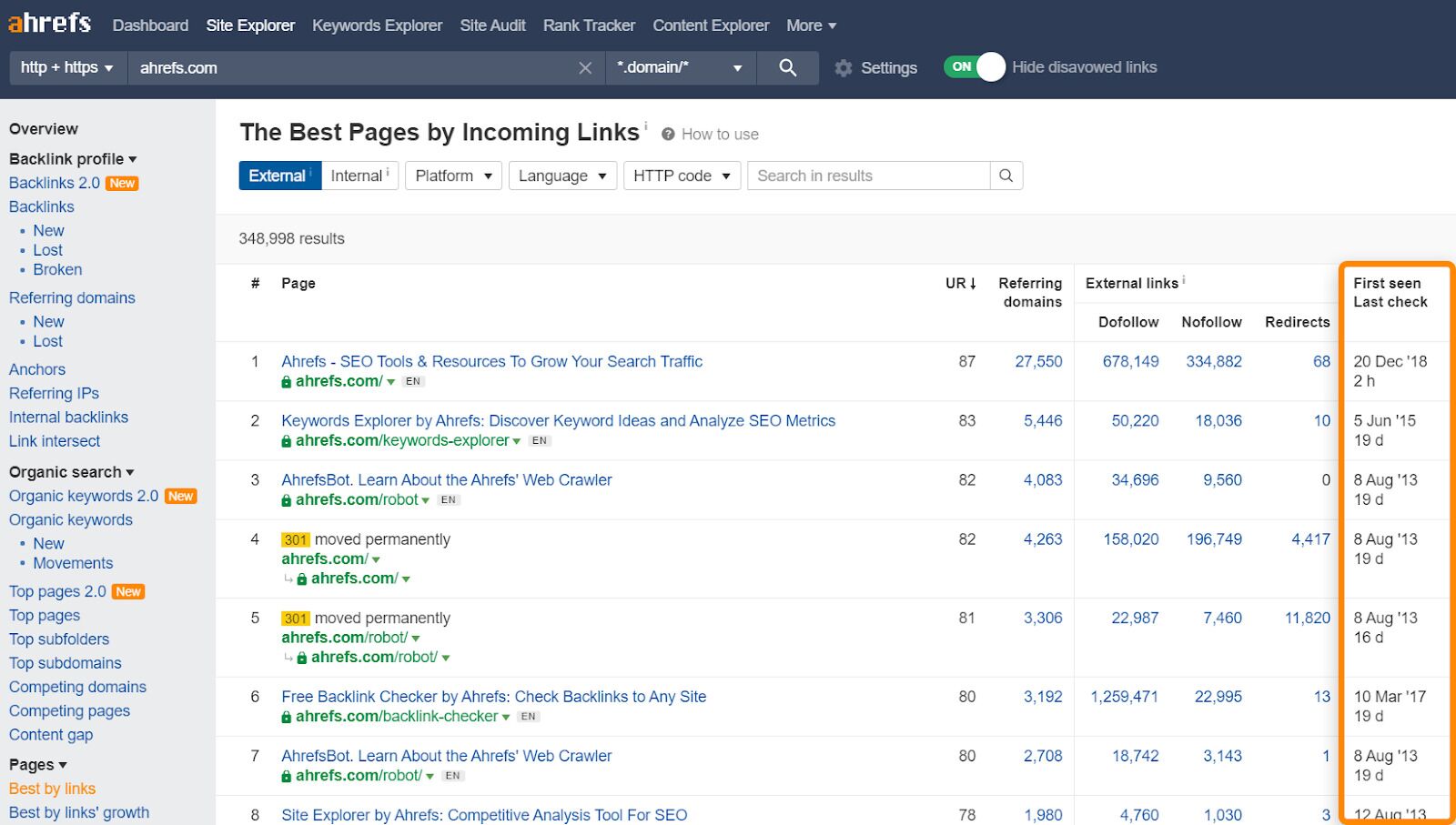

To assess the pages on your site with the most links, check out the Best by Links report in Ahrefs.

Pay attention to the “First seen” and “Last check” columns, which reveal which pages have been crawled most often, and when.

2. Keep things fresh

According to Google’s Senior Search Analyst, John Mueller…

Search engines recrawl URLs at different rates, sometimes it’s multiple times a day, sometimes it’s once every few months.

But if you regularly update your content, you’ll see crawlers dropping by more often.

Search engines like Google want to deliver accurate and up-to-date information to remain competitive and relevant, so updating your content is like dangling a carrot on a stick.

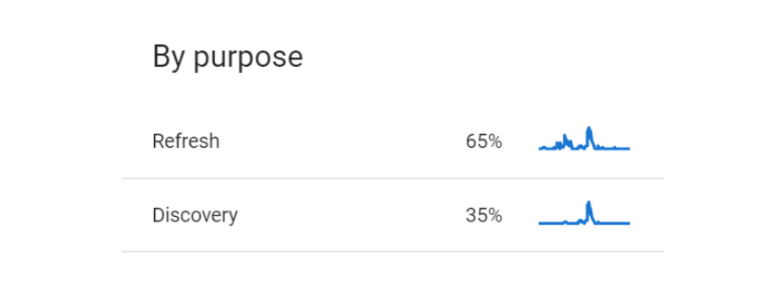

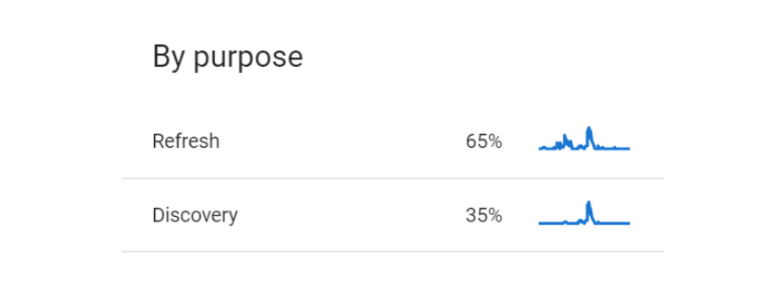

You can examine just how quickly Google processes your updates by checking your crawl stats in Google Search Console.

While you’re there, look at the breakdown of crawling “By purpose” (i.e. % split of pages refreshed vs pages newly discovered). This will also help you work out just how often you’re encouraging web crawlers to revisit your site.

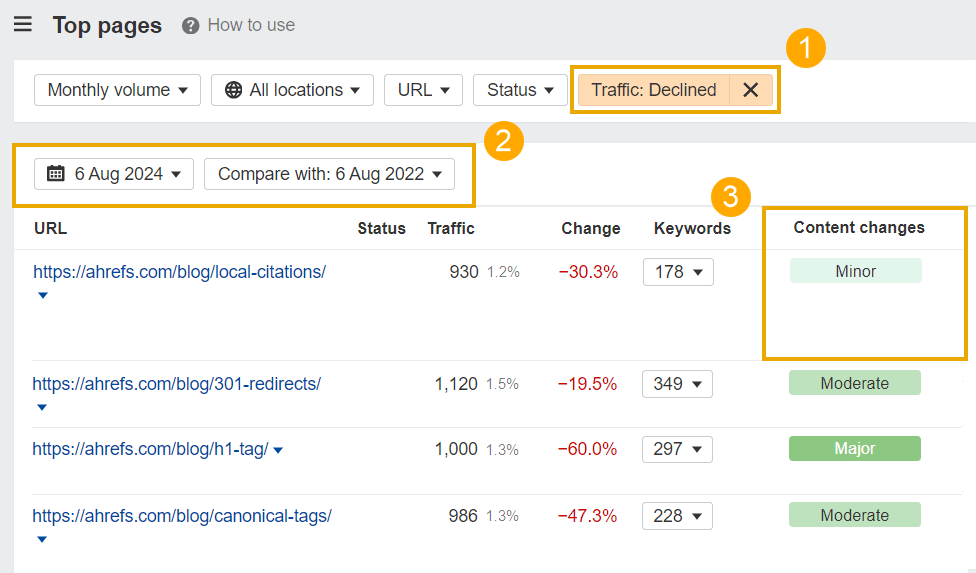

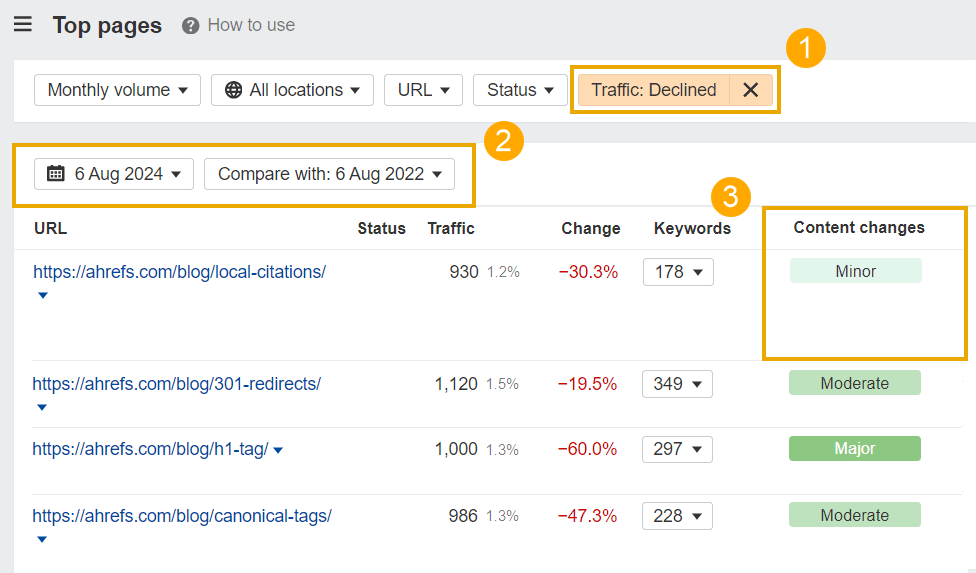

To find specific pages that need updating on your site, head to the Top Pages report in Ahrefs Site Explorer, then:

- Set the traffic filter to “Declined”

- Set the comparison date to the last year or two

- Look at Content Changes status and update pages with only minor changes

Top Pages shows you the content on your site driving the most organic traffic. Pushing updates to these pages will encourage crawlers to visit your best content more often, and (hopefully) boost any declining traffic.

3. Refine your site structure

Offering a clear site structure via a logical sitemap, and backing that up with relevant internal links, will help crawlers:

- Better navigate your site

- Understand its hierarchy

- Index and rank your most valuable content

Combined, these factors will also please users, since they support easy navigation, reduced bounce rates, and increased engagement.

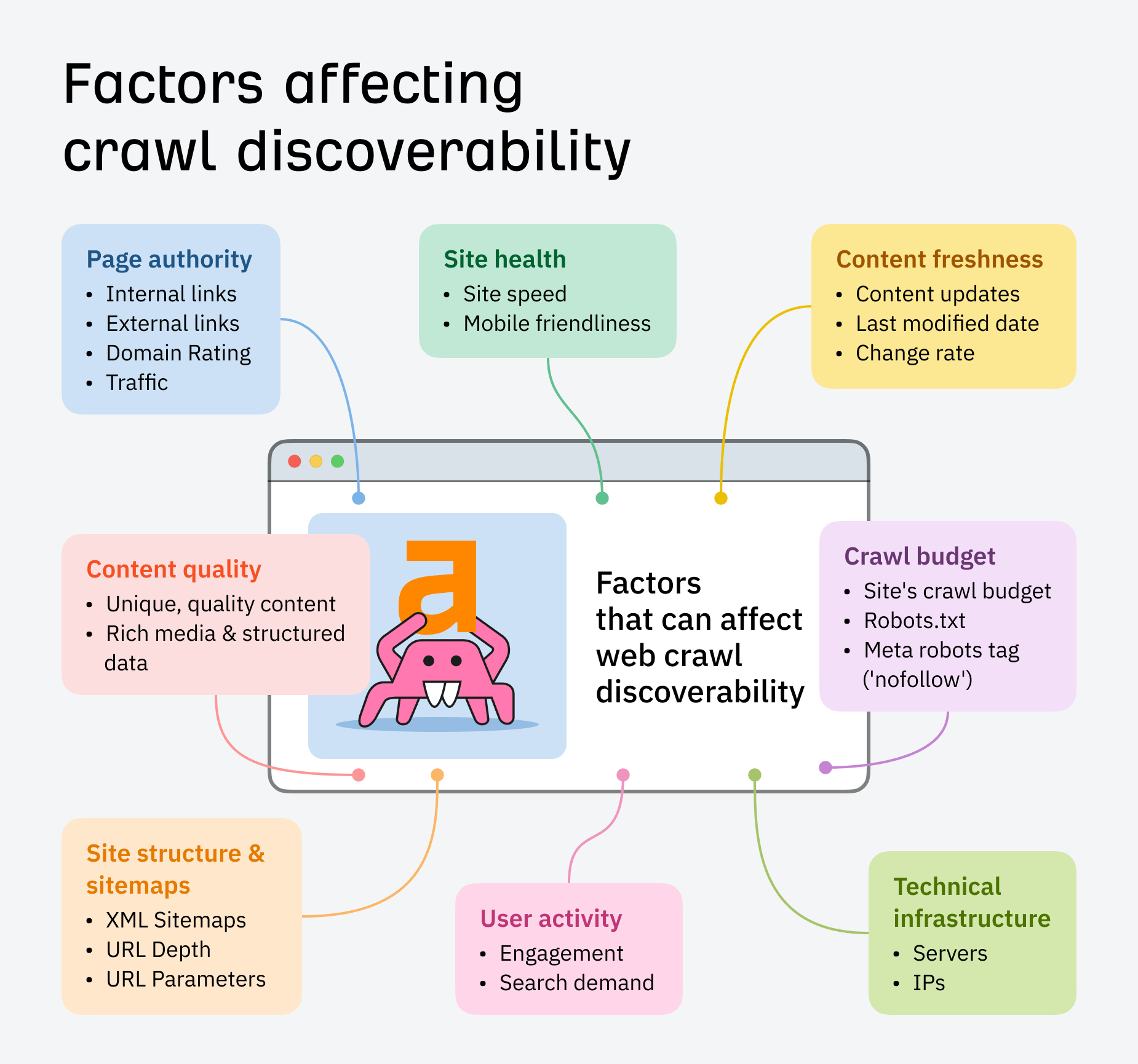

Below are some more elements that can potentially influence how your site gets discovered and prioritized in crawling:

What is crawl budget?

Each site has a crawl budget, which is the number of URLs a crawler can and wants to crawl. Factors like site speed, mobile-friendliness, and a logical site structure impact the efficacy of crawl budget.

For a deeper dive into crawl budgets, check out Patrick Stox’s guide: When Should You Worry About Crawl Budget?

Web crawlers like Googlebot crawl the entire internet, and you can’t control which sites they visit, or how often.

But what you can do is use website crawlers, which are like your own private bots.

Ask them to crawl your website to find and fix important SEO problems, or study your competitor’s site and turn their biggest weakness into your next opportunity.

Site crawlers essentially simulate search performance. They help you understand how a search engine’s web crawlers might interpret your pages, based on their:

- Structure

- Content

- Meta data

- Page load speed

- Errors

- Etc.

Example: Ahrefs Site Audit

Site Audit helps SEOs to:

- Analyze 170+ technical SEO issues

- Conduct on-demand crawls, with live site performance data

- Assess up to 170k URLs a minute

- Troubleshoot, maintain, and improve their visibility in search engines

From URL discovery to revisiting, website crawlers operate very similarly to web crawlers – only instead of indexing and ranking your page in the SERPs, they store and analyze it in their own database.

You can crawl your site either locally or remotely. Desktop crawlers like ScreamingFrog let you download and customize your site crawl, while cloud-based tools like Ahrefs Site Audit perform the crawl without using your computer’s resources – helping you work collaboratively on fixes and site optimization.

If you want to scan entire websites in real time to detect technical SEO problems, configure a crawl in Site Audit.

It will give you visual data breakdowns, site health scores, and detailed fix recommendations to help you understand how a search engine interprets your site.

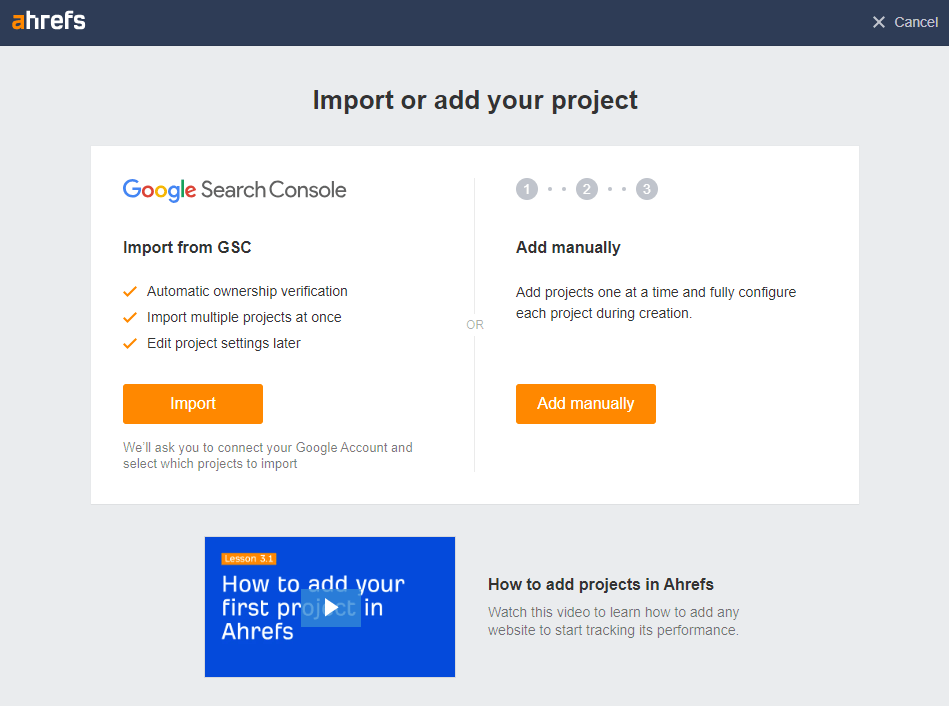

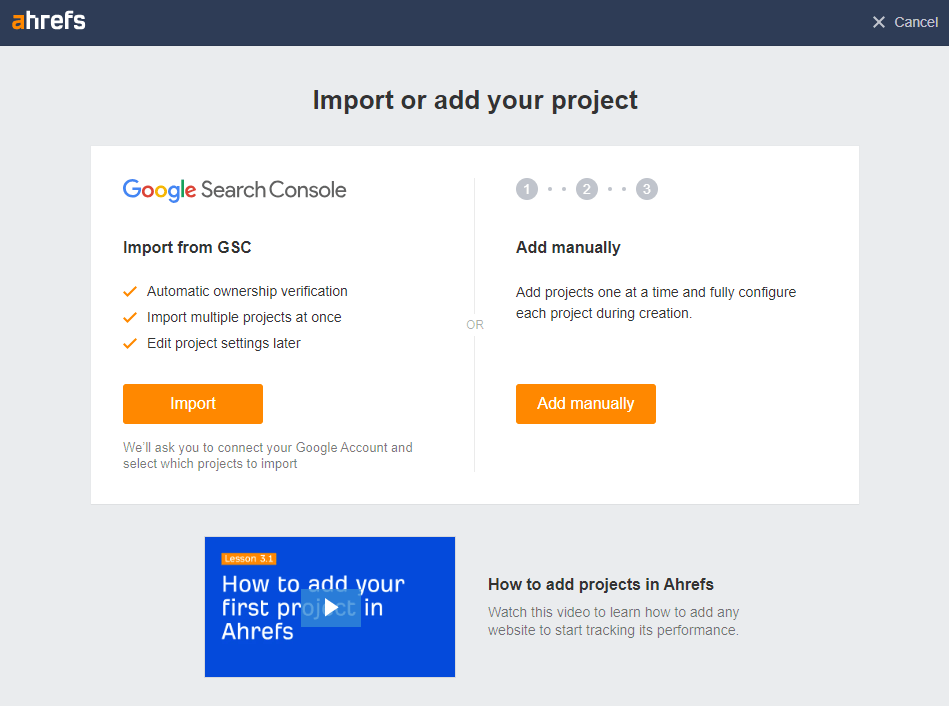

1. Set up your crawl

Navigate to the Site Audit tab and choose an existing project, or set one up.

A project is any domain, subdomain, or URL you want to track over time.

Once you’ve configured your crawl settings (including your crawl schedule and URL sources), you can start your audit, and you’ll be notified as soon as it’s complete.

Here are some things you can do right away.

2. Diagnose top errors

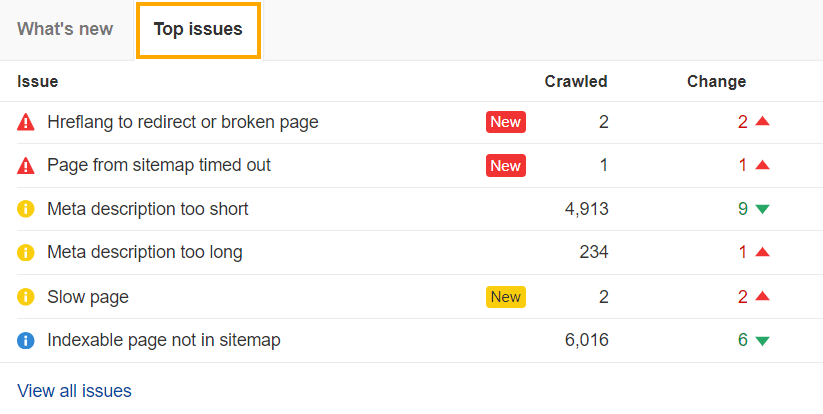

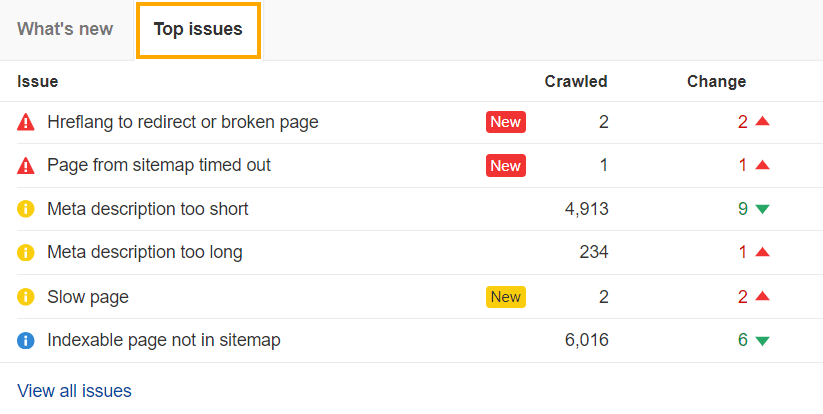

The Top Issues overview in Site Audit shows you your most pressing errors, warnings, and notices, based on the number of URLs affected.

Working through these as part of your SEO roadmap will help you:

1. Spot errors (red icons) impacting crawling, e.g.

- HTTP status code/client errors

- Broken links

- Canonical issues

2. Optimize your content and rankings based on warnings (yellow), e.g.

- Missing alt text

- Links to redirects

- Overly long meta descriptions

3. Maintain steady visibility with notices (blue icon), e.g.

- Organic traffic drops

- Multiple H1s

- Indexable pages not in sitemap
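To give a feel for how a site crawler might flag a few of the on-page issues listed above, here is a simplified sketch using only Python’s standard library. It is not how Site Audit works internally; the issue labels and the 160-character description limit are assumptions chosen for illustration.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects the few on-page signals this sketch checks."""

    def __init__(self):
        super().__init__()
        self.h1_count = 0
        self.images_missing_alt = 0
        self.meta_description = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "h1":
            self.h1_count += 1
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            # Note: an empty alt can be valid for decorative images;
            # this sketch flags it anyway.
            self.images_missing_alt += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""


def audit_page(html, max_description_length=160):
    """Return a list of issue labels for one page (hypothetical thresholds)."""
    parser = PageAudit()
    parser.feed(html)
    issues = []
    if not (parser.meta_description or "").strip():
        issues.append("Meta description missing")
    elif len(parser.meta_description) > max_description_length:
        issues.append("Meta description too long")
    if parser.h1_count > 1:
        issues.append("Multiple H1s")
    if parser.images_missing_alt:
        issues.append("Missing alt text")
    return issues


SAMPLE_PAGE = """<html><head><title>Demo</title></head>
<body><h1>One</h1><h1>Two</h1>
<img src="a.png"><img src="b.png" alt="Chart"></body></html>"""

print(audit_page(SAMPLE_PAGE))
```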

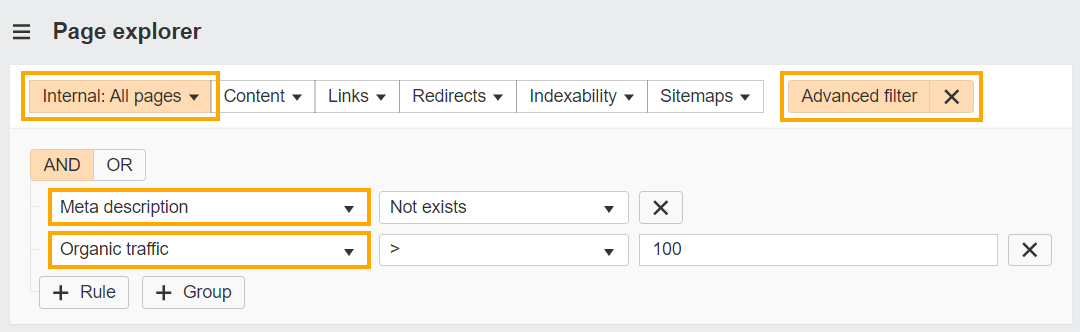

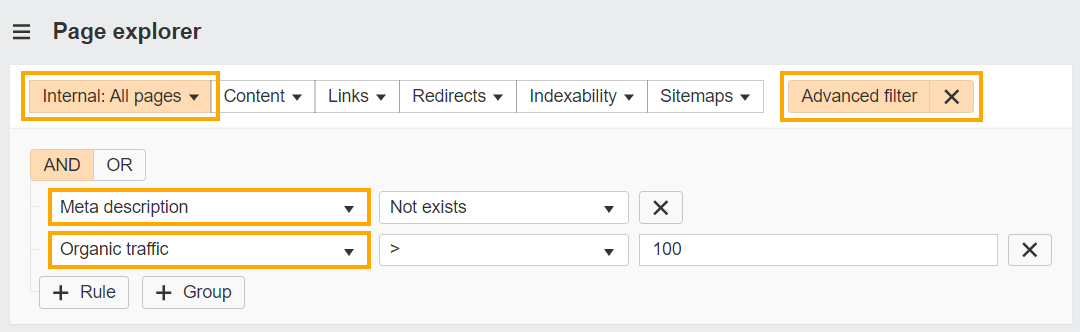

Filter issues

You can also prioritize fixes using filters.

Say you have thousands of pages with missing meta descriptions. Make the task more manageable and impactful by targeting high traffic pages first.

- Head to the Page Explorer report in Site Audit

- Select the advanced filter dropdown

- Set an internal pages filter

- Select an ‘And’ operator

- Select ‘Meta description’ and ‘Not exists’

- Select ‘Organic traffic > 100’
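The logic behind that filter combination can be sketched in a few lines. The data below is made up, and the field names (`is_internal`, `meta_description`, `organic_traffic`) are hypothetical rather than Site Audit’s actual export columns.

```python
# Hypothetical crawl export: one dict per crawled page.
pages = [
    {"url": "/pricing", "is_internal": True, "meta_description": "", "organic_traffic": 850},
    {"url": "/blog/old-post", "is_internal": True, "meta_description": "", "organic_traffic": 12},
    {"url": "/features", "is_internal": True, "meta_description": "All features.", "organic_traffic": 400},
    {"url": "/blog/guide", "is_internal": True, "meta_description": None, "organic_traffic": 2300},
]


def needs_description(page, min_traffic=100):
    """Internal page AND meta description not exists AND organic traffic > min_traffic."""
    return (
        page["is_internal"]
        and not page["meta_description"]
        and page["organic_traffic"] > min_traffic
    )


# Highest-traffic pages first, so the most impactful fixes come first.
priority = sorted(
    (page for page in pages if needs_description(page)),
    key=lambda page: page["organic_traffic"],
    reverse=True,
)
for page in priority:
    print(page["url"], page["organic_traffic"])
```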

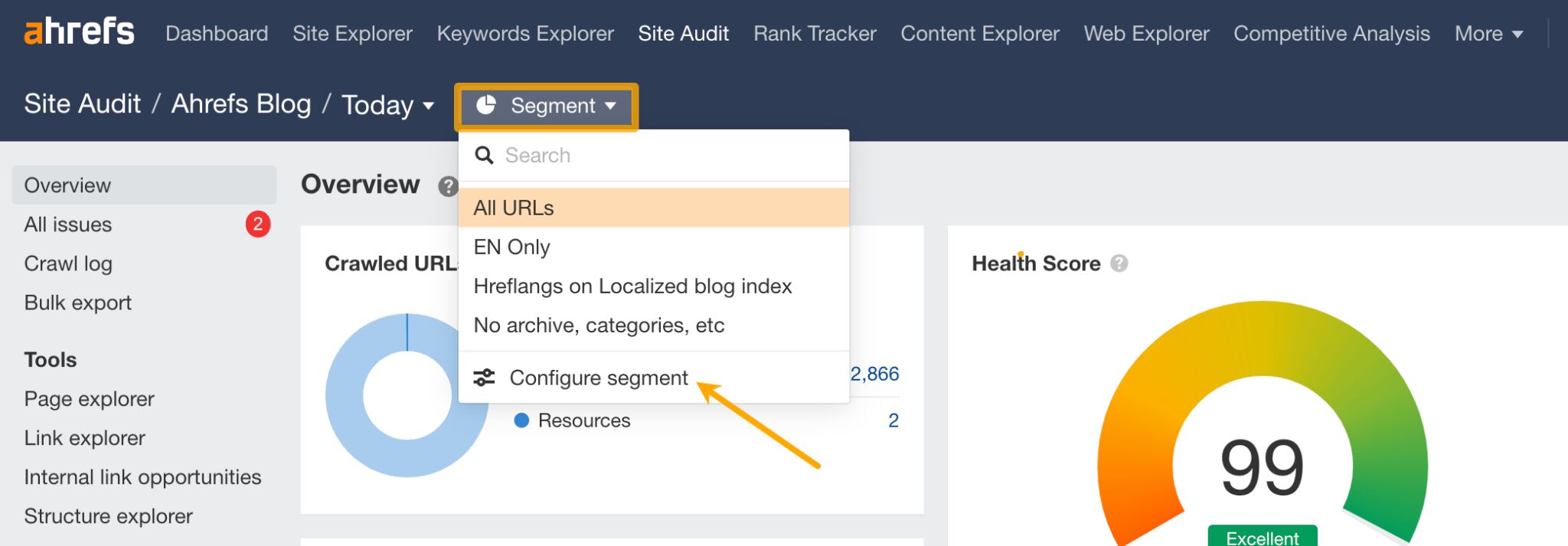

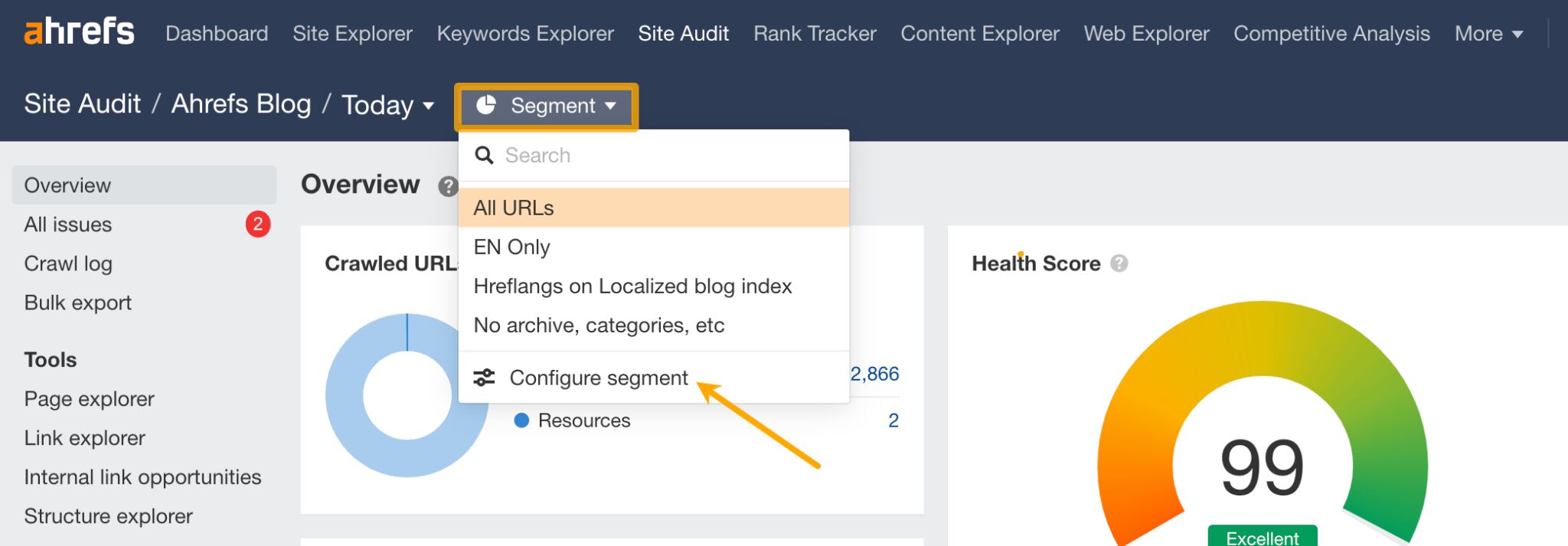

Crawl the most important parts of your site

Segment and zero in on the most important pages on your site (e.g. subfolders or subdomains) using Site Audit’s 200+ filters, whether that’s your blog, ecommerce store, or even pages that earn over a certain traffic threshold.

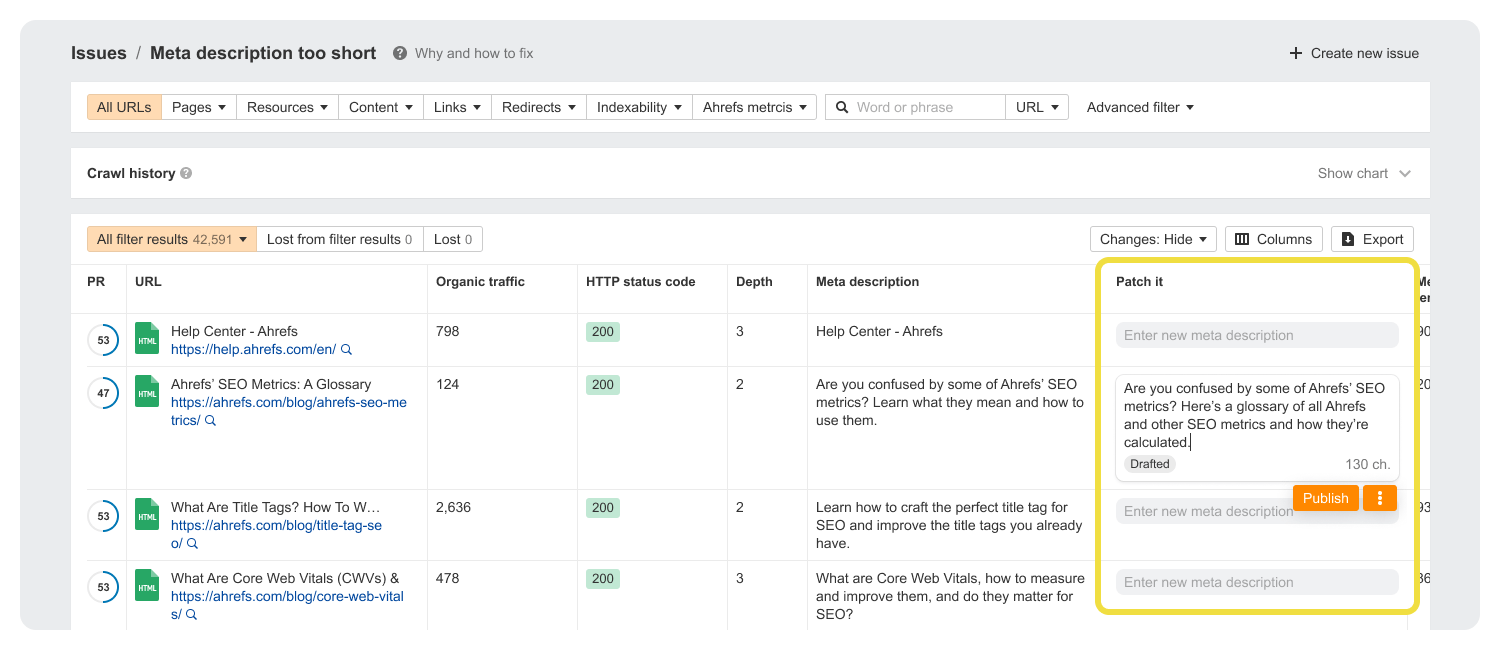

3. Expedite fixes

If you don’t have coding experience, then the prospect of crawling your site and implementing fixes can be intimidating.

If you do have dev support, issues are easier to remedy, but then it becomes a matter of bargaining for another person’s time.

We’ve got a new feature on the way to help you solve for these kinds of headaches.

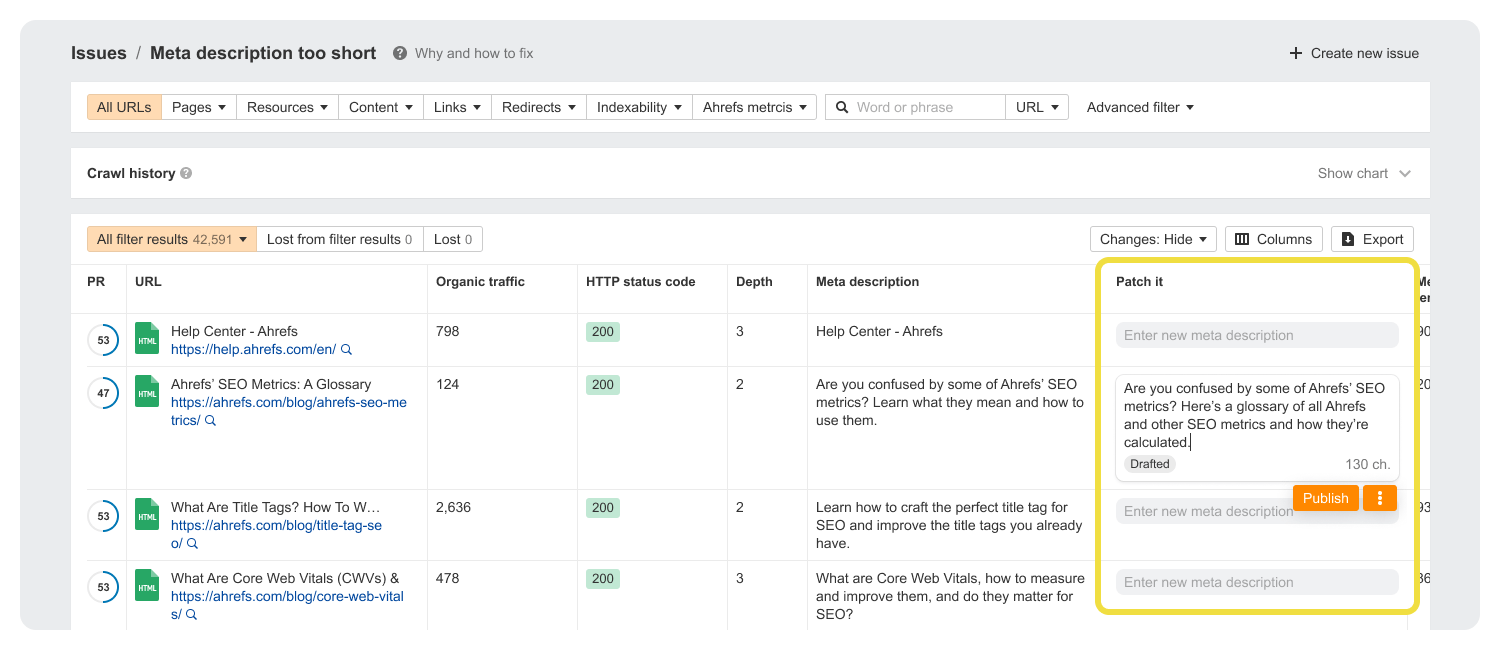

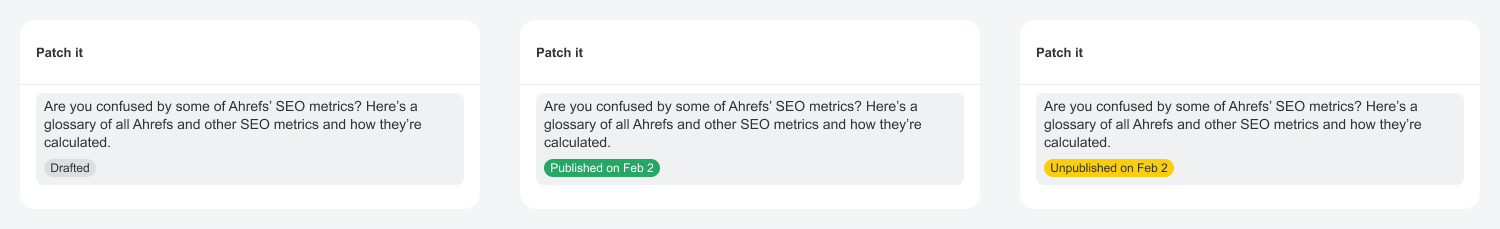

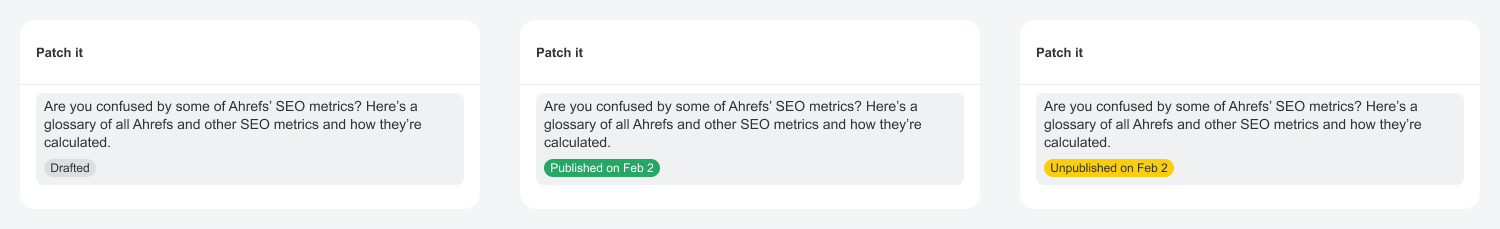

Coming soon, Patches are fixes you can make autonomously in Site Audit.

Title changes, missing meta descriptions, site-wide broken links: when you face these kinds of errors you can hit “Patch it” to publish a fix directly to your website, without having to pester a dev.

And if you’re unsure of anything, you can roll back your patches at any point.

4. Spot optimization opportunities

Auditing your site with a website crawler is as much about spotting opportunities as it is about fixing bugs.

Improve internal linking

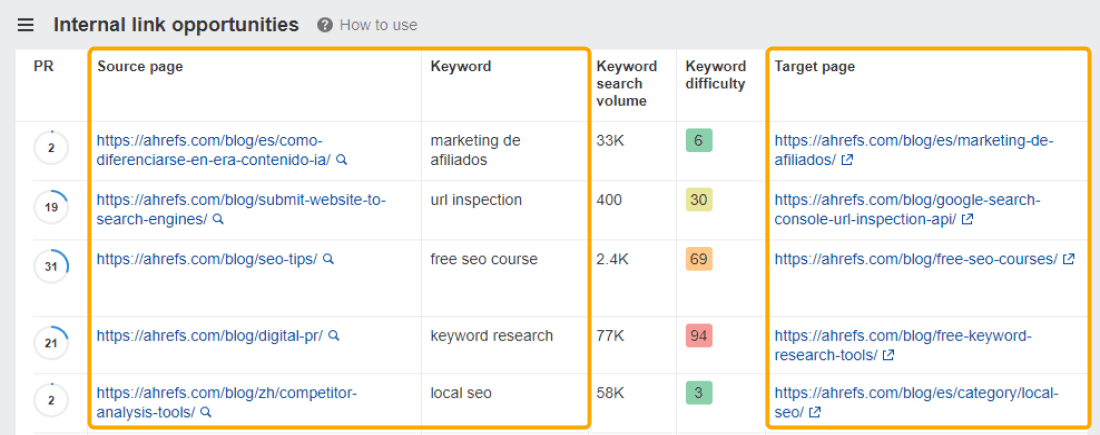

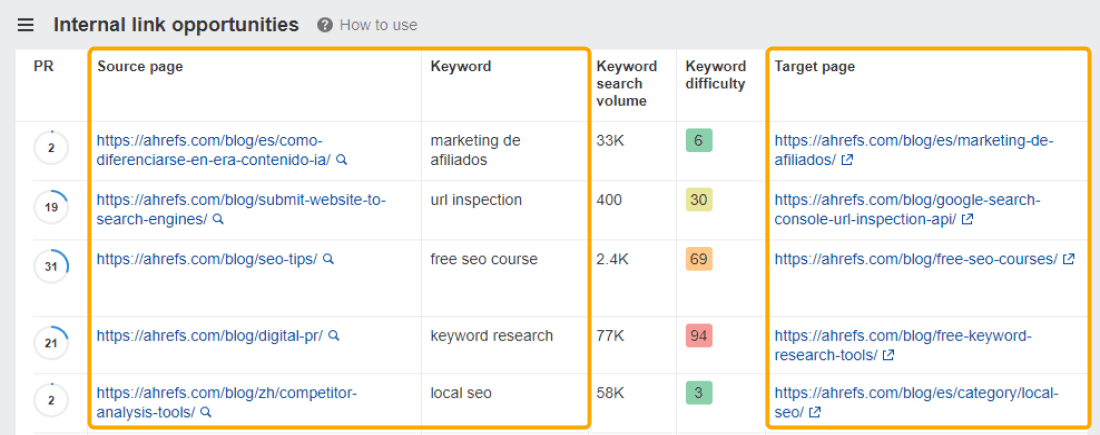

The Internal Link Opportunities report in Site Audit shows you relevant internal linking suggestions, by taking the top 10 keywords (by traffic) for each crawled page, then looking for mentions of them in your other crawled pages.

‘Source’ pages are the ones you should link from, and ‘Target’ pages are the ones you should link to.

The more high quality connections you make between your content, the easier it will be for Googlebot to crawl your site.
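The keyword-matching idea described above can be approximated with a short sketch. This is a simplified illustration, not Ahrefs’ implementation: it uses naive substring matching, does not check whether a link already exists, and all page data and field names are hypothetical.

```python
def link_opportunities(pages, top_n=10):
    """Suggest (source_url, keyword, target_url) links.

    pages: dict of url -> {"keywords": [(keyword, traffic), ...], "text": str}
    For each target page, take its top keywords by traffic and look for
    mentions of them in the text of every other page (the sources).
    """
    opportunities = []
    for target_url, target in pages.items():
        top_keywords = sorted(target["keywords"], key=lambda kw: kw[1], reverse=True)[:top_n]
        for keyword, _traffic in top_keywords:
            for source_url, source in pages.items():
                if source_url != target_url and keyword.lower() in source["text"].lower():
                    opportunities.append((source_url, keyword, target_url))
    return opportunities


# Hypothetical crawl data.
site = {
    "/crawl-budget": {"keywords": [("crawl budget", 900)], "text": "A guide to crawl budget."},
    "/web-crawlers": {"keywords": [("web crawler", 1200)], "text": "How a web crawler spends its crawl budget."},
    "/sitemaps": {"keywords": [("xml sitemap", 400)], "text": "An XML sitemap helps every web crawler."},
}

suggestions = link_opportunities(site)
for source, keyword, target in suggestions:
    print(f"Link from {source} to {target} on the phrase '{keyword}'")
```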

Final thoughts

Understanding website crawling is more than just an SEO hack: it’s foundational knowledge that directly impacts your traffic and ROI.

Knowing how crawlers work means knowing how search engines “see” your site, and that’s half the battle when it comes to ranking.