OpenAI Whisper is a complicated automated speech recognition (ASR) mannequin with an MIT license. ASR expertise finds utility in transcription companies, voice assistants, and enhancing accessibility for people with listening to impairments. This state-of-the-art mannequin is educated on an enormous and various dataset of multilingual and multitask supervised knowledge collected from the net. Its excessive accuracy and adaptableness make it a useful asset for a big selection of voice-related duties.

Within the ever-evolving panorama of machine studying and synthetic intelligence, Amazon SageMaker supplies a complete ecosystem. SageMaker empowers knowledge scientists, builders, and organizations to develop, practice, deploy, and handle machine studying fashions at scale. Providing a variety of instruments and capabilities, it simplifies the whole machine studying workflow, from knowledge pre-processing and mannequin improvement to easy deployment and monitoring. SageMaker’s user-friendly interface makes it a pivotal platform for unlocking the complete potential of AI, establishing it as a game-changing answer within the realm of synthetic intelligence.

On this publish, we embark on an exploration of SageMaker’s capabilities, particularly specializing in internet hosting Whisper fashions. We’ll dive deep into two strategies for doing this: one using the Whisper PyTorch mannequin and the opposite utilizing the Hugging Face implementation of the Whisper mannequin. Moreover, we’ll conduct an in-depth examination of SageMaker’s inference choices, evaluating them throughout parameters akin to pace, price, payload dimension, and scalability. This evaluation empowers customers to make knowledgeable selections when integrating Whisper fashions into their particular use instances and techniques.

Resolution overview

The next diagram exhibits the principle parts of this answer.

- So as to host the mannequin on Amazon SageMaker, step one is to avoid wasting the mannequin artifacts. These artifacts discuss with the important parts of a machine studying mannequin wanted for varied purposes, together with deployment and retraining. They’ll embody mannequin parameters, configuration information, pre-processing parts, in addition to metadata, akin to model particulars, authorship, and any notes associated to its efficiency. It’s vital to notice that Whisper fashions for PyTorch and Hugging Face implementations consist of various mannequin artifacts.

- Subsequent, we create customized inference scripts. Inside these scripts, we outline how the mannequin must be loaded and specify the inference course of. That is additionally the place we are able to incorporate customized parameters as wanted. Moreover, you may record the required Python packages in a

necessities.txtfile. Through the mannequin’s deployment, these Python packages are robotically put in within the initialization section. - Then we choose both the PyTorch or Hugging Face deep studying containers (DLC) supplied and maintained by AWS. These containers are pre-built Docker pictures with deep studying frameworks and different needed Python packages. For extra info, you may test this hyperlink.

- With the mannequin artifacts, customized inference scripts and chosen DLCs, we’ll create Amazon SageMaker fashions for PyTorch and Hugging Face respectively.

- Lastly, the fashions could be deployed on SageMaker and used with the next choices: real-time inference endpoints, batch remodel jobs, and asynchronous inference endpoints. We’ll dive into these choices in additional element later on this publish.

The instance pocket book and code for this answer can be found on this GitHub repository.

Determine 1. Overview of Key Resolution Parts

Walkthrough

Internet hosting the Whisper Mannequin on Amazon SageMaker

On this part, we’ll clarify the steps to host the Whisper mannequin on Amazon SageMaker, utilizing PyTorch and Hugging Face Frameworks, respectively. To experiment with this answer, you want an AWS account and entry to the Amazon SageMaker service.

PyTorch framework

- Save mannequin artifacts

The primary choice to host the mannequin is to make use of the Whisper official Python package, which could be put in utilizing pip set up openai-whisper. This bundle supplies a PyTorch mannequin. When saving mannequin artifacts within the native repository, step one is to avoid wasting the mannequin’s learnable parameters, akin to mannequin weights and biases of every layer within the neural community, as a ‘pt’ file. You’ll be able to select from totally different mannequin sizes, together with ‘tiny,’ ‘base,’ ‘small,’ ‘medium,’ and ‘massive.’ Bigger mannequin sizes provide increased accuracy efficiency, however come at the price of longer inference latency. Moreover, it is advisable to save the mannequin state dictionary and dimension dictionary, which include a Python dictionary that maps every layer or parameter of the PyTorch mannequin to its corresponding learnable parameters, together with different metadata and customized configurations. The code beneath exhibits how you can save the Whisper PyTorch artifacts.

- Choose DLC

The following step is to pick the pre-built DLC from this link. Watch out when selecting the proper picture by contemplating the next settings: framework (PyTorch), framework model, process (inference), Python model, and {hardware} (i.e., GPU). It is strongly recommended to make use of the most recent variations for the framework and Python at any time when attainable, as this leads to higher efficiency and handle recognized points and bugs from earlier releases.

- Create Amazon SageMaker fashions

Subsequent, we make the most of the SageMaker Python SDK to create PyTorch fashions. It’s vital to recollect so as to add surroundings variables when making a PyTorch mannequin. By default, TorchServe can solely course of file sizes as much as 6MB, whatever the inference sort used.

The next desk exhibits the settings for various PyTorch variations:

| Framework | Setting variables |

| PyTorch 1.8 (primarily based on TorchServe) | ‘TS_MAX_REQUEST_SIZE‘: ‘100000000’‘ TS_MAX_RESPONSE_SIZE‘: ‘100000000’‘ TS_DEFAULT_RESPONSE_TIMEOUT‘: ‘1000’ |

| PyTorch 1.4 (primarily based on MMS) | ‘MMS_MAX_REQUEST_SIZE‘: ‘1000000000’‘ MMS_MAX_RESPONSE_SIZE‘: ‘1000000000’‘ MMS_DEFAULT_RESPONSE_TIMEOUT‘: ‘900’ |

- Outline the mannequin loading technique in inference.py

Within the customized inference.py script, we first test for the supply of a CUDA-capable GPU. If such a GPU is obtainable, then we assign the 'cuda' machine to the DEVICE variable; in any other case, we assign the 'cpu' machine. This step ensures that the mannequin is positioned on the obtainable {hardware} for environment friendly computation. We load the PyTorch mannequin utilizing the Whisper Python bundle.

Hugging Face framework

- Save mannequin artifacts

The second possibility is to make use of Hugging Face’s Whisper implementation. The mannequin could be loaded utilizing the AutoModelForSpeechSeq2Seq transformers class. The learnable parameters are saved in a binary (bin) file utilizing the save_pretrained technique. The tokenizer and preprocessor additionally have to be saved individually to make sure the Hugging Face mannequin works correctly. Alternatively, you may deploy a mannequin on Amazon SageMaker straight from the Hugging Face Hub by setting two surroundings variables: HF_MODEL_ID and HF_TASK. For extra info, please discuss with this webpage.

- Choose DLC

Much like the PyTorch framework, you may select a pre-built Hugging Face DLC from the identical link. Make sure that to pick a DLC that helps the most recent Hugging Face transformers and consists of GPU help.

- Create Amazon SageMaker fashions

Equally, we make the most of the SageMaker Python SDK to create Hugging Face fashions. The Hugging Face Whisper mannequin has a default limitation the place it may well solely course of audio segments as much as 30 seconds. To deal with this limitation, you may embody the chunk_length_s parameter within the surroundings variable when creating the Hugging Face mannequin, and later move this parameter into the customized inference script when loading the mannequin. Lastly, set the surroundings variables to extend payload dimension and response timeout for the Hugging Face container.

| Framework | Setting variables |

|

HuggingFace Inference Container (primarily based on MMS) |

‘MMS_MAX_REQUEST_SIZE‘: ‘2000000000’‘ MMS_MAX_RESPONSE_SIZE‘: ‘2000000000’‘ MMS_DEFAULT_RESPONSE_TIMEOUT‘: ‘900’ |

- Outline the mannequin loading technique in inference.py

When creating customized inference script for the Hugging Face mannequin, we make the most of a pipeline, permitting us to move the chunk_length_s as a parameter. This parameter permits the mannequin to effectively course of lengthy audio information throughout inference.

Exploring totally different inference choices on Amazon SageMaker

The steps for choosing inference choices are the identical for each PyTorch and Hugging Face fashions, so we gained’t differentiate between them beneath. Nevertheless, it’s price noting that, on the time of penning this publish, the serverless inference possibility from SageMaker doesn’t help GPUs, and consequently, we exclude this selection for this use-case.

- Actual-time inference

We are able to deploy the mannequin as a real-time endpoint, offering responses in milliseconds. Nevertheless, it’s vital to notice that this selection is proscribed to processing inputs below 6 MB. We outline the serializer as an audio serializer, which is chargeable for changing the enter knowledge into an appropriate format for the deployed mannequin. We make the most of a GPU occasion for inference, permitting for accelerated processing of audio information. The inference enter is an audio file that’s from the native repository.

- Batch remodel job

The second inference possibility is the batch remodel job, which is able to processing enter payloads as much as 100 MB. Nevertheless, this technique could take a couple of minutes of latency. Every occasion can deal with just one batch request at a time, and the occasion initiation and shutdown additionally require a couple of minutes. The inference outcomes are saved in an Amazon Easy Storage Service (Amazon S3) bucket upon completion of the batch remodel job.

When configuring the batch transformer, you’ll want to embody max_payload = 100 to deal with bigger payloads successfully. The inference enter must be the Amazon S3 path to an audio file or an Amazon S3 Bucket folder containing an inventory of audio information, every with a dimension smaller than 100 MB.

Batch Rework partitions the Amazon S3 objects within the enter by key and maps Amazon S3 objects to cases. For instance, when you could have a number of audio information, one occasion would possibly course of input1.wav, and one other occasion would possibly course of the file named input2.wav to boost scalability. Batch Rework means that you can configure max_concurrent_transforms to extend the variety of HTTP requests made to every particular person transformer container. Nevertheless, it’s vital to notice that the worth of (max_concurrent_transforms* max_payload) should not exceed 100 MB.

- Asynchronous inference

Lastly, Amazon SageMaker Asynchronous Inference is good for processing a number of requests concurrently, providing average latency and supporting enter payloads of as much as 1 GB. This feature supplies wonderful scalability, enabling the configuration of an autoscaling group for the endpoint. When a surge of requests happens, it robotically scales as much as deal with the site visitors, and as soon as all requests are processed, the endpoint scales all the way down to 0 to avoid wasting prices.

Utilizing asynchronous inference, the outcomes are robotically saved to an Amazon S3 bucket. Within the AsyncInferenceConfig, you may configure notifications for profitable or failed completions. The enter path factors to an Amazon S3 location of the audio file. For extra particulars, please discuss with the code on GitHub.

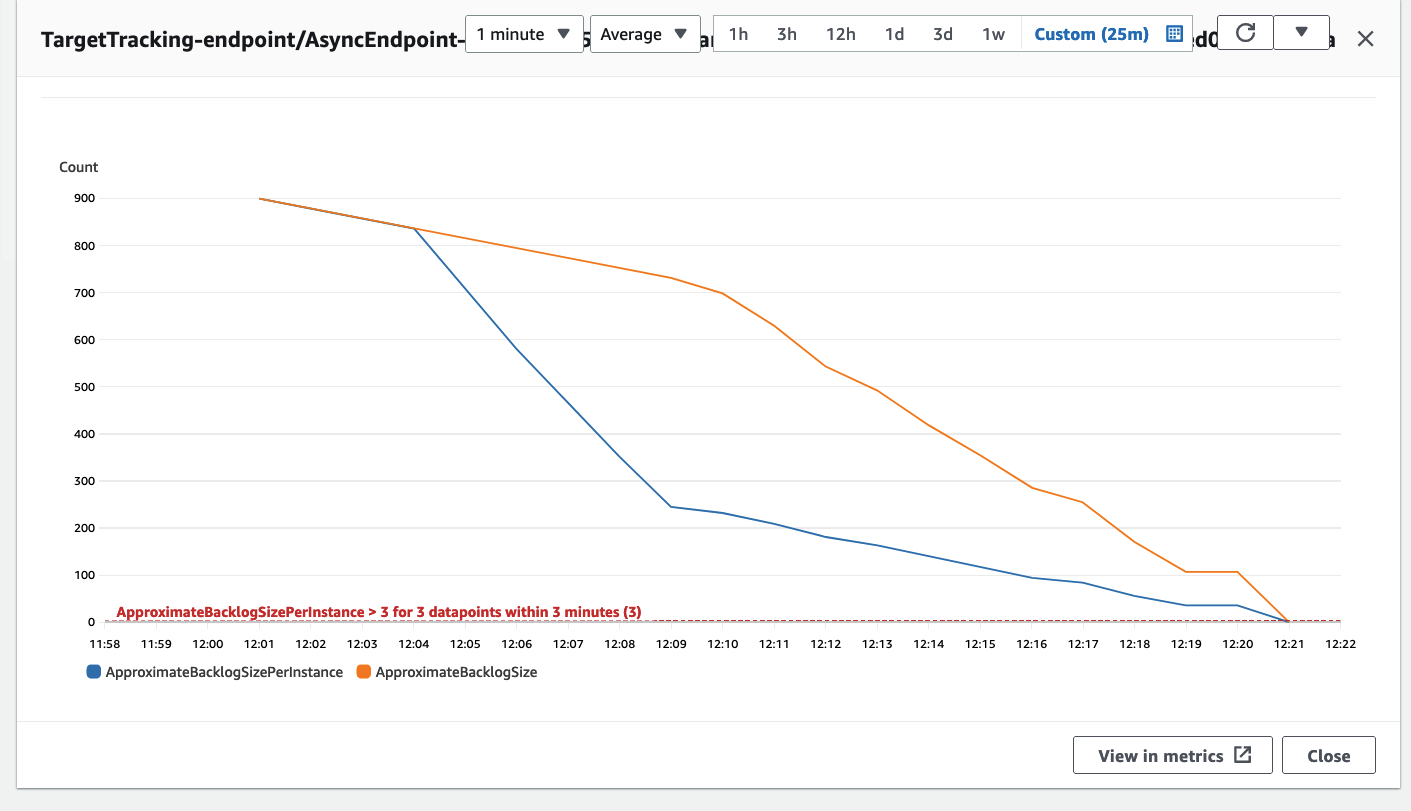

Elective: As talked about earlier, we’ve the choice to configure an autoscaling group for the asynchronous inference endpoint, which permits it to deal with a sudden surge in inference requests. A code instance is supplied on this GitHub repository. Within the following diagram, you may observe a line chart displaying two metrics from Amazon CloudWatch: ApproximateBacklogSize and ApproximateBacklogSizePerInstance. Initially, when 1000 requests have been triggered, just one occasion was obtainable to deal with the inference. For 3 minutes, the backlog dimension constantly exceeded three (please observe that these numbers could be configured), and the autoscaling group responded by spinning up further cases to effectively filter the backlog. This resulted in a major lower within the ApproximateBacklogSizePerInstance, permitting backlog requests to be processed a lot sooner than in the course of the preliminary section.

Determine 2. Line chart illustrating the temporal modifications in Amazon CloudWatch metrics

Comparative evaluation for the inference choices

The comparisons for various inference choices are primarily based on widespread audio processing use instances. Actual-time inference provides the quickest inference pace however restricts payload dimension to six MB. This inference sort is appropriate for audio command techniques, the place customers management or work together with units or software program utilizing voice instructions or spoken directions. Voice instructions are sometimes small in dimension, and low inference latency is essential to make sure that transcribed instructions can promptly set off subsequent actions. Batch Rework is good for scheduled offline duties, when every audio file’s dimension is below 100 MB, and there’s no particular requirement for quick inference response occasions. Asynchronous inference permits for uploads of as much as 1 GB and provides average inference latency. This inference sort is well-suited for transcribing films, TV sequence, and recorded conferences the place bigger audio information have to be processed.

Each real-time and asynchronous inference choices present autoscaling capabilities, permitting the endpoint cases to robotically scale up or down primarily based on the quantity of requests. In instances with no requests, autoscaling removes pointless cases, serving to you keep away from prices related to provisioned cases that aren’t actively in use. Nevertheless, for real-time inference, at the very least one persistent occasion should be retained, which may result in increased prices if the endpoint operates repeatedly. In distinction, asynchronous inference permits occasion quantity to be diminished to 0 when not in use. When configuring a batch remodel job, it’s attainable to make use of a number of cases to course of the job and regulate max_concurrent_transforms to allow one occasion to deal with a number of requests. Subsequently, all three inference choices provide nice scalability.

Cleansing up

After getting accomplished using the answer, guarantee to take away the SageMaker endpoints to forestall incurring further prices. You should use the supplied code to delete real-time and asynchronous inference endpoints, respectively.

Conclusion

On this publish, we confirmed you ways deploying machine studying fashions for audio processing has develop into more and more important in varied industries. Taking the Whisper mannequin for example, we demonstrated how you can host open-source ASR fashions on Amazon SageMaker utilizing PyTorch or Hugging Face approaches. The exploration encompassed varied inference choices on Amazon SageMaker, providing insights into effectively dealing with audio knowledge, making predictions, and managing prices successfully. This publish goals to supply information for researchers, builders, and knowledge scientists interested by leveraging the Whisper mannequin for audio-related duties and making knowledgeable selections on inference methods.

For extra detailed info on deploying fashions on SageMaker, please discuss with this Developer information. Moreover, the Whisper mannequin could be deployed utilizing SageMaker JumpStart. For extra particulars, kindly test the Whisper fashions for automated speech recognition now obtainable in Amazon SageMaker JumpStart publish.

Be at liberty to take a look at the pocket book and code for this challenge on GitHub and share your remark with us.

In regards to the Writer

Ying Hou, PhD, is a Machine Studying Prototyping Architect at AWS. Her main areas of curiosity embody Deep Studying, with a deal with GenAI, Pc Imaginative and prescient, NLP, and time sequence knowledge prediction. In her spare time, she relishes spending high quality moments together with her household, immersing herself in novels, and mountain climbing within the nationwide parks of the UK.

Ying Hou, PhD, is a Machine Studying Prototyping Architect at AWS. Her main areas of curiosity embody Deep Studying, with a deal with GenAI, Pc Imaginative and prescient, NLP, and time sequence knowledge prediction. In her spare time, she relishes spending high quality moments together with her household, immersing herself in novels, and mountain climbing within the nationwide parks of the UK.