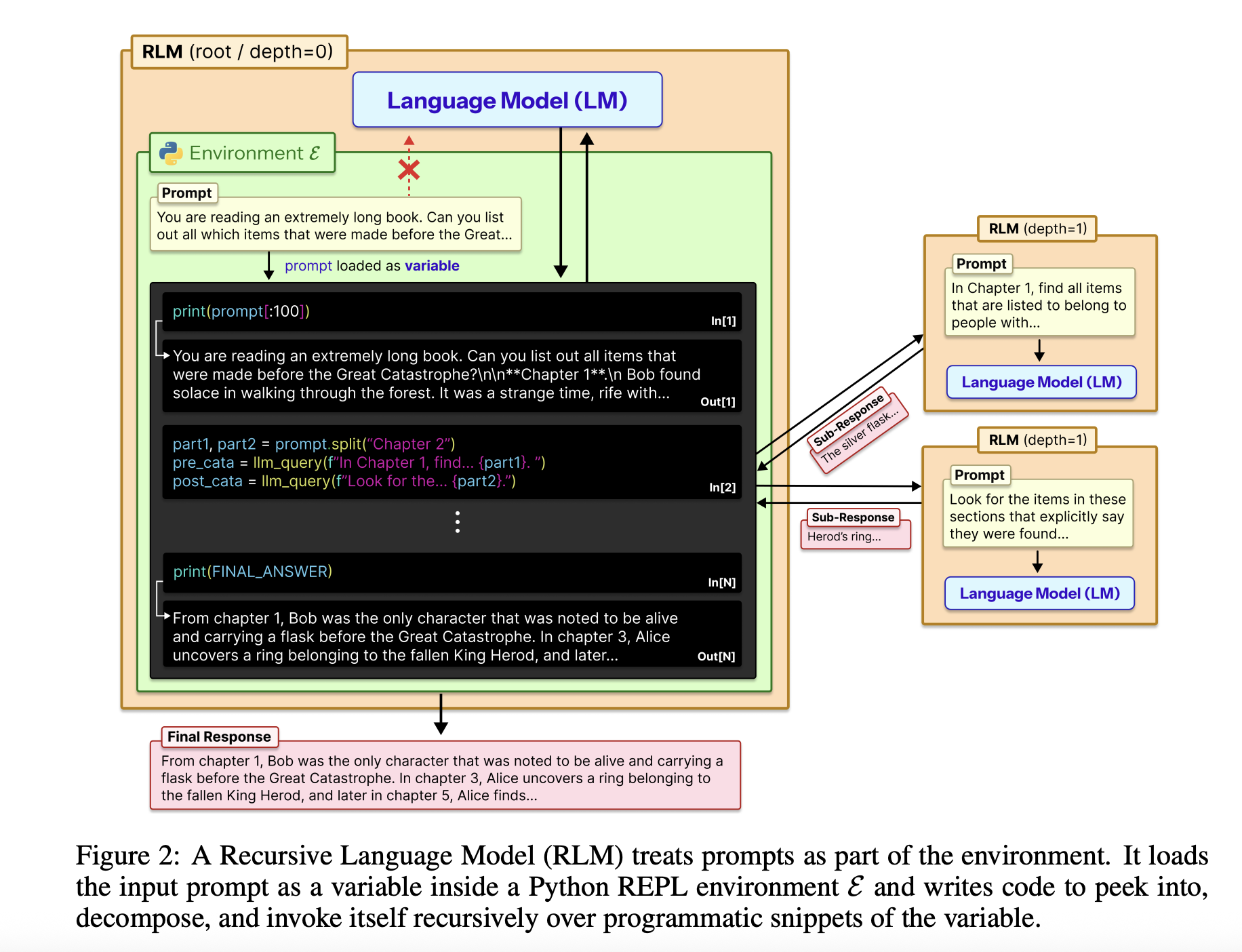

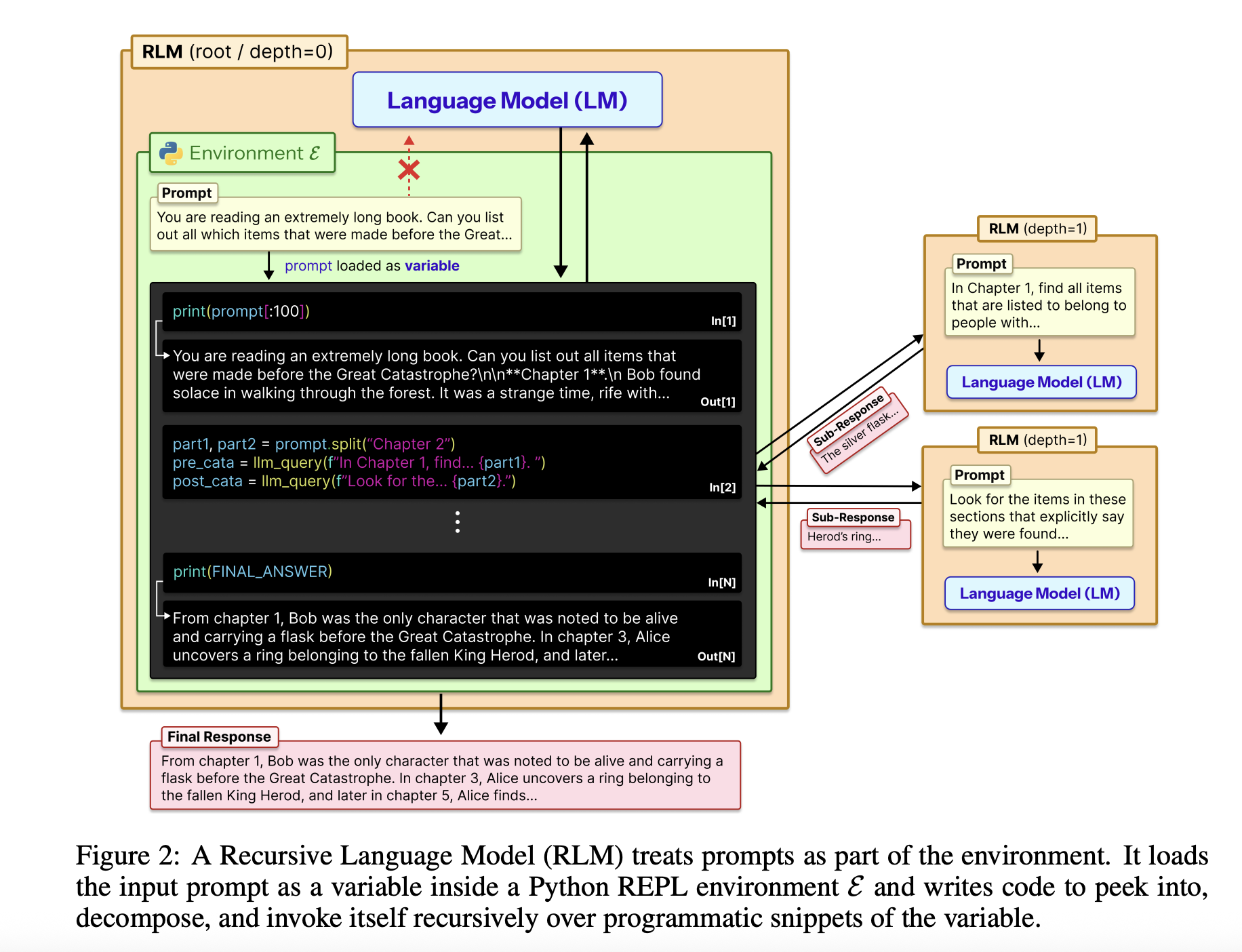

Recursive Language Models (RLMs) aim to break the usual trade-off between context length, accuracy, and cost in large language models. Rather than having the model read a huge prompt in a single pass, an RLM treats the prompt as an external environment, lets the model decide how to inspect it in code, and then recursively calls itself on smaller pieces.

The basics

The full input is loaded into a Python REPL as a single string variable. The root model, such as GPT-5, never sees that string directly in its context. Instead, it receives a system prompt that explains how to read slices of the variable, write helper functions, spawn sub-LLM calls, and combine the results. Because the model returns a final text answer, the external interface stays identical to a standard chat completion endpoint.

The RLM design uses the REPL as a control plane for long context. The environment is usually written in Python and exposes tools such as string slicing, regular expression search, and helper functions such as `llm_query`, which calls a smaller model instance, for example GPT-5-mini. The root model writes code that calls these helpers to scan, split, and summarize the external context variable. The code can store intermediate results in variables and build the final answer step by step. This structure makes prompt size independent of the model context window and turns long-context processing into a program synthesis problem.
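The setup described above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: `run_in_repl`, `root_model_code`, and the toy body of `llm_query` are assumptions made for the example, and a real environment would sandbox execution and call an actual smaller model.

```python
import re

def llm_query(prompt: str) -> str:
    """Stand-in for a sub-LLM call (hypothetical stub, no real model involved).
    Toy behaviour: return the first number that appears in the prompt."""
    found = re.search(r"\d+", prompt)
    return found.group(0) if found else "unknown"

def run_in_repl(context: str, root_model_code: str) -> dict:
    """Run code written by the root model in a namespace that holds the full
    prompt as `context`, plus the helpers the model is allowed to call."""
    namespace = {"context": context, "re": re, "llm_query": llm_query}
    exec(root_model_code, namespace)  # a real system would sandbox this call
    return namespace

# Code the root model might emit: locate a keyword, slice a small window
# around it, and hand only that window to the sub-LLM.
root_model_code = '''
matches = [m.start() for m in re.finditer(r"secret code", context)]
window = context[max(0, matches[0] - 20): matches[0] + 60]
final_answer = llm_query(window)
'''

# The long prompt lives only in the REPL, never in the root model's context.
context = "filler text. " * 1000 + "The secret code is 4217. " + "more filler. " * 1000
state = run_in_repl(context, root_model_code)
print(state["final_answer"])
```

The root model only ever handles the short code string and the small `window` slice, which is why the size of `context` is decoupled from the model's context window.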

Where does it stand in evaluation?

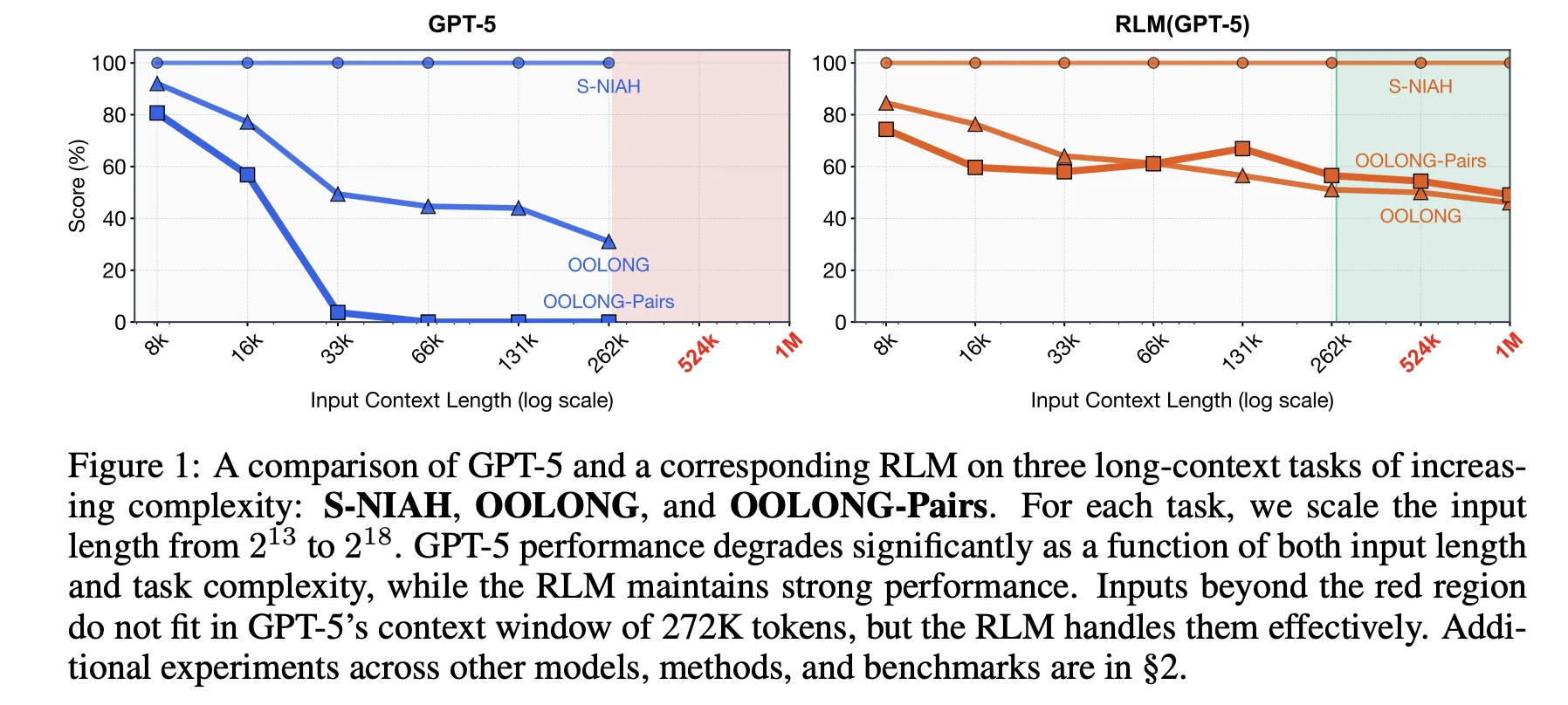

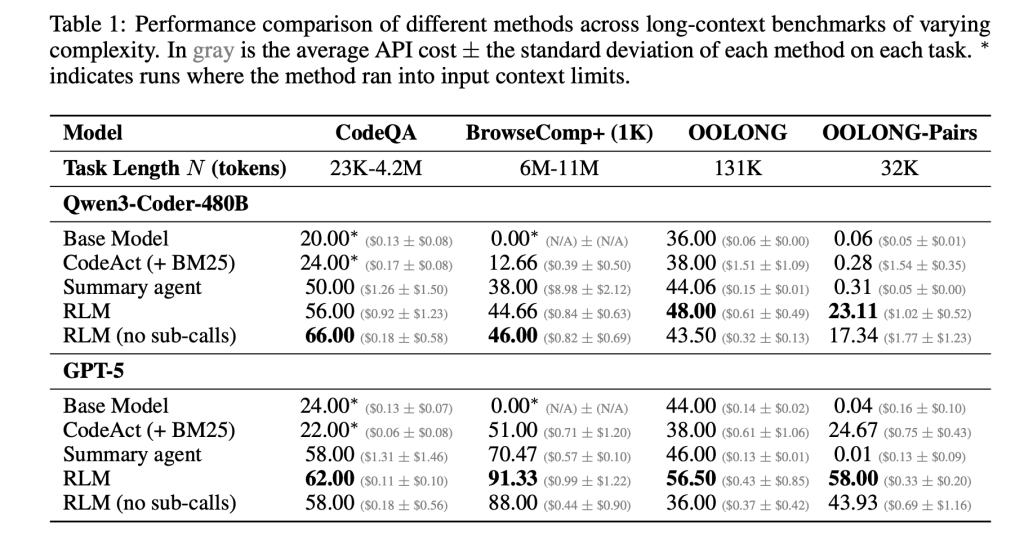

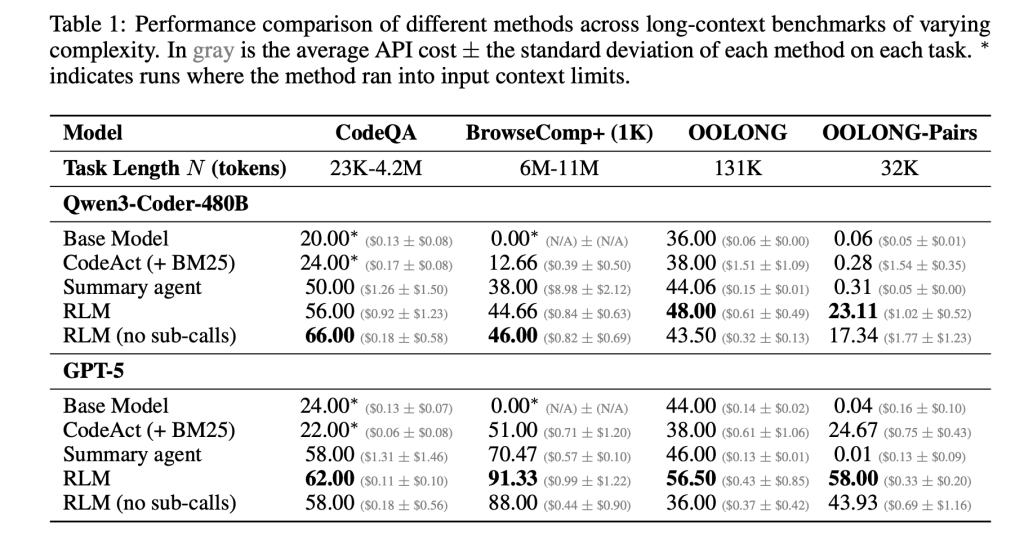

The research paper evaluates this idea on four long-context benchmarks with different computational structures. S-NIAH is a constant-complexity needle-in-a-haystack task. BrowseComp-Plus is a multi-hop, web-style question answering benchmark over up to 1,000 documents. OOLONG is a linear-complexity long-context reasoning task in which the model must transform many entries and then aggregate them. OOLONG Pairs raises the difficulty further with quadratic pairwise aggregation over the input. These tasks stress both context length and reasoning depth, not just retrieval.
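To make the difference between linear and quadratic aggregation concrete, here is a toy sketch. The function names and the parity-based predicates are hypothetical and unrelated to the actual benchmark data; the point is only how the amount of work grows with the number of entries.

```python
from itertools import combinations

def linear_aggregate(entries, transform):
    """OOLONG-like shape: transform every entry once, then aggregate."""
    return sum(transform(e) for e in entries)

def pairwise_aggregate(entries, relate):
    """OOLONG Pairs-like shape: evaluate every unordered pair of entries."""
    return sum(relate(a, b) for a, b in combinations(entries, 2))

entries = list(range(1000))

# Linear: 1,000 entries need 1,000 per-entry decisions.
evens = linear_aggregate(entries, lambda e: e % 2 == 0)

# Quadratic: 1,000 entries produce n * (n - 1) / 2 pairs to examine.
pair_count = len(entries) * (len(entries) - 1) // 2
same_parity_pairs = pairwise_aggregate(entries, lambda a, b: a % 2 == b % 2)

print(evens)              # 500
print(pair_count)         # 499500
print(same_parity_pairs)  # 249500
```

Going from 1,000 per-entry decisions to roughly half a million pairwise ones is what makes the pairwise setting so much harder for a single forward pass over the prompt.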

On these benchmarks, RLMs show large accuracy gains over direct LLM calls and common long-context agents. For GPT-5 on CodeQA, a long-document question answering setup, the base model reaches 24.00 accuracy and a summarization agent reaches 41.33, while the RLM reaches 62.00 and the RLM without recursion reaches 66.00. For Qwen3-Coder-480B-A35B, the base model scores 20.00, a CodeAct retrieval agent scores 52.00, the RLM scores 56.00, and the REPL-only variant scores 44.66.

The gains are largest on OOLONG Pairs, the hardest setting. For GPT-5, the direct model is nearly unusable with an F1 of 0.04. The summarization and CodeAct agents sit at around 0.01 and 24.67. The full RLM reaches 58.00 F1, and the non-recursive REPL variant still reaches 43.93. For Qwen3-Coder, the base model stays below 0.10 F1, while the full RLM reaches 23.11 and the REPL-only version reaches 17.34. These numbers show that both the REPL and recursive sub-calls matter on dense quadratic tasks.

BrowseComp-Plus highlights effective context extension. The corpus ranges from roughly 6 million to 11 million tokens, exceeding GPT-5's 272,000-token context window by two orders of magnitude. RLM with GPT-5 maintains strong performance even when 1,000 documents are placed in the environment variable, while the standard GPT-5 baseline degrades as the number of documents grows. On this benchmark, RLM with GPT-5 achieves around 91.33 accuracy at an average cost of $0.99 per query, while a hypothetical model that reads the full context directly would cost between $1.50 and $2.75 at current pricing.

The research paper also analyzes RLM execution trajectories. Several behavioral patterns emerge. The model often starts with a peek step that inspects the first few thousand characters of the context. It then uses grep-style filtering with regular expressions or keyword search to narrow down the relevant lines. For more complex queries, it splits the context into chunks and calls a recursive LM on each chunk to perform labeling or extraction, followed by programmatic aggregation. For long-output tasks, the RLM stores partial outputs in variables and stitches them together, which bypasses the output length limits of the base model.
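The trajectory patterns above (peek, grep, chunk plus sub-call, aggregate, stitch) can be illustrated with a toy log file. Every helper name here is hypothetical, and `sub_lm_extract` is a regex stand-in for what would be a recursive LM call in a real RLM.

```python
import re

def peek(context: str, n_chars: int = 2000) -> str:
    """Peek step: look at the start of the context to learn its format."""
    return context[:n_chars]

def grep(context: str, pattern: str) -> list:
    """Grep step: keep only the lines that match a regular expression."""
    return [line for line in context.splitlines() if re.search(pattern, line)]

def chunk(lines: list, size: int) -> list:
    """Chunk step: group the filtered lines into pieces small enough for a sub-call."""
    return ["\n".join(lines[i:i + size]) for i in range(0, len(lines), size)]

def sub_lm_extract(piece: str) -> list:
    """Hypothetical stand-in for a recursive LM call that extracts job ids."""
    return re.findall(r"job=(\d+)", piece)

# Toy context: 100 log lines, every seventh job fails.
context = "\n".join(
    f"2024-01-01 job={i} status={'ERROR' if i % 7 == 0 else 'OK'}"
    for i in range(100)
)

head = peek(context, 60)                        # 1. inspect the format
error_lines = grep(context, r"status=ERROR")    # 2. narrow down to relevant lines
pieces = chunk(error_lines, 5)                  # 3. split into sub-call sized pieces
partials = [sub_lm_extract(p) for p in pieces]  # 4. one sub-call per piece
job_ids = [j for part in partials for j in part]  # 5. programmatic aggregation
report = ", ".join(job_ids)                     # 6. stitch partial outputs together
print(len(error_lines), len(pieces), report)
```

Because the partial results live in ordinary variables, the stitched `report` can grow beyond what a single model response could emit in one go.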

A new take from Prime Intellect

The Prime Intellect team has turned this concept into a concrete environment, RLMEnv, integrated into its verifiers stack and Environments Hub. In this design, the main RLM has only a Python REPL, while the sub-LLMs receive the heavy tools such as web search and file access. The REPL exposes an `llm_batch` function so that the root model can fan out many sub-queries in parallel, and an `answer` variable where the final solution must be written and flagged as ready. This isolates token-heavy tool output from the main context and lets the RLM delegate expensive operations to sub-models.
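A rough sketch of the fan-out and answer-flagging idea follows. It is not RLMEnv's actual code: the `sub_llm` stub, the `llm_batch` signature, and the dictionary shape of `answer` (a `content` field plus a `ready` flag) are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

def sub_llm(prompt: str) -> str:
    """Hypothetical sub-LLM call. In a real environment this model would own
    the heavy tools (web search, file access); here it is a simple stub."""
    return f"result for: {prompt}"

def llm_batch(prompts, max_workers: int = 8):
    """Fan out many sub-queries in parallel; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(sub_llm, prompts))

# Assumed shape of the answer variable: content plus a readiness flag.
answer = {"content": "", "ready": False}

queries = [f"summarize document {i}" for i in range(5)]
results = llm_batch(queries)

# Only the compact results reach the root model's side; the verbose tool
# output stays inside the sub-LLM calls.
answer["content"] = "\n".join(results)
answer["ready"] = True
print(answer["content"])
```

Threads are used here only because the stub is trivial; the design point is that the root model sees short results rather than raw tool output.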

The Prime Intellect team evaluates this implementation in four environments. DeepDive tests web research with search and open tools and very verbose pages. Math Python exposes a Python REPL for hard competition-style math problems. Oolong reuses the long-context benchmark inside RLMEnv. Verbatim Copy focuses on exact reproduction of complex strings across content types such as JSON, CSV, and mixed code. Across these environments, both GPT-5-mini and the INTELLECT-3-MoE model gain from the RLM scaffold in success rate and in robustness to very long contexts, especially when tool output would otherwise swamp the model context.

Both the research paper's authors and the Prime Intellect team stress that current implementations are not fully optimized. RLM calls are synchronous, recursion depth is limited, and very long trajectories produce heavy tails in the cost distribution. The real opportunity is to combine RLM scaffolding with dedicated reinforcement learning so that models learn better chunking, recursion, and tool-usage policies over time. If that happens, RLMs provide a framework in which improvements in base models and system design translate directly into more capable long-horizon agents that can consume environments of 10 million or more tokens without degrading as the context grows.

Key takeaways

- RLMs reframe long context as an environment variable: Recursive Language Models treat the entire prompt as an external string in a Python-style REPL. The LLM inspects and transforms it through code instead of ingesting all tokens directly into the Transformer context.

- Inference-time recursion extends context to 10 million plus tokens: RLMs let the root model recursively call sub-LLMs on selected snippets of the context. This allows effective handling of prompts up to about two orders of magnitude longer than the base context window, reaching 10 million plus tokens on BrowseComp-Plus style workloads.

- RLMs outperform common long-context scaffolds on hard benchmarks: Across S-NIAH, BrowseComp-Plus, OOLONG, and OOLONG Pairs, RLM variants of GPT-5 and Qwen3-Coder improve accuracy and F1 over direct model calls, retrieval agents such as CodeAct, and summarization agents, while keeping per-query cost comparable or lower.

- REPL-only variants already help, but recursion matters for quadratic tasks: Ablations that expose only the REPL, without recursive sub-calls, still improve performance on some tasks. This shows the value of offloading context into the environment, but the full RLM is needed for large gains in information-dense settings such as OOLONG Pairs.

- Prime Intellect operationalizes RLMs through RLMEnv and INTELLECT-3: The Prime Intellect team implements the RLM paradigm as RLMEnv, where the root LM controls a sandboxed Python REPL, calls tools via sub-LMs, and writes the final result to an `answer` variable. The team reports consistent gains on the DeepDive, Math Python, Oolong, and Verbatim Copy environments with models such as INTELLECT-3.

Asif Razzaq is the CEO of Marktechpost Media Inc. As an entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform known for in-depth coverage of machine learning and deep learning news that is both technically sound and easy for a wide audience to understand. The platform receives over 2 million views per month.