Ask ChatGPT something like “Scrape all of tech for me and summarise trends and patterns based on what I think is of interest,” and the answer will be generic.

That’s because ChatGPT is built for general use cases. It applies standard search methods to retrieve information, usually limiting itself to a few web pages.

This article shows you how to build a niche agent that can aggregate across all of tech, millions of texts, filter the data based on a persona, and find patterns and themes you can act on.

The point of this workflow is to avoid sitting and scrolling through forums and social media yourself. The agent should do it for you and capture anything useful.

This can be pulled off using a unique data source, a controlled workflow, and a few prompt chaining techniques.

By caching data, you can keep costs down to just a few cents per report.

If you want to try the bot without booting it up yourself, you can join this Discord channel. There’s a repository here if you want to build it yourself.

This article focuses on the general architecture and how to build it. The code itself is on GitHub.

Notes on building

If you’re new to building with agents, this one may feel like it isn’t groundbreaking enough.

Still, if you want to build something that works, you need to apply quite a lot of software engineering to your AI applications. Even though LLMs can take actions on their own, they still need guidance and guardrails.

For workflows like this one, where there are clear paths the system should take, you should build a more structured, “workflow-like” system. If you have a human in the loop, you can work with something more dynamic.

The reason this workflow works so well is that I have a very good data source behind it. Without this data moat, the workflow wouldn’t do any better than ChatGPT.

Data preparation and caching

Before you can build an agent, you need to prepare a data source that it can tap into.

What many people get wrong when working with LLM systems is the belief that AI can process and aggregate data entirely on its own.

At some point, we may be able to give agents enough tools to build on their own, but we aren’t there yet in terms of reliability.

So when building a system like this, you need a data pipeline that is just as clean as for any other system.

The system I built here uses a data source that I already have, which means I understand how to teach the LLM to use it.

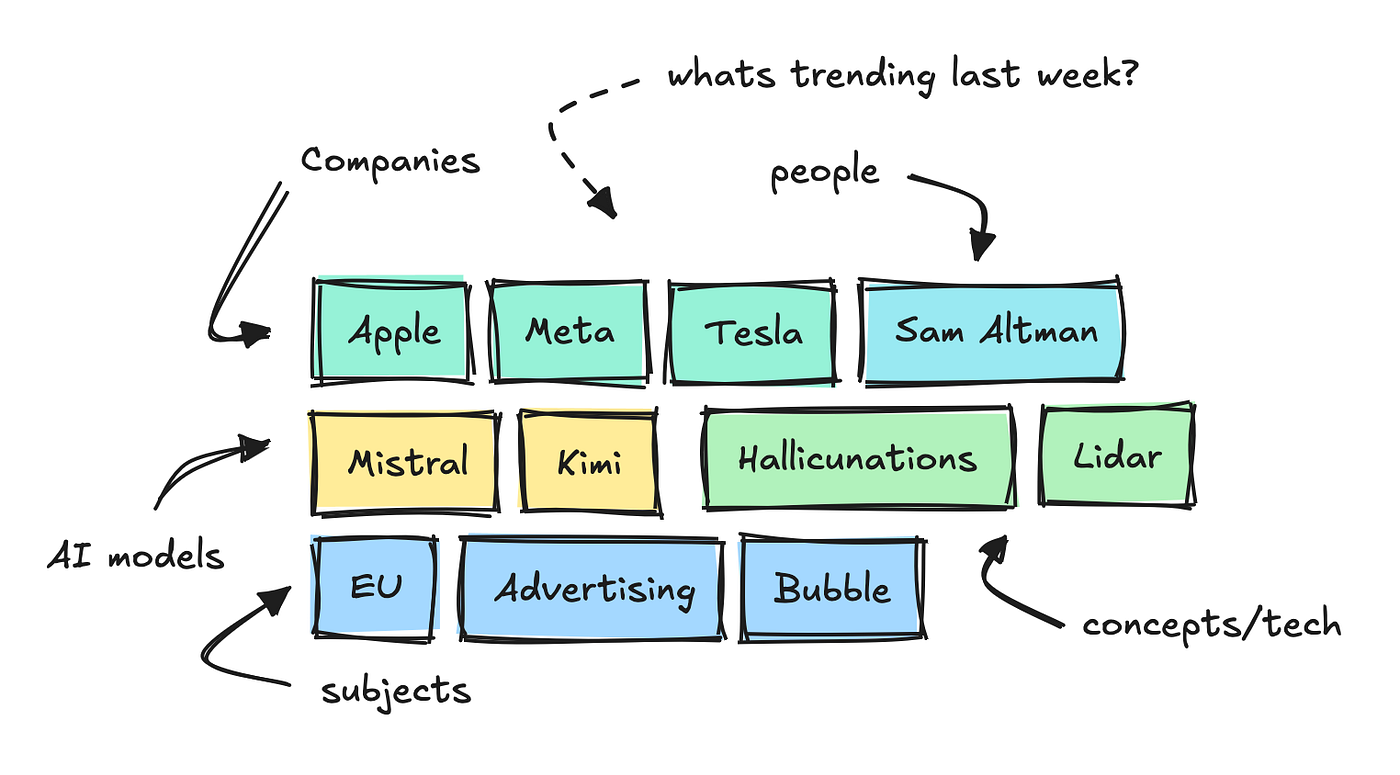

It ingests thousands of texts per day from tech forums and websites, and uses small NLP models to extract the main keywords, categorise them, and analyse sentiment.

This lets us see which keywords are trending within different categories over a specific period.

To build this agent, I added another endpoint that collects “facts” for each of these keywords.

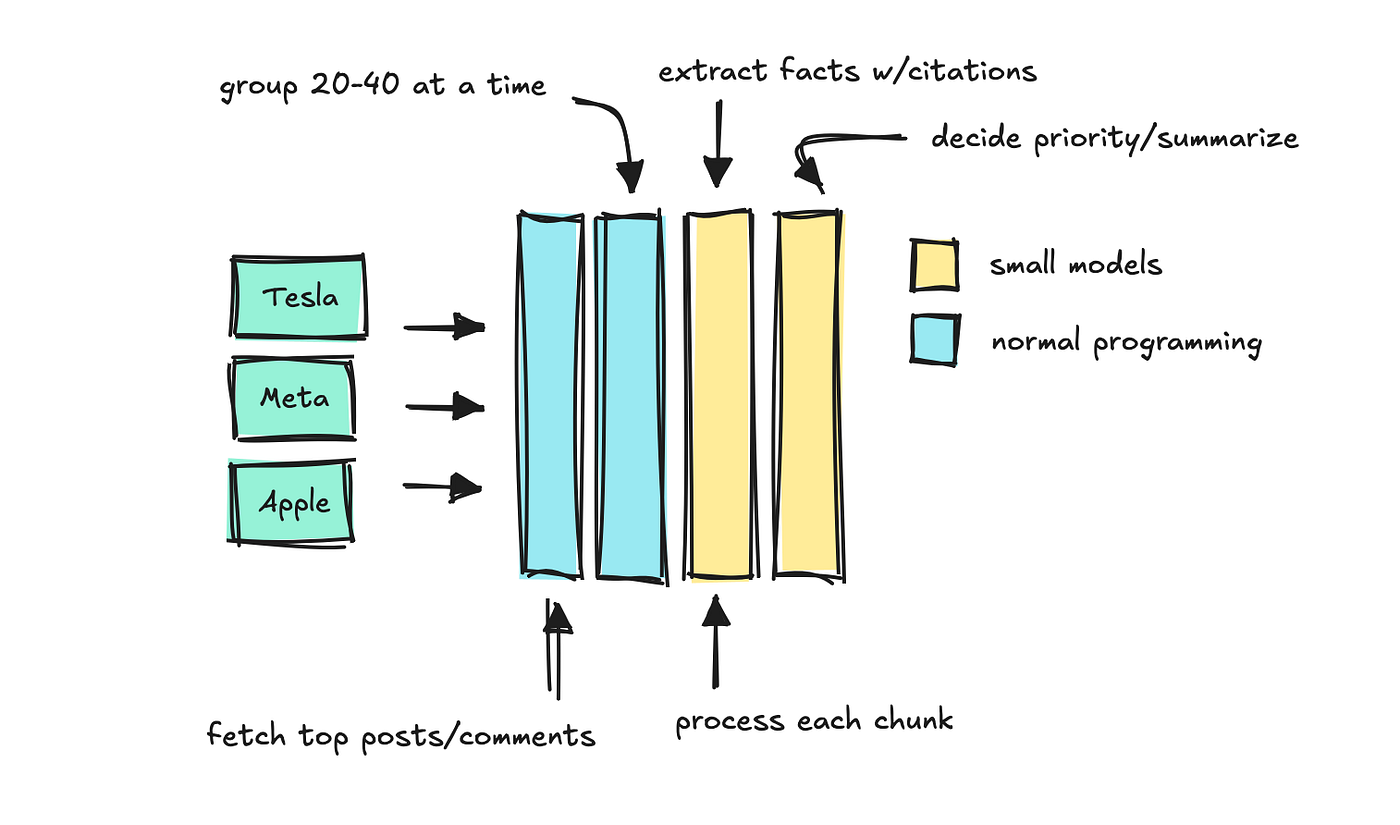

This endpoint receives a keyword and a time period, and the system sorts comments and posts by engagement. It then processes the texts in chunks with a small model that decides which “facts” to keep.

A final LLM then summarises which facts are most important, keeping the source citations intact.

This is a kind of prompt chaining process, built to mimic the LlamaIndex citation engine.
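The three steps of that endpoint (sort by engagement, filter facts chunk by chunk with a small model, summarise with a final LLM) can be sketched roughly as follows. This is an illustration, not the author’s code: the `Post` shape, the function names, and the chunk size are assumptions, and the two model calls are passed in as plain callables so the sketch stays independent of any provider.

```python
from typing import Callable, Dict, List

# Hypothetical post shape: {"id": str, "text": str, "engagement": int}
Post = Dict[str, object]


def chunked(items: List[Post], size: int) -> List[List[Post]]:
    """Split a list into consecutive chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def extract_facts(
    posts: List[Post],
    pick_facts: Callable[[List[Post]], List[str]],  # stand-in for the small model
    summarise: Callable[[List[str]], str],          # stand-in for the final LLM
    chunk_size: int = 20,                           # assumed value
) -> str:
    # Step 1: most engaged posts and comments first.
    ranked = sorted(posts, key=lambda p: p["engagement"], reverse=True)

    # Step 2: the small model decides, chunk by chunk, which facts to keep.
    kept: List[str] = []
    for chunk in chunked(ranked, chunk_size):
        kept.extend(pick_facts(chunk))

    # Step 3: one final call summarises the kept facts.
    # Citation markers travel inside the fact strings, so they stay intact.
    return summarise(kept)
```

Passing the models in as callables also makes each link of the chain easy to test with fakes before any real model is wired in.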

The first time the endpoint is called for a keyword, it can take up to half a minute to complete. Repeated requests take just a few milliseconds because the system caches the results.
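A minimal in-memory sketch of that caching behaviour is shown below. The class name, the one-day expiry, and the key normalisation are assumptions made for illustration; the actual system may well cache in a database instead.

```python
import time
from typing import Callable, Dict, List, Tuple


class FactCache:
    """Cache facts per (keyword, period) so repeat requests skip the slow path."""

    def __init__(
        self,
        compute: Callable[[str, str], List[str]],  # the slow fact-extraction call
        ttl_seconds: float = 24 * 60 * 60,         # assumed: entries expire after a day
        clock: Callable[[], float] = time.time,    # injectable for testing
    ) -> None:
        self._compute = compute
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[Tuple[str, str], Tuple[float, List[str]]] = {}

    def get(self, keyword: str, period: str) -> List[str]:
        # Normalise the keyword so "Rust" and "rust " share one entry.
        key = (keyword.strip().lower(), period)
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # fast path: served from the cache
        facts = self._compute(keyword, period)  # slow path: first request
        self._store[key] = (now, facts)
        return facts
```

Only the first caller for a given keyword and period pays for the model calls; everyone after that reads the stored result until it expires.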

As long as the models are small enough, the cost of running this on a few hundred keywords per day is minimal. The system can then run several keywords in parallel.

You can probably imagine by now that we can build a system that fetches these keywords and facts and builds different reports with LLMs.

When to use smaller and larger models

Before moving on, let’s mention that choosing the right model size matters.

I think this is on everyone’s mind right now.

There are very advanced models you can use for any workflow, but as you apply more and more LLMs to these applications, the number of calls per run grows quickly, and that can get expensive.

So, where possible, use smaller models.

You saw that I use a small model to cite and group sources in chunks. Other tasks that suit small models well include routing and parsing natural language into structured data.

If you find that the model is faltering, you can break the task down into smaller problems and use prompt chaining: do one thing first, then use that result to do the next.

You will still want a larger LLM when you need to find patterns in very large texts, or when communicating with humans.
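One way to make that split explicit is a small lookup that every call site goes through. The task names and tier labels here are hypothetical; they only restate the examples given above.

```python
from enum import Enum


class Tier(Enum):
    SMALL = "small"
    LARGE = "large"


# Hypothetical task names, grouped the way the text describes.
SMALL_TASKS = {"routing", "parse_to_json", "chunk_fact_filter"}
LARGE_TASKS = {"find_patterns_in_large_text", "write_for_humans"}


def pick_tier(task: str) -> Tier:
    """Return the model tier for a task, failing loudly on unknown tasks."""
    if task in SMALL_TASKS:
        return Tier.SMALL
    if task in LARGE_TASKS:
        return Tier.LARGE
    raise ValueError(f"no model tier configured for task {task!r}")
```

Raising on unknown tasks, rather than defaulting to the large model, keeps a new call site from quietly adding cost.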

In this workflow the cost is minimal because the data is cached and small models handle most tasks. The only unique large LLM calls are the final ones.

How this agent works

Let’s go through how the agent works under the hood. I built the agent to run inside Discord, but that’s not the focus here. The focus is on the agent architecture.

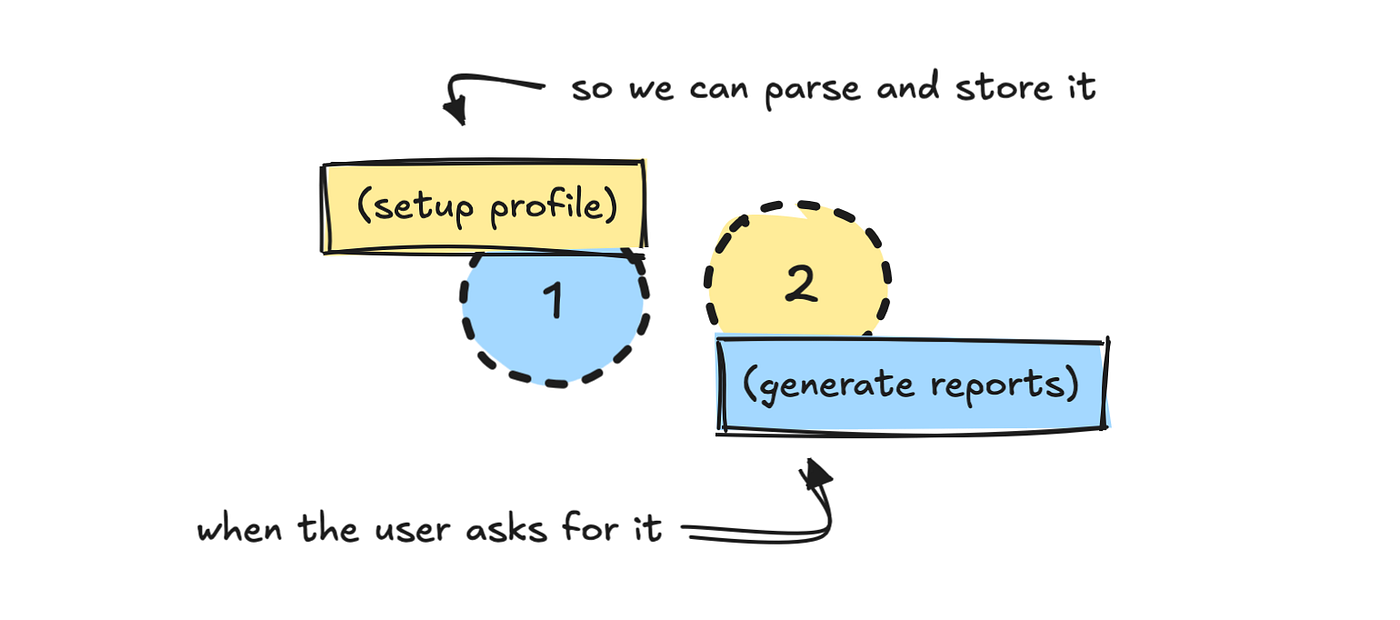

I split the process into two parts: one for setup and one for reports. The first part asks the user to set up a profile.

Since I already know how to work with the data source, I’ve built a fairly extensive system prompt that helps the LLM translate those inputs into something we can fetch data with later.

PROMPT_PROFILE_NOTES = """
You are tasked with defining a user persona based on the user's profile summary.

Your job is to:
1. Decide on a short character description for the user.
2. Pick the most relevant categories (major and minor).
3. Choose keywords the user should track, strictly following the rules below (max 6).
4. Decide on a time period (based only on what the user asks for).
5. Decide whether the user prefers concise or detailed summaries.

Step 1. Persona
- Write a short description of how we should think about the user.
- Examples:
  - CMO for non-technical product → "non-technical, skip jargon, focus on product keywords."
  - CEO → "only include highly relevant keywords, no technical overload, straight to the point."
  - Developer → "technical, interested in detailed developer conversation and technical terms."

[...]
"""

We also define a schema for the output we need.

from typing import List

from pydantic import BaseModel


# Structured output the LLM must return for a user profile.
class ProfileNotesResponse(BaseModel):
    character: str
    major_categories: List[str]
    minor_categories: List[str]
    keywords: List[str]
    time_period: str
    concise_summaries: bool

Without domain knowledge of the API and how it works, it’s unlikely that an LLM would figure out how to do this on its own.

You could build a more extensive system where the LLM first tries to learn the API or the systems it is supposed to use, but that would make the workflow more unpredictable and costly.

For tasks like this, I always try to use structured outputs in JSON format. That way you can validate the result and rerun the call if validation fails.

This is the easiest way to work with LLMs in a system, especially when there is no human in the loop to check what the model returns.
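The validate-and-rerun loop can be sketched like this. To keep the example free of third-party dependencies it checks the JSON by hand instead of going through the Pydantic schema; the field names, the retry limit, and the `call_llm` callable are assumptions for illustration.

```python
import json
from typing import Callable, Dict

# Expected fields and their JSON types (assumed to mirror the profile schema).
REQUIRED = {
    "character": str,
    "major_categories": list,
    "minor_categories": list,
    "keywords": list,
    "time_period": str,
    "concise_summaries": bool,
}


def validate_profile(raw: str) -> Dict[str, object]:
    """Parse the model reply and raise ValueError if it breaks the schema."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected in REQUIRED.items():
        if not isinstance(data.get(field), expected):
            raise ValueError(f"field {field!r} is missing or has the wrong type")
    if len(data["keywords"]) > 6:  # the prompt allows at most 6 keywords
        raise ValueError("too many keywords")
    return data


def profile_with_retries(
    call_llm: Callable[[str], str],  # stand-in for the real model call
    user_text: str,
    max_attempts: int = 3,           # assumed limit
) -> Dict[str, object]:
    last_error = None
    for _ in range(max_attempts):
        try:
            return validate_profile(call_llm(user_text))
        except ValueError as err:
            last_error = err  # invalid reply: ask the model again
    raise RuntimeError(f"no valid profile after {max_attempts} attempts") from last_error
```

Capping the attempts matters when nobody is watching the output: a model that keeps returning malformed JSON should surface as an error rather than loop indefinitely.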

Once the LLM has translated the user profile into the properties defined in the schema, we store the profile somewhere. I used MongoDB, but that is optional.

Storing the character description isn’t strictly necessary, but you do need to translate what the user says into a form that lets you fetch data.

Generating the report

Let’s look at what happens in the second step, when a user triggers a report.

When a person will get a success /information The command retrieves the preliminary saved person profile knowledge, with or with out a interval set.

This gives the system the context it needs to fetch relevant data, using both the categories and the keywords tied to the profile. The default time period is weekly.

From this, we get a list of top and trending keywords for the selected time period that may be of interest to the user.

Without this data source, building something like this would have been difficult. The data needs to be prepared in advance for the LLM to work with it properly.

After fetching the keywords, it could make sense to add an LLM step that filters out keywords that are irrelevant to the user. I didn’t do that here.

The more unnecessary information an LLM is handed, the harder it becomes for it to focus on what really matters. Your job is to make sure that whatever you feed it is relevant to the user’s actual question.

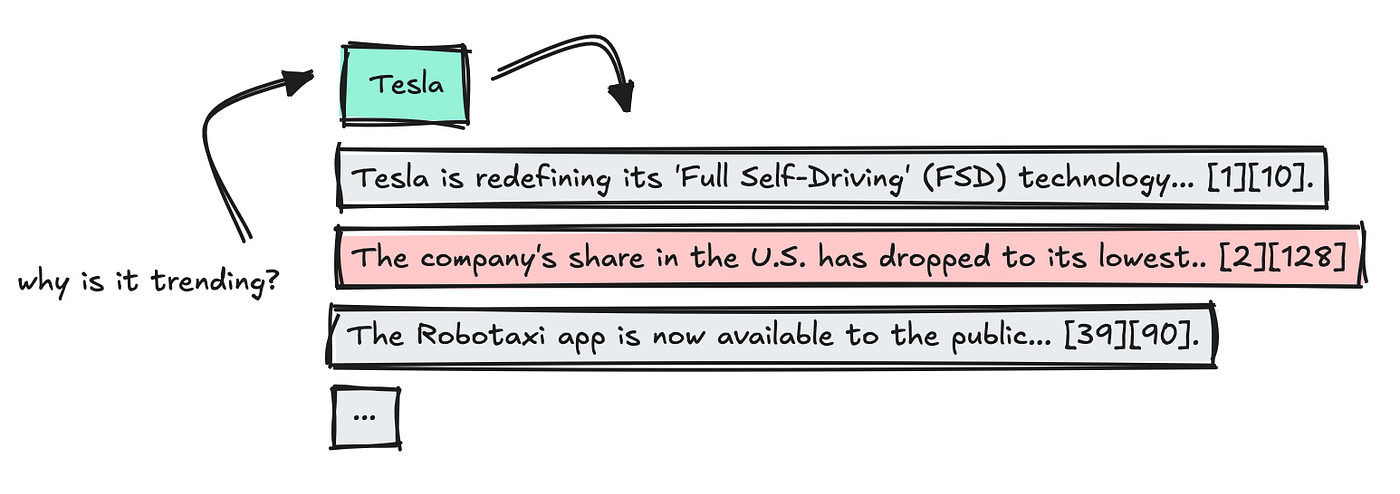

Next, we use the endpoint created earlier, which holds the cached “facts” for each keyword. This gives us information that has already been vetted and sorted for each one.

We run the keyword calls in parallel to speed things up, but the first person to request a new keyword still has to wait a bit longer.
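Running the per-keyword calls concurrently might look like the sketch below. The `fetch_facts` coroutine stands in for the real endpoint call, and the concurrency cap is an assumption added so that a long keyword list does not flood the endpoint.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List


async def fetch_all(
    keywords: List[str],
    period: str,
    fetch_facts: Callable[[str, str], Awaitable[List[str]]],  # hypothetical endpoint call
    max_concurrency: int = 5,                                  # assumed cap
) -> Dict[str, List[str]]:
    semaphore = asyncio.Semaphore(max_concurrency)

    async def fetch_one(keyword: str) -> List[str]:
        # At most `max_concurrency` requests are in flight at once.
        async with semaphore:
            return await fetch_facts(keyword, period)

    # gather returns results in the same order as the inputs.
    results = await asyncio.gather(*(fetch_one(k) for k in keywords))
    return dict(zip(keywords, results))
```

With cached keywords answering in milliseconds, the total wait is dominated by whichever keyword is being computed for the first time.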

Once the results are in, we combine the data, remove duplicates, and parse the citations so that each fact links back to a specific source via a keyword number.
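A rough sketch of that merge step follows. The `(text, source_url)` fact shape and the `keyword.source` reference format are assumptions; the article only says that each fact links to a source via a keyword number.

```python
from typing import Dict, List, Tuple

Fact = Tuple[str, str]  # (text, source_url), a hypothetical shape


def merge_facts(by_keyword: Dict[str, List[Fact]]) -> Tuple[List[str], Dict[str, str]]:
    """Combine facts from all keywords, drop duplicates, and number the citations."""
    seen = set()
    lines: List[str] = []
    sources: Dict[str, str] = {}
    for kw_no, (_keyword, facts) in enumerate(by_keyword.items(), start=1):
        src_no = 0
        for text, url in facts:
            # Treat facts as duplicates if they match after
            # lowercasing and collapsing whitespace.
            key = " ".join(text.lower().split())
            if key in seen:
                continue
            seen.add(key)
            src_no += 1
            ref = f"{kw_no}.{src_no}"  # keyword number, then source number
            lines.append(f"{text} [{ref}]")
            sources[ref] = url
    return lines, sources
```

The LLM only ever sees the short `[1.2]` style markers, and the `sources` mapping turns them back into links when the report is rendered.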

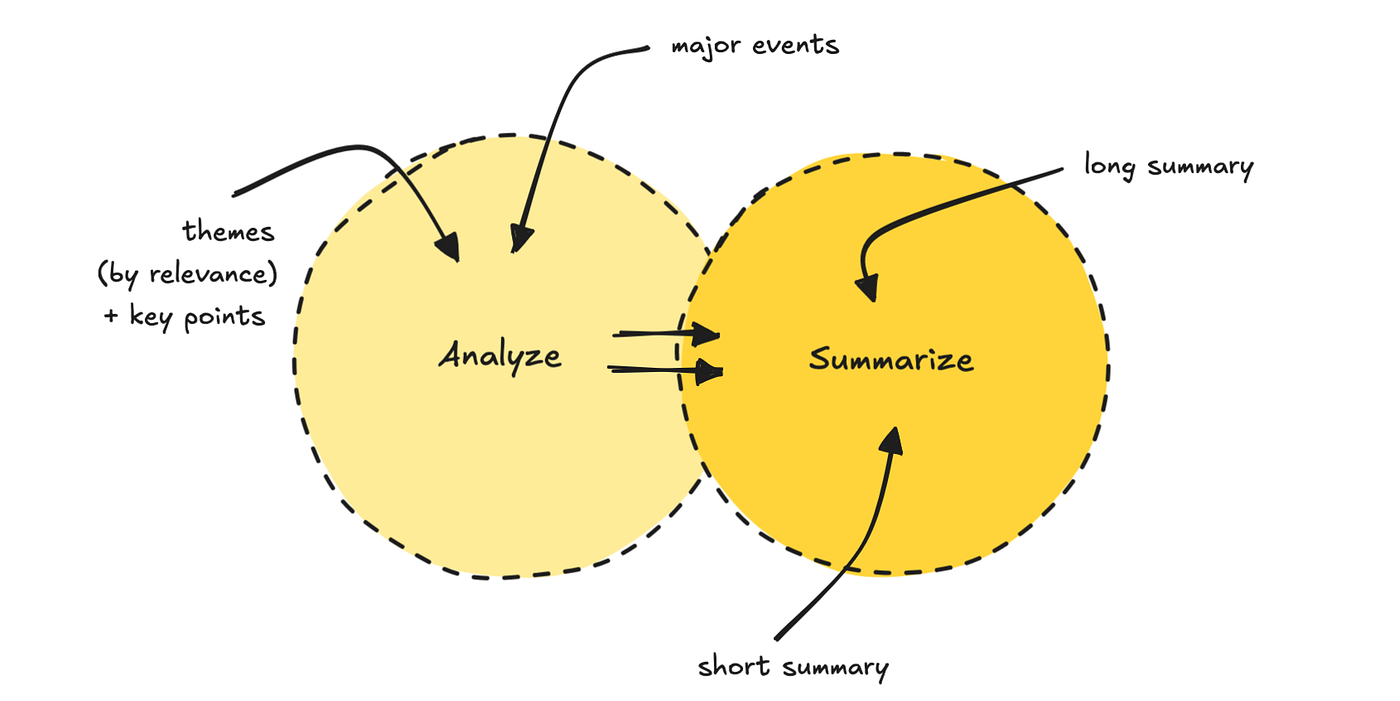

We then run the data through a prompt chaining process. The first LLM finds 5 to 7 themes and ranks them by relevance, based on the user profile. It also pulls out the key points.

The second LLM pass uses both the themes and the original data to generate two summaries of different lengths, along with a title.

Splitting the work this way reduces the cognitive load on the model.
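The two passes can be sketched as one function with two model calls. The prompt wording, the JSON reply shapes, and the `call_llm` callable are assumptions; in practice the two passes could also use different models.

```python
import json
from typing import Callable, Dict, List


def build_report(
    facts: List[str],
    persona: str,
    call_llm: Callable[[str], str],  # stand-in for the real model call
) -> Dict[str, object]:
    facts_text = "\n".join(facts)

    # Pass 1: only find and rank themes, nothing else.
    themes_prompt = (
        f"User persona: {persona}\n"
        "Find 5-7 themes in the facts below, ranked by relevance to this user. "
        "Reply with a JSON list of strings.\n\n" + facts_text
    )
    themes = json.loads(call_llm(themes_prompt))
    if not isinstance(themes, list) or not 5 <= len(themes) <= 7:
        raise ValueError("expected a JSON list of 5 to 7 themes")

    # Pass 2: write the report from the themes plus the original facts.
    report_prompt = (
        "Themes, most relevant first:\n"
        + "\n".join(f"- {theme}" for theme in themes)
        + "\n\nFacts (keep citation markers intact):\n"
        + facts_text
        + '\n\nReply with JSON: {"title": ..., "short": ..., "long": ...}'
    )
    report = json.loads(call_llm(report_prompt))
    if not isinstance(report, dict) or {"title", "short", "long"} - set(report):
        raise ValueError("expected title, short, and long in the reply")
    return {"themes": themes, **report}
```

Because the second prompt receives the ranked themes as given, the model writing the summaries does not also have to decide what matters.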

This last step of building the report takes the most time, since I chose to use a reasoning model like GPT-5.

You could swap it for something faster, but I find that the more advanced models do better at this last step.

The full process takes a few minutes, depending on how much has already been cached that day.

You can see the finished result below.

If you want to look at the code and build this bot yourself, you can find it here. If you just want to generate a report, you can join this channel.

I have plans to improve it, and if you find it useful, I’d be happy to hear your feedback.

And if you want a challenge, you can rebuild it into something else, like a content generator.

Notes on building agents

This is by no means a blueprint for building with LLMs, as every agent you build will be different. Still, you can see the level of software engineering it demands.

LLMs, at least for now, do not remove the need for good software and data engineers.

This workflow mostly uses LLMs to translate natural language into JSON, which then moves through the system programmatically. This is the easiest way to control the agent process, but it’s not what people usually imagine when they think of AI applications.

There are situations where a more free-moving agent is ideal, especially when there is a human in the loop.

Either way, I hope you learned something or got inspired to build something yourself.

If you want to follow my writing, you can follow me here, on my website, Substack, or LinkedIn.

❤