Python can feel intimidating if you're not a developer. You see scripts flying around Twitter, hear people talking about automation and APIs, and wonder whether it's worth learning, or even possible, without a degree in computer science.

But the truth is that SEO is full of repetitive, time-consuming tasks that Python can automate in minutes. Checking for broken links, scraping metadata, analyzing rankings, auditing on-page SEO, and more can all be done with just a few lines of code. And tools like ChatGPT and Google Colab make it easier than ever to get started.

This guide will show you how to get started.

SEO is full of repetitive manual work. Python helps you automate those tasks, extract insights from large datasets (such as tens of thousands of keywords and URLs), and build technical skills that help you tackle SEO issues.

Beyond that, learning Python will help you:

- Understand how websites and web data work (believe it or not, the internet is not a series of tubes).

- Work with developers more effectively (how else do you plan to generate thousands of location-specific pages for that programmatic SEO campaign?).

- Learn programming logic that transfers to other languages and tools, such as building Google Apps Script to automate reports in Google Sheets, or writing Liquid templates for dynamic page creation in a headless CMS.

And in 2025, you're not learning Python alone. LLMs can explain error messages. Google Colab lets you run notebooks without any setup. It's easier than ever.

LLMs can easily make sense of most error messages, no matter how basic the mistake behind them.

You don't need to become an expert or install a complex local setup. You need a browser, curiosity, and a willingness to break things.

I recommend starting with a hands-on, beginner-friendly course. I used Replit's 100 Days of Python and highly recommend it.

Here's what you need to understand:

1. Tools to write and run Python

Before writing Python code, you need a place to do it. That's what we call an "environment." Think of it as a workspace where you can type, test, and run scripts.

Choosing the right environment is important because it affects how easily you can get started and whether you run into technical problems that slow down your learning.

There are three great options, depending on your preference and experience level.

- Replit: A browser-based IDE (integrated development environment), which means it gives you everything you need to write, run, and debug Python code from your web browser. There's no need to install anything. Sign up, open a new project, and start coding. It also includes AI features that help you write and debug Python scripts in real time. Visit Replit.

- Google Colab: Google's free tool for running Python notebooks in the cloud. It's perfect for SEO tasks that involve data analysis, scraping, or machine learning. You can also share notebooks the way you share Google Docs, which is great for collaboration. Visit Google Colab.

- VS Code + Python interpreter: If you want to work locally or want more control over your setup, install Visual Studio Code and the Python extension. This gives you full flexibility, access to your file system, and support for advanced workflows such as Git versioning and virtual environments. Visit the VS Code website.

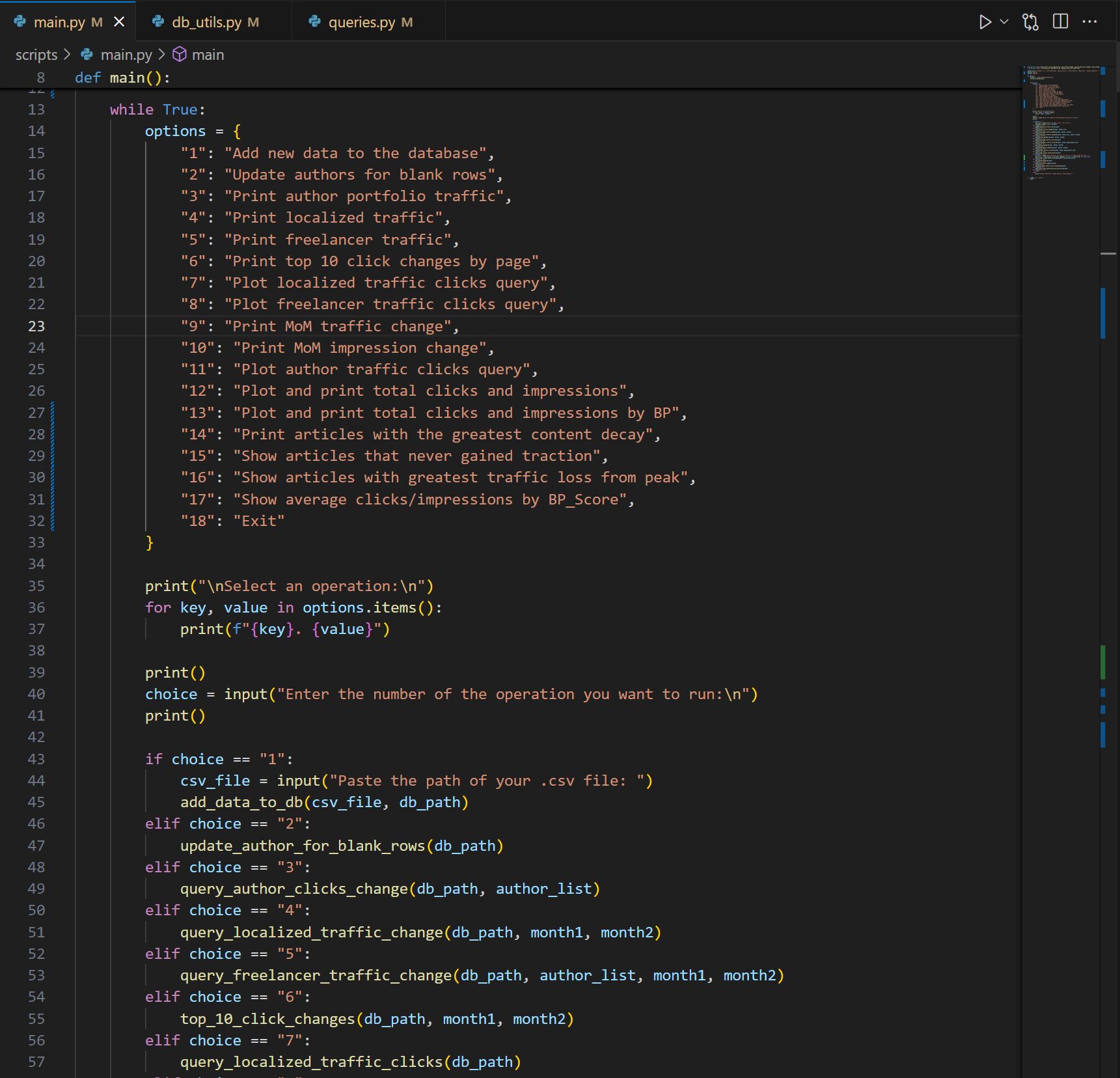

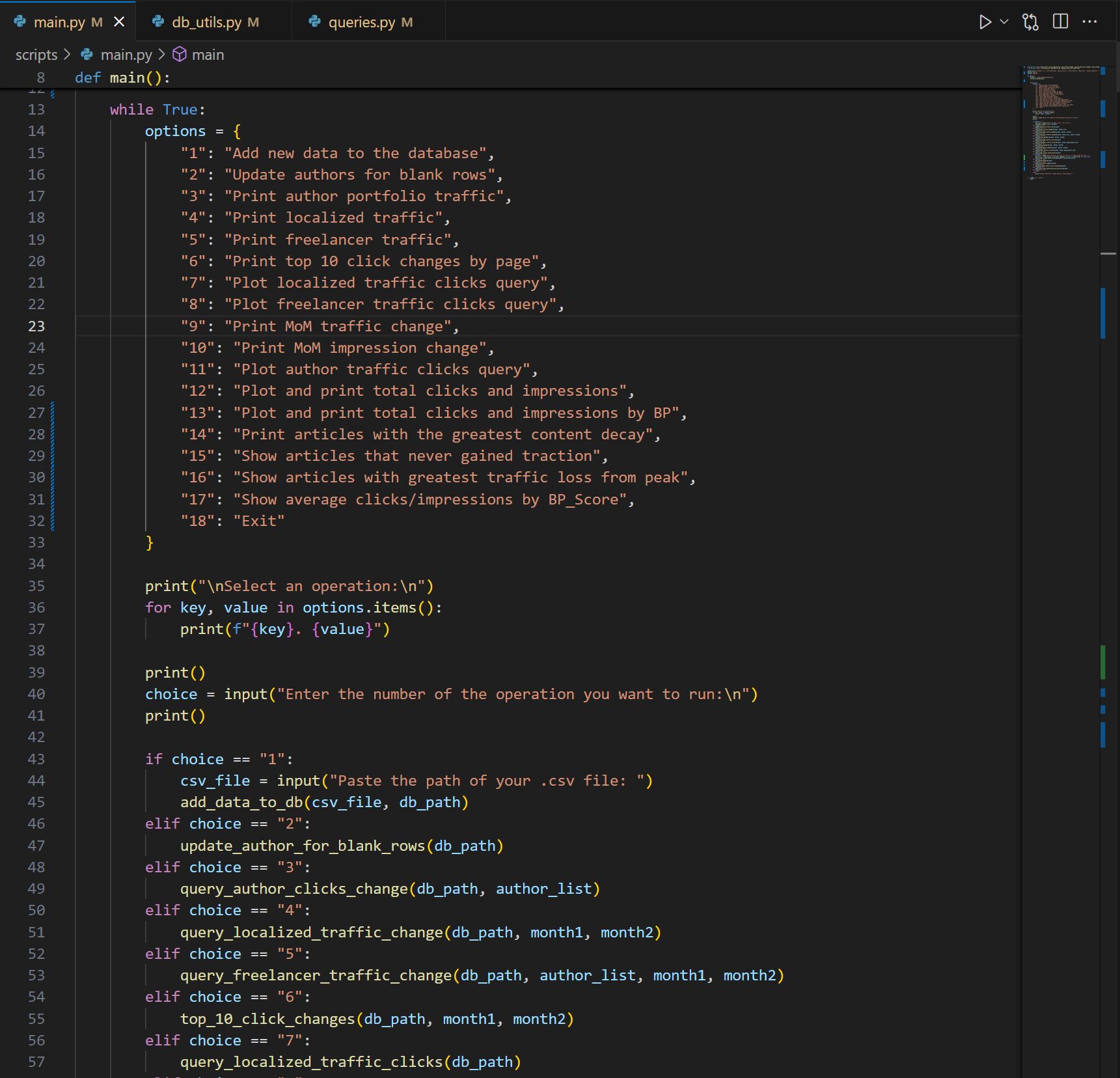

My blog reporting program, built with help from ChatGPT.

You don't need to start here, but in the long run, getting comfortable with local development will give you more power and flexibility as your projects become more complex.

If you're not sure where to start, use Replit or Colab. They remove setup friction, so you can quickly focus on learning and experimenting with SEO scripts.

2. Important concepts to learn early

You don't need to master Python to use it for SEO, but you do need to understand some basic concepts. These are the building blocks of almost every Python script you'll write.

- Variables, loops, and functions: Variables store data, such as a list of URLs. Loops let you repeat an action (for example, checking the HTTP status code of every page). Functions let you bundle actions into reusable blocks. These three ideas drive 90% of automation. You can learn more about these concepts from beginner tutorials such as Python for Beginners – Learn Python Programming or the W3Schools Python Tutorial.

- Lists, dictionaries, and conditionals: Lists are useful for working with collections (for example, all the pages on a site). Dictionaries store data in key-value pairs (such as a URL and its title). Conditionals (such as if statements) let you decide what to do based on what your script finds. These are particularly useful for branching logic or filtering results. You can explore these topics further in the W3Schools Python Data Structures Guide and the control flow tutorials at LearnPython.org.

- Importing and using libraries: Python has thousands of libraries, pre-written packages that do the heavy lifting for you. For example, requests sends HTTP requests, beautifulsoup4 parses HTML, and pandas handles spreadsheets and data analysis. These are used in almost every SEO task. Check out the Python requests module guide by Real Python, Beautiful Soup: Web Scraping in Python for parsing HTML, and the Python Pandas Tutorial from DataCamp for manipulating data in SEO audits.

These are my actual notes from working through Replit's 100 Days of Python course.

These concepts may sound abstract right now, but they come to life once you start using them. And the good news? Most SEO scripts reuse the same patterns over and over. Once you've learned these fundamentals, you can apply them anywhere.
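
To make those building blocks a little more concrete, here is a minimal sketch that uses a list, a dictionary, a loop, a conditional, and a function together. The URLs, titles, and the function name find_missing_titles are made up for illustration; treat this as one possible shape for such a script, not a recipe.

```python
# Variables: a list of (hypothetical) URLs and a dictionary mapping URL -> title
urls = [
    "https://example.com/",
    "http://example.com/old-page",
    "https://example.com/blog",
]
titles = {
    "https://example.com/": "Home",
    "http://example.com/old-page": "",
    "https://example.com/blog": "Blog",
}


def find_missing_titles(url_list, title_by_url):
    """Return the URLs whose title is missing or empty."""
    missing = []
    for url in url_list:                   # loop: repeat the check for each URL
        title = title_by_url.get(url, "")  # dictionary lookup with a default
        if not title:                      # conditional: act only on empty titles
            missing.append(url)
    return missing


missing_titles = find_missing_titles(urls, titles)
print(missing_titles)
```

Swap in your own list of URLs and a different check, and the same loop-plus-condition pattern covers a surprising number of audits.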

3. Core SEO-related Python skills

These are the bread-and-butter skills you'll use in almost every SEO script. They aren't complicated individually, but combined, they let you audit sites, scrape data, create reports, and automate repetitive tasks.

- Make HTTP requests: This is how Python loads web pages behind the scenes. You can use the requests library to check a page's status code (such as 200 or 404), retrieve its HTML content, and simulate crawling. For more information, see Real Python's guide to the requests module.

- Parse HTML: Once you have the page, you'll often want to extract specific elements, such as the title tag, the meta description, or all image alt attributes. That's where beautifulsoup4 comes in. It helps you navigate and search HTML like a pro. This Real Python tutorial explains exactly how it works.

- Read and write CSVs: SEO data lives in spreadsheets: rankings, URLs, metadata, and so on. Python can read and write CSVs using the built-in csv module or the more powerful pandas library. Learn how in DataCamp's Pandas Tutorial.

- Use APIs: Many SEO tools (such as Ahrefs, Google Search Console, and Screaming Frog) provide an interface that lets you retrieve data in structured formats such as JSON. Python's requests and json libraries let you pull that data into your own reports or dashboards. Here's a basic overview of APIs using Python.

The pandas library is incredibly useful for data analysis, reporting, data cleaning, and a hundred other things.

Once you know these four skills, you can build tools to crawl, extract, clean, and analyze SEO data. It's pretty cool.
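
As a rough illustration of how the JSON and CSV skills fit together, here is a small sketch that takes JSON text, filters it, and writes the result as CSV. The payload and its field names (keyword, position, url) are invented for this example and don't match any particular tool's API. With a real API, the JSON text would come from an HTTP response rather than a string in the script.

```python
import csv
import io
import json

# Hypothetical JSON payload, shaped like something an SEO API might return.
# With a real API you would get this text from an HTTP response instead.
payload = '''
{
  "keywords": [
    {"keyword": "python seo", "position": 4, "url": "https://example.com/python-seo"},
    {"keyword": "seo automation", "position": 12, "url": "https://example.com/automation"}
  ]
}
'''

data = json.loads(payload)   # JSON text -> Python dictionary
rows = data["keywords"]      # a list of dictionaries, one per keyword

# Keep only keywords ranking in the top 10
top_10 = [row for row in rows if row["position"] <= 10]

# Write the filtered rows as CSV. io.StringIO stands in for a file here;
# swap in open("report.csv", "w", newline="") to write to disk.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["keyword", "position", "url"])
writer.writeheader()
writer.writerows(top_10)

csv_text = buffer.getvalue()
print(csv_text)
```

The same three steps (load, filter, write) apply whether the data comes from an API, a crawl, or an exported spreadsheet.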

These projects are simple and practical, and each can be built with fewer than 20 lines of code.

1. Check if pages use HTTPS

One of the simplest and most useful checks you can automate in Python is whether a set of URLs uses HTTPS. When auditing client sites or competitor URLs, it helps to know which pages are still using insecure HTTP.

This script reads a list of URLs from a CSV file, makes an HTTP request to each one, and prints the status code. A status code of 200 means the page can be accessed. If the request fails (for example, because the site is down or the protocol is incorrect), you'll see that too.

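    import csv

    import requests

    # Read one URL per row from urls.csv and report each page's status code
    with open('urls.csv', 'r', newline='') as file:
        reader = csv.reader(file)
        for row in reader:
            if not row:
                continue  # skip blank rows
            url = row[0]
            try:
                r = requests.get(url, timeout=10)
                print(f"{url}: {r.status_code}")
            except requests.RequestException:
                print(f"{url}: Failed to connect")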
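
The script above reports status codes but doesn't look at the protocol itself. If you also want to flag which URLs are plain HTTP, one possible approach is to inspect the URL's scheme with the standard library's urllib.parse. This is a minimal sketch: the helper name uses_https and the sample URLs are made up for illustration.

```python
from urllib.parse import urlparse


def uses_https(url):
    """Return True if the URL's scheme is https (case-insensitive)."""
    return urlparse(url).scheme.lower() == "https"


# Hypothetical URLs for illustration
urls = [
    "https://example.com/",
    "http://example.com/contact",
    "HTTPS://example.com/pricing",
]

# Collect every URL that is not served over HTTPS
insecure = [url for url in urls if not uses_https(url)]
print(insecure)
```

Note that this only inspects the URL string. In the script above, r.url holds the final address after any redirects, so passing that to uses_https would tell you where a visitor actually ends up.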

2. Check for missing image alt attributes

Missing alt text is a common on-page problem, especially on older pages and large sites. Instead of manually checking every page, you can use Python to scan any page and flag images with missing alt attributes. This script retrieves the page's HTML, finds every img tag, and prints the src of each image that lacks descriptive alt text.

    import requests
    from bs4 import BeautifulSoup

    url = "https://example.com"
    r = requests.get(url, timeout=10)
    soup = BeautifulSoup(r.text, 'html.parser')

    # Find every img tag and print the src of those with missing or empty alt text
    images = soup.find_all('img')
    for img in images:
        if not img.get('alt'):
            print(img.get('src'))
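
The check above treats an image with no alt attribute and an image with an empty alt="" the same way. An empty alt is often intentional for purely decorative images, so you may want to report the two cases separately. Here is a rough sketch of that idea. To keep it runnable without installing anything, it swaps BeautifulSoup for the standard library's html.parser and works on a made-up HTML snippet. The class name AltAuditor is my own, not part of any library.

```python
from html.parser import HTMLParser


class AltAuditor(HTMLParser):
    """Collect img src values, split by missing vs. empty alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []  # no alt attribute at all
        self.empty_alt = []    # alt="" (often intentional for decorative images)

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src")
        if "alt" not in attributes:
            self.missing_alt.append(src)
        elif not (attributes["alt"] or "").strip():
            self.empty_alt.append(src)


# Hypothetical HTML snippet for illustration
html = """
<img src="/hero.jpg" alt="Team photo">
<img src="/divider.png" alt="">
<img src="/chart.png">
"""

auditor = AltAuditor()
auditor.feed(html)
print(auditor.missing_alt)
print(auditor.empty_alt)
```

To run it against a live page, you could feed it r.text from the requests call in the script above instead of the sample snippet.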

3. Scrape title and meta description tags

With this script, you can supply a list of URLs and have it visit each page to pull its title and meta description tags.