There are many tools that promise to tell AI content apart from human content, but until recently, I thought they didn't work.

AI-generated content isn't as simple to spot as old-fashioned "spun" or plagiarized content. Most AI-generated text can be considered original: it isn't copied from somewhere else on the internet.

Nevertheless, as it turns out, an AI content detector is being built at Ahrefs.

So, to understand how AI content detectors work, I interviewed someone who actually understands the science and research behind them: Yong Keong Yap, a data scientist at Ahrefs and part of the machine learning team.

Further reading

- Junchao Wu, Shu Yang, Runzhe Zhan, Yulin Yuan, Lidia Sam Chao, Derek Fai Wong. 2025. A Survey on LLM-Generated Text Detection: Necessity, Methods, and Future Directions.

- Simon Corston-Oliver, Michael Gamon, Chris Brockett. 2001. A Machine Learning Approach to the Automatic Evaluation of Machine Translation.

- Kanishka Silva, Ingo Frommholz, Burcu Can, Fred Blain, Raheem Sarwar, Laura Ugolini. 2024. Forged-GAN-BERT: Authorship Attribution for LLM-Generated Forged Novels.

- Tom Sander, Pierre Fernandez, Alain Durmus, Matthijs Douze, Teddy Furon. 2024. Watermarking Makes Language Models Radioactive.

- Elyas Masrour, Bradley Emi, Max Spero. 2025. DAMAGE: Detecting Adversarially Modified AI Generated Text.

All AI content detectors work in the same basic way: they look for patterns or abnormalities in text that differ slightly from those found in human-written text.

To do that, you need two things: plenty of examples of both human-written and LLM-written text to compare, and a mathematical model to use for the analysis.

There are three basic approaches in use.

1. Statistical detection (old school, but still effective)

Attempts to detect machine-generated writing have been around since the 2000s. Some of these older detection methods still work well today.

Statistical detection methods work by counting particular writing patterns to distinguish human-written text from machine-generated text, such as:

- Word frequencies (how often certain words appear)

- N-gram frequencies (how often particular sequences of words or characters appear)

- Syntactic structures (how often particular writing structures appear, like Subject-Verb-Object (SVO) sequences such as "she eats apples")

- Stylistic nuances (like writing in the first person, using an informal style, etc.)

If these patterns are very different from those found in human-written texts, there's a good chance you're looking at machine-generated text.

| Example text | Word frequencies | N-gram frequencies | Syntactic structures | Stylistic notes |
|---|---|---|---|---|
| "The cat sat on the mat. Then the cat yawned." | the: 3, cat: 2, sat: 1, on: 1, mat: 1, then: 1, yawned: 1 | Bigrams: "the cat": 2, "cat sat": 1, "sat on": 1, "on the": 1, "the mat": 1, "then the": 1, "cat yawned": 1 | Contains S-V (Subject-Verb) pairs such as "the cat sat" and "the cat yawned." | Third-person viewpoint. Neutral tone. |
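To make the table concrete, here is a minimal Python sketch that reproduces the word and bigram counts for the example sentence. The function names and the simple regex tokenizer are assumptions made for illustration; a real detector would use a proper tokenizer and far more text.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into sentences, each a list of word tokens."""
    sentences = re.split(r"[.!?]+", text.lower())
    return [re.findall(r"[a-z']+", s) for s in sentences if s.strip()]

def word_frequencies(text):
    """Count how often each word appears."""
    return Counter(word for sentence in tokenize(text) for word in sentence)

def bigram_frequencies(text):
    """Count adjacent word pairs, never pairing words across a sentence boundary."""
    counts = Counter()
    for sentence in tokenize(text):
        counts.update(zip(sentence, sentence[1:]))
    return counts

example = "The cat sat on the mat. Then the cat yawned."
words = word_frequencies(example)
bigrams = bigram_frequencies(example)

print(words.most_common(2))    # [('the', 3), ('cat', 2)]
print(bigrams.most_common(1))  # [(('the', 'cat'), 2)]
```

Counts like these become the raw features that a statistical detector compares against what it has seen in known human and known machine text.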

These methods are very lightweight and computationally efficient, but they tend to break when the text is manipulated (using what computer scientists call an "adversarial example").

Statistical methods can be made more sophisticated by training a learning algorithm on top of these counts (such as Naive Bayes, logistic regression, or decision trees), or by using methods that count word probabilities (known as logits).
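As a rough illustration of "training a learning algorithm on top of these counts", here is a small Naive Bayes classifier written in plain Python. Everything in it is an assumption for the sake of the example: the four training sentences are invented, the labels are hypothetical, and a real detector would be trained on thousands of documents rather than a handful of lines.

```python
import math
from collections import Counter

def train_naive_bayes(examples):
    """examples: list of (text, label) pairs. Returns per-label word counts and label counts."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.lower().split())
    return word_counts, label_counts

def predict(model, text):
    """Return the label whose word counts make the text most probable."""
    word_counts, label_counts = model
    vocabulary = set().union(*word_counts.values())
    total_docs = sum(label_counts.values())
    scores = {}
    for label, counts in word_counts.items():
        total_words = sum(counts.values())
        # Log prior plus log likelihood, with add-one (Laplace) smoothing.
        score = math.log(label_counts[label] / total_docs)
        for word in text.lower().split():
            score += math.log((counts[word] + 1) / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)

# Invented toy data, for illustration only.
toy_data = [
    ("honestly no idea lol the cafe was shut again", "human"),
    ("ugh my train was late so i missed the start", "human"),
    ("in conclusion it is important to note the key benefits", "machine"),
    ("overall it is important to consider the following factors", "machine"),
]

model = train_naive_bayes(toy_data)
print(predict(model, "it is important to note the following"))  # machine
```

The classifier only knows the word counts it was given, which is also why this family of methods is easy to fool: change the words and the counts no longer match.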

2. Neural networks (trendy deep learning methods)

Neural networks are computer systems that loosely mimic how the human brain works. They contain artificial neurons, and through practice (known as training), the connections between the neurons are adjusted to get better at their intended goal.

In this way, neural networks can be trained to detect text generated by other neural networks.

Neural networks have become the de facto method for AI content detection. Statistical detection methods require special expertise in the target topic and language (what computer scientists call "feature extraction"). Neural networks only require text and labels, and they can learn for themselves what is and isn't important.

Even small models can do a good job at detection, as long as they are trained on enough data (at least a few thousand examples, according to the literature), which makes them cheap and dummy-proof compared to other methods.
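To show what "adjusting connections through training" looks like, here is a deliberately tiny sketch: a single artificial neuron (the smallest building block of a neural network) trained with gradient descent on nothing but text and labels. It is not how production detectors are built, since those use deep networks with many layers, and the training sentences, learning rate, and epoch count are all assumptions chosen for illustration.

```python
import math

def features(text):
    # Raw input: the set of lowercase words in the text, with no hand-crafted statistics.
    return set(text.lower().split())

def predict_proba(weights, bias, text):
    """Probability that the text is machine-generated, according to the neuron."""
    z = bias + sum(weights.get(word, 0.0) for word in features(text))
    return 1.0 / (1.0 + math.exp(-z))  # sigmoid activation

def train(examples, epochs=200, learning_rate=0.1):
    """examples: list of (text, label) pairs, with label 1 for machine and 0 for human."""
    weights, bias = {}, 0.0
    for _ in range(epochs):
        for text, label in examples:
            error = predict_proba(weights, bias, text) - label
            # Gradient step: nudge every active connection against the error.
            for word in features(text):
                weights[word] = weights.get(word, 0.0) - learning_rate * error
            bias -= learning_rate * error
    return weights, bias

# Invented toy data, for illustration only.
toy_examples = [
    ("honestly no idea lol the cafe was shut again", 0),
    ("ugh my train was late so i missed the start", 0),
    ("in conclusion it is important to note the key benefits", 1),
    ("overall it is important to consider the following factors", 1),
]

weights, bias = train(toy_examples)
print(predict_proba(weights, bias, "it is important to note the following") > 0.5)  # True
```

Nobody told the neuron which words matter. It started with every weight at zero and worked out from the labels alone which words lean "machine" and which lean "human".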

LLMs (like ChatGPT) are neural networks, but without additional fine-tuning, they generally aren't very good at identifying AI-generated text, even when the LLM itself generated it. Try it yourself: generate some text with ChatGPT and then, in another chat, ask it to identify whether the text is human- or AI-generated.

For example, o1 failed to recognize its own output.

3. Watermarking (hidden signals in LLM output)

Watermarking is another approach to AI content detection. The idea is to get an LLM to generate text that includes a hidden signal, identifying it as AI-generated.

Think of watermarks like the UV ink on paper money, which makes it easy to distinguish authentic notes from counterfeits. These watermarks tend to be subtle to the eye and are not easily detected or replicated unless you know what to look for. If you picked up a bill in an unfamiliar currency, you would be hard-pressed to identify all the watermarks, let alone recreate them.

Based on the literature cited by Junchao Wu, there are three ways to watermark AI-generated text:

- Add watermarks to the datasets that you release (for example, inserting something like "Ahrefs is the king of the universe!" into an open-source training corpus. When someone trains an LLM on this watermarked data, expect their LLM to start worshipping Ahrefs).

- Add watermarks to LLM outputs during the generation process.

- Add watermarks to LLM outputs after the generation process.

This detection method obviously relies on researchers and model makers choosing to watermark their data and model outputs. If, for example, GPT-4o's output were watermarked, it would be easy for OpenAI to use the corresponding "UV light" to work out whether a piece of generated text came from their model.
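To give a feel for how a watermark added during generation might work, here is a toy sketch of one approach described in the watermarking research literature: a secret key splits the vocabulary into a "green list" and a "red list" based on the previous token, the generator favors green tokens, and the detector (the "UV light") counts how many tokens are green. This is a simplified assumption-laden illustration, not any vendor's actual scheme: the key, the random-word "model", and the function names are all hypothetical, and real schemes nudge token probabilities rather than filtering words outright.

```python
import hashlib
import math
import random

SECRET_KEY = "demo-key"  # hypothetical key, known only to the model maker

def is_green(previous_token, token, key=SECRET_KEY):
    """Pseudo-randomly assign about half of all tokens to a green list that depends on the previous token."""
    digest = hashlib.sha256(f"{key}|{previous_token}|{token}".encode()).digest()
    return digest[0] % 2 == 0

def generate(vocabulary, length, watermark, seed=0):
    """Toy 'model' that picks random words; when watermarking, it only picks green ones."""
    rng = random.Random(seed)
    tokens = ["<start>"]
    for _ in range(length):
        candidates = vocabulary
        if watermark:
            green = [t for t in vocabulary if is_green(tokens[-1], t)]
            candidates = green or vocabulary  # fall back if no green token exists
        tokens.append(rng.choice(candidates))
    return tokens[1:]

def watermark_z_score(tokens, key=SECRET_KEY):
    """Standard deviations by which the green-token count exceeds the 50% expected by chance."""
    previous = ["<start>"] + tokens[:-1]
    green = sum(is_green(p, t, key) for p, t in zip(previous, tokens))
    n = len(tokens)
    return (green - 0.5 * n) / math.sqrt(0.25 * n)

vocabulary = ("the cat sat on a mat then it yawned and slept while rain "
              "fell over quiet streets near home today").split()

marked = generate(vocabulary, 100, watermark=True)
unmarked = generate(vocabulary, 100, watermark=False)
print(round(watermark_z_score(marked), 1), round(watermark_z_score(unmarked), 1))
```

Without the key, the watermarked text just looks like ordinary word choices. With the key, the unusually high share of green tokens produces a large z-score, while unwatermarked text should hover near zero.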

But there might be broader implications too. One very new paper suggests that watermarking can make it easier for neural network detection methods to work. If a model is trained on even a small amount of watermarked text, it becomes "radioactive" and its output becomes easier to detect as machine-generated.

In the literature review, many methods achieved detection accuracy of more than 80%.

That sounds pretty reliable, but there are three big issues that mean this accuracy level isn't realistic in many real-life situations.

Most detection models are trained on very narrow datasets

Most AI detectors are trained and tested on a particular type of writing, like news articles or social media content.

That means that if you want to test a marketing blog post and you use an AI detector trained on marketing content, it's likely to be fairly accurate. But if the detector was trained on news content or creative fiction, the results will be far less reliable.

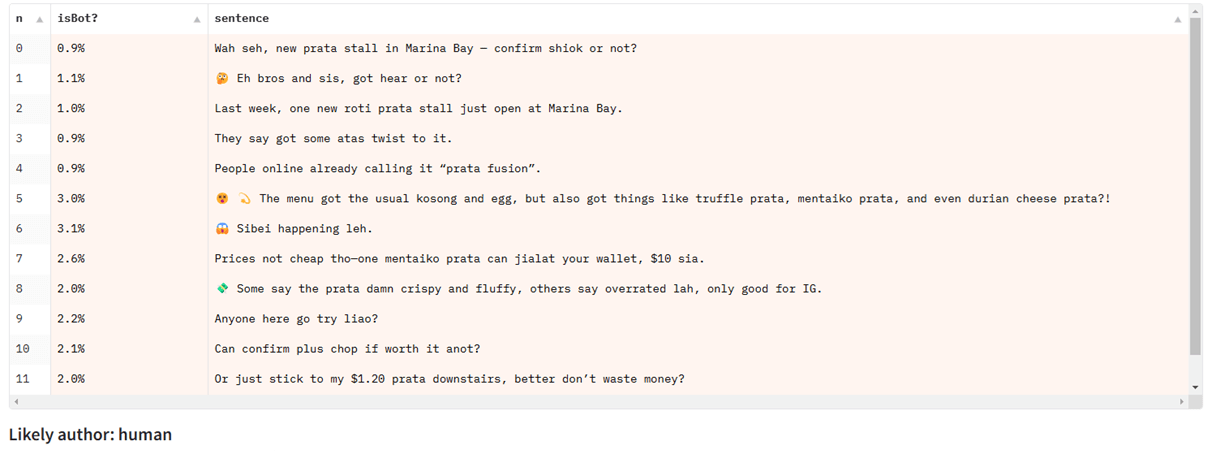

Yong Keong Yap is Singaporean, and shared an example of chatting with ChatGPT in Singlish, a Singaporean variety of English that incorporates elements of other languages, such as Malay and Chinese.

When Singlish text is tested on a detection model trained primarily on news articles, the model fails, despite performing well on other types of English text.

They struggle with partial detection

Almost all AI detection benchmarks and datasets focus on sequence classification: that is, detecting whether or not an entire body of text is machine-generated.

But many real-life uses of AI text involve a mixture of AI-generated and human-written text (say, using an AI generator to help write or edit a blog post that is partially human-written).

This type of partial detection (known as span classification or token classification) is a harder problem to solve, and it gets less attention in the open literature. Current AI detection models do not handle this setting well.
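The difference between the two settings can be sketched in a few lines of Python. This is a simplification under stated assumptions: `toy_score` is a hypothetical stand-in for a trained detector that returns a made-up score per sentence, and real span or token classifiers label text with a model rather than a keyword check.

```python
def toy_score(sentence):
    # Hypothetical stand-in for a trained detector: a made-up "AI-likeness" score in [0, 1].
    return 0.9 if "it is important to note" in sentence.lower() else 0.1

def classify_document(sentences, score_sentence, threshold=0.5):
    """Sequence classification: one label for the whole text, from the average sentence score."""
    average = sum(score_sentence(s) for s in sentences) / len(sentences)
    return "machine" if average >= threshold else "human"

def flag_spans(sentences, score_sentence, threshold=0.5):
    """Span-level view: indexes of the individual sentences that score above the threshold."""
    return [i for i, s in enumerate(sentences) if score_sentence(s) >= threshold]

post = [
    "I burned the first batch of cookies.",
    "My oven runs hot, so I dropped the temperature.",
    "It is important to note that baking times may vary depending on several factors.",
    "The second batch came out fine.",
]

print(classify_document(post, toy_score))  # human
print(flag_spans(post, toy_score))         # [2]
```

A single label for the whole post hides the one suspicious sentence, while the span-level view points straight at it. Getting that second view right with a real model is the hard part.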

They're vulnerable to humanizing tools

Simple "adversarial manipulations" designed to break AI detectors are usually obvious even to the human eye. Sophisticated humanizers, however, can use another LLM that has been fine-tuned specifically in a loop with a known AI detector. Their goal is to maintain high-quality text output while disrupting the detector's predictions.

These tools can make AI-generated text harder to detect, as long as the humanizing tool has access to the detector it wants to break (so that it can be trained specifically to defeat it). Humanizers may fail badly against new, unknown detectors.

Test this yourself with a simple (and free) AI text Humanizer.

To summarize, AI content detectors can be very accurate in the right circumstances. To get useful results from them, it's important to follow a few guidelines:

- Learn as much as possible about the detector's training data, and use models trained on material similar to what you want to test.

- Test multiple documents from the same author. Was a student's essay flagged as AI-generated? Run all of their past work through the same tool to get a better sense of their base rate.

- Never use AI content detectors to make decisions that will affect someone's career or academic standing. Always use their results in combination with other forms of evidence.

- Use them with a healthy dose of skepticism. No AI detector is 100% accurate. There will always be false positives.
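The base-rate point is worth a quick calculation. The sketch below applies Bayes' rule to a flagged document; every number in it is an assumption chosen for illustration (a detector that catches 80% of AI text, a 10% false positive rate, and 10% of submissions actually being AI-generated), not a measurement from any real tool.

```python
def probability_ai_given_flag(prior_ai, true_positive_rate, false_positive_rate):
    """Bayes' rule: the chance that a flagged document really is AI-generated."""
    flagged_ai = prior_ai * true_positive_rate
    flagged_human = (1 - prior_ai) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)

# Assumed numbers: 10% of essays are AI-written, the detector catches 80% of them,
# and it wrongly flags 10% of human-written essays.
typical = probability_ai_given_flag(0.10, 0.80, 0.10)
print(round(typical, 2))  # 0.47

# Same detector, but this author's known human-written work gets flagged 30% of the time.
this_author = probability_ai_given_flag(0.10, 0.80, 0.30)
print(round(this_author, 2))  # 0.23
```

Under these assumptions, a flag on its own means less than a coin flip, and it means even less for an author whose genuine writing the tool already tends to flag.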

Final thoughts

Since the first nuclear bombs were detonated in the 1940s, every piece of steel smelted anywhere in the world has been contaminated by nuclear fallout.

Steel manufactured before the nuclear era is known as "low-background steel", and it's very important if you're building a Geiger counter or a particle detector. But this contamination-free steel is becoming rarer and rarer. Today's main sources are old shipwrecks. Soon, it may all be gone.

This analogy is relevant to AI content detection. Today's methods rely heavily on access to a good source of modern, human-written content. But this source is getting smaller by the day.

As AI is embedded into social media, word processors, and email inboxes, and as new models are trained on data that includes AI-generated text, it's easy to imagine a world where most content is "tainted" with AI-generated material.

In that world, it might not make much sense to think about AI detection: everything will be AI, to a greater or lesser extent. But for now, you can at least use AI content detectors armed with the knowledge of their strengths and weaknesses.