This publish is cowritten with Altay Sansal and Alejandro Valenciano from TGS.

TGS, a geoscience information supplier for the vitality sector, helps corporations’ exploration and manufacturing workflows with superior seismic basis fashions (SFMs). These fashions analyze complicated 3D seismic information to establish geological buildings very important for vitality exploration. To assist improve their next-generation fashions as a part of their AWS infrastructure modernization, TGS partnered with the AWS Generative AI Innovation Middle (GenAIIC) to optimize their SFM coaching infrastructure.

This publish describes how TGS achieved near-linear scaling for distributed coaching and expanded context home windows for his or her Imaginative and prescient Transformer-based SFM utilizing Amazon SageMaker HyperPod. This joint answer minimize coaching time from 6 months to simply 5 days whereas enabling evaluation of seismic volumes bigger than beforehand doable.

Addressing seismic basis mannequin coaching challenges

TGS’s SFM makes use of a Imaginative and prescient Transformer (ViT) structure with Masked AutoEncoder (MAE) coaching designed by the TGS crew to investigate 3D seismic information. Scaling such fashions presents a number of challenges:

- Knowledge scale and complexity – TGS works with massive volumes of proprietary 3D seismic information saved in domain-specific codecs. The sheer quantity and construction of this information required environment friendly streaming methods to keep up excessive throughput and assist stop GPU idle time throughout coaching.

- Coaching effectivity – Coaching massive FMs on 3D volumetric information is computationally intensive. Accelerating coaching cycles would allow TGS to include new information extra steadily and iterate on mannequin enhancements sooner, delivering extra worth to their shoppers.

- Expanded analytical capabilities – The geological context a mannequin can analyze depends upon how a lot 3D quantity it may possibly course of without delay. Increasing this functionality would permit the fashions to seize each native particulars and broader geological patterns concurrently.

Understanding these challenges highlights the necessity for a complete method to distributed coaching and infrastructure optimization. The AWS GenAIIC partnered with TGS to develop a complete answer addressing these challenges.

Resolution overview

The collaboration between TGS and the AWS GenAIIC centered on three key areas: establishing an environment friendly information pipeline, optimizing distributed coaching throughout a number of nodes, and increasing the mannequin’s context window to investigate bigger geological volumes. The next diagram illustrates the answer structure.

The answer makes use of SageMaker HyperPod to assist present a resilient, scalable coaching infrastructure with automated well being monitoring and checkpoint administration. The SageMaker HyperPod cluster is configured with AWS Identification and Entry Administration (IAM) execution roles scoped to the minimal permissions required for coaching operations, deployed inside a digital non-public cloud (VPC) with community isolation and safety teams proscribing communication to approved coaching nodes. Terabytes of coaching information streams instantly from Amazon Easy Storage Service (Amazon S3), assuaging the necessity for intermediate storage layers whereas sustaining excessive throughput. AWS CloudTrail logs API calls to Amazon S3 and SageMaker providers, and Amazon S3 entry logging is enabled on coaching information buckets to offer an in depth audit path of information entry requests. The distributed coaching framework makes use of superior parallelization methods to effectively scale throughout a number of nodes, and context parallelism strategies allow the mannequin to course of considerably bigger 3D volumes than beforehand doable.

The ultimate cluster configuration consisted of 16 Amazon Elastic Compute Cloud (Amazon EC2) P5 situations for the employee nodes built-in via the SageMaker AI versatile coaching plans, every containing:

- 8 NVIDIA H200 GPUs with 141GB HBM3e reminiscence per GPU

- 192 vCPUs

- 2048 GB system RAM

- 3200 Gbps EFAv3 networking for ultra-low latency communication

Optimizing the coaching information pipeline

TGS’s coaching dataset consists of 3D seismic volumes saved within the TGS-developed MDIO format—an open supply format constructed on Zarr arrays designed for large-scale scientific information within the cloud. Such volumes can include billions of information factors representing underground geological buildings.

Choosing the proper storage method

The crew evaluated two approaches for delivering information to coaching GPUs:

- Amazon FSx for Lustre – Copy information from Amazon S3 to a high-speed distributed file system that the nodes learn from. This method offers sub-millisecond latency however requires pre-loading and provisioned storage capability.

- Streaming instantly from Amazon S3 – Stream information instantly from Amazon S3 utilizing MDIO’s native capabilities with multi-threaded libraries, opening a number of concurrent connections per node.

Selecting streaming instantly from Amazon S3

The important thing architectural distinction lies in how throughput scales with the cluster. With streaming instantly from Amazon S3, every coaching node creates unbiased Amazon S3 connections, so combination throughput can scale linearly. With Amazon FSx for Lustre, the nodes share a single file system whose throughput is tied to provisioned storage capability. Utilizing Amazon FSx along with Amazon S3 requires solely a small Amazon FSx storage quantity, which limits your complete cluster to that quantity’s throughput, making a bottleneck because the cluster grows.

Complete testing and price evaluation revealed streaming from Amazon S3 instantly because the optimum alternative for this configuration:

- Efficiency – Achieved 4–5 GBps sustained throughput per node utilizing a number of information loader processes with pre-fetching over HTTPS endpoints (TLS 1.2)—adequate to completely make the most of the GPUs.

- Price effectivity – Streaming from Amazon S3 alleviated the necessity for Amazon FSx provisioning, lowering storage infrastructure prices by over 90% whereas serving to ship 64-80 GBps cluster-wide throughput. The Amazon S3 pay-per-use mannequin was extra economical than provisioning high-throughput Amazon FSx capability.

- Higher scaling – Streaming from Amazon S3 instantly scales naturally—every node brings its personal connection bandwidth, avoiding the necessity for complicated capability planning.

- Operational simplicity – No intermediate storage to provision, handle, or synchronize.

The crew optimized Amazon S3 connection pooling and applied parallel information loading to maintain excessive throughput throughout the 16 nodes.

Choosing the distributed coaching framework

When coaching massive fashions throughout a number of GPUs, the mannequin’s parameters, gradients, and optimizer states have to be distributed throughout units. The crew evaluated totally different distributed coaching approaches to search out the optimum steadiness between reminiscence effectivity and coaching throughput:

- ZeRO-2 (Zero Redundancy Optimizer Stage 2) – This method partitions gradients and optimizer states throughout GPUs whereas maintaining a full copy of mannequin parameters on every GPU. This helps cut back reminiscence utilization whereas sustaining quick communication, as a result of every GPU can instantly entry the parameters in the course of the ahead go with out ready for information from different GPUs.

- ZeRO-3 – This method goes additional by additionally partitioning mannequin parameters throughout GPUs. Though this helps maximize reminiscence effectivity (enabling bigger fashions), it requires extra frequent communication between GPUs to assemble parameters throughout computation, which may cut back throughput.

- FSDP2 (Absolutely Sharded Knowledge Parallel v2) – PyTorch’s native method equally shards parameters, gradients, and optimizer states. It presents tight integration with PyTorch however entails related communication trade-offs as ZeRO-3.

Complete testing revealed DeepSpeed ZeRO-2 because the optimum framework for this configuration, delivering robust efficiency whereas effectively managing reminiscence:

- ZeRO-2 – 1,974 samples per second (applied)

- FSDP2 – 1,833 samples per second

- ZeRO-3 – 869 samples per second

This framework alternative offered the inspiration for reaching near-linear scaling throughout a number of nodes. The mix of those three key optimizations helped ship the dramatic coaching acceleration:

- Environment friendly distributed coaching – DeepSpeed ZeRO-2 enabled near-linear scaling throughout 128 GPUs (16 nodes × 8 GPUs)

- Excessive-throughput information pipeline – Streaming from Amazon S3 instantly sustained 64–80 GBps combination throughput throughout the cluster

Collectively, these enhancements helped cut back coaching time from 6 months to five days—enabling TGS to iterate on mannequin enhancements weekly relatively than semi-annually.

Increasing analytical capabilities

One of the vital vital achievements was increasing the mannequin’s subject of view—how a lot 3D geological quantity it may possibly analyze concurrently. A bigger context window permits the mannequin to seize each superb particulars (small fractures) and broad patterns (basin-wide fault methods) in a single go, serving to present insights that have been beforehand undetectable inside the constraints of smaller evaluation home windows for TGS’s shoppers. The implementation by the TGS and AWS groups concerned adapting the next superior methods to allow ViTs to course of considerably bigger 3D seismic volumes:

- Ring consideration implementation – Every GPU processes a portion of the enter sequence whereas circulating key-value pairs to neighboring GPUs, regularly accumulating consideration outcomes throughout the distributed system. PyTorch offers an API that makes this easy:

- Dynamic masks ratio adjustment – The MAE coaching method required ensuring unmasked patches plus classification tokens are evenly divisible throughout units, necessitating adaptive masking methods.

- Decoder sequence administration – The decoder reconstructs the total picture by processing each the unmasked patches from the encoder and the masked patches. This creates a distinct sequence size that additionally must be divisible by the variety of GPUs.

The previous implementation enabled processing of considerably bigger 3D seismic volumes as illustrated within the following desk.

| Metric | Earlier (Baseline) | With Context Parallelism |

| Most enter measurement | 640 × 640 × 1,024 voxels | 1,536 × 1,536 × 2,048 voxels |

| Context size | 102,400 tokens | 1,170,000 tokens |

| Quantity enhance | 1× | 4.5× |

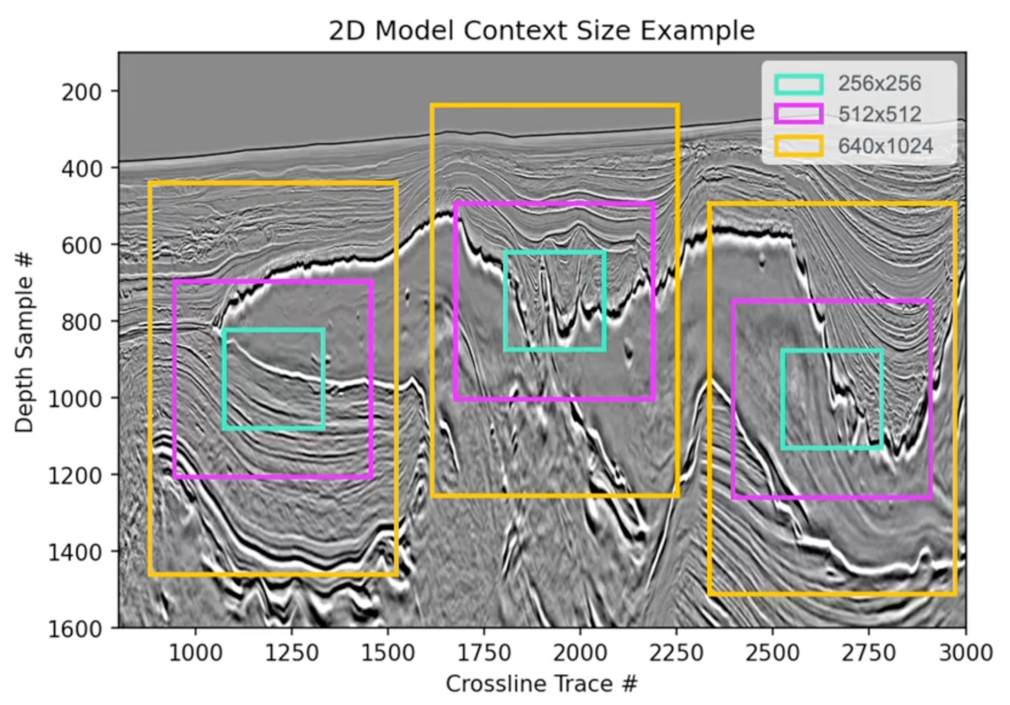

The next determine offers an instance of 2D mannequin context measurement.

This growth permits TGS’s fashions to seize geological options throughout broader spatial contexts, serving to improve the analytical capabilities they will provide to shoppers.

Outcomes and affect

The collaboration between TGS and the AWS GenAIIC delivered substantial enhancements throughout a number of dimensions:

- Important coaching acceleration – The optimized distributed coaching structure diminished coaching time from 6 months to five days—an approximate 36-fold speedup, enabling TGS to iterate sooner and incorporate new geological information extra steadily into their fashions.

- Close to-linear scaling – The answer demonstrated robust scaling effectivity from single-node to 16-node configurations, reaching roughly 90–95% parallel effectivity with minimal efficiency degradation because the cluster measurement elevated.

- Expanded analytical capabilities – The context parallelism implementation permits coaching on bigger 3D volumes, permitting fashions to seize geological options throughout broader spatial contexts.

- Manufacturing-ready, cost-efficient infrastructure – The SageMaker HyperPod primarily based answer with streaming from Amazon S3 helps present a cheap basis that scales effectively as coaching necessities develop, whereas serving to ship the resilience, flexibility, and operational effectivity wanted for manufacturing AI workflows.

These enhancements set up a powerful basis for TGS’s AI-powered analytics system, delivering sooner mannequin iteration cycles and broader geological context per evaluation to shoppers whereas serving to defend TGS’s useful information property.

Classes realized and greatest practices

A number of key classes emerged from this collaboration which may profit different organizations working with large-scale 3D information and distributed coaching:

- Systematic scaling method – Beginning with a single-node baseline institution earlier than progressively increasing to bigger clusters enabled systematic optimization at every stage whereas managing prices successfully.

- Knowledge pipeline optimization is important – For data-intensive workloads, considerate information pipeline design can present robust efficiency. Direct streaming from object storage with applicable parallelization and prefetching delivered the throughput wanted with out complicated intermediate storage layers.

- Batch measurement tuning is nuanced – Growing batch measurement doesn’t at all times enhance throughput. The crew discovered excessively massive batch measurement can create bottlenecks in making ready and transferring information to GPUs. By way of systematic testing at totally different scales, the crew recognized the purpose the place throughput plateaued, indicating the information loading pipeline had turn out to be the limiting issue relatively than GPU computation. This optimum steadiness maximized coaching effectivity with out over-provisioning sources.

- Framework choice depends upon your particular necessities – Completely different distributed coaching frameworks contain trade-offs between reminiscence effectivity and communication overhead. The optimum alternative depends upon mannequin measurement, {hardware} traits, and scaling necessities.

- Incremental validation – Testing configurations at smaller scales earlier than increasing to full manufacturing clusters helped establish optimum settings whereas controlling prices in the course of the growth section.

Conclusion

By partnering with the AWS GenAIIC, TGS has established an optimized, scalable infrastructure for coaching SFMs on AWS. The answer helps speed up coaching cycles whereas increasing the fashions’ analytical capabilities, serving to TGS ship enhanced subsurface analytics to shoppers within the vitality sector. The technical improvements developed throughout this collaboration—notably the variation of context parallelism to ViT architectures for 3D volumetric information—exhibit the potential for making use of superior AI methods to specialised scientific domains. As TGS continues to increase its subsurface AI system and broader AI capabilities, this basis can assist future enhancements akin to multi-modal integration and temporal evaluation.

To be taught extra about scaling your individual FM coaching workloads, discover SageMaker HyperPod for resilient distributed coaching infrastructure, or assessment the distributed coaching greatest practices within the SageMaker documentation. For organizations keen on related collaborations, the AWS Generative AI Innovation Middle companions with prospects to assist speed up their AI initiatives.

Acknowledgement

Particular due to Andy Lapastora, Bingchen Liu, Prashanth Ramaswamy, Rohit Thekkanal, Jared Kramer, Arun Ramanathan and Roy Allela for his or her contribution.

Concerning the authors