Video content is now everywhere, from security surveillance and media production to social platforms and enterprise communications. However, extracting meaningful insights from large volumes of video remains a major challenge. Organizations need solutions that can understand not only what appears in a video, but also the context, narrative, and underlying meaning of the content.

In this post, we explore how the multimodal foundation models (FMs) of Amazon Bedrock enable scalable video understanding through three distinct architectural approaches. Each approach is designed for different use cases and cost-performance trade-offs. The complete solution is available as an open source AWS sample on GitHub.

The evolution of video analysis

Traditional video analysis approaches rely on manual review or basic computer vision techniques that detect predefined patterns. While functional, these methods face significant limitations:

- Scale constraints: Manual review is time-consuming and expensive

- Limited flexibility: Rule-based systems can’t adapt to new scenarios

- Context blindness: Traditional computer vision (CV) lacks semantic understanding

- Integration complexity: Difficult to incorporate into modern applications

The emergence of multimodal foundation models on Amazon Bedrock changes this paradigm. These models can process visual and textual information together. This allows them to understand scenes, generate natural language descriptions, answer questions about video content, and detect nuanced events that would be difficult to define programmatically.

Three approaches to video understanding

Understanding video content is inherently complex, combining visual, auditory, and temporal information that must be analyzed together for meaningful insights. Different use cases, such as media scene analysis, ad break detection, IP camera monitoring, or social media moderation, require distinct workflows with varying cost, accuracy, and latency trade-offs. This solution provides three distinct workflows, each using different video extraction methods optimized for specific scenarios.

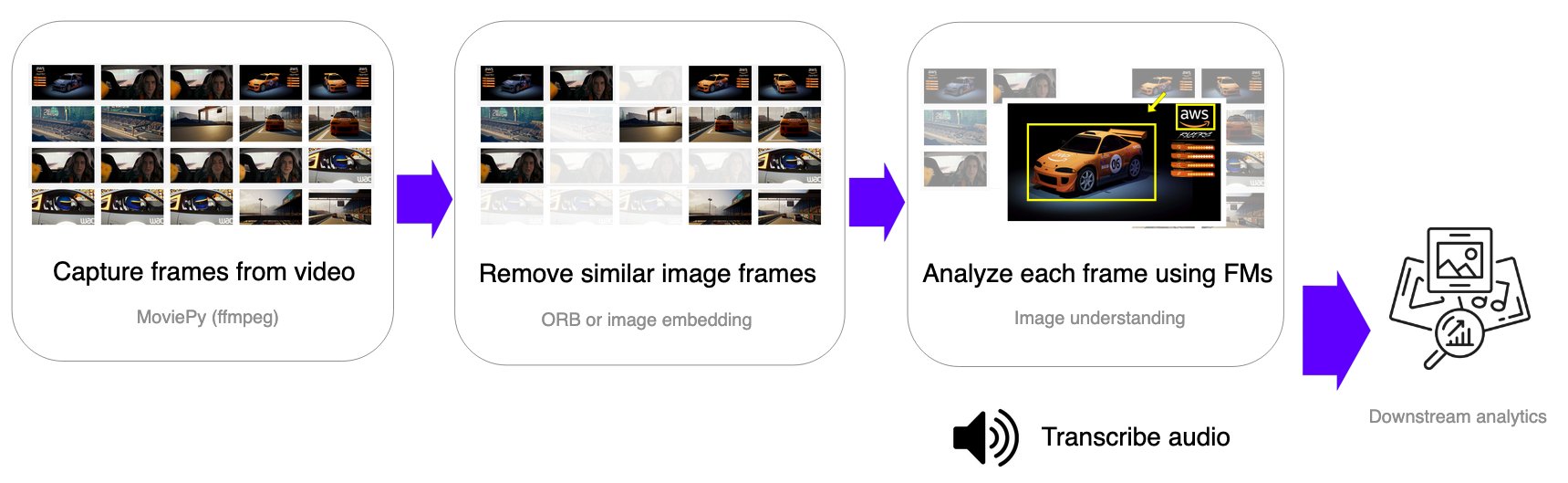

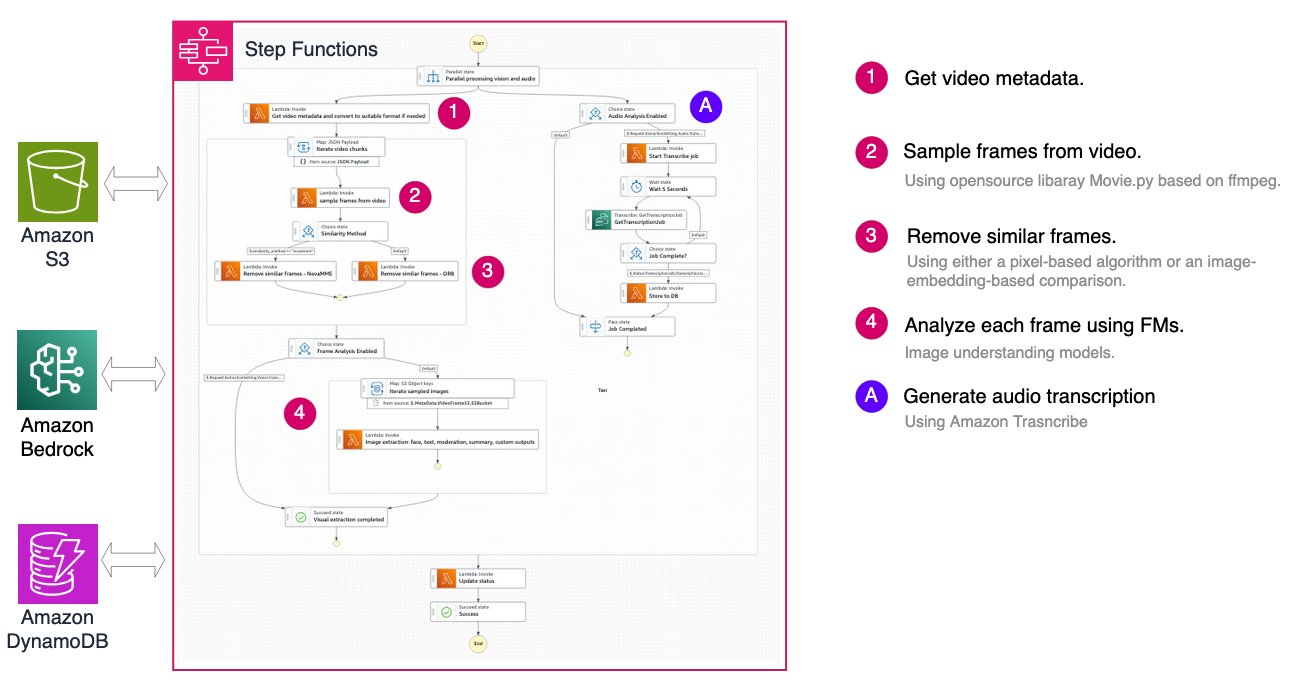

Frame-based workflow: precision at scale

The frame-based approach samples image frames at fixed intervals, removes similar or redundant frames, and applies image understanding foundation models to extract visual information at the frame level. Audio transcription is performed separately using Amazon Transcribe.

This workflow is ideal for:

- Security and surveillance: Detect specific conditions or events across time

- Quality assurance: Monitor manufacturing or operational processes

- Compliance monitoring: Verify adherence to safety protocols

The architecture uses AWS Step Functions to orchestrate the entire pipeline:

Smart sampling: optimizing cost and quality

A key feature of the frame-based workflow is intelligent frame deduplication, which significantly reduces processing costs by removing redundant frames while preserving visual information. The solution provides two distinct similarity comparison methods.

Nova Multimodal Embeddings (MME) Comparison uses the Amazon Nova multimodal embeddings model to encode each frame as a 256-dimensional vector, then computes the cosine distance between consecutive frames. Frames whose distance falls below the threshold (default 0.2, where lower values indicate higher similarity) are removed. This approach excels at semantic understanding of image content, remaining robust to minor variations in lighting and perspective while capturing high-level visual concepts. However, it incurs additional Amazon Bedrock API costs for embedding generation and adds slightly higher latency per frame. This method is recommended for content where semantic similarity matters more than pixel-level differences, such as detecting scene changes or identifying unique moments.
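The embedding-based deduplication described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the solution's actual code: the embeddings are taken as already computed (no Amazon Bedrock call is made), the function names are hypothetical, and each frame is compared against the most recently kept frame, which is one possible reading of "consecutive frames."

```python
import math
from typing import List, Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Return 1 - cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 1.0  # treat a zero vector as maximally dissimilar
    return 1.0 - dot / (norm_a * norm_b)


def deduplicate_frames(
    embeddings: List[Sequence[float]], threshold: float = 0.2
) -> List[int]:
    """Return the indices of frames to keep.

    A frame is dropped when its cosine distance to the most recently
    kept frame is below the threshold (assumption: comparison is made
    against the last kept frame, not the immediately preceding one).
    """
    kept: List[int] = []
    for index, embedding in enumerate(embeddings):
        if not kept or cosine_distance(embeddings[kept[-1]], embedding) >= threshold:
            kept.append(index)
    return kept


# Toy 2-dimensional vectors stand in for real 256-dimensional embeddings.
frames = [[1.0, 0.0], [0.99, 0.05], [0.0, 1.0], [0.01, 1.0]]
kept_indices = deduplicate_frames(frames)
```

Lowering the threshold keeps more frames (only near-identical frames are dropped), while raising it removes more aggressively.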

OpenCV ORB (Oriented FAST and Rotated BRIEF) takes a computer vision approach, using feature detection to identify and match key points between consecutive frames without requiring external API calls. ORB detects key points and computes binary descriptors for each frame, and the similarity score is calculated as the ratio of matched features to total key points. With a default threshold of 0.325 (where higher values indicate higher similarity), this method offers fast processing with minimal latency and no additional API costs. The rotation-invariant feature matching makes it excellent for detecting camera movement and frame transitions. However, it can be sensitive to significant lighting changes and may not capture semantic similarity as effectively as embedding-based approaches. This method is recommended for static camera scenarios like surveillance footage, or cost-sensitive applications where pixel-level similarity is sufficient.
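To make the scoring rule concrete, here is a small sketch of the "matched features over total key points" ratio. It is illustrative only and does not call OpenCV: binary descriptors are represented as Python integers, matching is a brute-force nearest neighbor on Hamming distance, and the `max_distance` cutoff, the choice of the larger descriptor set as the denominator, and the function names are all assumptions rather than details taken from the solution.

```python
from typing import List


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors stored as ints."""
    return bin(a ^ b).count("1")


def orb_style_similarity(
    desc_a: List[int], desc_b: List[int], max_distance: int = 40
) -> float:
    """Ratio of matched descriptors to total key points.

    Assumptions: a descriptor in desc_a counts as matched when its nearest
    neighbor in desc_b is within max_distance bits, and "total key points"
    is the size of the larger descriptor set.
    """
    if not desc_a or not desc_b:
        return 0.0
    matches = 0
    for descriptor in desc_a:
        best = min(hamming(descriptor, other) for other in desc_b)
        if best <= max_distance:
            matches += 1
    return matches / max(len(desc_a), len(desc_b))


def is_redundant(
    desc_prev: List[int], desc_curr: List[int], threshold: float = 0.325
) -> bool:
    """Treat the current frame as redundant when similarity reaches the threshold."""
    return orb_style_similarity(desc_prev, desc_curr) >= threshold


# Tiny descriptors for illustration; real ORB descriptors are 256 bits long.
previous_frame = [0b1111, 0b0000, 0xFF00]
current_frame = [0b1110, 0b0001, 0x00FF]
score = orb_style_similarity(previous_frame, current_frame, max_distance=2)
```

In a real pipeline the descriptors would come from a feature detector such as OpenCV's ORB implementation; the sketch only shows how a ratio score and threshold could turn matches into a keep-or-drop decision.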

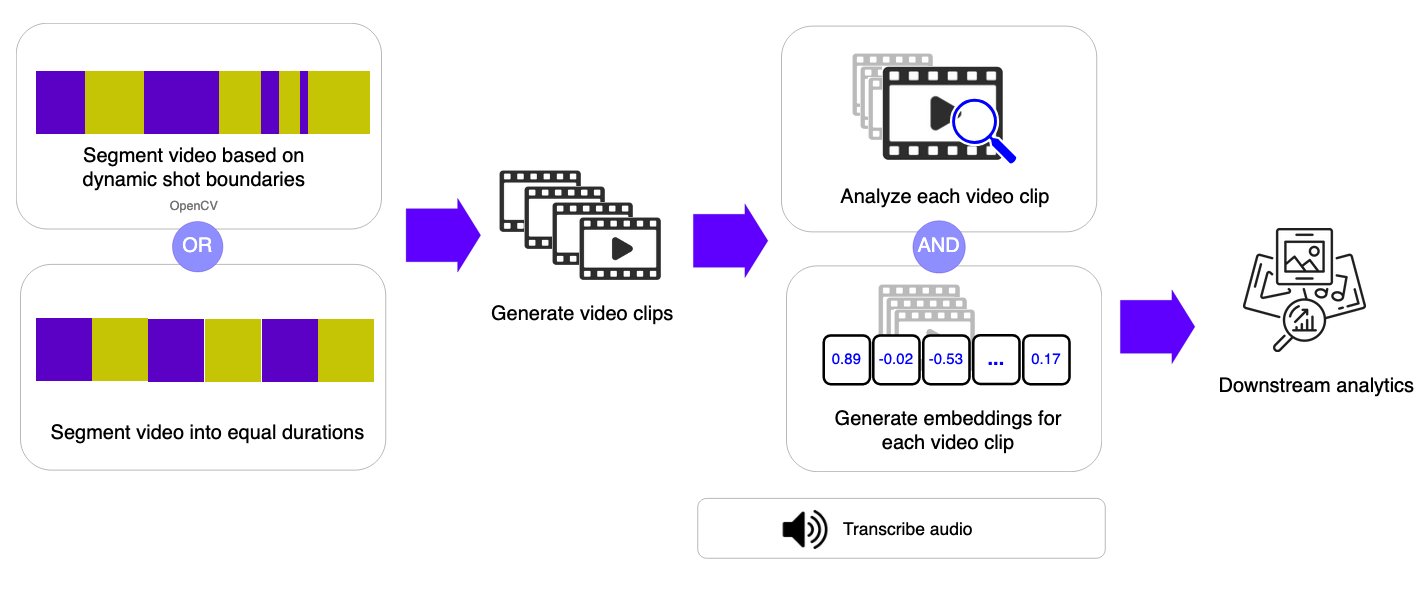

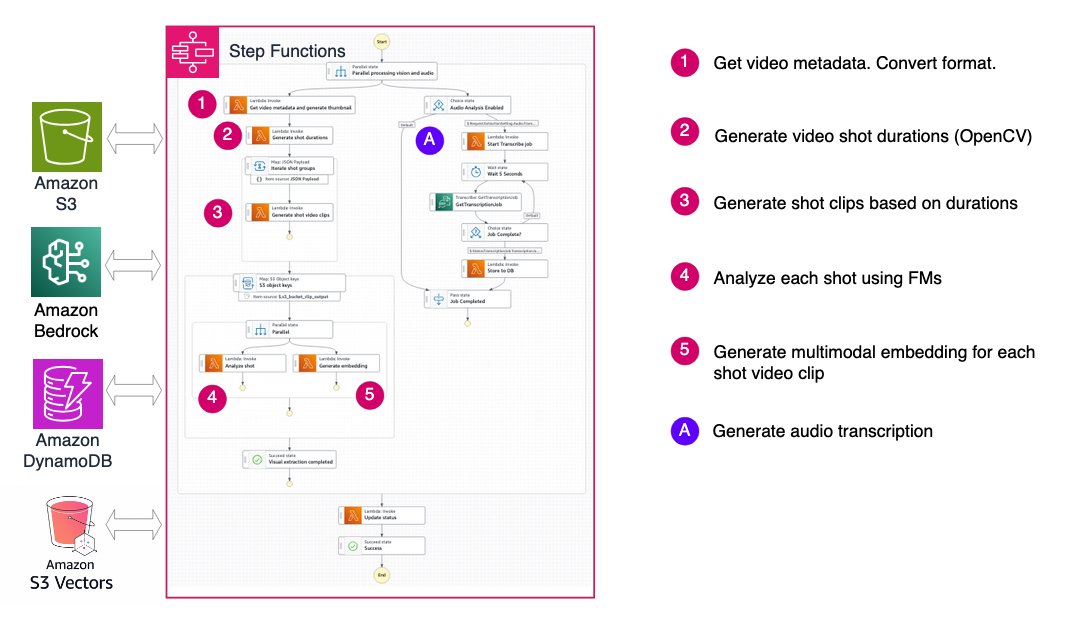

Shot-based workflow: understanding narrative flow

Instead of sampling individual frames, the shot-based workflow segments video into short clips (shots) or fixed-duration segments and applies video understanding foundation models to each segment. This approach captures temporal context within each shot while maintaining the flexibility to process longer videos.

By generating both semantic labels and embeddings for each shot, this method enables efficient video search and retrieval while balancing accuracy and flexibility. The architecture groups shots into batches of 10 for parallel processing in subsequent steps, improving throughput while managing AWS Lambda concurrency limits.
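The batching step mentioned above can be sketched in a few lines. This is a hypothetical helper, not the solution's code: `batch_shots` and the shot identifiers are illustrative names, and only the batch size of 10 comes from the text.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def batch_shots(shots: Sequence[T], batch_size: int = 10) -> List[List[T]]:
    """Split a sequence of shots into consecutive batches of at most batch_size."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return [
        list(shots[start:start + batch_size])
        for start in range(0, len(shots), batch_size)
    ]


# 25 placeholder shot identifiers produce batches of 10, 10, and 5.
shot_ids = [f"shot-{number:03d}" for number in range(25)]
batches = batch_shots(shot_ids)
batch_sizes = [len(batch) for batch in batches]
```

Each batch can then be handed to one parallel worker, so the number of concurrent invocations grows with the number of batches rather than the number of shots.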

This workflow excels at:

- Media production: Analyze footage for chapter markers and scene descriptions

- Content cataloging: Automatically tag and organize video libraries

- Highlight generation: Identify key moments in long-form content

Video segmentation: two approaches

The shot-based workflow provides flexible segmentation options to match different video characteristics and use cases. The system downloads the video file from Amazon Simple Storage Service (Amazon S3) to temporary storage in AWS Lambda, then applies the selected segmentation algorithm based on the configuration parameters.

OpenCV Scene Detection automatically divides a video into segments based on visual changes in the content. This approach uses the PySceneDetect library to detect transitions such as cuts, camera changes, or significant shifts in visual content.

By identifying natural scene boundaries, the system keeps related moments grouped together. This makes the method particularly effective for edited or narrative-driven videos such as movies, TV shows, presentations, and vlogs, where scenes represent meaningful units of content. Because segmentation follows the structure of the video itself, segment lengths can vary depending on the pacing and editing style.

Fixed-Duration Segmentation divides a video into equal-length time intervals, regardless of what’s happening in the video.

Each segment covers a consistent duration (for example, 10 seconds), creating predictable and uniform clips. This approach simplifies processing and makes processing time and cost easier to estimate. Although it might split scenes mid-action, fixed-duration segmentation works well for continuous recordings such as surveillance footage, sports events, or live streams, where regular time sampling is more important than preserving narrative boundaries.
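As a rough illustration of fixed-duration segmentation, the sketch below computes segment boundaries from a video's total duration. The function name and the handling of the final, shorter segment are assumptions; the 10-second default mirrors the example in the text.

```python
import math
from typing import List, Tuple


def fixed_duration_segments(
    video_duration: float, segment_length: float = 10.0
) -> List[Tuple[float, float]]:
    """Return (start, end) times in seconds for equal-length segments.

    The last segment is shortened so it ends exactly at the video's end.
    """
    if segment_length <= 0:
        raise ValueError("segment_length must be positive")
    if video_duration <= 0:
        return []
    count = math.ceil(video_duration / segment_length)
    segments = []
    for index in range(count):
        # Multiply instead of accumulating to avoid floating-point drift.
        start = index * segment_length
        end = min(start + segment_length, video_duration)
        segments.append((start, end))
    return segments


# A 25-second video yields two full segments and one 5-second remainder.
segments = fixed_duration_segments(25.0)
```

Because the segment count depends only on duration, the number of model invocations for a video can be estimated before any processing starts.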

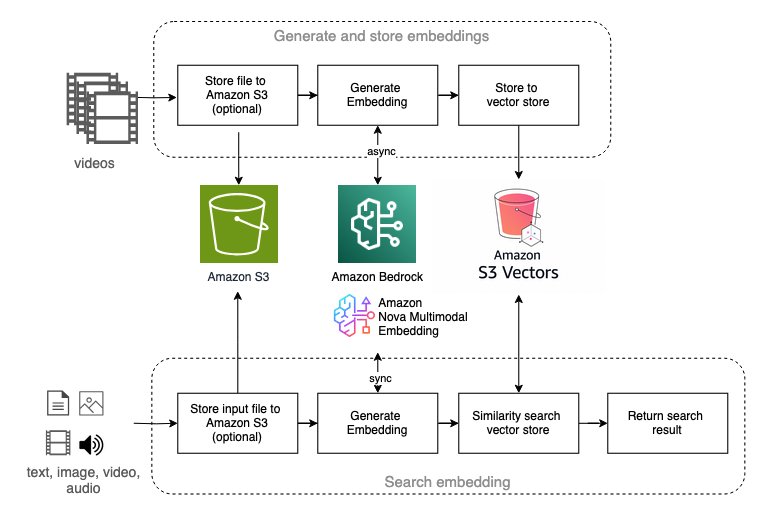

Multimodal embedding: semantic video search

Multimodal embedding is an emerging approach to video understanding, particularly powerful for semantic video search applications. The solution offers workflows using the Amazon Nova Multimodal Embeddings and TwelveLabs Marengo models available on Amazon Bedrock.

These workflows allow:

- Natural language search: Find video segments using text queries

- Visual similarity search: Locate content using reference images

- Cross-modal retrieval: Bridge the gap between text and visual content

The architecture supports both embedding models with a unified interface:

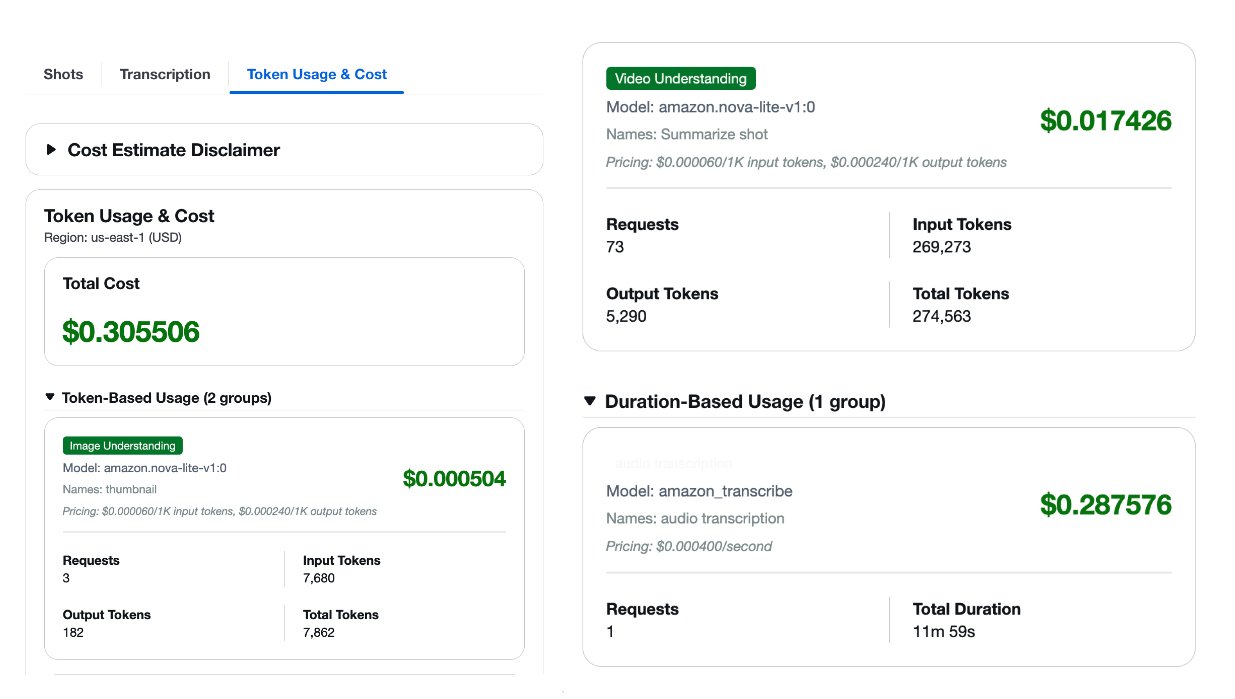
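The search pattern behind these workflows can be sketched as a nearest-neighbor lookup in a shared embedding space. The example below is a simplified illustration, not the solution's implementation: embeddings are assumed to be precomputed, the segment identifiers and function names are hypothetical, and a linear scan stands in for the vector search backend.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def search_segments(
    query_embedding: Sequence[float],
    segment_embeddings: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Rank video segments by similarity to the query embedding, best first."""
    scored = [
        (segment_id, cosine_similarity(query_embedding, embedding))
        for segment_id, embedding in segment_embeddings.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy 2-dimensional vectors; the query could come from text or an image.
index = {
    "segment-a": [1.0, 0.0],
    "segment-b": [0.0, 1.0],
    "segment-c": [0.7, 0.7],
}
results = search_segments([1.0, 0.1], index, top_k=2)
result_ids = [segment_id for segment_id, _ in results]
```

Because text, image, and video embeddings live in the same space, the same ranking function serves natural language search, visual similarity search, and cross-modal retrieval.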

Understanding cost and performance trade-offs

One of the key challenges in production video analysis is managing costs while maintaining quality. The solution provides built-in token usage tracking and cost estimation to help you make informed decisions about model selection and workflow configuration.

The previous screenshot shows a sample cost estimate generated by the solution to illustrate the format. It shouldn’t be used as a pricing source. For each processed video, you receive a detailed cost breakdown by model type, covering Amazon Bedrock foundation models and Amazon Transcribe for audio transcription. With this visibility, you can tune your configuration based on your specific requirements and budget constraints.
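A per-model cost breakdown of this kind can be approximated from token usage records, as in the sketch below. Everything here is hypothetical: the model names, the record layout, and especially the rates, which are made-up placeholders and not AWS pricing. Consult the official pricing pages for real figures.

```python
from typing import Dict, List

# Placeholder rates in USD per 1,000 tokens. These are invented numbers
# for illustration only and do not reflect actual Amazon Bedrock pricing.
HYPOTHETICAL_RATES: Dict[str, Dict[str, float]] = {
    "example-image-model": {"input": 0.001, "output": 0.004},
    "example-video-model": {"input": 0.0005, "output": 0.002},
}


def estimate_cost(
    usage_records: List[Dict], rates: Dict[str, Dict[str, float]]
) -> Dict[str, float]:
    """Sum estimated cost per model from input and output token counts."""
    totals: Dict[str, float] = {}
    for record in usage_records:
        rate = rates[record["model"]]
        cost = (
            record["input_tokens"] / 1000 * rate["input"]
            + record["output_tokens"] / 1000 * rate["output"]
        )
        totals[record["model"]] = totals.get(record["model"], 0.0) + cost
    return totals


usage = [
    {"model": "example-image-model", "input_tokens": 2000, "output_tokens": 500},
    {"model": "example-image-model", "input_tokens": 1000, "output_tokens": 0},
    {"model": "example-video-model", "input_tokens": 10000, "output_tokens": 1000},
]
breakdown = estimate_cost(usage, HYPOTHETICAL_RATES)
total_cost = sum(breakdown.values())
```

A duration-based term for audio transcription could be added to the same breakdown in a similar way.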

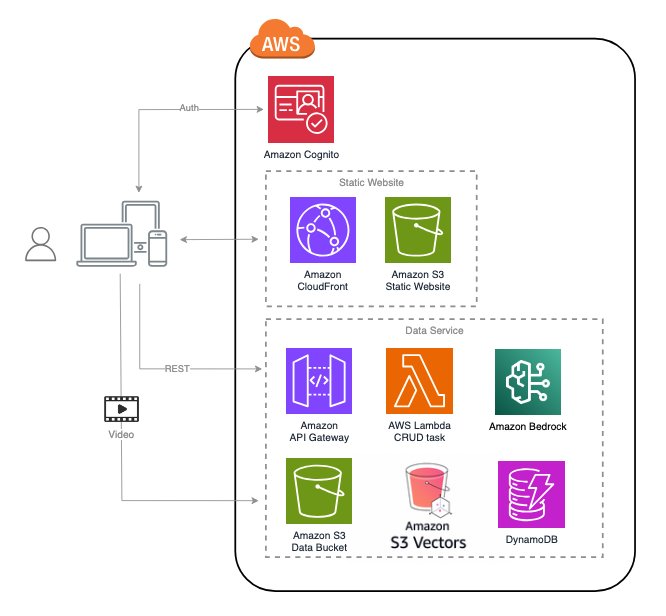

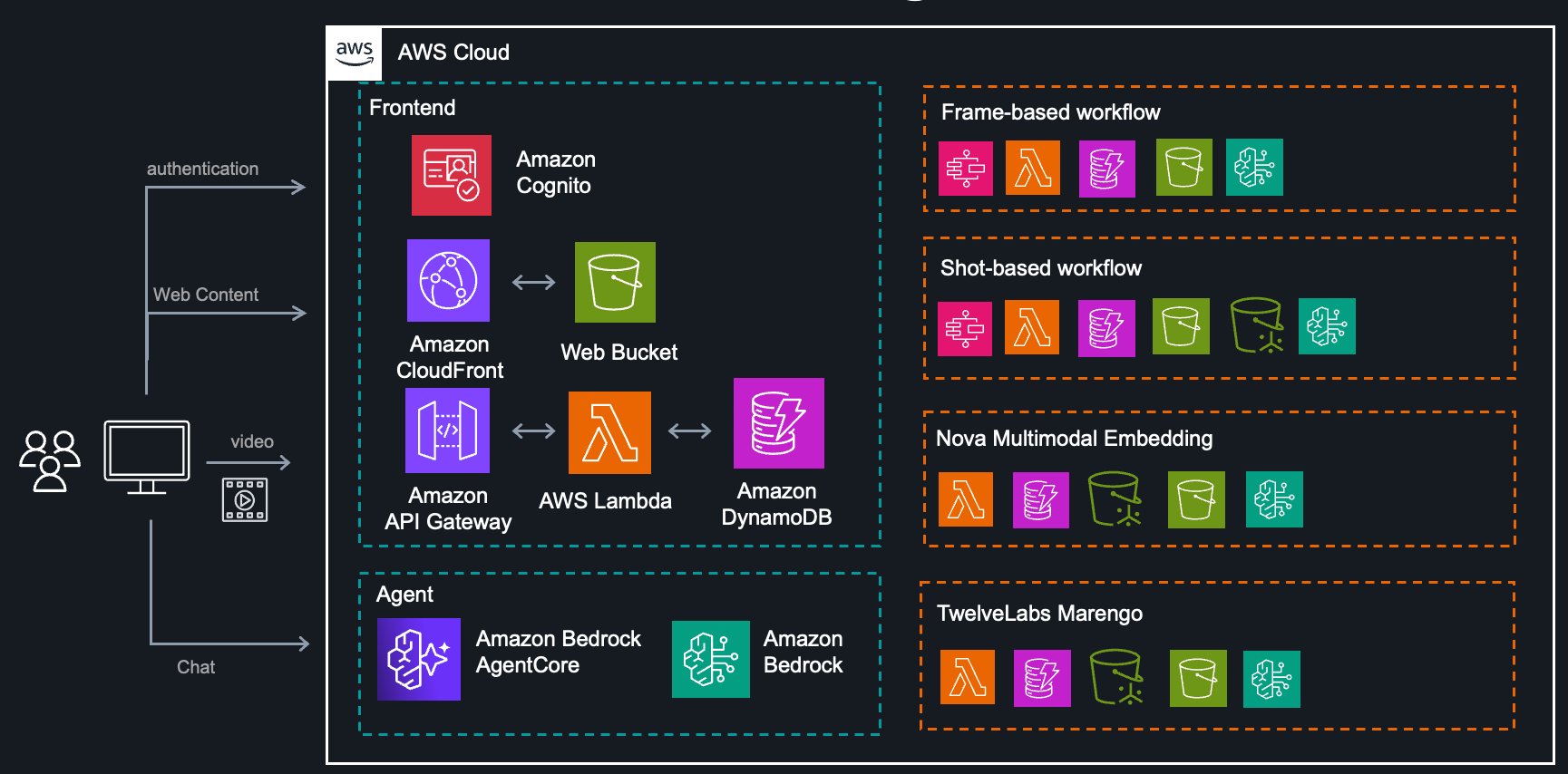

System architecture

The complete solution is built on AWS serverless services, providing scalability and cost-efficiency:

The architecture includes:

- Extraction Service: Orchestrates frame-based and shot-based workflows using Step Functions

- Nova Service: Backend for Nova Multimodal Embedding with vector search

- TwelveLabs Service: Backend for Marengo embedding models with vector search

- Agent Service: AI assistant powered by Amazon Bedrock Agents for workflow recommendations

- Frontend: React application served using Amazon CloudFront for user interaction

- Analytics Service: Sample notebooks demonstrating downstream analysis patterns

Accessing your video metadata

The solution stores extracted metadata in multiple formats for flexible access:

- Amazon S3: Raw foundation model outputs, full task metadata, and processed assets organized by task ID and data type.

- Amazon DynamoDB: Structured, queryable data optimized for retrieval by video, timestamp, or analysis type, spread across multiple tables for different services.

- Programmatic API: Direct invocation for automation, bulk processing, and integration into existing pipelines.

You can use this flexible access model to integrate the tool into your workflows, whether you are conducting exploratory analysis in notebooks, building automated pipelines, or developing production applications.

Real-world use cases

The solution includes sample notebooks demonstrating three common scenarios:

- IP Camera Event Detection: Automatically monitor surveillance footage for specific events or conditions without constant human oversight.

- Media Chapter Analysis: Segment long-form video content into logical chapters with automatic descriptions and metadata.

- Social Media Content Moderation: Review user-generated video content at scale to make sure that platform guidelines are met.

These examples provide starting points that you can extend and customize for your specific use cases.

Getting began

Deploy the solution

The solution is available as a CDK package on GitHub and can be deployed to your AWS account with a few commands. The deployment creates all necessary resources, including:

- Step Functions state machines for orchestration

- Lambda functions for processing logic

- DynamoDB tables for metadata storage

- S3 buckets for asset storage

- CloudFront distribution for the web interface

- Amazon Cognito user pool for authentication

After deployment, you can immediately start uploading videos, experimenting with different analysis pipelines and foundation models, and comparing performance across configurations.

Conclusion

Video understanding is no longer limited to organizations with specialized computer vision teams and infrastructure. The multimodal foundation models of Amazon Bedrock, combined with AWS serverless services, make sophisticated video analysis accessible and cost-effective.

Whether you’re building security monitoring systems, media production tools, or content moderation platforms, the three architectural approaches demonstrated in this solution provide flexible starting points designed for different requirements. The key is choosing the right approach for your use case: frame-based for precision monitoring, shot-based for narrative content, and embedding-based for semantic search.

As multimodal models continue to evolve, we expect even more sophisticated video understanding capabilities to emerge. The future is about AI that doesn’t only see video frames, but truly understands the story they tell.
