Alibaba Cloud just updated its open-source lineup. Today, the Qwen team released Qwen3.5, the latest generation of its large language model (LLM) family. The most powerful version is Qwen3.5-397B-A17B, a sparse mixture-of-experts (MoE) system that combines strong reasoning ability with high efficiency.

Qwen3.5 is a native vision-language model designed specifically for AI agents. It can see, code, and reason across 201 languages.

Core architecture: 397B total, 17B active

The technical specifications of Qwen3.5-397B-A17B are impressive. The model contains 397B total parameters. However, because it uses a sparse MoE design, it activates only 17B parameters during a single forward pass.

This 17B active-parameter count is the most important number for developers. It lets the model deliver intelligence comparable to a 400B-class model while running at speeds closer to those of much smaller models. The Qwen team reports 8.6x to 19.0x higher decoding throughput than the previous generation. This efficiency addresses the high cost of running AI at scale.
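To make the 17B-of-397B figure concrete, here is a back-of-the-envelope sketch. It assumes the common rule of thumb that a forward pass costs roughly 2 FLOPs per participating parameter per token, and it ignores attention and routing overhead, so it illustrates the sparsity ratio rather than predicting real throughput.

```python
# Back-of-the-envelope sketch, not an official Qwen calculation.
# ASSUMPTION: forward-pass cost ~= 2 FLOPs per participating parameter per token;
# attention cost and router overhead are ignored.

TOTAL_PARAMS = 397e9   # all experts plus shared weights
ACTIVE_PARAMS = 17e9   # parameters used for a single token


def active_fraction(total: float, active: float) -> float:
    """Share of the weights that actually run for one token."""
    return active / total


def approx_flops_per_token(params: float) -> float:
    """Rough forward-pass cost: ~2 FLOPs per participating parameter."""
    return 2.0 * params


frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
sparse = approx_flops_per_token(ACTIVE_PARAMS)   # MoE: only active params compute
dense = approx_flops_per_token(TOTAL_PARAMS)     # hypothetical dense 397B model

print(f"active fraction: {frac:.1%}")  # 4.3%
print(f"sparse: {sparse / 1e9:.0f} GFLOPs/token")
print(f"dense equivalent: {dense / 1e9:.0f} GFLOPs/token")
print(f"compute ratio: {dense / sparse:.1f}x")
```

The roughly 23x compute gap from this rule of thumb is a different comparison from the reported 8.6x to 19.0x decoding speedup, which is measured against the previous Qwen generation and also depends on memory bandwidth and attention cost.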

Efficient hybrid architecture: Gated Delta Networks

Qwen3.5 does not use the standard Transformer design. Instead, it uses an “efficient hybrid architecture.” Most LLMs rely solely on attention mechanisms, which can be slow on long texts. Qwen3.5 combines Gated Delta Networks (linear attention) with mixture-of-experts (MoE) layers.
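The core idea of a Gated Delta Network can be sketched as a recurrence over a fixed-size memory matrix. The update below follows the gated delta rule as described in the Gated DeltaNet literature, `S = alpha * (S - beta * (S k) k^T) + beta * v k^T` with output `o = S q`. It is a single-head, pure-Python illustration under that assumption, not Qwen3.5's production kernel, which would add multiple heads, normalization, and a chunked parallel form.

```python
# Minimal single-head sketch of the gated delta rule (linear attention).
# Pure Python, no batching, no chunked parallel form; not Qwen's kernel.
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def zeros(rows: int, cols: int) -> Matrix:
    return [[0.0] * cols for _ in range(rows)]


def matvec(S: Matrix, x: Vector) -> Vector:
    return [sum(s_ij * x_j for s_ij, x_j in zip(row, x)) for row in S]


def gated_delta_step(S: Matrix, q: Vector, k: Vector, v: Vector,
                     alpha: float, beta: float) -> Tuple[Matrix, Vector]:
    """One recurrent step over a fixed-size (d_v x d_k) memory S.

    alpha in (0, 1] decays old memory (the gate);
    beta in [0, 1] controls how strongly v is written under key k.
    """
    Sk = matvec(S, k)  # what the memory currently returns for key k
    new_S = [
        [alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
         for j in range(len(k))]
        for i in range(len(v))
    ]
    return new_S, matvec(new_S, q)


S = zeros(2, 2)
k1, k2 = [1.0, 0.0], [0.0, 1.0]
S, _ = gated_delta_step(S, k1, k1, [3.0, 4.0], alpha=1.0, beta=1.0)
S, _ = gated_delta_step(S, k2, k2, [5.0, 6.0], alpha=1.0, beta=1.0)
S, o = gated_delta_step(S, k1, k1, [7.0, 8.0], alpha=1.0, beta=1.0)  # rewrite k1
print(matvec(S, k1), matvec(S, k2))  # [7.0, 8.0] [5.0, 6.0]
```

Writing a new value under an existing key replaces the old value instead of adding to it, which is the "delta" correction; an `alpha` below 1 would additionally fade older memories. Because `S` never grows with sequence length, these layers avoid the growing key-value cache that standard attention needs.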

The model consists of 60 layers with a hidden dimension of 4,096. These layers follow a specific “hidden layout” that groups them into sets of 4:

- 3 blocks use Gated DeltaNet-plus-MoE.

- 1 block uses Gated Attention-plus-MoE.

- This pattern repeats 15 times to reach 60 layers.
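The 60-layer layout can be reconstructed as a simple list. The layer labels are hypothetical names chosen for readability, not module names from the Qwen code.

```python
# Illustrative reconstruction of the Qwen3.5 hidden layout described above.
# Labels are hypothetical, not identifiers from the Qwen codebase.
from collections import Counter
from typing import List

NUM_REPEATS = 15
GROUP = ["gated_deltanet+moe"] * 3 + ["gated_attention+moe"]  # 3:1 pattern


def build_layout(repeats: int = NUM_REPEATS) -> List[str]:
    layout: List[str] = []
    for _ in range(repeats):
        layout.extend(GROUP)
    return layout


layout = build_layout()
counts = Counter(layout)
print(len(layout))  # 60
print(counts)

# The full-attention layers fall on every 4th position (0-based: 3, 7, 11, ...).
attention_layers = [i for i, name in enumerate(layout) if name == "gated_attention+moe"]
print(attention_layers[:4])  # [3, 7, 11, 15]
```

Only the 15 gated-attention layers keep a key-value cache that grows with context length; the other 45 carry a fixed-size recurrent state, which is one reason this hybrid design helps on long inputs.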

Technical details include:

- Gated DeltaNet: uses 64 linear attention heads for values (V) and 16 heads for queries and keys (QK).

- MoE structure: The model has 512 total experts. Each token activates 10 routed experts plus 1 shared expert, for 11 active experts per token.

- Vocabulary: The model uses a padded vocabulary of 248,320 tokens.
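The expert counts above can be illustrated with a toy router. This sketch assumes softmax gating, top-10 selection with renormalized weights, and a shared expert added without its own gate; these are simplifying assumptions, and real experts are feed-forward networks over hidden vectors rather than the scalar functions used here.

```python
# Toy top-k MoE router with one always-on shared expert.
# ASSUMPTIONS: softmax gating, top-k selection, renormalized weights,
# ungated shared expert; scalar "tokens" stand in for hidden vectors.
import math
import random
from typing import Callable, List, Tuple

NUM_EXPERTS = 512
TOP_K = 10

Expert = Callable[[float], float]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits: List[float], top_k: int = TOP_K) -> List[Tuple[int, float]]:
    """Pick the top_k experts and renormalize their gate weights to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x: float, router_logits: List[float],
                experts: List[Expert], shared_expert: Expert) -> Tuple[float, int]:
    """Weighted sum of routed experts plus the shared expert's output."""
    selected = route(router_logits)
    out = sum(w * experts[i](x) for i, w in selected)
    out += shared_expert(x)
    return out, len(selected) + 1  # number of experts that actually ran


random.seed(0)
experts: List[Expert] = [(lambda x, s=i: x * (1 + s / NUM_EXPERTS)) for i in range(NUM_EXPERTS)]
shared: Expert = lambda x: 0.5 * x
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
y, n_active = moe_forward(1.0, logits, experts, shared)
print(n_active)  # 11
```

Only 11 of the 513 expert functions execute per token, which is the mechanism behind the gap between total and active parameters.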

Native multimodal training: early fusion

Qwen3.5 is a native vision-language model. Many other models have vision capabilities added later, but Qwen3.5 used “early fusion” training, which means the model learned from images and text simultaneously.

Trillions of multimodal tokens were used in training. This makes Qwen3.5 better at visual reasoning than the earlier Qwen3-VL versions. It is highly capable at “agentic” tasks: for example, it can turn UI screenshots into accurate HTML and CSS code. It can also analyze long videos with second-level accuracy.

The model supports the Model Context Protocol (MCP) and handles complex function calling. These features are essential for building agents that control apps and browse the web. On IFBench, it scored 76.5, exceeding many proprietary models.
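On the application side, function calling usually means parsing a structured tool call emitted by the model and executing it. The sketch below assumes a hypothetical JSON shape with `name` and `arguments` fields and a hypothetical `get_weather` tool; it is not Qwen's or MCP's exact message format, which should be taken from their own documentation.

```python
# Host-side sketch of function calling.
# ASSUMPTION: the model emits {"name": ..., "arguments": {...}} as JSON;
# this is illustrative, not Qwen's or MCP's exact wire format.
import json
from typing import Any, Callable, Dict


def get_weather(city: str) -> Dict[str, Any]:
    # Hypothetical local tool; a real agent would call an external API here.
    return {"city": city, "forecast": "sunny"}


TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch(tool_call_json: str) -> str:
    """Run the requested tool and return its result (or an error) as JSON."""
    call = json.loads(tool_call_json)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**args)
    except TypeError as exc:  # wrong or missing arguments
        return json.dumps({"error": str(exc)})
    return json.dumps(result)


print(dispatch('{"name": "get_weather", "arguments": {"city": "Hangzhou"}}'))
# {"city": "Hangzhou", "forecast": "sunny"}
```

Returning errors as data rather than raising lets the agent loop feed the failure back to the model so it can retry with corrected arguments.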

Solving the memory barrier: 1M context length

Long-context processing is a core feature of Qwen3.5. The base model has a native context window of 262,144 (256K) tokens. The hosted Qwen3.5-Plus version goes further, supporting 1 million tokens.

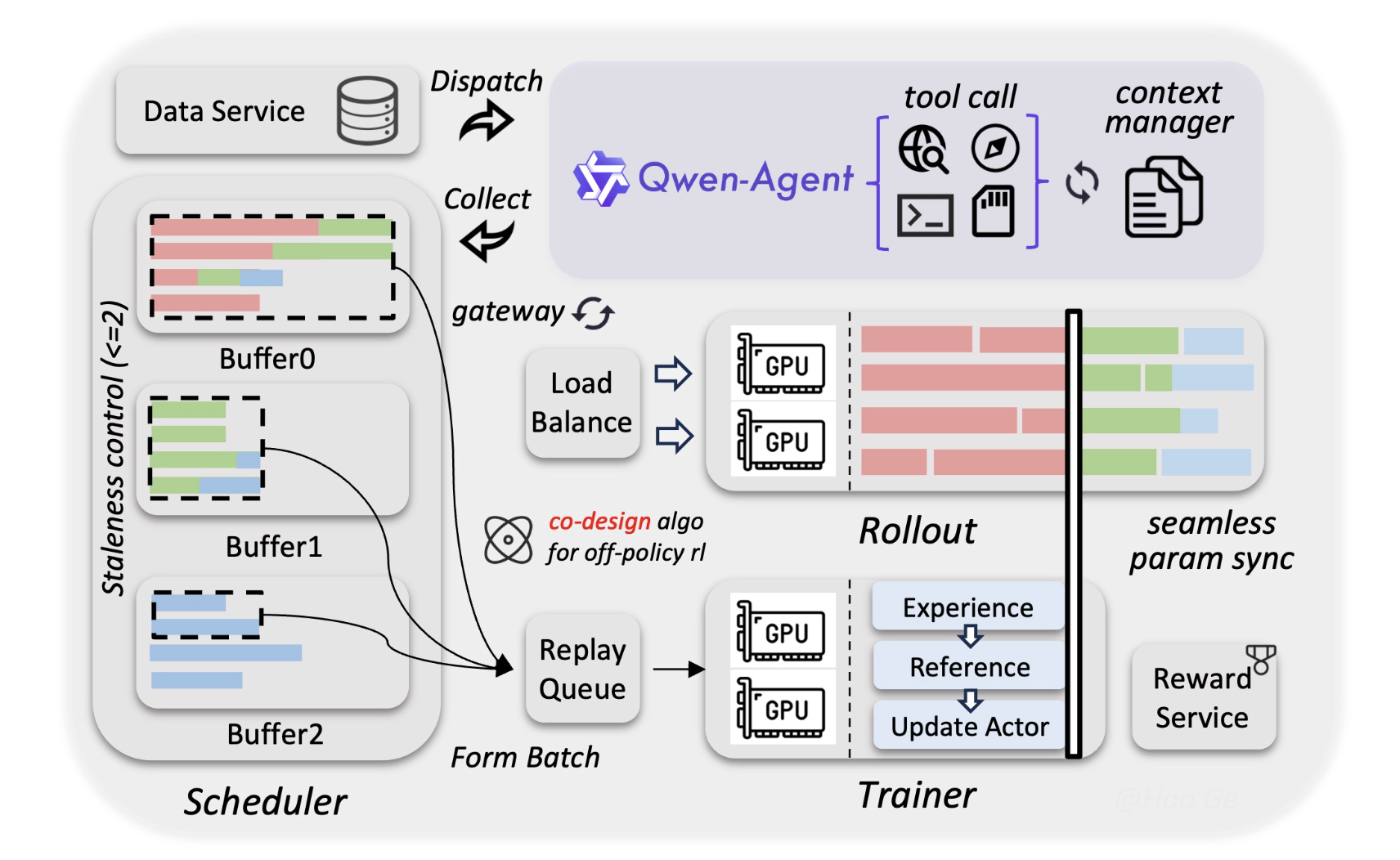

The Alibaba Qwen team used a new asynchronous reinforcement learning (RL) framework for this, which helps the model stay accurate even across a 1M-token document. For developers, this means they can feed an entire codebase into a single prompt, so a complex retrieval-augmented generation (RAG) system is not always necessary.
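Whether a codebase fits into the 256K native window or the 1M hosted window can be estimated before sending it. This sketch assumes roughly 4 characters per token, a common rule of thumb rather than a property of the Qwen tokenizer, and a hypothetical `reserve_for_output` budget; for exact numbers, count tokens with the model's own tokenizer.

```python
# Rough check of whether a codebase fits in a context window.
# ASSUMPTION: ~4 characters per token (rule of thumb, not the Qwen tokenizer).
import math
import os
from typing import Tuple

NATIVE_CONTEXT = 262_144    # Qwen3.5 base model, native window
HOSTED_CONTEXT = 1_000_000  # Qwen3.5-Plus, "1 million tokens" as stated
CHARS_PER_TOKEN = 4.0       # heuristic only


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    return math.ceil(len(text) / chars_per_token)


def estimate_repo_tokens(root: str, exts: Tuple[str, ...] = (".py", ".md")) -> int:
    """Sum estimated tokens over files with the given extensions."""
    total = 0
    for dirpath, _, filenames in os.walk(root):
        for fn in filenames:
            if fn.endswith(exts):
                path = os.path.join(dirpath, fn)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    total += estimate_tokens(f.read())
    return total


def fits(tokens: int, window: int, reserve_for_output: int = 8_192) -> bool:
    """Leave room for the model's answer (hypothetical reserve size)."""
    return tokens + reserve_for_output <= window


print(fits(estimate_tokens("x" * 1_000_000), NATIVE_CONTEXT))  # True
```

Usage: call `estimate_repo_tokens("path/to/repo")` and compare the result against both windows; a repository that fails the native check may still fit the hosted 1M window.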

Performance and benchmarks

The model excels in technical domains. It achieved a high score on Humanity’s Last Exam (HLE-Verified), a difficult benchmark of AI knowledge.

- Coding: It shows results comparable to top-tier closed-source models.

- Mathematics: The model uses adaptive tool use. It writes Python code to solve math problems, then runs the code to check its answer.

- Languages: It supports 201 languages and dialects, a major leap from the 119 languages supported by the previous version.
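The article does not describe how Qwen3.5's adaptive tool use is implemented, but the write-run-check loop it mentions can be sketched generically. Here a hard-coded string stands in for model-generated code (hypothetical), the host executes it, and an independent check substitutes the roots back into the equation.

```python
# Generic sketch of "write code, run it, check the answer" for a math problem.
# MODEL_GENERATED_CODE is a hypothetical stand-in for model output.
# exec() is NOT a sandbox; never run untrusted model output like this in production.

MODEL_GENERATED_CODE = """
import math
a, b, c = 1, -5, 6
disc = b * b - 4 * a * c
roots = sorted([(-b - math.sqrt(disc)) / (2 * a), (-b + math.sqrt(disc)) / (2 * a)])
"""


def run_generated_code(code: str) -> dict:
    """Execute code in a fresh namespace and return the resulting variables."""
    namespace: dict = {}
    exec(code, namespace)  # demonstration only
    return namespace


def verify_roots(roots, a: float, b: float, c: float, tol: float = 1e-9) -> bool:
    """Independent check: each root must satisfy a*r^2 + b*r + c == 0."""
    return all(abs(a * r * r + b * r + c) < tol for r in roots)


ns = run_generated_code(MODEL_GENERATED_CODE)
roots = ns["roots"]
print(roots, verify_roots(roots, 1, -5, 6))  # [2.0, 3.0] True
```

The verification step deliberately does not reuse the generated code's logic, so an error in the model's solution shows up as a failed check instead of being confirmed by the same mistake.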

Key points

- Hybrid efficiency (MoE + Gated Delta Networks): Qwen3.5 uses a 3:1 ratio of Gated Delta Network (linear attention) blocks to standard gated attention blocks across its 60 layers. This hybrid design enables 8.6x to 19.0x higher decoding throughput than the previous generation.

- Large scale, low footprint: Qwen3.5-397B-A17B has 397B total parameters but activates only 17B per token. You get 400B-class intelligence with the inference speed and per-token compute of a much smaller model.

- Native multimodal foundation: Unlike “bolt-on” vision models, Qwen3.5 was trained with early fusion, processing trillions of text and image tokens simultaneously. This makes it a top-tier visual agent, scoring 76.5 on IFBench for following complex instructions in visual contexts.

- 1M-token context: The base model natively supports a 256K-token context, while the hosted Qwen3.5-Plus handles up to 1M tokens. This large window lets developers process an entire codebase or two hours of video without complex RAG pipelines.
