Researchers at the Allen Institute for AI (AI2) have released SERA (Soft Verified Efficient Repository Agents), a family of coding agents intended to match much larger closed systems using only supervised training and synthetic trajectories.

What is SERA?

SERA is the first release in AI2's series of open coding agents. The flagship model, SERA-32B, is built on the Qwen 3 32B architecture and trained as a repository-level coding agent.

On SWE-bench Verified at 32K context, SERA-32B reaches a resolve rate of 49.5 percent. At 64K context, it reaches 54.2 percent. These numbers are in the same performance band as open-weight systems such as Devstral-Small-2 with 24B parameters and GLM-4.5 Air with 110B parameters, but SERA remains fully open in code, data, and weights.

The series currently includes four models: SERA-8B, SERA-8B GA, SERA-32B, and SERA-32B GA. All are released on Hugging Face under the Apache 2.0 license.

Soft Verified Generation

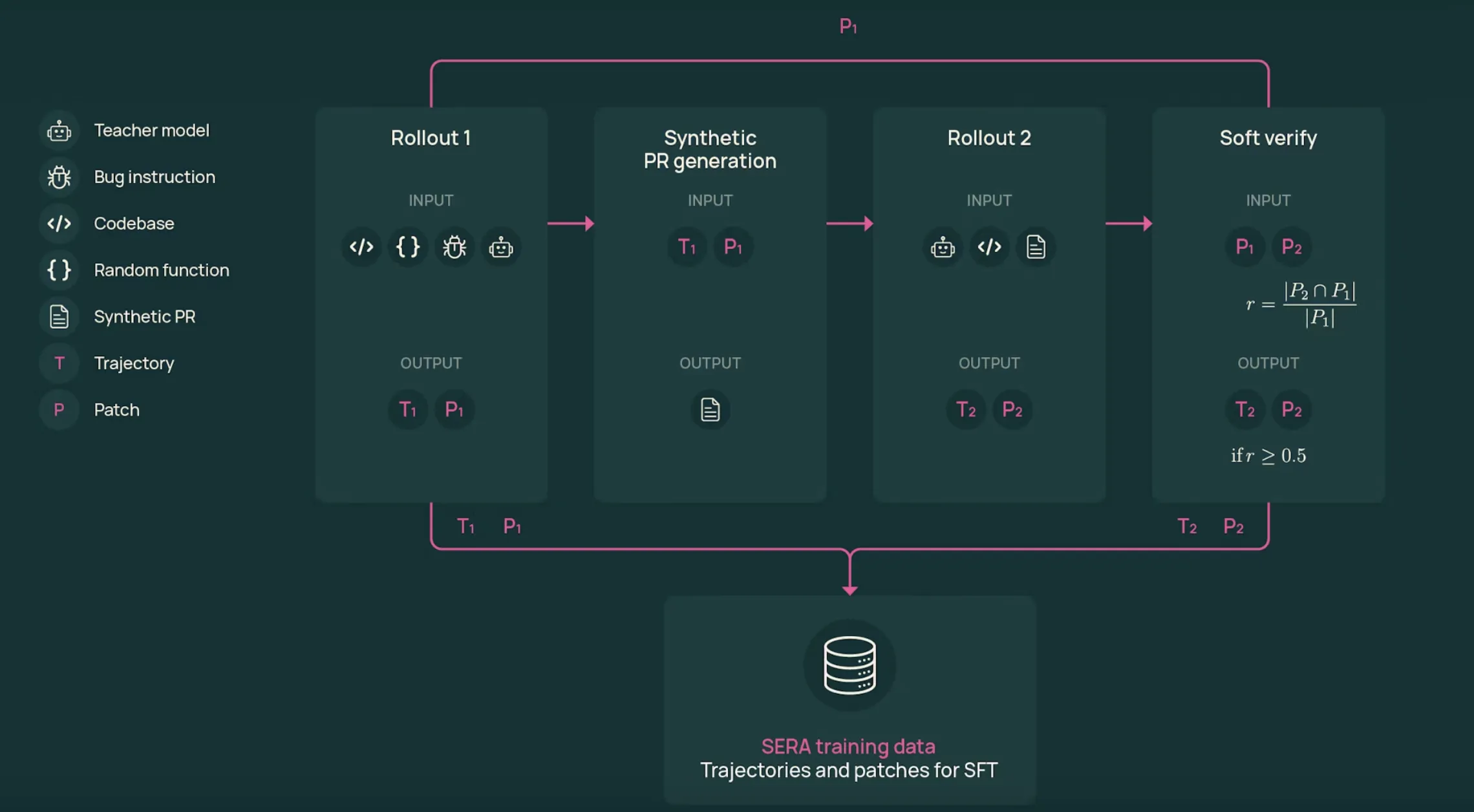

The training pipeline relies on Soft Verified Generation (SVG). SVG generates agent trajectories that look like a realistic developer workflow, using patch agreement between two rollouts as a soft signal of correctness.

The process is as follows:

- First rollout: Functions are sampled from real repositories. GLM-4.6, the teacher model in the SERA-32B setup, receives a bug-style or change description and works with tools to view files, edit code, and run commands. This produces trajectory T1 and patch P1.

- Synthetic pull request: The system converts the trajectory into a pull-request-like description. This text summarizes the intent and key edits in a format similar to a real pull request.

- Second rollout: The teacher starts again from the original repository, but now sees only the pull request description and the tools. This produces a new trajectory T2 and patch P2 that attempts to implement the described change.

- Soft verification: Patches P1 and P2 are compared line by line. The recall score r is computed as the fraction of modified lines in P1 that also appear in P2. If r equals 1, the trajectory is hard verified. For intermediate values, the sample is soft verified.
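The soft verification step can be sketched in a few lines of Python. This is a minimal illustration, not AI2's implementation: it assumes patches are unified diffs, treats each added or removed line as one "modified line", and the function names (`changed_lines`, `recall_score`, `verification_label`) and the "unverified" label for r = 0 are hypothetical.

```python
def changed_lines(patch: str) -> set[str]:
    """Collect added/removed lines from a unified diff, skipping file headers."""
    lines = set()
    for line in patch.splitlines():
        # Skip "--- a/file" and "+++ b/file" headers. Simplification: an edit
        # whose content itself starts with "--" or "++" would also be skipped.
        if line.startswith(("+++", "---")):
            continue
        if line.startswith(("+", "-")):
            lines.add(line)  # keep the sign so adds and removes stay distinct
    return lines


def recall_score(p1: str, p2: str) -> float:
    """Fraction of modified lines in P1 that also appear in P2."""
    reference = changed_lines(p1)
    if not reference:
        return 0.0  # assumption: an empty reference patch scores 0
    return len(reference & changed_lines(p2)) / len(reference)


def verification_label(r: float) -> str:
    """Map a recall score to a label (label names are illustrative)."""
    if r == 1.0:
        return "hard-verified"
    if r > 0.0:
        return "soft-verified"
    return "unverified"


P1 = "--- a/f.py\n+++ b/f.py\n@@ -1,2 +1,3 @@\n-x = 1\n+x = 2\n+y = 3\n"
P2 = "--- a/f.py\n+++ b/f.py\n@@ -1,2 +1,2 @@\n-x = 1\n+x = 2\n"

r = recall_score(P1, P2)  # two of the three modified lines in P1 appear in P2
print(round(r, 3), verification_label(r))
```

Because the score is recall against P1 only, extra edits in P2 do not lower it; a stricter variant could also penalize lines that appear in P2 but not in P1.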

A key result of the ablation study is that strict verification is not necessary. When models are trained on T2 trajectories filtered with different thresholds on r, SWE-bench Verified performance is similar at a fixed sample count, even when the threshold on r is 0. This suggests that realistic multi-step traces, even if noisy, are valuable supervision for coding agents.
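To make the ablation setup concrete, the sketch below builds equally sized training subsets at different thresholds on r. It is an assumption about how such a comparison could be set up, not the authors' code; `fixed_size_subset` and the `(trajectory_id, r)` tuple layout are hypothetical.

```python
import random


def fixed_size_subset(samples, threshold, n, seed=0):
    """Keep (trajectory_id, r) pairs with r >= threshold, then draw exactly n.

    Holding n constant across thresholds isolates the effect of verification
    strictness from the effect of dataset size.
    """
    eligible = [s for s in samples if s[1] >= threshold]
    if len(eligible) < n:
        raise ValueError(f"only {len(eligible)} samples have r >= {threshold}")
    return random.Random(seed).sample(eligible, n)


# Toy pool: ten trajectories with recall scores 0.0, 0.1, ..., 0.9.
pool = [(f"traj-{i}", i / 10) for i in range(10)]

loose = fixed_size_subset(pool, threshold=0.0, n=4)   # no filtering at all
strict = fixed_size_subset(pool, threshold=0.5, n=4)  # only r >= 0.5
```

Both subsets have the same size, so any difference in downstream scores would be attributable to the threshold rather than to the amount of data.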

Data scale, training, and cost

SVG is applied to 121 Python repositories derived from the SWE-smith corpus. The full SERA dataset, spanning GLM-4.5 Air and GLM-4.6 teacher runs, contains over 200,000 trajectories from both rollouts, making it one of the largest open coding agent datasets.

SERA-32B is trained on a subset of 25,000 T2 trajectories from the Sera-4.6-Lite T2 dataset. Training uses standard supervised fine-tuning with Axolotl on Qwen-3-32B for 3 epochs, with a learning rate of 1e-5, a weight decay of 0.01, and a maximum sequence length of 32,768 tokens.

Many trajectories exceed the context limit. The research team defines a truncation ratio, the fraction of steps that fit within 32K tokens. They then prefer trajectories that already fit and, for the rest, select slices with a high truncation ratio. In comparisons of SWE-bench Verified scores, this ordered truncation strategy clearly outperforms random truncation.
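One way to read the truncation ratio and the ordered selection is sketched below. This is an illustration under stated assumptions: each trajectory is represented only by a list of per-step token counts, a step "fits" if the running token total up to and including it stays within the budget, and the budget defaults to the 32,768-token sequence length. The names `truncation_ratio` and `ordered_selection` are hypothetical.

```python
from itertools import accumulate


def truncation_ratio(step_tokens: list[int], budget: int = 32_768) -> float:
    """Fraction of steps whose cumulative token count stays within the budget."""
    if not step_tokens:
        return 1.0  # assumption: an empty trajectory trivially fits
    fitting = sum(1 for total in accumulate(step_tokens) if total <= budget)
    return fitting / len(step_tokens)


def ordered_selection(
    trajectories: dict[str, list[int]], n: int, budget: int = 32_768
) -> list[str]:
    """Pick n trajectory ids, highest truncation ratio first.

    Fully fitting trajectories (ratio 1.0) come first; the rest follow in
    order of how much of the trajectory survives truncation.
    """
    ranked = sorted(
        trajectories,
        key=lambda tid: truncation_ratio(trajectories[tid], budget),
        reverse=True,
    )
    return ranked[:n]


# Toy example with a tiny budget so the arithmetic is easy to follow.
toy = {"a": [10, 10, 10], "b": [20, 20, 20], "c": [30, 30, 30]}
picked = ordered_selection(toy, n=2, budget=40)
```

With a budget of 40 tokens, trajectory "a" fits entirely, "b" keeps two of three steps, and "c" keeps one, so the ordered strategy selects "a" and "b". A random strategy would ignore this ranking.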

The reported compute budget for SERA-32B is about 40 GPU days, including data generation and training. Using a scaling law over dataset size and performance, the researchers estimate that the SVG approach is roughly 26 times cheaper than reinforcement-learning-based systems such as SkyRL-Agent and 57 times cheaper than earlier synthetic data pipelines such as SWE-smith for reaching comparable SWE-bench scores.

Repository specialization

A core use case is adapting an agent to a specific repository. The research team studies this on three major SWE-bench Verified projects: Django, SymPy, and Sphinx.

SVG generates roughly 46,000 to 54,000 trajectories per repository. Because of compute limits, the specialization experiments train on 8,000 trajectories per repository, mixing 3,000 soft-verified T2 trajectories with 5,000 filtered T1 trajectories.
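The 3,000 plus 5,000 mix can be pictured with a short sketch. The source does not describe the sampling procedure, so uniform sampling without replacement and a final shuffle are assumptions here, and `build_specialization_mix` is a hypothetical helper.

```python
import random


def build_specialization_mix(t2_soft, t1_filtered, n_t2=3000, n_t1=5000, seed=0):
    """Draw n_t2 soft-verified T2 and n_t1 filtered T1 trajectories, then shuffle."""
    rng = random.Random(seed)
    mix = rng.sample(t2_soft, n_t2) + rng.sample(t1_filtered, n_t1)
    rng.shuffle(mix)  # interleave the two sources for training
    return mix


# Placeholder ids standing in for one repository's trajectory pools.
t2_pool = [f"t2-{i}" for i in range(10_000)]
t1_pool = [f"t1-{i}" for i in range(10_000)]

mix = build_specialization_mix(t2_pool, t1_pool)
print(len(mix))  # 8000 trajectories per repository
```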

At 32K context, these specialized students match or slightly outperform the GLM-4.5-Air teacher, and also compare well with Devstral-Small-2 on these repository subsets. For Django, the specialized student reaches a resolve rate of 52.23 percent, versus 51.20 percent for GLM-4.5-Air. For SymPy, the specialized model reaches 51.11 percent, versus 48.89 percent for GLM-4.5-Air.

Key takeaways

- SERA turns coding agents into a supervised learning problem: SERA-32B is trained with standard supervised fine-tuning on synthetic trajectories from GLM-4.6, without any reinforcement learning loop or dependency on repository test suites.

- Soft Verified Generation removes the need for tests: SVG uses two rollouts and the overlap between patches P1 and P2 to compute a soft verification score. The research team shows that effective coding agents can be trained even on unverified or weakly verified trajectories.

- Large, realistic agent dataset from real repositories: The pipeline applies SVG to 121 Python projects from the SWE-smith corpus, producing over 200,000 trajectories and creating one of the largest open datasets for coding agents.

- Efficient training with explicit cost and scaling analysis: SERA-32B is trained on 25,000 T2 trajectories, and the scaling study shows that SVG is roughly 26 times cheaper than SkyRL-Agent and 57 times cheaper than SWE-smith at comparable SWE-bench Verified performance.
