This post is written by Chaim Rand, Principal Engineer, Pini Reisman, Software Senior Principal Engineer, and Eliyah Weinberg, Performance and Technology Innovation Engineer, at Mobileye. The Mobileye team would like to thank Sunita Nadampalli and Guy Almog from AWS for their contributions to this solution and this post.

Mobileye is driving the global evolution toward smarter, safer mobility by combining pioneering AI, extensive real-world experience, a practical vision for the advanced driving systems of today, and the autonomous mobility of tomorrow. Road Experience Management™ (REM™) is a critical component of Mobileye’s autonomous driving ecosystem. REM™ is responsible for creating and maintaining highly accurate, crowdsourced high-definition (HD) maps of road networks worldwide. These maps are essential for:

- Precise vehicle localization

- Real-time navigation

- Identifying changes in road conditions

- Enhancing overall autonomous driving capabilities

Mobileye Road Experience Management (REM)™ (Source: https://www.mobileye.com/technology/rem/)

Map generation is a continuous process that requires collecting and processing data from millions of vehicles equipped with Mobileye technology, making it a computationally intensive operation that requires efficient and scalable solutions.

In this post, we focus on one portion of the REM™ system: the automatic identification of changes to the road structure, which we will refer to as Change Detection. We will share our journey of architecting and deploying a solution for Change Detection, the core of which is a deep learning model called CDNet. We will cover the following points:

- The tradeoff between running on GPU compared to CPU, and why our current solution runs on CPU.

- The impact of using a model inference server, specifically Triton Inference Server.

- Running the Change Detection pipeline on AWS Graviton based Amazon Elastic Compute Cloud (Amazon EC2) instances and its impact on deployment flexibility, ultimately resulting in more than a 2x improvement in throughput.

We will share real-life decisions and tradeoffs when building and deploying a high-scale, highly parallelized algorithmic pipeline based on a Deep Learning (DL) model, with an emphasis on efficiency and throughput.

Road change detection

High-definition maps are one of many components of Mobileye’s solution for autonomous driving that are commonly used by autonomous vehicles (AVs) for vehicle localization and navigation. However, as human drivers know, it’s not uncommon for road structure to change. Borrowing a quote often attributed to the Greek philosopher Heraclitus: when it comes to road maps, “The only constant in life is change.” A common cause of a road change is road construction, when lanes, and their associated lane markings, may be added, removed, or repositioned.

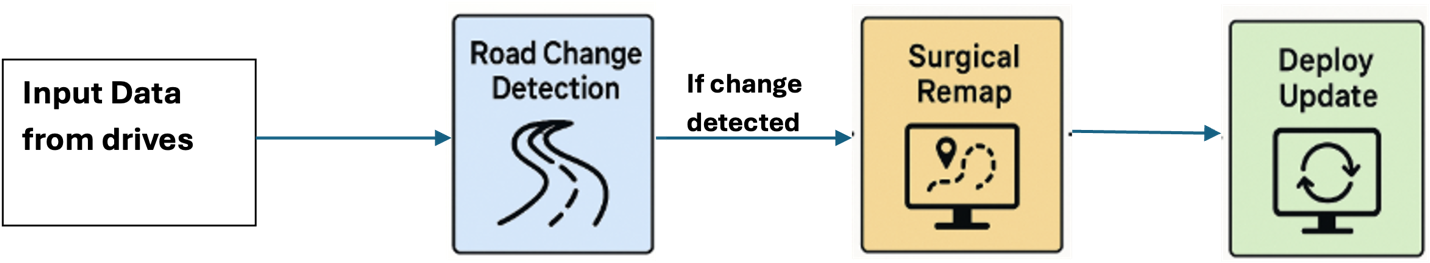

For human drivers, changes in the road may be inconvenient, but they’re usually manageable. For autonomous vehicles, however, such changes can pose significant challenges if not properly accounted for. The possibility of road changes requires that the AV systems be programmed with sufficient redundancy and adaptability. It also requires appropriate mechanisms for modifying and deploying corrected REM™ maps as quickly as possible. The diagram below captures the change detection subsystem in REM™ that is responsible for identifying changes in the map and, in the case a change is detected, deploying a map update.

REM™ Road Change Detection and Map Update flow

Change detection is run in parallel and independently on multiple road segments from around the world. It is triggered using a proprietary algorithm that proactively inspects data collected from vehicles equipped with Mobileye technology. The change detection task is typically triggered millions of times a day, where each task runs on a separate road segment. Each road segment is evaluated at a minimum, predetermined cadence.

The main component of the Change Detection task is Mobileye’s proprietary AI model, CDNet, which consumes a proprietary encoding of the data collected from multiple recent drives, along with the current map data, and produces a sequence of outputs that are used to automatically assess whether, in fact, a road change occurred, and to determine if remapping is required. Although the full change detection algorithm includes additional components, the CDNet model is the heaviest in terms of its compute and memory requirements. During a single Change Detection task running on a single segment, the CDNet model might be called dozens of times.

Prioritizing cost efficiency

Given the large scale of the change detection system, the primary objective we set for ourselves when designing a solution for its deployment was minimizing costs by increasing the average number of completed change detection tasks per dollar. This objective took precedence over other common metrics such as minimizing latency or maximizing reliability. For example, a key component of the deployment solution is reliance on Amazon EC2 Spot Instances for our compute resources, which are best suited to fault-tolerant workloads. When running offline processes, we are prepared for the possibility of instance preemption and a delayed algorithm response in order to benefit from the steep discounts of using Spot Instances. As we will explain, prioritizing cost efficiency motivated many of our design decisions.

Architecting a solution

We made the following considerations when designing our architecture.

1. Run Deep Learning inference on CPU instead of GPU

Because the core of the Change Detection pipeline is an AI/ML model, the initial approach was to design a solution based on the use of GPU instances. And indeed, when isolating just the CDNet model inference execution, GPUs demonstrated a significant advantage over CPUs. The following table illustrates the CDNet inference raw performance on CPU compared to GPU.

| Instance type | Samples per second |
| --- | --- |
| CPU (c7i.4xlarge) | 5.85 |
| GPU (g6e.2xlarge) | 54.8 |

However, we quickly concluded that although CDNet inference would be slower, running it on a CPU instance would improve overall cost efficiency without compromising end-to-end speed, for the following reasons:

- The pricing of GPU instances is generally much higher than CPU instances. Compound that with the fact that, because they are in high demand, GPU instances have much lower Spot availability, and suffer from more frequent Spot preemptions, than CPU instances.

- While CDNet is a primary component, the change detection algorithm includes many more components that are better suited to running on CPU. Although the GPU was extremely fast for running CDNet, it would remain idle for much of the change detection pipeline, thereby reducing its efficiency. Furthermore, running the entire algorithm on CPU reduces the overhead of managing and passing data between different compute resources (using CPU instances for the non-inference work and GPU instances for inference work).
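The reasoning in the two points above can be made concrete with a small back-of-the-envelope model. The sketch below is illustrative only: the function names, the split of a task into inference and non-inference time, the slot count, and the hourly prices are all assumptions made for the example, not Mobileye measurements or AWS prices. Only the raw inference speedup is taken from the table above.

```python
def end_to_end_seconds(non_inference_s: float, inference_s: float,
                       inference_speedup: float = 1.0) -> float:
    """Task time when only the inference portion is accelerated."""
    return non_inference_s + inference_s / inference_speedup


def tasks_per_dollar(task_seconds: float, concurrent_tasks: int,
                     hourly_price_usd: float) -> float:
    """Completed tasks per dollar for one instance at steady state."""
    tasks_per_hour = concurrent_tasks * 3600.0 / task_seconds
    return tasks_per_hour / hourly_price_usd


# Raw CDNet speedup of GPU over CPU, from the table above.
gpu_speedup = 54.8 / 5.85

# Hypothetical task: 90 s of CPU-only work plus 30 s of CDNet inference on CPU.
cpu_time = end_to_end_seconds(90.0, 30.0)
gpu_time = end_to_end_seconds(90.0, 30.0, inference_speedup=gpu_speedup)

# Hypothetical prices: the faster model shortens the whole task by less
# than 25%, so a 3x higher hourly price leaves the GPU option behind.
cpu_eff = tasks_per_dollar(cpu_time, concurrent_tasks=8, hourly_price_usd=1.0)
gpu_eff = tasks_per_dollar(gpu_time, concurrent_tasks=8, hourly_price_usd=3.0)
```

With these placeholder inputs, the accelerator speeds up only the part of the task that uses it, so the tasks-per-dollar figure is dominated by price and by the non-inference work.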

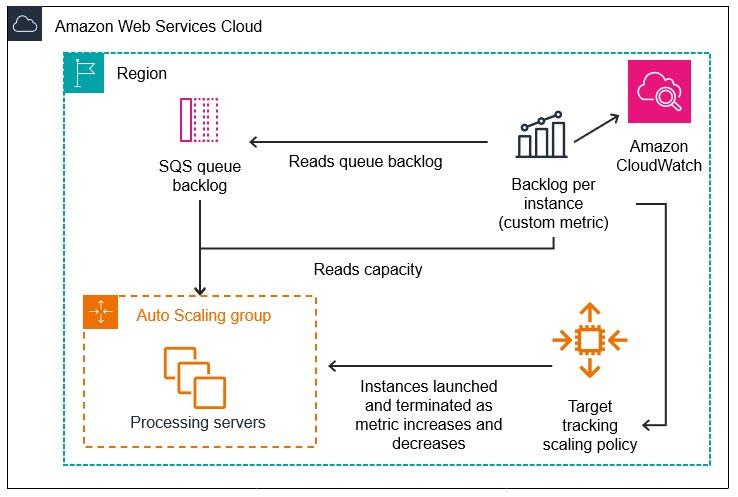

Initial deployment solution

For our initial approach, we designed an auto-scaling solution based on multi-core EC2 CPU Spot Instances processing tasks that are streamed from Amazon Simple Queue Service (Amazon SQS). As change detection tasks were received, they would be scheduled, distributed, and run in a new process on a vacant slot on one of the CPU instances. The instances would be scaled up and down based on the task load.

The following diagrams illustrate the architecture of this configuration.

At this stage in development, each process would load and manage its own copy of CDNet. However, this turned out to be a significant and limiting bottleneck. The memory required by each process for loading and running its copy of CDNet was 8.5 GB. Assuming, for example, that our instance type was an r6i.8xlarge with 256 GB of memory, this implied that we were limited to running just 30 tasks per instance. Moreover, we found that approximately 50% of the total time of a change detection task was spent downloading the model weights and initializing the model.
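The slot-based scheduling described above can be sketched in a few lines. This is a simplified stand-in, not the production design: an in-memory `queue.Queue` plays the role of Amazon SQS, a thread pool plays the role of the per-task processes, and `run_change_detection` and `drain` are hypothetical names introduced for the example.

```python
import queue
from concurrent.futures import ThreadPoolExecutor


def run_change_detection(segment_id: str) -> str:
    """Placeholder for the real per-segment pipeline (hypothetical)."""
    return f"{segment_id}:done"


def drain(task_queue: "queue.Queue[str]", slots: int) -> list:
    """Run queued tasks with at most `slots` running at the same time."""
    futures = []
    with ThreadPoolExecutor(max_workers=slots) as pool:
        while True:
            try:
                segment_id = task_queue.get_nowait()
            except queue.Empty:
                break
            # A task starts as soon as one of the `slots` workers is free.
            futures.append(pool.submit(run_change_detection, segment_id))
    # Leaving the `with` block waits for every submitted task to finish.
    return [f.result() for f in futures]


tasks: "queue.Queue[str]" = queue.Queue()
for i in range(5):
    tasks.put(f"segment-{i}")
results = drain(tasks, slots=2)
```

The number of slots is the knob that the rest of this post is concerned with: it is bounded by memory per task and by the number of vCPUs on the instance.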
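The limit of 30 tasks follows directly from dividing instance memory by the per-task footprint. A minimal sketch of that arithmetic, using the figures quoted above (the helper name is ours, and memory used by the operating system and other components is ignored):

```python
import math


def max_tasks_by_memory(instance_memory_gb: float, per_task_gb: float) -> int:
    """Number of tasks that fit in memory if memory is the only constraint."""
    return math.floor(instance_memory_gb / per_task_gb)


# 256 GB instance, 8.5 GB per process holding its own copy of CDNet.
isolated_limit = max_tasks_by_memory(256, 8.5)
```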

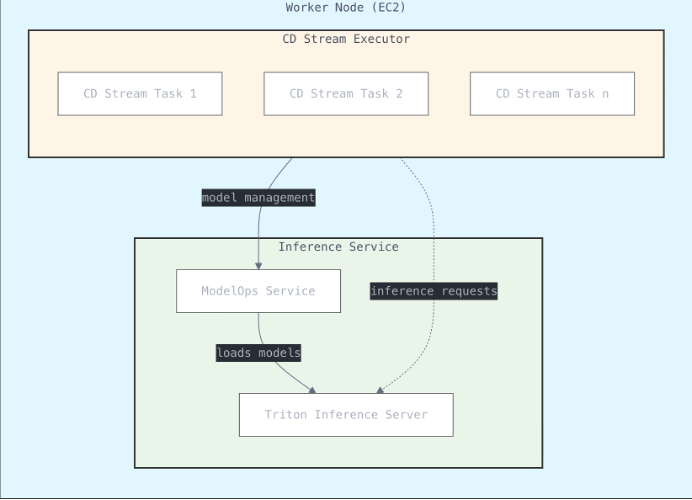

2. Serve model inference with Triton Inference Server

The first optimization we applied was to centralize the model inference executions using a model inference server solution. Instead of each process maintaining its own copy of CDNet, each CPU worker instance would be initialized with a single (containerized) copy of CDNet managed by an inference server, serving the change detection processes running on the instance. We chose to use Triton Inference Server as our inference server because it is open source, simple to deploy, and includes support for multiple runtime environments and AI/ML frameworks.

The results of this optimization were profound: the memory footprint of 8.5 GB per process dropped all the way down to 2.5 GB, and the average runtime per change detection task dropped from 4 minutes to 2 minutes. With the CPU memory bottleneck removed, we could increase the number of tasks per instance up to full CPU utilization. In the case of Change Detection, the optimal number of tasks per 32-vCPU instance turned out to be 32. Overall, this optimization increased efficiency by just over 2x.

The following table illustrates the CDNet inference performance improvement with centralized Triton Inference Server hosting.

| | Memory required per task | Tasks per instance | Average runtime | Tasks per minute |
| --- | --- | --- | --- | --- |
| Isolated inference | 8.5 GB | 30 | 4 minutes | 7.5 |
| Centralized inference | 2.5 GB | 32 | 2 minutes | 16 |
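The "Tasks per minute" column and the "just over 2x" figure can be reproduced from the other columns. The sketch below uses only numbers from the table; the helper name is ours, and the memory check ignores the footprint of the shared inference server itself, which the post does not state.

```python
def tasks_per_minute(tasks_per_instance: int, avg_runtime_min: float) -> float:
    """Steady-state throughput of one instance."""
    return tasks_per_instance / avg_runtime_min


isolated = tasks_per_minute(30, 4)
centralized = tasks_per_minute(32, 2)
gain = centralized / isolated  # just over 2x

# At 2.5 GB per task, memory alone would allow far more than 32 tasks on a
# 256 GB instance, so the vCPU count becomes the binding constraint instead.
memory_bound = int(256 // 2.5)
```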

We also considered an alternative architecture in which a scalable inference server would run in a separate unit and on independent instances, possibly on GPUs. However, this option was rejected for several reasons:

- Increased latency: Calling CDNet over the network rather than on the same machine added significant latency.

- Increased network traffic: The relatively large payload of CDNet significantly increased network traffic, thereby further increasing latency.

We found that the automatic scaling of inference capacity inherent in our solution (using an additional server for each CPU worker instance) was well suited to the inference demand.

Optimizing Triton Inference Server: Reducing Docker image size for leaner deployments

The default Triton image includes support for multiple machine learning backends and both CPU and GPU execution, resulting in a hefty image size of around 15 GB. To streamline this, we rebuilt the Docker image by including only the ML backend we required and limiting execution to CPU-only. The result was a dramatically reduced image size, down to just 2.7 GB. This served to further reduce memory utilization and increase the capacity for additional change detection processes. A smaller image size also translates to faster container startup times.

3. Increase instance diversification: Use AWS Graviton instances for better price performance

At peak capacity there are many thousands of change detection tasks running concurrently on a large group of Spot Instances. Inevitably, Spot availability per instance fluctuates. A key to keeping up with the demand is to support a large pool of instance types. Our strong preference was for newer and stronger CPU instances, which demonstrated significant benefits both in speed and in cost efficiency compared to other comparable instances. Here is where AWS Graviton presented a significant opportunity.

AWS Graviton is a family of processors designed to deliver the best price performance for cloud workloads running in Amazon EC2. They are also optimized for ML workloads, including Neon vector processing engines, support for bfloat16, Scalable Vector Extension (SVE), and Matrix Multiplication (MMLA) instructions, making them an ideal choice to run our batched deep learning inference workloads for our Change Detection systems. Major machine learning frameworks such as PyTorch, TensorFlow, and ONNX have been optimized for Graviton processors.

As it turned out, adapting our solution to run on Graviton was simple. Most modern AI/ML frameworks, including Triton Inference Server, include built-in support for AWS Graviton. To adapt our solution, we had to make the following changes:

- Create a new Docker image dedicated to running the change detection pipeline on AWS Graviton (ARM architecture).

- Recompile the trimmed-down version of Triton Inference Server for Graviton.

- Add Graviton instances to the node pool.
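Once both x86 and Arm images exist, whatever launches the pipeline has to pick the one that matches the node it lands on. The sketch below shows one simple way to express that mapping; the image names are hypothetical placeholders (the post does not give the real ones), and in practice this selection is often delegated to the container orchestrator rather than done in application code.

```python
# Hypothetical image names, one per CPU architecture.
IMAGES = {
    "x86_64": "change-detection:x86_64",
    "aarch64": "change-detection:arm64",
}

# Alternative spellings reported by some operating systems.
ALIASES = {"amd64": "x86_64", "arm64": "aarch64"}


def image_for(machine: str) -> str:
    """Return the image tag matching a CPU architecture string."""
    key = machine.lower()
    key = ALIASES.get(key, key)
    if key not in IMAGES:
        raise ValueError(f"unsupported architecture: {machine}")
    return IMAGES[key]
```

On a running node, the architecture string could be obtained with `platform.machine()` from the Python standard library, which typically reports `aarch64` on Graviton instances running Linux.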

Outcomes

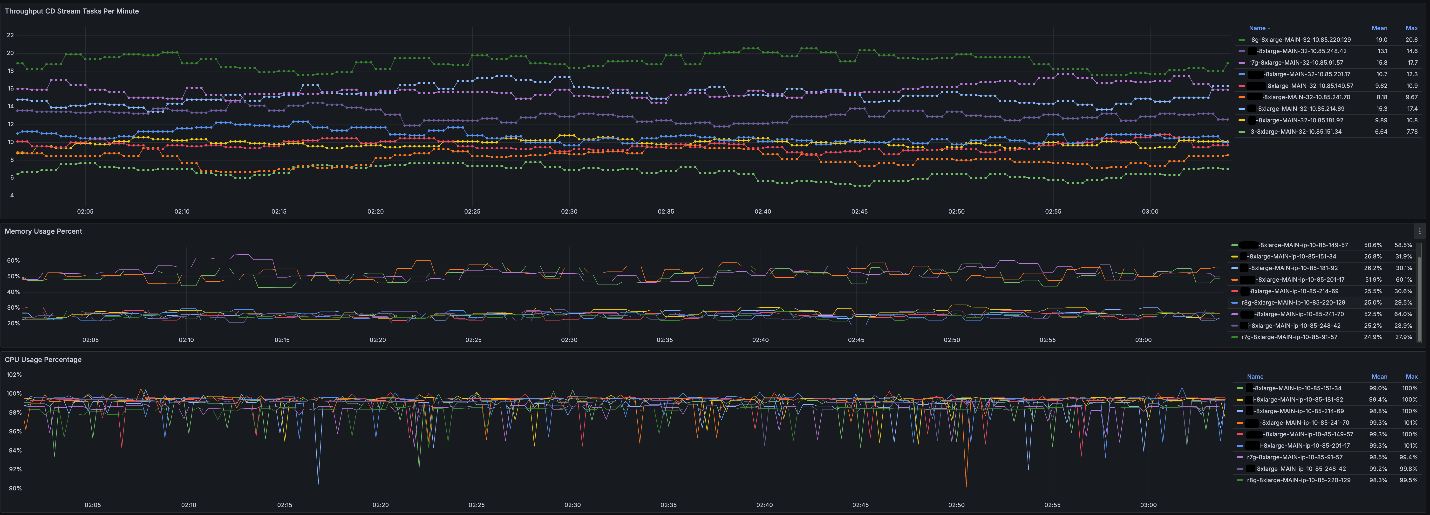

By enabling change detection to run on AWS Graviton instances, we improved the overall cost efficiency of the change detection subsystem and significantly increased our instance diversification and Spot Instance availability.

1. Increased throughput

To quantify the impact, we can share an example. Suppose that the current task load demands 5,000 compute instances, only half of which can be filled by modern non-Graviton CPU instances. Before adding AWS Graviton to our resource pool, we would need to fill the rest of the demand with older generation CPUs, which run 3x slower. Following our instance diversification optimization, we can fill these with AWS Graviton Spot availability. In the case of our example, this doubles the overall efficiency. Finally, in this example, the throughput improvement appears to exceed 2x, because the runtime performance of CDNet on AWS Graviton instances is often faster than on the comparable EC2 instances.
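One way to read this example, and it is only our interpretation, is to treat the demand of 5,000 as the amount of work that 5,000 modern instances would handle, and to count how many instances are needed when the half that cannot be placed on modern instances runs on a fallback type. The function and parameter names below are ours.

```python
def instances_needed(demand: int, modern_available: int,
                     fallback_slowdown: float) -> float:
    """Instances required when leftover demand runs on a fallback type.

    `demand` is measured in modern-instance equivalents, and
    `fallback_slowdown` is how many fallback instances replace one modern one.
    """
    on_modern = min(demand, modern_available)
    leftover = demand - on_modern
    return on_modern + leftover * fallback_slowdown


# Older generation CPUs run 3x slower, so each leftover unit needs 3 of them.
before = instances_needed(5000, 2500, fallback_slowdown=3.0)

# Graviton assumed on par with the modern non-Graviton instances.
after = instances_needed(5000, 2500, fallback_slowdown=1.0)

improvement = before / after
```

Under this reading, the same work takes 10,000 instances before and 5,000 after, which matches the stated doubling. Any fallback slowdown below 1.0, that is, Graviton running faster than the comparable instances, pushes the ratio above 2x.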

The following table illustrates the CDNet inference performance improvement with AWS Graviton instances.

| Instance type | Samples per second |
| --- | --- |
| AWS Graviton based EC2 instance (r8g.8xlarge) | 19.4 |
| Comparable non-Graviton CPU instance (8xlarge) | 13.5 |
| Older generation non-Graviton CPU instance (8xlarge) | 6.64 |

With AWS Graviton instances, we saw the CDNet inference performance shown in the table above.
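The relative speedups implied by the table can be computed directly from its samples-per-second column. The dictionary keys below are shorthand labels of our own for the three rows.

```python
samples_per_second = {
    "graviton_r8g_8xlarge": 19.4,
    "comparable_non_graviton_8xlarge": 13.5,
    "older_non_graviton_8xlarge": 6.64,
}


def speedup(fast: str, slow: str) -> float:
    """Ratio of CDNet inference throughput between two instance types."""
    return samples_per_second[fast] / samples_per_second[slow]


# Roughly 1.44x over the comparable instance and 2.92x over the older one.
vs_comparable = speedup("graviton_r8g_8xlarge", "comparable_non_graviton_8xlarge")
vs_older = speedup("graviton_r8g_8xlarge", "older_non_graviton_8xlarge")
```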

2. Improved user experience

With the Triton Inference Server deployment and increased fleet diversification and instance availability, we have significantly improved our Change Detection system throughput, which provides an enhanced user experience for our customers.

3. Experienced seamless migration

Most modern AI/ML frameworks, including Triton Inference Server, include built-in support for AWS Graviton, which made adapting our solution to run on Graviton simple.

Conclusion

When it comes to optimizing runtime efficiency, the work is never done. There are often more parameters to tune and more flags to apply. AI/ML frameworks and libraries are constantly improving and optimizing their support for many different endpoint instance types, particularly AWS Graviton. We anticipate that with further effort, we will continue to improve on our optimization efforts. We look forward to sharing the next steps in our journey in a future post.

About the authors

Chaim Rand is a Principal Engineer and machine learning algorithm developer working on deep learning and computer vision technologies for Autonomous Vehicle solutions at Mobileye.

Pini Reisman is a Software Senior Principal Engineer leading Performance Engineering and Technological Innovation in the Engineering group in REM, the mapping group in Mobileye.

Eliyah Weinberg is a performance and scale optimization and technology innovation engineer at Mobileye REM.

Sunita Nadampalli is a Principal Engineer and AI/ML expert at AWS. She leads AWS Graviton software performance optimizations for AI/ML and HPC workloads. She is passionate about open source software development and delivering high-performance and sustainable software solutions for SoCs based on the Arm ISA.

Guy Almog is a Senior Solutions Architect at AWS, specializing in compute and machine learning. He works with large enterprise AWS customers to design and implement scalable cloud solutions. His role involves providing technical guidance on AWS services, developing high-level solutions, and making architectural recommendations that focus on security, performance, resiliency, cost optimization, and operational efficiency.
