Predicting Ego-centric Video from human Actions (PEVA). Given past video frames and an action specifying a desired change in 3D pose, PEVA predicts the next video frame. Our results show that, given the first frame and a sequence of actions, the model can generate videos of atomic actions (a), simulate counterfactuals (b), and support long video generation (c).

Recent years have seen great advances in world models that learn to simulate future outcomes for planning and control. From intuitive physics to multi-step video prediction, these models have grown increasingly powerful and expressive. But few are designed for truly embodied agents. To build a world model for embodied agents, we need a real embodied agent that acts in the real world. A real embodied agent has a complex, physically grounded action space, as opposed to abstract control signals. It must also act in diverse real-life scenarios and see the world from an egocentric view, as opposed to aesthetic scenes or a stationary camera.


Why it's hard

- Action and vision are heavily context-dependent. The same view can lead to different movements and vice versa, because humans act in complex, embodied, goal-directed environments.

- Human control is high-dimensional and structured. Full-body motion spans 48 degrees of freedom with hierarchical, time-dependent dynamics.

- The egocentric view reveals intention but hides the body. First-person vision reflects the goal, but not how the motion is executed, so models must infer consequences from physical actions they cannot see.

- Perception lags behind action. Visual feedback often arrives seconds later, which requires long-horizon prediction and temporal reasoning.

To develop a world model for embodied agents, we must ground our approach in agents that meet these criteria. Humans routinely look first and act second: the eyes lock onto a target, the brain runs a brief visual "simulation" of the outcome, and only then does the body move. At every moment, our egocentric view both serves as input from the environment and reflects the intention or goal behind our next movement. When we consider body movements, we should account for both actions of the feet (locomotion and navigation) and actions of the hands (manipulation), or more generally, whole-body control.

What did we do?

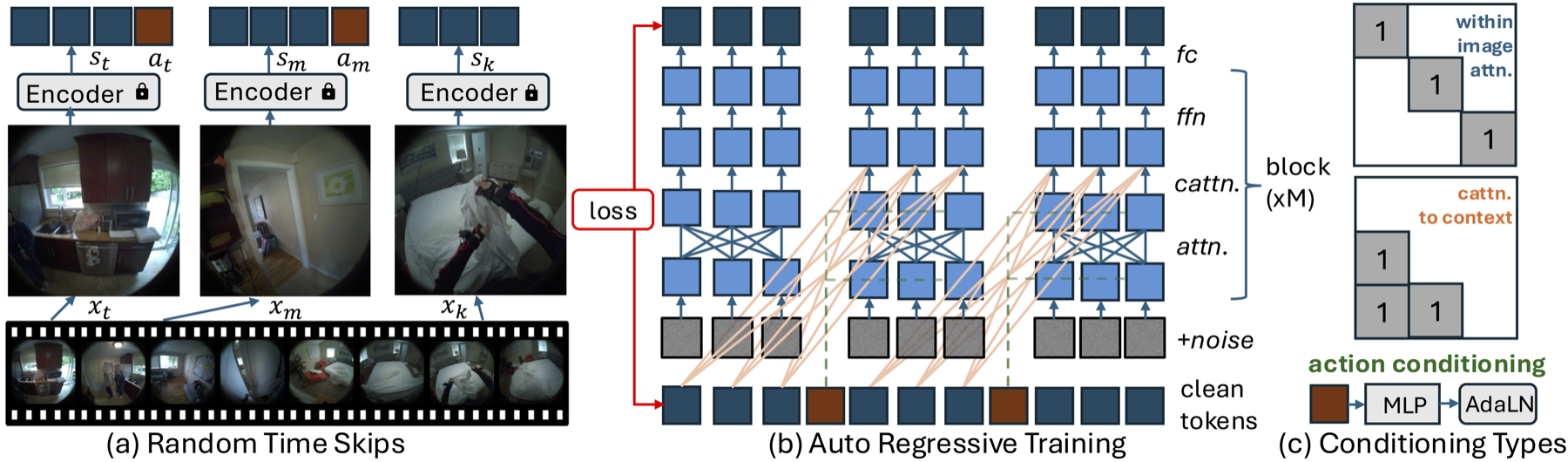

We trained a model to Predict Ego-centric Video from human Actions (PEVA) for whole-body-conditioned egocentric video prediction. PEVA conditions on kinematic pose trajectories structured by the body's joint hierarchy, learning to simulate how physical human actions shape the environment from a first-person view. We train an autoregressive conditional diffusion transformer on Nymeria, a large-scale dataset pairing real-world egocentric video with body pose capture. Our hierarchical evaluation protocol tests increasingly challenging tasks and provides a comprehensive analysis of the model's embodied prediction and control abilities. This work is a first attempt to model complex real-world environments and embodied agent behavior through video prediction from a human perspective.

Method

Structured action representation from motion

To bridge human motion and egocentric vision, we represent each action as a rich, high-dimensional vector that captures both full-body dynamics and detailed joint movements. Instead of using simplified controls, we encode global translation and relative joint rotations based on the body's kinematic tree. Motion is represented in 3D space with 3 degrees of freedom for root translation and 15 upper-body joints. Using Euler angles for the relative joint rotations yields a 48-dimensional action space (3 + 15 × 3 = 48). Motion capture data are aligned with the video using timestamps, then converted from global coordinates to a pelvis-centered local frame so that the representation is invariant to the body's global position and orientation. All positions and rotations are normalized to ensure stable learning. Each action captures the change in motion between frames, allowing the model to connect physical movement with its visual consequences over time.
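The recipe above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the PEVA preprocessing code: the pose layout (a root position, a pelvis yaw angle, and a 15 × 3 array of Euler angles), the z-up convention, treating each action as the difference between two consecutive frames, and the `mean`/`std` normalization statistics are all assumptions made for the example.

```python
import numpy as np

NUM_JOINTS = 15                   # upper-body joints, as stated in the text
ACTION_DIM = 3 + NUM_JOINTS * 3   # root translation + per-joint Euler angles = 48


def to_pelvis_frame(point, pelvis_pos, pelvis_yaw):
    """Express a global 3D point in a pelvis-centered frame.

    Assumption: z is up, so only the pelvis yaw (rotation about z) is removed.
    """
    c, s = np.cos(-pelvis_yaw), np.sin(-pelvis_yaw)
    rot = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    return rot @ (np.asarray(point, dtype=float) - np.asarray(pelvis_pos, dtype=float))


def make_action(prev, curr, mean, std):
    """Build one normalized 48-d action from two consecutive pose frames.

    prev / curr use a hypothetical layout: dicts with 'root' (3,), 'pelvis_yaw' (float),
    and 'joints' (15, 3) relative Euler angles in radians.
    """
    # Root translation between frames, in the previous frame's pelvis-centered coordinates.
    d_root = to_pelvis_frame(curr["root"], prev["root"], prev["pelvis_yaw"])
    # Change in relative joint rotations, wrapped to [-pi, pi) to avoid 2*pi jumps.
    d_joints = np.asarray(curr["joints"], dtype=float) - np.asarray(prev["joints"], dtype=float)
    d_joints = (d_joints + np.pi) % (2 * np.pi) - np.pi
    action = np.concatenate([d_root, d_joints.reshape(-1)])
    assert action.shape == (ACTION_DIM,)
    # Normalize with dataset statistics (assumed precomputed) for stable training.
    return (action - mean) / std


prev = {"root": [0.0, 0.0, 0.9], "pelvis_yaw": 0.0, "joints": np.zeros((15, 3))}
curr = {"root": [0.1, 0.0, 0.9], "pelvis_yaw": 0.0, "joints": np.full((15, 3), 0.05)}
print(make_action(prev, curr, np.zeros(ACTION_DIM), np.ones(ACTION_DIM))[:4])
```

Expressing the root displacement in the previous frame's pelvis coordinates means that walking "forward" produces the same action no matter which way the person faces in the world.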

Designing PEVA: Autoregressive Conditional Diffusion Transformer

The conditional diffusion transformer (CDiT) from Navigation World Models uses simple control signals such as velocity and rotation, but modeling whole-body human motion poses greater challenges. Human actions are high-dimensional, temporally extended, and physically constrained. To address these challenges, we extend the CDiT method in three ways:

- Random timeskips: allow the model to learn both short-term motion dynamics and longer-term activity patterns.

- Sequence-level training: models the entire motion sequence by applying the loss to every prefix of frames.

- Action embeddings: concatenate all actions at time t into a 1D tensor that conditions each AdaLN layer on high-dimensional whole-body motion.
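The three extensions can be illustrated with a small NumPy sketch. All function names are hypothetical, and two details are assumptions rather than documented choices: per-frame actions over a skipped interval are summed into one step, and each step's per-part values (root plus 15 joints, 3 values each) are flattened into a 48-d vector that drives the AdaLN scale and shift. The prefix loss is written as an explicit loop for readability; a transformer would compute all prefixes in one pass with a causal attention mask.

```python
import numpy as np


def sample_clip(num_frames, clip_len, max_skip, rng):
    """Random timeskip: draw a stride in [1, max_skip] and return the frame indices and stride."""
    skip = int(rng.integers(1, max_skip + 1))
    start = int(rng.integers(0, num_frames - (clip_len - 1) * skip))
    return start + skip * np.arange(clip_len), skip


def clip_actions(per_frame_actions, idx, skip):
    """Aggregate per-frame actions over each skipped interval (assumption: summed deltas),
    then flatten the per-part values of each step into one 1D vector."""
    # per_frame_actions: (num_frames - 1, 16, 3), root translation + 15 joints, 3 values each.
    steps = [per_frame_actions[i:i + skip].sum(axis=0) for i in idx[:-1]]
    return np.stack([s.reshape(-1) for s in steps])  # (clip_len - 1, 48)


def adaln(x, action_vec, w_scale, w_shift, eps=1e-6):
    """AdaLN conditioning: layer-normalize tokens, then scale/shift them with projections of the action."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    x_norm = (x - mu) / np.sqrt(var + eps)
    scale = action_vec @ w_scale  # (hidden,)
    shift = action_vec @ w_shift  # (hidden,)
    return x_norm * (1.0 + scale) + shift


def sequence_loss(predict_next, latents, actions):
    """Sequence-level training: supervise every prefix, predicting frame t from frames < t and action t-1."""
    losses = [np.mean((predict_next(latents[:t], actions[t - 1]) - latents[t]) ** 2)
              for t in range(1, len(latents))]
    return float(np.mean(losses))


rng = np.random.default_rng(0)
idx, skip = sample_clip(num_frames=100, clip_len=4, max_skip=3, rng=rng)
acts = clip_actions(rng.normal(size=(99, 16, 3)), idx, skip)  # toy per-frame actions
tokens = rng.normal(size=(8, 32))                               # 8 latent tokens, width 32
out = adaln(tokens, acts[0], 0.01 * rng.normal(size=(48, 32)), np.zeros((48, 32)))
print(idx, skip, acts.shape, out.shape)
```

Conditioning through AdaLN rather than extra tokens keeps the sequence length unchanged while letting the action modulate every layer.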

Sampling and rollout strategy

At test time, we generate future frames by conditioning on a set of past context frames. We encode these frames into latent states and add noise to the target frame, which is then progressively denoised by the diffusion model. To speed up inference, we restrict attention: within-image attention is applied only to the target frame, and context cross-attention is applied only to the last frame. For action-conditioned prediction, we use an autoregressive rollout strategy. Starting from the context frames, we encode them with the VAE encoder and append the current action. The model then predicts the next frame, which is added to the context while the oldest frame is dropped, and this process repeats for each action in the sequence. Finally, a VAE decoder maps the predicted latents back into pixel space.
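The rollout loop can be sketched as follows. `encode`, `predict_next`, and `decode` are hypothetical placeholders for the VAE encoder, the full diffusion denoising loop for one frame, and the VAE decoder; the restricted attention pattern lives inside `predict_next` and is not modeled here.

```python
from collections import deque


def rollout(context_frames, actions, encode, predict_next, decode, window):
    """Autoregressive action-conditioned rollout with a sliding context window.

    encode / predict_next / decode are hypothetical stand-ins for the VAE encoder,
    the diffusion denoising loop (noisy target -> next latent), and the VAE decoder.
    """
    # Keep at most `window` latents; appending to a full deque drops the oldest one.
    context = deque((encode(f) for f in context_frames), maxlen=window)
    predicted = []
    for action in actions:
        # Denoise a noisy target latent conditioned on the current context and action.
        next_latent = predict_next(list(context), action)
        context.append(next_latent)  # newest frame in, oldest frame out
        predicted.append(next_latent)
    # Decode all predicted latents back to pixel space at the end.
    return [decode(z) for z in predicted]


ident = lambda x: x
print(rollout([0, 1, 2], [1, 1, 1], ident, lambda ctx, a: ctx[-1] + a, ident, window=3))
```

A bounded `deque` gives the drop-the-oldest behavior for free: once it holds `window` latents, each append evicts the oldest one, so the cost per step stays constant over long rollouts.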

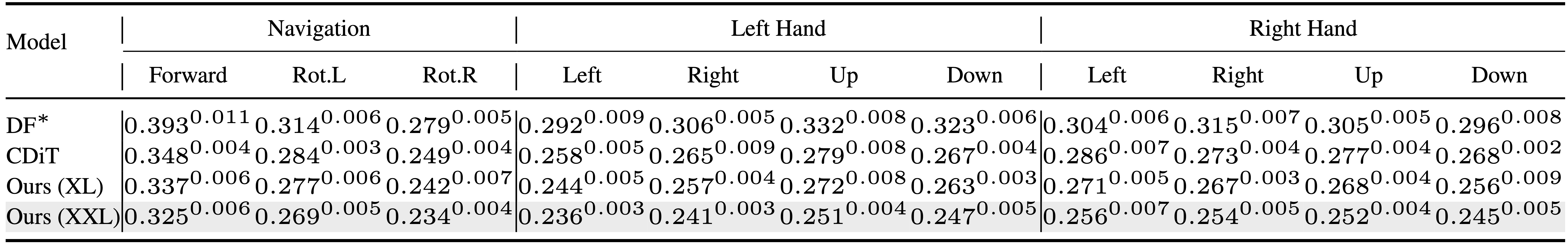

Atomic actions

We decompose complex human movements into atomic actions, such as hand movements (up, down, left, and right) and whole-body movements (forward, rotation), to test the model's understanding of how specific joint-level movements affect the egocentric view. We include some samples here.

Long rollouts

Here you can see the model's ability to maintain visual and semantic consistency over extended prediction horizons. Below are samples of PEVA generating coherent 16-second rollouts conditioned on whole-body motion, as both videos and image sequences for closer viewing.

sequence 1

sequence 2

sequence 3

Planning

PEVA can be used for planning by simulating multiple action candidates and scoring them by their perceptual similarity to the goal, as measured by LPIPS.
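A minimal sketch of this candidate-ranking loop, assuming a hypothetical `simulate` function that rolls out the world model for one action sequence and a `distance` callable standing in for LPIPS (in practice it could wrap an LPIPS network such as the one in the `lpips` Python package). A lower distance to the goal image means a better candidate.

```python
def rank_candidates(start_frames, candidates, simulate, distance, goal):
    """Score each candidate action sequence by how close its final predicted frame is to the goal.

    simulate: hypothetical world-model rollout returning the list of predicted frames.
    distance: perceptual distance; the text uses LPIPS, any callable works for this sketch.
    Returns (best_index, scores); lower scores are closer to the goal.
    """
    scores = [distance(simulate(start_frames, seq)[-1], goal) for seq in candidates]
    best = min(range(len(scores)), key=scores.__getitem__)
    return best, scores


# Toy usage: "frames" are numbers and each action shifts the frame by its value.
toy_sim = lambda start, seq: [start, start + sum(seq)]
print(rank_candidates(0, [[1, 1], [2, 3], [10]], toy_sim, lambda a, b: abs(a - b), goal=5))
```

Because only the final frame is compared with the goal, the score rewards where a trajectory ends up rather than how it gets there.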

In this example, PEVA finds the correct path to open the fridge, ruling out paths that lead to the sink or outdoors.

In this example, PEVA finds a reasonable sequence of actions that leads to the shelf, ruling out paths that lead to picking up a nearby plant or going to the kitchen.

Enabling visual planning

Following the approach introduced in Navigation World Models [arXiv:2412.03572], we formulate planning as an energy minimization problem and optimize actions using the cross-entropy method (CEM). Specifically, we optimize the action sequence of either the left or the right arm while keeping the other body parts fixed. Representative examples of the resulting plans are shown below.
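The CEM loop can be sketched in NumPy as below. This is an illustration of the generic cross-entropy method under assumptions, not the authors' planner: `energy` is a hypothetical cost (for example, a perceptual distance between the final predicted frame and the goal), and `arm_dims` is a hypothetical list of action-vector columns belonging to the arm being optimized. Every other column is copied from `base_actions`, which is how the other body parts stay fixed.

```python
import numpy as np


def cem_plan(energy, base_actions, arm_dims, iters=10, pop=64, elites=8, init_std=0.5, seed=0):
    """Cross-entropy method over one arm's action dimensions; all other dimensions stay fixed.

    energy(actions) -> float is a hypothetical cost on a (T, 48) action sequence.
    arm_dims: hypothetical column indices of the arm being optimized.
    """
    rng = np.random.default_rng(seed)
    mean = base_actions[:, arm_dims].copy()  # (T, len(arm_dims))
    std = np.full_like(mean, init_std)
    for _ in range(iters):
        # Sample a population of arm-action sequences from the current Gaussian.
        samples = mean + std * rng.standard_normal((pop,) + mean.shape)
        costs = []
        for s in samples:
            actions = base_actions.copy()
            actions[:, arm_dims] = s  # only the chosen arm's columns change
            costs.append(energy(actions))
        # Refit the Gaussian to the lowest-energy (elite) samples.
        elite = samples[np.argsort(costs)[:elites]]
        mean, std = elite.mean(axis=0), elite.std(axis=0) + 1e-6
    best = base_actions.copy()
    best[:, arm_dims] = mean
    return best


# Toy usage: the "goal" is reached when three hypothetical arm columns all equal 1.0.
dims = [3, 4, 5]
toy_energy = lambda a: float(np.sum((a[:, dims] - 1.0) ** 2))
plan = cem_plan(toy_energy, np.zeros((4, 48)), dims, iters=30, pop=200, elites=20)
print(plan[:, dims].round(2))
```

Refitting the Gaussian to the elite samples shrinks the search distribution around low-energy action sequences; the small constant added to `std` keeps it from collapsing to exactly zero.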

In this case, we are able to predict a sequence of actions that raises the right arm toward the mixing stick. This also shows a limitation of the method: because we only predict the right arm, the model does not predict the corresponding downward movement of the left arm.

In this case, we are able to predict a sequence of actions that reaches toward the kettle but, unlike in the goal, does not quite grasp it.

In this case, we are able to predict a sequence of actions that pulls the left arm in, similar to the goal.

Quantitative results

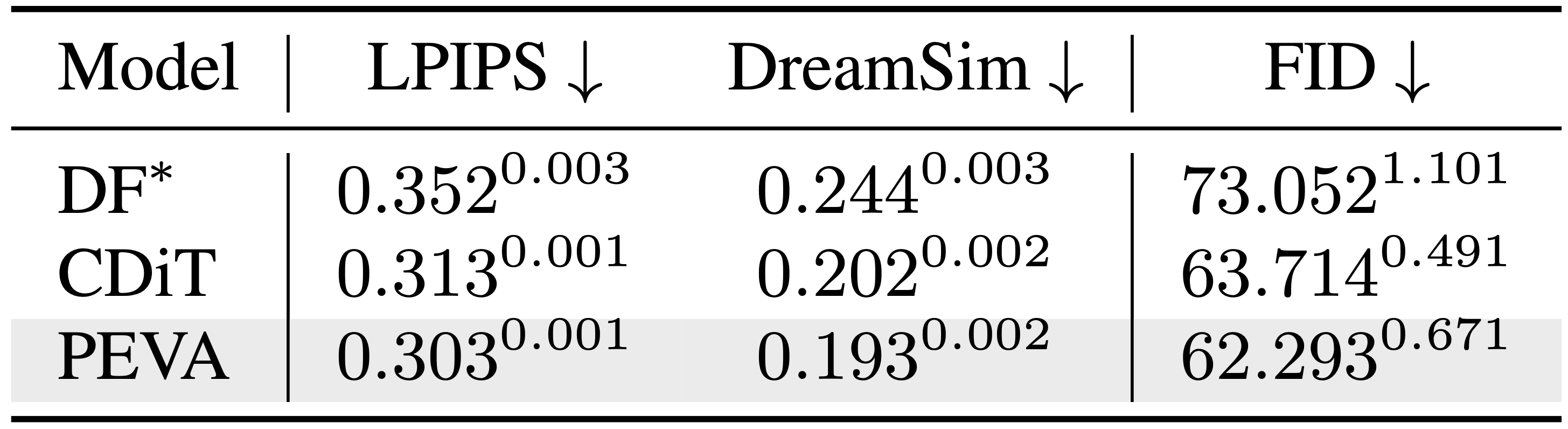

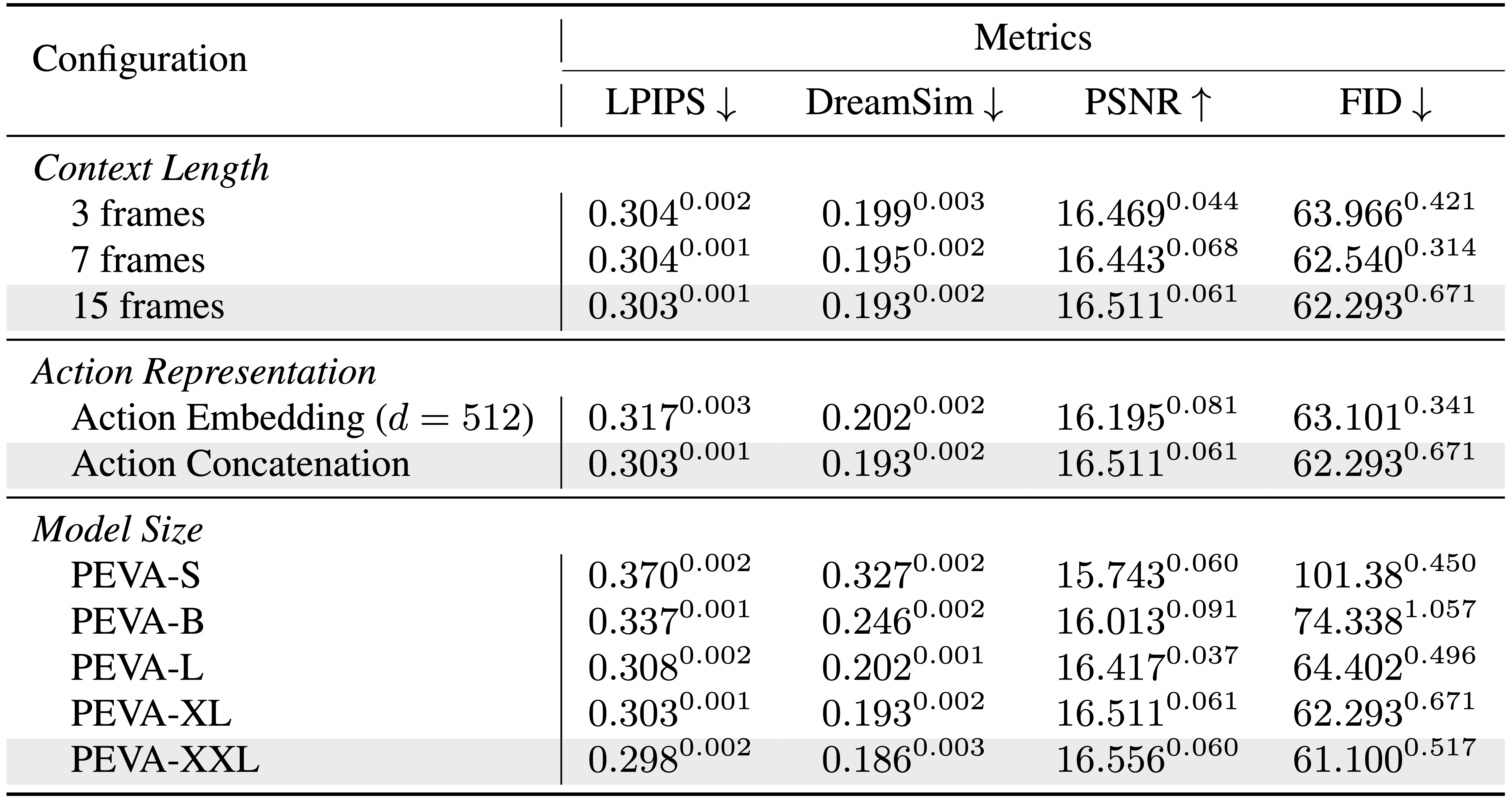

We evaluate PEVA across multiple metrics to demonstrate its effectiveness in generating high-quality egocentric videos from whole-body motion. Our model consistently outperforms the baselines in perceptual quality, stays coherent over long time horizons, and shows strong scaling properties with model size.

Baseline perceptual metrics

Comparison of baseline perceptual metrics across different models.

Atomic action performance

Comparison of models on generating videos of atomic actions.

FID comparison

Comparison of FID across different models and time horizons.

Scaling

PEVA scales well: larger models achieve better performance.

Future directions

Although our model shows promising results in predicting egocentric video from whole-body motion, it is still an early step toward embodied planning. Planning is limited to simulating candidate arm actions and lacks long-horizon planning and full trajectory optimization. Extending PEVA to closed-loop control or interactive environments is a key next step. The model currently lacks explicit conditioning on task intent or semantic goals, and our evaluation uses image similarity as a proxy objective. Future work could combine PEVA with higher-level goal conditioning or integrate object-centric representations.

Acknowledgements

The authors thank Rithwik Nukala for his help annotating the atomic actions. We thank Katerina Fragkiadaki, Philipp Krähenbühl, Bharath Hariharan, Guanya Shi, Shubham Tulsiani, and Deva Ramanan for useful suggestions and feedback for improving the paper; Jianbo Shi for the discussion on control theory; Yilun Du for support with Diffusion Forcing; Brent Yi for his help with work related to human motion; and Alexei Efros for discussions and debates about world models. This research is partially supported by ONR MURI N00014-21-1-2801.

For more details, read the full paper or visit the project website.