The AWS DeepRacer League is the world's first autonomous racing league, open to everyone. Announced at re:Invent 2018, it put machine learning in the hands of all developers through the fun and excitement of developing and racing self-driving remote control cars. Over the past seven years, more than 560,000 developers of all skill levels have competed in the league at thousands of Amazon and customer events around the world. While the final championship concluded at re:Invent 2024, that same event played host to a brand-new AI competition, ushering in a new era of gamified learning in the age of generative AI.

In December 2024, AWS launched the AWS Large Language Model League (AWS LLM League) during re:Invent 2024. This inaugural event marked a key milestone in democratizing machine learning, attracting over 200 enthusiastic participants from diverse backgrounds to take part in a hands-on technical workshop and a competitive foundation model fine-tuning challenge. Building on learnings from DeepRacer, the primary objective of the event was to simplify learning about model customization while fostering a collaborative community around generative AI innovation through a gamified competition format.

AWS LLM League Structure and Outcomes

The AWS LLM League was designed to lower the barrier to generative AI model customization by providing an experience that lets participants fine-tune LLMs regardless of their prior data science experience. Using Amazon SageMaker JumpStart, participants were guided through the process of customizing LLMs to address real business challenges adaptable to their own domains.

As shown in the preceding diagram, the challenge began with a workshop, after which participants embarked on a competitive journey to develop a highly effective fine-tuned LLM. Competitors were tasked with customizing Meta's Llama 3.2 3B base model for a specific domain, applying the tools and techniques they had learned. The submitted models were compared against a larger 90B reference model, with response quality decided using an LLM-as-a-judge approach. Participants scored a win for each question where the LLM judge deemed the fine-tuned model's response more accurate and comprehensive than that of the larger model.
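The per-question scoring rule can be made concrete with a minimal Python sketch that turns a list of judge verdicts into a win rate. It assumes the judge returns exactly one verdict per question. The `Verdict` labels and the `win_rate` helper are hypothetical names, since the actual output format of the judging pipeline isn't described here. The sketch also assumes that ties do not count as wins.

```python
from enum import Enum


class Verdict(Enum):
    # Hypothetical labels a judge might emit for a single question.
    FINE_TUNED_BETTER = "fine_tuned"
    REFERENCE_BETTER = "reference"
    TIE = "tie"


def win_rate(verdicts: list[Verdict]) -> float:
    """Fraction of questions where the judge preferred the fine-tuned model.

    Assumption: ties are not wins, because a participant only scores when the
    fine-tuned response is judged better than the reference response.
    """
    if not verdicts:
        raise ValueError("need at least one verdict")
    wins = sum(1 for v in verdicts if v is Verdict.FINE_TUNED_BETTER)
    return wins / len(verdicts)


# Illustrative verdicts only, not real competition data.
sample = (
    [Verdict.FINE_TUNED_BETTER] * 12
    + [Verdict.REFERENCE_BETTER] * 7
    + [Verdict.TIE]
)
print(f"win rate: {win_rate(sample):.0%}")
```

Whether ties should count as half a win is a design choice. The strict rule above matches the "scored a win only when judged better" wording.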

In the preliminary rounds, participants submitted hundreds of unique fine-tuned models to the competition leaderboard, each striving to outperform the baseline model. These submissions were evaluated on accuracy, coherence, and domain-specific adaptability. After rigorous assessment, the top five finalists were shortlisted, with the best model achieving a win rate of over 55% against the large reference model (as shown in the preceding diagram). Demonstrating that a smaller model can achieve competitive performance highlights significant benefits for compute efficiency at scale. Using a 3B model instead of a 90B model reduces operational costs, enables faster inference, and makes advanced AI more accessible across a variety of industries and use cases.
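A rough calculation shows part of the cost gap between a 3B and a 90B model. The sketch below estimates only the memory needed to hold the weights, and it rests on two assumptions. First, it uses nominal parameter counts (3 billion and 90 billion). Second, it assumes 16-bit weights at 2 bytes per parameter. It ignores the KV cache, activations, and serving overhead, so real deployment footprints are larger.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory to hold model weights only, in decimal gigabytes.

    Defaults to 2 bytes per parameter (16-bit weights such as bf16) and
    ignores KV cache, activations, and runtime overhead.
    """
    return num_params * bytes_per_param / 1e9


SMALL_PARAMS = 3e9   # nominal size of the Llama 3.2 3B base model
LARGE_PARAMS = 90e9  # nominal size of the 90B reference model

small_gb = weight_memory_gb(SMALL_PARAMS)
large_gb = weight_memory_gb(LARGE_PARAMS)
print(f"3B weights:  ~{small_gb:.0f} GB")
print(f"90B weights: ~{large_gb:.0f} GB")
print(f"ratio:       {large_gb / small_gb:.0f}x")
```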

The competition culminated in the grand finale, where the finalists showcased their models in a final round to determine the ultimate winner.

The fine-tuning journey

This journey was carefully designed to guide participants through each critical stage of fine-tuning a large language model, from dataset creation to model evaluation, using a suite of no-code AWS tools. Whether they were newcomers or experienced builders, participants gained hands-on experience in customizing a foundation model through a structured, accessible process. Let's take a closer look at how the challenge unfolded, starting with how participants prepared their datasets.

Stage 1: Prepare the dataset with PartyRock

During the workshop, participants used the Amazon PartyRock playground (as shown in the following image). PartyRock offers access to a variety of top foundation models through Amazon Bedrock at no additional cost. This allowed participants to use a no-code AI-generated app to create the synthetic training data used for fine-tuning.

Participants began by defining the target domain for their fine-tuning task, such as finance, healthcare, or legal compliance. Using PartyRock's intuitive interface, they generated instruction-response pairs that mimic real-world interactions. To improve dataset quality, they used PartyRock's ability to refine responses iteratively, making sure the generated data was contextually relevant and aligned with the competition's objectives.
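Instruction-response pairs like these are commonly stored as JSON Lines, with one JSON object per line, before being uploaded for fine-tuning. The sketch below shows that serialization step. The field names `instruction` and `response` and the `Example` class are assumptions for illustration only. Check the documentation of the specific SageMaker JumpStart model for the schema and any prompt template it actually expects.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Example:
    # Hypothetical field names; align them with the schema your fine-tuning job expects.
    instruction: str
    response: str


def to_jsonl(examples: list[Example]) -> str:
    """Serialize examples as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(asdict(ex), ensure_ascii=False) + "\n" for ex in examples)


examples = [
    Example(
        instruction="What does a debt-to-equity ratio measure?",
        response=(
            "It compares a company's total liabilities with its shareholders' equity, "
            "showing how much of the business is financed by debt."
        ),
    ),
]
print(to_jsonl(examples), end="")
```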

This phase was crucial because the quality of the synthetic data directly affected the model's ability to outperform the larger baseline model. Some participants further enhanced their datasets by adopting external validation methods, such as human-in-the-loop review and reinforcement learning-based filtering.
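The validation methods participants used are not detailed here. As a much simpler, rule-based stand-in, the sketch below drops pairs that have an empty instruction or a very short response. It also removes pairs whose instruction duplicates an earlier one after case and whitespace normalization. This is not reinforcement learning-based filtering. The `filter_pairs` helper and the 20-character threshold are arbitrary assumptions, meant only as a cheap pre-pass before human review.

```python
def filter_pairs(
    pairs: list[tuple[str, str]], min_response_chars: int = 20
) -> list[tuple[str, str]]:
    """Drop empty, too-short, and duplicate instruction-response pairs.

    Duplicates are detected on the instruction after lowercasing and
    collapsing whitespace; the first occurrence is kept.
    """
    seen: set[str] = set()
    kept: list[tuple[str, str]] = []
    for instruction, response in pairs:
        key = " ".join(instruction.lower().split())
        if not key or len(response.strip()) < min_response_chars:
            continue  # empty instruction or response too short to be useful
        if key in seen:
            continue  # same instruction seen before
        seen.add(key)
        kept.append((instruction, response))
    return kept


pairs = [
    ("What is HIPAA?", "HIPAA is a US law that sets standards for protecting patient health information."),
    ("what is  HIPAA?", "The same question again, with different casing and spacing."),
    ("Define APR.", "Too short"),
]
print(filter_pairs(pairs))
```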

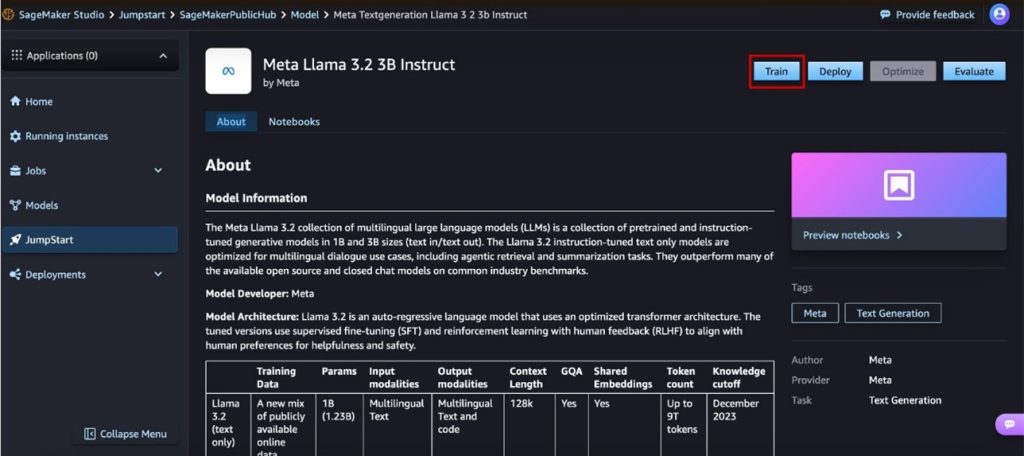

Stage 2: Fine-tuning with SageMaker JumpStart

After the datasets were prepared, participants moved to SageMaker JumpStart, a fully managed machine learning hub that simplifies the fine-tuning process. Using a pre-trained Meta Llama 3.2 3B model as the base, they customized it with their curated datasets and adjusted the following hyperparameters (shown in the following diagram):

- Epochs: Determines how many times the model iterates over the dataset.

- Learning rate: Controls how much the model weights are adjusted with each iteration.

- LoRA parameters: Optimize efficiency with low-rank adaptation (LoRA) techniques.
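The efficiency gain from LoRA comes from how few weights it trains. LoRA freezes a d × k weight matrix W and learns a low-rank update B·A instead, where B is d × r and A is r × k. That update therefore trains r(d + k) values rather than d·k. The arithmetic below compares the two counts. The square 3072 × 3072 projection is an assumed size for illustration, not a statement about any particular layer.

```python
def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Trainable parameters LoRA adds for one d x k weight matrix.

    LoRA learns B (d x r) and A (r x k), so it trains r * (d + k) values
    while the original d x k matrix stays frozen.
    """
    return r * (d + k)


def full_params(d: int, k: int) -> int:
    """Parameters updated when fine-tuning the full d x k matrix."""
    return d * k


d = k = 3072  # assumed square projection size, for illustration only
for r in (8, 16, 64):
    lora = lora_trainable_params(d, k, r)
    full = full_params(d, k)
    print(f"r={r:>2}: {lora:,} trainable vs {full:,} full ({lora / full:.2%})")
```

A larger rank r gives the adapter more capacity, but the trainable parameter count grows linearly with it.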

One of the key benefits of SageMaker JumpStart is that it provides a no-code UI, shown in the following diagram, that lets participants fine-tune models without writing code. This accessibility enabled people with minimal machine learning experience to customize their models effectively.

By using SageMaker's distributed training capabilities, participants were able to run multiple experiments in parallel, optimizing their models for accuracy and response quality. The iterative fine-tuning process allowed them to explore different configurations to maximize performance.
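One simple way to organize parallel experiments is to enumerate a grid of hyperparameter combinations and launch each one as a separate fine-tuning job. The sketch below covers only the enumeration step; submitting jobs is not shown. The key names and values in `SEARCH_SPACE` are illustrative assumptions, not documented SageMaker JumpStart defaults.

```python
from itertools import product

# Hypothetical search space; names and values are illustrative only.
SEARCH_SPACE = {
    "epoch": [1, 2, 3],
    "learning_rate": [1e-4, 5e-5],
    "lora_r": [8, 16],
}


def expand_grid(space: dict[str, list]) -> list[dict]:
    """Return every combination of hyperparameter values as a list of dicts."""
    keys = list(space)
    return [dict(zip(keys, values)) for values in product(*(space[k] for k in keys))]


configs = expand_grid(SEARCH_SPACE)
print(f"{len(configs)} candidate configurations")  # 3 * 2 * 2 = 12
for cfg in configs[:3]:
    print(cfg)
```

A full grid grows multiplicatively with each added hyperparameter. With a limited budget, sampling a subset of configurations is a common alternative.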

Stage 3: Evaluation with SageMaker Clarify

To make sure their models were not only accurate but also unbiased, participants had the option to use Amazon SageMaker Clarify for evaluation, as shown in the following diagram.

This phase included:

- Bias detection: Identifies skewed response patterns that might favor a particular viewpoint.

- Explainability metrics: Help explain why the model made a particular prediction.

- Performance scoring: Compares model output against ground truth labels.
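Comparing model output against ground truth labels can be illustrated with two common question-answering metrics: exact match and token-overlap F1 in the style of SQuAD. This sketch does not reproduce SageMaker Clarify's own metrics. The simple whitespace-and-punctuation normalization used here is an assumption.

```python
from collections import Counter

PUNCT = ".,;:!?\"'()"


def normalize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and strip surrounding punctuation."""
    tokens = (tok.strip(PUNCT).lower() for tok in text.split())
    return [tok for tok in tokens if tok]


def exact_match(prediction: str, reference: str) -> bool:
    """True when the normalized token sequences are identical."""
    return normalize(prediction) == normalize(reference)


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a model output and a ground-truth label."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        return float(pred == ref)  # both empty counts as a perfect match
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


print(exact_match("Paris.", "paris"))
print(token_f1("The capital is Paris.", "Paris"))
```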

Although not required, this SageMaker integration provided an additional layer of assurance for participants who wanted to validate their models further, making sure their outputs were reliable and performant.

Stage 4: Submission and evaluation using LLM-as-a-judge from Amazon Bedrock

After the fine-tuned models were ready, they were submitted to the competition leaderboard for evaluation using the Amazon Bedrock LLM-as-a-judge approach. This automated evaluation system compares the fine-tuned models against the 90B reference model using predefined benchmarks, as shown in the following diagram.

Each response was scored on:

- Relevance: How well the response addresses the question.

- Depth: The level of detail and insight provided.

- Coherence: The logical flow and consistency of the answer.
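Those criteria could be applied to a single question roughly as sketched below: each response receives a score per criterion, and the fine-tuned model wins only if its total is strictly higher. This post does not describe how Amazon Bedrock scores responses. The 1-to-5 scale, the equal weighting, and the strict comparison are all assumptions, and `RubricScores` and `fine_tuned_wins` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RubricScores:
    """Hypothetical per-criterion scores (1 to 5) a judge might assign to one response."""

    relevance: int
    depth: int
    coherence: int

    def __post_init__(self) -> None:
        for name in ("relevance", "depth", "coherence"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    def total(self) -> int:
        return self.relevance + self.depth + self.coherence


def fine_tuned_wins(fine_tuned: RubricScores, reference: RubricScores) -> bool:
    """Count a win only when the fine-tuned response strictly outscores the reference.

    Equal weighting of the three criteria is an assumption for illustration.
    """
    return fine_tuned.total() > reference.total()


print(fine_tuned_wins(RubricScores(5, 4, 4), RubricScores(4, 4, 4)))  # True (13 > 12)
```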

Participants' models scored a point each time their response outperformed the 90B model in a head-to-head comparison. The leaderboard was updated dynamically as new submissions were evaluated, fostering a competitive yet collaborative learning environment.
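A dynamically updated leaderboard can be kept as a small in-memory structure that records each newly evaluated submission and re-sorts on demand. This sketch is hypothetical. The `Leaderboard` class, the participant names, and the policy of keeping each participant's best win rate (rather than their latest) are design assumptions, not details of the actual competition system.

```python
from dataclasses import dataclass, field


@dataclass
class Leaderboard:
    """Minimal in-memory leaderboard keyed by participant (a hypothetical design)."""

    best: dict[str, float] = field(default_factory=dict)

    def submit(self, participant: str, wins: int, questions: int) -> None:
        """Record a newly evaluated submission, keeping each participant's best win rate."""
        if questions <= 0 or not 0 <= wins <= questions:
            raise ValueError("wins must be between 0 and questions, and questions > 0")
        rate = wins / questions
        self.best[participant] = max(rate, self.best.get(participant, 0.0))

    def ranking(self) -> list[tuple[str, float]]:
        """Participants sorted by best win rate, highest first (ties broken by name)."""
        return sorted(self.best.items(), key=lambda item: (-item[1], item[0]))


board = Leaderboard()
board.submit("alice", wins=9, questions=20)
board.submit("bob", wins=11, questions=20)
board.submit("alice", wins=12, questions=20)  # a later, better submission
print(board.ranking())
```

Keeping the best score rewards experimentation, because a weaker resubmission never lowers a participant's standing.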

Grand Finale Showcase

The AWS LLM League grand finale was an electrifying showdown, with the top five finalists, handpicked from hundreds of submissions, competing in a high-stakes live event. Among them was Ray, a standout contender whose fine-tuned model had consistently delivered strong results throughout the competition. Each finalist had to demonstrate not only the technical strengths of their fine-tuned model, but also their ability to adapt and refine responses in real time.

The competition was fierce from the start, with each participant bringing a unique strategy to the table. Ray's ability to refine prompts dynamically made him stand out early, delivering strong responses to a range of domain-specific questions. The energy in the room was palpable as the finalists' AI-generated responses were judged by a hybrid rating system, weighted 40% by an AI judge, 40% by expert panelists from Meta AI and AWS, and 20% by the enthusiastic live audience, against the following rubric:

- Generalization ability: How well the fine-tuned model adapted to previously unseen questions.

- Response quality: Depth, accuracy, and contextual understanding.

- Efficiency: The model's ability to provide comprehensive answers with minimal latency.
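The finale's 40/40/20 split amounts to a weighted average of three component scores: an AI judge, the expert panel, and the live audience. The sketch below computes that weighted average. It assumes all three components are already on a common 0-to-100 scale, which the event description does not specify. The key names in `WEIGHTS` are hypothetical.

```python
# Weights from the 40/40/20 split; the key names are hypothetical labels.
WEIGHTS = {"ai_judge": 0.4, "expert_panel": 0.4, "audience": 0.2}


def hybrid_score(scores: dict[str, float]) -> float:
    """Combine component scores (assumed to share a 0-100 scale) into one weighted score."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weight * scores[name] for name, weight in WEIGHTS.items())


print(hybrid_score({"ai_judge": 80, "expert_panel": 90, "audience": 70}))
```

With these weights, a disagreement between the AI judge and the human experts carries equal influence in each direction. The audience can tip a close contest but cannot override both panels.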

One of the most gripping moments came when contestants encountered the infamous Strawberry Problem, a deceptively simple letter-counting challenge that exposes an inherent weakness in LLMs. Ray's model delivered the correct answer, but the AI judge misclassified it, sparking a debate among the human judges and the audience. This pivotal moment highlighted the importance of human-in-the-loop evaluation and showed how AI and human judgment can complement each other for a fair and accurate assessment.
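Counting letters is trivial in ordinary code, which operates on characters. LLMs, by contrast, typically process subword tokens, so counting letters requires them to recall how each token is spelled. A commonly cited explanation for the Strawberry Problem is that this recall can fail. The sketch contrasts the two views of the word. The token split `["str", "aw", "berry"]` is hypothetical, since real tokenizers differ by model.

```python
def count_letter(word: str, letter: str) -> int:
    """Count occurrences of a single character, case-insensitively."""
    if len(letter) != 1:
        raise ValueError("letter must be a single character")
    return word.lower().count(letter.lower())


# Hypothetical subword split, for illustration only; real tokenizers
# segment words differently depending on the model.
tokens = ["str", "aw", "berry"]

print(count_letter("strawberry", "r"))          # character-level count
print([count_letter(t, "r") for t in tokens])   # per-token counts a model must recall from spelling
```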

As the final round concluded, Ray's model had consistently exceeded expectations, securing him the title of AWS LLM League Champion. The grand finale was not merely a test of AI, but a showcase of innovation, strategy, and the evolving synergy between artificial intelligence and human ingenuity.

Looking ahead and conclusion

The inaugural AWS LLM League competition successfully demonstrated that fine-tuning language models can be gamified to drive innovation and engagement. By providing hands-on experience with cutting-edge AWS AI and machine learning (ML) services, the competition not only demystified the fine-tuning process, but also inspired a new wave of AI enthusiasts to experiment and innovate in the field.

As the AWS LLM League moves forward, future iterations will build on these learnings, incorporating more sophisticated challenges, larger datasets, and opportunities for deeper model customization. Whether you're a seasoned AI practitioner or a newcomer to machine learning, the AWS LLM League offers an exciting and accessible way to develop real-world AI expertise.

Stay tuned for upcoming AWS LLM League events and get ready to put your fine-tuning skills to the test!

About the authors

Vincent Oh is a Senior Specialist Solutions Architect for AI & Innovation at AWS. He works with public sector customers across ASEAN, owning technical engagements and helping them design scalable cloud solutions across a variety of innovation projects. He created the LLM League while helping customers harness the power of AI in their use cases through gamified learning. He is also an Adjunct Professor at Singapore Management University (SMU), teaching computer science modules under the School of Computing and Information Systems (SCIS). Prior to joining Amazon, he worked as a Senior Principal Digital Architect at Accenture and as Cloud Engineering Practice Lead at UST.

Natasya K. Idries is the Product Marketing Manager for the AWS AI/ML Gamified Learning Programs. She is passionate about democratizing AI/ML skills through engaging, hands-on educational initiatives that bridge the gap between advanced technology and practical business implementation. Her expertise in building learning communities and driving digital innovation continues to shape her approach to creating impactful AI education programs. Outside of work, Natasya enjoys traveling, cooking Southeast Asian cuisine, and exploring nature trails.