The following essay is reprinted with permission from The Conversation, an online publication covering the latest research.

We’re increasingly aware of how misinformation can influence elections. About 73% of Americans report seeing misleading election news, and about half struggle to discern what is true and what is false.

When it comes to misinformation, the phrase “going viral” seems to be more than just a catchphrase. Scientists have found a close parallel between the spread of misinformation and the spread of viruses. In fact, how misinformation spreads can be explained effectively using mathematical models designed to simulate the spread of pathogens.

Concerns about misinformation are widespread; a recent UN survey suggests that 85% of people worldwide are worried about it.

These concerns are well founded. Foreign disinformation has grown in sophistication and scope since the 2016 US election. The 2024 election cycle has seen dangerous conspiracy theories about “weather manipulation” undermining proper management of hurricanes, fake news about immigrants eating pets inciting violence against the Haitian community, and misleading election conspiracy theories amplified by the world’s richest man, Elon Musk.

Recent studies have employed mathematical models drawn from epidemiology (the study of how and why diseases occur in populations). Although these models were originally developed to study the spread of viruses, they can be used effectively to study the spread of misinformation across social networks.

One class of epidemiological models that works for misinformation is the susceptible-infectious-recovered (SIR) model. These models simulate the dynamics between susceptible (S), infected (I), and recovered or resistant (R) individuals.

These models are built from a set of differential equations (which help mathematicians understand rates of change) and apply readily to the spread of misinformation. On social media, for example, false information is passed from person to person; some people become infected, while others remain immune. Still others act as asymptomatic vectors (carriers of disease), spreading misinformation without knowing it or suffering any adverse consequences.
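In its standard textbook form (a sketch; the authors’ exact equations may differ), the SIR model tracks how the numbers of susceptible, infected, and recovered people change over time. Here N = S + I + R is the population size, β is the transmission rate, and γ is the rate at which infected people recover (for misinformation, for example, by accepting a correction):

$$
\begin{aligned}
\frac{dS}{dt} &= -\beta\,\frac{S I}{N},\\
\frac{dI}{dt} &= \beta\,\frac{S I}{N} - \gamma I,\\
\frac{dR}{dt} &= \gamma I.
\end{aligned}
$$

The three rates sum to zero, so N stays constant: people only move from one compartment to the next.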

These models are very useful because they allow us to predict and simulate population dynamics and to derive measures such as the basic reproduction number (R0), the average number of cases generated by a single “infected” individual.
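The basic SIR model can be simulated in a few lines. The sketch below is a minimal illustration, not the authors’ code: it assumes homogeneous mixing, works with population fractions (so N = 1), integrates with a simple forward-Euler step, and uses the textbook result that R0 = β/γ for this model. The rates `beta` and `gamma` in the example are hypothetical values chosen for illustration.

```python
def basic_reproduction_number(beta: float, gamma: float) -> float:
    """In the basic SIR model, R0 = beta / gamma: the transmission rate
    times the average time (1 / gamma) a person stays infectious."""
    return beta / gamma


def simulate_sir(beta: float, gamma: float, i_init: float, days: int,
                 steps_per_day: int = 10) -> list[tuple[float, float, float]]:
    """Integrate the SIR equations with forward Euler.

    S, I and R are population fractions that always sum to 1.
    Returns one (S, I, R) tuple per day, starting with day 0.
    """
    s, i, r = 1.0 - i_init, i_init, 0.0
    dt = 1.0 / steps_per_day
    history = [(s, i, r)]
    for _ in range(days):
        for _ in range(steps_per_day):
            new_infections = beta * s * i * dt  # flow S -> I
            new_recoveries = gamma * i * dt     # flow I -> R
            s -= new_infections
            i += new_infections - new_recoveries
            r += new_recoveries
        history.append((s, i, r))
    return history


# Hypothetical parameters: infected users pass the falsehood on at a rate of
# 0.5 per day and stop spreading it after 5 days on average (gamma = 0.2/day).
beta, gamma = 0.5, 0.2
print(f"R0 = {basic_reproduction_number(beta, gamma):.1f}")
history = simulate_sir(beta, gamma, i_init=0.001, days=160)
peak_day = max(range(len(history)), key=lambda d: history[d][1])
print(f"peak on day {peak_day}, ever infected: {1 - history[-1][0]:.0%}")
```

Rerunning with `beta` below `gamma` (R0 below 1) shows the outbreak fading from the start instead of taking off.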

As a result, there has been growing interest in applying such epidemiological approaches to our information ecosystem. Most social media platforms have an estimated R0 greater than 1, indicating that they have the potential for epidemic-like spread of misinformation.
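Why the threshold sits at 1 follows from the standard SIR equation for the infected compartment (a textbook sketch assuming homogeneous mixing, with β the transmission rate, γ the recovery rate, N the population size, and R0 = β/γ). Early on, almost everyone is still susceptible, so S ≈ N and

$$
\frac{dI}{dt} = \left(\beta\,\frac{S}{N} - \gamma\right) I \;\approx\; (\beta - \gamma)\, I = \gamma\,(R_0 - 1)\, I .
$$

The number of infected people therefore grows exponentially when R0 > 1 and shrinks when R0 < 1.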

Looking for solutions

Mathematical modeling typically involves either what is called phenomenological research (in which researchers describe observed patterns) or mechanistic work (which involves making predictions based on known relationships). These models are especially useful because they let us explore how possible interventions might help reduce the spread of misinformation on social networks.

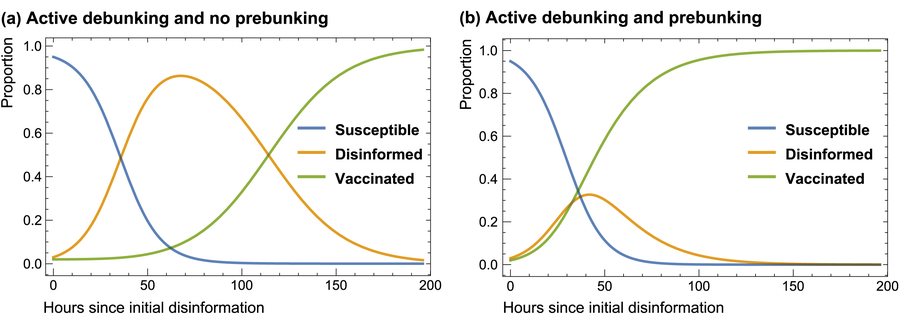

We can illustrate this basic process with a simple model, shown in the graph below, which lets us explore how a system might evolve under a variety of hypothetical assumptions that can then be tested.

Prominent social media figures with large followings can become “superspreaders” of election disinformation, broadcasting falsehoods to potentially hundreds of millions of people. This reflects the current situation, in which election officials report being outmatched in their attempts to fact-check disinformation.

In our model, if we conservatively assume that people have only a 10% chance of infection after exposure, debunking misinformation has only a small effect, in line with previous studies. Under the 10% infection-probability scenario, the population infected by election misinformation grows rapidly (orange line, left panel).

A “compartment” model of disinformation spread within a cohort of users over one week. Disinformation has a 10% chance of infecting a susceptible, unvaccinated individual upon exposure. Debunking is assumed to be 5% effective. When prebunking is introduced and is about twice as effective as debunking, the dynamics of disinformation transmission change markedly.

Sander van der Linden/Robert David Grimes
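A compartment model of the kind described in the figure caption can be sketched as follows. This is not the authors’ model; it is a hypothetical S-I-R-P variant built on several labeled assumptions. The 10% infection chance per exposure comes from the caption, but the 5 exposures per day are invented, “5% effective” debunking is interpreted as 5% of infected users recovering each day, and prebunking “about twice as effective” is interpreted as 10% of still-susceptible users being protected each day before exposure.

```python
def simulate_week(p_infect: float = 0.10, contacts_per_day: float = 5.0,
                  debunk_rate: float = 0.05, prebunk_rate: float = 0.0,
                  i_init: float = 0.01, days: int = 7,
                  steps_per_day: int = 10) -> tuple[float, float, float, float]:
    """Toy S-I-R-P model of misinformation spread (forward-Euler integration).

    S: susceptible users            I: 'infected' users spreading the falsehood
    R: users recovered by debunking  P: users protected by prebunking
    All compartments are population fractions. Returns the final (S, I, R, P).
    All default parameter values are hypothetical except p_infect.
    """
    beta = contacts_per_day * p_infect  # infections per infected user per day
    s, i, r, p = 1.0 - i_init, i_init, 0.0, 0.0
    dt = 1.0 / steps_per_day
    for _ in range(days * steps_per_day):
        new_infected = beta * s * i * dt        # S -> I after exposure
        new_debunked = debunk_rate * i * dt     # I -> R after a correction
        new_prebunked = prebunk_rate * s * dt   # S -> P before any exposure
        s -= new_infected + new_prebunked
        i += new_infected - new_debunked
        r += new_debunked
        p += new_prebunked
    return s, i, r, p


debunk_only = simulate_week()
with_prebunk = simulate_week(prebunk_rate=0.10)  # twice the debunking rate
for label, (s, i, r, p) in [("debunking only", debunk_only),
                            ("with prebunking", with_prebunk)]:
    print(f"{label:>16}: infected {i:.1%}, ever infected {i + r:.1%}, "
          f"prebunked {p:.1%}")
```

Because every rate here is an assumption, a sketch like this is best read as a comparison between scenarios (with and without prebunking) rather than as a forecast of real numbers.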

Psychological “vaccination”

The viral-spread analogy for misinformation is apt precisely because it allows scientists to simulate ways to counter its spread. These interventions include an approach called “psychological inoculation”, also known as prebunking.

Here, researchers preemptively introduce, and then refute, a falsehood so that people develop immunity to misinformation in the future. This is similar to vaccination, in which people are given a (weakened) dose of a virus to prime their immune systems for future exposure.

For example, a recent study used AI chatbots to create prebunks against common election fraud myths. This involved warning people in advance that political actors might manipulate their opinions with sensational stories, such as the false claim that massive overnight vote dumps are flipping the election, along with key tips on how to spot such misleading rumors. These “inoculations” can be incorporated into population models of the spread of misinformation.

Our graph shows that if prebunking is not adopted, it takes much longer for people to build up immunity to misinformation (left panel, orange line). The right panel shows how the number of people who are disinformed can be contained if prebunking is rolled out at scale (orange line).
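A standard result from vaccination models gives a rough sense of what “at scale” could mean. Assume, purely for illustration, that prebunking gives complete, lasting protection to a fraction v of the population before exposure and that everyone mixes homogeneously (real prebunks are only partly effective and can wear off). Each infected person can then reach only the unprotected share of the population, so the effective reproduction number becomes

$$
R_{\text{eff}} = R_0\,(1 - v), \qquad R_{\text{eff}} < 1 \iff v > 1 - \frac{1}{R_0}.
$$

For a hypothetical platform with R0 = 2, for instance, prebunking alone would need to protect more than half of users to stop epidemic-like spread.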

The point of these models is not to make the problem seem scary or to suggest that people are gullible disease vectors. But there is clear evidence that some fake news stories spread like a simple contagion, infecting users immediately.

Meanwhile, other stories behave more like complex contagions, requiring people to come into contact with misleading sources repeatedly before becoming “infected.”
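The difference between the two kinds of contagion can be made concrete with a small sketch. It uses the simplest textbook rules, which are illustrative rather than taken from the studies cited: under simple contagion, each exposure independently “infects” with a fixed probability; under complex contagion, a person adopts a story only after a threshold number of exposures. The 10% per-exposure probability and the threshold of 3 are hypothetical values.

```python
def p_infected_simple(p_per_exposure: float, exposures: int) -> float:
    """Simple contagion: each exposure independently infects with probability p.

    The chance of escaping every exposure is (1 - p) ** exposures.
    """
    return 1.0 - (1.0 - p_per_exposure) ** exposures


def infected_complex(exposures: int, threshold: int) -> bool:
    """Complex contagion (deterministic threshold rule): a person is
    'infected' only after at least `threshold` exposures."""
    return exposures >= threshold


# Hypothetical values: 10% chance per exposure, threshold of 3 exposures.
for k in range(6):
    print(f"{k} exposures: simple {p_infected_simple(0.10, k):.0%}, "
          f"complex (threshold 3) {infected_complex(k, 3)}")
```

Under the simple rule even a single exposure carries some risk, whereas under the threshold rule one viral post does nothing until people encounter the claim repeatedly.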

The fact that individuals differ in their susceptibility to misinformation does not undermine the usefulness of approaches drawn from epidemiology. For example, models can be adjusted depending on how easy or difficult it is for misinformation to “infect” different subpopulations.
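One common way to adjust an SIR-type model for differing susceptibility is to split the population into groups, each with its own relative susceptibility. The sketch below assumes homogeneous mixing between groups, equal infectiousness, and the basic-SIR relation R0 = β/γ. Under those assumptions, every infected person produces the same pattern of new cases, so R0 reduces to β/γ times the population-weighted average susceptibility. The function name, group sizes, and susceptibility values are hypothetical.

```python
def group_r0(beta: float, gamma: float,
             groups: list[tuple[float, float]]) -> float:
    """R0 for a population split into groups with different susceptibility.

    groups: (population_fraction, relative_susceptibility) pairs whose
    fractions sum to 1. With homogeneous mixing and equal infectiousness,
    one infected person causes beta / gamma * fraction * susceptibility new
    cases in each group, so R0 is the sum of those contributions.
    """
    total_fraction = sum(f for f, _ in groups)
    if abs(total_fraction - 1.0) > 1e-9:
        raise ValueError("population fractions must sum to 1")
    return beta / gamma * sum(f * sigma for f, sigma in groups)


# Hypothetical: 30% of users are twice as susceptible as average,
# the other 70% only half as susceptible.
print(group_r0(0.5, 0.2, [(0.3, 2.0), (0.7, 0.5)]))
```

With a single group of average susceptibility (`[(1.0, 1.0)]`), the function returns plain β/γ, matching the basic SIR model.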

Although some people may find it psychologically uncomfortable to think of people this way, most misinformation, as with viruses, is spread by a small number of influential superspreaders.

Taking an epidemiological approach to studying fake news allows us to predict its spread and to model the effectiveness of interventions such as prebunking.

Some recent research has validated the viral approach using social media dynamics from the 2020 US presidential election. This study found that a combination of interventions can be effective in reducing the spread of misinformation.

Models are never perfect. But if we want to stop the spread of misinformation, we need to understand it in order to counter its societal harms effectively.

This article was originally published on The Conversation. Read the original article.