You’ve gotten doubtless already had the chance to work together with generative synthetic intelligence (AI) instruments (comparable to digital assistants and chatbot purposes) and seen that you just don’t all the time get the reply you’re searching for, and that reaching it is probably not simple. Massive language fashions (LLMs), the fashions behind the generative AI revolution, obtain directions on what to do, methods to do it, and a set of expectations for his or her response by the use of a pure language textual content known as a immediate. The way in which prompts are crafted vastly impacts the outcomes generated by the LLM. Poorly written prompts will typically result in hallucinations, sub-optimal outcomes, and total poor high quality of the generated response, whereas good-quality prompts will steer the output of the LLM to the output we would like.

On this submit, we present methods to construct environment friendly prompts in your purposes. We use the simplicity of Amazon Bedrock playgrounds and the state-of-the-art Anthropic’s Claude 3 household of fashions to exhibit how one can construct environment friendly prompts by making use of easy strategies.

Immediate engineering

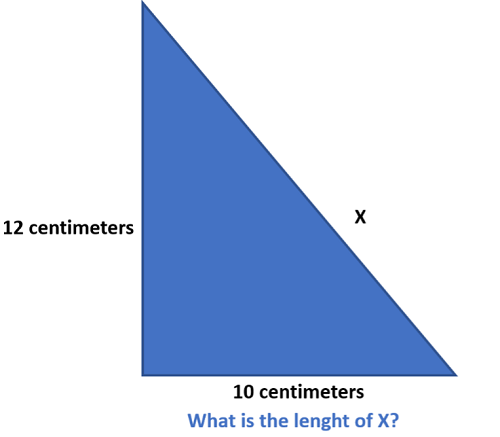

Immediate engineering is the method of fastidiously designing the prompts or directions given to generative AI fashions to supply the specified outputs. Prompts act as guides that present context and set expectations for the AI. With well-engineered prompts, builders can benefit from LLMs to generate high-quality, related outputs. As an illustration, we use the next immediate to generate a picture with the Amazon Titan Picture Technology mannequin:

An illustration of an individual speaking to a robotic. The individual seems to be visibly confused as a result of he can’t instruct the robotic to do what he desires.

We get the next generated picture.

Let’s take a look at one other instance. All of the examples on this submit are run utilizing Claude 3 Haiku in an Amazon Bedrock playground. Though the prompts could be run utilizing any LLM, we focus on finest practices for the Claude 3 household of fashions. With a purpose to get entry to the Claude 3 Haiku LLM on Amazon Bedrock, discuss with Mannequin entry.

We use the next immediate:

Claude 3 Haiku’s response:

The request immediate is definitely very ambiguous. 10 + 10 could have a number of legitimate solutions; on this case, Claude 3 Haiku, utilizing its inner information, decided that 10 + 10 is 20. Let’s change the immediate to get a unique reply for a similar query:

Claude 3 Haiku’s response:

The response modified accordingly by specifying that 10 + 10 is an addition. Moreover, though we didn’t request it, the mannequin additionally supplied the results of the operation. Let’s see how, by way of a quite simple prompting method, we are able to acquire an much more succinct outcome:

Claude 3 Haiku response:

Nicely-designed prompts can enhance consumer expertise by making AI responses extra coherent, correct, and helpful, thereby making generative AI purposes extra environment friendly and efficient.

The Claude 3 mannequin household

The Claude 3 household is a set of LLMs developed by Anthropic. These fashions are constructed upon the newest developments in pure language processing (NLP) and machine studying (ML), permitting them to grasp and generate human-like textual content with exceptional fluency and coherence. The household is comprised of three fashions: Haiku, Sonnet, and Opus.

Haiku is the quickest and most cost-effective mannequin available on the market. It’s a quick, compact mannequin for near-instant responsiveness. For the overwhelming majority of workloads, Sonnet is 2 instances sooner than Claude 2 and Claude 2.1, with greater ranges of intelligence, and it strikes the perfect stability between intelligence and velocity—qualities particularly essential for enterprise use instances. Opus is probably the most superior, succesful, state-of-the-art basis mannequin (FM) with deep reasoning, superior math, and coding skills, with top-level efficiency on extremely complicated duties.

Among the many key options of the mannequin’s household are:

- Imaginative and prescient capabilities – Claude 3 fashions have been educated to not solely perceive textual content but in addition photographs, charts, diagrams, and extra.

- Greatest-in-class benchmarks – Claude 3 exceeds current fashions on standardized evaluations comparable to math issues, programming workout routines, and scientific reasoning. Particularly, Opus outperforms its friends on many of the frequent analysis benchmarks for AI methods, together with undergraduate stage skilled information (MMLU), graduate stage skilled reasoning (GPQA), primary arithmetic (GSM8K), and extra. It displays excessive ranges of comprehension and fluency on complicated duties, main the frontier of basic intelligence.

- Lowered hallucination – Claude 3 fashions mitigate hallucination by way of constitutional AI strategies that present transparency into the mannequin’s reasoning, in addition to improved accuracy. Claude 3 Opus exhibits an estimated twofold achieve in accuracy over Claude 2.1 on tough open-ended questions, decreasing the chance of defective responses.

- Lengthy context window – Claude 3 fashions excel at real-world retrieval duties with a 200,000-token context window, the equal of 500 pages of data.

To study extra in regards to the Claude 3 household, see Unlocking Innovation: AWS and Anthropic push the boundaries of generative AI collectively, Anthropic’s Claude 3 Sonnet basis mannequin is now accessible in Amazon Bedrock, and Anthropic’s Claude 3 Haiku mannequin is now accessible on Amazon Bedrock.

The anatomy of a immediate

As prompts turn out to be extra complicated, it’s essential to establish its numerous components. On this part, we current the elements that make up a immediate and the beneficial order wherein they need to seem:

- Process context: Assign the LLM a job or persona and broadly outline the duty it’s anticipated to carry out.

- Tone context: Set a tone for the dialog on this part.

- Background knowledge (paperwork and pictures): Often known as context. Use this part to offer all the mandatory info for the LLM to finish its activity.

- Detailed activity description and guidelines: Present detailed guidelines in regards to the LLM’s interplay with its customers.

- Examples: Present examples of the duty decision for the LLM to study from them.

- Dialog historical past: Present any previous interactions between the consumer and the LLM, if any.

- Rapid activity description or request: Describe the precise activity to satisfy throughout the LLMs assigned roles and duties.

- Assume step-by-step: If vital, ask the LLM to take a while to suppose or suppose step-by-step.

- Output formatting: Present any particulars in regards to the format of the output.

- Prefilled response: If vital, prefill the LLMs response to make it extra succinct.

The next is an instance of a immediate that comes with all of the aforementioned components:

Greatest prompting practices with Claude 3

Within the following sections, we dive deep into Claude 3 finest practices for immediate engineering.

Textual content-only prompts

For prompts that deal solely with textual content, observe this set of finest practices to attain higher outcomes:

- Mark components of the immediate with XLM tags – Claude has been fine-tuned to pay particular consideration to XML tags. You’ll be able to benefit from this attribute to obviously separate sections of the immediate (directions, context, examples, and so forth). You should use any names you favor for these tags; the primary thought is to delineate in a transparent means the content material of your immediate. Be sure you embody <> and </> for the tags.

- All the time present good activity descriptions – Claude responds properly to clear, direct, and detailed directions. Whenever you give an instruction that may be interpreted in several methods, just remember to clarify to Claude what precisely you imply.

- Assist Claude study by instance – One strategy to improve Claude’s efficiency is by offering examples. Examples function demonstrations that enable Claude to study patterns and generalize acceptable behaviors, very similar to how people study by statement and imitation. Nicely-crafted examples considerably enhance accuracy by clarifying precisely what is predicted, improve consistency by offering a template to observe, and increase efficiency on complicated or nuanced duties. To maximise effectiveness, examples ought to be related, numerous, clear, and supplied in adequate amount (begin with three to 5 examples and experiment primarily based in your use case).

- Preserve the responses aligned to your required format – To get Claude to supply output within the format you need, give clear instructions, telling it precisely what format to make use of (like JSON, XML, or markdown).

- Prefill Claude’s response – Claude tends to be chatty in its solutions, and would possibly add some further sentences firstly of the reply regardless of being instructed within the immediate to reply with a selected format. To enhance this habits, you need to use the assistant message to offer the start of the output.

- All the time outline a persona to set the tone of the response – The responses given by Claude can range vastly relying on which persona is supplied as context for the mannequin. Setting a persona helps Claude set the correct tone and vocabulary that might be used to offer a response to the consumer. The persona guides how the mannequin will talk and reply, making the dialog extra real looking and tuned to a specific character. That is particularly essential when utilizing Claude because the AI behind a chat interface.

- Give Claude time to suppose – As beneficial by Anthropic’s analysis staff, giving Claude time to suppose by way of its response earlier than producing the ultimate reply results in higher efficiency. The best strategy to encourage that is to incorporate the phrase “Assume step-by-step” in your immediate. You too can seize Claude’s step-by-step thought course of by instructing it to “please give it some thought step-by-step inside <pondering></pondering> tags.”

- Break a fancy activity into subtasks – When coping with complicated duties, it’s a good suggestion to interrupt them down and use immediate chaining with LLMs like Claude. Immediate chaining entails utilizing the output from one immediate because the enter for the subsequent, guiding Claude by way of a sequence of smaller, extra manageable duties. This improves accuracy and consistency for every step, makes troubleshooting easier, and makes certain Claude can absolutely concentrate on one subtask at a time. To implement immediate chaining, establish the distinct steps or subtasks in your complicated course of, create separate prompts for every, and feed the output of 1 immediate into the subsequent.

- Benefit from the lengthy context window – Working with lengthy paperwork and huge quantities of textual content could be difficult, however Claude’s prolonged context window of over 200,000 tokens permits it to deal with complicated duties that require processing intensive info. This characteristic is especially helpful with Claude Haiku as a result of it could possibly assist present high-quality responses with a cheap mannequin. To take full benefit of this functionality, it’s essential to construction your prompts successfully.

- Permit Claude to say “I don’t know” – By explicitly giving Claude permission to acknowledge when it’s not sure or lacks adequate info, it’s much less more likely to generate inaccurate responses. This may be achieved by including a preface to the immediate, comparable to, “In case you are not sure or don’t have sufficient info to offer a assured reply, merely say ‘I don’t know’ or ‘I’m unsure.’”

Prompts with photographs

The Claude 3 household gives imaginative and prescient capabilities that may course of photographs and return textual content outputs. It’s able to analyzing and understanding charts, graphs, technical diagrams, experiences, and different visible property. The next are finest practices when working with photographs with Claude 3:

- Picture placement and measurement issues – For optimum efficiency, when working with Claude 3’s imaginative and prescient capabilities, the perfect placement for photographs is on the very begin of the immediate. Anthropic additionally recommends resizing a picture earlier than importing and putting a stability between picture readability and picture measurement. For extra info, discuss with Anthropic’s guidance on image sizing.

- Apply conventional strategies – When working with photographs, you possibly can apply the identical strategies used for text-only prompts (comparable to giving Claude time to suppose or defining a job) to assist Claude enhance its responses.

Think about the next instance, which is an extraction of the image “a tremendous gathering” (Writer: Ian Kirck, https://en.m.wikipedia.org/wiki/File:A_fine_gathering_(8591897243).jpg).

We ask Claude 3 to rely what number of birds are within the picture:

Claude 3 Haiku’s response:

On this instance, we requested Claude to take a while to suppose and put its

reasoning in an XML tag and the ultimate reply in one other. Additionally, we gave Claude time to suppose and clear directions to concentrate to particulars, which helped Claude to offer the proper response.

- Benefit from visible prompts – The power to make use of photographs additionally lets you add prompts immediately throughout the picture itself as an alternative of offering a separate immediate.

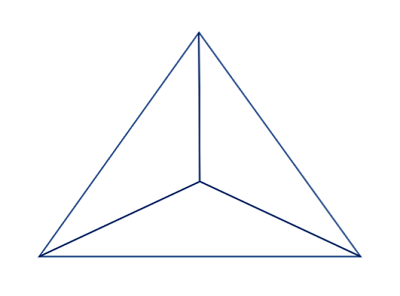

Let’s see an instance with the next picture:

On this case, the picture itself is the immediate:

Claude 3 Haiku’s response:

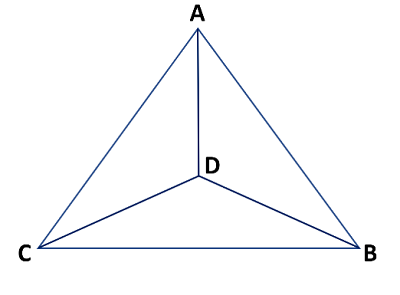

- Examples are additionally legitimate utilizing photographs – You’ll be able to present a number of photographs in the identical immediate and benefit from Claude’s imaginative and prescient capabilities to offer examples and extra priceless info utilizing the photographs. Be sure you use picture tags to obviously establish the completely different photographs. As a result of this query is a reasoning and mathematical query, set the temperature to 0 for a extra deterministic response.

Let’s take a look at the next instance:

Immediate:

Claude 3 Haiku’s response:

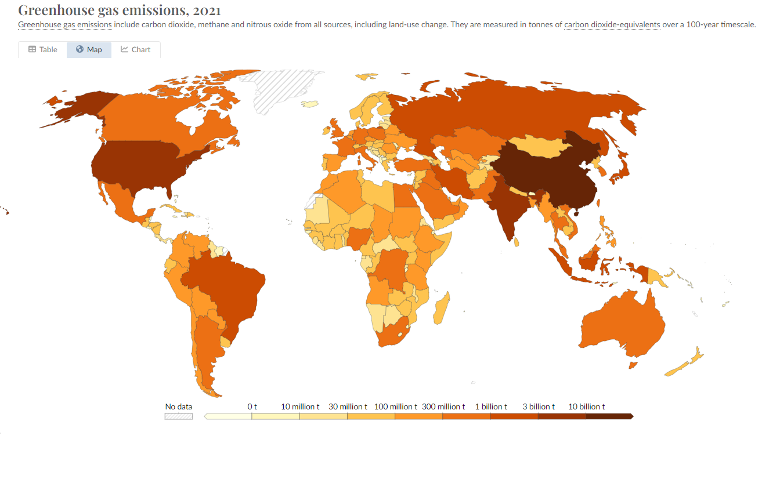

- Use detailed descriptions when working with difficult charts or graphics – Working with charts or graphics is a comparatively simple activity when utilizing Claude’s fashions. We merely benefit from Claude’s imaginative and prescient capabilities, cross the charts or graphics in picture format, after which ask questions in regards to the supplied photographs. Nonetheless, when working with difficult charts which have plenty of colours (which look very related) or plenty of knowledge factors, it’s a very good apply to assist Claude higher perceive the data with the next strategies:

- Ask Claude to explain intimately every knowledge level that it sees within the picture.

- Ask Claude to first establish the HEX codes of the colours within the graphics to obviously see the distinction in colours.

Let’s see an instance. We cross to Claude the next map chart in picture format (supply: https://ourworldindata.org/co2-and-greenhouse-gas-emissions), then we ask about Japan’s greenhouse fuel emissions.

Immediate:

Claude 3 Haiku’s response: