AI gets its information from three distinct layers: training data, retrieval systems, and live tool access such as APIs and MCPs.

Each layer has its own strengths and weaknesses. If you’ve ever wondered why an AI confidently said something wrong, why one tool seems to know last week’s news while another doesn’t, or why a competitor’s product gets mentioned a lot but yours doesn’t, the answer almost always goes back to which layer answered your question.

This article gives a plain-language explanation of where AI’s knowledge actually comes from, and why that matters for how much you should trust a given response.

Before an AI model can answer a single question, it goes through a phase called training.

During training, the model ingests billions of text, image, and code samples (public web crawls, books, Wikipedia, code repositories, and licensed databases) and learns to predict patterns across all of it. By the end of training, the model effectively holds a statistical snapshot of human knowledge up to that point.

Visualization of common data sources used to train large language models.

This is how AI models develop their “understanding” of the world. How often different entities occur in the training data (such as brand and product names like “Patagonia” and “Nano Puff Hoodie”) and the words they commonly co-occur with (such as “eco-friendly” and “high quality”) shape the model’s understanding of the brand.

Gianluca Fiorelli explains:

The LLM will study the relationship between brands and concepts such as ‘gym’ and ‘noise canceling’. These semantic associations directly affect whether and how you’re mentioned.
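To make the idea of co-occurrence concrete, here is a minimal sketch in Python. It is not how a language model is actually trained; it only counts how often a brand name appears in the same document as a descriptor, which is the kind of signal the paragraph above describes. The three-sentence corpus and the function name `cooccurrence_counts` are made up for illustration.

```python
from collections import Counter

# Hypothetical mini-corpus standing in for training text (not real data).
corpus = [
    "The Patagonia Nano Puff Hoodie is eco-friendly and high quality.",
    "Reviewers call Patagonia an eco-friendly brand.",
    "This budget jacket is cheap but not high quality.",
]

def cooccurrence_counts(docs, entity, descriptors):
    """Count how many documents mention `entity` together with each descriptor."""
    counts = Counter()
    for doc in docs:
        text = doc.lower()
        if entity.lower() not in text:
            continue  # skip documents that never mention the brand
        for term in descriptors:
            if term.lower() in text:
                counts[term] += 1
    return counts

counts = cooccurrence_counts(corpus, "Patagonia", ["eco-friendly", "high quality"])
print(counts)
```

In this toy corpus the brand appears alongside “eco-friendly” more often than alongside “high quality”, so a model trained on it would lean toward the first association.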

It’s hard to imagine the scale involved in training. The training data for major models is measured in trillions of tokens (rough chunks of words). The costs give a sense of it: estimated training costs are $78 million for GPT-4 and roughly $191 million for Google’s Gemini Ultra.

The global market for AI training datasets is valued at $3.2 billion in 2025 and is projected to reach $16.3 billion by 2033, an annual growth rate of 22.6%, reflecting how central data has become to the entire enterprise.
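As a quick sanity check on those market figures, the growth rate can be recomputed with the standard compound annual growth rate formula. This sketch assumes eight annual compounding periods between 2025 and 2033, which the source does not state explicitly.

```python
start_value = 3.2      # market size in 2025, billions of USD (from the text)
end_value = 16.3       # projected market size in 2033, billions of USD (from the text)
years = 2033 - 2025    # assumption: eight annual compounding periods

# Compound annual growth rate: (end / start) ** (1 / years) - 1
cagr = (end_value / start_value) ** (1 / years) - 1
print(f"Implied annual growth rate: {cagr:.1%}")
```

Under that assumption the implied rate comes out at roughly 22.6%, consistent with the quoted figure.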

There is an important point to understand here. Once training is finished, the model’s knowledge is frozen. It cannot learn from new events. It has no idea what happened yesterday, last month, or at any time since its training data cutoff date.

Some providers regularly update their models with new data, but it’s still a discrete process, more akin to issuing software updates than continuously reading the news.

Another major failure mode is hallucination. When models don’t have reliable training data available, they fill in the gaps with things that sound plausible: fabricated quotes, fabricated statistics, and confident non-answers (like Google’s AI Overview citing April Fool’s satire articles as factual sources).

The model had no way of knowing that the article was a joke. It simply looked authoritative enough to fit the pattern.

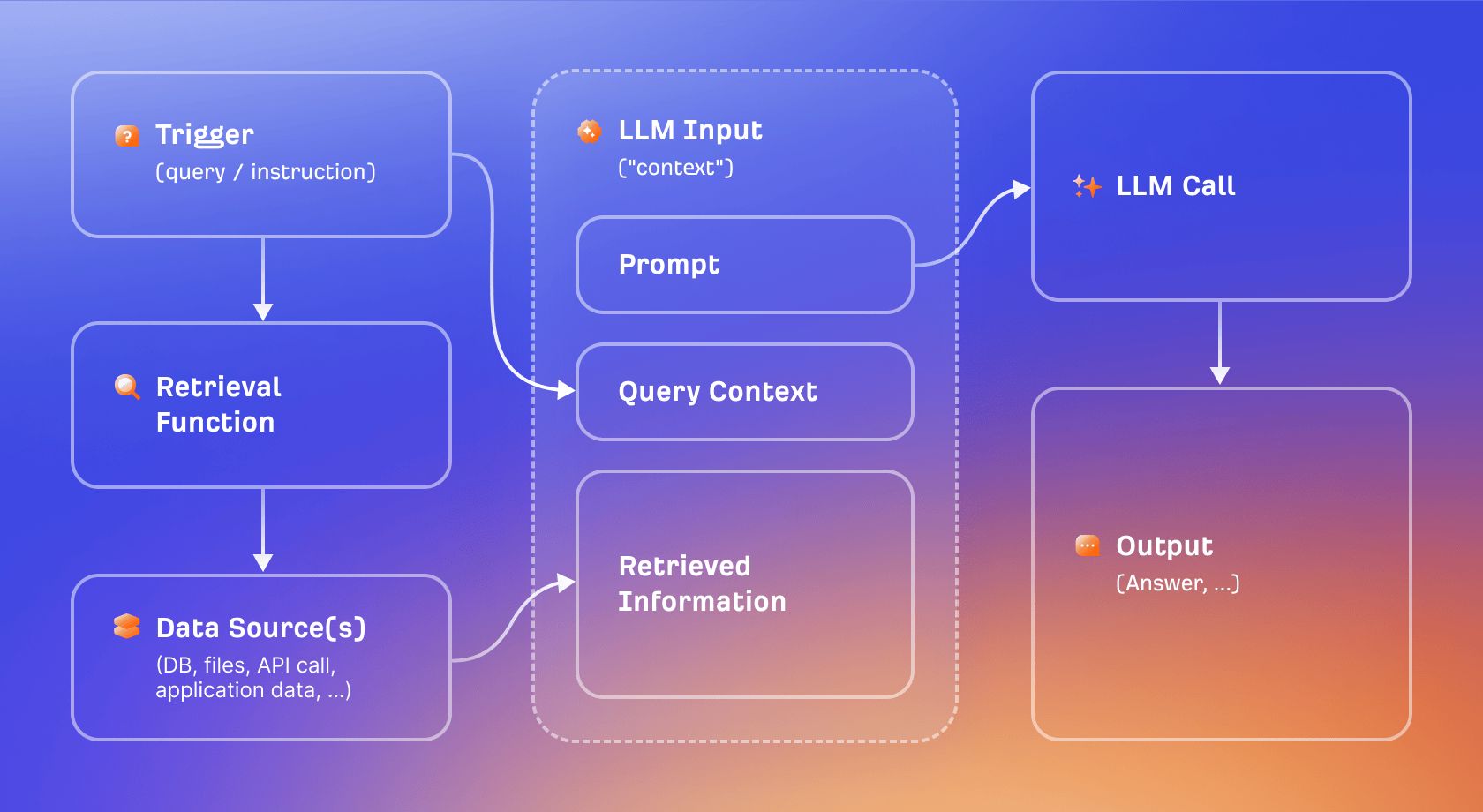

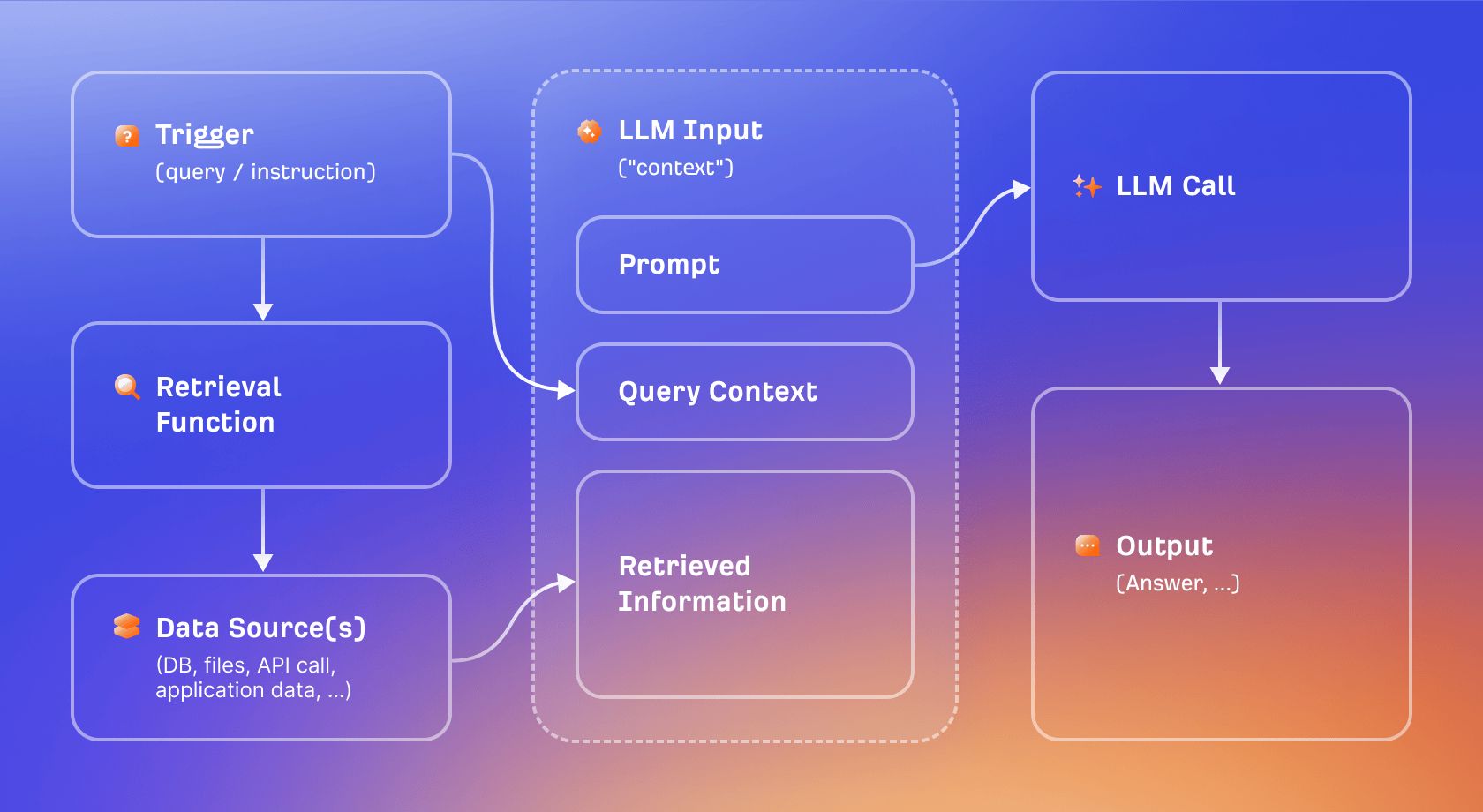

Retrieval-augmented generation (RAG) is the main technique used to get around the knowledge cutoff problem.

Rather than relying solely on what the model learned during training, RAG lets the model retrieve relevant documents at the moment a question is asked and use those documents as context when generating a response.

Think of this as the difference between a closed-book exam and an open-book exam. A training-only model must answer from memory. A RAG-enabled model can look up information first and then answer. Because the answers are based on actually retrieved content rather than statistical pattern matching, the results are more up-to-date and, in principle, more verifiable.

Retrieval-augmented generation, visualized.

“Grounding” is the broader term for this anchoring. When an AI’s answer is grounded, it is tied back to the specific retrieved sources, significantly reducing the risk of hallucinations.
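The open-book idea can be sketched in a few lines of Python. This is a toy illustration, not a production RAG pipeline: the document store is hypothetical, word overlap stands in for a real search index or embedding search, and the final call to a language model is left out entirely. The names `DOCUMENTS`, `retrieve`, and `build_prompt` are assumptions made for this example.

```python
import re

# Hypothetical document store; a real system would query a search index.
DOCUMENTS = [
    {"id": "doc-1", "text": "The 2026 pricing page lists the Pro plan at 49 dollars per month."},
    {"id": "doc-2", "text": "Our office dog is named Biscuit."},
    {"id": "doc-3", "text": "The Pro plan includes ten seats and priority support."},
]

def tokenize(text):
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=2):
    """Rank documents by word overlap with the question (a stand-in for real search)."""
    question_tokens = tokenize(question)
    scored = [(len(question_tokens & tokenize(d["text"])), d) for d in documents]
    scored = [(score, d) for score, d in scored if score > 0]  # drop irrelevant docs
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]

def build_prompt(question, retrieved):
    """Place the retrieved passages in front of the question as context."""
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in retrieved)
    return (
        "Answer using only the sources below and cite their ids.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

question = "What does the Pro plan cost per month?"
retrieved = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, retrieved)
print(prompt)
```

The prompt that reaches the model now carries the retrieved passages and their ids, which is what makes a grounded, citable answer possible. It also shows where things can go wrong: if `retrieve` returns the wrong passage, the model answers from the wrong source.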

Britney Muller explains:

Grounding comes from ground truth, which has its roots in statistics and originally cartography, and actually meant going out to check that the map matches reality.

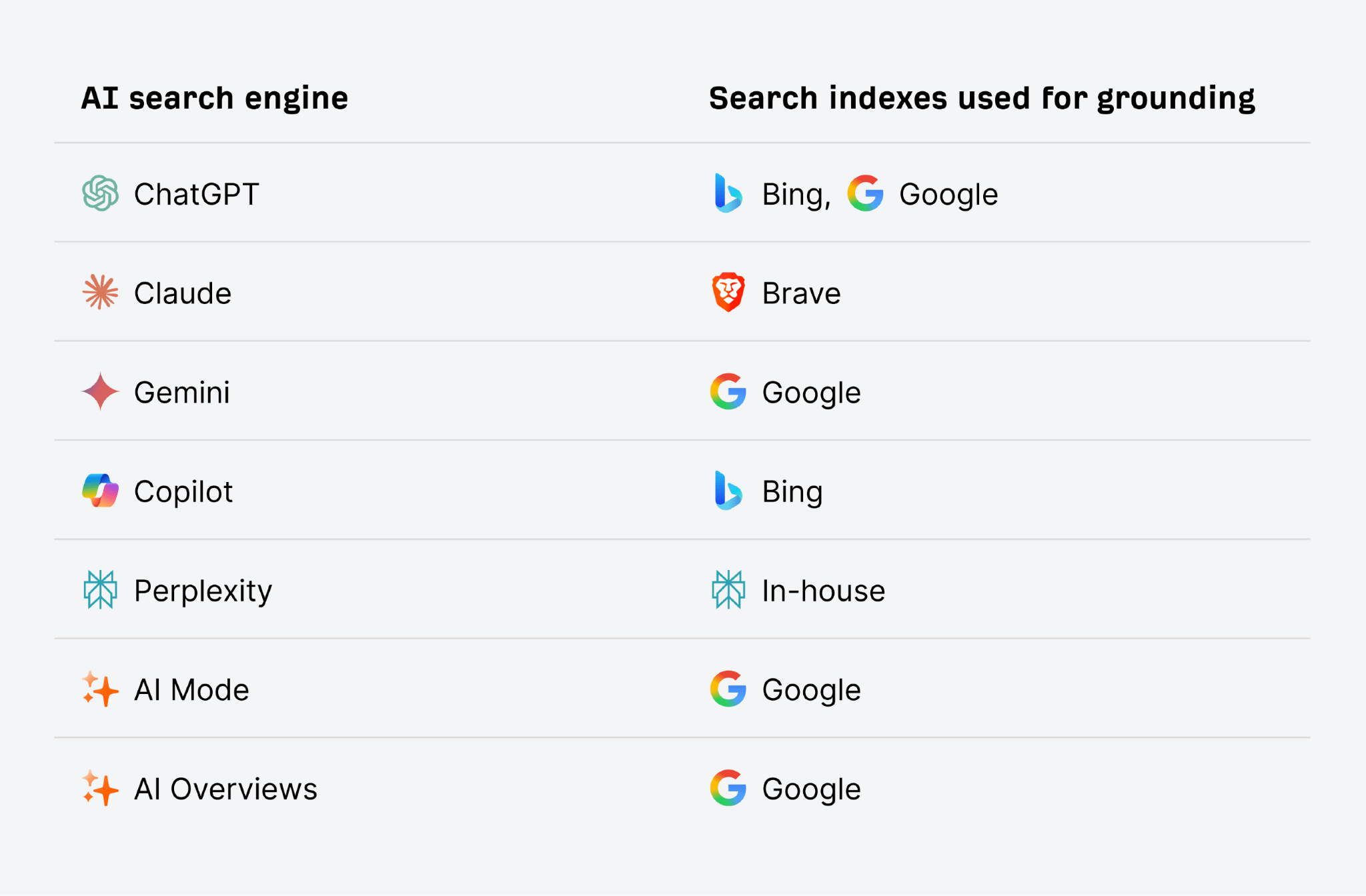

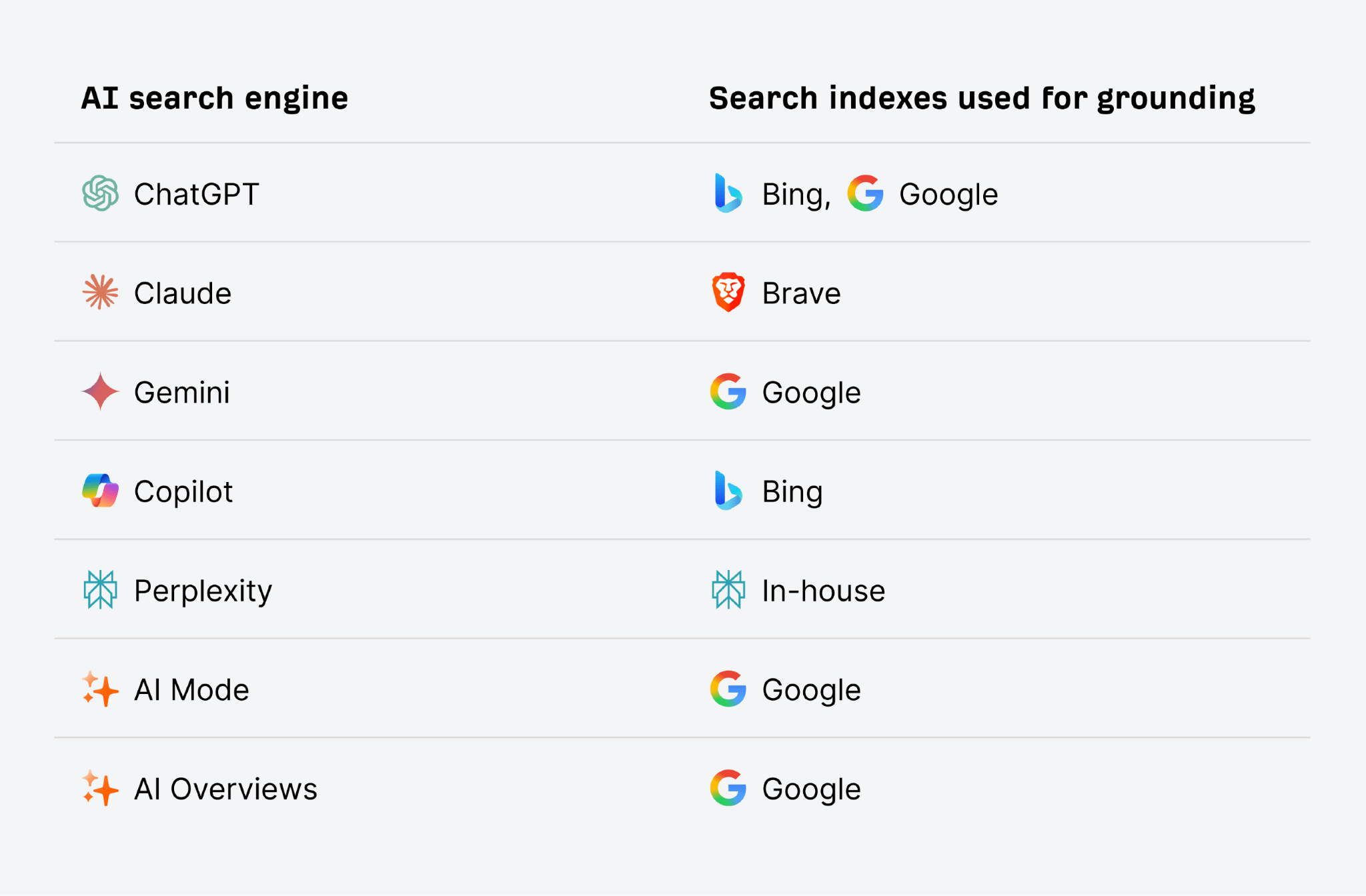

AI search engines like ChatGPT and Gemini use traditional search indexes like Google and Bing for this grounding process. So having good SEO and ranking well in traditional search can also improve your AI visibility. The higher you appear in the search index for the term the AI searches, the more likely you are to be retrieved and cited in answers.

Not all AI products use RAG. For example, a basic ChatGPT session with browsing disabled is purely training-based. It has no access to current information and no way to verify its answers against live sources.

The tradeoff is speed and simplicity. A training-only response is faster but stays stuck at its cutoff date. RAG adds latency and introduces new failure modes (retrieval errors, such as pulling in the wrong source or a poor-quality one), but it allows for recency.

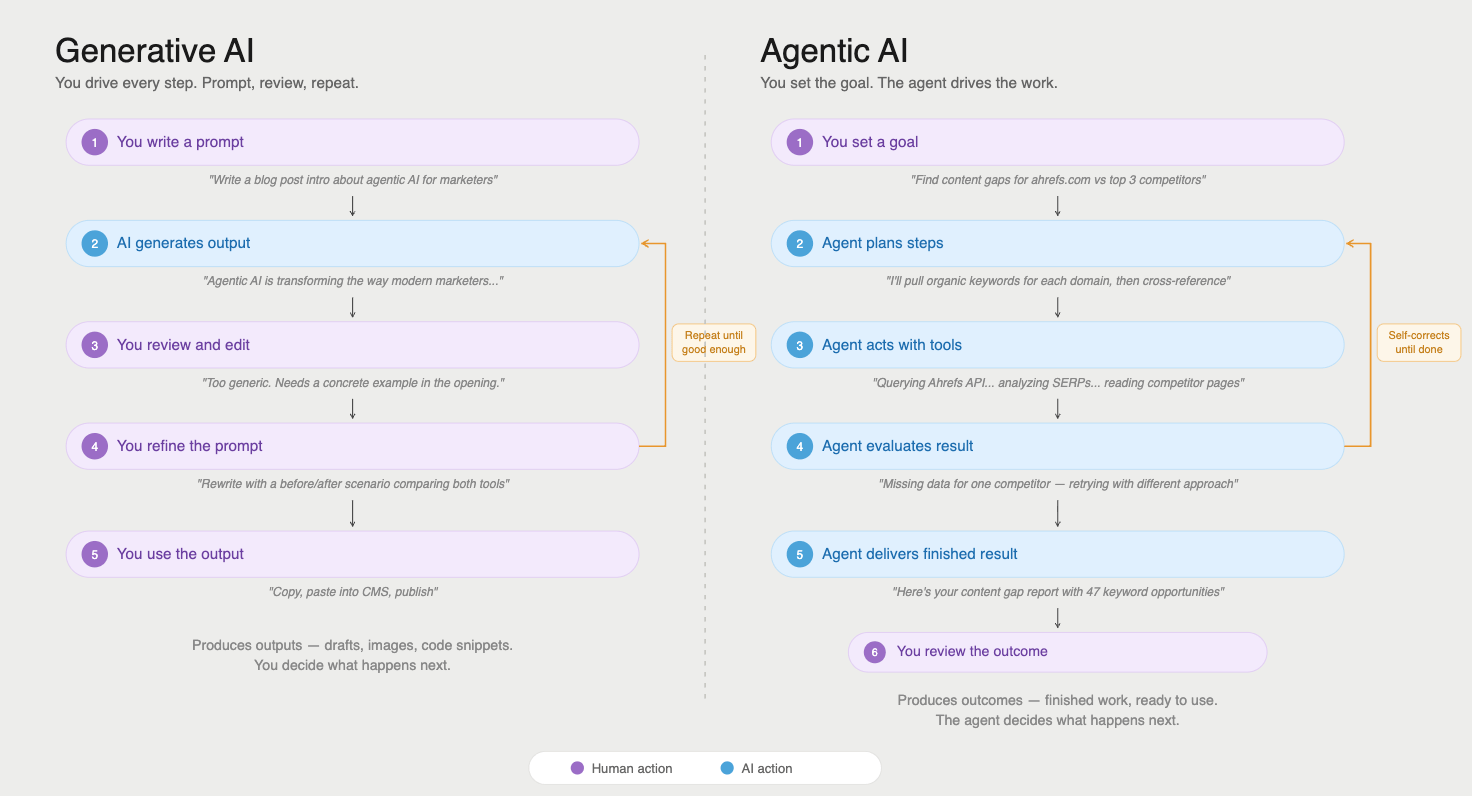

RAG is one way to bring new information into AI responses. However, modern AI systems are becoming increasingly advanced, allowing models to invoke external tools during conversations. This is the realm of AI agents.

AI agents do more than just retrieve documents. As part of performing tasks, they can query APIs, perform searches, run code, and interact with live data sources.
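A rough sketch of what “invoking a tool” looks like from the application side: the model emits a structured request naming a tool and its arguments, and the surrounding code looks the tool up and runs it. Everything here is hypothetical (the tool names, the made-up keyword volume, and the request format); real agent frameworks differ in the details.

```python
def get_keyword_volume(keyword: str) -> int:
    """Hypothetical stand-in for a live API lookup."""
    sample_data = {"project management software": 40000}  # made-up number
    return sample_data.get(keyword, 0)

def add_numbers(a: float, b: float) -> float:
    """Trivial example of a second tool the agent could call."""
    return a + b

# Registry mapping tool names to the functions that implement them.
TOOLS = {
    "get_keyword_volume": get_keyword_volume,
    "add_numbers": add_numbers,
}

def execute_tool_call(call: dict):
    """Run the tool named in a model-produced request and return its result."""
    name = call["tool"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call["arguments"])

# In a real agent, the model would produce this request; here it is hard-coded.
model_request = {
    "tool": "get_keyword_volume",
    "arguments": {"keyword": "project management software"},
}
result = execute_tool_call(model_request)
print(result)
```

The result is then fed back to the model, which uses it to write the final answer. The model never checks whether the number is right; it simply trusts whatever the tool returns.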

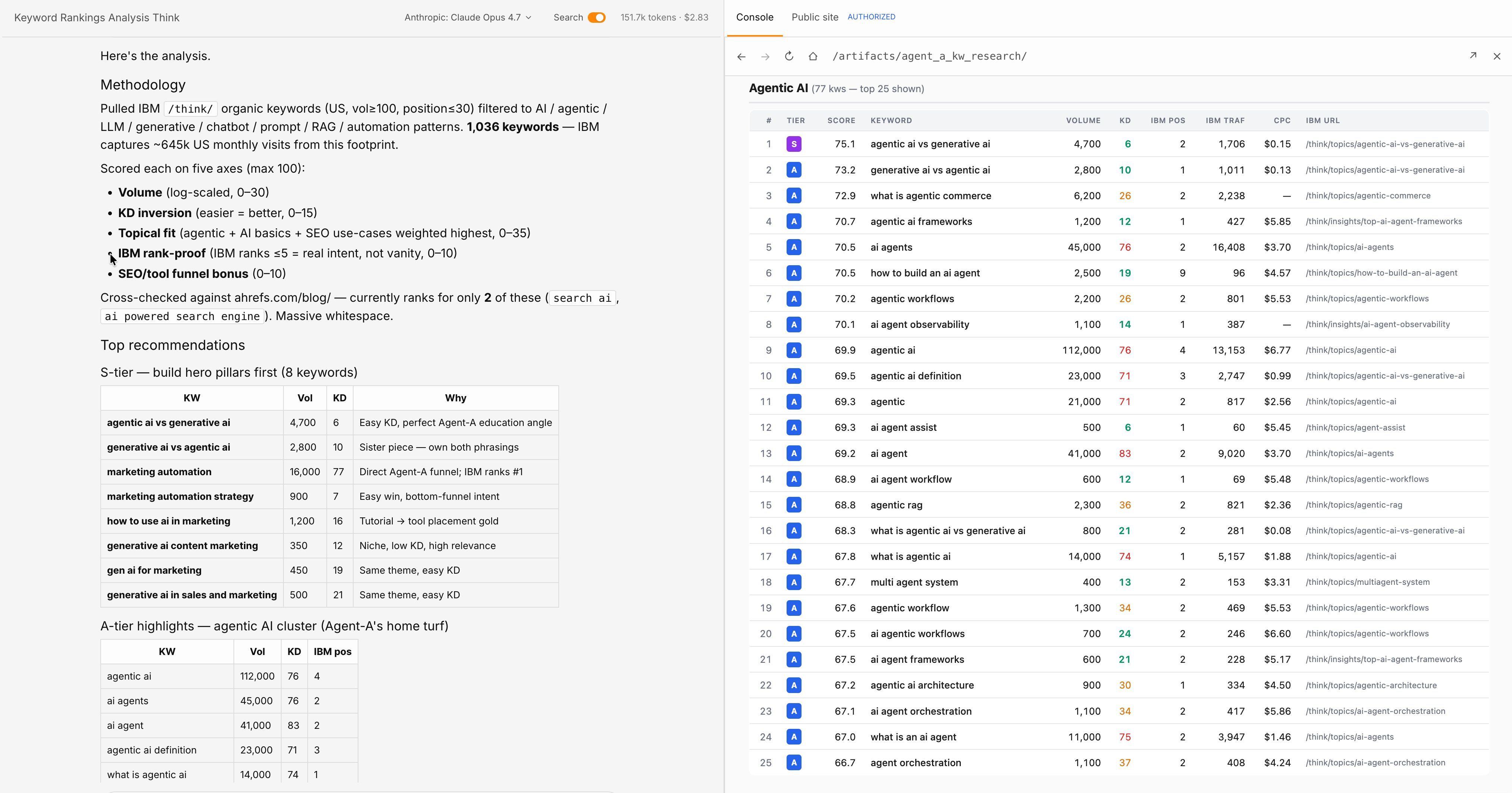

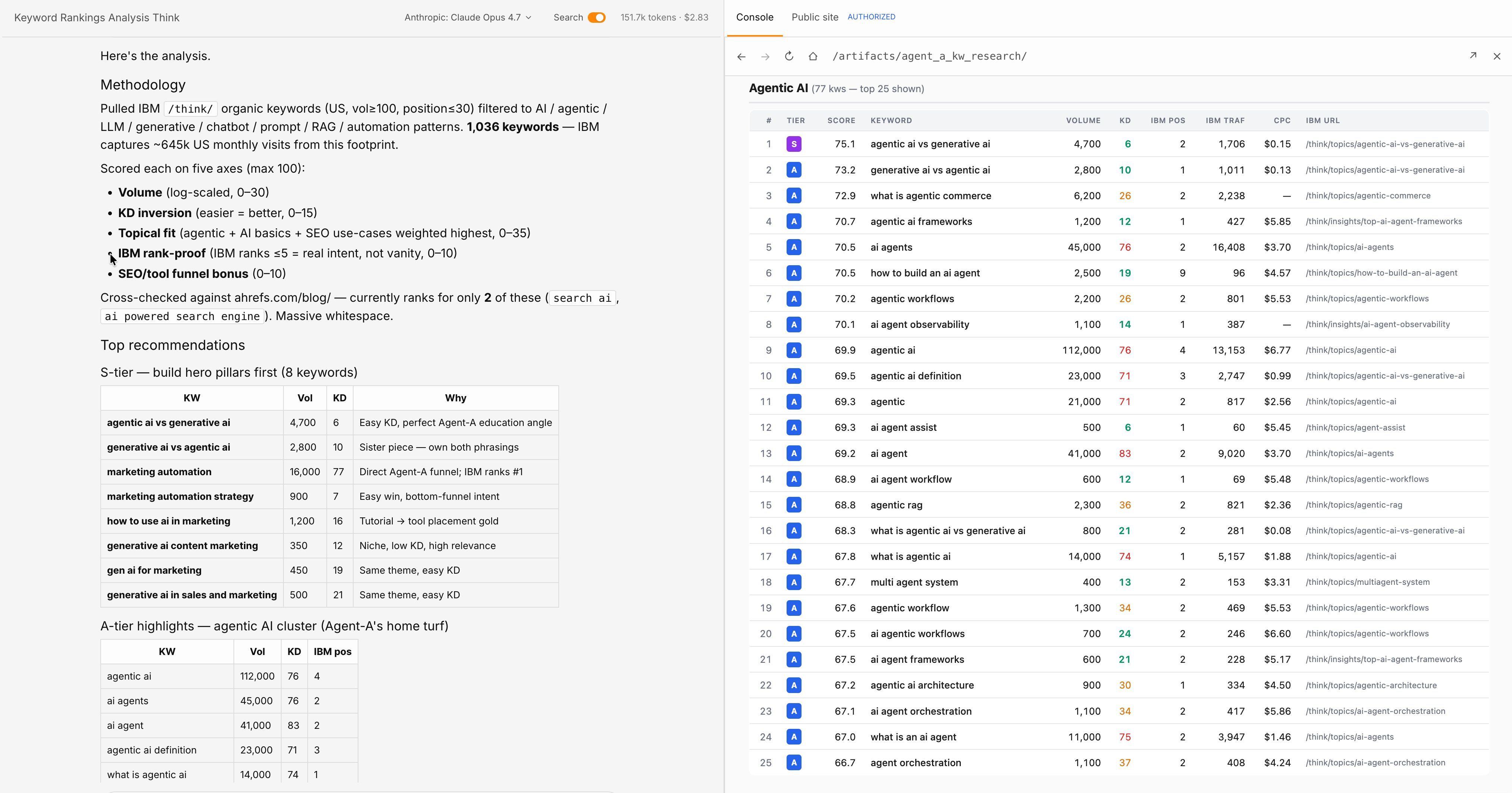

Comparing generative AI and agentic AI.

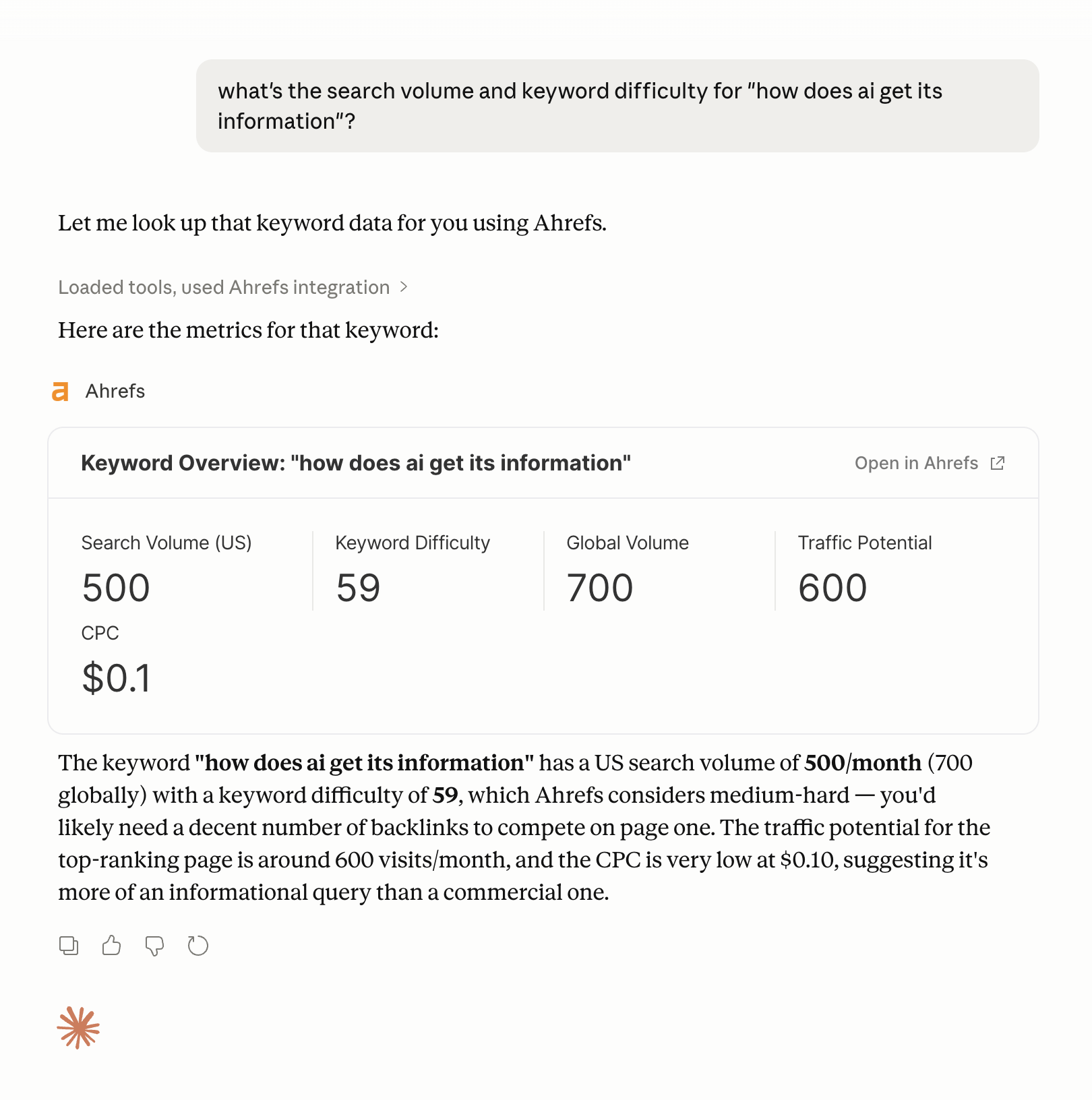

The emerging infrastructure for this is called the Model Context Protocol (MCP), a standard that allows AI models to connect to external data sources in a structured way.

A concrete example: Ahrefs has an MCP integration that lets AI agents query Ahrefs data directly during a task to get keyword metrics, backlink data, or competitor insights without the user leaving the workflow.

Example of using Ahrefs MCP with Claude to retrieve keyword data.
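For a sense of what “structured” means here, MCP messages are JSON-RPC 2.0 requests, and a client asks a server to run a tool with the `tools/call` method. The sketch below only builds such a request in Python. The tool name `keyword_metrics` and its arguments are hypothetical placeholders, not the actual tools exposed by the Ahrefs MCP server.

```python
import json

def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)

# Hypothetical tool name and arguments, for illustration only.
payload = build_tool_call(1, "keyword_metrics", {"keyword": "ai search", "country": "us"})
print(payload)
```

The server replies with a structured result, which the AI client passes back to the model as context for its answer.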

Try Agent A now

Ahrefs’ Agent A takes this even further. It is a marketing AI with direct, unrestricted access to Ahrefs’ complete internal datasets (keyword data, site metrics, competitive information, and more).

Rather than an AI that has to approximate SEO insights from training data (which is outdated) or from public sources (which are incomplete), Agent A works from real data.

This is a big difference, especially for marketing and SEO tasks. Agent A can work through many SEO and marketing workflows without hand-holding.

The broader principle is that a tool-enhanced AI is only as reliable as the tools it calls. If the API returns wrong data, the AI will confidently generate the wrong answer. The intelligence of the model does not eliminate the possibility of bad inputs. What tools do is extend the reach of the model far beyond what the training dataset can cover.

Understanding where AI gets its information tells you where your brand needs to appear to maximize your chances of being cited.

- Off-site mentions. If you want AI to represent your brand accurately, the place to start is off-site mentions, not your website. Models learn about your brand from the sources they use for training, such as press coverage, third-party reviews, forum discussions, Wikipedia entries, and citations from authoritative publications. Brands that exist only on their own domain are rarely recognized in the model’s training data.

- Query fan-out. Beyond brand awareness, you need to think about query fan-out: the adjacent questions that an AI system generates around your central topic. A brand that wants to rank for “project management software” should also target content such as “How to run a sprint review” and “Agile vs. Waterfall,” because these are the questions the AI system surfaces when the user follows up on the initial query. When you create content that fully covers the semantic area surrounding your central topic, you increase your chances of appearing in that expansion.

- AI accessibility. Technical accessibility also remains important. Clean HTML, fast loading times, and a well-configured robots.txt file affect whether AI crawlers can read your content. llms.txt has been proposed as a standard to help LLMs navigate the structure of a website, but as of 2026, no major LLM provider has confirmed that it honors the file (don’t waste your time).
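One way to check the robots.txt point is with Python’s standard-library `urllib.robotparser`. The sketch below parses a hypothetical robots.txt (not taken from any real site) and asks whether a given crawler may fetch a given URL. `GPTBot` is the user agent OpenAI documents for its crawler; `ExampleBot` and the example.com URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, not taken from any real site.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def crawler_can_read(user_agent: str, url: str) -> bool:
    """Return True if the parsed rules allow `user_agent` to fetch `url`."""
    return parser.can_fetch(user_agent, url)

for agent, url in [
    ("GPTBot", "https://example.com/blog/post"),
    ("GPTBot", "https://example.com/private/report"),
    ("ExampleBot", "https://example.com/blog/post"),
]:
    print(agent, url, crawler_can_read(agent, url))
```

With these rules, the named crawler can read public pages but not `/private/`, while every other crawler is blocked from the whole site. To test a live site instead, `set_url()` and `read()` load the real file.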

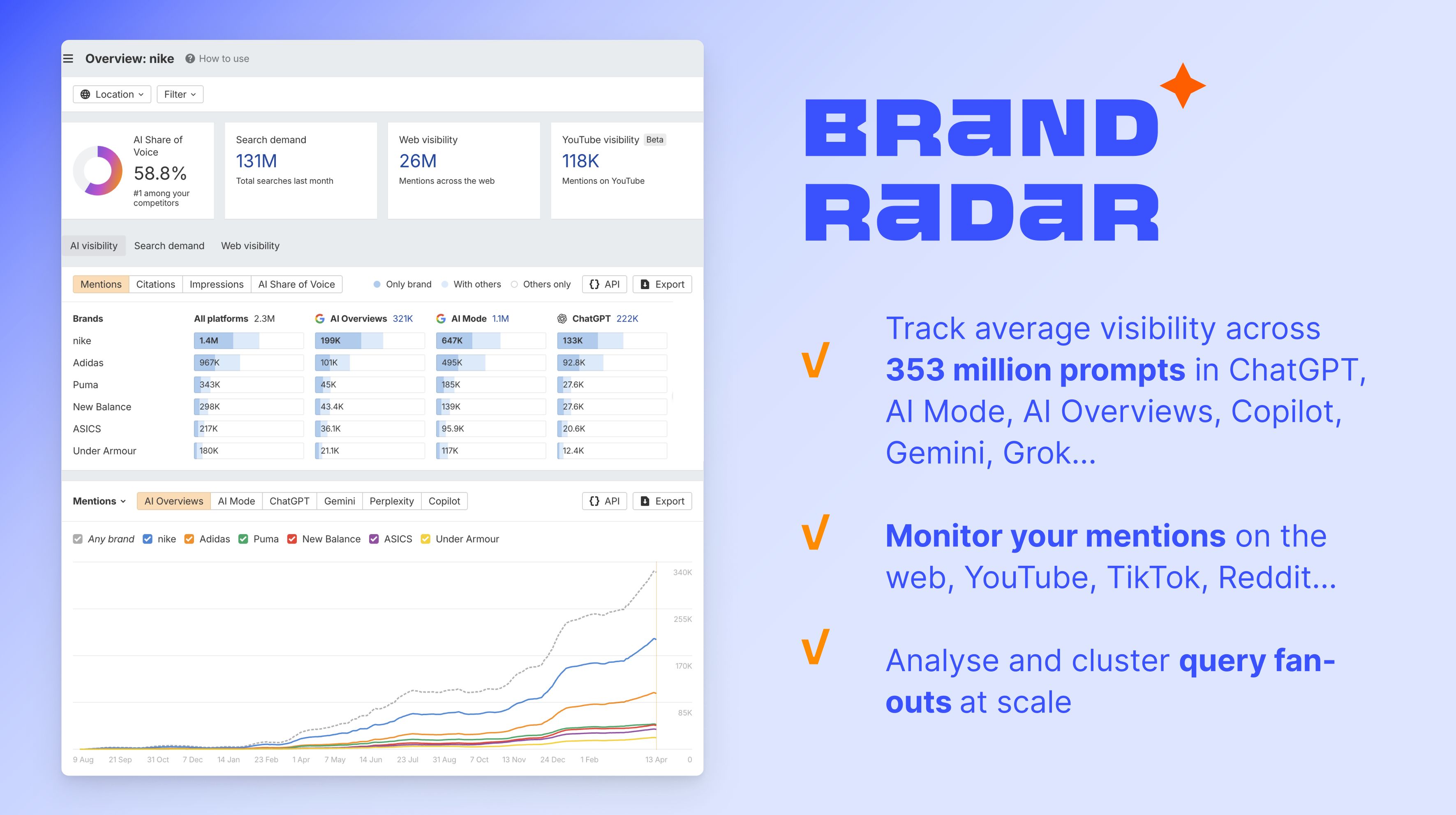

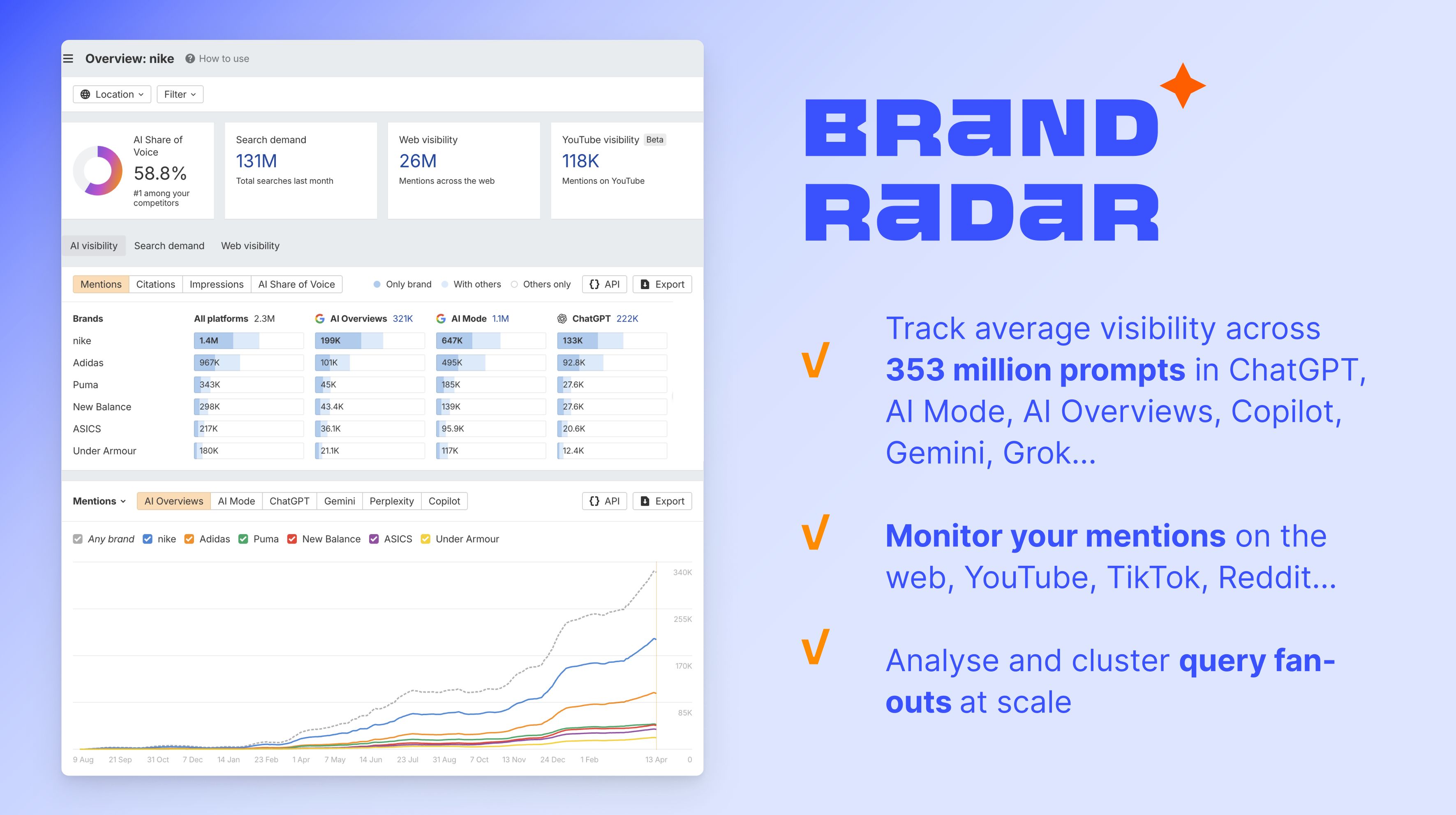

Start tracking your AI visibility with Brand Radar

To measure how this is working in practice, Ahrefs’ Brand Radar tracks AI share of voice across ChatGPT, Gemini, Perplexity, AI Overviews, AI Mode, Grok, and more, showing you how often your brand is mentioned in AI-generated responses compared to your competitors. Read this article to learn how it works.
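As a rough illustration of the metric, share of voice can be thought of as one brand’s mentions divided by the mentions of all tracked brands. The sketch below uses that simple definition on made-up responses with placeholder brand names. It is an assumption for illustration, not a description of how Brand Radar computes its numbers.

```python
def share_of_voice(responses, brands):
    """Return each brand's share of all brand mentions across the responses."""
    # Count a brand once per response that mentions it (case-insensitive).
    mentions = {
        brand: sum(brand.lower() in response.lower() for response in responses)
        for brand in brands
    }
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}

# Made-up AI responses and placeholder brand names.
responses = [
    "Acme is a popular choice.",
    "Many teams use Acme or Globex.",
    "Globex is worth a look.",
    "Acme is often recommended.",
]
sov = share_of_voice(responses, ["Acme", "Globex"])
print(sov)
```

Tracking this ratio over time, per AI platform, shows whether off-site mentions and content coverage are actually changing how often a brand appears.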

Final thoughts

AI knowledge comes from three layers: frozen training data, retrieved live documents, and connected external tools such as APIs and MCPs. Each has a different accuracy profile, a different relationship to recency, and different ways of failing.

Training data is the foundation: vast, expensive, and static. RAG and grounding add currency at the cost of depending on retrieval reliability. Tool integrations like Ahrefs’ MCP and dedicated agents like Agent A extend that even further, giving AI access to trusted live data when it needs it.

To learn more about how AI search engines stitch these layers together to generate answers, check out our guide to how AI search engines work.