Traditional search tracking is built on a simple promise: type a query, get a result, and track your ranking. AI doesn’t work that way.

Assistants like ChatGPT, Gemini, and Perplexity don’t show fixed results. They generate answers that vary with every run, every model, and every user.

“AI rank tracking” is a misnomer: you can’t track AI like you do traditional search.

But that doesn’t mean you shouldn’t track it at all.

You just need to adjust the questions you’re asking, and the way you measure your brand’s visibility.

In SEO rank tracking, you can rely on stable, repeatable rules:

- Deterministic results: The same query generally returns similar SERPs for everyone.

- Fixed positions: You can measure exact ranks (#1, #5, #20).

- Known volumes: You know how popular each keyword is, so you know what to prioritize.

AI breaks all three.

- Probabilistic answers: The same prompt can return different brands, citations, or response formats each time.

- No fixed positions: Mentions appear in passing, in varying order, not as numbered ranks.

- Hidden demand: Prompt volume data is locked away. We don’t know what people actually ask at scale.

And it gets messier:

- Models don’t agree. Even different versions of the same assistant generate different responses to an identical prompt.

- Personalization skews results. Many AIs tailor their outputs to factors like location, context, and memory of previous conversations.

This is why you can’t treat AI prompts like keywords.

It doesn’t mean AI can’t be tracked, but that tracking individual prompts isn’t enough.

Instead of asking “Did my brand appear for this exact query?”, the better question to ask is: “Across thousands of prompts, how often does AI connect my brand with this topic or category?”

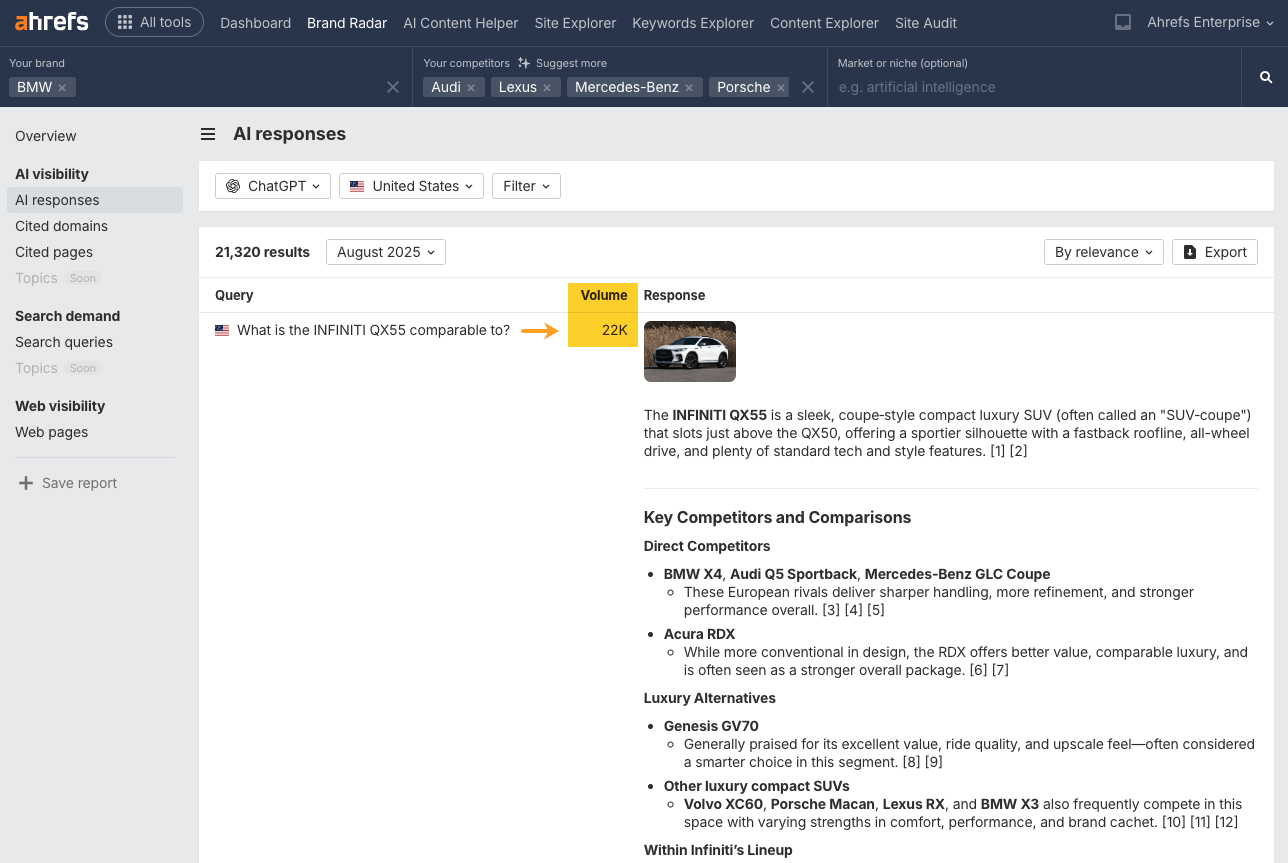

That’s the philosophy behind Ahrefs Brand Radar—our database of millions of AI prompts and responses that helps you track directionally.

A major stumbling block when it comes to AI search tracking is that none of us know what people are actually searching en masse.

Unlike search engines, which publish keyword volumes, AI companies keep prompt logs private—that data never leaves their servers.

That makes prioritization tricky, and means it’s hard to know where to start when it comes to optimizing for AI visibility.

To move past this, we seed Brand Radar’s database with real search data: questions from our keyword database and People Also Ask queries, paired with search volume.

These are still “synthetic” prompts, but they reflect real world demand.

Our goal isn’t to tell you whether you appear for a single AI query. It’s to show you how visible your brand is across entire topics.

If you can see that you have great visibility for a topic, you don’t need to track hundreds of specific prompts within that topic, because you already understand the underlying probability that you’ll be mentioned.

By focusing on aggregated visibility, you can move past noisy outputs:

- See if AI consistently ties you to a category—not just if you appeared once.

- Track trends over time—not just snapshots.

- Learn how your brand is positioned against competitors—not just mentioned.

Think of AI tracking less like rank tracking and more like polling.

You don’t care about one answer, you care about the direction of the trend across a statistically significant amount of data.

You can’t track your AI visibility like you can track your search visibility. But, even with flaws, AI tracking has clear value.

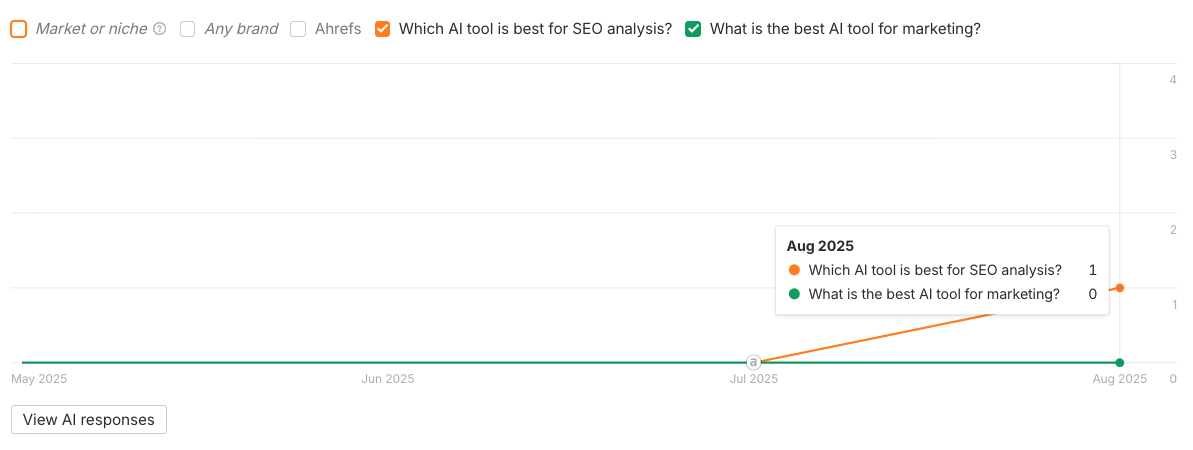

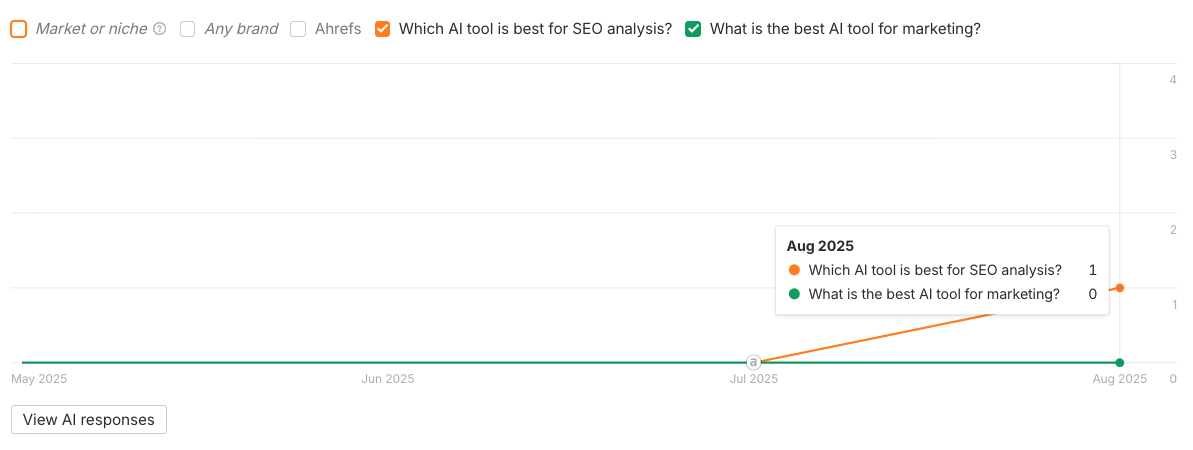

Individual brand mentions in AI vary a lot, but aggregating that data gives you a more stable view.

For example, if you run the same prompt three times, you’ll likely see three different answers.

In one your brand is mentioned, in another it’s missing, in a third a competitor gets the spotlight.

But aggregate thousands of prompts, and the variability evens out.

Suddenly it’s clear: your brand appears in ~60% of AI answers.


Aggregation smooths out the randomness, outlier answers get averaged into the larger sample, and you get a better idea of how much of the market you actually own.

These are the same principles used in surveys: individual answers vary, but aggregate trends are reliable enough to act on.

They show you consistent signals you’d miss if you only focused on a handful of prompts.
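To make the smoothing effect concrete, here is a minimal simulation sketch. It assumes each AI answer mentions the brand independently with a fixed probability of 60%, which is a simplification, and the function name and numbers are illustrative rather than anything taken from Brand Radar.

```python
import random

def simulate_mention_rate(true_rate: float, n_prompts: int, seed: int) -> float:
    """Return the observed share of simulated answers that mention the brand."""
    rng = random.Random(seed)
    # Each simulated answer mentions the brand with probability `true_rate`.
    mentions = sum(1 for _ in range(n_prompts) if rng.random() < true_rate)
    return mentions / n_prompts

TRUE_RATE = 0.60  # hypothetical underlying mention probability (assumption)

# Repeat the "measurement" five times at each sample size and compare the spread.
for n in (3, 100, 10_000):
    observed = [simulate_mention_rate(TRUE_RATE, n, seed) for seed in range(5)]
    print(f"{n:>6} prompts:", [round(rate, 2) for rate in observed])
```

With three prompts, the observed rate can only be 0%, 33%, 67%, or 100%, so repeated runs jump around. With thousands of prompts, repeated runs cluster tightly around the underlying rate.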

The problem is, most AI tracking tools cap you at 50–100 queries, mainly because running prompts at scale gets expensive.

That’s not enough data to tell you anything meaningful.

With such a small sample, you can’t get a clear sense of your brand’s actual AI visibility.
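A rough way to see why sample size matters is the standard margin-of-error formula for a proportion used in polling. The sketch below assumes prompts behave like independent random samples, which real AI prompts only approximate, and uses a hypothetical 60% mention rate.

```python
import math

def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for an observed proportion p over n samples."""
    return z * math.sqrt(p * (1 - p) / n)

# Hypothetical 60% mention rate measured at different sample sizes.
for n in (50, 100, 1_000, 10_000):
    moe_points = margin_of_error(0.6, n) * 100
    print(f"{n:>6} prompts: +/- {moe_points:.1f} percentage points")
```

Under these assumptions, the uncertainty shrinks from roughly 14 points at 50 prompts to about 1 point at 10,000 prompts, so a 5-point change is indistinguishable from noise in a small sample but meaningful in a large one.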

That’s why we’ve built our AI database of ~100M prompts: to support the kind of aggregate analysis that makes sense for AI search tracking.

Studying how your brand shows up across thousands of AI prompts can help you spot patterns in demand, and test how your efforts on one channel impact visibility on another.

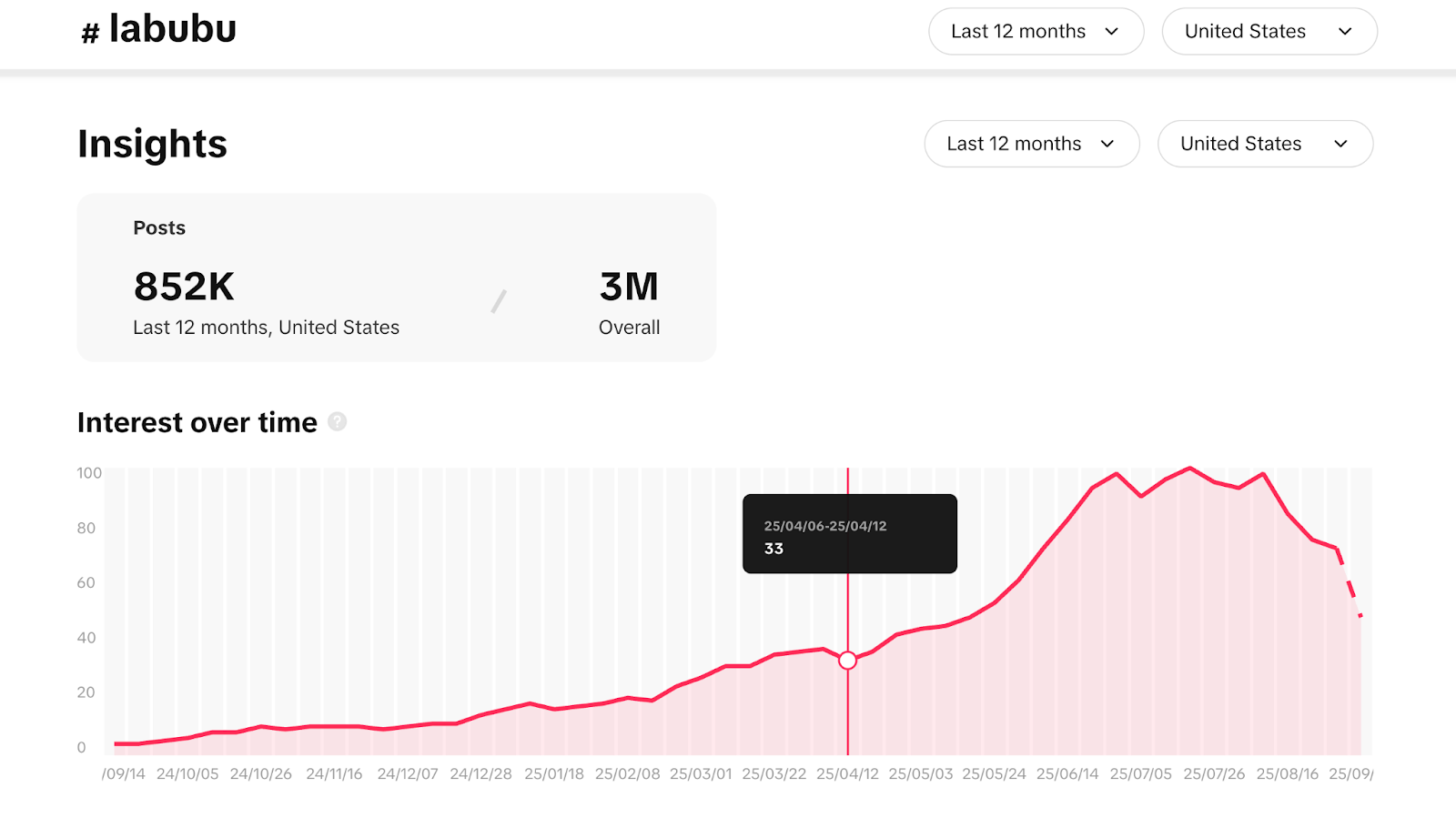

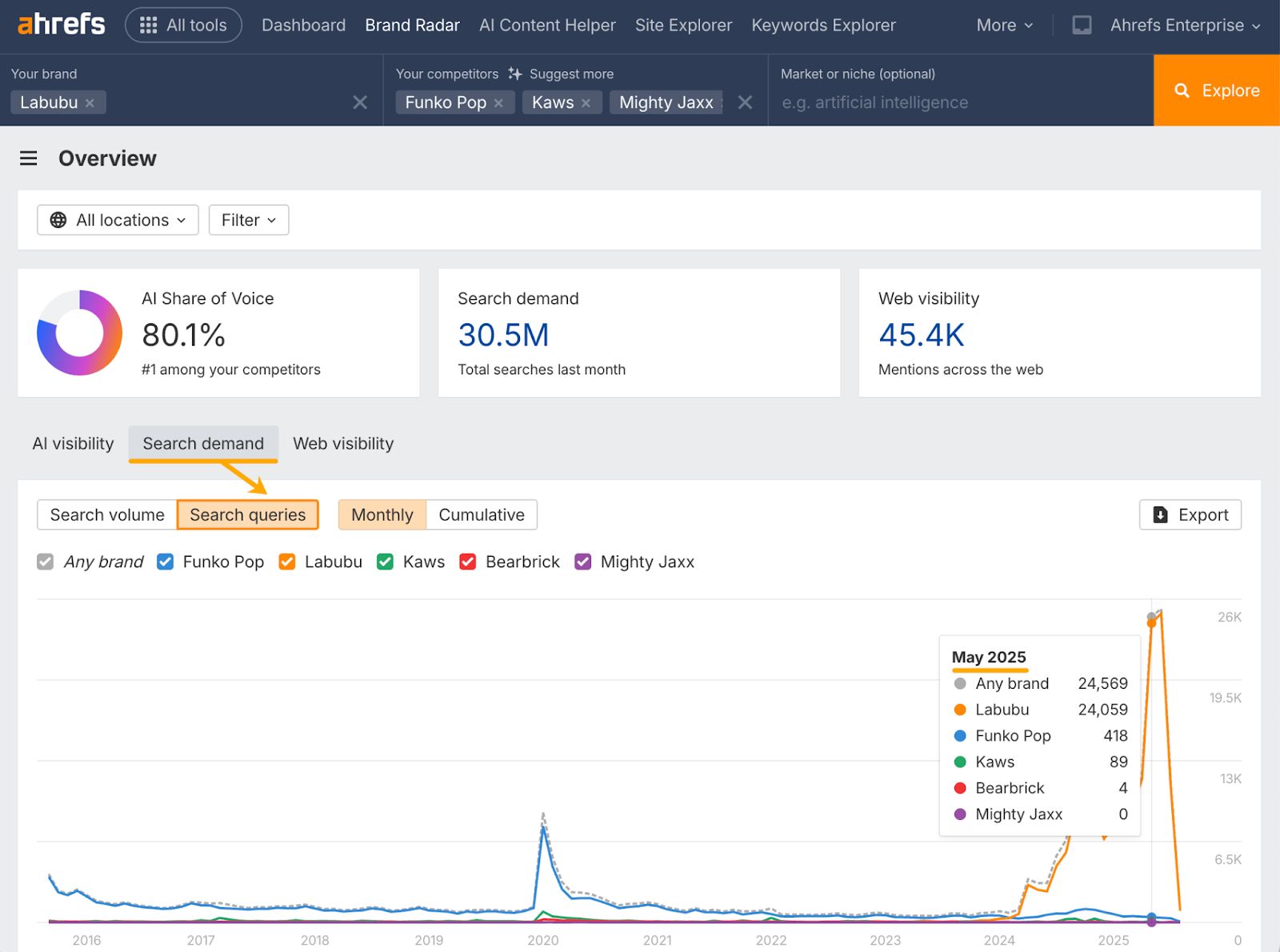

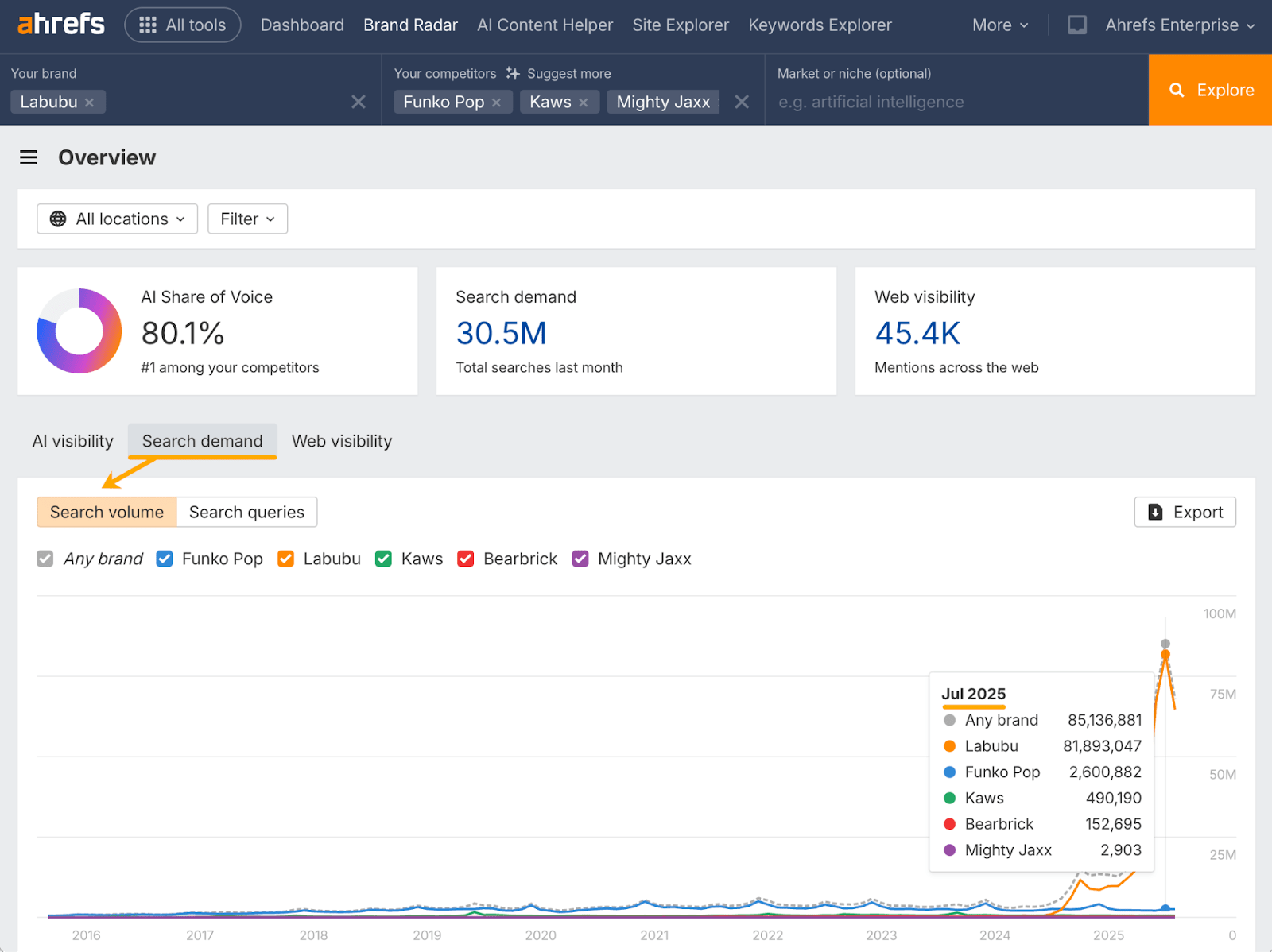

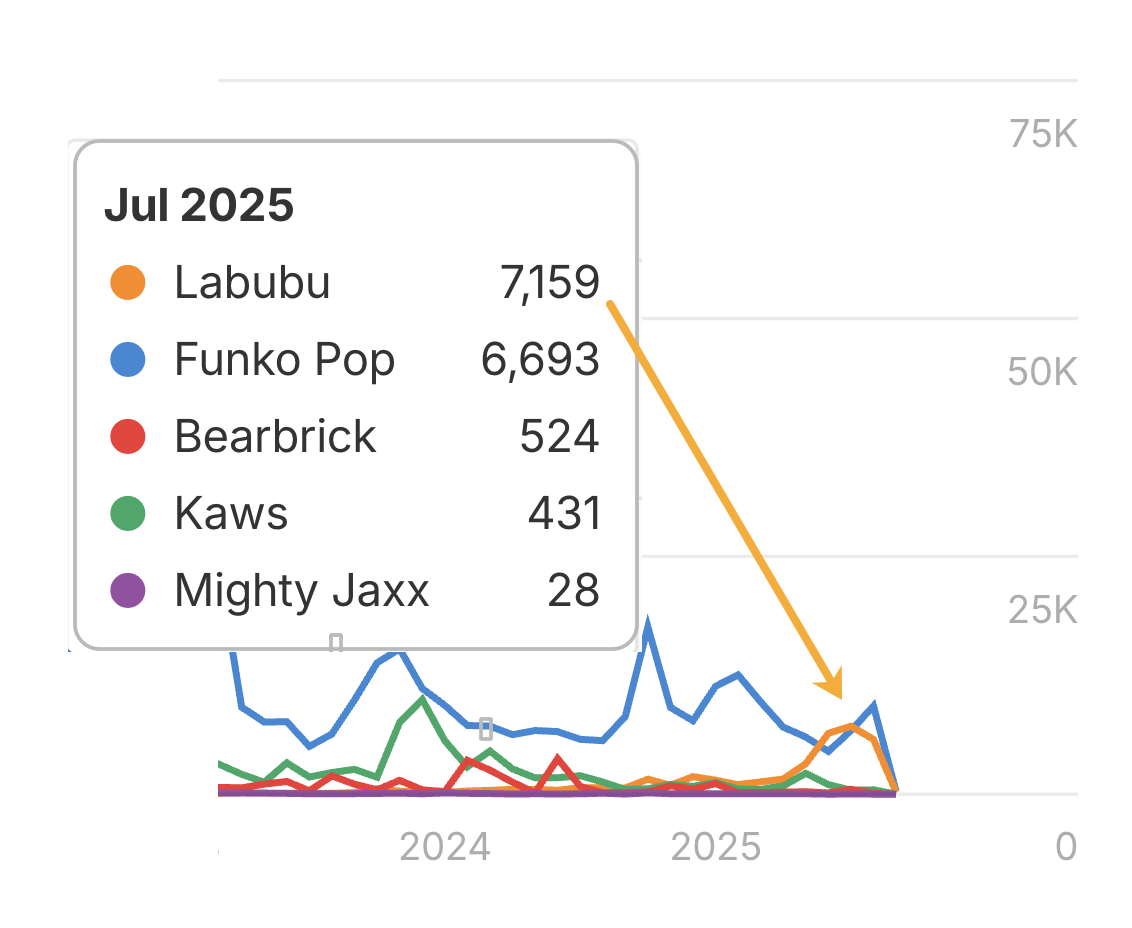

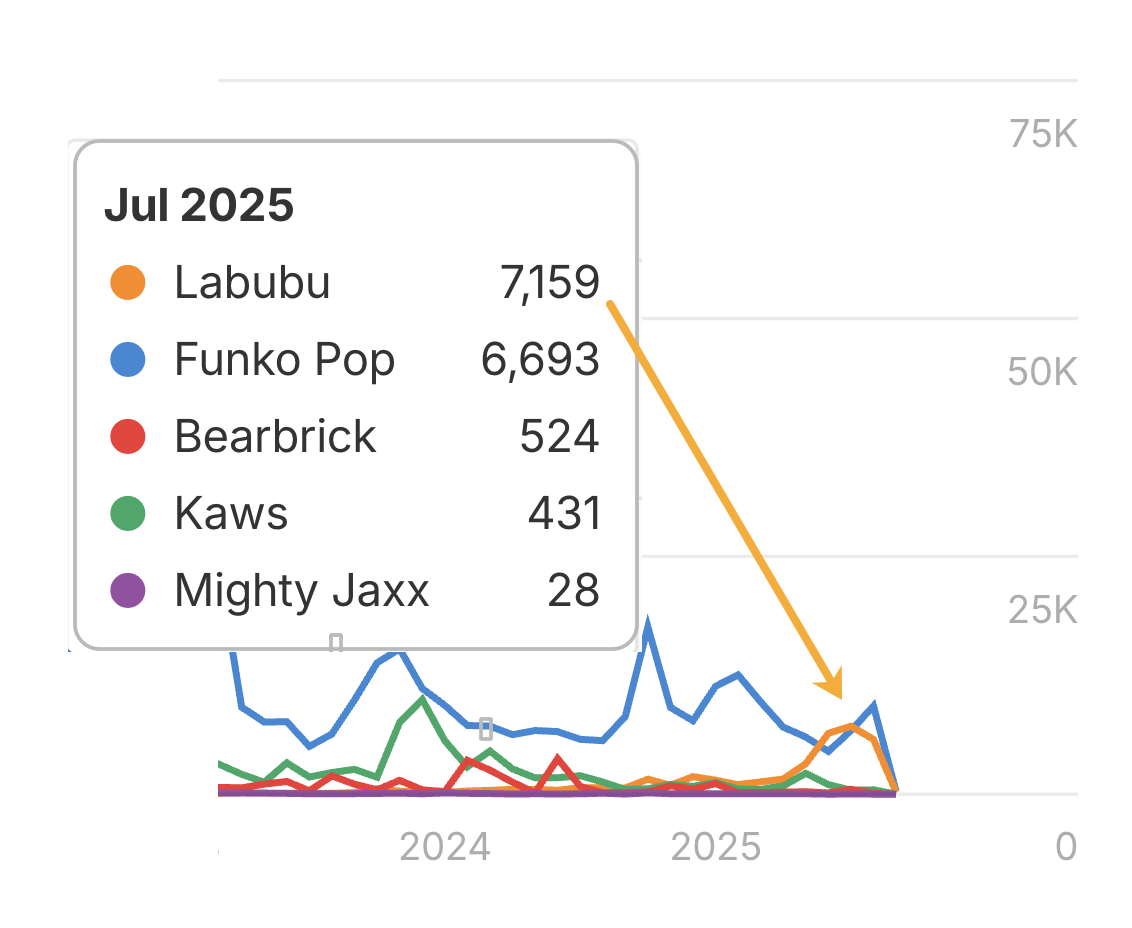

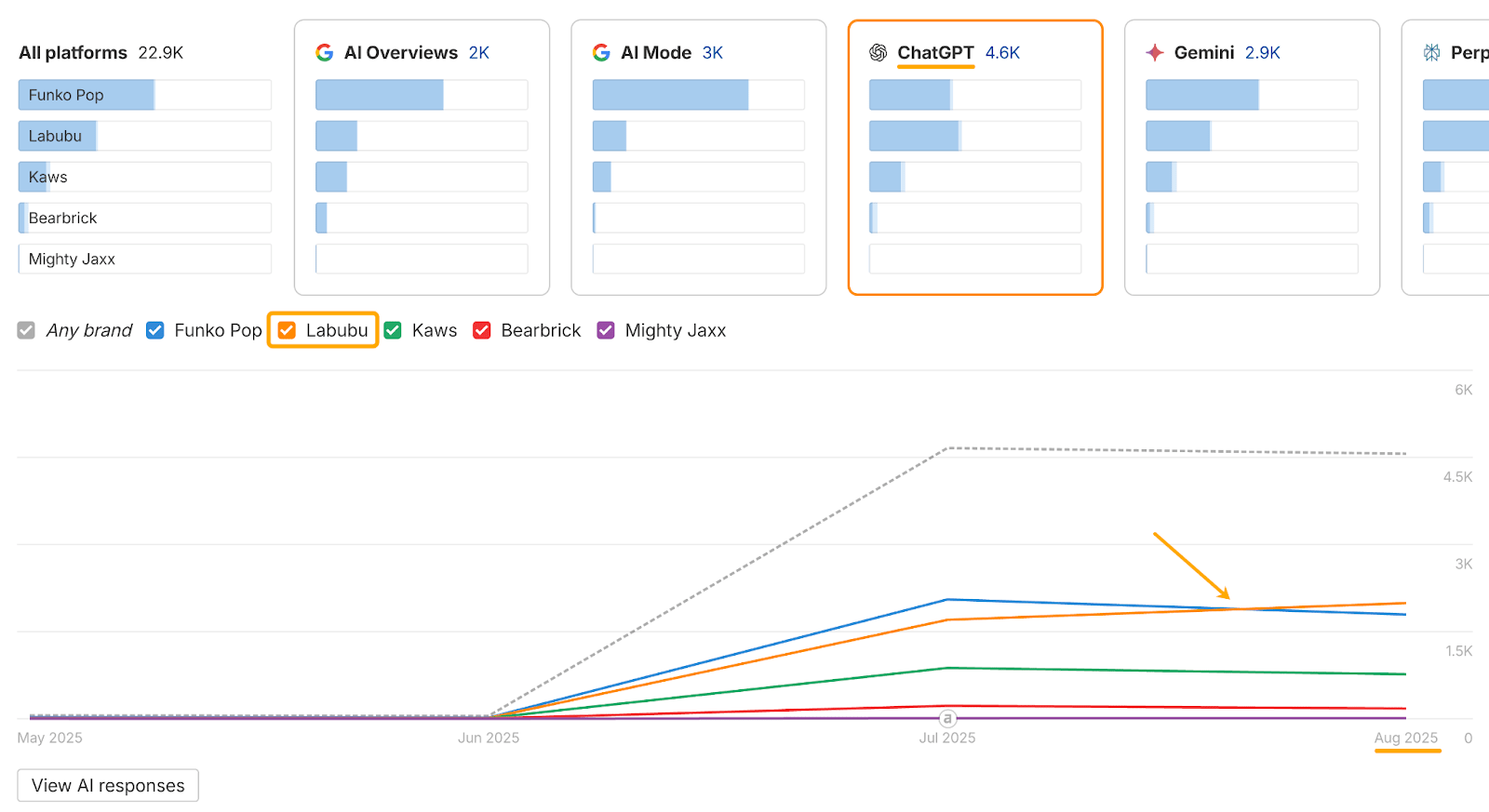

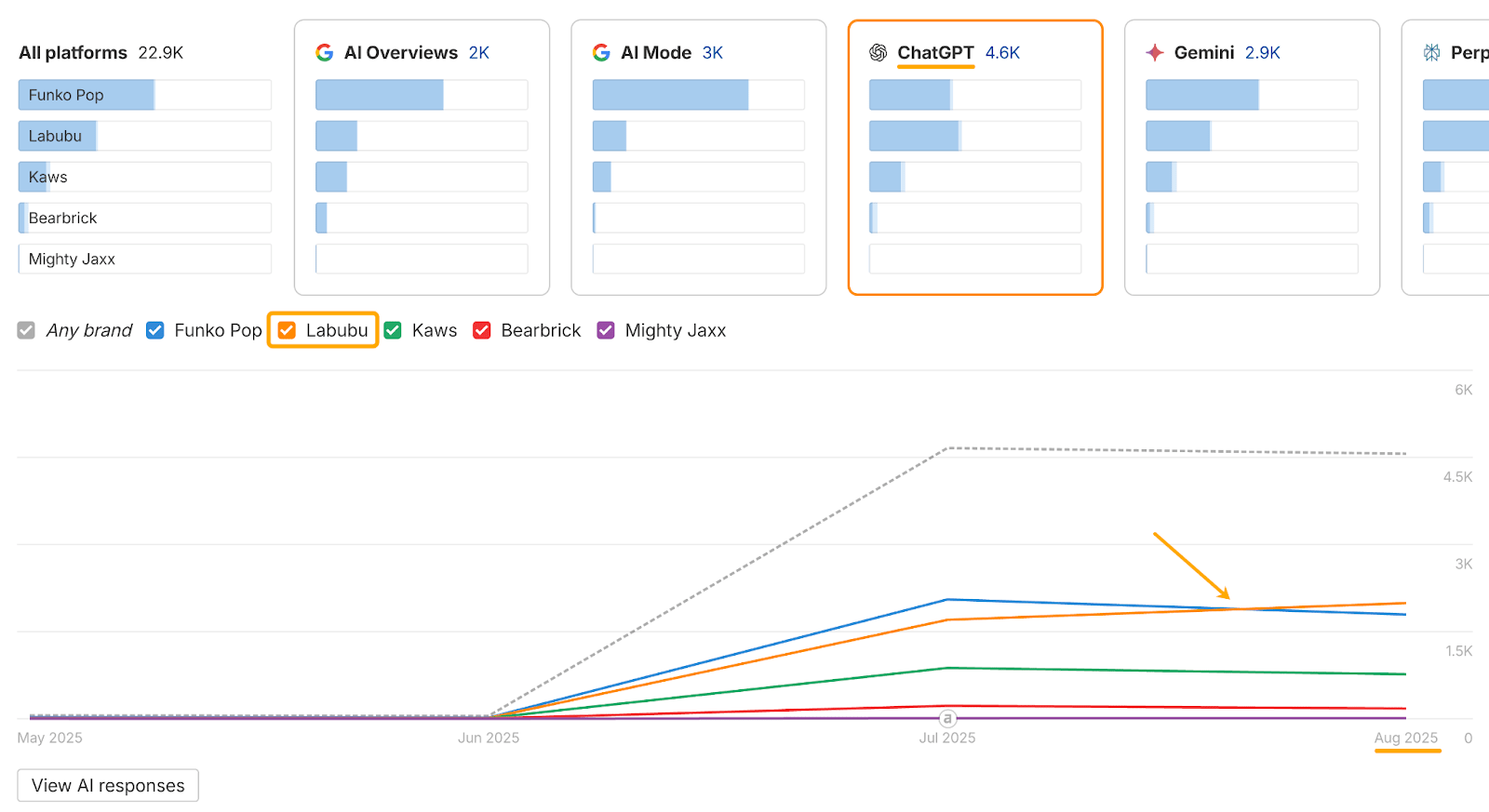

Here’s what that looks like in practice, focusing on the example of Labubu (those creepy doll things that everyone has recently become obsessed with).

By combining TikTok data with Ahrefs Brand Radar, I traced how “Labubu” showed up across AI, social, search, and the wider web.

It made for an interesting timeline of events.

April: According to TikTok’s Creative Center, which lets you track trending keywords and hashtags, Labubu went viral on TikTok after unboxing videos took off in April.

May: Thousands of “Labubu” related search queries start showing up in the SERPs.

July: Search volume spikes for those same “Labubu” queries.

Also in July, web mentions for “Labubu” surge, overtaking market-leading toy Funko Pop.

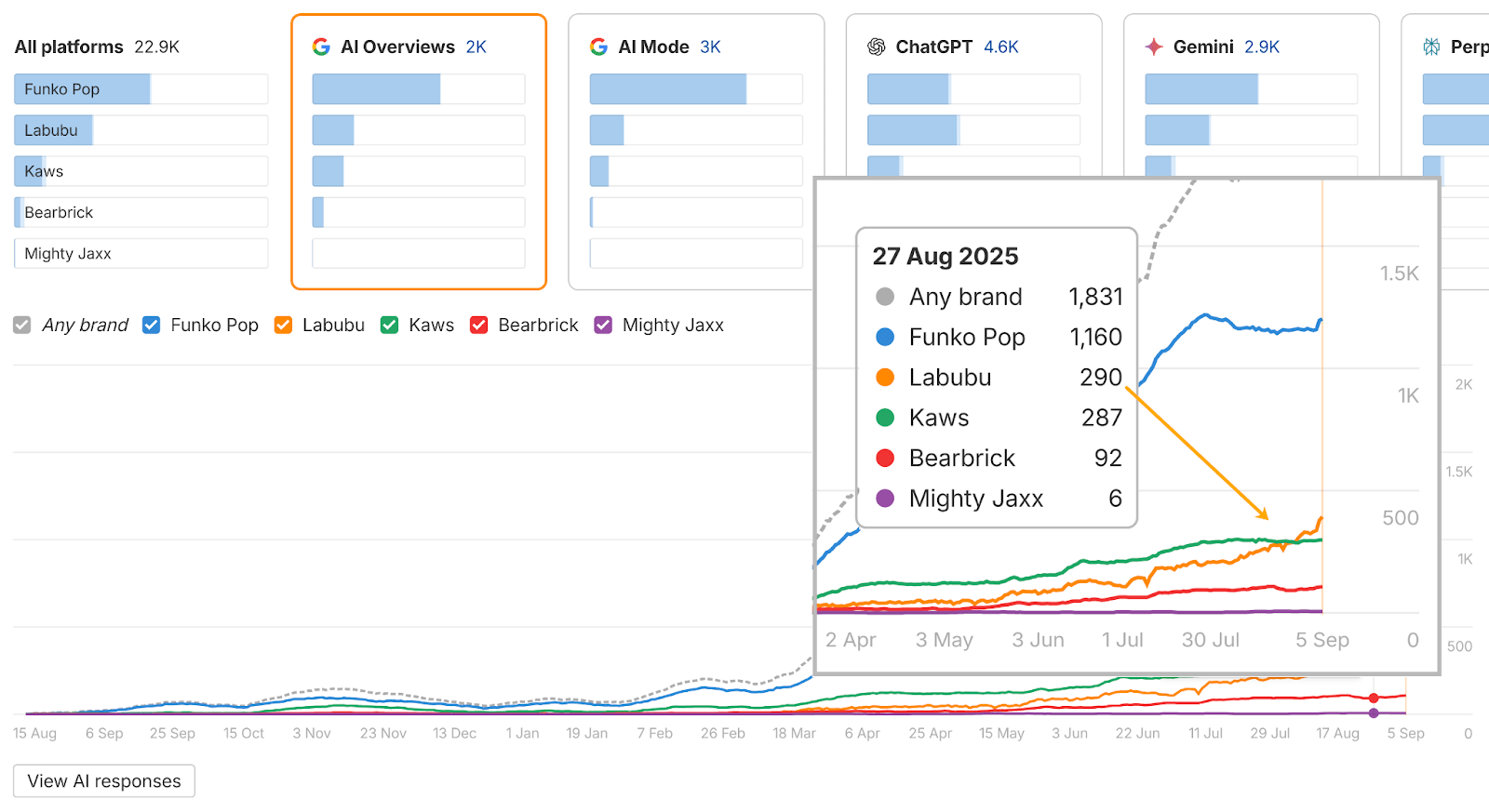

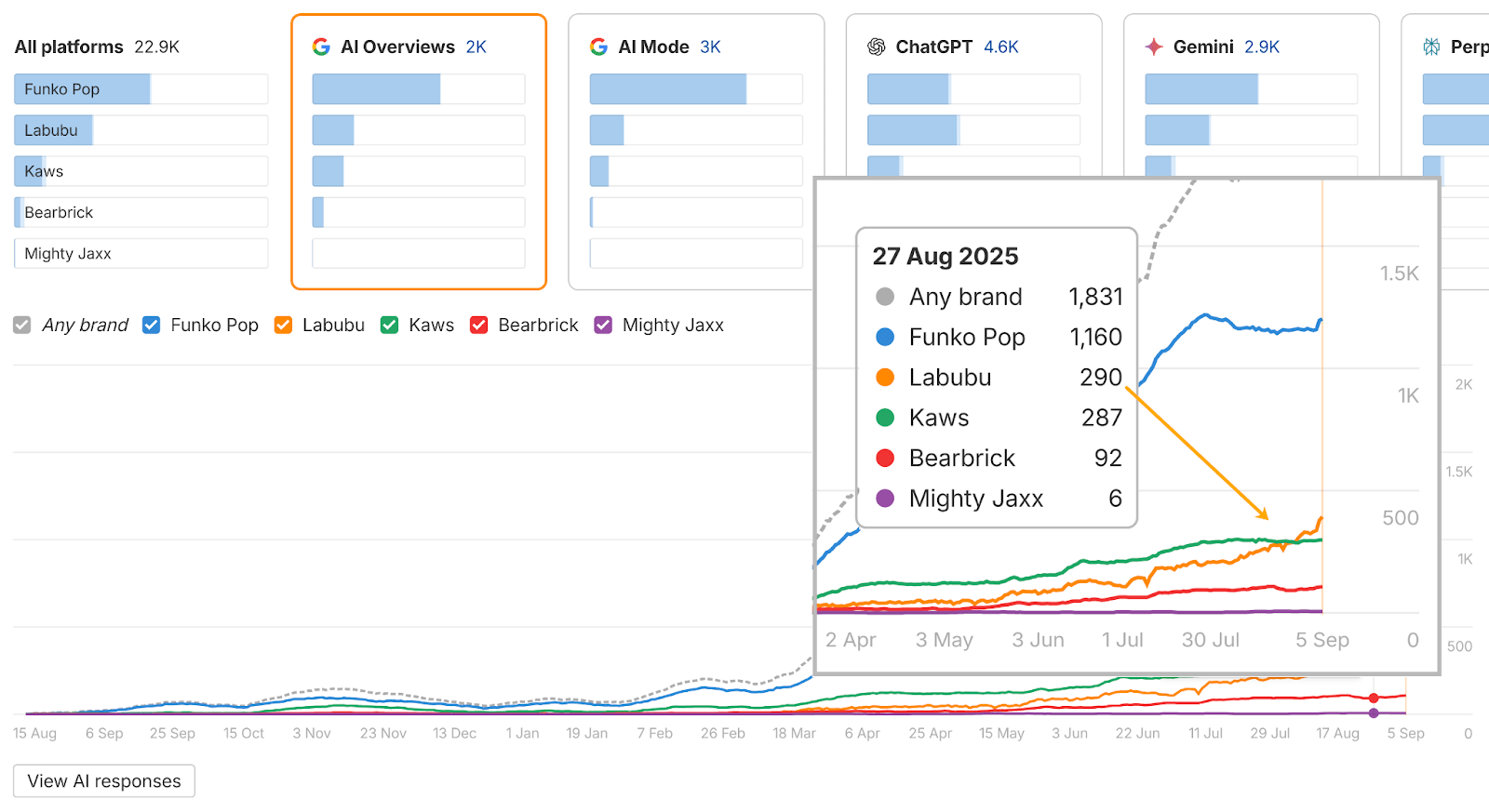

August: Labubu crosses over into AI visibility, gaining mentions in Google’s AI Overviews in late August and overtaking another leading toy brand: Kaws.

Also in August, Labubu overtakes all other competitors in ChatGPT conversations.

This example shows that AI is part of a wider discovery ecosystem.

By tracking it directionally, you can see when and how a brand (or trend) breaks through into AI.

In all, it took four months for the Labubu brand to surface in AI conversations.

By running the same analysis on competitors, you can evaluate different scenarios, replicate what works, and set realistic expectations for your own AI visibility timeline.

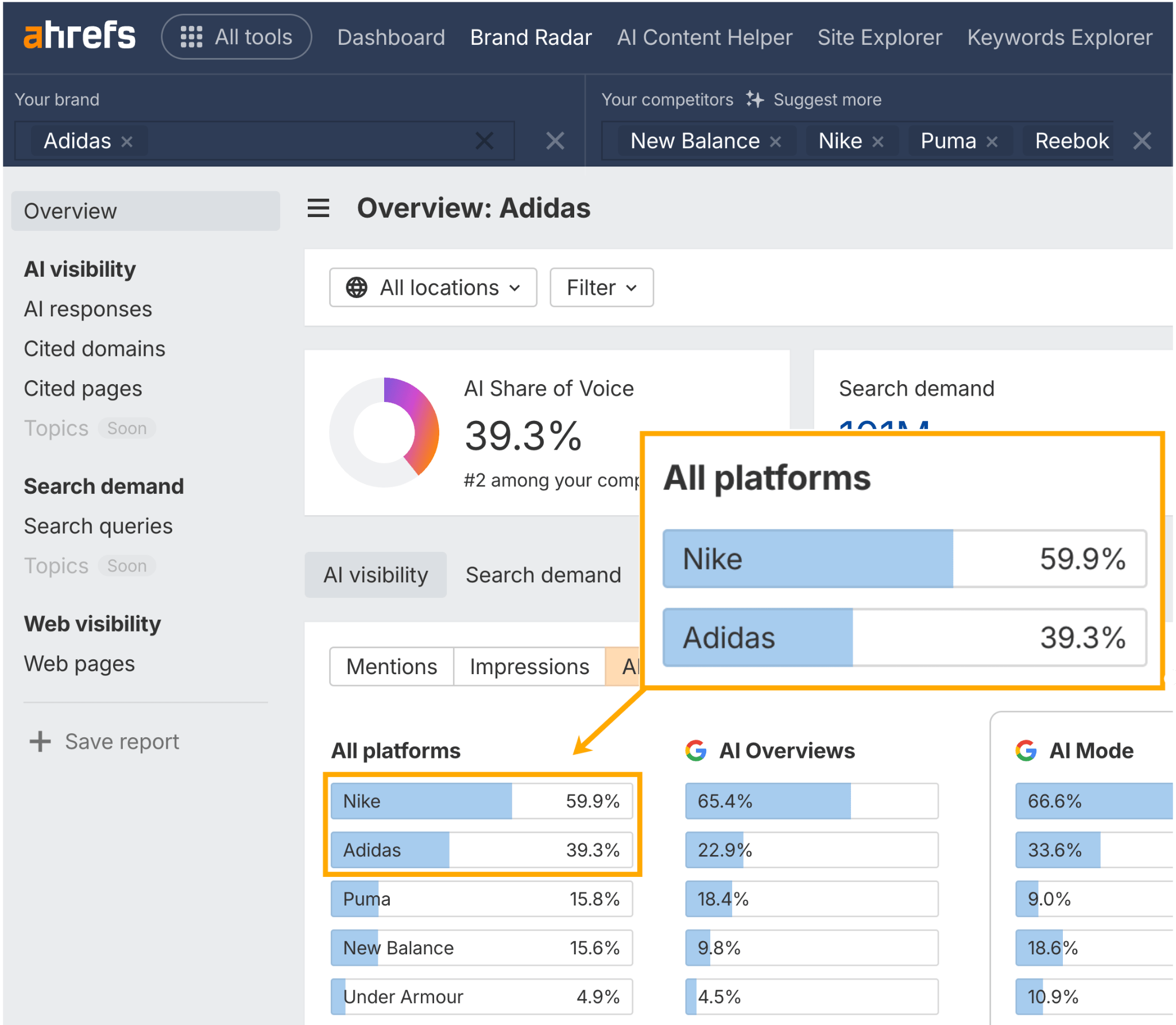

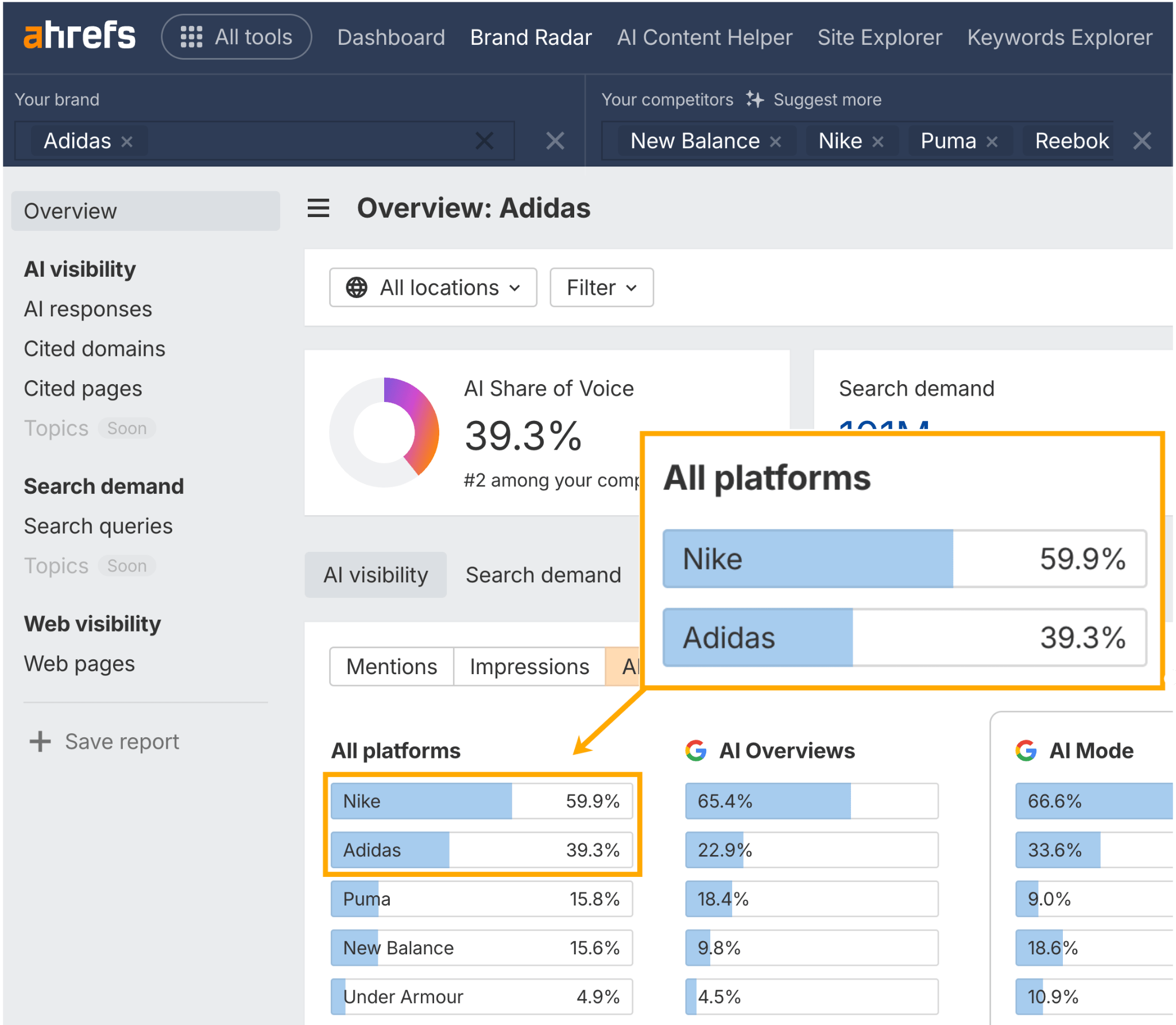

AI variance shouldn’t stop you comparing your AI visibility to competitors.

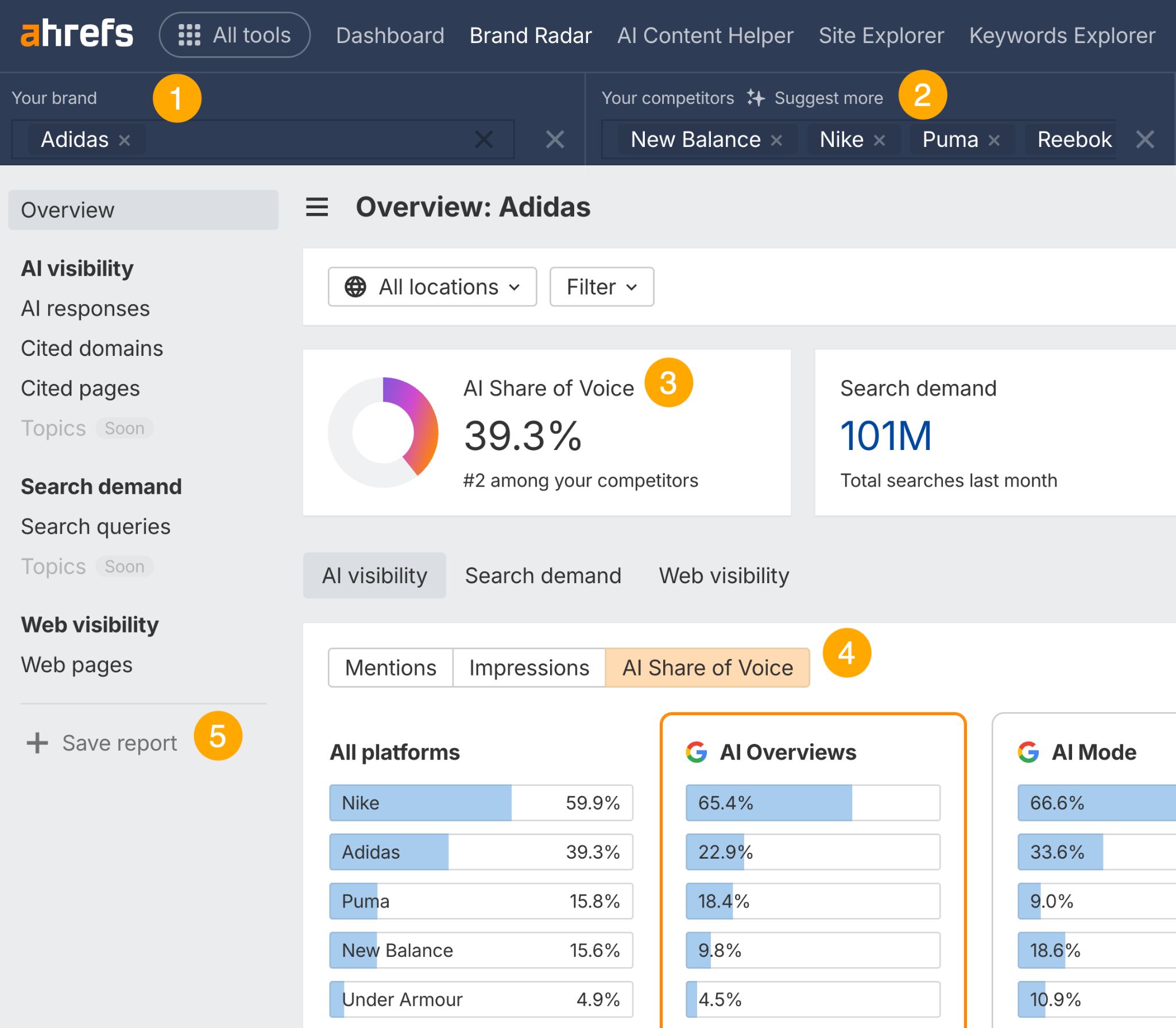

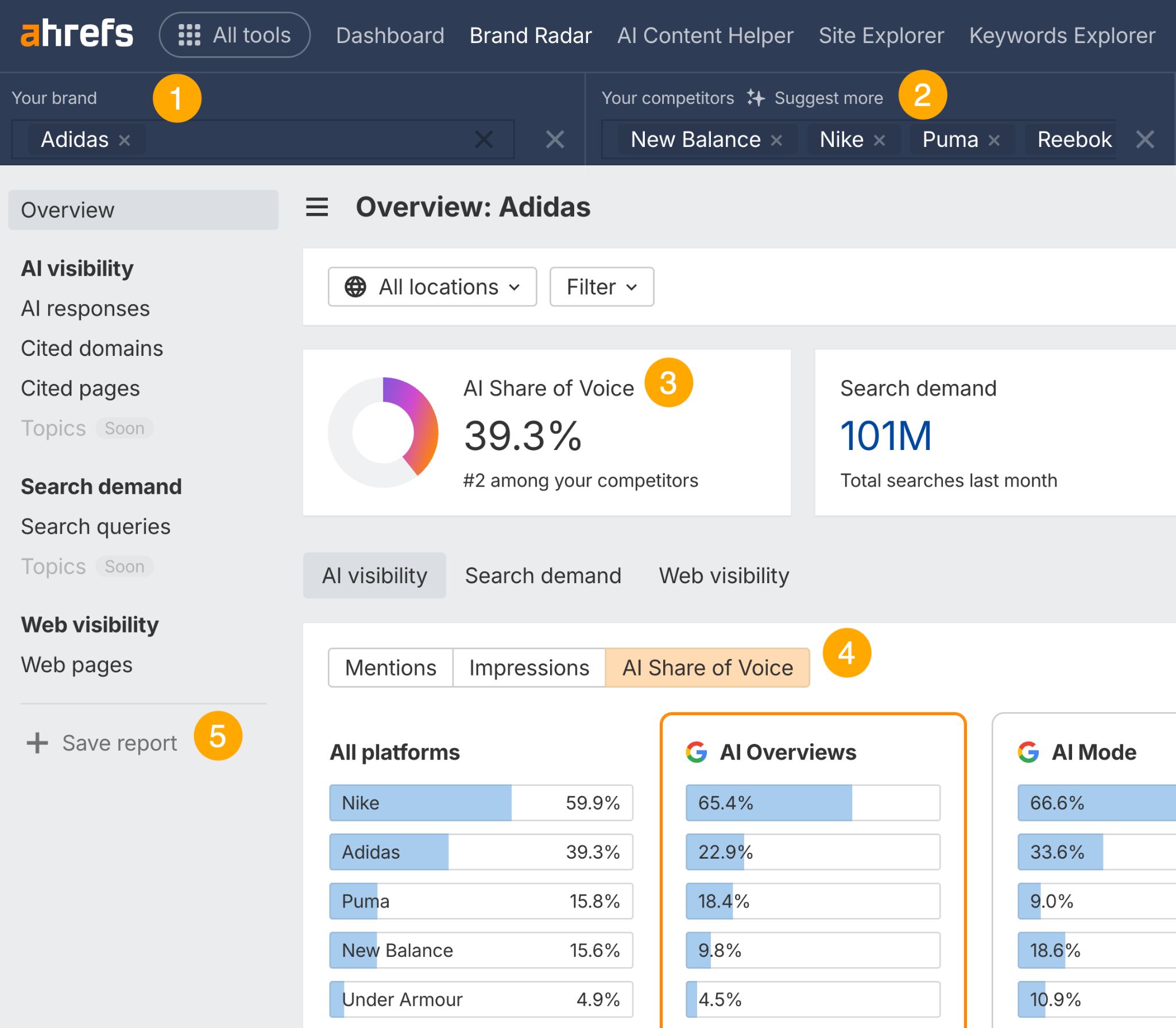

The key is to track your brand’s AI Share of Voice across thousands of prompts, against the same competitors, on a consistent basis, to gauge your relative ownership of the market.

If a brand (e.g. Adidas) appears in ~40% of prompts, but a competitor (e.g. Nike) shows up in ~60%, that’s a clear gap, even if the numbers bounce around slightly from run to run.

Tracking AI search can show you the way your AI visibility is trending.

For example, if Adidas moves from 40% to 45% coverage, that’s a clear directional win.
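As a rough sketch of the arithmetic behind this kind of comparison, the snippet below computes the share of responses that mention each brand. It is an assumption about how such a metric could be calculated, not Brand Radar’s actual formula, and the five responses are invented toy data chosen to mirror the 40% vs 60% example.

```python
from collections import Counter

def mention_rates(responses: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Share of responses that mention each brand (hypothetical metric)."""
    counts: Counter = Counter()
    for mentioned in responses:
        for brand in brands:
            if brand in mentioned:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

# Invented toy data: each set lists the brands named in one AI response.
responses = [
    {"Adidas", "Nike"},
    {"Nike"},
    {"Nike"},
    {"Adidas"},
    {"Puma"},
]

rates = mention_rates(responses, ["Adidas", "Nike"])
print(rates)
```

Running the same calculation on the same prompt set and the same competitors at regular intervals is what turns a noisy snapshot into a trend you can compare over time.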

Brand Radar supports this kind of longitudinal AI Share of Voice tracking.

Here’s how it works in five simple steps:

- Search your brand

- Enter your competitors

- Check your overall AI Share of Voice percentage

- Hit the “AI Share of Voice” tab to benchmark against your competitors

- Save the same prompt report and return to it to track your progress

Over time, these benchmarks show whether you’re gaining or losing ground in AI conversations.

A handful of prompts won’t tell you much, even if they’re real.

But when you look at hundreds of variations, you can work out whether AI really ties your brand to its key topics.

Instead of asking “Do we appear for [insert query]?”, we should be asking “Across all the variations of prompts about this topic, how often do we appear?”

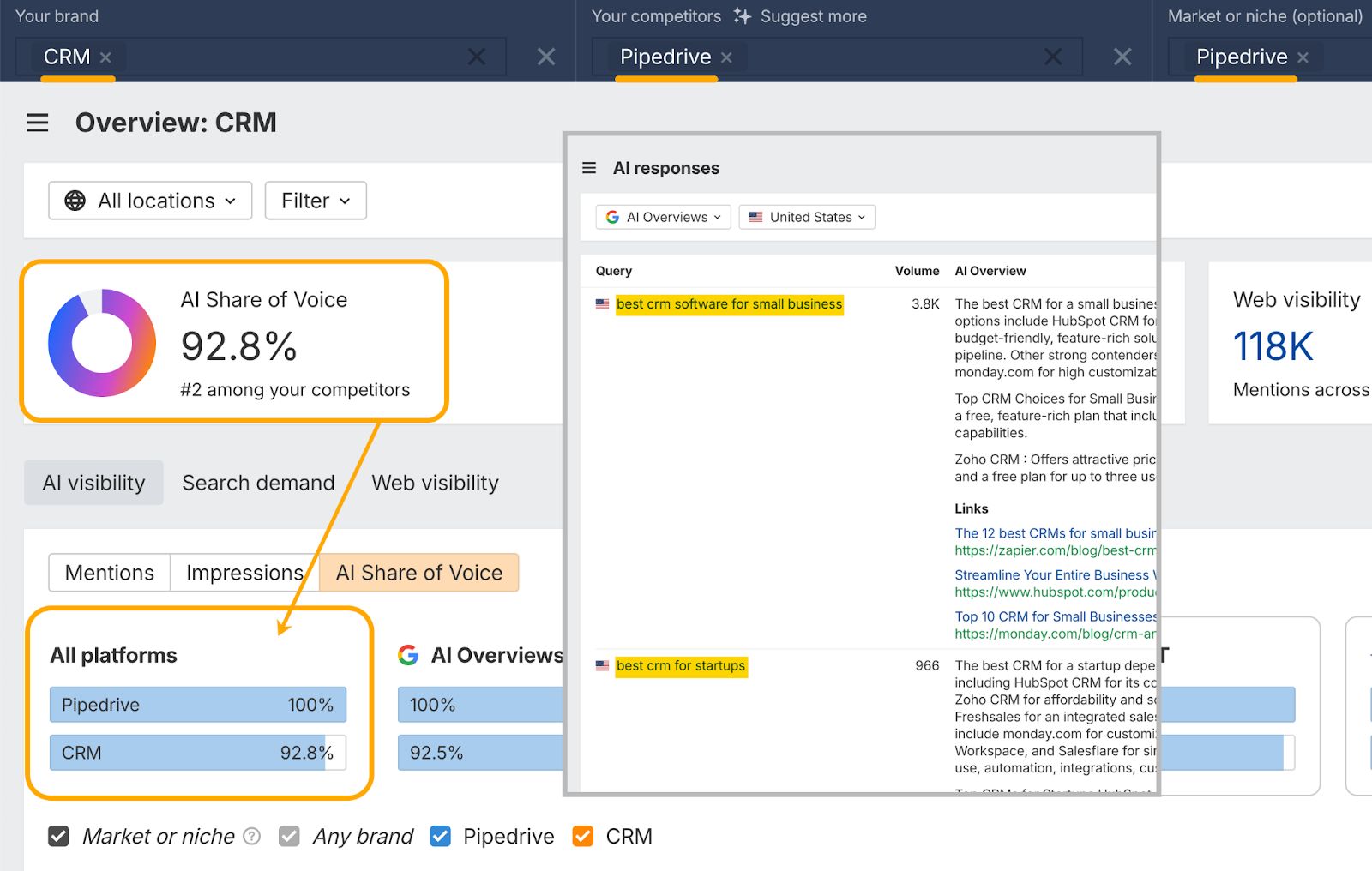

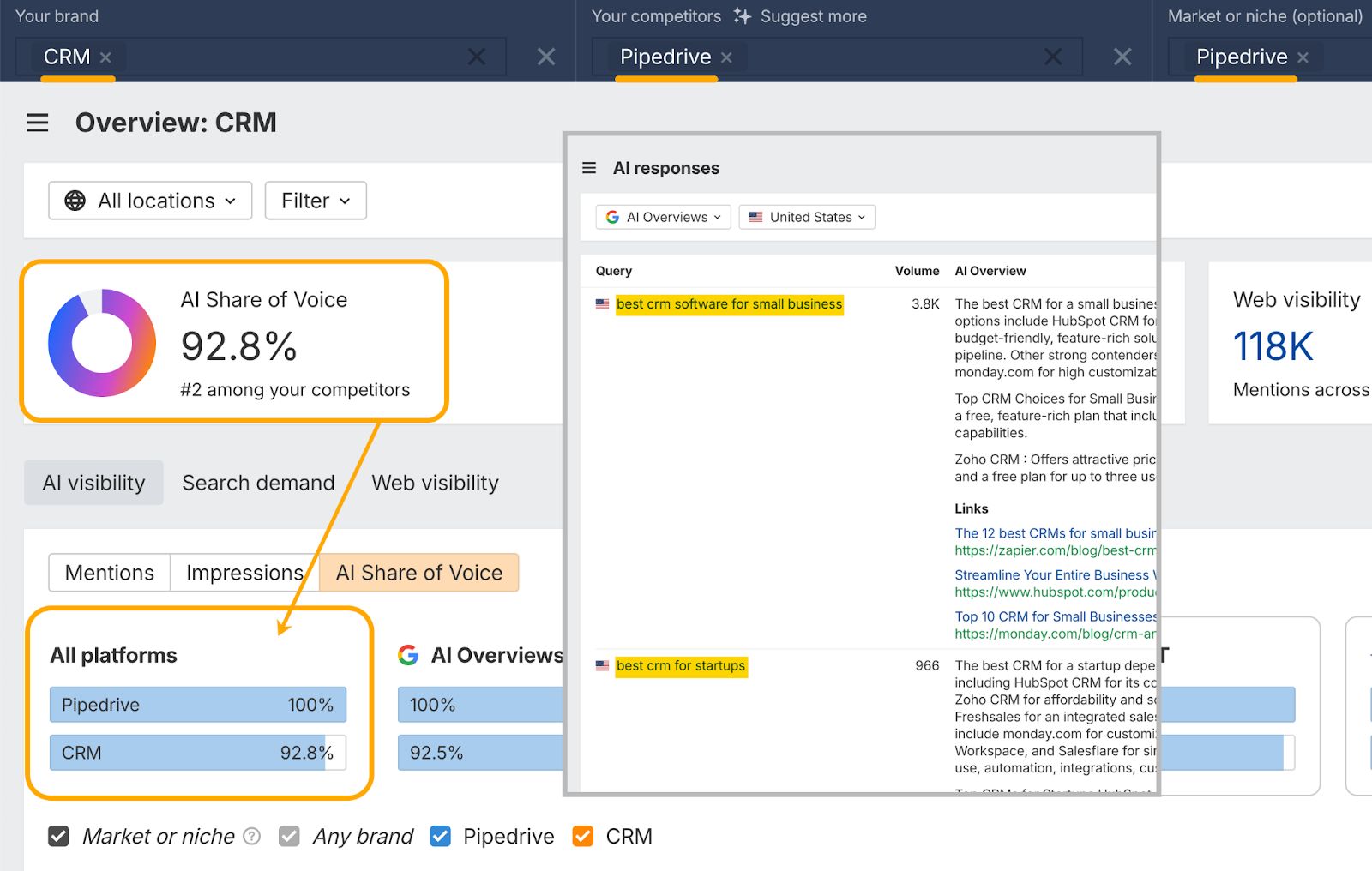

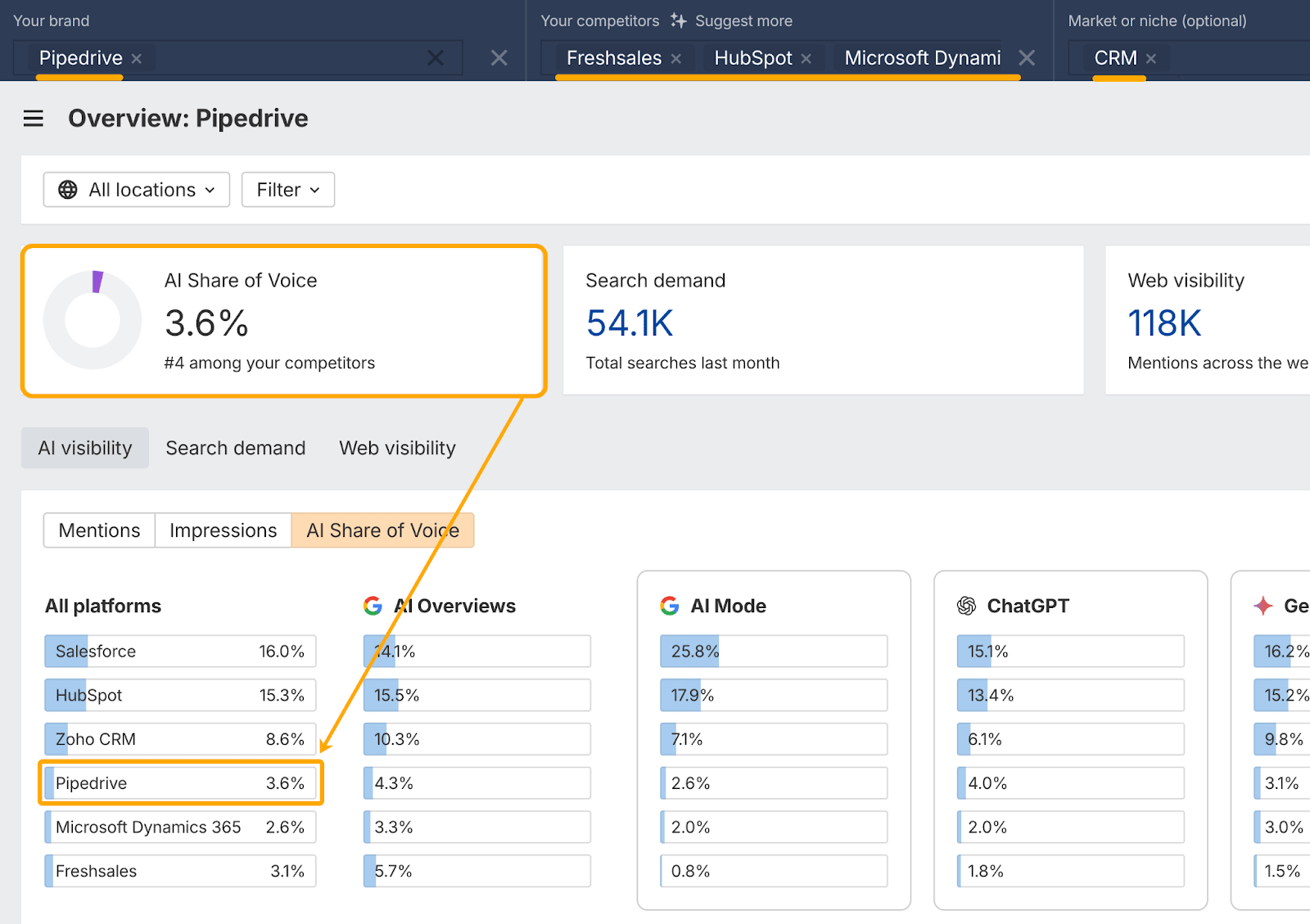

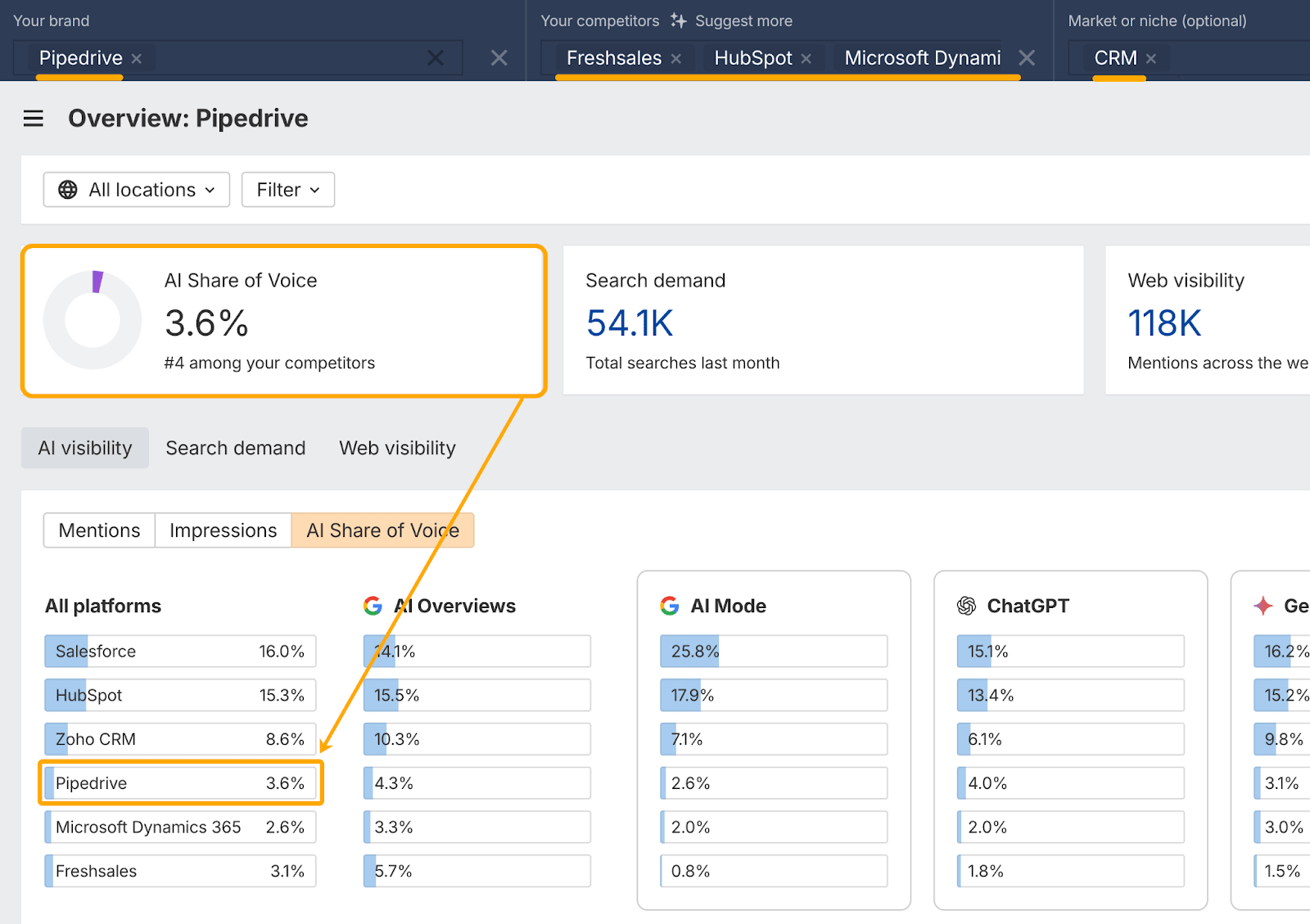

Take Pipedrive for example.

CRM related prompts like “best CRM for startups” and “best CRM software for small business” account for 92.8% of Pipedrive’s AI visibility (~7K prompts).

But when you benchmark against the entire CRM market (~128K prompts), their overall share of voice drops to just 3.6%.

So, Pipedrive clearly “owns” certain CRM subtopics, but not the full category.

This style of AI tracking gives you perspective.

It shows you how often you appear across subtopics and the broader market, but just as importantly, shows where you’re missing.

These gaps, the “unknown unknowns”, are opportunities and risks you wouldn’t have thought to check for.

They give you a roadmap of what to prioritize next.

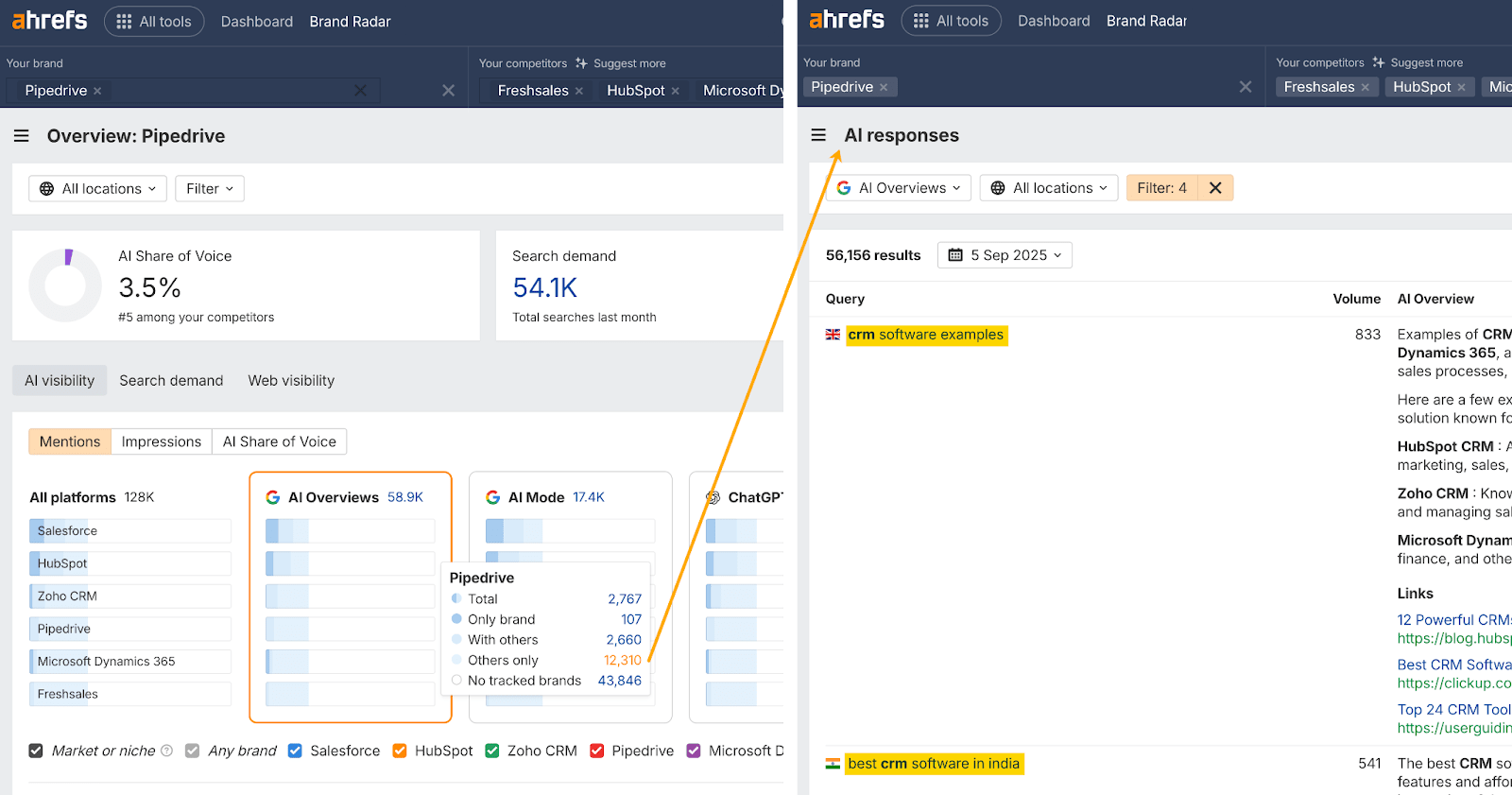

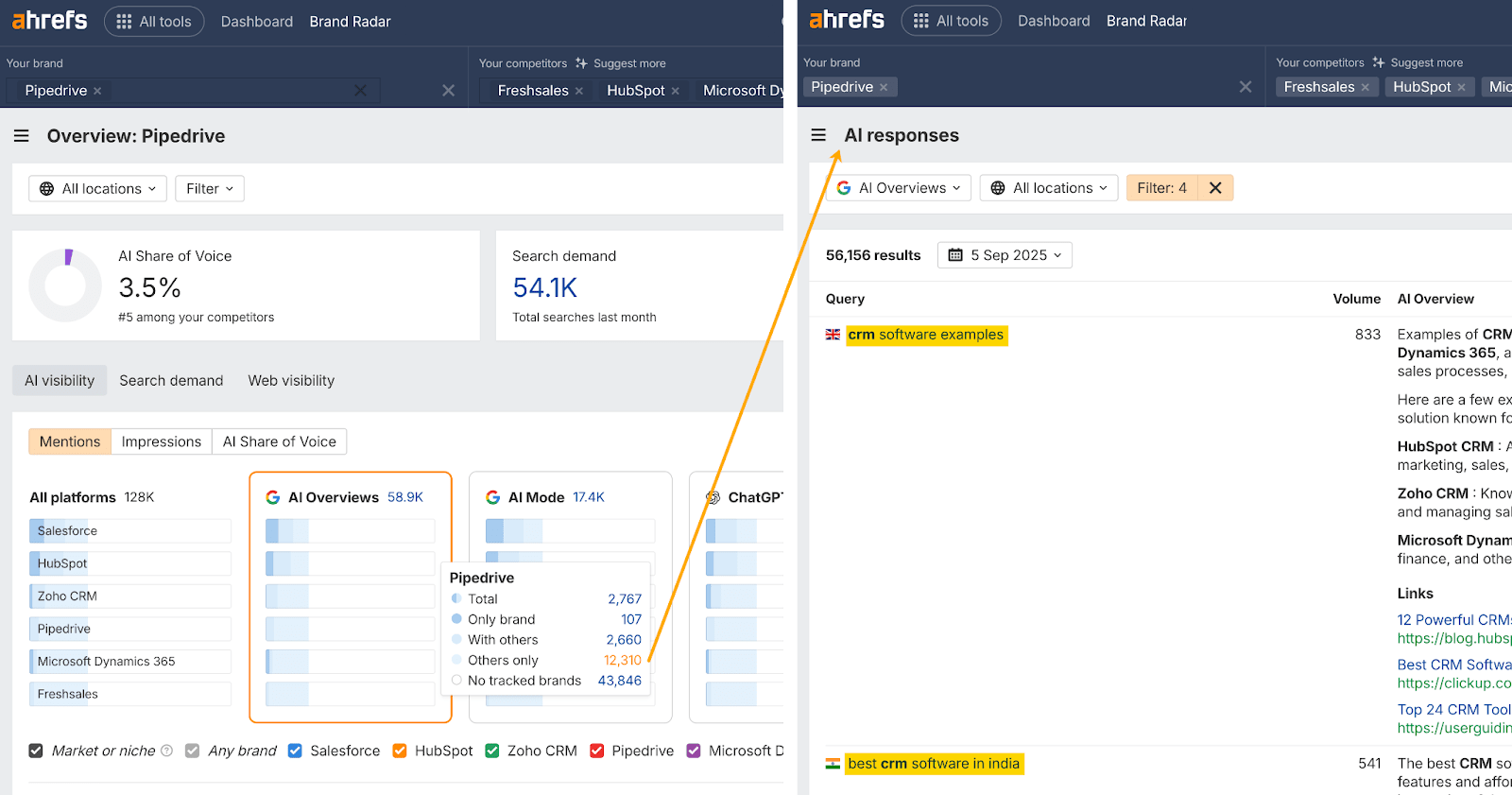

To find these opportunities, Pipedrive can do a competitor gap analysis in three steps:

- Click “Others only”

- Study the prompt topics they’re missing in the AI Responses report

- Create or optimize content to claim some more of that visibility
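Conceptually, a gap analysis like this is a set difference: topics where other brands are mentioned but yours is not. The sketch below illustrates that idea on invented data. How the “Others only” filter works internally is not described here, so the function, topic names, and brand names are all hypothetical.

```python
def others_only_topics(topic_mentions: dict[str, set[str]], brand: str) -> list[str]:
    """Topics where at least one other brand is mentioned but `brand` is not."""
    return sorted(
        topic
        for topic, brands in topic_mentions.items()
        if brands and brand not in brands
    )

# Invented toy data: brands mentioned in AI responses, grouped by prompt topic.
topic_mentions = {
    "crm for startups": {"OurBrand", "RivalA"},
    "crm for enterprise": {"RivalA", "RivalB"},
    "crm with email automation": {"RivalB"},
    "crm pricing": {"OurBrand"},
}

gaps = others_only_topics(topic_mentions, "OurBrand")
print(gaps)
```

The resulting list is a starting point for deciding which topics to create or optimize content for.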

AI results are noisy and synthetic prompts aren’t perfect, but that doesn’t stop them from revealing something important: how your brand is framed in the answers that do appear.

You don’t need flawless data to learn useful things.

The way AIs describe your brand (the adjectives they use, the sites they group you with) can tell you a lot about your positioning, even if the prompts are proxies and the answers vary.

- Are you labeled the “budget-friendly” option while competitors are framed as “enterprise-ready”?

- Do you consistently get recommended for “ease of use” while another brand is praised for “advanced features”?

- Are you mentioned alongside market leaders, or lumped in with niche alternatives?

These patterns reveal the narrative that AI assistants attach to your brand.

And while individual answers may vary, these recurring themes add up to a clear signal.
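One simple way to surface recurring themes is to count how often certain descriptors show up in the responses that mention your brand. The sketch below is deliberately crude: it only checks whether a descriptor appears in the same response as the brand, not whether it actually describes the brand. The brand names and responses are invented for illustration.

```python
from collections import Counter

def descriptor_counts(responses: list[str], brand: str, descriptors: list[str]) -> Counter:
    """Count responses that mention `brand` and also contain each descriptor."""
    counts: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        if brand.lower() not in lowered:
            continue  # Skip responses that do not mention the brand at all.
        for descriptor in descriptors:
            if descriptor.lower() in lowered:
                counts[descriptor] += 1
    return counts

# Invented toy responses with hypothetical brand names.
responses = [
    "OurBrand is a budget-friendly pick with great ease of use.",
    "For large teams, RivalBrand is more enterprise-ready.",
    "OurBrand stands out for ease of use.",
]

counts = descriptor_counts(
    responses, "OurBrand", ["budget-friendly", "ease of use", "enterprise-ready"]
)
print(counts.most_common())
```

Across a large enough set of responses, the most frequent descriptors give a rough picture of the narrative attached to the brand.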

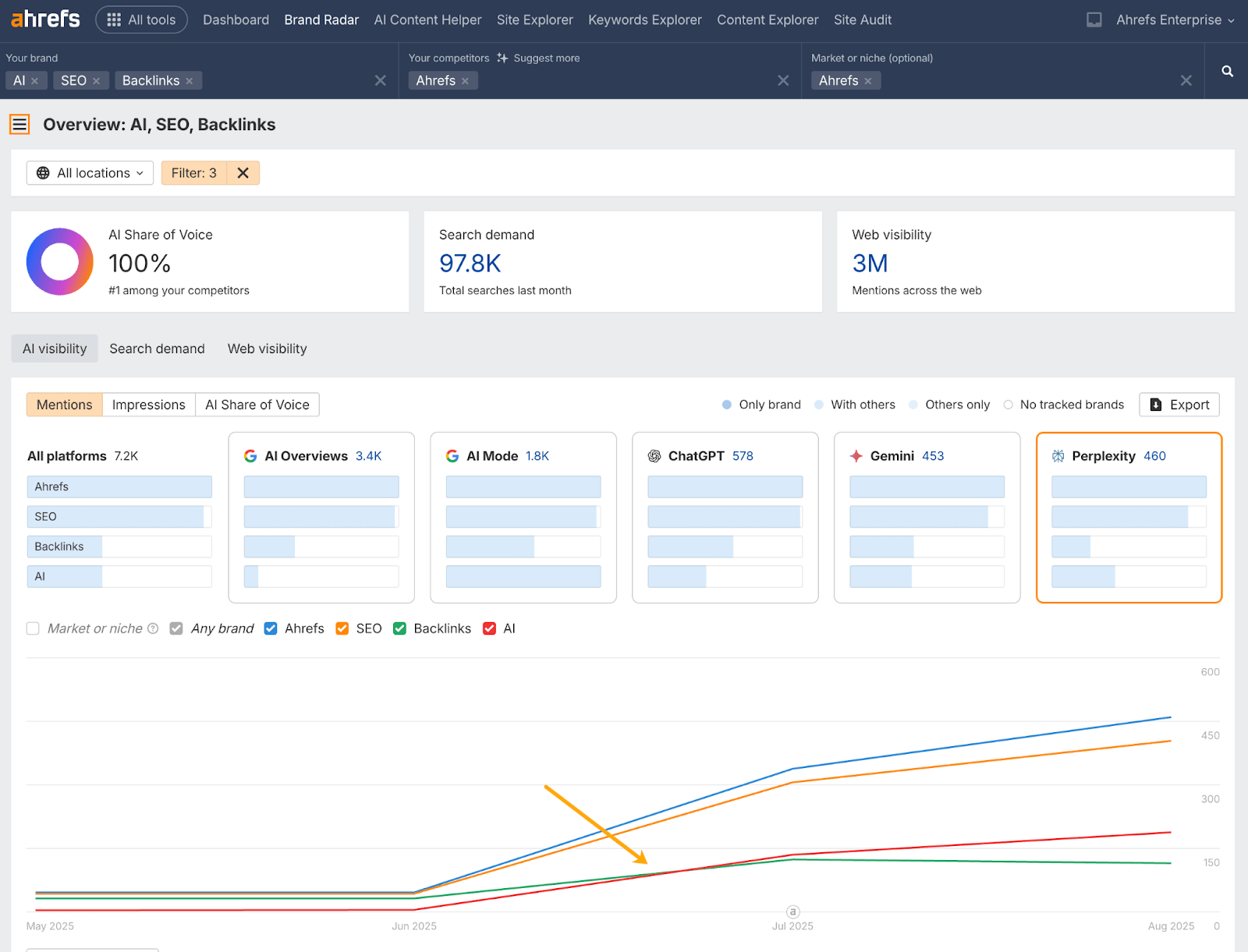

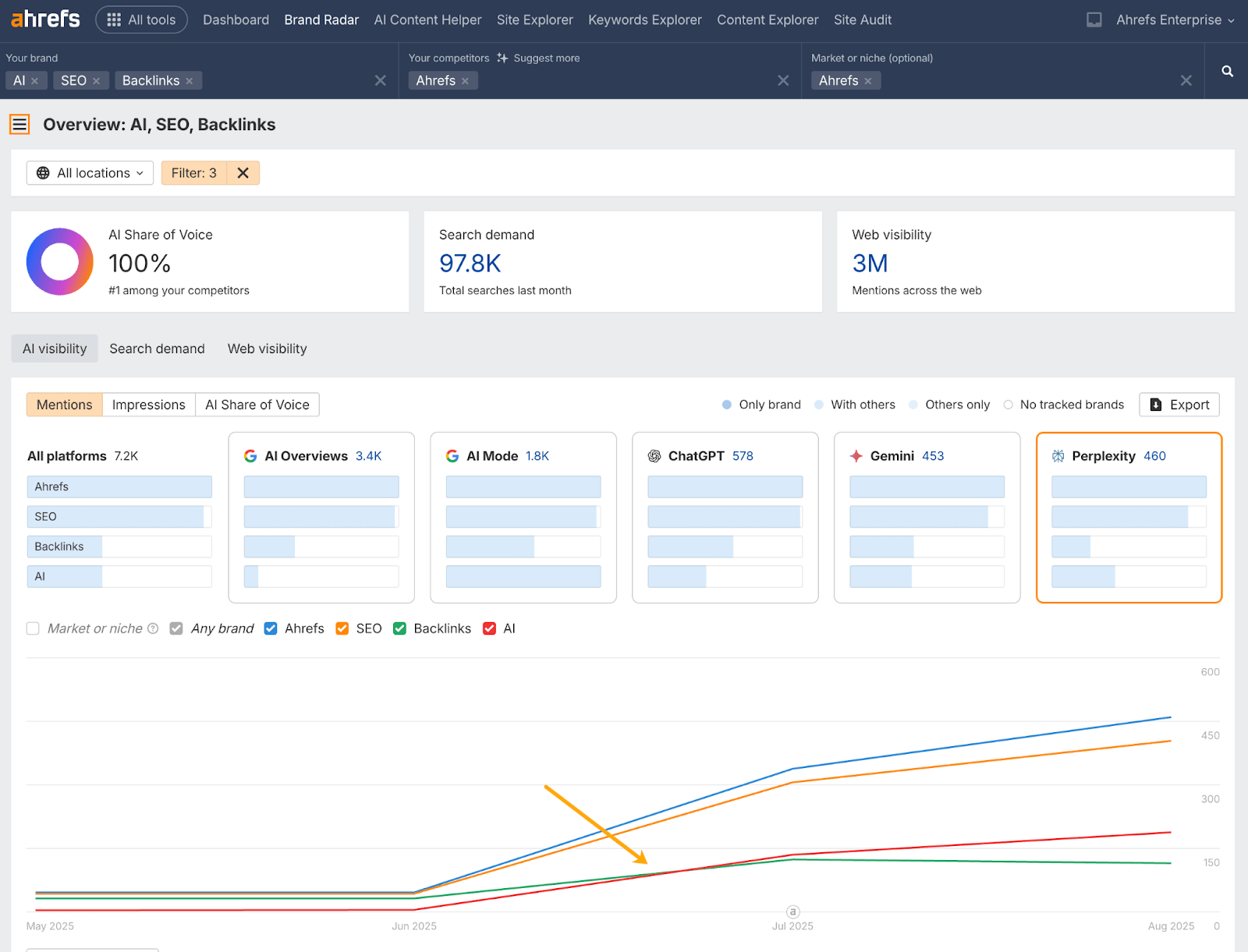

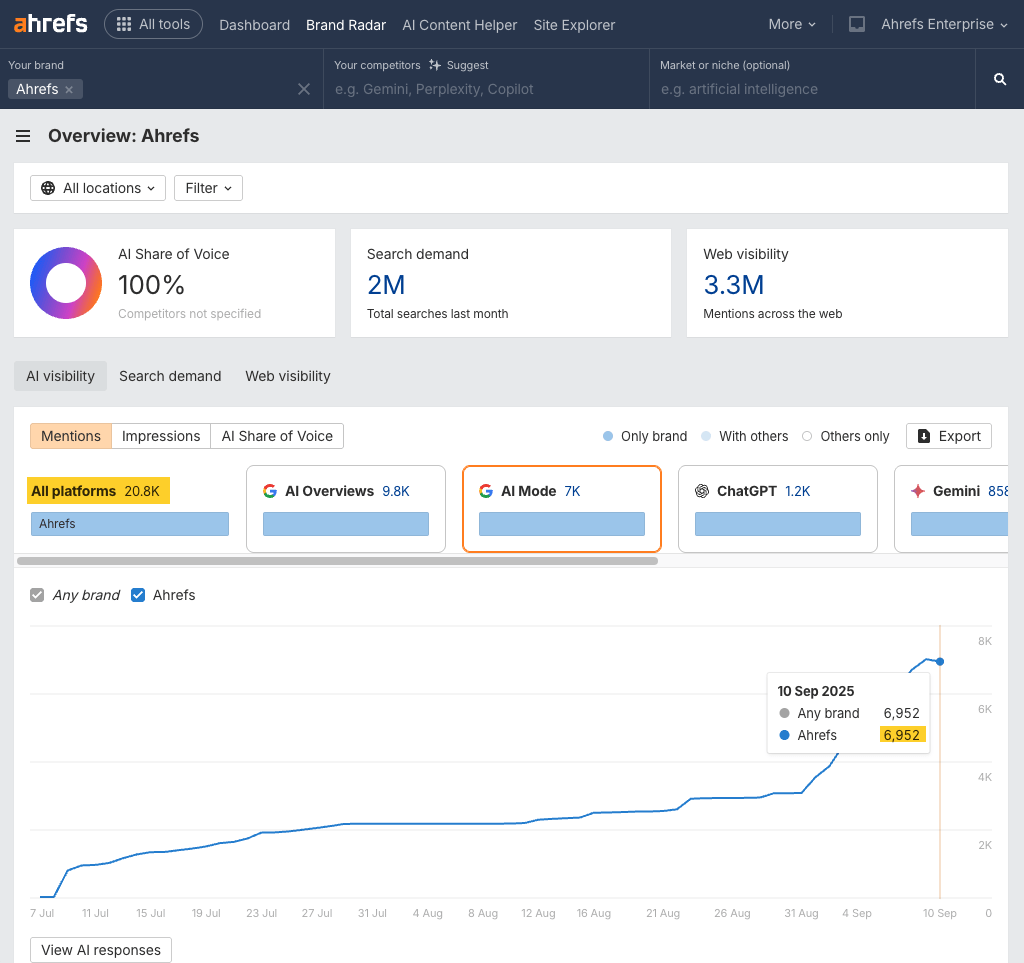

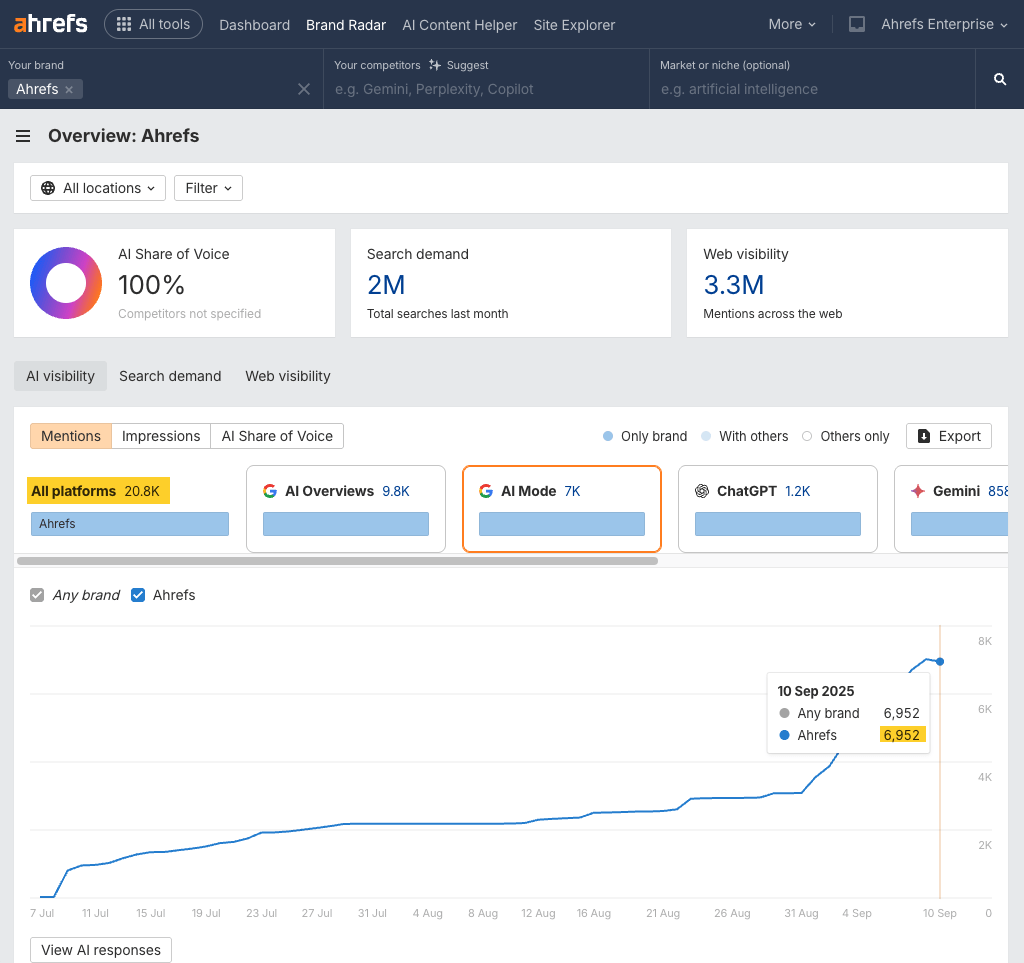

For example, right now we have an issue with our own AI visibility.

Ahrefs’ positioning has shifted in the past year as we’ve added new features and evolved into a marketing platform.

But, AI responses still describe us primarily as an ‘SEO’ or ‘Backlinks’ tool.

By putting out consistent AI features, products, content, and messaging, our positioning is now beginning to shift on some AI surfaces.

You can see this when the red trend line (AI) overtakes the green (Backlinks) in the chart below.

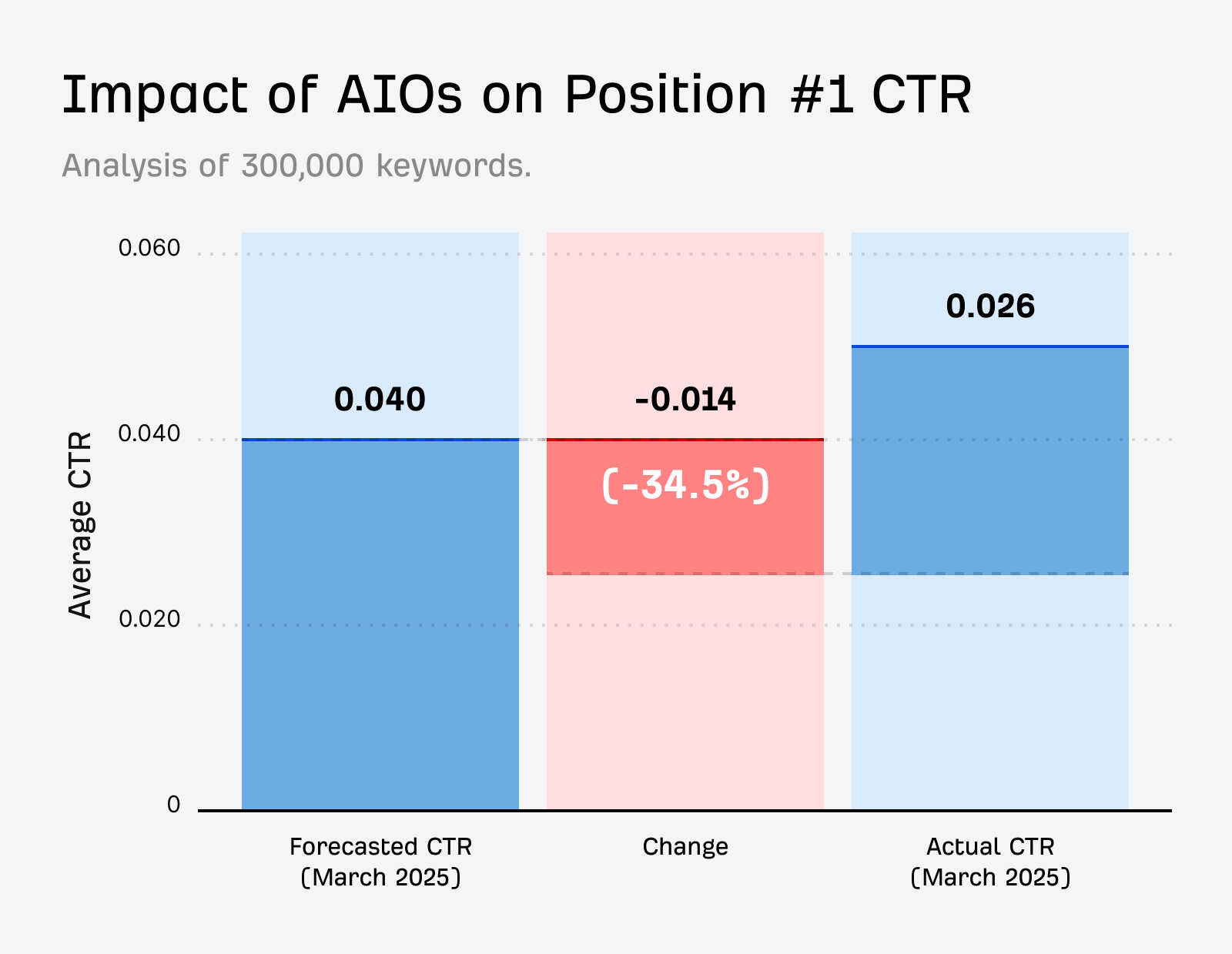

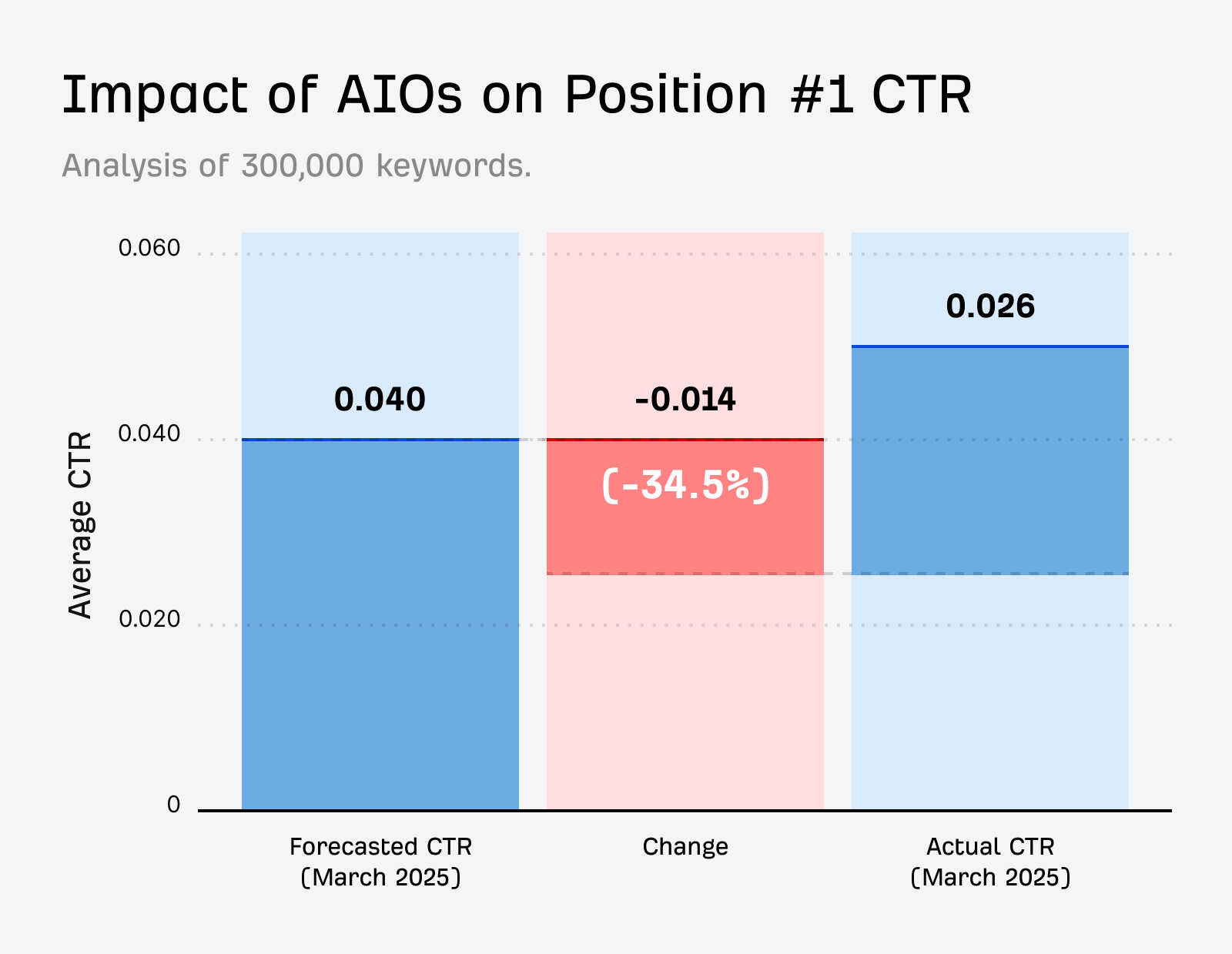

Organic traffic is shrinking fast.

When Google’s AI Overview appears, clickthroughs to the top search results drop by about a third.

That means being named in AI answers is no longer optional.

AI assistants are already part of the discovery journey.

People turn to ChatGPT, Gemini, and Copilot for product recommendations, not just quick facts.

If your brand isn’t in those answers, you’re invisible at the exact moment decisions are made.

That’s why tracking AI visibility matters.

Even if the data is noisy, it shows whether you’re part of the conversation—or whether competitors are taking the spotlight.

In an ideal world, tracking AI visibility on a micro and macro level isn’t an either-or choice.

Micro tracking for high-stakes AI prompts

Micro tracking is about zooming in on the handful of queries that really matter to your business.

These might include:

- Branded prompts: e.g. “What’s [Brand] known for?”

- Competitor comparisons: e.g. “[Brand] vs [Competitor]”

- Bottom-of-funnel purchase queries: e.g. “best for [audience]”

Even though AI responses are probabilistic, it’s still worth tracking these “make or break” queries where visibility or accuracy really matters.

Macro tracking for overall AI visibility

Macro tracking is about zooming out to understand the bigger picture of how AI connects your brand to topics and markets.

This approach is about tracking thousands of variations to spot patterns, find new opportunities, and map the competitive landscape.

Most AI tools only focus on the first mode, but Ahrefs’ Brand Radar can help you with both.

It lets you keep tabs on business-critical prompts while also surfacing the unknown unknowns.

And soon it’ll support custom prompts, so you can get even more granular with your tracking.

Tracking at both levels helps you answer two questions: are you present where it counts, and are you strong enough to dominate the market?

Final thoughts

No, you’ll never track AI interactions in the same way you track traditional searches.

But that’s not the point.

AI search tracking is a compass: it will show if you’re headed in the right direction.

The real risk is ignoring your AI visibility while competitors build presence in the space.

Start now, treat the data as directional, and use it to shape your content, PR, and positioning.