Google Research has introduced a groundbreaking way to fine-tune large language models (LLMs) that reduces the amount of training data required by up to 10,000 times while maintaining or improving model quality. The method relies on active learning: expert labeling effort is focused on the most informative examples, the “boundary cases” where model uncertainty peaks.

The Traditional Bottleneck

Large, high-quality labeled datasets are usually required to fine-tune LLMs for tasks that demand deep contextual and cultural understanding, such as ad content safety and moderation. Most of that data is benign: for policy violation detection, only a small fraction of the examples matter, which drives up the cost and complexity of data curation. Standard methods also struggle to keep up when policies or problematic patterns change, forcing expensive retraining.

Google’s Active Learning Breakthrough

How it works:

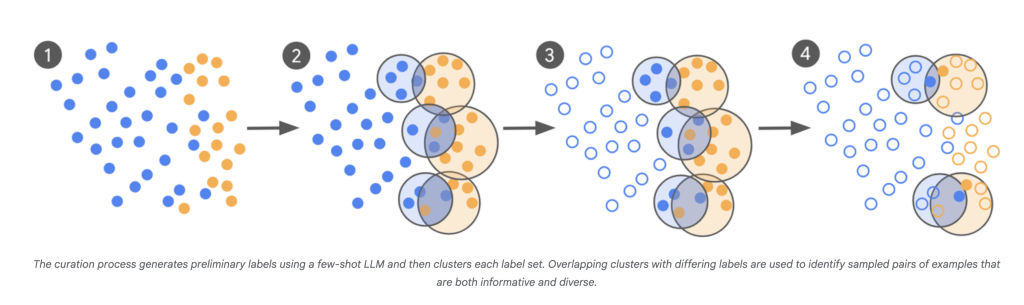

- LLM-as-scout: The LLM scans a vast corpus (hundreds of billions of examples) and identifies the cases it is least certain about.

- Targeted expert labeling: Instead of labeling thousands of random examples, human experts annotate only the boundary items that confuse the model.

- Iterative curation: The process repeats, with each new batch of “problematic” examples informed by the latest model's points of confusion.

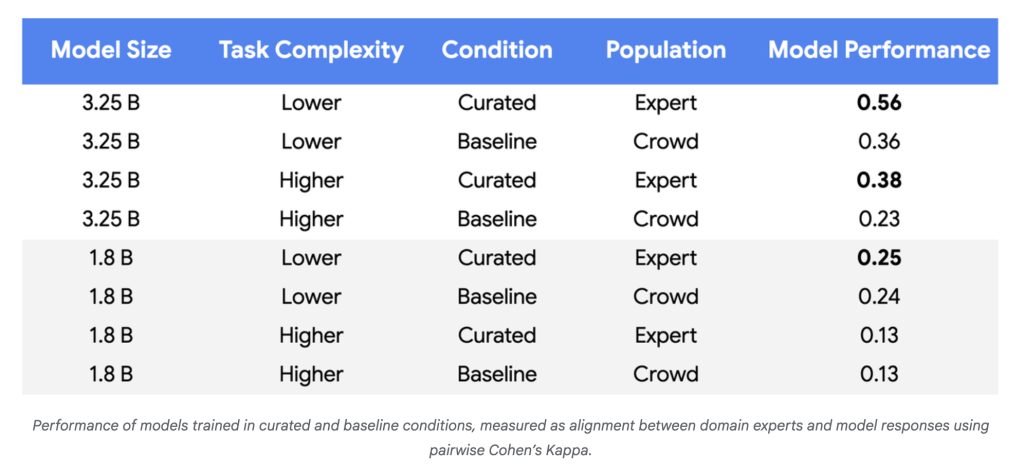

- Rapid convergence: The model is fine-tuned over multiple rounds, and iteration continues until its output closely matches expert judgment. Alignment is measured with Cohen’s kappa, which compares the agreement between annotators against the agreement expected by chance.
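The scouting step can be illustrated with uncertainty sampling, a common active learning selection rule. The sketch below is only an assumption about how "least certain" might be scored; the article does not specify Google's actual selection criterion. The names `uncertainty`, `select_boundary_cases`, and the `toy_scores` probabilities are hypothetical and stand in for a real LLM classifier.

```python
from typing import Callable, List, Sequence, Tuple


def uncertainty(p_violation: float) -> float:
    """Score in [0, 1]; highest when the predicted probability is 0.5."""
    return 1.0 - 2.0 * abs(p_violation - 0.5)


def select_boundary_cases(
    examples: Sequence[str],
    score_fn: Callable[[str], float],
    budget: int,
) -> List[Tuple[str, float]]:
    """Return the `budget` examples the scoring model is least sure about."""
    scored = [(ex, uncertainty(score_fn(ex))) for ex in examples]
    # Most uncertain first; these are the candidates sent to expert annotators.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:budget]


# Hypothetical violation probabilities standing in for real model output.
toy_scores = {
    "buy cheap watches": 0.97,
    "weather is nice today": 0.02,
    "miracle cure, results may vary": 0.55,
    "limited offer on supplements": 0.42,
}

picked = select_boundary_cases(list(toy_scores), toy_scores.__getitem__, budget=2)
for text, score in picked:
    print(f"{score:.2f}  {text}")
```

In a full loop, the experts' labels for the selected items would be added to the fine-tuning set, the model retrained, and the selection repeated with the updated model's scores.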

Impact:

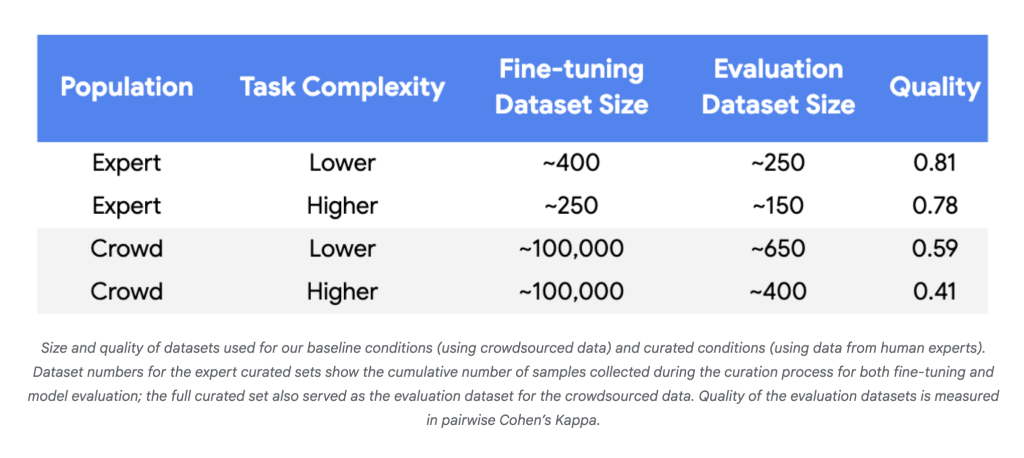

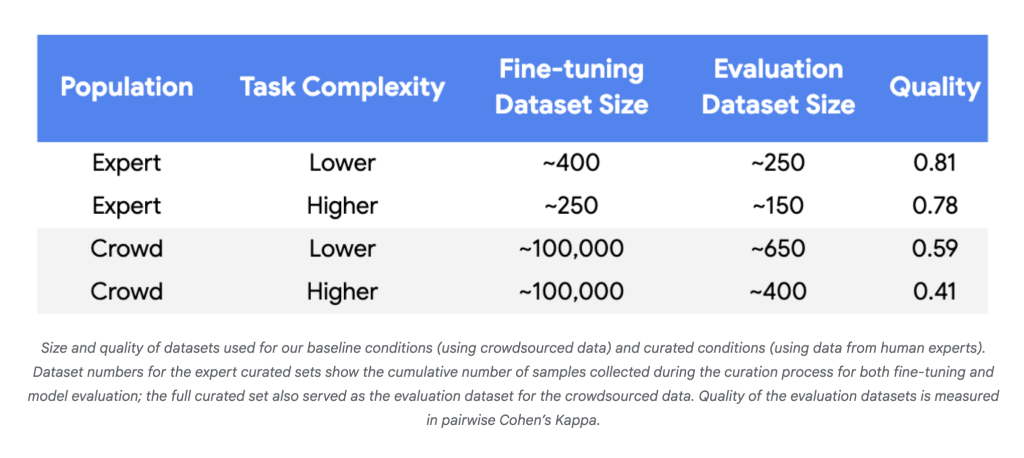

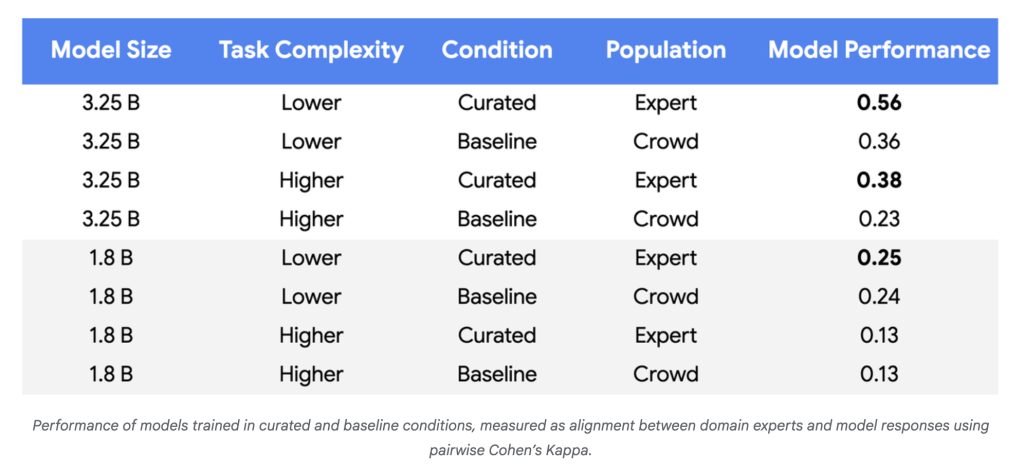

- Data needs plummet: In experiments with Gemini Nano-1 and Nano-2 models, alignment with human experts reached parity or better using 250-450 well-chosen examples rather than ~100,000 random crowdsourced labels, a reduction of three to four orders of magnitude.

- Model quality improves: For more complex tasks and larger models, performance improved by 55-65% over baseline, indicating more reliable alignment with policy experts.

- Label efficiency: Reliable gains from small datasets consistently required high label quality (Cohen’s kappa > 0.8).
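For readers unfamiliar with the metric, Cohen's kappa is defined as (p_o - p_e) / (1 - p_e), where p_o is the observed agreement between two raters and p_e is the agreement expected by chance from each rater's label frequencies. The following is a minimal sketch of that standard formula; the function name and the example labels are illustrative and are not taken from Google's experiments.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters labeling the same items."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("both raters must label the same non-empty item set")
    n = len(a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    count_a, count_b = Counter(a), Counter(b)
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Illustrative labels only (1 = policy violation, 0 = benign).
expert = [1, 1, 0, 0, 0, 0, 0, 0, 1, 0]
model = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
kappa = cohens_kappa(expert, model)
print(f"kappa = {kappa:.2f}")
```

Here the two label sets agree on 9 of 10 items, yet kappa falls below the 0.8 threshold mentioned above, because most of that agreement is on the dominant benign class and would be expected by chance.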

Why does it matter?

This approach flips the traditional paradigm. Rather than drowning a model in a vast pool of noisy, redundant data, it leverages both the LLM's ability to identify ambiguous cases and the domain expertise of human annotators where their input is most valuable. The benefits are significant:

- Cost reduction: Far fewer examples to label means dramatically lower labor and capital expenditure.

- Faster updates: The ability to retrain models on just a handful of examples makes adapting to new abuse patterns, policy changes, or domain shifts fast and feasible.

- Societal impact: Better contextual and cultural understanding improves the safety and reliability of automated systems that handle sensitive content.

In Summary

Google’s new method enables LLMs to be fine-tuned on complex, evolving tasks with just hundreds of targeted, high-fidelity labels instead of hundreds of thousands.

Michal Sutter is a data science professional with a Master’s degree in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.